Agentic AI refers to AI systems that autonomously plan, execute multi-step workflows, and adapt based on feedback, all without requiring a human prompt at each step. Unlike conversational models that respond to a single query, agentic systems use a foundation model as a reasoning core, connect it to tools and memory, and coordinate multiple specialized agents to complete complex, real-world tasks.

Why Agentic AI Is the Next Enterprise Infrastructure Layer

Agentic AI systems are the next layer of enterprise infrastructure, and the gap between teams that understand this and teams that do not is widening by the quarter.

According to McKinsey’s State of AI 2025 report, 88% of enterprises now use AI regularly in at least one business function. Yet only 23% are scaling agentic systems anywhere in the business. That delta is where the competitive advantage lives right now.

Gartner (2025) projects that 40% of enterprise applications will integrate task-specific AI agents by end of 2026, up from less than 5% today. That is more than an eightfold expansion in under two years. The global agentic AI market stood at $5.25 billion in 2024 and is projected to reach $199 billion by 2034, compounding at nearly 44% annually.
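The annual growth rate quoted above can be checked from the two market-size endpoints. This is a minimal sketch, assuming the standard compound annual growth rate formula and a ten-year span from 2024 to 2034; the variable names are illustrative.

```python
# Compound annual growth rate implied by the market-size figures quoted above.
start_value = 5.25   # USD billions, 2024
end_value = 199.0    # USD billions, 2034 (projected)
years = 2034 - 2024  # ten compounding periods

# CAGR = (end / start) ** (1 / years) - 1
cagr = (end_value / start_value) ** (1 / years) - 1
print(f"Implied CAGR: {cagr:.1%}")
```

The result lands just under 44%, consistent with the figure in the text.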

The message to engineering leaders is plain: this is not a trend to monitor. It is infrastructure to build.

“The gap between experimenting with AI and scaling it is not a technology problem. It is an architecture problem.”

What Makes a System Truly Agentic: The Four Core Properties

Not every LLM wrapper is an agent. Calling an API in a loop is not agentic behavior. A system qualifies as agentic AI when it demonstrates four specific properties.

Autonomy means the system operates without a human prompt at each step. Goal-orientation means it pursues a defined objective across multiple actions, not a single response. Environment interaction means it reads from and writes to external systems including databases, APIs, and code execution environments. Adaptive learning means it revises its plan when a step fails or returns unexpected results.

The research is clear on why all four matter. Yuksel and Sawaf (2024, arXiv:2412.17149) demonstrated that multi-agent systems using iterative feedback loops achieve significant quality improvements over monolithic approaches, because agents can evaluate and revise each other’s outputs before committing. A single-model approach lacks this self-correction layer.
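The evaluate-and-revise pattern can be sketched in a few lines. This is an illustration of the general idea only, not the method from the cited paper: `refine`, `toy_writer`, and `toy_reviewer` are hypothetical names, and the two toy functions stand in for what would be LLM-backed agents.

```python
from typing import Callable


def refine(draft_fn: Callable[[str, str], str],
           review_fn: Callable[[str], str],
           task: str,
           max_rounds: int = 3) -> tuple[str, int]:
    """Alternate between a drafting agent and a reviewing agent.

    review_fn returns an empty string when it has no objections.
    Returns the final draft and the number of rounds used.
    """
    feedback = ""
    draft = ""
    for round_no in range(1, max_rounds + 1):
        draft = draft_fn(task, feedback)
        feedback = review_fn(draft)
        if not feedback:  # reviewer accepted the draft
            return draft, round_no
    return draft, max_rounds  # round budget exhausted; caller decides what to do


# Hypothetical stand-ins for two LLM-backed agents.
def toy_writer(task: str, feedback: str) -> str:
    text = f"Summary of {task}"
    if "cite" in feedback:
        text += " [source: internal wiki]"
    return text


def toy_reviewer(draft: str) -> str:
    return "" if "[source:" in draft else "Please cite a source."


final, rounds = refine(toy_writer, toy_reviewer, "Q3 incident report")
print(rounds, final)
```

The first draft is rejected, and the second is accepted after the writer acts on the reviewer's feedback. A single-pass system would have committed the first draft.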

Agentic AI Architecture: How the Layers Connect

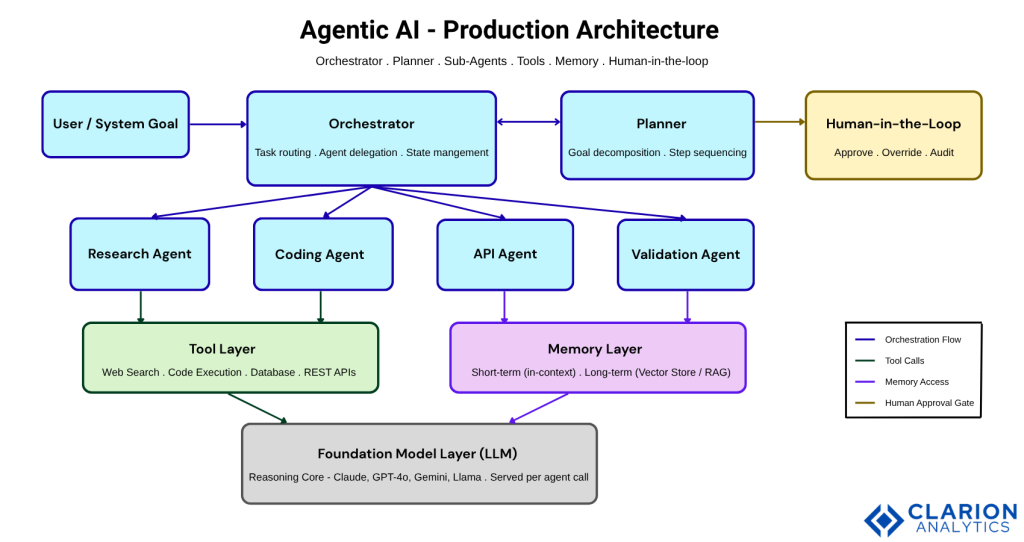

A production agentic AI architecture has five layers. Understanding each layer and its responsibilities is what separates systems that hold up in production from those that collapse under load or produce unauditable outputs.

The Orchestrator manages task routing and agent delegation. It receives a high-level goal and decides which sub-agents to invoke and in what order. The Planner decomposes goals into atomic steps that map to individual agent calls. The Tool Layer exposes APIs, database connectors, web search, and code execution environments. The Memory Layer provides short-term working memory for in-progress reasoning and long-term vector storage for retrieval across sessions. The Model Layer is the LLM that performs reasoning within each agent node.
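One way to picture how these layers fit together is the toy sketch below. It assumes a fixed two-step plan, a stubbed model, and an in-memory list for working memory; long-term vector storage is left out. Every name here (`Step`, `plan`, `TOOLS`, `Memory`, `orchestrate`, `stub_model`) is hypothetical and not taken from any framework.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Step:
    tool: str      # name of the tool to call
    argument: str  # single string argument, kept simple on purpose


def stub_model(prompt: str) -> str:
    """Model Layer stand-in: a real system would call an LLM here."""
    return f"answer based on: {prompt}"


def plan(goal: str) -> list[Step]:
    """Planner stand-in: a fixed two-step decomposition of any goal."""
    return [Step("search", goal), Step("summarize", goal)]


@dataclass
class Memory:
    """Memory Layer stand-in: short-term notes for the current run only."""
    working: list[str] = field(default_factory=list)

    def remember(self, note: str) -> None:
        self.working.append(note)


# Tool Layer: a registry mapping tool names to plain callables.
TOOLS: dict[str, Callable[[str], str]] = {
    "search": lambda q: f"3 documents found for '{q}'",
    "summarize": lambda q: stub_model(q),
}


def orchestrate(goal: str, memory: Memory) -> str:
    """Orchestrator: runs each planned step through the tool layer in order."""
    result = ""
    for step in plan(goal):
        result = TOOLS[step.tool](step.argument)
        memory.remember(f"{step.tool}: {result}")
    return result


memory = Memory()
answer = orchestrate("refund policy", memory)
print(answer)
print(memory.working)
```

Keeping each layer behind its own small interface means any one of them (the model, a tool, the memory backend) can be swapped without touching the orchestration loop.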

A recent arXiv paper on multi-agent orchestration architectures (2026) formalizes two interoperability protocols that underpin scalable systems: the Model Context Protocol (MCP) for tool access, and the Agent-to-Agent (A2A) protocol for peer coordination. Both have been donated to the Linux Foundation and are now the de facto communication substrate for enterprise multi-agent deployments.

Figure 1: A production agentic AI system routes all tasks through an Orchestrator that delegates to specialized sub-agents. Each sub-agent accesses a Tool Layer (APIs, databases, code execution) and a Memory Layer (in-context and vector store). The Planner decomposes high-level goals into atomic steps. Human-in-the-loop checkpoints sit between the Orchestrator and any external write operations to enforce governance.

“Agentic AI does not replace your existing systems. It adds a reasoning layer that connects them.”

Real-World Use Cases: Where Agentic AI Delivers Measurable ROI

The strongest early returns from agentic AI appear in three domains: software engineering, customer service, and financial services.

In software engineering, agentic AI enables 4x faster code debugging and is already embedded in the daily workflows of teams using tools like GitHub Copilot Workspace and Devin. The pattern is the same in every case: a developer defines the objective, the agent iterates across the codebase, and a human reviews the output before merging.

Customer service follows the same structure. Cisco projects that by 2028, agentic AI will manage 68% of all customer service interactions with technology vendors. Financial services are moving as well: the banking, financial services, and insurance (BFSI) sector leads enterprise adoption for fraud detection, compliance automation, and personalized advisory.

Code Snippet 1: LangGraph Python ReAct Agent

Source: langchain-ai/langgraph, README.md

This snippet wires an LLM to a custom Python function using LangGraph’s create_react_agent. The agent runs a Reason-Act loop: the model decides whether to call the tool or produce a final answer. It demonstrates the three primitives every production agent needs: a model, a tool schema, and a state-managed execution graph. In practice, teams building their first production agent use this pattern as the core, then extend it with retrieval tools, database connectors, and parallel sub-agent calls.
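The Reason-Act loop itself can be sketched without any framework. The following is a dependency-free illustration, not the LangGraph README code: it does not call create_react_agent, and `scripted_model`, `get_weather`, and `run_agent` are hypothetical stand-ins for a real LLM, a real tool, and the execution graph.

```python
from typing import Callable


# Tool: a plain function the agent is allowed to call (made-up example).
def get_weather(city: str) -> str:
    return f"It is always sunny in {city}."


TOOLS: dict[str, Callable[[str], str]] = {"get_weather": get_weather}


def scripted_model(messages: list[dict]) -> dict:
    """Stand-in for an LLM: request the tool once, then answer from its result."""
    last = messages[-1]
    if last["role"] == "tool":
        return {"type": "final", "content": f"Forecast: {last['content']}"}
    return {"type": "tool_call", "name": "get_weather", "argument": "San Francisco"}


def run_agent(model: Callable[[list[dict]], dict],
              question: str,
              max_steps: int = 5) -> str:
    """Reason-Act loop: ask the model, run any requested tool, feed the result back."""
    messages = [{"role": "user", "content": question}]  # the agent's state
    for _ in range(max_steps):
        decision = model(messages)
        if decision["type"] == "final":
            return decision["content"]
        observation = TOOLS[decision["name"]](decision["argument"])
        messages.append({"role": "tool", "content": observation})
    raise RuntimeError("agent did not finish within max_steps")


reply = run_agent(scripted_model, "What is the weather in San Francisco?")
print(reply)
```

The three primitives are all visible here: a model (`scripted_model`), a tool registry (`TOOLS`), and state carried between steps (`messages`). The `max_steps` cap is a design choice that keeps a confused model from looping forever.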

Framework Decision Guide: LangGraph, AutoGen, or CrewAI?

Framework selection is the single most consequential early decision in an agentic project. Changing frameworks after you reach production scale can mean rewriting 50-80% of the agent code.

| Framework | Key Strength | Best Used When |
|---|---|---|
| LangGraph (langchain-ai, 27.4k stars) | Graph-based state management; durable execution; human-in-the-loop native; 34.5M monthly downloads | Building complex, stateful production workflows that must recover from failures and support observability |
| AutoGen (Microsoft, 54k stars) | Multi-agent conversation patterns; group chats; nested conversations; Azure-native | Running collaborative agent teams in the Microsoft ecosystem or research-grade experiments |
| CrewAI (55.6k stars, 60% Fortune 500 users) | Role-based task decomposition; fast prototyping | Shipping business workflows quickly: content pipelines, sales automation, research assistants |
| OpenAI Agents SDK (19k stars, 10.3M monthly downloads) | Lightweight; provider-agnostic; 100+ LLM support; built-in tracing | Teams wanting multi-LLM flexibility with minimal boilerplate |

“Framework choice is not a library decision. It is an architectural commitment that determines how far your agents can scale.”

Code Snippet 2: LangGraph.js TypeScript ReAct Agent

Source: langchain-ai/langgraphjs, README.md

The TypeScript version shows the identical agentic loop running on Node.js with typed tool schemas enforced via Zod. This matters for enterprise teams: agentic AI is not a Python-only capability. Full-stack teams can integrate the same orchestration patterns into their existing TypeScript services without context-switching to a separate data science stack.

Implementation Playbook: Building Your First Production Agent

Teams building their first production agent typically find that the code is the easy part. The hard part is everything around the code: observability, memory strategy, failure recovery, and governance.

Before writing a single line, answer five questions. First, what is the exact boundary of the task the agent owns? Second, which framework matches your team’s language and production requirements? Third, what is the tool schema (inputs, outputs, error codes) for every external system the agent touches? Fourth, does the agent need session memory, cross-session memory, or both? Fifth, at which steps does a human need to approve the agent’s action before it executes?
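The third question, the tool schema, is the easiest to make concrete. Below is a minimal sketch of what "inputs, outputs, error codes" might look like for one tool. It assumes a hypothetical order-lookup tool backed by an in-memory dictionary; none of these names come from a real API.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class ToolError(Enum):
    """Error codes the agent can branch on instead of parsing exception text."""
    NOT_FOUND = "not_found"
    TIMEOUT = "timeout"
    INVALID_INPUT = "invalid_input"


@dataclass(frozen=True)
class LookupOrderInput:
    order_id: str


@dataclass(frozen=True)
class LookupOrderOutput:
    status: Optional[str] = None       # set on success
    error: Optional[ToolError] = None  # set on failure


# Hypothetical in-memory stand-in for an order database.
_ORDERS = {"A-100": "shipped"}


def lookup_order(request: LookupOrderInput) -> LookupOrderOutput:
    """Validate the input, then return either a status or a typed error code."""
    if not request.order_id:
        return LookupOrderOutput(error=ToolError.INVALID_INPUT)
    status = _ORDERS.get(request.order_id)
    if status is None:
        return LookupOrderOutput(error=ToolError.NOT_FOUND)
    return LookupOrderOutput(status=status)


print(lookup_order(LookupOrderInput("A-100")))
print(lookup_order(LookupOrderInput("Z-9")))
```

Returning typed error codes rather than raising gives the planner something structured to react to, for example retrying on `TIMEOUT` but escalating to a human on `NOT_FOUND`.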

McKinsey’s September 2025 research on deploying agentic AI found that governance cannot remain a periodic exercise when agents operate continuously. It must become real-time, data-driven, and embedded, with humans holding final accountability.

“The teams shipping reliable agentic AI are not the ones with the best models. They are the ones with the best observability.”

Frequently Asked Questions

What is agentic AI and how is it different from generative AI? Generative AI produces a single output in response to a single input. Agentic AI plans a sequence of actions, calls tools, evaluates results, and adapts its behavior across multiple steps to achieve a goal, all without a human prompt at each stage. Generative AI answers questions. Agentic AI completes tasks.

Which framework should I use to build AI agents in 2025? Use LangGraph if you need fine-grained control over stateful Python workflows with durable execution and observability. Use AutoGen if you are building in the Microsoft Azure ecosystem or need complex multi-agent conversation patterns. Use CrewAI if you want to ship a role-based agent team quickly. All three are production-tested and actively maintained.

How do I architect a multi-agent system for enterprise workloads? Start with an Orchestrator that delegates to specialized sub-agents. Each sub-agent should own exactly one capability: retrieval, code execution, API calls, or validation. Connect all agents through a shared memory layer and expose every agent action through a logging interface. Never let an agent write to an external system without a human-in-the-loop checkpoint.

What is the biggest risk when deploying agentic AI in production? The primary risk is unauditable autonomous action: an agent takes an external action (sending an email, writing to a database, making an API call) that cannot be traced, explained, or reversed. Mitigate this by treating every external write as a checkpoint requiring human approval during the first 90 days of deployment.
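A checkpoint of this kind can be as small as a wrapper around every external write. The sketch below assumes a synchronous approval callback and an in-memory audit log. `guarded_write`, `AuditEntry`, and `only_internal` are hypothetical names, and the rule-based approver stands in for what would be a human review step.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class AuditEntry:
    action: str
    payload: str
    approved: bool


audit_log: list[AuditEntry] = []


def guarded_write(action: str,
                  payload: str,
                  approve: Callable[[str, str], bool],
                  execute: Callable[[str], None]) -> bool:
    """Run an external write only if the approver says yes; log either way."""
    approved = approve(action, payload)
    audit_log.append(AuditEntry(action, payload, approved))
    if approved:
        execute(payload)
    return approved


# Hypothetical approver and side effect for demonstration purposes.
sent: list[str] = []


def only_internal(action: str, payload: str) -> bool:
    return payload.endswith("@example.com")


guarded_write("send_email", "ops@example.com", only_internal, sent.append)
guarded_write("send_email", "someone@elsewhere.org", only_internal, sent.append)
print(sent)
print([(entry.payload, entry.approved) for entry in audit_log])
```

Rejected actions are logged as well as approved ones, so the audit trail shows what the agent attempted and not only what it did.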

How long does it take to build a working AI agent? A basic single-agent prototype using LangGraph or CrewAI takes one to three days for an experienced developer. A production-grade system with memory, observability, error recovery, and human-in-the-loop governance takes four to eight weeks per agent workflow, depending on the complexity of the tool integrations.

The Agentic Shift Is Already Happening. Are You Building for It?

Three insights stand above all others from this analysis. Agentic AI is not an upgrade to generative AI; it is a distinct architectural paradigm built on orchestration, memory, and tool integration. Framework selection is an architectural decision with long-term consequences, not a library preference. And governance is not a compliance checkbox; it is the engineering discipline that separates scalable agentic systems from expensive experiments.

The 23% of enterprises currently scaling agentic systems have a narrowing lead. The remaining 77% face a choice: build the architecture now, when frameworks are mature and patterns are documented, or wait until the window closes.

What is the first workflow in your organization that a well-designed agent could own end-to-end?
