Definition: An AI agent is a modular software system powered by a large language model that executes a single, well-defined task within explicit boundaries. Agentic AI is the orchestration layer above agents: a multi-agent system that sets its own goals, decomposes complex workflows, routes subtasks to specialized agents, maintains persistent memory, and adapts its plan as conditions change.

Why This Distinction Is Costing Teams Real Money

The terms “AI agents” and “agentic AI” are used interchangeably across vendor pitch decks, developer forums, and analyst reports. That confusion has a measurable cost. Gartner (2025) predicts over 40% of agentic AI projects will be cancelled by end of 2027, citing escalating costs and unclear business value as the primary causes. Much of that failure traces back to a single architectural mistake: teams deploy a single-task AI agent to solve a multi-step, cross-system workflow that only an agentic orchestration layer can handle.

When you confuse the two, you end up with fragmented automation, stalled ROI, and a system that cannot scale. The distinction matters now more than ever, because “agent” has become the default label for everything from a glorified chatbot to a fully autonomous multi-agent pipeline.

“AI agents wait to be called. Agentic AI takes initiative, and that autonomy gap is where enterprise value is being won or lost.”

What Is an AI Agent? Definition and Core Architecture

An AI agent perceives inputs, applies LLM-based reasoning, uses tools via APIs, and executes a single defined task. It is reactive, not proactive. It waits for a call, processes the input within explicit boundaries set at design time, and returns a result.

MIT Sloan (2025) defines AI agents as autonomous software systems that perceive, reason, and act in digital environments to achieve goals on behalf of human principals, with capabilities for tool use, economic transactions, and strategic interaction. That definition captures the unit precisely: one goal, one agent, one outcome.

Practical examples of AI agents in production today include: a customer support bot that resolves a ticket, an invoice processor that extracts and matches line items, a code reviewer that flags security vulnerabilities, and a scheduling agent that books a meeting based on calendar availability. Each of these is bounded, predictable, and designed for a single, well-defined task.

The critical limit of an AI agent is scope. It does not orchestrate other agents, it does not set its own goals, and it does not replan when the environment changes. When the task stays within its design envelope, it works reliably. When the task crosses a boundary, it stalls.

What Is Agentic AI? Definition and Core Architecture

Agentic AI is the system above the agents. It receives a high-level goal, decomposes it into subtasks, routes those subtasks to specialized agents, collects and synthesizes their outputs, evaluates progress against the original goal, and replans when something changes. The key properties are multi-agent collaboration, dynamic task decomposition, persistent memory across agents, and self-directed planning loops.

A landmark 2025 academic review by Sapkota, Roumeliotis, and Karkee (arXiv / Information Fusion) offers the first formal conceptual taxonomy distinguishing the two paradigms. They characterize agentic AI systems as a paradigmatic shift marked by multi-agent collaboration, dynamic task decomposition, persistent memory, and orchestrated autonomy. Application domains for agentic AI include research automation, robotic coordination, and medical decision support: tasks that individual agents could not tackle alone.

Think of the orchestrator as the executive function layer. Individual agents are specialists. The agentic system is the manager that knows which specialist to call, in what order, and what to do when a specialist returns an unexpected result. That is the architectural leap that turns isolated automations into a coherent, goal-directed workflow.
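The decompose, route, evaluate, and replan loop described above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not any framework's API: the `Orchestrator` class, the fixed two-step plan in `decompose`, the `"ERROR"` prefix convention, and the `fallback` agent are all hypothetical, and the specialists are stubs rather than LLM-backed agents.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Hypothetical specialist signature: takes a subtask, returns a result string.
Agent = Callable[[str], str]


@dataclass
class Orchestrator:
    agents: Dict[str, Agent]
    memory: List[str] = field(default_factory=list)  # log kept across all steps
    max_replans: int = 2

    def decompose(self, goal: str) -> List[Tuple[str, str]]:
        # Stand-in for LLM planning: a fixed two-step plan (assumption).
        return [
            ("research", f"gather facts about {goal}"),
            ("write", f"summarize {goal}"),
        ]

    def run(self, goal: str) -> List[str]:
        plan = self.decompose(goal)
        results: List[str] = []
        replans = 0
        while plan:
            role, subtask = plan.pop(0)
            output = self.agents[role](subtask)      # route to the specialist
            self.memory.append(f"{role}: {output}")  # record every outcome
            # Evaluate: on an error, replan by retrying the subtask elsewhere.
            if output.startswith("ERROR") and replans < self.max_replans:
                replans += 1
                plan.insert(0, ("fallback", subtask))
                continue
            results.append(output)
        return results


# Stub specialists; the researcher fails so the replanning path is exercised.
agents = {
    "research": lambda task: "ERROR: primary source unavailable",
    "fallback": lambda task: "facts from cached source",
    "write": lambda task: "two-paragraph summary",
}
orchestrator = Orchestrator(agents)
results = orchestrator.run("Q3 churn")
print(results)
```

The specialists never see the plan; only the orchestrator decides what runs next, which is the manager-and-specialist split described above.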

Head-to-Head: AI Agents vs. Agentic AI

The core difference is scope and autonomy. AI agents handle one task with predictable outputs. Agentic AI orchestrates multiple agents across dynamic, multi-step workflows, adapting to changing conditions without human re-prompting. According to McKinsey’s State of AI (2025), 62% of organizations are experimenting with AI agents, but only 23% have successfully scaled agentic systems, a gap that reflects exactly how much harder the orchestration layer is to deploy than a single agent.

| Dimension | AI Agent | Agentic AI |
|---|---|---|
| Scope | Single, well-defined task | Multi-step, goal-directed workflow |
| Autonomy | Reactive, executes when called | Proactive, sets and re-prioritizes goals |
| Memory | Session-limited or stateless | Persistent across agents and sessions |
| Architecture | Single LLM + tools | Orchestrator + multiple specialized agents |
| Planning | Fixed or scripted logic | Dynamic decomposition and replanning |
| Best Used When | Predictable, repeatable tasks with clear inputs and outputs | Complex cross-system workflows requiring adaptation |

Real-World Use Cases: When to Use Which

Use an AI agent when the task is well-bounded and the outcome is predictable. Use agentic AI when the problem requires multiple steps, cross-system data, dynamic planning, or specialized collaboration across functions.

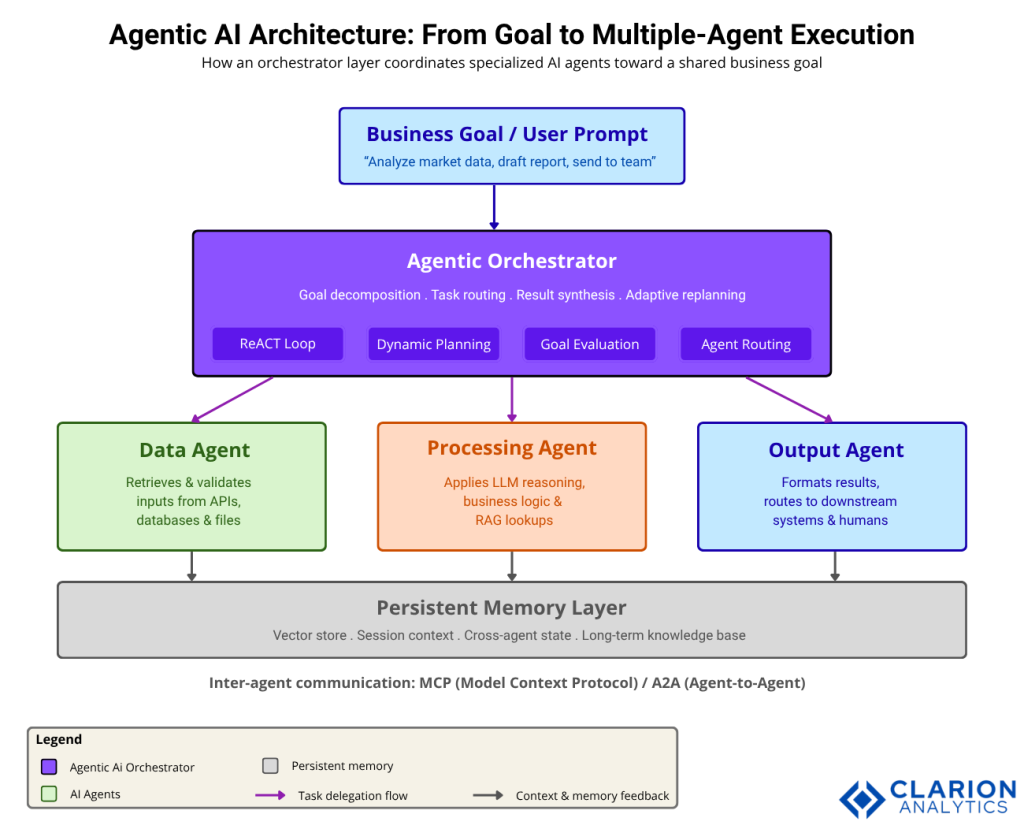

The diagram shows a two-tier architecture. The lower tier contains three specialized AI agents: a Data Agent (retrieves and validates inputs), a Processing Agent (applies business logic and LLM reasoning), and an Output Agent (formats and routes results). The upper tier is the Agentic Orchestrator, which receives a high-level goal, decomposes it into subtasks, routes them to the appropriate agents via MCP or A2A protocols, collects outputs, evaluates progress, and replans as needed. Persistent memory (vector store + session context) runs horizontally across all agents, enabling context sharing without re-prompting.
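As a rough sketch of the lower tier, the three agents can be modeled as functions that communicate only through a shared memory object. Everything here is an assumption made for illustration: `SharedMemory` is a plain dictionary standing in for the vector store and session context, direct function calls stand in for MCP or A2A routing, and the "business logic" is a simple sum.

```python
from typing import Any, Dict, List


class SharedMemory:
    """Stand-in for the vector store + session context (here just a dict)."""

    def __init__(self) -> None:
        self._store: Dict[str, Any] = {}

    def write(self, key: str, value: Any) -> None:
        self._store[key] = value

    def read(self, key: str, default: Any = None) -> Any:
        return self._store.get(key, default)


def data_agent(raw: List[str], memory: SharedMemory) -> None:
    # Retrieve and validate: keep only entries that look like plain numbers.
    valid = [float(x) for x in raw if x.replace(".", "", 1).isdigit()]
    memory.write("validated", valid)


def processing_agent(memory: SharedMemory) -> None:
    # Apply (toy) business logic to whatever the Data Agent left in memory.
    memory.write("total", sum(memory.read("validated", [])))


def output_agent(memory: SharedMemory) -> str:
    # Format the result using context written by the two earlier agents.
    count = len(memory.read("validated", []))
    total = memory.read("total", 0.0)
    return f"Processed {count} records, total = {total:.2f}"


memory = SharedMemory()
data_agent(["10.5", "abc", "4"], memory)
processing_agent(memory)
report = output_agent(memory)
print(report)
```

No agent passes its output to the next one directly. Each reads what it needs from memory, which is what lets context be shared without re-prompting.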

“If individual AI agents are players on a field, agentic AI is the coach, the playbook, and the entire game plan operating at once.”

Code Snippet 1: A Single LangGraph Agent Node (AI Agent Pattern)

Source: langchain-ai/langchain (LangGraph)

This snippet defines a single stateful LangGraph node, the fundamental AI agent unit. It receives an input state, calls an LLM, and returns an output. To build agentic AI, you wire multiple nodes like this into an orchestrated graph, adding a coordinator that manages routing, replanning, and persistent memory.
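The shape of such a node can be sketched without any framework installed. The version below is a dependency-free approximation in plain Python, not LangGraph's own API: `AgentState`, `fake_llm`, and `make_agent_node` are hypothetical names, and the LLM call is a stub.

```python
from typing import Callable, TypedDict


class AgentState(TypedDict, total=False):
    question: str
    answer: str


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real LLM call."""
    return f"(stub answer to: {prompt})"


def make_agent_node(llm: Callable[[str], str]) -> Callable[[AgentState], AgentState]:
    """Build a node: a function from input state to updated state."""

    def agent_node(state: AgentState) -> AgentState:
        answer = llm(state["question"])
        # Return a new state rather than mutating the input.
        return {**state, "answer": answer}

    return agent_node


node = make_agent_node(fake_llm)
final_state = node({"question": "What is an AI agent?"})
print(final_state["answer"])
```

The node is reactive by construction: it does nothing until it is called with a state, and it returns exactly one result.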

The Framework Landscape: LangChain, CrewAI, and AutoGen

LangGraph (part of LangChain) is the leading production-grade agentic orchestration runtime. CrewAI is the fastest route to role-based multi-agent prototypes. Microsoft AutoGen, now being unified with Semantic Kernel into the Microsoft Agent Framework, is best for enterprise Azure environments. LangChain passed 90K GitHub stars in 2025, and CrewAI passed 25K stars while being adopted by 60% of Fortune 500 companies for role-based agentic workflows.

Teams building agentic systems typically find that the framework choice is not a technical detail. It is an architectural commitment that determines how far the system can scale, how easy it is to observe, and how well it holds up when requirements change.

Code Snippet 2: A Two-Agent CrewAI Crew (Agentic AI Pattern)

Source: crewAIInc/crewAI

This snippet defines two specialized agents with distinct roles, goals, and backstories, then chains their tasks so the Writer receives the Researcher’s output as context. This role-based delegation is the hallmark of agentic AI: individual agents are autonomous, but the Crew orchestrates them toward a shared goal.
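The delegation pattern can be illustrated without the library. In the sketch below, `Agent`, `Task`, and `run_crew` are local, hypothetical stand-ins that mirror the concepts named above; they are not CrewAI's classes, and the `act` callables are stubs where a real crew would call an LLM.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Agent:
    role: str
    goal: str
    backstory: str
    # (task description, context from the previous task) -> output
    act: Callable[[str, str], str]


@dataclass
class Task:
    description: str
    agent: Agent


def run_crew(tasks: List[Task]) -> str:
    """Run tasks in order, passing each output to the next task as context."""
    context = ""
    for task in tasks:
        context = task.agent.act(task.description, context)
    return context


researcher = Agent(
    role="Researcher",
    goal="Collect findings on a topic",
    backstory="A careful analyst",
    act=lambda description, context: "3 findings on agentic AI",
)
writer = Agent(
    role="Writer",
    goal="Turn findings into an article",
    backstory="A concise technical writer",
    act=lambda description, context: f"Article based on [{context}]",
)

article = run_crew([
    Task("Research agentic AI adoption", researcher),
    Task("Write a short article", writer),
])
print(article)
```

The Writer never calls the Researcher. The crew runner owns the hand-off, which is the orchestration role the paragraph above assigns to the Crew.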

“The framework you choose is not a technical detail; it is an architectural commitment that determines how far your agentic system can scale.”

Implementation Guidance for CTOs and Engineering Leads

Start with a single, high-value AI agent in production to prove the pattern. Deploy it against a well-bounded task where success is measurable: invoice processing, support triage, code review, or data extraction. Once you have a reliable agent, layer an orchestration framework to build agentic workflows; never attempt enterprise-wide agentic deployment without first establishing governance, observability, and human-in-the-loop checkpoints.

BCG’s The Widening AI Value Gap (2025), based on a survey of 1,250 senior executives, found that agentic AI already drives 17% of total AI business value, with that share expected to reach 29% by 2028. Future-built firms, those with a mature agentic strategy, achieve 3.6x higher three-year total shareholder return (TSR) than laggards. The gap is widening, not narrowing.

In practice, teams building agentic systems spend roughly 80% of their effort on infrastructure work: standardizing data formats for cross-agent sharing, building observability into every agent interaction, establishing governance policies for autonomous actions, and integrating with existing enterprise systems. The model quality matters far less than the plumbing that surrounds it.

“Eighty percent of the work in deploying an agentic AI system is data engineering, stakeholder alignment, and governance, not prompt engineering.”

Frequently Asked Questions

What is the simplest way to explain AI agents vs agentic AI?

An AI agent is a specialist: it handles one defined task when called. Agentic AI is the system that manages a team of specialists: it sets the goal, assigns tasks, integrates results, and adapts when something goes wrong. One is a tool. The other is an operating model.

Do I need agentic AI, or will a single AI agent solve my problem?

If your workflow is linear, has clear inputs and outputs, and always produces a predictable result, a single AI agent is the right choice. If the task crosses systems, requires branching decisions, or needs to adapt to changing inputs mid-execution, you need an agentic architecture with an orchestration layer above the agents.

Which framework should I use to build a multi-agent agentic AI system?

LangGraph is the strongest choice for production systems requiring complex control flow and observability. CrewAI is fastest for role-based agent teams when requirements are stable. Microsoft Agent Framework (the merger of AutoGen and Semantic Kernel), which is targeting GA in early 2026, is the right path for Azure-native enterprises.

How is agentic AI different from traditional automation like RPA?

RPA executes fixed, rule-based scripts on structured data and breaks on exceptions. Agentic AI reasons through ambiguity, handles unstructured data, decomposes novel problems dynamically, and invokes external tools, APIs, and other agents. Where RPA automates repetition, agentic AI automates judgment within defined guardrails.

What is the biggest risk in deploying agentic AI at enterprise scale?

Gartner (2025) predicts over 40% of agentic AI projects will be cancelled by the end of 2027, primarily due to unclear business value, escalating costs, and inadequate governance. The two most common failure patterns are deploying agentic architectures for tasks a single agent handles better, and launching without observability or human-in-the-loop controls.

Three Things That Should Change How You Build

First, the taxonomy distinction is an architectural decision, not semantics. Choosing whether to build a single AI agent or an agentic orchestration layer determines your system’s scope, scalability, and governance model. Get this wrong and the ROI never materializes.

Second, start with AI agents and graduate to agentic orchestration when tasks cross boundaries. The AI agent is the validated unit. Agentic AI is the system you build when one agent is no longer enough. This is not a leap; it is a ladder.

Third, governance and observability are non-negotiable before scaling. Every autonomous action an agentic system takes needs an audit trail, a fallback, and a human checkpoint. The companies pulling ahead are not the ones moving fastest; they are the ones moving deliberately.
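One way to picture the audit trail, fallback, and human checkpoint together is a thin wrapper around every autonomous action. The sketch below is illustrative only: `GovernedAction`, `Governor`, and the `approve` callback are hypothetical names, and a real deployment would write the log to durable storage and route approvals to an actual reviewer.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class GovernedAction:
    name: str
    run: Callable[[], str]
    fallback: Callable[[], str]
    needs_approval: bool = False


@dataclass
class Governor:
    approve: Callable[[str], bool]  # human checkpoint (hypothetical callback)
    audit_log: List[str] = field(default_factory=list)

    def execute(self, action: GovernedAction) -> str:
        # Human checkpoint: sensitive actions need explicit approval first.
        if action.needs_approval and not self.approve(action.name):
            self.audit_log.append(f"{action.name}: rejected by human reviewer")
            return action.fallback()
        try:
            result = action.run()
            self.audit_log.append(f"{action.name}: ok")
            return result
        except Exception as exc:
            # Fallback: a failed action degrades gracefully and is still logged.
            self.audit_log.append(f"{action.name}: failed ({exc}); fallback used")
            return action.fallback()


# The stub reviewer approves everything except refunds.
governor = Governor(approve=lambda name: name != "issue_refund")

reply = governor.execute(
    GovernedAction("draft_reply", lambda: "reply drafted", lambda: "escalated to human")
)
refund = governor.execute(
    GovernedAction(
        "issue_refund",
        lambda: "refund issued",
        lambda: "escalated to human",
        needs_approval=True,
    )
)
print(governor.audit_log)
```

Every path through `execute` appends to the audit log, so no action can complete, fail, or be rejected without leaving a record.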

What is the one workflow in your stack today where a single AI agent keeps hitting its ceiling? That ceiling is exactly where your agentic architecture begins.
