DEFINITION – AI in manufacturing refers to the deployment of machine learning, computer vision, and data-driven analytics across production systems to automate decisions, predict failures, and optimise quality in real time. It spans applications from edge-based defect detection to enterprise-wide supply chain orchestration. Unlike traditional automation, which follows fixed rules, AI systems learn continuously from sensor data, enabling factories to adapt faster than any human operator could.

The $43 Billion Signal Your Factory Cannot Afford to Ignore

The global industrial AI market reached $43.6 billion in 2024 and is forecast to grow at a 23% CAGR to $153.9 billion by 2030, according to IoT Analytics (2025). That is not a forecast built on hype. It reflects a measurable shift: AI in manufacturing is moving from pilot projects to production-grade deployments that reshape cost structures.

According to McKinsey (2023), AI-enabled “lighthouse” factories achieve two to three times the productivity of conventional plants, alongside a 50% improvement in service levels. Manufacturers applying machine learning are three times more likely to improve their key performance indicators. The gap between early adopters and laggards is not closing; it is widening.

This post maps the five highest-ROI use cases, provides a reference architecture your team can build against, and includes working code snippets drawn from production-grade open-source repositories.

“The factories winning today are not the ones with the newest machines. They are the ones whose machines predict their own failures.”

The Five Core Use Cases Driving Real Returns

The five highest-ROI AI applications in manufacturing are predictive maintenance, computer vision quality control, production scheduling optimisation, supply chain intelligence, and generative AI-assisted product design.

Predictive maintenance (PdM) is the dominant application. Grand View Research (2025) confirms that machine learning held the largest technology share in 2024. A 2025 arXiv systematic review quantifies the stakes: a single day of unplanned downtime costs between €100,000 and €200,000, and industrial component failures increased 40% between 2010 and 2018. Deloitte (2024) reports that companies implementing PdM reduce maintenance costs by 25% and cut equipment failures by 70%.

Computer vision quality control is the second pillar. AI-based visual inspection systems analyse every part at line speed, flagging defect patterns that fatigued human inspectors miss. Some 60% of manufacturers now use AI for quality monitoring, detecting 200% more supply chain disruptions than they would with manual processes.

Production planning and scheduling converts sensor telemetry into actionable throughput decisions. 41% of manufacturers now leverage AI to manage supply chain data, and operators using AI-optimised scheduling report a 10–15% boost in production throughput alongside a 4–5% increase in EBITDA.

Supply chain intelligence allows AI systems to anticipate disruptions weeks before they surface. Models trained on logistics, supplier, and geopolitical data give procurement teams a decision window that manual monitoring cannot match.

Generative AI for product design is the newest frontier. Dai et al. (2025), published in Frontiers in Artificial Intelligence, shows that generative AI paired with digital twins enables real-time failure scenario simulation and synthetic data augmentation, cutting the labelled-data bottleneck that stalls most ML programmes.

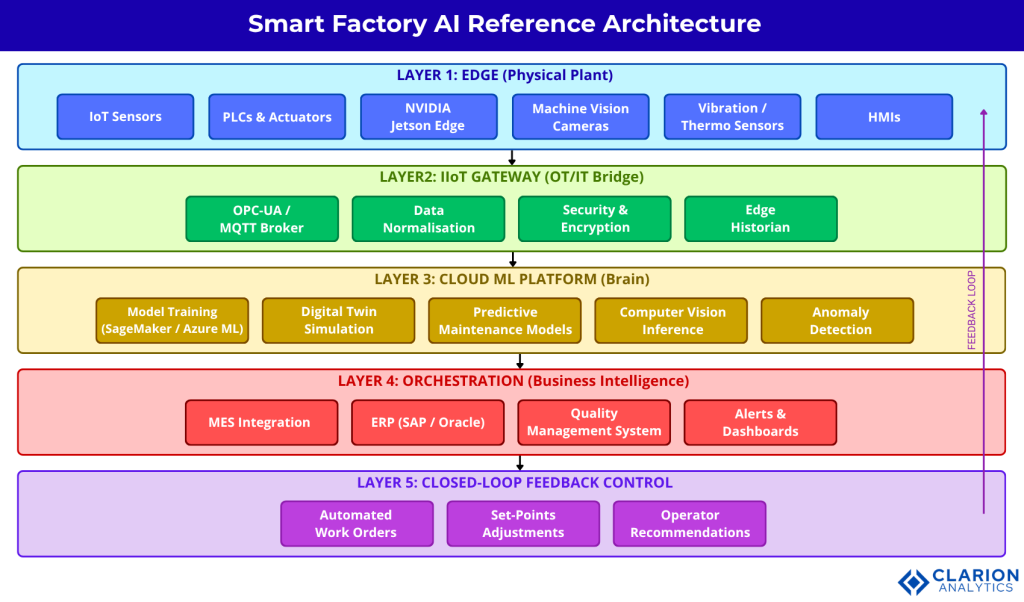

Reference Architecture – How an AI-Enabled Smart Factory Is Actually Wired

A smart factory AI architecture flows from physical sensors at the edge, through an industrial IoT gateway, into a cloud ML pipeline, and back to operational technology via closed-loop control. Understanding this flow is what separates teams that pilot successfully from those that never reach production.

Figure 1. Smart Factory AI Reference Architecture – Five-Layer Model. The diagram illustrates a five-layer smart factory AI architecture. Layer 1 (Edge) captures sensor telemetry and runs lightweight inference on PLCs and Jetson-class devices. Layer 2 (IIoT Gateway) aggregates, filters, and secures OT data before passing it to Layer 3 (Cloud ML Platform) where model training, digital twin simulation, and batch scoring occur. Layers 4 and 5 represent the Orchestration tier (MES/ERP integration) and the Feedback Control loop that closes the operational cycle and updates physical actuators in near-real time.
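
To make the five layers concrete, here is a minimal sketch of the data flow in plain Python. Everything in it is an assumption for illustration: the `Reading` type, the vibration channel, the thresholds, and the `reduce_speed` action are hypothetical, and each function is a stand-in for what would be a real model or integration in production.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Reading:
    asset_id: str
    vibration_mm_s: float  # hypothetical sensor channel


def edge_infer(reading: Reading, alarm_threshold: float = 7.0) -> bool:
    """Layer 1 (Edge): lightweight on-device check, a stand-in for a real model."""
    return reading.vibration_mm_s > alarm_threshold


def gateway_aggregate(readings: list[Reading]) -> dict[str, float]:
    """Layer 2 (IIoT Gateway): collapse raw telemetry into one mean per asset."""
    grouped: dict[str, list[float]] = {}
    for r in readings:
        grouped.setdefault(r.asset_id, []).append(r.vibration_mm_s)
    return {asset: mean(values) for asset, values in grouped.items()}


def cloud_score(features: dict[str, float], baseline: float = 4.0) -> dict[str, float]:
    """Layer 3 (Cloud ML): batch scoring, here a ratio to an assumed healthy baseline."""
    return {asset: value / baseline for asset, value in features.items()}


def orchestrate(scores: dict[str, float], limit: float = 1.5) -> list[str]:
    """Layer 4 (Orchestration): turn scores into work orders for MES/ERP."""
    return [asset for asset, score in sorted(scores.items()) if score > limit]


def feedback(work_orders: list[str]) -> dict[str, str]:
    """Layer 5 (Feedback Control): action sent back towards the actuators."""
    return {asset: "reduce_speed" for asset in work_orders}


readings = [
    Reading("press-1", 3.9), Reading("press-1", 4.1),
    Reading("press-2", 7.5), Reading("press-2", 8.5),
]
edge_alarms = [r.asset_id for r in readings if edge_infer(r)]
actions = feedback(orchestrate(cloud_score(gateway_aggregate(readings))))
print(edge_alarms)
print(actions)
```

The point of the sketch is the shape, not the logic: the edge layer reacts per reading, while the cloud path works on aggregated data and only reaches the actuators through the orchestration tier.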

The ScienceDirect systematic review (2024) documents how automotive OEMs have spent decades building toward exactly this architecture, transitioning from manual time-based maintenance to data-driven frameworks, then adding real-time PLC data feeds and ML-driven decision layers. The path is proven. The remaining challenge is integration discipline, not AI capability.

“Teams building this typically find that 80 percent of the effort lives in the data pipeline, not the model.”

The Technology Stack – Choosing the Right Tools

The core AI manufacturing technology stack combines industrial IoT sensors, edge inference runtimes, cloud ML platforms, and digital twin frameworks. Choosing where to process data matters as much as which model to run.

| Approach | Key Strength | Best Used When |
|---|---|---|
| Cloud-First ML (AWS SageMaker / Azure ML) | Scalable training, managed MLOps, broad ecosystem integration | High data volumes, existing cloud footprint, non-latency-critical inference |
| Edge AI (NVIDIA Jetson, Infineon DEEPCRAFT) | Sub-5ms inference, offline-capable, OT-native deployment | Real-time defect detection, line-speed vision inspection, air-gapped facilities |
| Digital Twin (Siemens Xcelerator, Azure Digital Twins) | Full system simulation, what-if analysis, lifecycle management | Complex multi-asset environments, new plant design, virtual commissioning |

IoT Analytics (2025) identifies edge AI as the fastest-growing deployment pattern, driven by Infineon’s DEEPCRAFT (October 2024) and Qualcomm’s Edge Impulse acquisition (March 2025). Inference is moving closer to the machine.
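
The selection logic in the table above can be written down as a simple rule of thumb. The sketch below is only that: the function name, its parameters, and the 50 ms cut-off are assumptions chosen for illustration, not figures from any of the cited sources.

```python
def choose_deployment(latency_budget_ms: float,
                      has_connectivity: bool,
                      needs_simulation: bool) -> str:
    """Map workload requirements to one of the three approaches in the table."""
    if needs_simulation:
        # What-if analysis and virtual commissioning point to a digital twin.
        return "digital twin"
    if latency_budget_ms < 50 or not has_connectivity:
        # Tight latency or an air-gapped site rules out a cloud round trip.
        return "edge"
    return "cloud"


print(choose_deployment(5, True, False))     # line-speed vision inspection
print(choose_deployment(500, True, False))   # overnight batch scoring
print(choose_deployment(500, False, False))  # air-gapped facility
```

In practice the answer is rarely exclusive; a plant may well train in the cloud and run the resulting model at the edge.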

The McKinsey State of AI (2025) confirms that AI high-performers invest more than 20% of their digital budgets in AI and are three times more likely to scale successfully, pointing at sustained infrastructure investment, not one-off pilots.

Implementation Playbook – From Pilot to Production

A manufacturing AI rollout succeeds in three phases: a targeted pilot on one production line, a validated integration sprint connecting AI to existing MES/ERP systems, and a governed scale-out standardising model retraining and drift monitoring. Each phase has a different failure mode.
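
Drift monitoring in the scale-out phase can start very simply. The sketch below flags a sensor whose recent mean has moved several baseline standard deviations away from its training-time mean. The function names, the sample temperatures, and the threshold of 3.0 are assumptions for illustration; production systems typically use richer tests across many features.

```python
from statistics import mean, stdev


def mean_shift_score(baseline: list[float], recent: list[float]) -> float:
    """How many baseline standard deviations the recent mean has moved."""
    sigma = stdev(baseline)
    if sigma == 0:
        raise ValueError("baseline has no variance; cannot scale the shift")
    return abs(mean(recent) - mean(baseline)) / sigma


def needs_retraining(baseline: list[float], recent: list[float],
                     threshold: float = 3.0) -> bool:
    """Flag drift when the shift exceeds the (assumed) threshold."""
    return mean_shift_score(baseline, recent) > threshold


baseline_temps = [70.0, 71.0, 69.0, 70.5, 69.5, 70.0]  # training-time readings
stable_window = [70.2, 69.8, 70.1]
drifted_window = [75.0, 76.0, 74.5]

print(needs_retraining(baseline_temps, stable_window))
print(needs_retraining(baseline_temps, drifted_window))
```

A check like this is cheap enough to run on every scoring batch, which makes it a reasonable first gate before a governed retraining job is triggered.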

In practice, the fastest wins come from predictive maintenance because the data already exists. Sensor historians, SCADA logs, and maintenance records are sufficient to train an initial model. Start there.

Code Snippet 1 – Bidirectional LSTM for Remaining Useful Life Prediction

Source: awslabs/predictive-maintenance-using-machine-learning – sagemaker_predictive_maintenance_entry_point.py

This snippet configures a bidirectional LSTM that reads raw sensor time-series from both temporal directions simultaneously. The final Dense(1) layer outputs a scalar Remaining Useful Life prediction. Swap the NASA turbofan dataset for your own sensor historian exports and retrain. The SageMaker orchestration in the full repository handles training job scheduling and model versioning out of the box.
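
Before any sequence model can be retrained on your own historian exports, the run-to-failure data has to be cut into fixed-length windows, each with a Remaining Useful Life label. The sketch below illustrates that preparation step only. It is not taken from the awslabs repository; `make_rul_windows`, its parameters, and the optional label cap (a common practice with turbofan-style data, assumed here) are hypothetical.

```python
from typing import List, Optional, Tuple

Cycle = List[float]  # one row of sensor values for a single operating cycle


def make_rul_windows(series: List[Cycle], window: int,
                     rul_cap: Optional[int] = None
                     ) -> Tuple[List[List[Cycle]], List[int]]:
    """Slice one run-to-failure unit into windows with RUL labels.

    `series` holds one row per cycle; the last row is assumed to be the
    final cycle before failure, so its RUL is 0.
    """
    n = len(series)
    if window < 1 or window > n:
        raise ValueError("window must be between 1 and len(series)")
    windows, labels = [], []
    for end in range(window, n + 1):
        windows.append(series[end - window:end])
        rul = n - end  # cycles remaining after the last row in this window
        labels.append(rul if rul_cap is None else min(rul, rul_cap))
    return windows, labels


# Five cycles, two sensors per cycle (made-up values).
unit = [[0.1, 20.0], [0.2, 20.5], [0.4, 21.0], [0.7, 22.5], [1.1, 25.0]]
windows, labels = make_rul_windows(unit, window=3)
print(len(windows), labels)
```

Each window has shape (window, sensors), which is the layout a recurrent model consumes; capping the label keeps long healthy stretches from dominating the loss.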

Code Snippet 2 – TensorRT-Accelerated Defect Detection Inference

Source: HROlive/Computer-Vision-for-Industrial-Inspection – industrial_inspection.ipynb (illustrative extract)

This snippet demonstrates the TensorRT inference path achieving sub-5ms per-frame classification on NVIDIA Jetson hardware. The CUDA memory management pattern uses persistent GPU buffers to minimise transfer overhead. For CTOs evaluating vision hardware: at that latency, 100% inline inspection is feasible without slowing the production line, provided image capture and pre-processing also fit within the per-part cycle time.
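
Whether a given inference latency keeps up with the line is simple arithmetic: the per-part cycle time must cover capture plus inference. The helper below is a back-of-the-envelope sketch; the function name, the 600 parts-per-minute line rate, and the 15 ms capture time are assumptions for illustration, not measurements.

```python
def inspection_headroom(parts_per_minute: float, inference_ms: float,
                        capture_ms: float = 0.0) -> float:
    """Fraction of the per-part cycle time left after capture and inference.

    A negative result means the vision system cannot keep up with the line.
    """
    if parts_per_minute <= 0:
        raise ValueError("parts_per_minute must be positive")
    cycle_ms = 60_000.0 / parts_per_minute  # time available per part
    return 1.0 - (inference_ms + capture_ms) / cycle_ms


# Assumed line: 600 parts/min gives a 100 ms cycle per part.
headroom = inspection_headroom(600, inference_ms=5.0, capture_ms=15.0)
print(f"{headroom:.0%} of the cycle remains")
```

Running the same calculation with your own line rate and camera timings is a quick way to sanity-check a hardware shortlist before any benchmarking.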

“Manufacturers that apply machine learning are 3 times more likely to improve their key performance indicators; the gap between early adopters and laggards is widening fast.”

The Deloitte State of AI 2025 identifies the AI skills gap as the single biggest barrier to integration, not technology readiness. Teams building smart factory systems should plan for a dedicated ML engineering hire or system integrator partnership early in the roadmap. Worker access to AI rose 50% in 2025, and the number of companies with 40% or more of their AI projects in active production is set to double within six months.

FAQ – AI in Manufacturing: Questions Engineers and CTOs Actually Ask

What is AI in manufacturing and how does it differ from traditional automation?

Traditional automation executes fixed rules; a robot arm moves when a sensor triggers. AI in manufacturing learns from historical and real-time data to make probabilistic decisions: predicting when a bearing will fail, or classifying a part as defective without explicit programming for each defect type. The difference is adaptability. AI systems improve with data; rule-based automation does not.

How long does it take to see ROI from predictive maintenance AI?

Most deployments show measurable ROI within 6 to 12 months. Deloitte (2024) documents organisations achieving full AI ROI within that window. The fastest returns come from facilities with existing sensor infrastructure and accessible maintenance history logs. Cold-start deployments without historical data typically require a 3–6 month data collection phase before model training begins.

Do I need to replace existing PLCs and SCADA systems to implement AI?

No. The dominant pattern is non-invasive integration: read-only data connectors pull telemetry from existing historians and OPC-UA endpoints into an IIoT gateway layer. The AI sits above the OT stack, sending recommendations to operators or writing set-point adjustments back through a controlled interface. Full rip-and-replace is unnecessary and rarely justified by ROI.
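
One way to picture the "controlled interface" for write-back is a guard that bounds every AI recommendation before it reaches the controller. The sketch below assumes a hypothetical `SetpointLimits` type and made-up temperature values; a real deployment would enforce equivalent limits in the PLC or gateway as well.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SetpointLimits:
    low: float       # lowest value the process may run at
    high: float      # highest value the process may run at
    max_step: float  # largest change allowed in a single write


def guarded_setpoint(current: float, recommended: float,
                     limits: SetpointLimits) -> float:
    """Clamp an AI recommendation to a bounded step inside the safe range."""
    step = max(-limits.max_step, min(limits.max_step, recommended - current))
    return max(limits.low, min(limits.high, current + step))


oven = SetpointLimits(low=150.0, high=200.0, max_step=5.0)
print(guarded_setpoint(180.0, 195.0, oven))  # large jump is rate-limited
print(guarded_setpoint(198.0, 230.0, oven))  # capped at the upper limit
print(guarded_setpoint(180.0, 178.0, oven))  # small change passes through
```

Keeping the guard outside the model means the safety envelope stays fixed even when the model is retrained or replaced.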

What is the difference between edge AI and cloud AI for factory applications?

Edge AI runs inference on hardware located at or near the machine (NVIDIA Jetson devices, industrial PCs, or microcontrollers), achieving sub-5ms latency and operating without internet connectivity. Cloud AI handles model training, large-batch scoring, and digital twin simulation where latency is not critical. Most production architectures use both: edge for real-time decisions, cloud for model improvement.

How do digital twins work with AI in a manufacturing environment?

A digital twin is a continuously updated virtual replica of a physical asset or process, fed by real sensor data. AI enhances it in two directions: predictive models forecast future states (remaining useful life, thermal runaway risk), while generative AI synthesises rare failure scenarios to augment training datasets. Dai et al. (2025) demonstrates this integration in production-relevant manufacturing environments.

Conclusion – Three Truths, One Decision

Three conclusions hold across every source reviewed. First, the market is committed: Grand View Research (2025) puts the AI manufacturing market on track for a 46.5% CAGR through 2030. Second, the data pipeline is the real project: data engineering, OT integration, and labelling consume 80% of implementation effort, and the model is the easy part. Third, the skills gap is the ceiling: Deloitte (2025) confirms it is the number-one barrier, and it will not resolve itself.

“The question is no longer whether AI will transform manufacturing. It is whether your team will lead that transformation or respond to someone else’s.”
