Definition: A vision-language model (VLM) is a multimodal AI system that jointly processes image and text inputs to generate text outputs. VLMs typically combine a visual encoder (usually a Vision Transformer, often CLIP-pretrained) with a large language model decoder, connected through a cross-modal projection layer. They enable tasks such as image captioning, visual question answering, document parsing, and multimodal reasoning, all from a single unified architecture.

Why Vision-Language Models Matter Now

According to Gartner (2024), 40% of generative AI solutions will be multimodal by 2027, up from just 1% in 2023. For CTOs and developers building the next generation of products, vision-language models are rapidly moving from research curiosity to production infrastructure. The question is no longer whether to integrate VLMs, but how to do so effectively.

The global AI market reached $638 billion in 2024 and is forecast to exceed $3.6 trillion by 2034, with multimodal AI driving a significant share of that growth (Precedence Research, 2025). A further Gartner prediction from 2025 projects that 80% of enterprise software will be multimodal by 2030, up from less than 10% in 2024. That trajectory makes now the right time to build fluency with VLM architecture and tooling.

This guide walks through how VLMs work, which architectures matter, what tools are available today, and how to start building. Every section maps to a real pain point developers hit when they first approach this space.

The 80% enterprise multimodal adoption forecast by 2030 means VLM fluency is now a core engineering skill, not an optional specialization.

How Vision-Language Models Actually Work

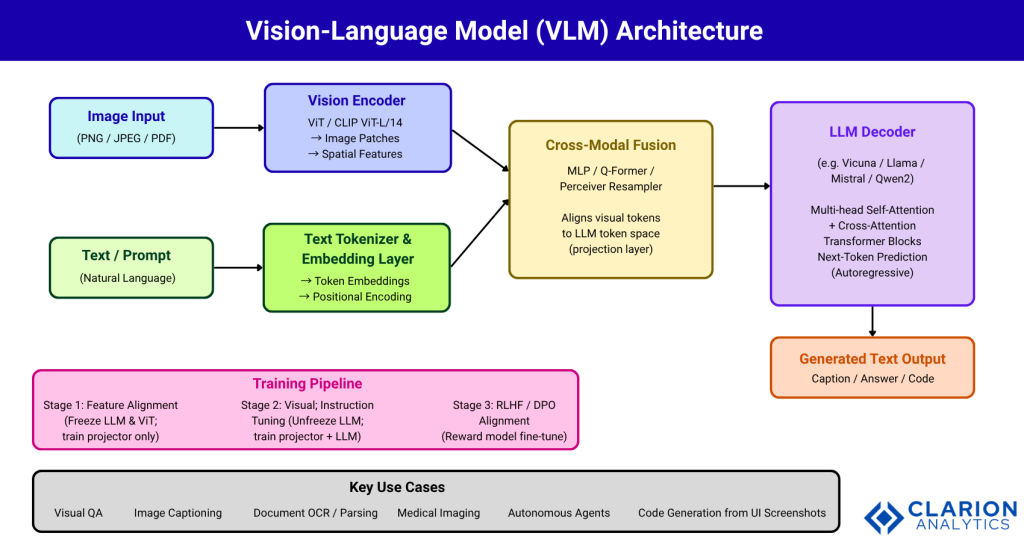

A VLM bridges two fundamentally different data modalities by encoding each one separately, then fusing them before generation. The architecture has three critical components: a vision encoder, a cross-modal projector, and a language model decoder.

The vision encoder, most commonly a Vision Transformer (ViT) or a CLIP-pretrained model, converts an image into a sequence of patch tokens. Each patch captures spatial and semantic information. The LLaVA paper (Liu et al., NeurIPS 2023) demonstrated that connecting CLIP ViT-L/14 to a language decoder through a simple linear projector already yields strong multimodal chat capabilities. On a synthetic multimodal instruction-following dataset, it reached an 85.1% relative score compared with GPT-4.
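The sketch below makes the patch-token idea concrete. It splits an image into non-overlapping square patches and flattens each one, which is the step a ViT performs before its learned patch embedding. The image is represented as a plain nested list of pixel values. This is a pure-Python illustration under simplifying assumptions: a single channel, image sides divisible by the patch size, and no embedding or [CLS] token. The 224- and 336-pixel sizes with patch size 14 correspond to the two CLIP ViT-L/14 input resolutions.

```python
def patchify(image, patch_size):
    """Split an H x W single-channel image (list of rows) into flattened patches.

    Patches come out in raster order (left to right, top to bottom),
    which is the sequence order a ViT feeds to its transformer layers.
    """
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image sides must be divisible by patch_size")
    patches = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            # Flatten one patch_size x patch_size block into a single vector.
            patch = [image[r][c]
                     for r in range(top, top + patch_size)
                     for c in range(left, left + patch_size)]
            patches.append(patch)
    return patches


def num_patch_tokens(side, patch_size):
    """Number of patch tokens for a square image (excluding any [CLS] token)."""
    return (side // patch_size) ** 2


if __name__ == "__main__":
    tiny = [[r * 4 + c for c in range(4)] for r in range(4)]  # 4x4 toy image
    print(patchify(tiny, 2)[0])        # top-left 2x2 patch
    print(num_patch_tokens(224, 14))   # tokens at 224 px
    print(num_patch_tokens(336, 14))   # tokens at 336 px
```

Moving from 224 to 336 pixels raises the count from 256 to 576 patch tokens. This is one reason higher input resolution costs more compute downstream.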

The cross-modal projector is the architectural lynchpin. It maps visual token embeddings into the same dimensional space used by the LLM, allowing the decoder to attend to image tokens just as it attends to text tokens. This projection can be as simple as a linear layer (LLaVA v1.0) or as complex as a Q-Former module (BLIP-2) or Perceiver Resampler (Flamingo).
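The sketch below shows the shape of a two-layer MLP projector (Linear, GELU, Linear), the kind of connector LLaVA-1.5 uses in place of the original single linear layer. Weights here are small random values for illustration; a real projector learns them during training. The toy dimensions are arbitrary. The parameter-count helper assumes 1024-dimensional CLIP ViT-L features mapped into a 5120-dimensional LLM embedding space, which matches a 13B Llama-family decoder.

```python
import math
import random


def gelu(x):
    """Exact GELU: x * Phi(x), using the Gaussian CDF via math.erf."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def linear(vec, weight, bias):
    """y = W x + b, with the weight stored as a list of output rows."""
    return [sum(w * v for w, v in zip(row, vec)) + b for row, b in zip(weight, bias)]


def init_linear(d_in, d_out, rng):
    """Small random weights; a trained projector would learn these values."""
    weight = [[rng.gauss(0.0, 0.02) for _ in range(d_in)] for _ in range(d_out)]
    bias = [0.0] * d_out
    return weight, bias


class MLPProjector:
    """Two-layer connector: vision_dim -> llm_dim -> llm_dim (Linear, GELU, Linear)."""

    def __init__(self, vision_dim, llm_dim, seed=0):
        rng = random.Random(seed)
        self.fc1 = init_linear(vision_dim, llm_dim, rng)
        self.fc2 = init_linear(llm_dim, llm_dim, rng)

    def __call__(self, patch_tokens):
        # Each visual token is projected independently; sequence length is unchanged.
        out = []
        for tok in patch_tokens:
            hidden = [gelu(h) for h in linear(tok, *self.fc1)]
            out.append(linear(hidden, *self.fc2))
        return out


def projector_param_count(vision_dim, llm_dim):
    """Weights plus biases of both linear layers."""
    return (vision_dim * llm_dim + llm_dim) + (llm_dim * llm_dim + llm_dim)


if __name__ == "__main__":
    proj = MLPProjector(vision_dim=4, llm_dim=6)
    projected = proj([[0.1, 0.2, 0.3, 0.4]] * 3)   # 3 visual tokens of width 4
    print(len(projected), len(projected[0]))        # still 3 tokens, now width 6
    print(projector_param_count(1024, 5120))
```

A design difference is visible here. A Q-Former or Perceiver Resampler maps the patches to a fixed, usually smaller set of query tokens. An MLP projector instead keeps one output token per input patch, so the LLM sees every patch.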

The LLM decoder then performs autoregressive text generation, conditioned on the combined sequence of visual and language tokens. Training typically proceeds in stages. The first stage aligns the projector while both the vision encoder and the LLM are frozen. The second fine-tunes on instruction-following data; in LLaVA, this stage updates the projector and the LLM, and the vision encoder stays frozen.
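The stage schedule amounts to a set of frozen or trainable flags per component. The sketch below tallies trainable parameters per stage. The component sizes are rounded, illustrative assumptions rather than counts read from a checkpoint: about 0.3B for a CLIP ViT-L/14 encoder, 13B for the LLM, and a 1024-to-5120 two-layer MLP projector. Stage 2 follows LLaVA in keeping the vision encoder frozen and updates the LLM fully, with no LoRA.

```python
# Approximate component sizes (assumptions, rounded): CLIP ViT-L/14 ~0.3B,
# a 13B-class LLM, and a 1024 -> 5120 two-layer MLP projector.
COMPONENT_PARAMS = {
    "vision_encoder": 300_000_000,
    "projector": 31_467_520,
    "llm": 13_000_000_000,
}

# Which components receive gradient updates in each LLaVA-style stage.
STAGES = {
    "stage1_feature_alignment": {"projector"},
    "stage2_instruction_tuning": {"projector", "llm"},  # vision encoder stays frozen
}


def trainable_params(stage):
    """Sum the parameters of the components that are unfrozen in the given stage."""
    return sum(COMPONENT_PARAMS[name] for name in STAGES[stage])


def trainable_fraction(stage):
    """Trainable parameters as a fraction of the whole model."""
    return trainable_params(stage) / sum(COMPONENT_PARAMS.values())


if __name__ == "__main__":
    for stage in STAGES:
        print(f"{stage}: {trainable_params(stage):,} trainable "
              f"({trainable_fraction(stage):.2%} of total)")
```

Stage 1 touches well under 1% of the weights, which is why it is cheap. Full stage-2 tuning updates nearly the whole model, and that cost is what LoRA targets.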

The projection layer is the architectural lynchpin of every VLM: it translates visual features into the same token space the language model already speaks.

VLM Architecture Overview

Figure 1 | VLM Architecture Overview. A vision encoder converts image patches to spatial feature tokens. A cross-modal fusion layer (MLP projector, Q-Former, or Perceiver Resampler) aligns those tokens to the LLM embedding space. The autoregressive LLM decoder generates text from the unified token sequence. Training advances through three stages: projector-only alignment, full visual instruction tuning, and RLHF/DPO alignment.

Real-World Use Cases for VLMs

VLMs are already in production across industries. The unifying pattern: any workflow where a human currently looks at an image and types a description can potentially be automated with a VLM.

In healthcare, VLMs analyze radiology images and generate structured reports. A radiologist uploads a chest CT; the model outputs a draft report flagging anomalies with location references. Teams report that this cuts initial reporting time by 40%, with clinicians reviewing and approving rather than writing from scratch.

In autonomous systems and robotics, vision-language-action (VLA) models process camera feeds alongside natural language instructions to execute manipulation tasks. Research published in Nature Machine Intelligence (2025) identified the key design choices for VLA performance, finding that backbone selection and cross-embodiment data are the two highest-impact levers.

In document intelligence, VLMs process scanned PDFs, invoices, contracts, and forms, extracting structured data without custom OCR pipelines. A single model handles layout understanding, table extraction, and field mapping across document types.
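In practice, field mapping usually means asking the model for a fixed set of fields in a machine-readable format and then validating the reply. The sketch below assumes the VLM has been prompted to answer with a JSON object. The field names (`invoice_number`, `total`, `due_date`) and the sample reply are hypothetical. The model call itself is omitted so the parsing and validation logic runs on its own.

```python
import json
import re

# Hypothetical schema for an invoice-extraction prompt.
REQUIRED_FIELDS = ("invoice_number", "total", "due_date")

PROMPT = (
    "Extract the following fields from this invoice and reply with JSON only: "
    + ", ".join(REQUIRED_FIELDS)
    + ". Use null for any field you cannot read."
)


def parse_extraction(reply):
    """Parse a VLM reply into a dict and list the fields that are missing or null.

    Models sometimes add prose around the JSON, so the span from the first
    '{' to the last '}' is extracted before parsing.
    """
    match = re.search(r"\{.*\}", reply, flags=re.DOTALL)
    if match is None:
        raise ValueError("no JSON object found in model reply")
    record = json.loads(match.group(0))
    missing = [field for field in REQUIRED_FIELDS if record.get(field) is None]
    return record, missing


if __name__ == "__main__":
    reply = 'Sure: {"invoice_number": "INV-001", "total": 129.5, "due_date": null}'
    record, missing = parse_extraction(reply)
    print(record, missing)
```

Records with missing fields can be routed to human review instead of going straight into a downstream system.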

In retail and e-commerce, VLMs power visual search, automatic product tagging, and quality inspection on production lines. According to Gartner (2025), VLMs are reshaping product categories by turning passive image archives into actionable, searchable assets.

Three VLM Architecture Patterns Compared

Not every VLM fits the same architecture. Three dominant patterns have emerged, each with different performance-compute tradeoffs. Choosing the right one depends on your latency budget, hardware constraints, and task complexity.

VLM Architecture Comparison

| Architecture Pattern | Representative Models | Key Strength | Best Used When |
|---|---|---|---|
| Fully Integrated Multimodal Transformer | Gemini 2.5 Pro, GPT-4o | Deepest cross-modal reasoning; natively multimodal training | You need top-tier accuracy and cost is secondary |
| LLM + Visual Adapter (MLP/Q-Former) | LLaVA-1.5, BLIP-2, InstructBLIP | Modular, efficient; reuses existing pretrained LLMs and ViTs | Balancing performance and compute; fine-tuning on custom data |
| Parameter-Efficient / Edge Models | SmolVLM, Phi-4 Multimodal, MobileVLM | Runs on consumer GPUs or on-device; minimal VRAM | Edge deployment, latency-sensitive apps, IoT contexts |
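A quick way to reason about the hardware column is weight memory, which is parameter count times bytes per parameter. The sketch below covers weights only. Activations, the KV cache for visual and text tokens, and framework overhead all add to it, so treat the results as a lower bound. The parameter counts are illustrative round numbers, not figures for specific checkpoints.

```python
# Bytes per parameter for common storage precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params, precision):
    """Memory needed just to hold the weights, in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    sizes = [("2B edge model", 2e9), ("7B adapter model", 7e9), ("13B adapter model", 13e9)]
    for name, params in sizes:
        row = ", ".join(f"{p}: {weight_memory_gb(params, p):.1f} GB" for p in ("fp16", "int4"))
        print(f"{name}: {row}")
```

This arithmetic explains why 4-bit quantization matters for adapter models. It brings a 13B model's weights from about 26 GB at fp16 to about 6.5 GB.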

In practice, teams building enterprise document pipelines typically gravitate toward the adapter pattern. The LLaVA family provides strong baselines with minimal training cost, and the LLaVA-1.5 paper (Liu et al., CVPR 2024) showed that a two-layer MLP connector plus high-resolution input achieves state-of-the-art results across 11 benchmarks using only 1.2M publicly available training samples.

Adapter-pattern VLMs such as LLaVA, BLIP-2, and InstructBLIP give teams roughly 90% of frontier performance at a fraction of the training cost, making them the pragmatic starting point for most production builds.

Key Tools and Open-Source Repositories

Three repositories dominate VLM development. Each addresses a different layer of the stack.

haotian-liu/LLaVA – 20,000+ stars. The canonical open-source VLM codebase. Provides the full training and inference stack for LLaVA and LLaVA-NeXT, including LoRA support, 4-bit quantization, and support for Llama 3, Qwen-1.5, and Mistral backbones. Use this for training custom VLMs on proprietary image-text datasets.

huggingface/transformers – 158,000+ stars, updated daily. The standard inference library for VLMs. Supports BLIP-2, LLaVA, InstructBLIP, Idefics, Qwen2-VL, and dozens more via the Pipeline API. If you need inference in three lines of code, start here.

mlfoundations/open_flamingo – 3,500+ stars. The open-source implementation of DeepMind’s Flamingo architecture, built for in-context few-shot multimodal learning using interleaved image-text sequences. Most relevant when your task requires few-shot generalization with minimal labeled data.

Implementation: From Inference to Fine-Tuning

The fastest path to VLM inference is the Hugging Face Transformers Pipeline API. The snippet below, drawn from the transformers documentation, launches a visual question-answering pipeline in three lines.

Code Snippet 1 – VLM Inference with Hugging Face Transformers Pipeline

Source: huggingface/transformers — Pipeline API
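Below is a minimal sketch of this pattern. It assumes a recent `transformers` install (plus `Pillow` and a backend such as PyTorch) and the `visual-question-answering` pipeline task. Model loading is wrapped in a function so the pipeline is built only when called. The `answer_question` helper and the example file name are illustrative and not part of the library.

```python
def build_vqa_pipeline(model=None):
    """Create a visual question-answering pipeline.

    With model=None, transformers picks its default checkpoint for the task;
    pass a Hub model id to choose a specific VQA model.
    """
    from transformers import pipeline  # imported lazily: heavy dependency

    return pipeline("visual-question-answering", model=model)


def answer_question(vqa, image, question):
    """Return the highest-scoring answer string.

    `image` can be a PIL image, a local path, or a URL. The VQA pipeline
    returns a list of {"score": ..., "answer": ...} dicts.
    """
    predictions = vqa(image=image, question=question, top_k=1)
    return predictions[0]["answer"]


# Usage (downloads a model on first run):
# vqa = build_vqa_pipeline()
# print(answer_question(vqa, "invoice.png", "What is the total amount due?"))
```

Passing the pipeline into `answer_question` keeps the helper testable with a stand-in callable and avoids loading a model.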

What this demonstrates: The Pipeline API abstracts the entire VLM inference stack (image loading, ViT encoding, projection, and LLM decoding) into a single callable. The model, tokenizer, and vision preprocessor are fetched from the Hugging Face Hub. This is the fastest path to a working prototype for product teams exploring VLM capabilities, and it requires no knowledge of the underlying architecture.

Code Snippet 2 – LLaVA Two-Stage Training Launch (LoRA)

Source: haotian-liu/LLaVA — scripts/finetune_lora.sh

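```bash
# Stage 2: Visual instruction tuning with LoRA
# Requires: 1x A100 80GB, ~20hrs for 13B model
# Abridged: the repository script passes additional flags (batch size, epochs, etc.).
# --pretrain_mm_mlp_adapter loads the projector trained in Stage 1 (path assumed).
deepspeed llava/train/train_mem.py \
    --deepspeed ./scripts/zero3.json \
    --lora_enable True \
    --lora_r 128 \
    --lora_alpha 256 \
    --mm_projector_lr 2e-5 \
    --learning_rate 2e-4 \
    --model_name_or_path lmsys/vicuna-13b-v1.5 \
    --version v1 \
    --data_path ./data/llava_v1_5_mix665k.json \
    --image_folder ./data/images \
    --vision_tower openai/clip-vit-large-patch14-336 \
    --pretrain_mm_mlp_adapter ./checkpoints/llava-v1_5-13b-pretrain/mm_projector.bin \
    --mm_projector_type mlp2x_gelu \
    --bf16 True \
    --output_dir ./checkpoints/llava-v1_5-13b-lora
```

What this demonstrates: LoRA (Low-Rank Adaptation) reduces trainable parameters by over 90%, making it practical to fine-tune a 13B VLM on a single GPU. The key flags:

- lora_r controls the rank of the adaptation matrices (higher = more capacity, more memory).
- lora_alpha scales the adapter update by lora_alpha / lora_r, which is 2 here.
- learning_rate applies to the LoRA adapters.
- mm_projector_lr sets a separate learning rate for the cross-modal connector.

The base LLM weights stay frozen and receive no updates.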

LoRA fine-tuning cuts trainable parameters by over 90%. That makes it viable to adapt a 13B VLM on a single A100, and it ranks among the most impactful practical advances for enterprise VLM adoption.
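The "over 90%" figure can be checked with back-of-the-envelope arithmetic. A LoRA adapter on a `d_in x d_out` linear layer adds an `r x d_in` matrix and a `d_out x r` matrix, so it trains `r * (d_in + d_out)` parameters. The sketch makes two assumptions. First, LoRA is attached to all seven linear projections in each decoder layer: query, key, value, output, and the three MLP projections. Second, the Vicuna-13B backbone has Llama-2-13B dimensions: hidden size 5120, MLP size 13824, and 40 layers. Projector and embedding parameters are ignored.

```python
def lora_params_per_layer(r, hidden, mlp):
    """Trainable LoRA parameters for one decoder layer.

    Each adapted d_in x d_out weight gets A (r x d_in) and B (d_out x r),
    i.e. r * (d_in + d_out) parameters.
    """
    attention = 4 * r * (hidden + hidden)   # q, k, v, o projections
    feed_forward = 3 * r * (hidden + mlp)   # gate, up, and down projections
    return attention + feed_forward


def lora_summary(r=128, hidden=5120, mlp=13824, layers=40, base_params=13e9):
    """Total LoRA parameters and their share of an approximately 13B base model."""
    trainable = layers * lora_params_per_layer(r, hidden, mlp)
    return trainable, trainable / base_params


if __name__ == "__main__":
    trainable, fraction = lora_summary()
    print(f"LoRA trainable params: {trainable:,} ({fraction:.1%} of ~13B)")
```

At rank 128 this comes to about 0.5B trainable parameters, under 4% of the base model, which is consistent with a reduction of over 90%. Lowering the rank shrinks the count proportionally.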

Navigating Hallucination and Production Challenges

Deploying VLMs in production surfaces challenges that benchmarks don’t fully capture. Three dominate production post-mortems: hallucination, compute cost, and evaluation difficulty.

Hallucination occurs when the model generates text not grounded in the image. Research from 2025 shows that most VLMs evaluated, with GPT-4o the notable exception, rely heavily on language priors and sometimes ignore image evidence entirely. Mitigation strategies include RLHF-V, RLAIF-V, and Visual Concept Modeling (VCM). VCM trains the model to attend only to instruction-relevant visual tokens, achieving an 85% FLOPs reduction with minimal accuracy loss (EmergentMind, 2025).

Compute cost remains a deployment barrier, particularly for high-resolution inputs, which produce more visual tokens. Token compression techniques like A-VL have demonstrated roughly 2x inference speedup with negligible accuracy loss. In practice, teams often find that aggressive token compression at inference time is the highest-ROI optimization, frequently outpacing quantization for production workloads.
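One simple family of token-compression methods keeps only the visual tokens that score highest on some relevance signal, such as the attention they receive from the text query, and drops the rest before the LLM sees them. The sketch below is a generic top-k pruning step and not the A-VL method itself. The relevance scores are assumed to be supplied by the caller.

```python
import heapq


def prune_visual_tokens(tokens, scores, keep_ratio=0.5):
    """Keep the highest-scoring fraction of visual tokens, preserving their order.

    tokens: list of token embeddings (any objects)
    scores: one relevance score per token, e.g. text-to-image attention mass
    """
    if len(tokens) != len(scores):
        raise ValueError("need exactly one score per token")
    k = max(1, int(len(tokens) * keep_ratio))
    # Indices of the k largest scores, re-sorted to keep the original spatial order.
    top = heapq.nlargest(k, range(len(scores)), key=scores.__getitem__)
    return [tokens[i] for i in sorted(top)]


if __name__ == "__main__":
    print(prune_visual_tokens(["t0", "t1", "t2", "t3"], [0.1, 0.9, 0.3, 0.8]))
```

Halving 576 visual tokens to 288 shortens the sequence the decoder attends over. How much accuracy survives depends on the quality of the scores, and that is where methods in this space differ.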

Evaluation difficulty is underappreciated. Single-metric benchmarks like VQAv2 do not reflect real-world task performance. The IBM LiveXiv benchmark updates monthly to test models on documents they have never seen, providing a more honest measure of generalization. For production deployments, teams should maintain a held-out domain-specific evaluation set from day one.
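A held-out evaluation set does not need heavy tooling to start. The sketch below scores any callable model on a list of examples, each with an image, a question, and an expected answer. It uses normalized exact match and keeps the failures for review. The `model(image, question) -> str` interface and the example records are hypothetical. Real tasks often need fuzzier matching or field-level scoring.

```python
import string


def normalize(text):
    """Lowercase and strip punctuation and surrounding whitespace for exact-match scoring."""
    return text.lower().translate(str.maketrans("", "", string.punctuation)).strip()


def evaluate(model, examples):
    """Return (accuracy, failures) for a model(image, question) -> str callable."""
    failures = []
    for ex in examples:
        prediction = model(ex["image"], ex["question"])
        if normalize(prediction) != normalize(ex["answer"]):
            failures.append({**ex, "prediction": prediction})
    accuracy = 1.0 - len(failures) / len(examples) if examples else 0.0
    return accuracy, failures
```

Keeping the failing predictions alongside their inputs makes it easy to inspect regressions after each model or prompt change.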

Frequently Asked Questions

What is a vision-language model in simple terms? A vision-language model is an AI system that reads both images and text as input, then responds in text. Think of it as an LLM that has been given eyes. It converts images into tokens using a visual encoder, then generates answers, captions, or analyses the same way a text-only model generates completions, but conditioned on what it sees.

How do VLMs differ from standard LLMs? Standard LLMs process only text tokens. VLMs extend this by adding a visual encoder and a projection layer that converts image patches into token embeddings compatible with the LLM. The core generation mechanism (next-token prediction) is identical, but the context window includes visual tokens alongside text. Because each image can add hundreds of tokens to the context, VLMs are typically roughly 2-4x more compute-intensive per inference call.

Which VLM should I start with for a production use case? For most developer teams, start with LLaVA-1.5 or a Qwen2-VL model accessed via the Hugging Face Transformers library. Both offer strong general performance, permissive licenses, and LoRA fine-tuning support. If you need cloud inference with minimal setup, GPT-4o and Gemini 2.5 Pro both offer stable APIs with generous rate limits for prototyping.

How do I fine-tune a VLM on my own data? Use the LLaVA training repository with LoRA enabled. Prepare your data as a JSON file of records that pair image paths with instruction-response conversations in the LLaVA conversation format. Stage 1 trains only the projection layer, which takes hours and needs little data. Stage 2 trains the projector together with the LLM, through LoRA adapters when LoRA is enabled, for instruction tuning. A 7B model fine-tunes on a single A100 in under eight hours with LoRA rank 128.
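A single training record in the LLaVA conversation format looks roughly like the one built below. It has an `image` path relative to the directory passed as `--image_folder`, and a `conversations` list that alternates `human` and `gpt` turns. An `<image>` placeholder marks where the visual tokens go. The helper functions and the record contents are hypothetical, so compare against the repository's own data files before training.

```python
import json


def make_llava_record(record_id, image_path, question, answer):
    """Build one single-turn record in the LLaVA conversation format."""
    return {
        "id": record_id,
        "image": image_path,  # relative to the --image_folder passed to training
        "conversations": [
            {"from": "human", "value": "<image>\n" + question},
            {"from": "gpt", "value": answer},
        ],
    }


def write_dataset(records, path):
    """Write all records as one JSON list, the file passed via --data_path."""
    with open(path, "w", encoding="utf-8") as f:
        json.dump(records, f, ensure_ascii=False, indent=2)


if __name__ == "__main__":
    rec = make_llava_record("inv-0001", "invoices/inv-0001.png",
                            "What is the invoice total?", "The total is $1,240.00.")
    print(json.dumps(rec, indent=2))
```

Multi-turn records extend the same `conversations` list with further `human`/`gpt` pairs. Only the first human turn carries the `<image>` placeholder.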

What are the main failure modes I should test for? The three most common production failure modes are hallucination (model ignores image evidence), resolution sensitivity (model fails on small text or dense figures at low input resolution), and language prior bias (model answers based on statistical patterns rather than visual content). Build an evaluation set covering adversarial images, out-of-distribution documents, and tasks requiring precise spatial reasoning before any production release.
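One inexpensive probe for language-prior bias is an image ablation. Ask the same questions once with the real image and once with an uninformative one, such as a blank image, and measure how often the answer stays the same. If answers to questions that genuinely depend on the image stay the same, the model is likely answering from text patterns. The sketch below assumes a `model(image, question) -> str` callable and a caller-supplied blank image; both are placeholders.

```python
def blind_agreement_rate(model, examples, blank_image):
    """Fraction of questions whose answer is unchanged when the image is blanked.

    Only meaningful for questions that cannot be answered without the image;
    values near 1.0 suggest the model is leaning on language priors.
    """
    if not examples:
        raise ValueError("need at least one example")
    unchanged = 0
    for image, question in examples:
        with_image = model(image, question).strip().lower()
        without_image = model(blank_image, question).strip().lower()
        unchanged += with_image == without_image
    return unchanged / len(examples)
```

Running this on the adversarial and out-of-distribution slices of the evaluation set shows where a model's answers are not actually grounded in the image.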

Key Takeaways

Three insights should guide your VLM strategy. First, the architecture is now mature enough for production: adapter-pattern VLMs like LLaVA-1.5 reach near-frontier performance with modest compute budgets. Second, the projection layer is the highest-leverage component for customization. Fine-tuning it, alongside LoRA adapters on the LLM, on domain-specific image-text pairs delivers the largest quality improvements for the smallest compute investment. Third, evaluation must be domain-specific from the start. General benchmarks do not predict real-world performance, and hallucination testing on your actual data distribution is a production requirement, not a research nicety.

Vision-language models are no longer a frontier research topic; they are a practical engineering capability. The teams that build fluency now will have a meaningful head start as the enterprise multimodal adoption curve steepens toward Gartner’s 80% projection for 2030.

One question worth sitting with: if every image your organization has ever created or processed were suddenly queryable in natural language, what workflows would change first?