Object detection is a computer vision discipline that combines localization and classification: given an image or video frame, a detection model outputs a set of bounding boxes, each paired with a class label and confidence score. Unlike image classification, which assigns a single label to an entire image, object detection pinpoints multiple objects simultaneously with precise spatial coordinates. Modern deep learning detectors achieve this using convolutional, transformer, or hybrid neural architectures trained end-to-end on annotated datasets.
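To make that output format concrete, the sketch below models one frame's detections as plain Python objects. The `Detection` class, its field names, and the sample values are illustrative assumptions, not the schema of any particular library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """One detected object: a pixel-space box, a class label, and a confidence score."""
    x1: float
    y1: float
    x2: float
    y2: float
    label: str
    score: float

    @property
    def area(self) -> float:
        # Clamp at zero so a degenerate box never reports a negative area.
        return max(0.0, self.x2 - self.x1) * max(0.0, self.y2 - self.y1)


def keep_confident(detections, min_score=0.5):
    """Drop detections whose confidence falls below min_score."""
    return [d for d in detections if d.score >= min_score]


# Hypothetical detector output for a single frame.
frame_detections = [
    Detection(34.0, 50.0, 210.0, 300.0, "dog", 0.92),
    Detection(250.0, 80.0, 400.0, 290.0, "cat", 0.81),
    Detection(10.0, 10.0, 40.0, 30.0, "cat", 0.22),
]

confident = keep_confident(frame_detections)
print([(d.label, d.score) for d in confident])
```

A classifier would return one label for this frame; the detector returns one record per object, which is what makes counting and localization possible downstream.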

Why Object Detection Is Now a Board-Level Priority

Object detection has moved from research labs into enterprise operations because it enables real-time automation of visual tasks, from warehouse cycle-counting to autonomous vehicles, at a scale and accuracy that classification alone cannot deliver.

The market numbers make the urgency concrete. According to Gartner (2024), enterprise computer vision software, hardware, and services are projected to reach $386 billion in global revenue by 2031, up from $126 billion in 2022. That growth is not driven by research curiosity. It is driven by warehouse managers, factory directors, and hospital administrators who need systems that see, locate, and act on visual information in milliseconds.

The adoption signal is equally clear at the operational level. Gartner (June 2024) predicts that 50% of companies with warehouse operations will use AI-enabled vision systems by 2027, yet only 20% had adopted them as of late 2023. That gap is the opportunity window for development teams building now.

Meanwhile, the 2024 Gartner Hype Cycle for Artificial Intelligence places computer vision squarely in the Plateau of Productivity, meaning real-world use cases have matured, adoption is increasing steadily, and ROI is visible. For CTOs, this signals that the risk of investing has fallen sharply, while the cost of waiting keeps rising.

The global deep learning market was estimated at $96.8 billion in 2024 and is forecast to reach $526.7 billion by 2030 at a 31.8% CAGR (Grand View Research, 2024). Image recognition, the engine underneath most detection pipelines, holds the largest application share at approximately 43.38%.

“Classification tells you what is in a frame. Detection tells you where, how many, and whether to act.”

The Core Architectures: Single-Stage vs. Two-Stage Detectors

Single-stage detectors (YOLO, SSD) process the image once for both localization and classification, achieving faster inference. Two-stage detectors (Faster R-CNN, Mask R-CNN) first propose regions of interest and then classify them, trading speed for higher accuracy on complex or densely packed scenes.

Single-stage models dominate real-time applications. The YOLO series, whose recent releases are maintained by Ultralytics, has become the production default for edge and latency-critical workloads. YOLOv8, as described by Yaseen (2024), combines a CSPNet backbone for improved feature extraction, an FPN+PAN neck for multi-scale detection, and an anchor-free head that simplifies training while boosting accuracy. The YOLOv8n variant achieves a mAP of 37.3 on the COCO dataset with sub-millisecond inference on A100 TensorRT hardware. YOLO11, released in September 2024, extends these gains further.

Two-stage models like Faster R-CNN and Mask R-CNN, available through Facebook Research’s Detectron2 (30k+ GitHub stars), remain the preferred choice for applications where precision outweighs latency: medical imaging, satellite analysis, and fine-grained industrial inspection. The two-pass architecture, region proposal followed by classification, sacrifices throughput for richer contextual understanding of each candidate object.

Architecture Comparison

| Approach | Key Strength | Best Used When |
|---|---|---|
| YOLO / single-stage | Sub-millisecond inference; edge-deployable; unified training pipeline | Real-time video, edge hardware, retail analytics, autonomous vehicles |
| Faster R-CNN / two-stage | High accuracy on small, dense, or partially occluded objects | Medical imaging, satellite analysis, precision quality inspection |
| DETR / RT-DETR / transformer | End-to-end, eliminates NMS; strong global context modelling | Cloud-scale batch inference; complex multi-object scenes |

Transformer-Based Detection: The Architecture Reshaping the Field

DETR (Detection Transformer) treats object detection as a set-prediction problem using a transformer encoder-decoder, eliminating hand-crafted components like anchor boxes and non-maximum suppression (NMS). RT-DETR extends this to real-time speeds; its authors report that it outperforms comparable YOLO detectors on COCO in both speed and accuracy.

The foundational paper by Zhao et al. (2023/2024) established RT-DETR as the first real-time end-to-end object detector to outperform the YOLO family without NMS post-processing. Removing NMS eliminates a hyperparameter-sensitive step that introduces latency and recall instability, a meaningful engineering win at production scale.
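For context on the step that RT-DETR removes, here is a minimal sketch of greedy NMS in plain Python. The function names and sample boxes are illustrative assumptions; production libraries ship optimised, batched versions. The example runs the same boxes through two IoU thresholds to show how much the result depends on that one hyperparameter.

```python
def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, iou_threshold=0.5):
    """Greedy NMS: return indices of kept boxes, highest score first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # Keep a box only if it does not overlap too much with any box already kept.
        if all(iou(boxes[i], boxes[k]) <= iou_threshold for k in keep):
            keep.append(i)
    return keep


# Hypothetical boxes: the first two overlap heavily, the third is isolated.
boxes = [(0, 0, 100, 100), (10, 0, 110, 100), (200, 200, 300, 300)]
scores = [0.9, 0.8, 0.7]

print(nms(boxes, scores, iou_threshold=0.5))
print(nms(boxes, scores, iou_threshold=0.9))
```

With a threshold of 0.5 the second box is suppressed as a duplicate of the first; at 0.9 it survives. If those two boxes were really two adjacent objects, the lower threshold would discard a true detection, which is the recall sensitivity described above.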

The facebookresearch/detr repository (14.8k stars) introduced the foundational DETR architecture in 2020. The key insight: replace hand-crafted pipeline stages with a Transformer encoder-decoder and a bipartite matching loss that forces unique, parallel predictions. Inference needs just 50 lines of PyTorch.
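The bipartite matching idea can be sketched in a few lines. This is a simplified illustration, not the DETR implementation: the cost here uses only a class-probability term and an L1 box distance (the published loss also includes a generalized IoU term), and the search is brute force over permutations rather than the Hungarian algorithm normally used at scale. The dictionary layout and sample values are assumptions made for the example.

```python
from itertools import permutations


def match_cost(pred, gt):
    """Simplified pairwise cost: class term plus L1 distance between normalised boxes."""
    class_cost = -pred["probs"][gt["label"]]
    box_cost = sum(abs(p - g) for p, g in zip(pred["box"], gt["box"]))
    return class_cost + box_cost


def bipartite_match(preds, gts):
    """Return {gt_index: pred_index} minimising total cost (brute force, tiny inputs only)."""
    best, best_cost = (), float("inf")
    for perm in permutations(range(len(preds)), len(gts)):
        cost = sum(match_cost(preds[p], gts[g]) for g, p in enumerate(perm))
        if cost < best_cost:
            best, best_cost = perm, cost
    return {g: p for g, p in enumerate(best)}


# Three hypothetical predictions for two ground-truth objects.
preds = [
    {"box": (0.50, 0.50, 0.20, 0.20), "probs": {"dog": 0.10, "cat": 0.80}},
    {"box": (0.21, 0.30, 0.10, 0.10), "probs": {"dog": 0.70, "cat": 0.20}},
    {"box": (0.20, 0.30, 0.10, 0.10), "probs": {"dog": 0.90, "cat": 0.05}},
]
gts = [
    {"box": (0.20, 0.30, 0.10, 0.10), "label": "dog"},
    {"box": (0.50, 0.52, 0.20, 0.20), "label": "cat"},
]

matches = bipartite_match(preds, gts)
unmatched = sorted(set(range(len(preds))) - set(matches.values()))
print(matches, unmatched)
```

Predictions 1 and 2 both sit on the dog, but only one of them can be matched to it. The other is left unmatched and would be trained toward a "no object" class, which is how the loss discourages duplicates without a separate NMS step.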

The frontier is moving further still. Wang et al. (2024, Mamba YOLO) introduce a State Space Model (SSM) backbone that replaces self-attention’s O(n²) complexity with linear-complexity sequence modelling. Their tiny variant achieves a 7.5% mAP improvement on COCO with 1.5 ms inference on a single GPU, pointing toward architectures that close the efficiency gap between transformers and CNNs.

Real-World Use Cases Driving Enterprise Adoption

Object detection powers autonomous vehicles, manufacturing quality inspection, medical imaging, retail analytics, and warehouse automation: industries where locating and counting specific objects at speed directly affects operational outcomes.

Warehouse and supply chain: Gartner projects that AI-enabled vision systems will replace traditional barcode-scanning cycle counts for 50% of warehouse operators by 2027. A camera mounted above a conveyor can count, classify, and log items without human intervention, reducing both labor costs and error rates.

Automotive and autonomous systems: NVIDIA’s 2025 partnership expansions with Toyota, Aurora, and Continental directly leverage object detection for Level 3 and Level 4 autonomous driving functions. The sensor fusion pipeline (camera, LiDAR, radar) depends on high-confidence detection at every frame.

Healthcare: Deep learning-based detection tools for diagnostic imaging allow clinicians to identify pathologies in X-rays and scans with error rates below 5% on competitive benchmarks, matching human-level performance on structured classification tasks (Grand View Research, 2024).

Manufacturing quality assurance: In practice, teams building this kind of system find that the annotation quality of their training data, not the model architecture, is typically the largest determinant of production performance. Cognex’s In-Sight L38 3D Vision System combines 2D and 3D object detection to inspect parts with sub-millimetre precision.

“The teams winning with object detection are not the ones with the best model; they are the ones who matched architecture to inference budget.”

Code Snippet 1: YOLO Inference in Python (Ultralytics)

Source: ultralytics/ultralytics
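A minimal sketch of the pattern, assuming the `ultralytics` package is installed and recent enough to provide `Results.save()`. The function name `detect_and_annotate`, its parameters, and the output filename are illustrative choices; the import sits inside the function only so the module loads without the dependency.

```python
def detect_and_annotate(image_path, weights="yolo11n.pt", out_path="annotated.jpg"):
    """Run a pretrained YOLO model on one image and save an annotated copy."""
    from ultralytics import YOLO  # requires: pip install ultralytics

    model = YOLO(weights)          # pretrained weights are fetched on first use
    result = model(image_path)[0]  # one Results object per input image
    for box in result.boxes:       # each box carries coordinates, class id, confidence
        print(result.names[int(box.cls)], float(box.conf), box.xyxy[0].tolist())
    result.save(filename=out_path)  # write the image with boxes drawn on it
    return result
```

Calling `detect_and_annotate("path/to/image.jpg")` prints one line per detection and writes the annotated image next to the script.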

This five-line pattern covers the full detect-and-annotate loop. The model is downloaded automatically on first use. Swap yolo11n.pt for any pretrained variant (small, medium, large, xlarge) to trade latency for accuracy. Export to ONNX or TensorRT with a single additional call for edge deployment.

System Architecture: Deploying Object Detection at Scale

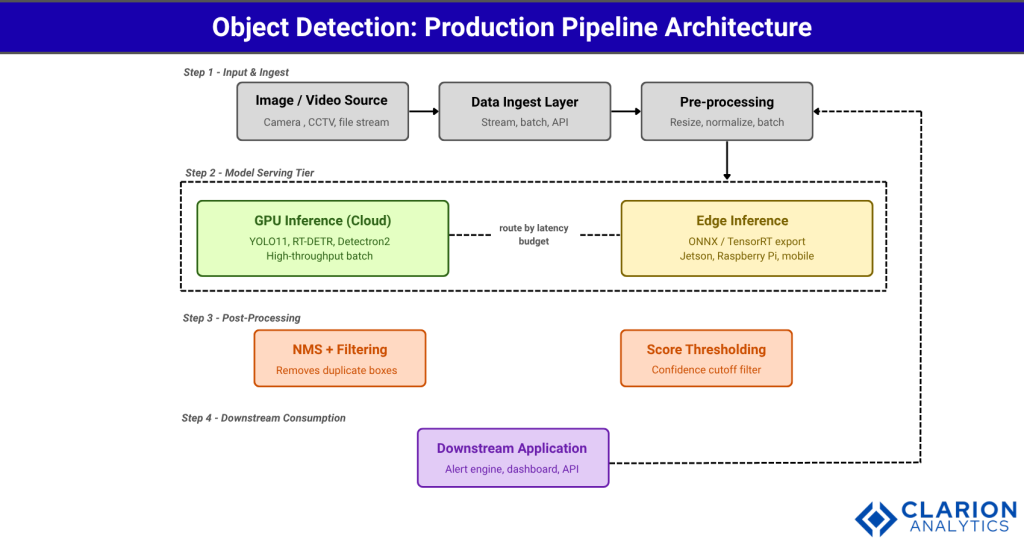

A production object detection system typically includes a data ingestion layer, a model serving tier (either GPU-backed cloud or edge device), a post-processing module for bounding box filtering, and a downstream consumer such as an alert engine or analytics dashboard.

This diagram illustrates a production-grade object detection pipeline moving from raw image or video input through preprocessing, model inference, NMS post-processing, and downstream application consumption. The model serving layer supports both GPU-backed cloud instances and edge deployments via ONNX or TensorRT export. A data flywheel loop connects production outputs back to the labeling and retraining pipeline, ensuring the model improves with real-world distribution shifts. Arrows indicate the directional flow of data; each component is independently scalable and replaceable.
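The same flow can be sketched as a chain of small, swappable functions. Everything here is a stand-in: `stub_infer` replaces the real model serving tier, the frame dictionaries are hypothetical, and the "person" alert rule is an arbitrary example of a downstream consumer.

```python
def preprocess(frame):
    # Placeholder: a real system would resize and normalise pixels here.
    return frame


def stub_infer(frame):
    # Stand-in for the model serving tier; returns (label, score) pairs.
    return frame["fake_detections"]


def postprocess(detections, min_score=0.5):
    # Bounding box filtering reduced to a confidence cut for this sketch.
    return [d for d in detections if d[1] >= min_score]


def run_pipeline(frames, infer, consume):
    """Push each frame through preprocess, inference, post-processing, and a consumer."""
    for frame in frames:
        detections = postprocess(infer(preprocess(frame)))
        consume(frame["id"], detections)


alerts = []


def alert_consumer(frame_id, detections):
    # Example downstream consumer: record an alert whenever a person is seen.
    for label, score in detections:
        if label == "person":
            alerts.append((frame_id, label, score))


frames = [
    {"id": 1, "fake_detections": [("person", 0.91), ("forklift", 0.40)]},
    {"id": 2, "fake_detections": [("pallet", 0.77)]},
]
run_pipeline(frames, stub_infer, alert_consumer)
print(alerts)
```

Because `infer` and `consume` are passed in, a cloud model, an edge runtime, or a different consumer can be substituted without touching the rest of the loop.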

The cloud GPU tier, using frameworks like Detectron2 or Ultralytics YOLO, handles batch workloads and model retraining. The edge tier, exported via ONNX or TensorRT, handles latency-sensitive streams like live surveillance or robotic vision. The same base model can power both tiers from a single training run.

The data flywheel (production predictions that are sampled, reviewed, and fed back into the training set) is the architectural feature most teams underestimate early. Building this loop into your initial system design typically costs an extra sprint but compounds over the model’s production lifetime.
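One possible sampling rule for that loop is sketched below: send frames with uncertain scores to human review, plus a small random audit sample of everything else. The thresholds, the audit rate, and the record layout are assumptions for illustration, not recommended values.

```python
import random


def select_for_review(predictions, low=0.3, high=0.6, random_rate=0.05, seed=0):
    """Pick frame ids for human review: uncertain frames plus a random audit sample."""
    rng = random.Random(seed)  # seeded so the selection is reproducible
    selected = []
    for pred in predictions:
        # A frame is uncertain if any detection score falls in the ambiguous band.
        uncertain = any(low <= s <= high for s in pred["scores"])
        if uncertain or rng.random() < random_rate:
            selected.append(pred["frame_id"])
    return selected


# Hypothetical production predictions, one record per frame.
predictions = [
    {"frame_id": "a", "scores": [0.95, 0.91]},
    {"frame_id": "b", "scores": [0.45]},
    {"frame_id": "c", "scores": []},
    {"frame_id": "d", "scores": [0.88, 0.31]},
]

print(select_for_review(predictions, random_rate=0.0))
```

The random audit share matters because confidently wrong predictions never land in the uncertain band; without it, the review queue would only ever see errors the model already doubts.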

Choosing and Fine-Tuning Your Detection Model

Select a single-stage model like YOLO11 for edge or real-time use; choose Faster R-CNN or DETR variants for cloud workloads requiring high precision. Fine-tune on your domain dataset for at least 50 epochs using transfer learning from COCO pretrained weights to close the distribution gap.

The decision framework comes down to three variables: inference latency budget, object density per frame, and available training data. For latency under 10 ms, single-stage YOLO is the answer. For scenes with 20+ objects per frame where missed detections carry high cost (medical, safety), a two-stage model like Faster R-CNN or a well-tuned DETR variant gives the recall headroom you need.
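As a rough illustration, the two stated rules can be written as a small function. The function name and the fallback branch are assumptions; the fallback simply reflects this post's general advice to begin with a single-stage baseline, and training-data volume is left out because no threshold is given for it.

```python
def suggest_architecture(latency_budget_ms, objects_per_frame, high_miss_cost):
    """Map the rules of thumb above to a starting architecture."""
    # Rule 1: a tight latency budget decides the question on its own.
    if latency_budget_ms < 10:
        return "single-stage (YOLO)"
    # Rule 2: dense scenes where a missed detection is expensive.
    if objects_per_frame >= 20 and high_miss_cost:
        return "two-stage (Faster R-CNN) or well-tuned DETR variant"
    # Assumed fallback: start simple and measure before adding complexity.
    return "single-stage baseline, then re-evaluate"


print(suggest_architecture(latency_budget_ms=8, objects_per_frame=40, high_miss_cost=True))
print(suggest_architecture(latency_budget_ms=50, objects_per_frame=40, high_miss_cost=True))
print(suggest_architecture(latency_budget_ms=50, objects_per_frame=5, high_miss_cost=False))
```

Note that the latency rule is checked first: a dense, safety-critical scene with an 8 ms budget still lands on a single-stage model, because a detector that misses its deadline is of no use however accurate it is.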

Fine-tuning changes the calculus on data requirements significantly. Self-supervised learning (SSL) techniques now reduce labelled data needs by up to 80%, according to market analysis of SSL adoption in 2024. A team with 500 well-annotated domain images and transfer learning from COCO weights can routinely surpass a general-purpose model trained on 500,000 generic examples.
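A fine-tuning run along these lines might look like the sketch below, assuming the `ultralytics` package is installed. The function name `fine_tune` and the dataset file `my_dataset.yaml` are hypothetical; the YAML file would list your own image folders and class names in the format the library expects.

```python
def fine_tune(data_yaml, weights="yolo11n.pt", epochs=50, imgsz=640):
    """Fine-tune COCO-pretrained YOLO weights on a domain dataset and return validation mAP."""
    from ultralytics import YOLO  # requires: pip install ultralytics

    model = YOLO(weights)  # start from pretrained weights (transfer learning)
    model.train(data=data_yaml, epochs=epochs, imgsz=imgsz)
    metrics = model.val()  # evaluate on the validation split named in data_yaml
    return metrics.box.map  # mAP averaged over IoU thresholds 0.50 to 0.95
```

A call such as `fine_tune("my_dataset.yaml")` gives a single number to compare against the unmodified pretrained model on the same validation split.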

“A well-fine-tuned YOLOv8 on 500 domain images routinely outperforms a general-purpose model trained on 500,000.”

Code Snippet 2: Detectron2 DefaultPredictor (Two-Stage Pipeline)

Source: facebookresearch/detectron2
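A minimal sketch of this pipeline, assuming `detectron2` and `opencv-python` are installed. The helper names `build_predictor` and `detect`, the 0.5 threshold, and the CPU default are illustrative choices; the config path refers to a Faster R-CNN entry in the Detectron2 model zoo.

```python
def build_predictor(score_threshold=0.5, device="cpu"):
    """Build a Faster R-CNN DefaultPredictor from the Detectron2 model zoo."""
    from detectron2 import model_zoo
    from detectron2.config import get_cfg
    from detectron2.engine import DefaultPredictor

    config_name = "COCO-Detection/faster_rcnn_R_50_FPN_3x.yaml"
    cfg = get_cfg()
    cfg.merge_from_file(model_zoo.get_config_file(config_name))    # architecture config
    cfg.MODEL.WEIGHTS = model_zoo.get_checkpoint_url(config_name)  # pretrained COCO checkpoint
    cfg.MODEL.ROI_HEADS.SCORE_THRESH_TEST = score_threshold        # confidence threshold
    cfg.MODEL.DEVICE = device
    return DefaultPredictor(cfg)


def detect(image_path, predictor):
    """Run inference on one image and return (box, class_id, score) tuples."""
    import cv2

    image = cv2.imread(image_path)  # BGR array, the default input format
    instances = predictor(image)["instances"].to("cpu")
    return list(zip(
        instances.pred_boxes.tensor.tolist(),
        instances.pred_classes.tolist(),
        instances.scores.tolist(),
    ))
```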

This snippet shows the Detectron2 two-stage pipeline: load a Faster R-CNN config from the model zoo, apply a pretrained COCO checkpoint, set a confidence threshold, and run inference with a single call to DefaultPredictor. The contrast with the YOLO snippet above illustrates the tradeoff: Detectron2 gives researchers more configuration surface but requires more boilerplate. Choose it when you need the richer Mask R-CNN or panoptic segmentation extensions.

FAQ: Object Detection Deep Learning

How does object detection differ from image classification?

Image classification assigns a single label to an entire image, “this is a dog.” Object detection outputs multiple bounding boxes, each with a label and confidence score, “there is a dog at coordinates (x1, y1, x2, y2) and a cat at these other coordinates.” Detection models handle multi-object, multi-class scenes; classifiers do not. For real-world automation tasks, detection is almost always the right starting point.

Which object detection model is best for real-time applications?

YOLO11 or YOLO26 from Ultralytics are the default choices for real-time workloads. They achieve single-digit millisecond inference on GPU hardware and can be exported to ONNX or TensorRT for edge deployment. RT-DETR is a strong alternative when you need end-to-end inference without NMS post-processing and can tolerate slightly higher latency on smaller hardware.

Can I run a deep learning object detector on edge hardware?

Yes. Export your trained YOLO model to ONNX or TensorRT format with one command: yolo export model=yolo11n.pt format=onnx. Ultralytics has validated deployment on NVIDIA Jetson, Raspberry Pi, and mobile platforms via CoreML. Edge inference trades some accuracy for elimination of network round-trips, which matters for latency-sensitive applications like robotic vision or real-time surveillance.

How much training data do I need for a custom object detection model?

With transfer learning from COCO-pretrained weights, 200 to 500 annotated images per class is a practical starting point for many industrial applications. Fine-tuning on a well-annotated domain dataset typically outperforms training from scratch on 10 to 100 times as many generic images. Self-supervised learning techniques, which reduce labelled data requirements by up to 80%, are increasingly viable for teams with limited annotation budgets.

What is the difference between YOLO and DETR?

YOLO is a CNN-based single-stage detector that processes images in one pass and relies on NMS post-processing to remove duplicate boxes. DETR uses a transformer encoder-decoder and bipartite matching loss to produce unique predictions directly, eliminating NMS. YOLO is faster and easier to deploy on constrained hardware; DETR offers cleaner end-to-end training and stronger global context modelling at the cost of higher compute requirements.

What to Build Next

Three insights carry the most weight from this post. First, advanced deep learning object detection techniques are mature enough for production; Gartner’s Plateau of Productivity signal is reliable here. Second, architecture choice is primarily an inference budget and use-case decision: YOLO for real-time and edge, two-stage models for precision, transformers for end-to-end cloud pipelines. Third, fine-tuning on domain data compounds faster than scaling generic training data, so annotation quality and the data flywheel deserve architectural investment from day one.

Start with a YOLO11 baseline from the Ultralytics repository, validate on your domain data, then evaluate whether the latency or accuracy profile justifies moving to a two-stage or transformer-based model. Most production teams find the nano or small YOLO variant handles 80% of their use cases without further complexity.

The question worth sitting with: if your team deployed a working object detector tomorrow, which single operational bottleneck would it remove that your competitors are still solving manually?