Job orchestration in a MERN stack means scheduling and managing background tasks asynchronously outside the main HTTP request cycle, so the application stays fast under load. Celery, a Python distributed task queue, connects to Node.js via Redis as a shared message broker, letting Express dispatch tasks to Python workers without rewriting the core JavaScript stack.

Why Node.js Alone Cannot Handle Heavy Background Work

Node.js runs on a single-threaded event loop. Any CPU-bound or long-running synchronous operation blocks that loop and degrades response times for all concurrent users, making external task offloading essential for production MERN applications.

According to performance analysis data cited by Google, a 50 ms delay increases bounce rates by 10%. More critically, a single 100 ms synchronous stall can reduce throughput by 30% under load. That is not a theoretical edge case. It happens when your Express route tries to generate a PDF, run a machine learning model, or send 10,000 emails inline. Celery job orchestration in a MERN stack exists precisely to prevent that collapse.

NodeSource’s 2024 State of Node.js Performance report confirms that Node.js v22 handles approximately 55% more event operations than v18. The runtime keeps improving. But no Node.js version changes the fundamental constraint: the event loop was built for I/O, not computation.

What Celery Brings to a JavaScript Stack

Celery is a battle-tested Python distributed task queue that runs workers independently of your Node.js server. It supports task retries, scheduling, result storage, and horizontal scaling, all configurable without touching your Express codebase.

Celery has over 25,000 GitHub stars and is the de facto standard for background processing in the Python ecosystem. Its feature set includes automatic retries with exponential back-off, priority queues, task routing by name, scheduled periodic tasks via Celery Beat, and result backends for tracking outcomes.
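To make the back-off behaviour concrete, here is a minimal sketch of how an exponentially growing, capped retry delay can be computed. It mirrors the doubling behaviour Celery documents for its `retry_backoff` option (1 s, 2 s, 4 s, and so on, capped at `retry_backoff_max`). The function name and defaults are illustrative assumptions, not Celery's own API.

```python
import random


def backoff_delay(retries: int, factor: int = 1, maximum: int = 600,
                  jitter: bool = False) -> int:
    """Return the number of seconds to wait before retry number `retries` (0-based)."""
    # Double the delay on every attempt, but never exceed the cap.
    delay = min(maximum, factor * (2 ** retries))
    if jitter:
        # Full jitter: pick a random delay in [0, delay] so that many failing
        # tasks do not all retry at the same instant.
        return random.randrange(delay + 1)
    return max(0, delay)


if __name__ == "__main__":
    print([backoff_delay(n) for n in range(6)])
```

In a real task you would normally not call anything like this yourself. Declaring `autoretry_for`, `retry_backoff=True`, and `retry_backoff_max` on the task lets Celery apply the schedule.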

In practice, teams building this typically find that Celery’s learning curve is concentrated in one area: understanding the broker/backend separation. The broker (Redis or RabbitMQ) carries messages. The result backend (also Redis in most MERN setups) stores task outcomes. Once that mental model clicks, the rest follows quickly.
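The broker/backend split is easiest to see in configuration. The fragment below is a sketch of a `celeryconfig.py` module, assuming a local Redis instance and using separate Redis databases (0 and 1) for the two roles. The URLs, database numbers, and expiry value are assumptions for illustration.

```python
# celeryconfig.py (illustrative values, adjust for your environment)

# Broker: the queue that carries task messages from Express to the workers.
broker_url = "redis://localhost:6379/0"

# Result backend: where workers store task state and return values.
result_backend = "redis://localhost:6379/1"

# Drop stored results after one hour so Redis memory does not grow unbounded.
result_expires = 3600

# JSON keeps messages readable by non-Python producers such as Node.js.
task_serializer = "json"
result_serializer = "json"
accept_content = ["json"]
```

A Celery app can load a module like this with `app.config_from_object("celeryconfig")`.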

Architecture – How MERN and Celery Communicate

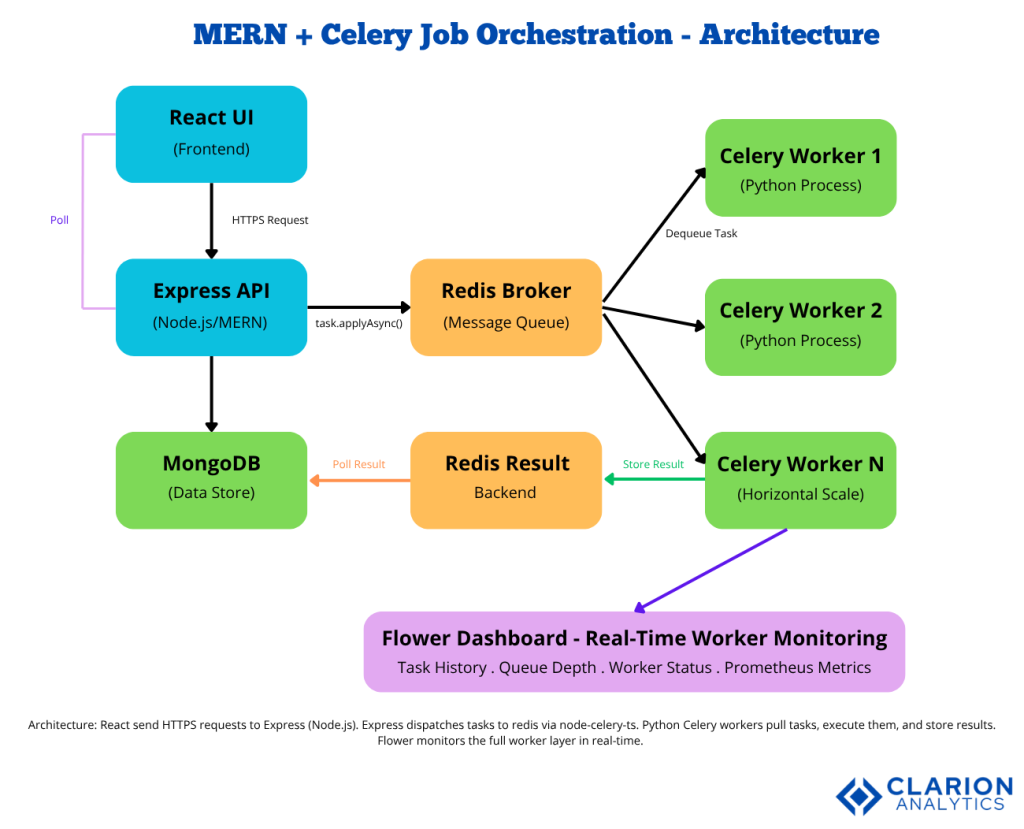

Node.js (Express) publishes task messages to Redis. Celery workers subscribe to those queues, execute tasks in Python, and write results back to Redis. React polls or listens via WebSocket for completion status.

The MERN + Celery architecture separates concerns into four layers. React sends API requests to Express. Express serializes tasks and pushes them onto Redis queues. Celery workers pull tasks from Redis, execute them (ML inference, email delivery, data processing), and store results back in Redis. Express retrieves results and returns them to React. Flower provides a real-time monitoring dashboard over the entire worker layer.
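For readers curious about what actually travels through Redis, the sketch below builds a simplified task message in the shape of Celery's message protocol version 2, as a producer would push it onto the Redis list named after the queue. It is written in Python only to keep the example runnable. The function name is hypothetical, optional headers are omitted, and a real application should rely on a client library rather than hand-built messages.

```python
import base64
import json
import uuid


def build_celery_message(task_name, args=None, kwargs=None, queue="celery"):
    """Return (task_id, payload): payload is the JSON string a producer
    would LPUSH onto the Redis list named `queue`."""
    task_id = str(uuid.uuid4())  # the producer generates the task ID itself

    # Protocol v2 body: positional args, keyword args, and an "embed" dict
    # used for callbacks and chains (unused in this sketch).
    body = [
        list(args or []),
        dict(kwargs or {}),
        {"callbacks": None, "errbacks": None, "chain": None, "chord": None},
    ]

    envelope = {
        "body": base64.b64encode(json.dumps(body).encode("utf-8")).decode("ascii"),
        "content-encoding": "utf-8",
        "content-type": "application/json",
        "headers": {
            "lang": "py",        # language the task is implemented in
            "task": task_name,   # dotted name the worker looks up
            "id": task_id,
            "root_id": task_id,
            "parent_id": None,
            "group": None,
            "retries": 0,
            "eta": None,
            "expires": None,
        },
        "properties": {
            "correlation_id": task_id,
            "delivery_mode": 2,
            "delivery_info": {"exchange": "", "routing_key": queue},
            "priority": 0,
            "body_encoding": "base64",
            "delivery_tag": str(uuid.uuid4()),
        },
    }
    return task_id, json.dumps(envelope)


if __name__ == "__main__":
    tid, payload = build_celery_message("tasks.send_welcome_email",
                                        args=["user@example.com"])
    print(tid)
    print(payload)
```

Because the producer generates the task ID before the message is sent, dispatching returns an ID without waiting for any worker.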

Maharjan et al. (2023) in the Telecom journal found that Redis excelled in low-latency message delivery and memory efficiency, making it a strong fit for task-queue broker roles in microservices architectures.

Real-World Use Cases for Celery in MERN Apps

MERN applications benefit most from Celery when handling email campaigns, PDF generation, image processing, machine learning inference, and large database exports, operations that would otherwise block Node.js for seconds or minutes.

Five patterns appear regularly in production:

- Email and notification delivery. An Express route accepts a registration, stores the user in MongoDB, dispatches a send_welcome_email Celery task, and returns a 200 response immediately. The email goes out whenever the worker gets to it.

- Image and media processing. React uploads an image. Express dispatches a resize_and_watermark task. Celery runs Pillow in Python. The user polls for completion without blocking the API.

- Machine learning inference. Data science teams already run Python models. Wrapping them as Celery tasks lets Node.js call them without a separate REST service.

- Scheduled reporting. Celery Beat handles cron-style periodic tasks, generating nightly reports, syncing external APIs, or archiving stale records in MongoDB.

- Webhook fanout. When a single event must trigger dozens of downstream calls, Celery distributes them across workers in parallel rather than sequentially from Node.js.
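As an illustration of the scheduled-reporting pattern, the fragment below sketches a Celery Beat schedule as it might appear in a configuration module. The task names and intervals are hypothetical. It uses plain `timedelta` intervals, which Celery accepts as a schedule, so the fragment needs only the standard library.

```python
from datetime import timedelta

# Illustrative Celery Beat settings; the task names below are hypothetical.
timezone = "UTC"

beat_schedule = {
    "nightly-report": {
        "task": "tasks.generate_nightly_report",
        "schedule": timedelta(days=1),    # run once every 24 hours
    },
    "archive-stale-records": {
        "task": "tasks.archive_stale_records",
        "schedule": timedelta(hours=6),   # run four times a day
        "args": (90,),                    # e.g. archive records older than 90 days
    },
}
```

An interval schedule counts from when Beat starts. To pin a job to a wall-clock time such as 02:00, Celery's `crontab` schedule from `celery.schedules` is the usual choice.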

“The moment a Node.js server starts waiting instead of routing, Celery is the answer, not a bigger server.”

Step-by-Step Implementation Guide

Set up Redis as the message broker, create a Python Celery app with your tasks defined, install node-celery-ts in Express, then call task.applyAsync() from any Express route to dispatch work to Python workers asynchronously.

Step 1 – Start Redis

Run Redis locally or use Upstash for serverless Redis. Point both Celery and Node.js at the same instance; for a default local install that is redis://localhost:6379.

Step 2 – Define Python Celery Tasks

Source: celery/celery documentation – canonical task definition pattern

The @app.task decorator registers the function with Celery. The bind=True parameter gives the task access to retry mechanics. When Node.js dispatches this task, Celery routes it to an available Python worker automatically.

Step 3 – Dispatch Tasks from Express

Source: node-celery-ts/node-celery-ts – TypeScript Celery client

createClient() connects Express to Redis. createTask() references the Python function by its dotted name. applyAsync() dispatches it and returns a task ID in milliseconds. Express returns immediately; the worker picks up the job in the background. Express is a producer, never a consumer.

Step 4 – Start Celery Workers

```bash
celery -A tasks worker --loglevel=info --concurrency=4 -Ofair
```

The -Ofair flag prevents work from piling up on busy processes by routing tasks only to idle workers, a critical production optimization noted in Param Rajani’s production Celery guide on ITNEXT.

“You cannot optimize what you cannot see; Flower turns a black-box task queue into a fully observable production system.”

Monitoring and Observability with Flower

Flower is a real-time web dashboard for Celery that shows worker status, task progress, retry history, queue depth, and per-task runtime statistics. It integrates with Prometheus and Grafana for production-grade alerting.

```bash
celery -A tasks flower --port=5555
```

The dashboard at localhost:5555 shows every worker, every active task, and queue backlog in real time. For production, Flower’s Prometheus integration exports metrics that trigger alerts when task runtime exceeds thresholds or workers go offline.

Choosing the Right Approach – Celery vs. Alternatives

| Option | Key Strength | Best Used When |
|---|---|---|
| Celery + Redis (Python) | Production-grade retries, scheduling, multi-broker support, Flower monitoring | You need Python ML/data processing workers alongside a Node.js API layer |
| BullMQ (Node.js) | Pure JavaScript, tight Express integration, TypeScript-first | Your entire stack is JavaScript and tasks don’t require Python libraries |
| AWS SQS + Lambda | Fully managed, serverless, zero infrastructure | You want to eliminate worker server management and can accept vendor lock-in |

Gartner (2022) found that microservice complexity, not the concept itself, drives developer disillusionment. Celery addresses this by providing a single, well-understood abstraction for the task layer, regardless of language on either side.

“Integrating a Python task queue into a JavaScript app sounds complex but Redis is the shared language both sides already speak.”

Frequently Asked Questions

Can Celery workers communicate directly with MongoDB in a MERN stack? Yes. Celery workers are standard Python processes. Install pymongo in your worker environment and connect directly to the same MongoDB instance your Node.js server uses. Workers can read, write, and aggregate data independently of Express, which is ideal for long-running data jobs.

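What happens if a Celery worker crashes mid-task? It depends on when the task was acknowledged. By default, Celery acknowledges a message just before the task starts executing, so a task that was running when the worker died is not redelivered. Set acks_late=True on your task so that acknowledgment happens only after the task finishes; an unacknowledged task is then redelivered by the broker and another worker picks it up, preventing silent data loss. Because a redelivered task may run more than once, write such tasks to be idempotent.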
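The same behaviour can be set globally instead of per task. The configuration fragment below is a sketch using Celery setting names. The specific values are assumptions to tune for your workload.

```python
# Illustrative reliability settings for a Celery configuration module.

# Acknowledge messages after the task finishes rather than before it starts.
task_acks_late = True

# Requeue the message if the worker process executing it is killed abruptly.
task_reject_on_worker_lost = True

# With the Redis broker, an unacknowledged message is redelivered only after
# this many seconds, so keep it longer than your slowest task.
broker_transport_options = {"visibility_timeout": 3600}

# Reserve one message per worker process at a time, so a crash strands
# as few prefetched tasks as possible.
worker_prefetch_multiplier = 1
```

With these settings a task can run more than once after a crash, so the task body should be safe to repeat.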

How do I return results from Celery back to React in real time? The two most common patterns are polling and WebSockets. Express exposes a /tasks/:taskId/status endpoint that React polls every few seconds, reading from the Redis result backend. For lower latency, connect React to a Socket.io server that Express signals when a task completes.
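To show what a status endpoint has to do, here is a sketch of the lookup logic, written in Python to keep the example self-contained; an Express handler would do the equivalent in JavaScript. It assumes the Redis result backend with the default JSON result serializer, where each result lives under a key prefixed with `celery-task-meta-`. The function names and the response shape are hypothetical.

```python
import json

RESULT_KEY_PREFIX = "celery-task-meta-"
FINISHED_STATES = {"SUCCESS", "FAILURE", "REVOKED"}


def result_key(task_id: str) -> str:
    """Redis key under which the result backend stores a task's metadata."""
    return f"{RESULT_KEY_PREFIX}{task_id}"


def status_payload(task_id: str, raw) -> dict:
    """Turn the raw value read from Redis (bytes, str, or None) into an API response."""
    if raw is None:
        # No key yet: the task is still queued or running, or the ID is unknown.
        return {"taskId": task_id, "state": "PENDING", "done": False, "result": None}
    meta = json.loads(raw)
    state = meta.get("status", "PENDING")
    return {
        "taskId": task_id,
        "state": state,
        "done": state in FINISHED_STATES,
        # Only expose the result once the task has succeeded.
        "result": meta.get("result") if state == "SUCCESS" else None,
    }


if __name__ == "__main__":
    stored = json.dumps({"status": "SUCCESS", "result": {"pages": 12}, "task_id": "abc"})
    print(result_key("abc"))
    print(status_payload("abc", stored))
```

The client can stop polling as soon as `done` is true.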

Is Redis required, or can I use RabbitMQ instead? Both work. Research by Maharjan et al. (2023) shows Redis leads on low-latency delivery and memory efficiency, making it the natural first choice for MERN teams already using Redis for session storage or caching. RabbitMQ adds AMQP routing features for complex multi-queue topologies.

How many Celery workers do I need for production? Start with worker concurrency set to the number of CPU cores minus one on each machine. Scale horizontally by adding more worker servers, all connecting to the same Redis broker. Flower’s queue-length graphs tell you exactly when to add capacity before users feel the slowdown.
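The cores-minus-one starting point can be computed rather than hard-coded. The helper below is a small sketch; the function name is hypothetical, and the value it returns is only a starting point to adjust against observed queue depth.

```python
import os


def suggested_concurrency(cpu_count=None) -> int:
    """Return CPU cores minus one, never less than one worker process."""
    # os.cpu_count() can return None on some platforms, so fall back to 1.
    cores = cpu_count if cpu_count is not None else (os.cpu_count() or 1)
    return max(1, cores - 1)


if __name__ == "__main__":
    # The printed value could be passed to the worker's --concurrency option.
    print(suggested_concurrency())
```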

Conclusion

Three insights define this integration. First, Node.js’ single-threaded model is a feature for I/O and a liability for computation; Celery resolves that liability without touching your JavaScript code. Second, Redis as a shared message broker makes Python and Node.js natural collaborators, not awkward strangers. Third, Flower gives operations teams the visibility they need to maintain distributed task systems at scale.

If your MERN app is growing and response times are suffering, the real question is not whether to add job orchestration; it is how soon.