A large language model is a deep learning system trained on massive text corpora to predict, generate, and transform human language. Built on the Transformer architecture, LLMs learn statistical relationships between tokens using self-attention mechanisms. When integrated with retrieval systems, APIs, or fine-tuning pipelines, they become programmable language interfaces, tools that software teams can query, customize, and deploy to automate language-intensive workflows at scale.

Why NLP Fundamentals Still Matter in the LLM Era

Large language models did not replace natural language processing; they absorbed it. Every inference call you make to an LLM begins with tokenization and embedding, and the work you ask the model to do (named entity recognition, semantic similarity, classification) is classic NLP. Understanding those layers lets you control model behavior rather than just call an API and hope for the best.

According to McKinsey (2025), enterprise AI adoption jumped from 55% to 78% of organizations in a single year. Generative AI specifically reached 71% adoption. That pace means developers who understand how large language models process language will outpace peers who treat them as black-box APIs.

The gap is not in access to models. It is in knowing why a response fails, which integration pattern fits the use case, and what to instrument before scaling.

“Understanding NLP fundamentals is not academic overhead; it is the difference between integrating an LLM and actually controlling one.”

The Transformer Architecture: What Every Developer Should Know

The Transformer is the architectural foundation of nearly every major LLM in production today. Introduced by Vaswani et al. (2017) in “Attention Is All You Need,” it replaced recurrent networks with an attention-based design that processes all positions of a sequence in parallel during training, cutting training time drastically while improving sequence modeling quality.

The mechanism works in three stages. First, raw text is split into tokens (sub-word units) and mapped to high-dimensional embedding vectors. Second, positional encodings inject sequence-order information, since the architecture has no inherent notion of “before” or “after.” Third, multi-head self-attention computes relationships between every token and every other token simultaneously, enabling the model to resolve pronouns, track entities, and maintain context across thousands of words in a single forward pass.
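To make the second stage concrete, here is a minimal sketch of the sinusoidal positional encoding from Vaswani et al. (2017), written in plain Python. The function names and the tiny dimensions are illustrative assumptions; many current LLMs use learned or rotary position schemes instead, so treat this as one example of the idea rather than what any particular model does.

```python
import math


def sinusoidal_positional_encoding(seq_len: int, d_model: int) -> list[list[float]]:
    """Return a seq_len x d_model table of sinusoidal position vectors."""
    table = []
    for pos in range(seq_len):
        row = []
        for i in range(d_model):
            # Dimensions 2k and 2k+1 share one frequency: 1 / 10000^(2k / d_model).
            angle = pos / (10000 ** ((2 * (i // 2)) / d_model))
            # Even dimensions use sine, odd dimensions use cosine.
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        table.append(row)
    return table


def add_positions(embeddings: list[list[float]], encoding: list[list[float]]) -> list[list[float]]:
    """Add each position vector to the token embedding at the same index."""
    return [[e + p for e, p in zip(emb, enc)] for emb, enc in zip(embeddings, encoding)]


# Hypothetical toy sizes: 4 tokens, 8-dimensional embeddings.
pe = sinusoidal_positional_encoding(seq_len=4, d_model=8)
token_embeddings = [[0.1] * 8 for _ in range(4)]
model_input = add_positions(token_embeddings, pe)
```

Because every position gets a distinct vector, two identical tokens at different positions reach the attention layers with different inputs, which is how order information enters an otherwise order-blind architecture.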

Gartner (2023) projects that by 2027, foundation models will underpin 70% of NLP use cases, up from less than 5% in 2022. For software teams, that means transformer fundamentals are now a core infrastructure skill, not a research topic.

Code Snippet 1 – Source: huggingface/transformers · pipeline() for text generation

This four-line pattern (after imports) loads any HuggingFace-compatible model, applies a structured system and user prompt, and returns a typed response object. The device detection ensures the same code runs on a laptop CPU or a cloud GPU without any conditional logic in application code.
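A hedged sketch of the pattern described above, assuming a recent release of the transformers library and a model that ships a chat template. It is an illustration, not the snippet from the huggingface/transformers repository: the helper names (`pick_device`, `build_messages`, `generate`) are hypothetical, and `model_id` is whatever model you choose to pass in.

```python
def pick_device() -> str:
    """Return "cuda" when PyTorch can see a GPU, otherwise "cpu"."""
    try:
        import torch
        return "cuda" if torch.cuda.is_available() else "cpu"
    except ImportError:
        # No PyTorch installed: fall back to CPU so callers need no branching.
        return "cpu"


def build_messages(system_prompt: str, user_prompt: str) -> list[dict]:
    """Structure the system and user prompts as chat messages."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_prompt},
    ]


def generate(model_id: str, system_prompt: str, user_prompt: str,
             max_new_tokens: int = 128) -> str:
    """Load a text-generation pipeline and return the assistant reply."""
    # Imported lazily so the helpers above work without transformers installed.
    from transformers import pipeline

    pipe = pipeline("text-generation", model=model_id, device=pick_device())
    result = pipe(build_messages(system_prompt, user_prompt),
                  max_new_tokens=max_new_tokens)
    # Assumption: with chat-style input, recent transformers versions return
    # the whole conversation, with the assistant reply as the last message.
    return result[0]["generated_text"][-1]["content"]
```

Calling `generate(...)` downloads the model on first use, so it is worth trying with a small instruction-tuned model before pointing it at anything large.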

Core NLP Tasks LLMs Perform (And When to Use Each)

LLMs are general-purpose engines, but each inference call performs one or more specific NLP tasks under the hood. Naming the task explicitly in your system prompt or your API parameters produces more consistent outputs than open-ended instructions.

The tasks that matter most in enterprise software are text classification (routing support tickets, labeling documents), named entity recognition (extracting names, dates, and product codes from unstructured text), semantic similarity (matching queries to knowledge base chunks), summarization (compressing long documents for downstream processing), and code generation. According to Menlo Ventures (2025), code generation is now the fastest-growing enterprise use case at 26% of all LLM deployments.

In practice, teams building classification pipelines often discover the model performs three tasks at once (intent detection, entity extraction, and response generation) without explicit separation. That ambiguity is where latency and cost accumulate. Decomposing compound requests into task-specific sub-calls is a reliable optimization pattern.
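One way to picture that decomposition is the sketch below. It assumes a hypothetical `ModelFn` interface of the form (instruction, text) -> output, and uses a rule-based `fake_model` stand-in so it runs offline; in a real system each call would go to an LLM, possibly a different and smaller one per task.

```python
from typing import Callable

# Hypothetical interface: (instruction, text) -> model output.
ModelFn = Callable[[str, str], str]


def fake_model(instruction: str, text: str) -> str:
    """Rule-based stand-in for an LLM call so the sketch runs offline."""
    if instruction.startswith("Classify"):
        return "refund_request" if "refund" in text.lower() else "other"
    if instruction.startswith("Extract"):
        words = (w.strip(".,") for w in text.split())
        return ",".join(w for w in words if w.startswith("ORD-"))
    return f"Reply drafted for: {text[:40]}"


def handle_ticket(text: str, model: ModelFn) -> dict:
    """Run intent detection, entity extraction, and generation as separate calls."""
    intent = model("Classify the intent of this support ticket.", text)
    raw_ids = model("Extract all order IDs as a comma-separated list.", text)
    result = {"intent": intent, "order_ids": [o for o in raw_ids.split(",") if o]}
    # Generation is usually the most expensive step, so run it only when needed.
    if intent == "refund_request":
        result["reply"] = model("Write a short reply to this ticket.", text)
    return result


ticket = "Please refund order ORD-1042, it arrived damaged."
handled = handle_ticket(ticket, fake_model)
print(handled)
```

Separating the calls makes each step independently measurable, and it lets the pipeline skip generation entirely for tickets that only need routing.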

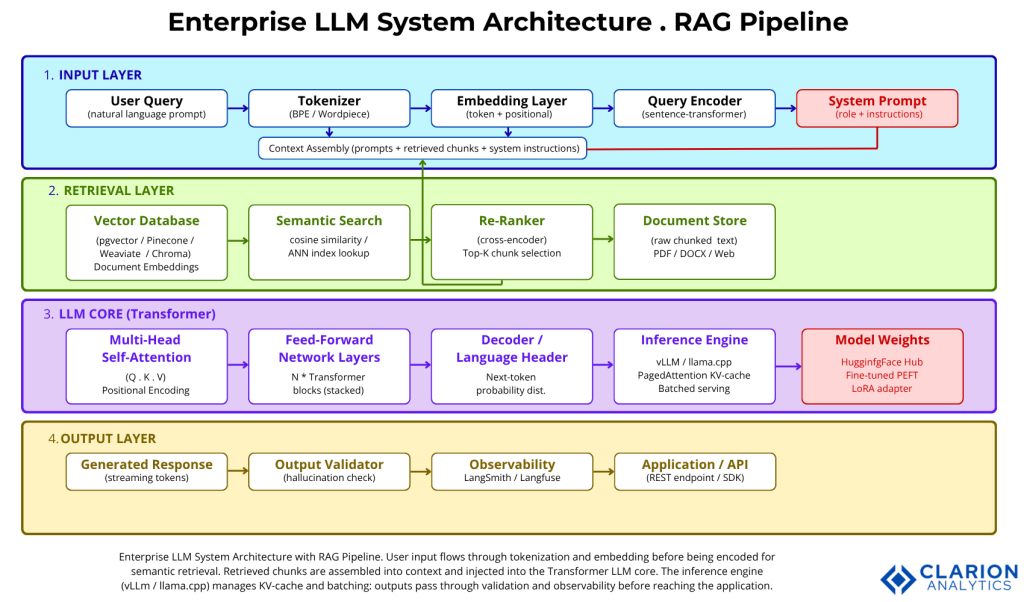

Figure 1 caption: User input flows through tokenization and embedding before being encoded for semantic retrieval. Retrieved document chunks are assembled with the system prompt into a context window injected into the Transformer LLM core. The inference engine (vLLM / llama.cpp) manages KV-cache and batching; outputs pass through validation and observability layers before reaching the application.

Three Integration Patterns: Prompting, RAG, and Fine-Tuning

The three patterns are not interchangeable. Each addresses a different problem, and choosing the wrong one wastes weeks of engineering effort.

Prompting works when the task is general, the required data fits inside the context window, and accuracy requirements are moderate. It requires almost no engineering overhead, but the model has no access to private or real-time data.

RAG (Retrieval-Augmented Generation) solves the private-data problem by retrieving relevant document chunks from a vector database at inference time and injecting them into the prompt. Gao et al. (2023) describe three RAG paradigms: Naive RAG (simple retrieve-then-generate), Advanced RAG (with re-ranking and query rewriting), and Modular RAG (composable pipelines with feedback loops). According to Menlo Ventures (2025), RAG-based models captured 38.41% of enterprise LLM revenue in 2025, the single largest deployment pattern.
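The retrieval half of Naive RAG can be sketched in a few lines. This example assumes a toy bag-of-words "embedding" and an in-memory list in place of a sentence-embedding model and a vector database; the function names and sample chunks are made up for illustration.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (a real system would use a trained model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)
    return ranked[:k]


chunks = [
    "Refunds are issued within 14 days of a return request.",
    "Our office is closed on public holidays.",
    "Shipping takes three to five business days.",
]
top = retrieve("How long do refunds take?", chunks, k=1)
print(top)
```

Swapping `embed` for a real embedding model and the list for a vector store changes the quality of the ranking, not the shape of the pipeline: embed the query, score the chunks, keep the top k.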

Fine-tuning modifies the model’s weights using domain-specific examples. It can produce the highest accuracy on narrow tasks but requires labeled data, compute, and an ongoing retraining pipeline. Gartner (2025) projects that by 2027, organizations will deploy task-specific models at three times the volume of general-purpose LLMs.

“Most enterprise teams don’t need to fine-tune a model; they need to reduce hallucination, and RAG gets them there faster.”

| Option | Key Strength | Best Used When |
|---|---|---|
| Prompting | Zero setup, lowest cost | General tasks, public knowledge, rapid prototyping |
| RAG | Grounds responses in live private data; auditable citations | Enterprise QA, document search, knowledge bases with frequent updates |
| Fine-Tuning | Highest domain accuracy; smaller models possible | Narrow repeatable tasks with labeled data; compliance-sensitive outputs |

Code Snippet 2 – Source: langchain-ai/langchain · RAG chain with HuggingFace embeddings

This snippet wires a Hugging Face-hosted model into LangChain’s message interface. Injecting {retrieved_chunk} from a vector search upstream is the core of a RAG pipeline. The "Answer only from the provided context" system instruction is one of the most effective hallucination-reduction techniques available without touching model weights.
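The prompt-assembly step can be illustrated without any framework. The sketch below is a plain-Python stand-in, not LangChain code: `SYSTEM_TEMPLATE` and `build_rag_messages` are hypothetical names, and the resulting message list would be handed to whichever chat interface you use.

```python
# Hypothetical template; {retrieved_chunk} is filled with text from vector search.
SYSTEM_TEMPLATE = (
    "Answer only from the provided context. "
    "If the context does not contain the answer, say you do not know.\n\n"
    "Context:\n{retrieved_chunk}"
)


def build_rag_messages(question: str, retrieved_chunks: list[str]) -> list[dict]:
    """Assemble system and user messages with retrieved text injected as context."""
    # Join chunks with a visible separator so the model can tell them apart.
    context = "\n---\n".join(retrieved_chunks)
    return [
        {"role": "system", "content": SYSTEM_TEMPLATE.format(retrieved_chunk=context)},
        {"role": "user", "content": question},
    ]


messages = build_rag_messages(
    "How long do refunds take?",
    ["Refunds are issued within 14 days of a return request."],
)
print(messages[0]["content"])
```

Keeping the grounding instruction in the system message and the question in the user message means the instruction stays fixed while questions and retrieved chunks vary per request.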

The Production Stack: Libraries, Frameworks, and Infrastructure

A mature LLM production stack has four layers. Each has dominant open-source options with proven enterprise adoption.

Model access – huggingface/transformers (140k+ stars) is the standard. It abstracts model loading, tokenization, and inference across hundreds of model families. Databricks (2024) reports that 76% of enterprise LLM users choose open-source models, with Llama variants dominating.

Orchestration – langchain-ai/langchain (~100k stars) provides composable chains, agent runtimes, and first-class RAG tooling. LlamaIndex is the main alternative, with stronger defaults for document-heavy retrieval architectures.

Inference serving – vllm-project/vLLM (~45k stars, weekly releases) uses PagedAttention to manage KV-cache memory efficiently, delivering 2-4x throughput improvements over naive serving. It is a common choice for high-throughput, multi-GPU production deployments.

Vector storage – pgvector, Pinecone, Weaviate, and Chroma are the main options. Databricks (2024) reports 377% year-over-year growth in vector database adoption, an infrastructure signal that RAG is no longer experimental.

Implementation Guidance: From First API Call to Scalable System

Teams building LLM applications typically follow a five-step progression that keeps scope manageable and failure modes visible at every stage.

Step 1 – Baseline the model. Make direct API calls with representative prompts. Measure output quality manually before writing any application code. This catches model selection mistakes before they propagate downstream.

Step 2 – Evaluate before you build. Define 20-50 test cases with expected outputs. Run automated evals (RAGAS for RAG pipelines, LLM-as-judge for generation quality) from the start. Widely cited industry figures put the failure rate for GenAI programs as high as 95%, and skipping systematic evaluation before scaling is a recurring factor.

Step 3 – Add retrieval. Connect a vector database, chunk your documents, embed with a sentence transformer model, and wire the retrieval step into LangChain or LlamaIndex. Test retrieval precision independently before combining it with generation.

Step 4 – Instrument everything. Log prompts, retrieved chunks, and outputs. Trace latency per pipeline component. LangSmith and Langfuse both integrate with LangChain out of the box. Without observability, production debugging becomes guesswork.

Step 5 – Optimize for cost and latency. Swap large models for smaller task-specific ones where accuracy holds. Use vLLM for high-concurrency endpoints. Cache common retrieval results. Gartner (2025) predicts 90% of enterprise software engineers will use AI code assistants by 2028; the infrastructure to serve them reliably requires intentional capacity planning from day one.
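The evaluation and instrumentation steps above can start very small. The sketch below assumes a hypothetical `EvalCase` format with a simple substring check and a `fake_system` stand-in for the real pipeline; tools such as RAGAS or an LLM-as-judge would replace the substring check once the harness exists.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    prompt: str
    must_contain: str  # simplest possible expectation: a required substring


def run_evals(system: Callable[[str], str], cases: list[EvalCase]) -> dict:
    """Run every case, recording pass/fail and latency per call."""
    results = []
    for case in cases:
        start = time.perf_counter()
        output = system(case.prompt)
        latency = time.perf_counter() - start
        passed = case.must_contain.lower() in output.lower()
        results.append({"prompt": case.prompt, "passed": passed, "latency_s": latency})
    pass_rate = sum(r["passed"] for r in results) / len(results) if results else 0.0
    return {"pass_rate": pass_rate, "results": results}


def fake_system(prompt: str) -> str:
    """Stand-in for the real LLM pipeline so the harness runs offline."""
    if "refund" in prompt.lower():
        return "Refunds are issued within 14 days."
    return "I do not know."


cases = [
    EvalCase("What is the refund window?", "14 days"),
    EvalCase("Who founded the company?", "I do not know"),
    EvalCase("What is the shipping time?", "five business days"),
]
report = run_evals(fake_system, cases)
print(f"pass rate: {report['pass_rate']:.2f}")
```

Running the same cases after every prompt, model, or retrieval change turns quality regressions into a failing number instead of a production incident.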

“The 95% of GenAI pilots that never reach production share one trait: they skipped evaluation before scaling.”

Frequently Asked Questions

What is the difference between NLP and an LLM? NLP is the field of techniques for processing human language: tokenization, parsing, classification, and entity recognition. An LLM is a specific type of model built on NLP techniques, pre-trained on vast text data. NLP defines the tasks; LLMs are a powerful, general-purpose way to perform many of them simultaneously.

How does the Transformer attention mechanism work? Self-attention computes a relevance score between every token pair in a sequence. Each token generates a query, a key, and a value vector. Queries from one token are matched against keys from all others; the results weight the value vectors to produce a context-aware representation. Multi-head attention runs this process in parallel across several representation subspaces.
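As a rough illustration of that answer, here is single-head scaled dot-product attention in plain Python. It assumes the query, key, and value vectors are already computed; the learned projection matrices that produce them, the causal mask, and the multi-head split are all omitted to keep the sketch short.

```python
import math


def softmax(xs: list[float]) -> list[float]:
    """Numerically stable softmax over a list of scores."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention: softmax(q . k / sqrt(d_k)) weights the values."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Relevance score of this query against every key.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # Context-aware representation: weighted sum of the value vectors.
        out = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
        outputs.append(out)
    return outputs


# Toy example: two identical keys, so the query attends to both equally.
result = attention(
    queries=[[1.0, 0.0]],
    keys=[[1.0, 1.0], [1.0, 1.0]],
    values=[[0.0, 2.0], [4.0, 6.0]],
)
print(result)
```

When the keys differ, the weights shift toward the keys that best match the query, and the output moves toward the corresponding value vectors.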

When should I use RAG instead of fine-tuning? Choose RAG when your knowledge base changes frequently, when you need citations for auditability, or when you lack labeled training data. Fine-tune when you need the model to adopt a specific output format or reasoning pattern that prompt engineering cannot reliably produce. Most teams reach production faster with RAG and fine-tune later if accuracy gaps remain.

Which open-source LLM library should I start with? Start with huggingface/transformers for model access and experimentation, and langchain-ai/langchain for application logic and RAG pipelines. Both have extensive documentation and active communities. Add vllm-project/vLLM when you need to serve multiple concurrent users with low latency.

How do I stop an LLM from hallucinating in production? Use RAG to ground responses in retrieved documents and instruct the model to answer only from provided context. Implement output validation (LLM-as-judge or rule-based checks) to catch unsupported claims before they reach users. Log and review failed cases systematically; hallucination patterns in production are domain-specific and fixable with targeted retrieval improvements.
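A rule-based check of the kind mentioned above might look like the following sketch. It assumes a crude lexical heuristic: flag any answer sentence whose words mostly do not appear in the retrieved context. The function names and the 0.6 threshold are arbitrary illustrations, and a check like this will miss paraphrases, so it complements an LLM-as-judge rather than replacing one.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercase word set used for overlap comparison."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def unsupported_sentences(answer: str, context: str, min_overlap: float = 0.6) -> list[str]:
    """Return answer sentences whose word overlap with the context is below the threshold."""
    ctx = tokens(context)
    flagged = []
    # Split on whitespace that follows sentence-ending punctuation.
    for sentence in re.split(r"(?<=[.!?])\s+", answer.strip()):
        words = tokens(sentence)
        if not words:
            continue
        overlap = len(words & ctx) / len(words)
        if overlap < min_overlap:
            flagged.append(sentence)
    return flagged


context = "Refunds are issued within 14 days of a return request."
answer = "Refunds are issued within 14 days. Customers also receive a free gift card."
flagged = unsupported_sentences(answer, context)
print(flagged)
```

Flagged sentences can be blocked, rewritten, or logged for review, which feeds directly into the systematic review of failed cases.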

Three Principles to Build LLM Systems That Hold Up

The NLP fundamentals that power large language models are not obscure theory; they are the control surface for every developer working with these systems. Three things matter most.

First, know the architecture. Self-attention, tokenization, and positional encoding are not implementation details you can ignore; they determine context limits, failure modes, and cost structure. Second, match the pattern to the problem. Prompting, RAG, and fine-tuning solve different problems; reaching for fine-tuning before validating RAG is the most common source of wasted engineering effort. Third, instrument before you scale. Evaluation and observability are not optional phases; they are the mechanism that converts a prototype into a production system.

The $37 billion enterprises spent on generative AI in 2025 (Menlo Ventures) will compound only for teams that understand what they are building. The question worth sitting with: which layer of your current LLM stack is a black box you cannot debug?