What Is Agentic AI?

Agentic AI refers to software systems powered by large language models that can perceive their environment, plan multi-step strategies, call external tools, and execute tasks autonomously without constant human direction. Unlike chatbots that respond to single prompts, agentic systems pursue goals across extended workflows. They can write code, query APIs, analyze results, and trigger downstream actions, all in a single automated loop.

The Business Case Is No Longer Theoretical

Startups deploying agentic AI report productivity gains of 37% in targeted workflows and reclaim 40+ hours per team each month, clearing the backlog of repetitive tasks that crowds out strategic work.

The numbers behind this shift are hard to ignore. McKinsey’s State of AI Global Survey (2025) found that 88% of organizations now use AI regularly in at least one business function. More striking, McKinsey estimates that generative AI, the technology AI agents are built on, could add $2.6 to $4.4 trillion in annual value across business use cases. That is not a forecast for 2040. It is a projection for a market already in motion.

For startups, the opportunity is asymmetric. Large incumbents face the innovator’s dilemma: going agent-native risks cannibalizing their existing product lines. Startups face no such constraint. They can redesign workflows from scratch around autonomous systems and operate with the efficiency of a much larger organization. Deloitte (2025) predicts that 25% of companies using generative AI will launch agentic pilots in 2025, growing to 50% by 2027. The window to move early and build durable advantages is open now.

“By 2028, at least 15% of daily work decisions will be made autonomously by AI agents, up from zero in 2024.”

What Separates Agentic AI from Automation You Already Have

Traditional RPA breaks when conditions change. Agentic AI handles ambiguity by reasoning: it reads context, selects tools, and recovers from partial failures without a human rewriting rules.

Most teams building automation today use one of three patterns: rule-based RPA (Robotic Process Automation), chatbots, or data pipelines. Each requires a human to define every possible state in advance. Change the input format, rename an API field, or add a new edge case, and the automation breaks.

Agentic AI works differently. According to the arXiv paper “Agentic Artificial Intelligence: Architectures, Taxonomies, and Evaluation” (2026), modern LLM-based agents use a cognitive pipeline: perception, planning, tool use, memory, and collaboration. The agent reasons about what it sees, selects tools from a registry, and adapts when something changes. It does not need every state predefined; it infers what to do next.
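A minimal sketch of that loop, assuming a stub planner stands in for the real LLM call: the agent asks the planner for the next step, looks the tool up in a registry, runs it, and stores the result in memory for the next planning round. The tools `lookup_order` and `send_email`, and all function names here, are hypothetical and not any framework's API.

```python
from __future__ import annotations
from typing import Callable

# Tool registry: maps a tool name to a plain Python function (hypothetical tools).
TOOLS: dict[str, Callable[..., str]] = {
    "lookup_order": lambda order_id: f"order {order_id}: shipped",
    "send_email": lambda to, body: f"email sent to {to}",
}

def stub_planner(goal: str, memory: list[str]) -> tuple[str, dict] | None:
    """Stand-in for the LLM reasoning step: returns the next (tool, args), or None when done."""
    if not memory:
        return "lookup_order", {"order_id": "A-17"}
    if len(memory) == 1:
        return "send_email", {"to": "customer@example.com", "body": memory[-1]}
    return None  # goal satisfied

def run_agent(goal: str, max_steps: int = 5) -> list[str]:
    memory: list[str] = []  # observations accumulated across steps
    for _ in range(max_steps):
        step = stub_planner(goal, memory)  # plan
        if step is None:
            break
        name, args = step
        tool = TOOLS.get(name)
        if tool is None:
            # Record the error so the next planning step can see it and choose differently.
            memory.append(f"error: no tool named {name}")
            continue
        memory.append(tool(**args))  # act, then perceive the result
    return memory

print(run_agent("tell the customer where order A-17 is"))
# ['order A-17: shipped', 'email sent to customer@example.com']
```

Swapping `stub_planner` for a model call is where real reasoning would enter; the registry, the memory list, and the step limit stay the same.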

The practical difference is significant. Gartner (2025) predicts agentic AI will autonomously resolve 80% of common customer-service issues by 2029, cutting operational costs by 30%. No RPA system delivers that breadth, because RPA cannot reason about novel inputs.

Five Startup Use Cases Generating Measurable ROI

The highest-ROI deployments in 2025 span autonomous SDR pipelines, customer-support resolution agents, AI software engineers, legal document analyzers, and supply-chain anomaly detection, each reducing cost-per-task by 60 to 80%.

Sales Development: The Autonomous SDR

B2B companies spend $50,000 to $150,000 per year on a single sales development representative. An autonomous SDR agent handles prospecting, outreach, lead qualification, appointment setting, and follow-up nurturing across email, LinkedIn, and phone for a fraction of that cost. Sweep raised $22.5M in 2025 specifically to automate CRM hygiene and pipeline nudges. The key technical requirement is maintaining conversation context across multiple touchpoints and knowing when to escalate to a human.
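The two requirements in that last sentence, shared context and escalation, can be sketched with plain data structures. This is an illustrative sketch under stated assumptions: the `ProspectThread` record, the keyword list, and the six-touch limit are hypothetical choices, and a production SDR agent would more likely ask the LLM to classify intent than match keywords.

```python
from __future__ import annotations
from dataclasses import dataclass, field

@dataclass
class Touchpoint:
    channel: str          # e.g. "email", "linkedin", "phone"
    text: str
    from_prospect: bool   # True for prospect replies, False for agent outreach

@dataclass
class ProspectThread:
    """One shared history per prospect, so every channel sees the same context."""
    prospect_id: str
    history: list[Touchpoint] = field(default_factory=list)

    def add(self, channel: str, text: str, from_prospect: bool) -> None:
        self.history.append(Touchpoint(channel, text, from_prospect))

# Hypothetical escalation policy; real thresholds would come from your sales team.
ESCALATION_KEYWORDS = ("pricing", "contract", "legal", "talk to a person")
MAX_AUTOMATED_TOUCHES = 6

def should_escalate(thread: ProspectThread) -> bool:
    """Hand off to a human rep on a buying or risk signal, or after too many automated touches."""
    outbound = sum(1 for t in thread.history if not t.from_prospect)
    if outbound >= MAX_AUTOMATED_TOUCHES:
        return True
    replies = [t.text.lower() for t in thread.history if t.from_prospect]
    return bool(replies) and any(k in replies[-1] for k in ESCALATION_KEYWORDS)

thread = ProspectThread("p-42")
thread.add("email", "Quick intro: we help teams automate CRM cleanup.", from_prospect=False)
thread.add("linkedin", "Interesting. What does pricing look like?", from_prospect=True)
print(should_escalate(thread))  # True
```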

Customer Support: Closing Tickets Without Humans

Companies handling 10,000 or more support tickets per month spend $100,000 to $500,000 annually on support staff. An AI agent resolving 60 to 70% of those tickets autonomously delivers measurable cost savings from month one. Salesforce Agentforce deployments (2024) at Wiley showed a 40% boost in case resolution rates and a 28% reduction in average handling time. The metric to track is autonomous resolution rate, not just deflection.
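The two metrics are easy to blur, so here is one possible way to compute both from ticket records. The field names and definitions are assumptions for illustration: deflection counts any ticket that never reached a human, while autonomous resolution also requires that the ticket was actually resolved and not reopened.

```python
from __future__ import annotations
from dataclasses import dataclass

@dataclass
class Ticket:
    escalated_to_human: bool
    resolved: bool
    reopened: bool

def deflection_rate(tickets: list[Ticket]) -> float:
    """Share of tickets that never reached a human (says nothing about whether they were solved)."""
    if not tickets:
        return 0.0
    return sum(not t.escalated_to_human for t in tickets) / len(tickets)

def autonomous_resolution_rate(tickets: list[Ticket]) -> float:
    """Share of tickets the agent closed on its own and that stayed closed."""
    if not tickets:
        return 0.0
    ok = sum(t.resolved and not t.escalated_to_human and not t.reopened for t in tickets)
    return ok / len(tickets)

sample = [
    Ticket(escalated_to_human=False, resolved=True, reopened=False),   # agent solved it
    Ticket(escalated_to_human=False, resolved=False, reopened=False),  # customer gave up
    Ticket(escalated_to_human=False, resolved=True, reopened=True),    # "solved", came back
    Ticket(escalated_to_human=True, resolved=True, reopened=False),    # a human solved it
]
print(deflection_rate(sample), autonomous_resolution_rate(sample))  # 0.75 0.25
```

In this sample, deflection looks healthy at 75% while only one ticket in four was truly resolved by the agent, which is the gap a deflection-only dashboard hides.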

Software Engineering: The AI Pair That Never Sleeps

Coding agents are the use case drawing the most venture capital. Anysphere (Cursor) tripled its valuation to $9B in 2025 while reaching over one million developers. In Cognition’s 2024 SWE-bench evaluation, Devin resolved 13.86% of real GitHub issues end-to-end without human assistance, far above the previous unassisted state of the art of 1.96%. For startups, coding agents compress the sprint cycle: a three-person team with capable coding agents can ship at the pace of a team twice its size.

Legal and Compliance: Structured Document Analysis at Scale

Harvey AI became one of the fastest-growing vertical AI agents by targeting legal workflows such as contract review, due diligence, and regulatory analysis. Active use of generative AI in law firms nearly doubled, from 14% in 2024 to 26% in 2025, and 45% of firms plan to make it central to their work within one year. The key architectural requirement is the hybrid symbolic-neural design flagged in the Springer Nature survey (2025): symbolic logic handles deterministic rules, while neural LLMs handle unstructured text.
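A toy version of that split, assuming a firm policy expressed as two regex rules and a placeholder where an LLM call would go: the deterministic checks produce an auditable violation list, while the neural step only summarizes. The rule names, patterns, and `neural_summarize` function are hypothetical.

```python
from __future__ import annotations
import re
from typing import Callable

# Symbolic layer: deterministic rules that must hold exactly (hypothetical firm policy).
RULES: dict[str, Callable[[str], bool]] = {
    "has_governing_law": lambda text: re.search(r"governed by the laws of", text, re.I) is not None,
    "no_unlimited_liability": lambda text: re.search(r"unlimited liability", text, re.I) is None,
}

def neural_summarize(text: str) -> str:
    """Placeholder for an LLM summarization call; here it just truncates the text."""
    return text[:80]

def review_contract(text: str) -> dict:
    violations = [name for name, check in RULES.items() if not check(text)]
    return {
        "violations": violations,           # deterministic and auditable
        "summary": neural_summarize(text),  # probabilistic; a lawyer should review it
        "needs_lawyer": bool(violations),
    }

clause = "This Agreement is governed by the laws of Delaware. Supplier accepts unlimited liability."
print(review_contract(clause)["violations"])  # ['no_unlimited_liability']
```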

Supply Chain: Real-Time Decision Agents

Supply chain disruption costs enterprises billions annually. Agentic systems continuously monitor supplier data, inventory levels, and demand signals, and trigger reorder or rerouting actions autonomously. Unlike static dashboards, these agents act: they call procurement APIs, notify logistics partners, and update ERP records, all without a human approving each step. Teams building this typically find that the hardest part is not the agent logic but integrating with legacy ERP systems over REST APIs.
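The decision core of such an agent can be small. This sketch assumes the textbook reorder-point formula (average daily demand times lead time, plus safety stock) and a simple z-score test for demand spikes; the returned action names stand in for hypothetical procurement and notification API calls, which, as noted above, is where most of the real effort goes.

```python
from __future__ import annotations
from statistics import mean, stdev

def reorder_point(daily_demand: list[float], lead_time_days: float, safety_stock: float) -> float:
    """Expected demand over the supplier lead time, plus a safety buffer."""
    return mean(daily_demand) * lead_time_days + safety_stock

def is_demand_spike(daily_demand: list[float], z_threshold: float = 3.0) -> bool:
    """Flag the latest day if it sits more than z_threshold std devs above the prior days."""
    history, latest = daily_demand[:-1], daily_demand[-1]
    sigma = stdev(history)  # needs at least two prior days
    return sigma > 0 and (latest - mean(history)) / sigma > z_threshold

def decide(on_hand: float, daily_demand: list[float],
           lead_time_days: float, safety_stock: float) -> list[str]:
    actions = []
    if on_hand <= reorder_point(daily_demand, lead_time_days, safety_stock):
        actions.append("create_purchase_order")  # would call a procurement API (hypothetical)
    if is_demand_spike(daily_demand):
        actions.append("notify_planner")         # surface the anomaly to a human
    return actions

print(decide(on_hand=80, daily_demand=[10, 12, 11, 9, 10, 11, 40],
             lead_time_days=5, safety_stock=20))
# ['create_purchase_order', 'notify_planner']
```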

“Startups deploying vertical AI agents report 3 to 5x higher retention rates than those using horizontal, general-purpose tools.”

The Architecture Inside a Production-Ready Agent System

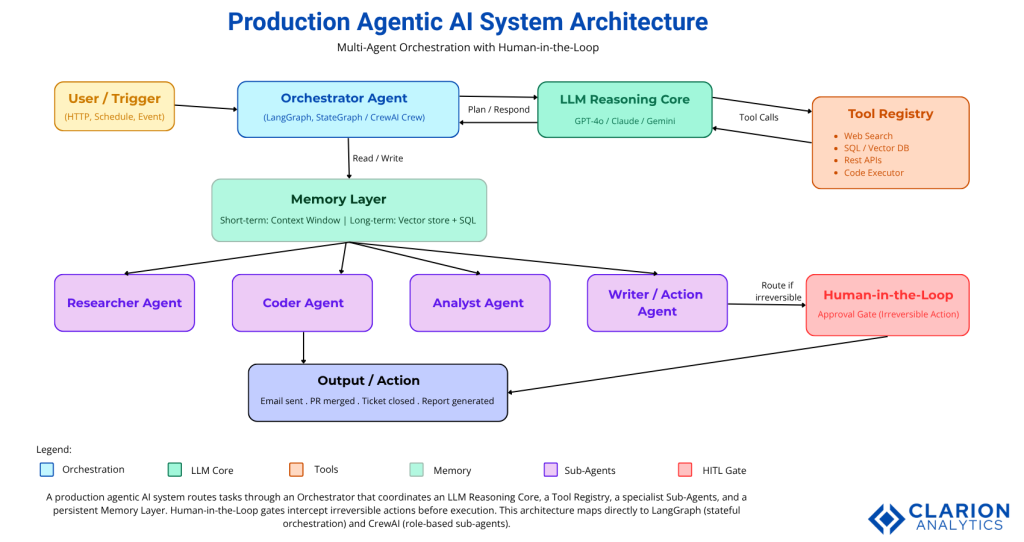

A production agentic system has five layers: a perception/input layer, an LLM reasoning core, a tool-use layer, a memory layer, and an orchestration layer managing multi-agent routing and state.

The arXiv paper on multi-agent orchestration (2026) formalizes this as a unified orchestration framework built on two protocols: the Model Context Protocol (MCP), which standardizes how agents connect to external tools, and the Agent-to-Agent Protocol (A2A), which governs how agents from different vendors communicate with each other. Together, these protocols act as an HTTP-like layer for agentic AI, enabling plug-and-play connectivity instead of custom integration work.

Figure 1: A production agentic AI system routes tasks through an Orchestrator that coordinates an LLM Reasoning Core, a Tool Registry, specialist Sub-Agents, and a persistent Memory Layer. Human-in-the-Loop gates intercept irreversible actions before execution. This architecture maps directly to LangGraph (stateful orchestration) and CrewAI (role-based sub-agents).
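The Human-in-the-Loop gate in Figure 1 reduces to a small check before execution. A minimal sketch, assuming each action carries an `irreversible` flag and that `approve` is a hypothetical callback (a Slack prompt, a review queue) supplied by the orchestrator; LangGraph, for example, provides its own interrupt mechanism for pausing a graph, which this sketch does not reproduce.

```python
from __future__ import annotations
from dataclasses import dataclass
from typing import Callable

@dataclass
class Action:
    tool: str
    args: dict
    irreversible: bool  # e.g. payments, deletions, outbound emails

def execute_with_gate(action: Action,
                      run: Callable[[Action], str],
                      approve: Callable[[Action], bool]) -> str:
    """Run reversible actions directly; hold irreversible ones until a human approves."""
    if action.irreversible and not approve(action):
        return f"blocked: {action.tool} awaiting human approval"
    return run(action)

def run(action: Action) -> str:        # stand-in executor
    return f"ran {action.tool}"

def deny_all(action: Action) -> bool:  # stand-in approver that always says no
    return False

print(execute_with_gate(Action("read_crm", {}, irreversible=False), run, deny_all))
# ran read_crm
print(execute_with_gate(Action("issue_refund", {"amount": 50}, irreversible=True), run, deny_all))
# blocked: issue_refund awaiting human approval
```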

Framework Comparison

| Framework | Key Strength | Best Used When |
|---|---|---|
| LangGraph | Fine-grained stateful orchestration, durable execution, conditional branching, human-in-the-loop checkpoints | You have complex workflows with multiple decision trees, long-running tasks, or enterprise-grade reliability requirements in production |
| CrewAI | Fastest time-to-prototype, role-based agent design, minimal boilerplate, 44k+ GitHub stars | You need a working multi-agent demo in under two weeks and your workflow maps to sequential role handoffs |
| Microsoft Agent Framework | Full Azure integration, SOC 2/HIPAA compliance, multi-language support (Python, C#, Java), formal SLAs | Your organization runs on Azure and needs enterprise support contracts, regulatory compliance, and production SLAs |
| OpenAI Agents SDK | Clean API, tight GPT-4o integration, built-in tracing, 19k+ GitHub stars since its March 2025 launch | You already pay for OpenAI models and want a lightweight, well-documented framework without heavy orchestration overhead |

“The organizations that win won’t just use AI agents, they’ll redesign their operating model to build around them.”

Choosing Your Stack: A Practical Guide for CTOs

Start with CrewAI to validate the use case in two weeks, then migrate to LangGraph when you hit the complexity ceiling. If you are Azure-native, go directly to Microsoft Agent Framework.

The AI agent framework landscape consolidated dramatically in 2025. Microsoft merged AutoGen and Semantic Kernel into a unified Agent Framework. The LangChain team now explicitly recommends LangGraph for building production agents. CrewAI raised $18M and reports powering agents at 60% of Fortune 500 companies. The chaos has resolved into a clear decision tree.

In practice, the selection comes down to three questions. First, how fast do you need a working prototype? CrewAI wins this race: its role-based abstraction ships in days, not weeks. Second, how complex is your workflow? If you need conditional branching, error recovery, human approval gates, or tasks running longer than 30 seconds, move to LangGraph. Third, what is your cloud platform? Azure-native organizations should evaluate Microsoft Agent Framework first; for mission-critical applications, its enterprise SLAs and compliance guarantees can justify the Microsoft lock-in.

One warning: most teams that start with CrewAI hit its ceiling 6 to 12 months in and face a painful rewrite to LangGraph. If your requirements include long-horizon tasks, multi-step conditional logic, or workflow state that must survive failures, build on LangGraph from day one.
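The three questions and the warning can be encoded as a small decision function. This is a sketch of the heuristic as this guide states it, with hypothetical field names, not a substitute for evaluating the frameworks yourself; it checks the Azure question first, following the advice to go directly to Microsoft Agent Framework in that case.

```python
from __future__ import annotations
from dataclasses import dataclass

@dataclass
class Requirements:
    azure_native: bool
    needs_branching_or_approval_gates: bool  # conditional logic, error recovery, HITL gates
    long_running_or_durable_state: bool      # long-horizon tasks, state that must survive failures

def pick_framework(req: Requirements) -> str:
    """Encode this guide's decision tree (a heuristic, not a rule)."""
    if req.azure_native:
        return "Microsoft Agent Framework"
    if req.needs_branching_or_approval_gates or req.long_running_or_durable_state:
        return "LangGraph"  # build on it from day one to avoid a later rewrite
    return "CrewAI"         # fastest path to a validated prototype

print(pick_framework(Requirements(azure_native=False,
                                  needs_branching_or_approval_gates=True,
                                  long_running_or_durable_state=False)))  # LangGraph
```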

The Three Failure Modes That Kill Agentic AI Projects

Gartner (2025) warns that over 40% of agentic AI projects will be canceled by 2027. The three dominant failure modes are unclear business value before build, no governance framework for tool permissions, and treating demo performance as production-ready.

Failure Mode 1: Building before validating business value. Gartner analyst Anushree Verma put it directly: most agentic AI projects right now are early-stage experiments driven by hype. The fix is to define a single, measurable outcome before writing a line of code. “Reduce support ticket resolution time by 40%” is a target. “Explore AI agents” is not.
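Writing the target down as a check makes it unambiguous. A minimal sketch, assuming a lower-is-better metric (resolution time, cost per ticket) and a baseline measured before the pilot; the function name and figures are illustrative.

```python
def target_met(baseline: float, current: float, target_reduction: float) -> bool:
    """True if `current` is at least `target_reduction` (0.40 means 40%) below `baseline`."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    reduction = (baseline - current) / baseline
    return reduction >= target_reduction

# Example: average resolution time fell from 10.0 h to 5.5 h, a 45% reduction.
print(target_met(baseline=10.0, current=5.5, target_reduction=0.40))  # True
```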

Failure Mode 2: No governance framework. Autonomous agents call APIs, write files, send emails, and execute code. Without scoped permissions and audit trails, a single hallucination can become a security incident. In 2025 survey data, 75% of technology leaders cite governance as their primary concern when deploying agentic AI. Implement OAuth 2.1 with token scoping, tool-level access controls, and complete action logging before you go to production.
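Tool-level access control and action logging can be sketched in a few lines. This assumes each tool maps to an OAuth-style scope string and that the agent's token has already been validated into a set of granted scopes; the scope names, the `REQUIRED_SCOPE` table, and the in-memory `AUDIT_LOG` are hypothetical stand-ins for a real authorization server and an append-only log store.

```python
from __future__ import annotations
import json
import time
from typing import Callable

AUDIT_LOG: list[str] = []  # in production: an append-only store, not a Python list

# Hypothetical mapping from tool name to the scope it requires.
REQUIRED_SCOPE = {
    "read_tickets": "tickets:read",
    "send_email": "email:send",
    "delete_record": "crm:delete",
}

def call_tool(agent_id: str, granted: set[str], tool: str,
              fn: Callable[..., str], **kwargs) -> str:
    """Check the agent's granted scopes before running a tool, and log every attempt."""
    needed = REQUIRED_SCOPE.get(tool)           # unknown tools have no scope: deny by default
    allowed = needed is not None and needed in granted
    AUDIT_LOG.append(json.dumps({
        "ts": time.time(), "agent": agent_id, "tool": tool,
        "args": kwargs, "allowed": allowed,
    }))
    if not allowed:
        raise PermissionError(f"{agent_id} may not call {tool} (requires {needed!r})")
    return fn(**kwargs)

print(call_tool("support-bot", {"tickets:read"}, "read_tickets",
                lambda limit: f"{limit} tickets", limit=5))  # 5 tickets
```

The log entry is written before the permission check raises, so refused calls leave an audit trail too.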

Failure Mode 3: Demo-to-production gap. Only 11% of agentic AI pilots make it into full production. The model demos fine, the slide looks good, and then the rollout runs into integration friction, latency budgets, and change-management resistance. Build for failure from the start: every agent action should have a retry policy, a timeout, and a fallback path to human handling.
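A sketch of that policy using only `asyncio`, assuming the agent action is an async callable and that connection errors and timeouts are the retryable failures. Which exceptions to retry and the backoff schedule are illustrative choices, and `fallback` stands for whatever hands the task to a human queue in your system.

```python
import asyncio
from typing import Awaitable, Callable

async def run_with_policy(
    action: Callable[[], Awaitable[str]],
    fallback: Callable[[], str],
    retries: int = 3,
    timeout_s: float = 10.0,
    backoff_s: float = 0.5,
) -> str:
    """Retry with a per-attempt timeout and exponential backoff; fall back to a human when exhausted."""
    for attempt in range(retries):
        try:
            # wait_for cancels the attempt on timeout, so a hung call does not linger.
            return await asyncio.wait_for(action(), timeout=timeout_s)
        except (asyncio.TimeoutError, ConnectionError):
            if attempt < retries - 1:
                await asyncio.sleep(backoff_s * 2 ** attempt)
    return fallback()

attempts = {"n": 0}

async def flaky_update() -> str:
    """Hypothetical action: fails on the first attempt, succeeds on the second."""
    attempts["n"] += 1
    if attempts["n"] == 1:
        raise ConnectionError("CRM unavailable")
    return "record updated"

print(asyncio.run(run_with_policy(flaky_update, lambda: "sent to human queue", backoff_s=0.01)))
# record updated
```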

Frequently Asked Questions About Agentic AI for Startups

What is the difference between agentic AI and a chatbot?

A chatbot responds to individual prompts and stops. An agentic AI system perceives its environment, plans a multi-step sequence of actions, calls external tools such as APIs or databases, and executes tasks without further human input. The core difference is autonomous goal pursuit versus reactive response.

Which AI agent framework should a startup choose in 2026?

Start with CrewAI for fast prototyping or LangGraph for complex stateful workflows. If your team is Azure-native, move directly to the Microsoft Agent Framework (AutoGen + Semantic Kernel). Match the framework to your workflow complexity before committing. Most teams regret over-engineering from day one.

What ROI can I realistically expect from autonomous AI agents?

Organizations implementing AI agent solutions report average productivity gains of 37% in targeted workflows, according to Forrester Research (2024). Gartner projects that agentic AI handling customer service could cut operational costs by 30% by 2029. Voice and support agents typically show the fastest payback, often within three months of production deployment.
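Payback claims are easy to sanity-check with back-of-envelope arithmetic. This sketch assumes support labor cost falls in proportion to the share of tickets the agent resolves on its own, which is a simplification; all inputs are hypothetical, chosen from the support-cost and resolution-rate ranges quoted earlier.

```python
def payback_months(annual_support_cost: float, autonomous_rate: float,
                   monthly_agent_cost: float, upfront_cost: float) -> float:
    """Months until cumulative net savings cover the upfront build cost."""
    monthly_savings = annual_support_cost / 12 * autonomous_rate - monthly_agent_cost
    if monthly_savings <= 0:
        return float("inf")  # the agent never pays for itself
    return upfront_cost / monthly_savings

# $300k/yr support spend, 60% autonomous resolution, $5k/month to run, $30k to build.
print(payback_months(300_000, 0.60, 5_000, 30_000))  # 3.0
```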

How do I prevent agentic AI projects from failing in production?

Gartner (2025) identifies three root causes of project cancellation: unclear ROI targets, inadequate governance, and integration complexity with legacy systems. Start with one high-value use case, instrument every agent action with tracing and audit logs, and add human-in-the-loop gates before any irreversible side-effects occur.

What is the Model Context Protocol (MCP) and why does it matter for agents?

MCP, introduced by Anthropic and broadly adopted through 2025, is a standard that lets any agent connect to external tools, databases, and APIs using a plug-and-play interface. It functions like HTTP for agents, enabling interoperability across frameworks and vendors without custom integration work per tool.
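Under the hood, MCP messages are JSON-RPC 2.0, and a tool invocation is a `tools/call` request carrying the tool name and its arguments. The sketch below builds such a request and reads the text content from a response. It omits the transport (stdio or HTTP) and the initialization handshake, and the `get_weather` tool and the sample response are hypothetical.

```python
import json
from itertools import count

_ids = count(1)  # JSON-RPC request ids must be unique per session

def tools_call_request(name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    })

def text_from_result(response: str) -> str:
    """Join the text content blocks of a tools/call response, raising on errors."""
    msg = json.loads(response)
    if "error" in msg:  # protocol-level failure, e.g. unknown method
        raise RuntimeError(msg["error"]["message"])
    result = msg["result"]
    text = "".join(b["text"] for b in result["content"] if b["type"] == "text")
    if result.get("isError"):  # the tool ran but reported a failure
        raise RuntimeError(text)
    return text

request = tools_call_request("get_weather", {"city": "Berlin"})
sample_response = json.dumps({
    "jsonrpc": "2.0", "id": 1,
    "result": {"content": [{"type": "text", "text": "12 C, light rain"}], "isError": False},
})
print(text_from_result(sample_response))  # 12 C, light rain
```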

Three Things Every CTO Needs to Know Right Now

Three facts should drive your next decision on agentic AI for startups.

First, the adoption window is narrowing fast. Gartner (August 2025) projects that 40% of enterprise applications will embed task-specific agents by the end of 2026, up from less than 5% in 2025. C-level executives have a three-to-six-month window to define their strategy before falling behind. For startups, the math is even sharper: early architecture decisions compound. Getting this right now beats catching up in 18 months.

Second, architecture and governance matter more than model selection. The best LLM in a poorly governed system will still get your project canceled. Pick a framework matched to your complexity level, instrument everything with traces and audit logs, and build human-in-the-loop gates before your first production deployment.

Third, the teams that win are those redesigning workflows around agents, not adding agents to existing workflows as an afterthought. The McKinsey concept of “Superagency” captures this: AI’s real payoff is not task automation in isolation but the amplification of human capability when people stop doing low-value repetitive work entirely.

Which one of your current workflows is most exposed to disruption by autonomous AI agents? Start there.