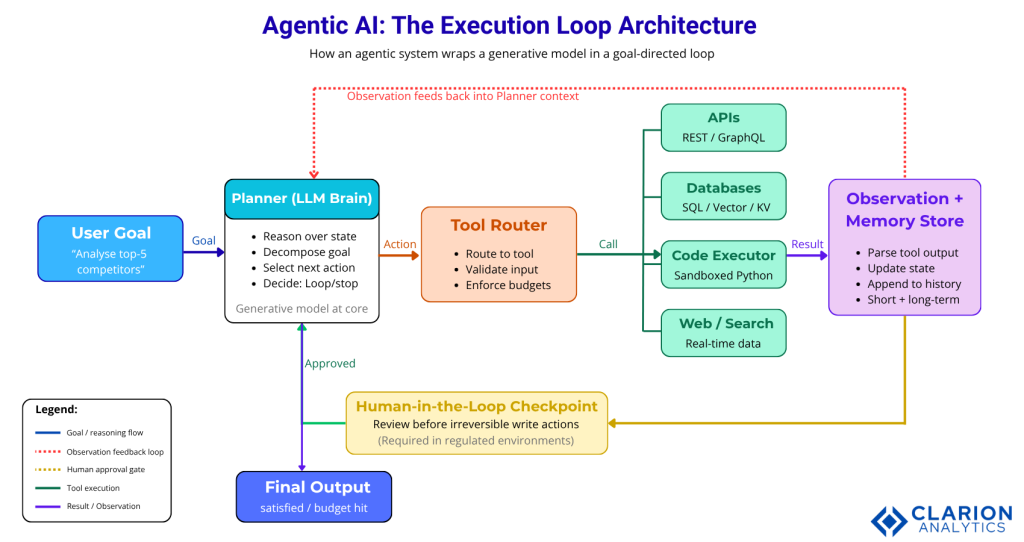

Generative AI transforms input into output, one turn at a time. Agentic AI goes further: it perceives a goal, plans a sequence of steps, calls tools, observes results, and loops until the task is complete. The difference is not model size but system architecture. An agentic system wraps a generative model inside a persistent, goal-directed execution loop that can act, fail, retry, and adapt.

The $4.4 Trillion Gap Between Generating and Doing

Generative AI creates content. Agentic AI completes goals. Most organisations have deployed the former, but most of the enterprise value comes from the latter.

McKinsey (2025) puts the annual value potential of generative and agentic AI at $2.6 to $4.4 trillion. Yet the same research found that 78% of organisations have deployed some form of generative AI while only 23% are actively scaling agentic systems. That gap is not a technology problem. It is an architecture problem.

The issue McKinsey calls the “gen AI paradox” is real: wide deployment, shallow impact. Chatbots answer questions. Copilots help individuals. But the highest-value workflows (credit-risk memos, multi-system customer resolution, end-to-end code review) require a system that acts, not one that merely responds.

Gartner (2025) projects that 40% of enterprise applications will include task-specific AI agents by end of 2026, up from less than 5% today. CTOs who do not define their agentic AI strategy in the next three to six months risk falling behind peers who already have.

How Generative AI Works and Where It Stops

A generative AI model takes a prompt and returns a completion. It is stateless between calls, has no ability to trigger external actions, and has no feedback loop. Every conversation starts fresh.

That architecture is exactly right for tasks like drafting a blog post, generating a code suggestion, or summarising a meeting transcript. The model is powerful at synthesis and generation. Its weakness is persistence and action.

When you ask a generative AI system to “research our top five competitors and update the CRM,” it can write you a plan. But it cannot execute it. No memory carries across API calls. No tool writes to Salesforce. No loop checks whether the task finished.

“Deploying a chatbot is not building an agent. One answers questions; the other completes goals.”

This is the ceiling of pure generative AI. It is a powerful component in a system, not the system itself. The moment a workflow requires more than one step, external data, or a decision that depends on a previous result, you need an agentic layer.

What Makes AI Truly Agentic

An agentic system adds four capabilities on top of a generative model: persistent memory, tool use, multi-step planning, and a feedback loop that evaluates its own output before deciding what to do next.

The foundational academic survey by Wang et al. (2024), updated in Frontiers of Computer Science, defines the key subsystems as: (1) a memory backend that persists state across steps, (2) a planning module that decomposes goals into sub-tasks, (3) a tool executor that interacts with APIs, databases, and code environments, and (4) a reflection module that reviews outputs and retries failures.
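The four subsystems can be sketched in plain Python to show how they fit together. This is a minimal illustration under stated assumptions, not the survey's reference design: the names `Memory`, `plan`, `execute`, `reflect`, and `run_agent` are hypothetical, and the planner and tools are hard-coded stand-ins for a model and real APIs.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Memory:
    """Memory backend: persists notes across steps (hypothetical stand-in)."""
    steps: list[str] = field(default_factory=list)

    def remember(self, note: str) -> None:
        self.steps.append(note)


def plan(goal: str) -> list[str]:
    """Planning module: decompose a goal into sub-tasks.
    Hard-coded here; a real system would ask the model."""
    return [f"lookup:{goal}", f"summarise:{goal}"]


# Tool executor: tool names mapped to plain functions standing in for APIs.
TOOLS: dict[str, Callable[[str], str]] = {
    "lookup": lambda arg: f"raw data about {arg}",
    "summarise": lambda arg: f"summary of {arg}",
}


def execute(task: str) -> str:
    name, arg = task.split(":", 1)
    return TOOLS[name](arg)


def reflect(result: str) -> bool:
    """Reflection module: accept or reject an output (trivial check here)."""
    return bool(result.strip())


def run_agent(goal: str, max_retries: int = 2) -> Memory:
    memory = Memory()
    for task in plan(goal):
        for _ in range(max_retries + 1):
            result = execute(task)
            if reflect(result):
                memory.remember(f"{task} -> {result}")
                break
        else:
            # Every attempt was rejected by the reflection check.
            memory.remember(f"{task} -> FAILED")
    return memory


memory = run_agent("competitor pricing")
for step in memory.steps:
    print(step)
```

The point of the sketch is the separation of concerns: each subsystem can be swapped (a vector store for `Memory`, a model call for `plan`) without touching the loop in `run_agent`.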

A more recent taxonomy from Kapoor et al. (2025, arXiv) adds Perception (how the agent reads its environment) and Collaboration (how agents share state in multi-agent systems). Together, these components produce a system that is qualitatively different from a chat interface.

Figure 1: The Agentic AI execution loop. A user goal enters the Planner (LLM Brain), which routes to the Tool Router. The Tool Router dispatches calls to External Tools (APIs, Databases, Code Executors). Observations return to the Memory Store, which updates the Planner context. A Human-in-the-Loop checkpoint after the Observation stage allows human review before consequential actions are confirmed. The loop continues until the goal state is satisfied or a budget limit is reached.

Real-World Use Cases: Where Each Paradigm Wins

Generative AI excels at drafting, summarising, and answering questions. Agentic AI excels at multi-step workflows where a task requires retrieving data from several systems, making decisions, and writing results back.

In practice, some of the clearest wins come from finance. McKinsey (2025) documented a retail bank that restructured its credit-risk memo process: relationship managers had spent weeks manually pulling data from ten sources. An agentic system now extracts data, drafts memo sections, generates confidence scores, and flags follow-up questions. The result was a 20 to 60 percent productivity gain and 30 percent faster credit turnaround.

Software engineering is another high-value domain. A global bank, also cited by McKinsey, cut its IT modernisation timeline by more than 50% by deploying agents to assist engineering teams. Another firm used multi-agent systems to interpret complex market data, unlocking $3 million in projected annual savings.

These are not isolated experiments. Deloitte (2025) projects that 50% of enterprises using generative AI will deploy autonomous agents by 2027, up from 25% in 2025. Teams building these systems typically find that the hardest part is not the model. It is wiring the memory store and tool boundaries correctly.

Code Snippet 1: LangGraph – Defining a Stateful Agent Loop

Source: langchain-ai/langgraph, examples/agent_executor/base.ipynb

This snippet shows the core architectural difference between agentic and generative AI. The conditional edge looping from “llm_step” back through “tool_step” is what makes the system agentic: the model observes tool results and decides whether to continue acting or finish. A plain llm.invoke() call has no such loop. Every developer building on LLMs should understand this pattern before adding any agent framework.
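The conditional-edge pattern described above can be sketched in plain Python without any framework. This is an illustrative sketch only and does not use LangGraph's API: `run_graph`, `route_after_llm`, and the state keys are hypothetical names, and `llm_step` is a hard-coded stand-in for a model call.

```python
from typing import Callable

State = dict  # shared state passed between nodes


def llm_step(state: State) -> State:
    """Stand-in for a model call: request a tool until an observation exists."""
    if not state["observations"]:
        state["pending_tool"] = ("search", state["goal"])
    else:
        state["pending_tool"] = None
        state["answer"] = f"Done: {state['observations'][-1]}"
    return state


def tool_step(state: State) -> State:
    """Run the requested tool and record the observation."""
    name, arg = state["pending_tool"]
    state["observations"].append(f"{name} result for {arg!r}")
    return state


def route_after_llm(state: State) -> str:
    """Conditional edge: continue to the tool node, or finish."""
    return "tool_step" if state["pending_tool"] else "END"


NODES: dict[str, Callable[[State], State]] = {
    "llm_step": llm_step,
    "tool_step": tool_step,
}


def run_graph(goal: str, max_steps: int = 10) -> State:
    state: State = {"goal": goal, "observations": [],
                    "pending_tool": None, "answer": None}
    node = "llm_step"
    for _ in range(max_steps):  # hard cap so the loop cannot run away
        state = NODES[node](state)
        if node == "llm_step":
            node = route_after_llm(state)
            if node == "END":
                break
        else:
            node = "llm_step"  # fixed edge: tool results go back to the model
    return state


final = run_graph("latest pricing")
print(final["answer"])
```

A plain single-call design corresponds to running `llm_step` once and returning; the routing function and the edge back from `tool_step` are what turn it into a loop.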

“Agentic AI high performers are three times more likely than peers to be scaling agents across the organisation.” (McKinsey, 2025)

Choosing a Framework: LangGraph, AutoGen, or CrewAI

LangGraph gives the most explicit control for complex branching workflows. AutoGen is best for multi-agent conversation in Microsoft Azure environments. CrewAI is the fastest path to working role-based multi-agent teams with minimal boilerplate.

The table below compares the three leading open-source frameworks plus the baseline of using generative AI with no agent layer.

| Option | Key Strength | Best Used When |
|---|---|---|
| LangGraph | Stateful graph orchestration, explicit conditional branching, full debugging control | Complex multi-step workflows requiring deterministic logic and audit trails in regulated environments |
| Microsoft AutoGen | Multi-agent conversation loops, native Azure identity and security integration | Collaborative specialist agents, organisations already on the Microsoft Azure stack |
| CrewAI | Role-based agent teams, minimal boilerplate, 5.76x faster than LangGraph on certain task types | Rapid prototyping, content pipelines, research summarisation, teams new to agentic development |
| Generative AI (no agent layer) | Simplicity, lowest latency, easiest to govern and predict | Single-turn Q&A, document drafting, summarisation, and tasks with no external action required |

Code Snippet 2: CrewAI – Defining Agents, Tasks, and a Crew

Source: crewAIInc/crewAI, docs/concepts/crews.mdx

CrewAI shows how specialist agents replace hand-coded logic with role definitions. The role, goal, backstory, and tools fields define each agent’s behaviour without explicit control-flow code. This maps directly to enterprise use cases: replace “researcher” with “compliance checker” and “writer” with “report generator” and you have the outline of a regulatory filing agent. CrewAI reports 100,000+ certified developers and claims execution up to 5.76x faster than LangGraph on certain task types.
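The role-definition idea can be illustrated without the library. The sketch below is plain Python and is not CrewAI's API: `RoleAgent`, `RoleTask`, `run_crew`, and `fetch_filings` are hypothetical names chosen to mirror the role, goal, backstory, and tools fields, and no model is called.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class RoleAgent:
    """Hypothetical role-defined agent; behaviour comes from its fields."""
    role: str
    goal: str
    backstory: str
    tools: list[Callable[[str], str]] = field(default_factory=list)

    def perform(self, description: str, context: str) -> str:
        # A real agent would pass role, goal, and backstory to a model as
        # instructions. Here we only apply each tool and tag the output.
        output = context or description
        for tool in self.tools:
            output = tool(output)
        return f"[{self.role}] {output}"


@dataclass
class RoleTask:
    description: str
    agent: RoleAgent


def run_crew(tasks: list[RoleTask]) -> str:
    """Run tasks in order, passing each output to the next task as context."""
    context = ""
    for task in tasks:
        context = task.agent.perform(task.description, context)
    return context


def fetch_filings(text: str) -> str:
    """Stand-in tool; a real one would query a document store."""
    return f"{text} + 3 filings retrieved"


checker = RoleAgent("compliance checker", "Find rule breaches",
                    "Former auditor", [fetch_filings])
reporter = RoleAgent("report generator", "Write the filing summary",
                     "Technical writer")

result = run_crew([
    RoleTask("Review Q3 disclosures", checker),
    RoleTask("Draft the regulatory report", reporter),
])
print(result)
```

Swapping the two `RoleAgent` definitions for a researcher and a writer changes what the pipeline does without changing `run_crew`, which is the property the role-based approach relies on.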

Building Agentic Systems That Survive Production

The three most common failure modes are prompt injection, hallucination-in-action, and uncontrolled cost loops. Guarding against them requires guardrails at the tool boundary, output verification steps, and per-agent budget caps.

Gartner (June 2025) predicts that more than 40% of agentic AI projects will be cancelled by end of 2027. The cause is almost never the model. It is escalating compute costs, absent ROI metrics, and inadequate risk controls. Of the 3,400+ practitioners surveyed, only 19% had made significant investments; 42% were making conservative bets; 31% were waiting.

The academic literature supports a focused implementation approach. Kapoor et al. (2025) identify prompt injection (malicious content in tool outputs hijacking agent behaviour) and hallucination-in-action (a model taking a wrong real-world action) as the two highest-severity failure modes. Both require tool-boundary validation and sandboxed execution environments.

In practice, teams building these systems typically find that three governance controls make the biggest difference: (1) a hard token budget per agent invocation, (2) a human-in-the-loop checkpoint before any write action, and (3) structured output schemas that prevent the model from returning free text where a database expects a typed field.
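A minimal sketch of how the three controls might sit in front of a single write action is shown below. Everything here is an assumption made for illustration: `TokenBudget`, `CrmUpdate`, `parse_update`, `guarded_write`, and the allowed status values are hypothetical, and the reviewer is a plain callback rather than a real approval workflow.

```python
from dataclasses import dataclass
from typing import Callable


class BudgetExceeded(Exception):
    """Raised when an invocation would exceed its token budget."""


@dataclass
class TokenBudget:
    limit: int
    used: int = 0

    def charge(self, tokens: int) -> None:
        if self.used + tokens > self.limit:
            raise BudgetExceeded(f"{self.used + tokens} > {self.limit}")
        self.used += tokens


@dataclass(frozen=True)
class CrmUpdate:
    """Typed schema for a write action (hypothetical fields)."""
    account_id: int
    status: str


ALLOWED_STATUS = {"active", "churned", "prospect"}


def parse_update(raw: dict) -> CrmUpdate:
    """Reject model output that does not fit the schema."""
    account_id = raw.get("account_id")
    status = raw.get("status")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if not isinstance(account_id, int) or isinstance(account_id, bool):
        raise ValueError("account_id must be an integer")
    if status not in ALLOWED_STATUS:
        raise ValueError(f"status must be one of {sorted(ALLOWED_STATUS)}")
    return CrmUpdate(account_id, status)


def guarded_write(raw: dict, budget: TokenBudget, tokens: int,
                  approve: Callable[[CrmUpdate], bool]) -> str:
    budget.charge(tokens)        # control 1: hard token budget
    update = parse_update(raw)   # control 3: structured output schema
    if not approve(update):      # control 2: human-in-the-loop checkpoint
        return "rejected by reviewer"
    # A real system would perform the write here.
    return f"wrote {update.status} to account {update.account_id}"


budget = TokenBudget(limit=1000)
outcome = guarded_write({"account_id": 42, "status": "active"},
                        budget, 300, approve=lambda update: True)
print(outcome)
```

The ordering is a design choice: the budget check runs first because it is cheapest, and schema validation runs before the reviewer sees anything so that a human is only asked to approve well-formed actions.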

“Gartner (2025) predicts over 40% of agentic AI projects will be cancelled by 2027. The cause is almost never the model; it is missing governance.”

The Springer survey (Masterman et al., 2025) adds a useful architectural insight: symbolic, rule-based approaches remain the correct choice for safety-critical domains (healthcare, robotics), while neural/generative orchestration dominates data-rich, adaptive use cases (finance, marketing). Do not default to neural agents for a process that requires deterministic guarantees.

Frequently Asked Questions

What is the main difference between agentic AI and generative AI?

Generative AI takes a prompt and returns a single completion. It is stateless and cannot take actions. Agentic AI wraps a generative model in a persistent loop that can plan, call external tools, observe results, and iterate until a goal is met. The difference is architectural, not a matter of model capability.

When should I use an AI agent instead of a standard LLM call?

Use an agent when a task requires more than one step, depends on external data, or needs to write results back to a system. Single-turn tasks like drafting or summarising are better served by a direct LLM call. Agents add latency and cost; apply them only where the workflow genuinely requires autonomy and iteration.

What are the best open-source frameworks for building agentic AI systems?

LangGraph is best for complex, stateful workflows with conditional branching. Microsoft AutoGen is strongest for multi-agent collaboration in Azure environments. CrewAI is the fastest path to role-based multi-agent systems with minimal boilerplate. All three are actively maintained on GitHub with strong enterprise adoption.

How do I stop an AI agent from going into a runaway loop?

Set a hard maximum on the number of agent iterations and a token-budget cap per invocation. Add a human-in-the-loop checkpoint before any irreversible write action. Use structured output schemas so the model cannot return free-form text where your tool expects a typed input. Log every tool call for post-hoc audit.

Is agentic AI safe to deploy in regulated industries like banking or healthcare?

Yes, with the right architecture. Symbolic or rule-based orchestration layers are preferable for deterministic, safety-critical processes. Neural agents are well-suited to data-rich, adaptive tasks. In both cases, human-in-the-loop checkpoints, sandboxed tool execution, and output verification are non-negotiable before deploying to production.

Where to Take This Next

Three things stand out from this comparison. First, the architectural gap between generative and agentic AI is a loop, a memory store, and a set of tool boundaries. Second, the value is concentrated in vertical, workflow-level use cases, not in horizontal copilots. Third, governance failures (escalating cost, missing ROI measurement, and weak risk controls) will kill more agentic projects by 2027 than any model limitation.

The organisations pulling ahead are not necessarily running better models. They are the ones that redesigned their workflows around agents, established hard budget controls, and put a human-in-the-loop checkpoint before every consequential action.

The question worth sitting with is this: if 33% of enterprise software applications will include agentic AI by 2028, as Gartner projects, which of your core workflows are you redesigning today?

“The organisations capturing outsized AI returns are not the ones with the best models. They are the ones that redesigned their workflows around agents.”