Document intelligence in financial services refers to the use of AI, including OCR, large language models (LLMs), and agentic workflows, to automatically ingest, classify, extract, and verify information from KYC identity documents, customer contracts, and regulatory filings. Such a system routes extracted data to downstream compliance engines and, without manual intervention, writes every decision step to an immutable, timestamped audit log designed to meet regulatory examination requirements.

Why Manual KYC Review Is Costing You More Than You Think

AI KYC document review automation is no longer a future-state ambition for financial institutions. It is the difference between onboarding a client in 24 hours and losing them to a competitor who can.

According to McKinsey (2025), banks assign 10 to 15 percent of all full-time staff to KYC and AML activities alone. Despite that investment, the financial industry detects only around 2 percent of global financial crime flows. Spending rises by up to 10 percent per year in advanced markets, yet the detection ceiling barely moves. That is not a resource problem. It is a process problem.

The root cause is well understood. Compliance teams collect documents manually, reconcile data across fragmented systems, and process alerts one by one. Deloitte’s 2025 EMEA Model Risk Management Survey found that 58 percent of banks already deploy AI for KYC and AML, making it the single most common AI use case in banking. The question is no longer whether to automate, but how to do it in a way that satisfies regulators and survives examination.

The audit trail sits at the centre of that question. A system that automates document review but cannot prove what it decided, when it decided it, and on what basis is not compliant. It is a liability.

“A single AI agent can supervise the KYC review of 20 or more documents simultaneously, with every decision recorded for regulators.”

What AI Document Intelligence Actually Does in a KYC Workflow

AI document intelligence ingests raw document images, applies OCR and layout analysis to extract structured fields, classifies the document type, cross-validates extracted data against watchlists and internal records, and writes a timestamped decision log. For routine files, no human reviewer touches the document; only exceptions reach a person.
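The watchlist cross-validation step is easiest to picture as fuzzy name matching. The sketch below uses only Python's standard library (`difflib.SequenceMatcher`); the watchlist entries, the accent-stripping normalisation, and the 0.85 threshold are illustrative assumptions, and production screening engines add aliases, transliteration, and date-of-birth matching on top.

```python
import unicodedata
from difflib import SequenceMatcher

# Hypothetical watchlist; real entries come from sanctions-list providers.
WATCHLIST = ["Ivan Petrov", "Maria Gonzalez Lopez", "Acme Shell Holdings Ltd"]


def normalise(name: str) -> str:
    """Strip accents, lowercase, and collapse whitespace before comparing names."""
    decomposed = unicodedata.normalize("NFKD", name)
    without_accents = "".join(c for c in decomposed if not unicodedata.combining(c))
    return " ".join(without_accents.lower().split())


def screen_name(name: str, watchlist: list, threshold: float = 0.85) -> list:
    """Return (entry, score) pairs whose similarity meets the illustrative threshold."""
    target = normalise(name)
    hits = []
    for entry in watchlist:
        score = SequenceMatcher(None, target, normalise(entry)).ratio()
        if score >= threshold:
            hits.append((entry, round(score, 3)))
    return sorted(hits, key=lambda hit: hit[1], reverse=True)


print(screen_name("Iván Petrov", WATCHLIST))  # [('Ivan Petrov', 1.0)]
print(screen_name("Ivan Petrof", WATCHLIST))  # [('Ivan Petrov', 0.909)]
print(screen_name("Jane Smith", WATCHLIST))   # []
```

The near-miss case is the point: a one-letter spelling variant still clears the threshold, which an exact-match lookup would miss.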

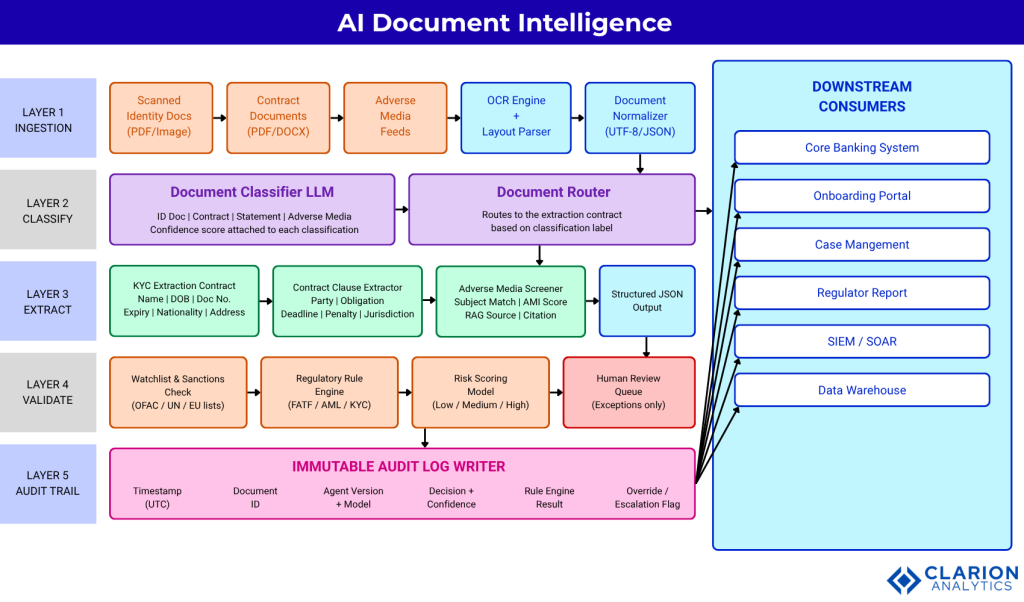

Breaking that down into practical steps matters for CXOs evaluating build-versus-buy decisions. The pipeline has five distinct functional layers:

- Layer 1: Document ingestion via OCR and layout parsing. Raw files (scanned PDFs, images, DOCX) are normalised into machine-readable JSON.

- Layer 2: Classification. An LLM identifies the document type (identity document, contract, bank statement, adverse media article) and assigns a confidence score.

- Layer 3: Structured extraction. A typed extraction contract pulls specific fields (name, date of birth, document number, jurisdiction, obligation) depending on document type.

- Layer 4: Validation. Extracted data is checked against sanctions lists, AML rule engines, and risk-scoring models. Exceptions are routed to a human review queue.

- Layer 5: Audit trail. Every agent action, confidence score, rule engine result, and human override is written to an immutable log with a UTC timestamp and model version.
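The five layers above can be sketched as a chain of functions that each append an event to a shared audit list. This is a minimal, runnable skeleton under stated assumptions: every layer is a hypothetical stand-in (keyword rules instead of an LLM, "Key: Value" parsing instead of OCR), `MODEL_VERSION` is an invented tag, and the in-memory list is not immutable storage.

```python
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone

MODEL_VERSION = "kyc-pipeline-sketch-0.1"  # hypothetical version tag


@dataclass
class PipelineResult:
    doc_type: str
    confidence: float
    fields: dict
    exceptions: list
    route: str
    audit: list = field(default_factory=list)


def log_event(audit: list, layer: str, **details) -> None:
    """Layer 5 stand-in: append a UTC-timestamped, model-versioned event.
    A plain list is NOT immutable; production systems need append-only storage."""
    audit.append({"ts": datetime.now(timezone.utc).isoformat(),
                  "layer": layer, "model_version": MODEL_VERSION, **details})


def ingest(raw_text: str) -> list:
    """Layer 1 stand-in: a real system would run OCR and layout parsing here."""
    return [line.strip() for line in raw_text.splitlines() if line.strip()]


def classify(lines: list) -> tuple:
    """Layer 2 stand-in: a keyword rule in place of an LLM classifier."""
    if any("passport" in line.lower() for line in lines):
        return "identity_document", 0.95
    return "unknown", 0.30


def extract(lines: list) -> dict:
    """Layer 3 stand-in: parse 'Key: Value' lines into snake_case fields."""
    extracted = {}
    for line in lines:
        if ":" in line:
            key, value = line.split(":", 1)
            extracted[key.strip().lower().replace(" ", "_")] = value.strip()
    return extracted


def validate(extracted: dict, watchlist: set) -> list:
    """Layer 4 stand-in: required-field checks plus exact-match screening."""
    exceptions = [f"missing:{name}" for name in ("name", "document_number") if name not in extracted]
    if extracted.get("name", "").lower() in watchlist:
        exceptions.append("watchlist_match")
    return exceptions


def run_pipeline(raw_text: str, watchlist: set, threshold: float = 0.9) -> PipelineResult:
    audit = []
    lines = ingest(raw_text)
    log_event(audit, "ingestion", line_count=len(lines))
    doc_type, confidence = classify(lines)
    log_event(audit, "classification", doc_type=doc_type, confidence=confidence)
    extracted = extract(lines)
    log_event(audit, "extraction", field_names=sorted(extracted))
    exceptions = validate(extracted, watchlist)
    log_event(audit, "validation", exceptions=exceptions)
    # Straight-through only when confident and clean; everything else goes to a person.
    route = "auto_approve" if confidence >= threshold and not exceptions else "human_review"
    log_event(audit, "decision", route=route)
    return PipelineResult(doc_type, confidence, extracted, exceptions, route, audit)


sample = "PASSPORT\nName: Jane Smith\nDocument Number: X1234567\nDate of Birth: 1990-01-31"
result = run_pipeline(sample, watchlist={"ivan petrov"})
print(result.route)  # auto_approve
print(json.dumps(result.audit[-1]))
```

Each layer can be swapped for a real component without changing the orchestration, which is what keeps the audit record consistent as the stack evolves.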

Architecture Diagram

Fig. 1: Five-layer AI KYC document intelligence pipeline. Raw documents enter at Layer 1 via OCR and layout parsing; an LLM classifies and routes them at Layer 2; typed contracts extract structured data at Layer 3; the extracted data is validated against sanctions lists and rule engines at Layer 4; and every agent decision is written to an immutable audit log at Layer 5 before downstream systems receive the result. Human review is triggered only for high-risk exceptions flagged by the risk-scoring model.

Three Real-World Use Cases Driving Adoption

The three highest-value KYC automation use cases are identity document verification at onboarding, ongoing contract clause extraction for periodic review, and adverse media screening. Documented deployments report reductions in manual review hours of 50 to 90 percent across these use cases.

Use Case 1: Onboarding Identity Verification

A large Dutch financial institution reported a 90 percent reduction in KYC onboarding time after deploying a combination of AI innovations, while staff workload dropped by 30 percent. HSBC achieved a comparable result, compressing certain KYC procedures from 12 days to under 24 hours while accuracy improved from 87 to 99 percent.
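One deterministic check that commonly sits alongside AI extraction at onboarding is validating the check digits in a passport's machine-readable zone (MRZ), which catches OCR misreads of the document number or dates before any watchlist step runs. The sketch below implements the ICAO Doc 9303 check-digit rule (weights 7, 3, 1 repeating; digits keep their value, letters A to Z count as 10 to 35, the filler `<` counts as 0; sum modulo 10). The sample values are taken from the ICAO specimen passport; the function names are illustrative.

```python
WEIGHTS = (7, 3, 1)


def mrz_char_value(ch: str) -> int:
    """Numeric value of a single MRZ character under ICAO Doc 9303."""
    if ch in "0123456789":
        return int(ch)
    if "A" <= ch <= "Z":
        return ord(ch) - ord("A") + 10
    if ch == "<":
        return 0
    raise ValueError(f"invalid MRZ character: {ch!r}")


def mrz_check_digit(field: str) -> int:
    """Weighted sum (7, 3, 1 repeating) of character values, modulo 10."""
    return sum(mrz_char_value(ch) * WEIGHTS[i % 3] for i, ch in enumerate(field)) % 10


def verify_mrz_field(field: str, check: str) -> bool:
    """True if the printed check digit matches the one recomputed from the field."""
    return len(check) == 1 and check in "0123456789" and mrz_check_digit(field) == int(check)


print(verify_mrz_field("L898902C3", "6"))  # True: document number
print(verify_mrz_field("740812", "2"))     # True: date of birth (YYMMDD)
print(verify_mrz_field("L898902C8", "6"))  # False: simulated OCR misread of "3" as "8"
```

A failed check digit is a cheap, explainable reason to route a file to human review, and it belongs in the audit log alongside the model's confidence score.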

Use Case 2: Periodic Contract Review and Clause Extraction

Compliance teams performing ongoing customer due diligence must re-review contracts, term sheets, and facility agreements on a periodic cycle. LLM-based clause extraction frameworks pull parties, obligations, deadlines, and penalty clauses into structured records automatically. PwC (2025) reports that AI tools can automatically generate compliance documentation, compare outputs under different conditions, and flag anomalies for model risk review.
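Turning LLM output into structured records is only safe if the output is validated before it is stored. A minimal sketch, assuming a hypothetical prompt that asks the model to reply with a JSON array of clauses; the `ClauseRecord` fields and the clause taxonomy are illustrative, not a standard schema. Malformed clauses are collected as errors for a reviewer instead of being silently repaired.

```python
import json
from dataclasses import dataclass
from datetime import date
from typing import Optional, Tuple

# Hypothetical clause taxonomy; a real one would come from the compliance team.
ALLOWED_TYPES = {"obligation", "deadline", "penalty", "termination"}


@dataclass(frozen=True)
class ClauseRecord:
    clause_type: str
    parties: Tuple[str, ...]
    text: str
    deadline: Optional[date] = None


def parse_clauses(llm_output: str):
    """Validate an LLM's JSON reply into typed records; collect errors rather than guess."""
    try:
        items = json.loads(llm_output)
    except json.JSONDecodeError as exc:
        return [], [f"invalid JSON: {exc.msg}"]
    if not isinstance(items, list):
        return [], ["expected a JSON array of clauses"]
    records, errors = [], []
    for i, item in enumerate(items):
        try:
            clause_type = item["clause_type"]
            if clause_type not in ALLOWED_TYPES:
                raise ValueError(f"unknown clause_type {clause_type!r}")
            parties = tuple(item["parties"])
            if not parties:
                raise ValueError("no parties named")
            raw_deadline = item.get("deadline")
            deadline = date.fromisoformat(raw_deadline) if raw_deadline else None
            records.append(ClauseRecord(clause_type, parties, item["text"], deadline))
        except (KeyError, TypeError, ValueError) as exc:
            errors.append(f"clause {i}: {exc}")
    return records, errors


reply = json.dumps([
    {"clause_type": "obligation", "parties": ["Borrower"],
     "text": "Deliver audited accounts within 120 days of year end.", "deadline": "2025-04-30"},
    {"clause_type": "penalty", "parties": [], "text": "Default interest of 2% per annum."},
])
records, errors = parse_clauses(reply)
print(len(records), errors)  # 1 ['clause 1: no parties named']
```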

In practice, teams using this find that the biggest initial win is not accuracy. It is coverage. Reviewers finally see every clause in every contract, not just the ones they had time to read.

Use Case 3: Adverse Media Screening with Agentic RAG

Traditional keyword-based adverse media searches generate high false-positive rates and demand extensive manual review. Chernakov, Jafarnejad, and Frank (University of Luxembourg, 2026) present a peer-reviewed agentic LLM system that uses Retrieval-Augmented Generation (RAG) to search the web, retrieve and process relevant documents, and compute an Adverse Media Index (AMI) score for each subject. The authors report that this multi-step approach produces substantially fewer false positives than keyword-only methods.
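To show why a multi-step approach can cut false positives, here is a hypothetical aggregation step. It is explicitly NOT the AMI formula from the cited paper: it assumes an earlier LLM step has already scored each retrieved article for entity match (is this really our subject?) and severity, then discards low-match articles, such as namesakes a keyword search would keep, before averaging. The field names, 0 to 100 scale, and 0.8 cut-off are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class ArticleAssessment:
    url: str
    entity_match: float  # 0-1: is the article about this subject? (hypothetical LLM judgement)
    severity: float      # 0-1: seriousness of the allegation (hypothetical LLM judgement)


def adverse_media_index(assessments, min_match: float = 0.8) -> float:
    """Hypothetical 0-100 index: match-weighted mean severity of on-subject articles.
    This is an illustration, not the AMI definition from the cited paper."""
    relevant = [a for a in assessments if a.entity_match >= min_match]
    if not relevant:
        return 0.0
    total_weight = sum(a.entity_match for a in relevant)
    weighted = sum(a.entity_match * a.severity for a in relevant)
    return round(100 * weighted / total_weight, 1)


articles = [
    ArticleAssessment("https://example.com/a", entity_match=0.95, severity=0.8),
    ArticleAssessment("https://example.com/b", entity_match=0.30, severity=1.0),  # namesake, filtered out
    ArticleAssessment("https://example.com/c", entity_match=0.90, severity=0.2),
]
print(adverse_media_index(articles))                 # 50.8
print(adverse_media_index(articles, min_match=0.0))  # 57.7: the namesake inflates the score
```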

Code Example 1: Defining a KYC Extraction Contract

Source: enoch3712/ExtractThinker, README.md

This snippet defines a Pydantic-typed KYC extraction contract with five fields. ExtractThinker combines a Tesseract OCR document loader with an LLM from a choice of providers to extract structured data from a scanned document image in a single function call. The typed contract makes the output auditable by design: every extracted field has a name and a type constraint, and can be diff-compared against a previous version of the same document.
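The ExtractThinker snippet itself is not reproduced here. As a stand-in that runs with the standard library alone, the sketch below mirrors the two properties described above: named, typed fields, and a field-by-field diff between versions of the same document. The five field names and the `from_raw` coercion are hypothetical, and a frozen dataclass takes the place of a Pydantic model.

```python
from dataclasses import dataclass, fields
from datetime import date


@dataclass(frozen=True)
class KYCIdentityContract:
    """Hypothetical five-field contract; a dataclass stands in for a Pydantic model."""
    name: str
    date_of_birth: date
    document_number: str
    nationality: str
    expiry_date: date

    @classmethod
    def from_raw(cls, raw: dict) -> "KYCIdentityContract":
        """Coerce raw extractor output into typed fields; bad dates raise ValueError."""
        return cls(
            name=str(raw["name"]).strip(),
            date_of_birth=date.fromisoformat(raw["date_of_birth"]),
            document_number=str(raw["document_number"]).strip().upper(),
            nationality=str(raw["nationality"]).strip().upper(),
            expiry_date=date.fromisoformat(raw["expiry_date"]),
        )


def diff_contracts(old: KYCIdentityContract, new: KYCIdentityContract) -> dict:
    """Map each changed field name to its (old, new) values."""
    return {
        f.name: (getattr(old, f.name), getattr(new, f.name))
        for f in fields(old)
        if getattr(old, f.name) != getattr(new, f.name)
    }


raw_2024 = {"name": "Jane Smith", "date_of_birth": "1990-01-31", "document_number": "x1234567",
            "nationality": "nld", "expiry_date": "2030-06-30"}
v1 = KYCIdentityContract.from_raw(raw_2024)
v2 = KYCIdentityContract.from_raw({**raw_2024, "expiry_date": "2035-06-30"})
print(diff_contracts(v1, v2))
# {'expiry_date': (datetime.date(2030, 6, 30), datetime.date(2035, 6, 30))}
```

Rejecting a malformed date at parse time, rather than storing it as free text, is what lets the diff stay meaningful across re-reviews.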

Choosing Your Tools: A Comparison of Approaches

The three main approaches to KYC document automation are rule-based OCR platforms, LLM extraction frameworks, and full agentic orchestration layers. Each suits different budget, explainability, and throughput requirements.

| Approach | Key Strength | Best Used When | Audit Trail Quality |
|---|---|---|---|
| Rule-Based OCR Platform (e.g., ABBYY, AWS Textract) | High speed, low cost, deterministic output | Document formats are standardised and rarely change | Partial: field-level logs only; no decision rationale |
| LLM Extraction Framework (e.g., ExtractThinker, Azure AI Document Intelligence) | Handles variable layouts; extracts semantic meaning, not just text | Documents are heterogeneous or contain unstructured narrative clauses | Strong: confidence scores and model version recorded per extraction |
| Agentic KYC Orchestration (e.g., multi-agent RAG pipeline) | End-to-end automation from ingestion to risk decision with human-in-loop escalation | Full KYC lifecycle automation is required at scale with regulatory oversight | Comprehensive: every agent step, tool call, and override is timestamped and logged |

Code Example 2: Multi-Document Classification Pipeline

Source: ExtractThinker-v2, README.md

This snippet extends the first example with a multi-document classification pipeline. When a customer submits a bundle of KYC documents in unknown order, the Process object loads the file, the splitter segments it into individual documents, and the classifier routes each segment to the correct extraction contract. The chain handles document variability automatically. Every classification decision and extracted field is available for downstream audit log ingestion.
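The split, classify, and route chain can be sketched without any external library. In this hypothetical version, keyword rules stand in for the LLM classifier, the splitter groups consecutive pages that receive the same predicted type, and anything the classifier cannot place goes to a review queue rather than being forced into a contract. All names and rules are illustrative assumptions.

```python
from dataclasses import dataclass

# Hypothetical keyword rules standing in for an LLM classifier.
RULES = {
    "passport": ("passport", "nationality"),
    "bank_statement": ("statement period", "closing balance"),
    "utility_bill": ("amount due", "meter"),
}


@dataclass
class Segment:
    doc_type: str
    pages: list  # page indices within the submitted bundle


def classify_page(text: str) -> str:
    lowered = text.lower()
    for doc_type, keywords in RULES.items():
        if any(keyword in lowered for keyword in keywords):
            return doc_type
    return "unknown"


def split_bundle(pages: list) -> list:
    """Group consecutive pages with the same predicted type into one document."""
    segments = []
    for i, text in enumerate(pages):
        doc_type = classify_page(text)
        if segments and segments[-1].doc_type == doc_type:
            segments[-1].pages.append(i)
        else:
            segments.append(Segment(doc_type, [i]))
    return segments


def route(segments: list, extractors: dict):
    """Send each segment to its extractor; unknown types go to a review queue."""
    results, review_queue = [], []
    for seg in segments:
        extractor = extractors.get(seg.doc_type)
        if extractor is None:
            review_queue.append(seg)
        else:
            results.append((seg.doc_type, extractor(seg)))
    return results, review_queue


pages = ["PASSPORT Nationality: NLD", "Statement period: Jan 2025",
         "Closing balance: 1,200.00", "Handwritten note"]
extractors = {"passport": lambda s: {"pages": s.pages},
              "bank_statement": lambda s: {"pages": s.pages}}
results, queue = route(split_bundle(pages), extractors)
print(results)  # [('passport', {'pages': [0]}), ('bank_statement', {'pages': [1, 2]})]
print(queue)    # [Segment(doc_type='unknown', pages=[3])]
```

One known limitation of this naive splitter: two consecutive documents of the same type (for example, two passports) merge into one segment, which is why real splitters also look for document boundaries.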

“The audit trail is not a feature of AI-powered KYC. It is the product. Every decision must be traceable before a single regulator asks.”

Implementation Guidance: What Teams Building This Actually Find

Teams deploying KYC document AI should plan for three phases: a data quality audit to unify fragmented document stores, a controlled pilot covering one document type, and a governance layer before any at-scale rollout.

In practice, the first obstacle is never the model. It is the data. Documents are stored across five different systems, scanned at inconsistent resolution, and named according to whatever convention each branch office invented in 2008. The data quality audit is not a preprocessing step. It is a strategic project in its own right.

The second phase is a deliberately narrow pilot. Choose one document type (for example, passports) or a single contract template, and instrument it end-to-end before expanding. BCG (2024) reports that institutions adopting AI with specialist teams see up to 60 percent efficiency gains and 40 percent cost reductions in onboarding and compliance. Those gains do not materialise from broad rollouts. They come from tightly scoped implementations with measurable feedback loops.
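A measurable feedback loop needs at least one number to track. A minimal sketch, assuming the pilot team hand-labels a sample of documents: per-field exact-match accuracy of extracted values against those labels. Exact match is deliberately strict; a real evaluation might normalise case, whitespace, or date formats first. The document IDs and values are invented.

```python
from collections import defaultdict


def field_accuracy(predictions: dict, gold: dict) -> dict:
    """Per-field exact-match accuracy against a hand-labelled pilot sample.
    A document missing from `predictions` counts as wrong on every field."""
    correct, total = defaultdict(int), defaultdict(int)
    for doc_id, expected in gold.items():
        predicted = predictions.get(doc_id, {})
        for field_name, value in expected.items():
            total[field_name] += 1
            if predicted.get(field_name) == value:
                correct[field_name] += 1
    return {name: correct[name] / total[name] for name in total}


gold = {
    "p1": {"name": "Jane Smith", "document_number": "X1234567"},
    "p2": {"name": "Ivan Petrov", "document_number": "A7654321"},
    "p3": {"name": "Maria Lopez", "document_number": "B1112223"},
    "p4": {"name": "Tom Jones", "document_number": "C9998887"},
}
predictions = {
    "p1": {"name": "Jane Smith", "document_number": "X1234567"},
    "p2": {"name": "Ivan Petrov", "document_number": "A7654327"},  # one misread digit
    "p3": {"name": "Maria Lopez", "document_number": "B1112223"},
    # p4: extraction failed entirely
}
print(field_accuracy(predictions, gold))  # {'name': 0.75, 'document_number': 0.5}
```

Tracking accuracy per field, rather than per document, shows exactly where OCR tuning or contract changes will pay off.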

The third phase is governance. Before any model processes a live customer document, the team must define: the minimum acceptable confidence threshold for auto-approval, the escalation path when that threshold is not met, and the model versioning scheme that links each extraction result to the exact model weights used. The audit log writer in Layer 5 is the technical implementation of this governance policy.
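Those three governance decisions can be encoded as a small policy object, so that every routing decision is made by the same versioned rules. A minimal sketch: the `GovernancePolicy` class, the three route names, the 0.92 threshold, and the model identifier are all hypothetical values an institution would set for itself.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_APPROVE = "auto_approve"
    HUMAN_REVIEW = "human_review"
    COMPLIANCE_ESCALATION = "compliance_escalation"


@dataclass(frozen=True)
class GovernancePolicy:
    """Hypothetical policy object; every value here is an institution-specific choice."""
    auto_approve_threshold: float  # minimum confidence for straight-through processing
    approved_model_version: str    # pinned identifier, e.g. a hash of the model weights

    def decide(self, confidence: float, sanctions_hit: bool, model_version: str) -> Route:
        # Refuse to act on output from a model version the policy has not approved.
        if model_version != self.approved_model_version:
            raise ValueError(f"unapproved model version {model_version!r}")
        # A sanctions hit always goes to compliance, regardless of confidence.
        if sanctions_hit:
            return Route.COMPLIANCE_ESCALATION
        if confidence >= self.auto_approve_threshold:
            return Route.AUTO_APPROVE
        return Route.HUMAN_REVIEW


policy = GovernancePolicy(auto_approve_threshold=0.92, approved_model_version="extractor-2025-06-a1b2")
print(policy.decide(0.97, sanctions_hit=False, model_version="extractor-2025-06-a1b2"))  # Route.AUTO_APPROVE
print(policy.decide(0.80, sanctions_hit=False, model_version="extractor-2025-06-a1b2"))  # Route.HUMAN_REVIEW
```

Because the policy is frozen, changing the threshold means deploying a new policy version, which can itself be logged.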

A 2025 ACM survey of approximately 300 papers on LLMs in document intelligence reports that RAG and fine-tuning approaches significantly outperform base models on domain-specific extraction tasks in finance, law, and medicine. In practice, teams that fine-tune on their own historical document sets tend to see the most reliable extraction with the fewest hallucinations.

Governance, Explainability, and the Regulatory Expectation

Under the EU AI Act, financial institutions must document model decisions, maintain audit logs, and demonstrate human-in-the-loop escalation for high-risk determinations; the NIST AI Risk Management Framework, which is voluntary, recommends comparable practices for US institutions. A well-architected AI KYC pipeline produces all of this evidence automatically.

PwC’s analysis of AI-powered risk and compliance (2025) describes a practical implementation: build virtual regulators (AI personas informed by an agency’s body of regulations and past decisions) to pressure-test your pipeline before go-live, and build a virtual auditor that generates documentation, compares outputs under different conditions, and flags data drift. These are not optional enhancements. They are the evidence a regulator will request at examination.

KPMG’s Workbench, launched in June 2025, is emblematic of where the market is heading. Built-in logs document every agent-to-agent handoff, maintaining accountability across the entire workflow. Deloitte’s 2025 research on agentic AI in banking reinforces the same conclusion: early adopters achieve up to 50 percent faster processing and significant improvements in audit readiness. The competitive moat is not speed alone. It is the proof of quality that the audit trail provides.

“Regulators do not ask whether you used AI. They ask whether you can prove what the AI decided, when it decided it, and why.”

Frequently Asked Questions

How does AI automate KYC document review in financial services?

AI KYC automation combines OCR for text extraction, LLMs for document classification and field extraction, and rule engines for regulatory validation. An agentic pipeline handles the full workflow from document ingestion to risk decision without a human reviewer, escalating only exceptions that fall below a confidence threshold or trigger a sanctions match. Every step is timestamped and logged for regulatory examination.

What is an automated audit trail and why do regulators require it?

An automated audit trail is an immutable, chronologically ordered log that records every decision made during a document review: which model version processed the document, what fields were extracted, what confidence scores were assigned, which rules were applied, and whether a human override occurred. Regulators require it because examiners must be able to reconstruct how each decision was reached, and manual review rarely leaves a consistent evidence trail. Automated logs make compliance examinations faster and reduce the risk of enforcement actions.
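"Immutable" usually means tamper-evident in practice. A common way to get there is a hash chain: each entry stores the SHA-256 hash of its own contents plus the previous entry's hash, so editing any stored entry breaks verification. The sketch below is a minimal in-memory illustration with a hypothetical class name; on its own it detects tampering but does not prevent it, so production systems pair it with write-once (WORM) storage or an external anchor for the latest hash.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(body: dict) -> str:
    """SHA-256 over a canonical (sorted-key) JSON encoding of an entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class HashChainedAuditLog:
    """Append-only log in which each entry commits to the hash of the previous one."""

    GENESIS = "0" * 64

    def __init__(self):
        self._entries = []

    def append(self, event: dict) -> dict:
        prev_hash = self._entries[-1]["hash"] if self._entries else self.GENESIS
        # JSON round-trip copies the event and rejects non-serialisable values.
        body = {"ts": datetime.now(timezone.utc).isoformat(),
                "event": json.loads(json.dumps(event)),
                "prev_hash": prev_hash}
        entry = {**body, "hash": _digest(body)}
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and link; any edited entry makes this return False."""
        prev_hash = self.GENESIS
        for entry in self._entries:
            body = {k: entry[k] for k in ("ts", "event", "prev_hash")}
            if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True


audit_log = HashChainedAuditLog()
audit_log.append({"layer": "classification", "doc_type": "identity_document",
                  "confidence": 0.95, "model_version": "extractor-2025-06-a1b2"})
audit_log.append({"layer": "decision", "route": "auto_approve"})
print(audit_log.verify())  # True
audit_log._entries[0]["event"]["confidence"] = 0.99  # simulate tampering with a stored entry
print(audit_log.verify())  # False
```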

What is the difference between OCR-based and LLM-based KYC document review?

Template-based OCR systems extract text from fixed positions on standardised document templates. They are fast and cheap but brittle when document layouts vary. LLM-based systems interpret the semantic meaning of a document, not just its layout, so they handle heterogeneous formats, freeform narrative clauses, and multi-page contracts that OCR alone cannot reliably parse. OCR engines typically report confidence only at the character or word level; LLM-based pipelines can additionally attach a confidence score to each classification and extracted field.

How long does it take to implement an AI KYC document pipeline?

A focused pilot covering one document type typically takes six to ten weeks: two to four weeks for data preparation and OCR tuning, two weeks for extraction contract definition and testing, and two to four weeks for governance layer setup and integration with the core banking system. Expanding to additional document types and full agentic orchestration adds three to six months of integration and change management work.

Can AI KYC systems pass regulatory examination in the EU and US?

Yes, if they are architected for explainability from the start. The EU AI Act requires record-keeping, technical documentation, and human oversight for high-risk AI systems. In the US, the voluntary NIST AI Risk Management Framework calls for governance, transparency, and model explainability. A pipeline that records model version, confidence scores, rule engine results, and human overrides in an immutable log provides the evidence both frameworks look for.

The Compliance Imperative Is Also a Competitive One

Three insights define the current moment. First, the scale of the inefficiency is structural: assigning 10 to 15 percent of your workforce to KYC while the industry detects only around 2 percent of financial crime flows is not a compliance posture; it is a liability. Second, the technology for automating that workflow end-to-end is production-ready. Real institutions have achieved 90 percent onboarding time reductions with documented accuracy improvements. Third, the audit trail is not optional. Under the EU AI Act, every institution deploying AI in a high-risk context must be able to prove its decisions, and US frameworks point the same way. A well-designed pipeline generates that proof automatically, turning a compliance cost into a competitive differentiator.

The teams winning this transition are not the ones with the largest AI budgets. They are the ones that started with a narrow pilot, governed it rigorously, and built the audit evidence before the regulator asked for it.

What would your compliance posture look like if your audit trail wrote itself?