DEFINITION. Enterprise AI ROI is the measurable financial and operational return an organisation generates from artificial intelligence investments, expressed as a ratio of quantified benefits (cost reduction, revenue uplift, productivity gain) to total costs (software, talent, data infrastructure, change management, and ongoing governance). Unlike traditional IT ROI, it requires a multi-horizon measurement framework because strategic AI value often compounds over 24 to 36 months, well beyond a standard payback period.

Why Proving AI Value Is Now Finance’s Hardest Problem

Enterprise AI ROI has become the defining test of whether a technology programme belongs in the boardroom or the basement. According to McKinsey’s 2025 State of AI report, 88% of organisations now use AI in at least one business function. Yet only 39% report any impact on enterprise-level EBIT. Adoption is widespread. Proof is scarce.

That gap is not a technology problem. It is a measurement problem. Boards are asking CXOs to justify ballooning AI budgets, and finance teams are reaching for a metric framework that does not quite fit. Traditional payback-period analysis was designed for capital equipment with predictable, linear returns. AI compounds differently, delivers value across multiple functions simultaneously, and carries costs that most business cases routinely understate.

The result is a credibility crisis. Gartner (2024) placed generative AI in the trough of disillusionment on its Hype Cycle, a phase where inflated expectations collide with the demand for real results. CFOs are being told to expect a question on every earnings call: what was the ROI? The organisations that cannot answer that question precisely will find their AI budgets shrinking fast.

“AI has graduated from tech novelty to strategic investment. Like any investment, it must justify itself.”

The good news is that board-ready enterprise AI ROI measurement is achievable. It requires a structured framework, the right set of metrics, honest accounting of total costs, and a narrative that connects technology decisions to business outcomes. This post covers all four.

The Three-Layer ROI Framework Every CFO Needs to See

A board-ready AI ROI framework organises returns into three time-bound layers: operational efficiency (0 to 6 months), productivity compounding (6 to 18 months), and strategic differentiation (18 to 36 months). Each layer uses different metrics and different evidence standards, which prevents cherry-picking and builds credibility with finance teams.

Layer 1: Operational Efficiency (Months 0 to 6)

This layer captures the most measurable, attributable returns. Process automation cost savings, error-rate reductions, and cycle-time improvements are all traceable to specific AI deployments. They require minimal statistical inference and survive CFO scrutiny because they link directly to existing operational KPIs.

Measure: cost per transaction before and after deployment, time-to-resolution in target processes, error or exception rates. These metrics should be established as baselines before the AI goes live, not reconstructed after.
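
As a minimal sketch of that before-and-after comparison, the snippet below computes the three Layer 1 metrics from two measurement windows. The `ProcessSnapshot` class, its field names, and every figure are hypothetical placeholders, not data from any cited survey.

```python
from dataclasses import dataclass


@dataclass
class ProcessSnapshot:
    """Operational KPIs for one measurement window (all figures hypothetical)."""
    total_cost: float      # fully loaded process cost in the window
    transactions: int      # transactions handled in the window
    errors: int            # transactions that needed rework or exception handling
    total_hours: float     # summed time-to-resolution, in hours

    @property
    def cost_per_transaction(self) -> float:
        return self.total_cost / self.transactions

    @property
    def error_rate(self) -> float:
        return self.errors / self.transactions

    @property
    def hours_per_transaction(self) -> float:
        return self.total_hours / self.transactions


def pct_change(before: float, after: float) -> float:
    """Relative change from baseline; a negative value means the metric fell."""
    return (after - before) / before


def layer1_deltas(baseline: ProcessSnapshot, post: ProcessSnapshot) -> dict[str, float]:
    """Compare each Layer 1 metric against its pre-deployment baseline."""
    return {
        "cost_per_transaction": pct_change(baseline.cost_per_transaction, post.cost_per_transaction),
        "error_rate": pct_change(baseline.error_rate, post.error_rate),
        "hours_per_transaction": pct_change(baseline.hours_per_transaction, post.hours_per_transaction),
    }


# Baseline captured before go-live; post-deployment window of the same length.
baseline = ProcessSnapshot(total_cost=200_000, transactions=40_000, errors=1_600, total_hours=20_000)
post = ProcessSnapshot(total_cost=168_000, transactions=42_000, errors=1_050, total_hours=16_800)

deltas = layer1_deltas(baseline, post)
for metric, change in deltas.items():
    print(f"{metric}: {change:+.1%}")
```

Normalising each metric per transaction keeps the comparison fair when volume changes between the two windows.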

Layer 2: Productivity Compounding (Months 6 to 18)

This layer captures the value of reclaimed human time applied to higher-value work. It is harder to quantify but not impossible. According to BCG’s 2024 AI research, AI can help professionals reclaim 26 to 36 percent of their time in routine, data-heavy tasks. The key is measuring what that time produces, not just the hours saved.

Measure: revenue per employee, output volume per team, speed of decision cycles, and employee-reported time allocation surveys. A control group of comparable teams not yet using the AI tool provides the attribution baseline.

Layer 3: Strategic Differentiation (Months 18 to 36)

This layer is where the largest returns live and where most business cases go wrong. Strategic AI value (new products launched faster, customer retention improved through personalisation, market-share gains driven by analytical advantage) does not appear in a 12-month payback calculation. It requires a forward projection with explicit assumptions.

Measure: new-product launch velocity, net promoter score delta attributable to AI-enhanced experiences, and market-share change in targeted segments. Every assumption must be stress-tested. Boards are more likely to approve a conservative projection with clear sensitivity analysis than an optimistic one with no uncertainty range.
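
One simple way to present that uncertainty range is to run the same projection under several benefit assumptions and report the net present value of each. The sketch below assumes a three-year horizon, a 10 percent discount rate, and scenario figures that are purely illustrative; the function and scenario names are hypothetical.

```python
def npv(rate: float, cash_flows: list[float]) -> float:
    """Net present value; cash_flows[0] is the year-0 flow and is not discounted."""
    return sum(cf / (1 + rate) ** year for year, cf in enumerate(cash_flows))


def scenario_npv(investment: float, annual_benefit: float, annual_run_cost: float,
                 years: int, discount_rate: float) -> float:
    """NPV of an upfront investment followed by a constant net annual benefit."""
    flows = [-investment] + [annual_benefit - annual_run_cost] * years
    return npv(discount_rate, flows)


# Hypothetical assumptions: only the annual benefit varies between scenarios.
scenarios = {
    "conservative": 600_000,
    "base": 900_000,
    "optimistic": 1_300_000,
}

results = {
    name: scenario_npv(investment=1_000_000, annual_benefit=benefit,
                       annual_run_cost=300_000, years=3, discount_rate=0.10)
    for name, benefit in scenarios.items()
}

for name, value in results.items():
    print(f"{name:>12}: NPV = {value:,.0f}")
```

With these placeholder inputs the conservative case is NPV-negative while the base case is positive, which is exactly the kind of spread a board needs to see before it approves. A fuller analysis would vary the run cost, the ramp-up period, and the discount rate as well.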

“The companies seeing the strongest AI returns measure three things: what the AI does, what people do differently because of it, and what the business achieves as a result.”

Which AI Use Cases Deliver the Fastest Verified Returns

Not all AI use cases are equal when it comes to ROI speed and measurability. Selecting the right starting point is one of the most consequential decisions in any enterprise AI business case.

Deloitte’s Q4 2024 State of Generative AI in the Enterprise report, surveying 2,773 director-to-C-suite respondents across 14 countries, found that 74% of organisations say their most advanced GenAI initiative is meeting or exceeding ROI expectations. Cybersecurity implementations led all functions, with 44% delivering ROI above expectations. IT operations and finance automation followed closely.

The pattern is consistent: functions with high transaction volume, clear baselines, and binary success criteria produce the most defensible early ROI. Service operations, supply chain, and marketing follow, typically within 12 to 18 months, but require more sophisticated attribution methodology.

| AI Use Case | Key Strength | Best Used When | Typical Time to ROI |
|---|---|---|---|
| Cybersecurity / Threat Detection | Highest rate of ROI exceeding expectations (44%, Deloitte 2024) | High alert volumes with clear SLA baselines | 3 to 9 months |
| IT Operations / AIOps | 42% average reduction in manual monitoring effort (IDC 2024) | IT team has measurable ticket volume and resolution-time KPIs | 6 to 12 months |
| Finance Automation | Clear process baselines; high data quality | Standardised workflows and audit trails exist | 6 to 18 months |
| Supply Chain Optimisation | Up to 67% of respondents report revenue increases (McKinsey 2024) | Inventory data is centralised and demand forecasting is measured | 12 to 24 months |
| Customer Service / Contact Centre | Measurable CSAT and AHT baselines exist | Large ticket volumes with consistent query types allow clean A/B comparison | 6 to 15 months |
| Strategic / Analytics | Highest revenue-uplift potential (70%, McKinsey H2 2024) | Senior leadership owns the use case and controls the data | 18 to 36 months |

In practice, teams building their first board-ready AI business case typically find that selecting one use case per layer (a quick win for Layer 1, a productivity pilot for Layer 2, and a strategic initiative for Layer 3) gives the board both immediate financial proof and a credible long-term trajectory.

The Hidden Costs That Sink AI Business Cases

Most AI business cases fail board scrutiny not because the benefits are overstated, but because the costs are understated. A financially literate board will find the omissions faster than any AI model finds insights.

Academic research by Stange et al. (2024), published on arXiv, identifies what they call the “hidden technical debt” problem. Organisations frequently calculate AI ROI using only initial development and deployment costs, ignoring the substantial ongoing operational expenditure required to keep a model viable. This omission systematically inflates apparent returns.

The Four Cost Categories Most Business Cases Miss

Model maintenance and retraining. Models degrade as data distributions shift. The cost of keeping a production model accurate is often 40 to 60 percent of the initial build cost, recurring annually.

Data quality and governance. AI is only as good as the data it runs on. Most enterprises discover mid-project that data pipelines need significant investment to produce clean, labelled, timely inputs.

Change management and training. MIT Sloan Management Review research found that companies reporting the highest AI ROI invest 70 percent more in change management, workflow redesign, and capability building than lower-performing peers.

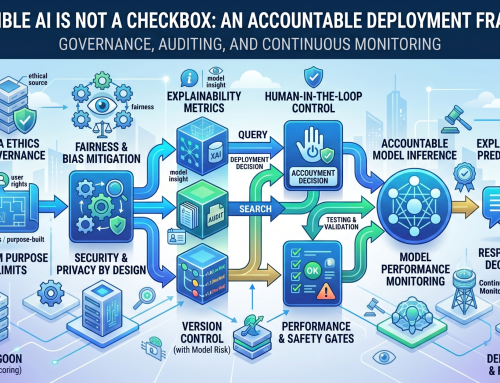

Governance and compliance overhead. Responsible AI governance, model auditing, bias testing, and regulatory compliance are not optional. Including them in the denominator of your ROI calculation is not pessimism. It is accuracy.

The business case that acknowledges these costs and still demonstrates positive ROI will survive a CFO’s review. The one that ignores them will be sent back.
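
To see how much the denominator matters, the sketch below compares ROI computed on build cost alone with ROI computed on a three-year total that includes all four categories. It uses the common net-return convention, (benefits minus costs) divided by costs, which is an assumption about how the ratio is expressed. The 50 percent maintenance rate sits inside the 40 to 60 percent range quoted above; every other figure and the `CostModel` class are hypothetical.

```python
from dataclasses import dataclass


def roi(total_benefits: float, total_costs: float) -> float:
    """Net return per unit of cost: (benefits - costs) / costs."""
    return (total_benefits - total_costs) / total_costs


@dataclass
class CostModel:
    build: float                      # one-off development and deployment cost
    maintenance_rate: float           # annual maintenance/retraining as a share of build
    data_quality_annual: float        # data pipeline and governance spend per year
    change_management_annual: float   # training and workflow redesign per year
    governance_annual: float          # auditing, bias testing, compliance per year

    def naive_total(self) -> float:
        """What an incomplete business case counts: build cost only."""
        return self.build

    def full_total(self, years: int) -> float:
        """Build cost plus all recurring categories over the horizon."""
        recurring = (self.build * self.maintenance_rate
                     + self.data_quality_annual
                     + self.change_management_annual
                     + self.governance_annual)
        return self.build + recurring * years


costs = CostModel(build=1_000_000, maintenance_rate=0.5, data_quality_annual=150_000,
                  change_management_annual=100_000, governance_annual=50_000)
benefits_3y = 4_000_000  # hypothetical cumulative benefit over three years

naive_roi = roi(benefits_3y, costs.naive_total())
full_roi = roi(benefits_3y, costs.full_total(years=3))
print(f"ROI on build cost only: {naive_roi:.0%}")
print(f"ROI on full 3-year cost: {full_roi:.0%}")
```

In this illustration the same benefit stream looks like a 300 percent return against build cost and roughly an 18 percent return against the full cost base.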

“A board-ready business case includes all four cost categories. The one that survives scrutiny is honest about the denominator, not just optimistic about the numerator.”

How to Set the Right Time Horizon for Board Approval

Boards approve AI investments most reliably when the business case presents a 90-day proof point, a 12-month payback milestone, and a 3-year value trajectory. These three horizons satisfy both short-term financial discipline and long-term strategic ambition simultaneously.

The 90-day proof point is not a full ROI calculation. It is a measurable operational change that demonstrates the AI is working as designed. This could be a 30 percent reduction in claims processing time, a halving of false-positive security alerts, or a 15-point improvement in first-call resolution rate. The number is not the point. The verifiability is.

BCG’s Center for CFO Excellence survey (March 2025), covering over 280 finance executives, found that median reported AI ROI in the finance function is just 10 percent, well below the 20 percent that most CFOs target. The teams generating the strongest returns focus on value from the start rather than learning for learning’s sake.

For the 3-year trajectory, McKinsey’s CxO survey (2024) found that 51 percent of executives anticipate AI delivering a revenue increase of more than 5 percent, with 17 percent expecting increases greater than 10 percent. These projections need to be tied to named business outcomes, not technology capability statements, to carry weight in a board presentation.
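
The 12-month payback milestone can be checked with a cumulative cash-flow calculation. The sketch below is illustrative only: the `payback_month` helper, the investment figure, and the monthly ramp are all hypothetical.

```python
from itertools import accumulate
from typing import Optional


def payback_month(investment: float, monthly_net_benefits: list[float]) -> Optional[int]:
    """Return the first month (1-indexed) in which cumulative net benefit
    covers the investment, or None if it never does within the series."""
    for month, cumulative in enumerate(accumulate(monthly_net_benefits), start=1):
        if cumulative >= investment:
            return month
    return None


# Hypothetical ramp: benefits build over the first quarter, then hold steady.
monthly_benefits = [20_000, 40_000, 60_000] + [60_000] * 21  # 24 months in total

month = payback_month(600_000, monthly_benefits)
print(f"Payback reached in month {month}")
```

Modelling the ramp explicitly matters because a flat monthly average would overstate early benefits and pull the payback date forward.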

Building the Measurement Infrastructure Before You Present

You cannot report AI ROI you never measured. Before any board presentation, organisations need baseline data, a control-group methodology for attribution, and an experiment-tracking platform that produces audit-ready logs of model performance and business-outcome changes.

Gartner’s 2025 AI Maturity survey found that 63 percent of leaders from high-maturity organisations run financial analysis on risk factors, conduct formal ROI analysis, and concretely measure customer impact. This discipline is built into the deployment design from day one.

Three Infrastructure Requirements

Baseline data capture. Before deploying any AI, measure the process you intend to improve at a granular level: ticket volume, resolution time, error rate, and cost per unit. You cannot demonstrate improvement without a pre-deployment benchmark.

Experiment tracking. Open-source platforms such as MLflow provide audit-ready logs of model versions, performance metrics, and deployment history. This level of documentation is what separates an anecdote from a board-ready evidence package.

Attribution methodology. The cleanest approach is a randomised controlled experiment: deploy the AI to one team or geography and hold another back as a control group. Where this is not feasible, a difference-in-differences statistical approach can isolate AI’s contribution from concurrent business changes.
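
The difference-in-differences idea can be sketched in a few lines: take the change in the AI-enabled group and subtract the change in the control group over the same period. The function name and the sample data (average resolution time in hours per team) are hypothetical. The estimate also rests on the standard assumption that both groups would have followed parallel trends without the AI.

```python
from statistics import mean


def difference_in_differences(treated_pre: list[float], treated_post: list[float],
                              control_pre: list[float], control_post: list[float]) -> float:
    """Change in the treated group minus change in the control group."""
    treated_change = mean(treated_post) - mean(treated_pre)
    control_change = mean(control_post) - mean(control_pre)
    return treated_change - control_change


# Hypothetical average resolution times (hours) for four teams in each group.
treated_pre = [10, 12, 11, 11]    # AI teams, before deployment
treated_post = [8, 7, 9, 8]       # AI teams, after deployment
control_pre = [10, 11, 12, 11]    # comparison teams, same "before" window
control_post = [10, 10, 11, 9]    # comparison teams, same "after" window

effect = difference_in_differences(treated_pre, treated_post, control_pre, control_post)
print(f"Estimated AI effect on resolution time: {effect:+.1f} hours")
```

Here the AI teams improved by three hours, but the control teams also improved by one hour without the tool, so only two hours of the gain is attributed to the AI. A production analysis would normally add a regression with standard errors rather than rely on a difference of means.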

PwC’s AI benchmarking framework (2025) recommends a monthly “scale or stop” review: only projects with measured movement on a defined business metric receive additional funding. This governance cadence keeps the measurement infrastructure active and gives finance teams the regular evidence they need to maintain board confidence.
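
A review rule of this kind can be made mechanical. The sketch below is one possible encoding, not PwC's method: the `ProjectReview` fields, the project names, and the 5 percent materiality threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ProjectReview:
    name: str
    baseline: float         # metric value before deployment
    current: float          # metric value at this month's review
    lower_is_better: bool   # True for cost or cycle time, False for revenue or CSAT


def improvement(review: ProjectReview) -> float:
    """Relative improvement versus baseline; positive means the metric moved the right way."""
    change = (review.current - review.baseline) / review.baseline
    return -change if review.lower_is_better else change


def scale_or_stop(review: ProjectReview, min_improvement: float = 0.05) -> str:
    """Fund only projects whose defined metric has moved by at least the threshold.
    The default threshold is an illustrative assumption, not a published figure."""
    return "scale" if improvement(review) >= min_improvement else "stop"


reviews = [
    ProjectReview("claims-triage", baseline=12.50, current=10.00, lower_is_better=True),
    ProjectReview("sales-copilot", baseline=400_000, current=404_000, lower_is_better=False),
]

decisions = {review.name: scale_or_stop(review) for review in reviews}
for name, decision in decisions.items():
    print(f"{name}: {decision}")
```

Agreeing the threshold with finance before the first review keeps the monthly decision from becoming a negotiation.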

From Metrics to Narrative: Making the Board Presentation Land

Metrics are necessary but not sufficient. A board presentation that opens with model accuracy statistics and closes with an NPV projection will lose the room before it gets to the recommendation. The narrative structure matters as much as the numbers.

A compelling board AI narrative connects three elements in sequence: a dollar-denominated baseline problem, a use-case intervention with measured output, and a forward projection tied to a named strategic objective.

PwC’s 2026 AI Predictions report captures the mindset shift required: there is little patience for exploratory AI investments. Each dollar spent should fuel measurable outcomes that accelerate business value. That is the standard a board-ready AI narrative must meet.

Three practical rules for building the presentation:

Lead with the business outcome, not the technology. “We reduced claims processing cost by 28 percent” lands better than “We deployed a transformer-based NLP model.”

Quantify the counterfactual. What does it cost to do nothing? The cost of inaction is as important to the business case as the cost of the investment.

Show the governance guardrails. Boards are increasingly aware of AI liability. Demonstrating responsible-AI controls, bias testing protocols, and a model-monitoring framework builds the trust needed to approve continued investment.

“The board is not asking whether AI works. It is asking whether your organisation knows how to make it work for your numbers.”

Frequently Asked Questions About Enterprise AI ROI

How long does it take to see ROI from an enterprise AI investment?

Most organisations see measurable operational efficiency gains within 3 to 9 months for well-scoped use cases. According to MIT Sloan Management Review (2024), the average time to substantial enterprise-level ROI is 18 to 24 months. Cybersecurity and IT operations consistently deliver the fastest payback, while strategic and analytical functions typically require 18 to 36 months.

What metrics should I use to justify AI investment to the board?

Use a three-layer framework. Layer 1 (months 0 to 6): cost per transaction, error rates, cycle time. Layer 2 (months 6 to 18): revenue per employee, output volume, decision speed. Layer 3 (months 18 to 36): new-product velocity, customer retention delta, and market-share change. Each layer requires pre-deployment baselines to make attribution defensible.

How do I separate AI’s contribution from other business changes happening at the same time?

The cleanest method is a randomised controlled experiment: deploy AI to one group and hold another as a control. Where that is impractical, use difference-in-differences analysis, comparing the target group’s performance trajectory against a statistically matched peer group before and after deployment. Document the methodology explicitly in your board materials.

Why do so many AI pilots fail to show business value?

BCG research shows only 4 percent of companies have achieved enterprise-wide AI at scale. Most pilots fail because they optimise for a single use case in isolation, ignore data-quality investment, exclude change-management costs, and measure too early. Pilots succeed when they target high-volume functions with existing KPI baselines and are designed for measurement from day one.

What is a realistic ROI target to promise the board for a first AI initiative?

For a well-scoped Layer 1 use case, a 15 to 30 percent cost reduction in the targeted process within 12 months is defensible based on Deloitte and McKinsey benchmarks. BCG’s survey of finance executives found a median AI ROI of 10 percent in the finance function, against the 20 percent that most CFOs target. Set expectations conservatively in the first year, demonstrate credibly, and use that foundation to justify higher-ambition investments in year two.

The Three Things That Separate AI Leaders from the Pack

Enterprise AI ROI is measurable, but only for organisations willing to measure it properly. The gap between the 39 percent of organisations reporting EBIT-level impact and the rest is not a technology gap. It is a discipline gap.

Three disciplines separate the leaders. First, measurement infrastructure built before deployment, not bolted on afterwards. Second, total cost honesty, including model maintenance, data quality, change management, and governance in the denominator. Third, a board narrative structured around business outcomes, not technology capabilities.

The AI budget conversation is no longer about whether to invest. It is about proving the investment was worth it. The organisations that have already answered that question are widening their lead every quarter.

The board is not asking whether AI works. It is asking whether your organisation knows how to make it work for your numbers. The answer has to be yes before you walk into the room.