Definition: Agentic AI refers to systems where one or more AI models perceive their environment, plan a sequence of actions, invoke tools, and execute multi-step workflows autonomously to achieve a defined goal with minimal human intervention at each step. Unlike prompt-response LLMs, agentic systems maintain state, recover from errors, and delegate sub-tasks to specialized agents working in coordinated pipelines.

From Copilots to Autonomous Systems: Why 2026 Is the Inflection Point

Agentic AI trends in 2026 mark the shift from assistive copilots to autonomous systems that plan, act, and iterate across complex multi-step workflows without a human confirming every step.

The numbers are decisive. According to Gartner (2025), 40% of enterprise applications will embed task-specific AI agents by end of 2026, up from under 5% in 2025. That is not gradual adoption; it is a step-change in how enterprise software is architected.

Agentic AI is the operating principle behind that jump. Where a copilot waits for you to ask a question, an agent accepts a goal and figures out the steps. It searches, reasons, calls APIs, writes code, validates its own output, and escalates when it hits a decision it cannot make autonomously. The result is qualitatively different from anything previous generations of automation could deliver.

McKinsey’s State of AI survey (2025) found that 23% of organizations are already scaling an agentic AI system somewhere in their enterprise, with high performers three times more likely to have fundamentally redesigned workflows around agents than their peers. The gap between the leaders and the laggards is widening faster than most executive teams realize.

“The question in 2026 is not whether your stack needs agents. It is whether your team can move agents from pilot into production before your competitors do.”

Trend 1: Multi-Agent Orchestration Becomes the Default Architecture

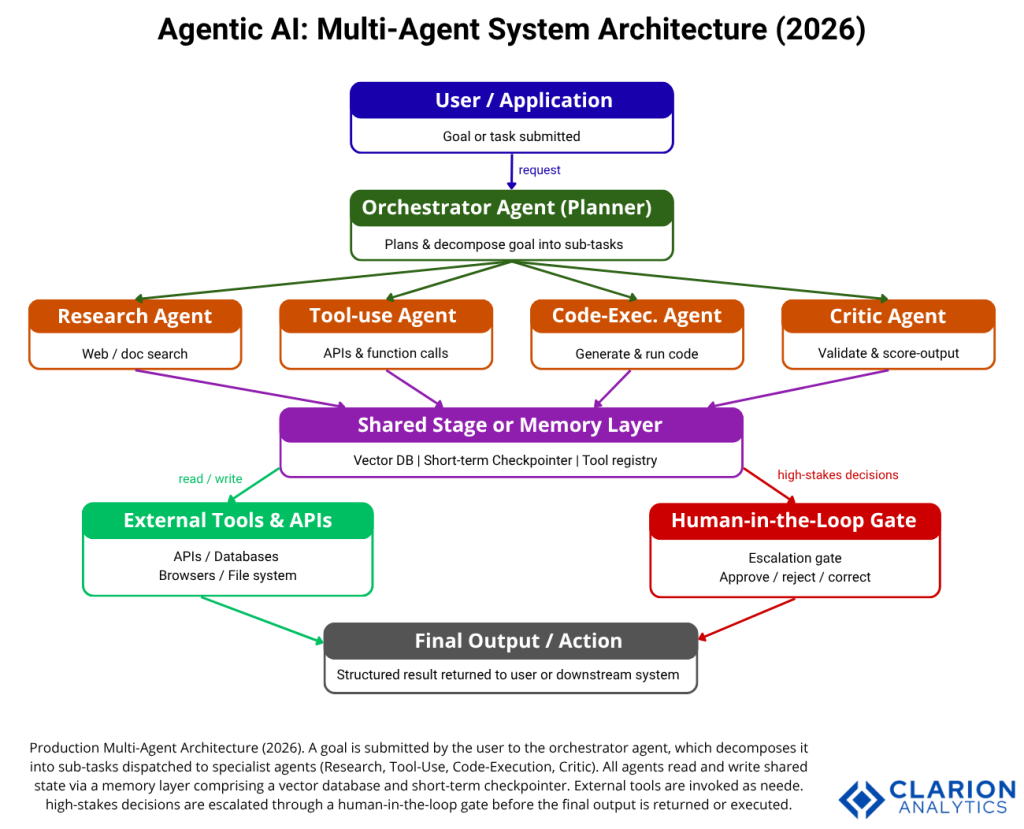

Multi-agent orchestration is replacing single-agent copilots because specialized agents working in coordinated pipelines deliver higher and more consistent output quality. One controlled study reports an 80x improvement in action specificity versus single-agent approaches.

The empirical case is getting hard to ignore. A 2025 arXiv study by Philip Drammeh ran 348 controlled trials comparing single-agent LLMs against multi-agent orchestration for incident response. Single agents produced actionable recommendations 1.7% of the time. Multi-agent systems produced actionable recommendations 100% of the time, with zero quality variance across trials. That is not a marginal improvement; it is the difference between a proof of concept and a production system.

The architecture behind this result is straightforward: a planner-orchestrator decomposes an incoming goal into sub-tasks and dispatches them to specialist sub-agents. One agent researches. Another writes code. A third runs the code. A fourth critiques the output. The orchestrator collects results and decides the next step. No single agent carries the cognitive load of the entire workflow.
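The dispatch loop described above can be sketched in a few lines of plain Python. This is an illustration only, not code from any framework: the `Orchestrator` class, the fixed four-step plan, and the four stand-in functions are all hypothetical, and each "agent" is an ordinary function where a real system would wrap a model call. A production planner would also choose the next step from intermediate results instead of following a fixed list.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Each "agent" is a plain function here; a real one would wrap a model call.
Agent = Callable[[str], str]


@dataclass
class Orchestrator:
    agents: Dict[str, Agent]
    history: List[Tuple[str, str]] = field(default_factory=list)

    def plan(self, goal: str) -> List[str]:
        # Assumption: a fixed plan. A real planner would decompose the goal dynamically.
        return ["research", "code", "run", "critique"]

    def run(self, goal: str) -> str:
        current = goal
        for role in self.plan(goal):
            current = self.agents[role](current)   # dispatch to the specialist
            self.history.append((role, current))   # collect its result
        return current


# Hypothetical stand-ins for the four specialists.
def researcher(task: str) -> str:
    return f"notes({task})"


def coder(notes: str) -> str:
    return f"code({notes})"


def runner(code: str) -> str:
    return f"ran({code})"


def critic(run_output: str) -> str:
    return f"approved({run_output})"


def build_demo_orchestrator() -> Orchestrator:
    return Orchestrator(agents={
        "research": researcher,
        "code": coder,
        "run": runner,
        "critique": critic,
    })
```

Calling `build_demo_orchestrator().run("fix login bug")` passes the goal through each specialist in turn and leaves a role-by-role trace in `history`, which is the piece you would later persist for auditing.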

A comprehensive 2025 survey by Tran et al. on multi-agent collaboration mechanisms identifies three structural patterns dominating production deployments: peer-to-peer (agents negotiate directly), centralized (an orchestrator routes all tasks), and distributed (agents self-select tasks from a shared queue). The centralized pattern currently offers the best balance of control, debuggability, and throughput for enterprise deployments.

Figure 1: A production multi-agent architecture. The orchestrator decomposes a goal, dispatches to specialist agents, persists state in a shared memory layer, escalates high-stakes decisions to a human gate, and delivers a final structured output.

Trend 2: The Model Context Protocol Standardizes the Tool Layer

MCP is an open standard that lets agents discover and invoke tools, APIs, and data sources through a unified interface, ending the era of one-off custom integrations for every agent-tool pair and enabling portable, composable agent pipelines.

Every agent needs tools. Without a standard way to describe and invoke them, every engineering team builds its own integration layer. That approach does not scale past three or four tools, and it makes agents non-portable across infrastructure. The Model Context Protocol (MCP) solves this by defining a universal contract between agents and tools, analogous to what REST did for web APIs.

By 2026, MCP adoption has become a first-class concern for framework builders. CrewAI has added A2A (Agent-to-Agent) protocol support alongside MCP. Google’s Agent Development Kit (ADK) treats MCP as native infrastructure. Organizations standardizing on MCP today are building pipelines that will compose with any future agent or tool without a rewrite.

In practice, teams adopting MCP find the biggest win is not the first integration; it is the second and third. Once you have a structured tool registry, adding a new data source or external API becomes a configuration change, not a development sprint.
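To make the "structured tool registry" idea concrete, here is a minimal sketch in plain Python. It is not the MCP wire protocol or any MCP SDK; `ToolSpec`, `ToolRegistry`, `list_tools`, and `call_tool` are hypothetical names chosen for illustration, and the schema is reduced to a simple name-to-type mapping. The point it shows is the separation between describing a tool and invoking it, which is what makes adding the second and third tool cheap.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List


@dataclass
class ToolSpec:
    name: str
    description: str
    input_schema: Dict[str, str]    # parameter name -> type name (simplified)
    handler: Callable[..., Any]     # the function that does the work


class ToolRegistry:
    def __init__(self) -> None:
        self._tools: Dict[str, ToolSpec] = {}

    def register(self, spec: ToolSpec) -> None:
        if spec.name in self._tools:
            raise ValueError(f"tool already registered: {spec.name}")
        self._tools[spec.name] = spec

    def list_tools(self) -> List[Dict[str, Any]]:
        # Discovery: expose descriptions and schemas only, never the handler.
        return [
            {"name": t.name, "description": t.description, "input_schema": t.input_schema}
            for t in self._tools.values()
        ]

    def call_tool(self, name: str, arguments: Dict[str, Any]) -> Any:
        spec = self._tools[name]    # raises KeyError for an unknown tool
        missing = set(spec.input_schema) - set(arguments)
        if missing:
            raise ValueError(f"missing arguments for {name}: {sorted(missing)}")
        return spec.handler(**arguments)
```

With this shape, wiring in a new data source is one `register(...)` call with a description and a handler; the agent-facing interface (`list_tools`, `call_tool`) does not change.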

Trend 3: Bounded Autonomy and Governance Frameworks Become Non-Negotiable

Bounded autonomy architectures set explicit operational limits, define escalation paths to humans for high-stakes decisions, and maintain comprehensive audit trails. Together these form the governance layer that transforms an experimental agent into a production-grade system.

Here is the uncomfortable truth: Gartner predicts that over 40% of agentic AI projects will be cancelled by end of 2027, citing escalating costs, unclear ROI, and inadequate risk controls. The failure pattern is consistent: teams deploy agents fast, governance structures lag behind, something goes wrong that an audit trail would have caught, and the project is cancelled.

Deloitte’s Tech Trends 2026 report confirms the scale of this problem: only 14% of organizations have production-ready agentic solutions, while 42% are still building a strategy roadmap. The gap between ambition and deployment is not a technology problem. It is a governance design problem.

“Governance is not overhead in 2026. It is the mechanism that earns organizational trust to deploy agents in higher-value, higher-stakes scenarios.”

Leading organizations are implementing bounded autonomy: agents operate within defined action boundaries, escalate to humans when a decision falls below a confidence threshold or exceeds a dollar value, and log every action with its reasoning for post-hoc auditing. Organizations building this infrastructure now create a virtuous cycle: more trust leads to more deployment, which leads to more data, which leads to better agents.
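A bounded-autonomy check can be small. The sketch below is one possible shape, under stated assumptions: the `Action` and `BoundedExecutor` names, the three verdicts (`allow`, `escalate`, `deny`), and the idea that the agent reports its own confidence score are all illustrative choices, not a standard. It shows the three elements named above: an action boundary, an escalation rule, and an audit record written for every decision.

```python
import json
import time
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class Action:
    kind: str           # e.g. "refund" or "send_email"
    cost_usd: float
    confidence: float   # assumption: agent-reported score in [0, 1]
    reasoning: str


@dataclass
class BoundedExecutor:
    allowed_kinds: Set[str]
    max_cost_usd: float
    min_confidence: float
    audit_log: List[str] = field(default_factory=list)

    def decide(self, action: Action) -> str:
        if action.kind not in self.allowed_kinds:
            verdict = "deny"        # outside the action boundary
        elif action.cost_usd > self.max_cost_usd or action.confidence < self.min_confidence:
            verdict = "escalate"    # hand the decision to a human
        else:
            verdict = "allow"
        # Every decision is logged with its reasoning, whatever the verdict.
        self.audit_log.append(json.dumps({
            "ts": time.time(),
            "kind": action.kind,
            "cost_usd": action.cost_usd,
            "confidence": action.confidence,
            "verdict": verdict,
            "reasoning": action.reasoning,
        }))
        return verdict
```

Logging denied and escalated actions alongside allowed ones is deliberate: the audit trail is most useful for exactly the cases where the agent was stopped.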

Trend 4: The Framework Decision – LangGraph, CrewAI, and AutoGen

LangGraph is the production choice for stateful, auditable workflows; CrewAI is the fastest path to a working role-based prototype; AutoGen excels at conversational multi-agent research tasks. Pick the framework that matches your workflow diagram, not benchmark scores.

Framework selection is the highest-leverage early decision in any agentic AI project. Get it wrong and you spend weeks refactoring. The three dominant frameworks in 2026 are not interchangeable; they solve different engineering problems with different abstractions.

| Framework | Key Strength | Best Used When |
|---|---|---|
| LangGraph | Stateful graph-based control, durable execution, LangSmith observability built-in | Building production systems with branching logic, compliance requirements, or long-running workflows that need audit trails |
| CrewAI | Role-based agent mental model, fastest setup time, active A2A and MCP protocol support | Rapid prototyping of multi-agent business workflows; teams new to agentic AI who need working code in hours, not days |
| AutoGen | Conversational multi-agent patterns, Microsoft Research backing, strong Azure ecosystem integration | Code generation research agents, conversational AI systems, or Azure-native enterprise deployments |

Code Example 1: LangGraph + AutoGen Cross-Framework Integration

Source: langchain-ai/langgraph — autogen-integration-functional

This snippet demonstrates the cross-framework integration pattern that resolves the framework paralysis problem. An AutoGen AssistantAgent becomes a first-class LangGraph task node. The InMemorySaver checkpointer persists conversation state across turns. Teams can adopt LangGraph’s graph-based control flow without discarding existing AutoGen agents, a critical migration path for organizations mid-deployment.

Trend 5: Agentic AI Enters the Software Development Lifecycle

In 2026, AI agents are not only tools developers use; they are participants in CI/CD pipelines, running tests, reviewing pull requests, and flagging regressions autonomously, compressing release cycles while engineers shift from code authoring to system design and output validation.

According to CIO Magazine (Feb 2026), the defining challenge for engineering teams in 2026 is not whether AI can participate across the SDLC, but how deliberately organizations design for it. Frontier models can now reason across long-running, multi-step workflows invoking compilers, test runners, and deployment pipelines as tools.

The role shift is concrete. Engineers who used to write boilerplate code now write the specifications that agents implement. Engineers who ran tests manually now design the agent pipelines that run tests on every commit. The skill becomes systems thinking and prompt architecture, not syntax fluency.

“In practice, teams building multi-agent SDLC pipelines find that the hardest problem is not writing agent logic; it is designing the handoff contracts between agents.”

Code Example 2: CrewAI Role-Based Research and Writer Agent

Source: crewAIInc/crewAI – Quickstart

This CrewAI snippet shows the role-based mental model in action: define agents by role, assign tasks, compose into a crew. Compared to the LangGraph example, it requires less boilerplate and no graph-theory knowledge. The trade-off is less control over execution flow. For linear research-to-report pipelines, CrewAI’s abstraction is the right fit. For complex branching workflows with error recovery loops, reach for LangGraph.

Frequently Asked Questions

What is the difference between agentic AI and traditional automation?

Traditional automation executes a predefined script: if X, do Y. Agentic AI plans a sequence of steps toward a goal it has not seen before, selects tools dynamically, recovers from failures mid-execution, and adapts when intermediate results change the optimal path. Agentic AI handles ambiguity; traditional automation handles only the cases its author anticipated.

How do I choose between LangGraph, CrewAI, and AutoGen?

Sketch your workflow as a diagram first. If it looks like a flowchart with loops and conditional branches, use LangGraph. If it maps to a team of specialists each doing a defined job in sequence, use CrewAI. If it is a multi-party conversation where agents debate or refine output iteratively, use AutoGen. The framework that matches your mental model causes the least friction in production.

Why do so many agentic AI projects fail before reaching production?

Three causes dominate. First, teams deploy agents without governance infrastructure: no audit trails, no escalation paths, no cost controls. Second, legacy system integration is harder than expected; most enterprise systems were not designed for programmatic agent access. Third, success metrics are defined too late. Gartner (Jun 2025) recommends defining measurable ROI before the pilot begins, not after.

What is the Model Context Protocol and why does it matter?

MCP is an open standard that defines how an AI agent discovers and invokes external tools, APIs, and data sources. It matters because it makes agent pipelines composable and portable. An agent built on MCP can use any MCP-compatible tool without custom integration code. For teams building multiple agents that need to share tools across projects, MCP is the plumbing that prevents duplicated infrastructure.

How do I add human-in-the-loop oversight to an agent pipeline?

Define a confidence threshold or action category that triggers a pause. When the agent reaches a step that crosses that threshold (spending money, deleting data, sending an external communication), it suspends execution and sends a structured summary to a human reviewer. LangGraph supports this natively through its interrupt mechanism, which pauses a graph at a checkpoint until a human resumes it. CrewAI supports approval steps in task configuration. The key design principle: humans review decisions, not transcripts.
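The pause-and-resume behaviour can be illustrated without any framework by using a Python generator, which suspends at `yield` and resumes when a value is sent back in. Everything here is a hypothetical sketch: the `SENSITIVE` set, the `ReviewRequest` shape, and the `run_pipeline` and `drive` helpers are illustrative names, and a real deployment would persist the suspended state rather than hold it in memory.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Generator, List

# Assumption: action categories that always require human sign-off.
SENSITIVE = {"spend_money", "delete_data", "send_external_message"}


@dataclass
class ReviewRequest:
    step: int
    action: str
    summary: str    # a short structured summary, not the full transcript


def run_pipeline(
    steps: List[Dict[str, str]],
) -> Generator[ReviewRequest, bool, List[str]]:
    executed: List[str] = []
    for i, step in enumerate(steps):
        if step["action"] in SENSITIVE:
            # Suspend here until the reviewer's decision is sent back in.
            approved = yield ReviewRequest(i, step["action"], step["summary"])
            if not approved:
                executed.append(f"skipped:{step['action']}")
                continue
        executed.append(f"done:{step['action']}")
    return executed


def drive(
    steps: List[Dict[str, str]],
    reviewer: Callable[[ReviewRequest], bool],
) -> List[str]:
    pipeline = run_pipeline(steps)
    try:
        request = next(pipeline)    # run until the first pause, if any
        while True:
            request = pipeline.send(reviewer(request))  # resume with the decision
    except StopIteration as stop:
        return stop.value           # the pipeline's final list of outcomes
```

The reviewer receives only a `ReviewRequest`, which keeps the human's job to approving or rejecting a specific decision instead of reading the agent's whole history.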

The Three Truths Your Team Needs to Act On

First: multi-agent orchestration is now the production baseline, not an advanced research topic. The empirical evidence from controlled trials and enterprise deployments points the same way: specialized agents working in coordinated pipelines outperform monolithic models on the quality metrics those studies measured.

Second: framework choice matters far less than governance and observability design. Teams that invest in audit trails, escalation paths, and cost controls before scaling agents will deploy more, fail less, and build organizational trust faster than teams racing to ship.

Third: the pilot-to-production gap is a change-management problem, not a technology problem. The technology is mature enough. What is missing in most organizations is the workflow redesign, the role redefinition, and the accountability structures that turn an impressive demo into a reliable business system.

Which part of your current engineering stack would a well-governed, multi-agent pipeline replace first?