AI Glossary Definition: A structured reference of the core AI terms software developers and CTOs need to design, build, and evaluate modern machine learning systems. These terms span the full stack: from how a model learns (training, fine-tuning) to how it serves results (inference, RAG) to where it fails (hallucination) and how teams keep it safe (AI safety, model parameters). Mastering this vocabulary is the first architectural decision your team makes.

Why This Glossary Matters Now

According to McKinsey (2025), 88% of organisations now use AI in at least one business function, yet only 6% report meaningful EBIT impact from it. The gap is not the technology. The gap is shared understanding. When a CTO green-lights a “RAG implementation” and an engineer ships a loop that simply pastes documents into the prompt, with no retrieval step, the project fails not from bad code but from misaligned vocabulary.

The AI glossary for software developers below covers 11 terms organised by function. Each definition is implementation-aware: you will learn what the term means, where it lives in a real system, and what decision it drives. Gartner (2024) identifies AI Engineering, the discipline that wraps these concepts into production, as the top emerging technology focus for CTOs. This glossary is where that discipline starts.

“Understanding AI vocabulary is the first infrastructure decision your team makes.”

1–3: The Foundation Terms – LLM, Transformer, and Inference

These three terms define the base layer of every modern AI system. Get them wrong in a conversation with a vendor and you will either overpay for compute you do not need or underspec a pipeline that will collapse at scale.

1. Large Language Model (LLM)

A Large Language Model is a neural network trained on massive text corpora (billions of tokens) to predict and generate language. GPT-4, Claude, and Llama 3 are all LLMs. The “large” refers to parameter count, which ranges from a few billion to over a trillion values. An LLM does not “know” facts the way a database does; it compresses statistical patterns from training data into weights.

When your team asks “should we use an LLM?”, the real question is: does your task require fluent, context-sensitive language generation? If yes, an LLM is the right class of model. If you need deterministic, rule-based output, a traditional classifier is usually cheaper and more auditable.

2. Transformer

A Transformer is the neural network architecture that powers virtually every major LLM. Introduced in the landmark paper “Attention Is All You Need” (Vaswani et al., 2017), the Transformer processes input tokens in parallel, unlike earlier recurrent models, by using a mechanism called self-attention to weight the relevance of every token relative to every other token in the sequence.

In practice, teams building on top of existing LLMs rarely need to touch the Transformer architecture directly. Understanding it matters for two reasons: (1) context window limits come directly from Transformer attention complexity, and (2) fine-tuning methods like LoRA modify Transformer weight matrices, so a basic architectural mental model prevents costly misconfigurations.
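To make the self-attention idea concrete, here is a minimal sketch in plain Python. It is a simplification under stated assumptions: queries, keys, and values are all the raw token vectors, whereas a real Transformer applies learned projection matrices and several attention heads. The point to notice is that every token is scored against every other token, so the weight matrix has n × n entries for n tokens, which is where context window cost comes from.

```python
import math

def softmax(xs):
    # Subtract the max for numerical stability before exponentiating.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(tokens):
    """Scaled dot-product self-attention with Q = K = V = tokens.

    Assumption: no learned projections and a single head, for illustration only.
    """
    d = len(tokens[0])
    outputs, weights = [], []
    for q in tokens:
        # Score this token against every token in the sequence.
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in tokens]
        w = softmax(scores)
        weights.append(w)
        # Output is the attention-weighted average of all token vectors.
        outputs.append([sum(w_j * v[i] for w_j, v in zip(w, tokens)) for i in range(d)])
    return outputs, weights

tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # three toy 2-dimensional token vectors
outputs, weights = self_attention(tokens)
print(len(weights), "x", len(weights[0]), "attention weights")
```

Doubling the sequence length quadruples the number of attention weights in this sketch.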

3. Inference

Inference is the process of running a trained model against new input to produce an output. Training teaches a model; inference is when the model earns its keep. For software teams, inference is the operational concern: latency, throughput, cost per token, and GPU memory all live here.

Teams frequently confuse inference cost with training cost. Training a foundation model costs tens of millions of dollars and runs once. Inference runs every time a user sends a prompt. Serving architecture (batching, quantisation, speculative decoding) is entirely an inference problem, not a training one.
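Because inference cost recurs with every request, a back-of-the-envelope estimate is useful early on. The sketch below is illustrative only: the traffic figures and the price per million tokens are hypothetical inputs, not quotes from any provider.

```python
def monthly_inference_cost(requests_per_day, tokens_per_request,
                           price_per_million_tokens, days=30):
    """Estimate monthly spend from traffic and a flat per-token price.

    Assumption: one blended price for input and output tokens.
    """
    total_tokens = requests_per_day * tokens_per_request * days
    return total_tokens / 1_000_000 * price_per_million_tokens

# Hypothetical workload: 50,000 requests/day, 1,500 tokens each, $2.00 per million tokens.
cost = monthly_inference_cost(50_000, 1_500, 2.00)
print(f"${cost:,.2f} per month")
```

Real pricing usually differs for input and output tokens, so treat the single blended price as a simplification.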

4–6: Building Smarter Systems – RAG, Embedding, and Vector Store

These three terms form a system pattern: you convert knowledge into a searchable format (embedding + vector store), then retrieve the most relevant pieces at query time (RAG). Together they let an LLM answer questions about your proprietary data without retraining.

4. Retrieval-Augmented Generation (RAG)

Retrieval-Augmented Generation (RAG) is the technique of fetching relevant passages from an external knowledge base and injecting them into an LLM prompt at inference time. As Gao et al. (2024) show in their landmark RAG survey, this approach directly addresses three core LLM limitations: hallucination, outdated knowledge, and non-transparent reasoning. The retrieved passages act as a live, verifiable memory layer.

In production, RAG splits into three stages: (1) index documents as vector embeddings, (2) retrieve the top-k most semantically similar chunks for a given query, and (3) augment the LLM prompt with those chunks before generating a response.

Code Example 1 – Minimal RAG Pipeline (Source: langchain-ai/langchain · docs/tutorials/rag.ipynb)

This code shows embeddings converting corpus text into vectors (line 4), FAISS indexing them for fast similarity search (lines 5-7), and the RetrievalQA chain injecting the top-4 matches into the LLM context before generating an answer.
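As a dependency-free illustration of the same index, retrieve, augment flow (a sketch, not the LangChain listing described above), the three stages can be written in plain Python. The `embed` function here is a toy word-count stand-in for a real embedding model, and the final step only builds the prompt; the call to an actual LLM is left out. All function names and the sample documents are hypothetical.

```python
import math
import re
from collections import Counter

def embed(text):
    """Toy embedding: word counts. A real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0

def build_index(docs):
    # Stage 1: index each document alongside its vector.
    return [(doc, embed(doc)) for doc in docs]

def retrieve(index, query, k=2):
    # Stage 2: rank documents by similarity to the query and keep the top k.
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]

def augment(query, passages):
    # Stage 3: inject the retrieved passages into the prompt.
    context = "\n".join(f"- {p}" for p in passages)
    return (f"Answer using only the context below.\n\n"
            f"Context:\n{context}\n\nQuestion: {query}")

docs = [
    "Refunds are processed within 14 days of a cancellation request.",
    "The office is closed on public holidays.",
    "Send a cancellation request by email to the support team.",
]
index = build_index(docs)
question = "How do I send a cancellation request?"
top = retrieve(index, question, k=2)
prompt = augment(question, top)
print(prompt)
```

Swapping `embed` for a real embedding model and the list scan for a vector store changes the quality and scale, not the shape of the pipeline.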

5. Embedding

An embedding is a dense numerical vector, typically 768 to 3,072 floating-point values, that represents the semantic meaning of a piece of text. Words or sentences with similar meanings have embeddings that are geometrically close in high-dimensional space. This is what allows a vector search to find “contract termination clause” when a user asks “how do I cancel?”
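“Geometrically close” is usually measured with cosine similarity. The sketch below uses hand-written 4-dimensional vectors purely for illustration; real embeddings come from a model and have hundreds or thousands of dimensions, and these particular values are invented.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))

# Hypothetical vectors, hand-written so that related texts point the same way.
embeddings = {
    "how do I cancel?":            [0.9, 0.1, 0.0, 0.2],
    "contract termination clause": [0.8, 0.2, 0.1, 0.3],
    "office lunch menu":           [0.0, 0.9, 0.4, 0.0],
}

query = embeddings["how do I cancel?"]
for text, vec in embeddings.items():
    print(f"{cosine_similarity(query, vec):.2f}  {text}")
```

The cancellation question scores far higher against the termination clause than against the lunch menu, even though the two texts share no words.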

The embedding model is separate from the generation model. OpenAI’s text-embedding-3-small, Cohere’s embed-v3, and open-source models like E5 and BGE all produce embeddings. Choosing an embedding model affects retrieval quality more than switching between LLMs, a fact that catches many teams off guard.

6. Vector Store

A Vector Store (also called a vector database) indexes high-dimensional embeddings and enables fast approximate nearest-neighbour search. FAISS (Meta), Pinecone, Weaviate, and pgvector (a Postgres extension) are all vector stores. The query flow is: convert the user’s question to an embedding, then retrieve the database’s top-k most similar document embeddings.
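The query flow above can be sketched with a tiny in-memory store. This is an assumption-laden toy: it does exact brute-force search over every vector, whereas FAISS, Pinecone, and similar systems use approximate indexes to avoid scanning everything. The class name `TinyVectorStore` and the sample vectors are hypothetical.

```python
import heapq
import math

class TinyVectorStore:
    """Exact top-k search over unit-normalised vectors (illustration only)."""

    def __init__(self):
        self._items = []  # list of (unit_vector, payload)

    def add(self, vector, payload):
        norm = math.hypot(*vector)
        self._items.append(([x / norm for x in vector], payload))

    def search(self, query, k=2):
        norm = math.hypot(*query)
        q = [x / norm for x in query]
        # Dot product of unit vectors equals cosine similarity.
        scored = ((sum(a * b for a, b in zip(q, vec)), payload)
                  for vec, payload in self._items)
        return heapq.nlargest(k, scored, key=lambda pair: pair[0])

store = TinyVectorStore()
store.add([0.8, 0.2, 0.1], "termination clause")
store.add([0.1, 0.9, 0.3], "lunch menu")
store.add([0.7, 0.1, 0.3], "notice period")

results = store.search([0.9, 0.1, 0.1], k=2)
print([payload for _, payload in results])
```

Search time here grows linearly with the number of stored vectors, which is the cost that approximate nearest-neighbour indexes are designed to cut.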

Selecting a vector store is a data-engineering decision, not an AI decision. Key evaluation dimensions: scale (number of vectors), update frequency, hybrid search support (keyword + semantic), and cost. FAISS is well suited to in-memory prototypes; managed services like Pinecone handle multi-billion-vector production workloads.

“RAG is what you build when your LLM does not know what happened last Tuesday.”

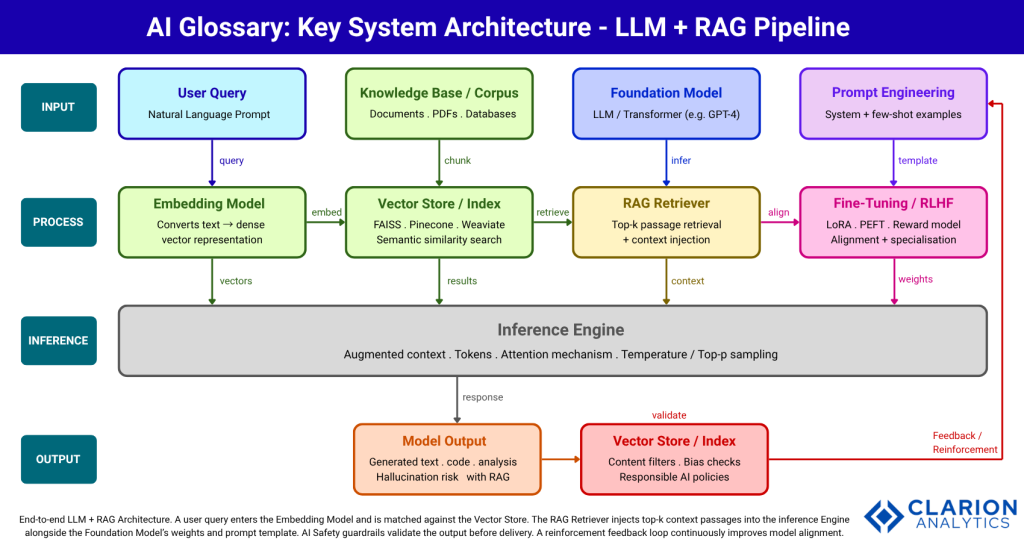

Figure 1: A user query enters the Embedding Model and is matched against the Vector Store via semantic similarity search. The RAG Retriever injects the top-k context passages into the Inference Engine alongside the Foundation Model’s weights and prompt template. AI Safety guardrails validate the output before delivery. A reinforcement feedback loop continuously improves model alignment through human feedback and usage signals.

7–9: Shaping Model Behaviour – Fine-Tuning, Prompt Engineering, and Hallucination

These three terms govern how you customise a model’s behaviour, guide it at runtime, and recognise its most common failure mode. Each represents a different budget and time investment.

7. Fine-Tuning

Fine-tuning adapts a pre-trained foundation model to a specific task or domain by continuing training on a smaller, labelled dataset. The model’s existing world knowledge stays intact; the fine-tuning adjusts its behaviour for your use case. Techniques like LoRA (Low-Rank Adaptation) make this tractable without full GPU clusters by modifying only a fraction of the model’s weight matrices.
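A quick calculation shows why LoRA trains only a fraction of the weights. For a weight matrix of shape d_out × d_in, LoRA keeps the original frozen and trains two small matrices of shapes d_out × r and r × d_in, so the trainable count is r × (d_in + d_out). The matrix size and rank below are hypothetical values chosen for illustration.

```python
def lora_trainable_params(d_in, d_out, rank):
    """Trainable values in the two low-rank matrices LoRA adds to one weight matrix."""
    return rank * (d_in + d_out)

d_in = d_out = 4096   # hypothetical size of one projection matrix
rank = 8              # hypothetical LoRA rank

full = d_in * d_out   # values updated by full fine-tuning of this matrix
lora = lora_trainable_params(d_in, d_out, rank)
print(f"full: {full:,}  lora: {lora:,}  ratio: {lora / full:.2%}")
```

Under these assumptions the adapter trains well under 1% of the values in the matrix it modifies.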

Teams building this typically find that fine-tuning costs 10-100x more than prompt engineering in upfront effort but can reduce per-query inference costs by enabling smaller, faster models to match larger ones on a narrow task. The Hugging Face Transformers library, one of the most-starred AI repositories on GitHub (150k+ stars), is the standard toolkit for fine-tuning workflows.

Code Example 2 – Loading a Fine-Tuned Transformer for Inference (Source: huggingface/transformers · examples/README.md)

The pipeline() call abstracts tokenisation, fine-tuned weight loading, and inference into one line. It demonstrates inference (prediction step), fine-tuning (domain specialisation on sentiment data), and parameters (66 million values doing the classification work).

8. Prompt Engineering

Prompt engineering is the practice of designing and iterating on the text inputs, system messages, examples, and constraints that guide an LLM’s output without modifying its weights. A well-designed prompt can shift a generic LLM from a 60% to a 90%+ task success rate. Few-shot prompting (providing 2-5 worked examples) and chain-of-thought (asking the model to reason step by step) are the two highest-leverage techniques.
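Few-shot and chain-of-thought prompts are ultimately just structured strings, which makes them easy to version and test. The helper below is one possible sketch; the function name, the `Input:`/`Output:` layout, and the ticket examples are all assumptions for illustration, not a standard format.

```python
def build_few_shot_prompt(instruction, examples, query, chain_of_thought=False):
    """Assemble an instruction, worked examples, and the new query into one prompt."""
    lines = [instruction, ""]
    for text, label in examples:
        lines += [f"Input: {text}", f"Output: {label}", ""]
    lines.append(f"Input: {query}")
    if chain_of_thought:
        # Ask for reasoning first, with a fixed marker so the answer can be parsed.
        lines.append("Think step by step, then give the final answer "
                     "on a line starting with 'Output:'.")
    else:
        lines.append("Output:")
    return "\n".join(lines)

examples = [
    ("The app crashes on login.", "bug"),
    ("Please add dark mode.", "feature request"),
]
prompt = build_few_shot_prompt(
    "Classify each support ticket as 'bug' or 'feature request'.",
    examples,
    "Export to CSV fails with error 500.",
)
print(prompt)
```

Keeping prompt construction in a function like this lets a team diff, review, and regression-test prompts the same way as any other code.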

Prompt engineering is not a workaround for missing fine-tuning budget. It is the fastest feedback loop your team has. In practice, most production LLM applications are 70% prompt engineering and 30% infrastructure. Teams that skip structured prompt development and jump straight to fine-tuning often solve the wrong problem at ten times the cost.

9. Hallucination

Hallucination occurs when an LLM generates output that is fluent and confident but factually incorrect or invented. The model is not “lying”; it is pattern-matching on training distributions and producing text that fits the expected shape of an answer without grounding in truth. Citation hallucination (fabricating research papers), date hallucination, and entity hallucination (inventing company names or product features) are the most costly failure modes in production.

RAG is the primary architectural mitigation: by forcing the LLM to generate from retrieved passages rather than solely from internal weights, hallucination rates drop substantially. Additional mitigations include temperature reduction, self-consistency sampling, and programmatic output validation. Teams should log and measure hallucination rate as a first-class production KPI alongside latency and cost.
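Programmatic output validation can start very simply. The sketch below assumes a hypothetical convention in which the model is asked to cite retrieved passages as `[doc-N]`; it flags answers that cite nothing or cite passages that were never retrieved, and computes a failure rate that could be logged as a KPI. It is only a proxy: a valid citation does not prove the claim itself is correct.

```python
import re

def check_grounding(answer, retrieved_ids):
    """Report which cited passage ids are real and which were never retrieved."""
    cited = set(re.findall(r"\[(doc-\d+)\]", answer))
    unknown = cited - set(retrieved_ids)
    return {
        "grounded": bool(cited) and not unknown,
        "cited": sorted(cited),
        "unknown": sorted(unknown),
    }

def hallucination_rate(results):
    """Share of answers that failed the grounding check."""
    failures = sum(1 for r in results if not r["grounded"])
    return failures / len(results) if results else 0.0

retrieved = ["doc-1", "doc-2"]
answers = [
    "Refunds take 14 days [doc-1].",
    "Refunds take 3 days [doc-7].",  # cites a passage that was never retrieved
    "Refunds are instant.",           # no citation at all
]
results = [check_grounding(a, retrieved) for a in answers]
print(hallucination_rate(results))
```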

Table 1: RAG vs Fine-Tuning vs Prompt Engineering – When to Use Each

| Option | Key Strength | Typical Cost / Effort | Best Used When |
|---|---|---|---|
| Prompt Engineering | Zero retraining; fastest iteration; highest leverage per dollar | Low (hours to days) | Task is well-defined; model size is sufficient; latency matters less than speed to ship |
| Retrieval-Augmented Generation (RAG) | Grounds outputs in verified, up-to-date documents; reduces hallucination | Medium (days to weeks) | Answers must reference proprietary, recent, or regulated knowledge bases |
| Fine-Tuning (LoRA / PEFT) | Adapts model style, tone, or domain knowledge permanently; enables smaller, faster models | High (GPU budget; labelled dataset curation) | Narrow, high-volume task where a specialised model outperforms general ones at lower inference cost |
| Full Pre-Training | Full architectural control; optimised for very specific domains | Very high ($M range; months of compute) | Unique domain where no foundation model covers the vocabulary |

“Prompt engineering is not a workaround. It is the fastest way to align a model with your product’s reality.”

10–11: The Guard Rails – AI Safety and Model Parameters

The final two terms are not glamorous, but they separate teams shipping responsible AI from teams accumulating technical and reputational debt.

10. AI Safety

AI Safety is the field of research and engineering practice focused on ensuring that AI systems behave as intended and avoid harmful outputs. In production systems, this manifests as content filters (blocking toxic or legally risky outputs), bias audits, adversarial robustness testing, and human-in-the-loop review for high-stakes decisions. Gartner (2024) reports that despite an average GenAI investment of $1.9 million in 2024, fewer than 30% of AI leaders say their executives are satisfied with the returns; governance gaps are a leading cause.

CTOs building AI products should treat AI safety as a system architecture concern, not a post-launch patch. Guardrails belong in the inference pipeline, not in a review document. Open-source toolkits like LLM Guard and Guardrails AI provide programmable safety layers that can sit between the user request and the model response.
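To show what “guardrails in the inference pipeline” can look like structurally, here is a minimal hand-rolled wrapper. It does not use the LLM Guard or Guardrails AI APIs; the function names, the length limit, and the single redaction pattern are hypothetical placeholders, and `fake_model` stands in for a real model call.

```python
import re

# Hypothetical output rule: redact strings shaped like US social security numbers.
BLOCKED_PATTERNS = [re.compile(r"\b\d{3}-\d{2}-\d{4}\b")]

def guarded_generate(prompt, model, max_prompt_chars=2000):
    """Run input checks, call the model, then filter the output."""
    # Input guard: reject oversized prompts before spending any compute.
    if len(prompt) > max_prompt_chars:
        return "Request rejected: prompt too long."
    response = model(prompt)
    # Output guard: redact anything matching a blocked pattern.
    for pattern in BLOCKED_PATTERNS:
        response = pattern.sub("[REDACTED]", response)
    return response

def fake_model(prompt):
    """Stand-in for a real LLM call, returning a risky string on purpose."""
    return "The customer's number is 123-45-6789."

print(guarded_generate("What is the customer's number?", fake_model))
```

The design point is placement: both checks run inside the request path, so no response reaches the user without passing through them.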

11. Model Parameters

Model parameters are the numerical values (weights and biases) that a neural network learns during training and stores permanently. GPT-4 is estimated at over one trillion parameters; Llama 3.1 8B has 8 billion. Parameter count roughly correlates with capability but directly determines compute and memory cost. A 70-billion-parameter model requires approximately 140 GB of GPU VRAM for its weights alone at 16-bit precision (about 280 GB at 32-bit), a procurement and infrastructure decision, not just a research one.

Two related terms CTOs encounter: quantisation (compressing parameters from 16-bit floats to 8-bit or 4-bit integers to reduce memory footprint by roughly 2-4x) and context window (the maximum number of tokens the model can attend to in one inference call). Both are levers for cost management, not just technical curiosities.
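The memory arithmetic is simple enough to check by hand: weight memory is parameter count times bytes per parameter. The sketch below counts weights only, in decimal gigabytes, and deliberately ignores activations, the KV cache, and framework overhead, all of which add to the real requirement.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1, "int4": 0.5}

def weight_memory_gb(num_params, precision):
    """Memory for model weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9

for precision in BYTES_PER_PARAM:
    print(f"{precision}: {weight_memory_gb(70e9, precision):.0f} GB")
```

For a 70-billion-parameter model this gives 280 GB at 32-bit, 140 GB at 16-bit, 70 GB at 8-bit, and 35 GB at 4-bit.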

“The teams that master this vocabulary today will set the technical standards everyone else follows tomorrow.”

Frequently Asked Questions

Q: What is RAG in AI, and why should developers care? RAG (Retrieval-Augmented Generation) lets an LLM answer questions using your private or recent documents without retraining. It reduces hallucination by grounding outputs in retrieved passages. Developers should care because RAG is the fastest path to a production-ready, domain-specific AI application with verifiable, traceable answers.

Q: What is the difference between fine-tuning and prompt engineering? Prompt engineering guides model behaviour through the text input, requiring no changes to model weights. Fine-tuning updates the model’s weights using a labelled dataset, permanently specialising it for a task. Start with prompt engineering: it is lower cost and faster to iterate. Add fine-tuning only when prompt quality reaches its ceiling.

Q: How do large language models work at a high level? LLMs are transformer-based neural networks trained on massive text corpora to predict the next token in a sequence. At inference time, they generate text by sampling from probability distributions over the vocabulary, token by token. They do not retrieve facts from a database; they compress patterns from training data into billions of numerical weights.

Q: What causes AI hallucination, and how do you reduce it? Hallucination occurs when an LLM produces confident but incorrect output because its training statistics favour plausible-sounding text over factual accuracy. The primary architectural fix is RAG: force the model to generate from retrieved, verified passages. Secondary mitigations include lower temperature settings, chain-of-thought prompting, and output validation pipelines.

Q: What are model parameters, and do they affect cost? Model parameters are the learned numerical weights inside a neural network. Parameter count directly determines GPU memory requirements and inference cost. As a rough rule of thumb, a 70-billion-parameter model costs on the order of 10x more to serve than a 7-billion one, because memory and compute scale approximately with parameter count. Quantisation (compressing parameter precision) can cut memory footprint by 2-4x with minimal accuracy loss, making larger models economically viable.

Conclusion

Three insights stand above the rest in this AI glossary. First, RAG is the most impactful architectural pattern available to teams today: it reduces hallucination, injects proprietary knowledge, and requires no retraining. Second, prompt engineering returns more value per hour than any other optimisation technique; treat it as a first-class engineering discipline, not an afterthought. Third, model parameters and inference costs are inseparable: every capability decision is simultaneously an infrastructure budget decision.

The AI terminology landscape will keep expanding. Foundation models will gain new capabilities; new architectural patterns will emerge. But the 11 terms in this glossary form a durable foundation, a shared language that lets engineering teams, product managers, and CTOs build systems that actually deliver value rather than demos that impress and then disappear.

One question worth sitting with: if your team called a meeting today to evaluate an AI vendor, how many of these 11 terms would appear in the conversation, and how many would be used correctly?