DEFINITION – Deep learning API security is the practice of protecting the endpoints that expose trained neural network models to consuming applications. It encompasses authentication, input validation, rate limiting, adversarial-input defence, and runtime monitoring. A secure deep learning API prevents model theft, data exfiltration, and adversarial manipulation while keeping latency acceptable for production inference workloads.

Why Deep Learning APIs Are the New Attack Surface

Deep learning API security has moved from a niche concern to a board-level priority. According to Gartner’s 2024 API Strategy Survey, 82% of organisations now expose models through internal APIs, and 37% rank security as their top API challenge. The average API breach leaks at least ten times more data than a conventional security incident, and when the API serves a deep learning model, that breach also exposes proprietary intellectual property and training data.

The threat landscape has shifted materially since 2022. Gartner (August 2024) projects global information security spending will reach $212 billion in 2025, a 15% increase driven largely by AI adoption expanding the attack surface. Simultaneously, Gartner’s 2025 cybersecurity trend report confirms that GenAI adoption is accelerating unstructured-data risks, with LLM inference pipelines now squarely in the crosshairs of adversaries.

In practice, teams building deep learning APIs often underestimate how different the threat model is from a standard REST service. A conventional API vulnerability yields user data. An ML API vulnerability can yield the model weights themselves, enable model inversion attacks, or allow an adversary to poison the inference pipeline silently.

“A compromised deep learning API doesn’t just leak data; it exposes the intelligence your business spent millions building.”

Where Deep Learning APIs Live in the Wild

Understanding the real deployment contexts is the first step to securing them properly. Deep learning APIs power three broad categories of production systems:

- Inference-as-a-Service endpoints (image classification, NLP, speech recognition) exposed to millions of end users via public REST or gRPC APIs

- Internal ML microservices consumed by other services in a data platform, often over mTLS-secured service meshes

- Third-party model integrations, where an organisation calls an external AI provider’s API and must protect the data it sends outbound

Each context carries distinct risks. Public inference endpoints face credential stuffing, rate-abuse for model extraction, and adversarial inputs. Internal microservices face lateral movement once a perimeter is breached. Third-party integrations introduce supply-chain trust issues. The Cloud Security Alliance (2025) recommends separating permissions by function: training upload, inference, and model management should each carry distinct scopes so that a single overprivileged token cannot be used against all three.
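The scope separation described above can be sketched as a deny-by-default lookup. This is a minimal illustration, not a prescribed design: the scope names, routes, and the `is_authorised` helper are all hypothetical.

```python
from enum import Enum


class Scope(str, Enum):
    # Hypothetical scope names, one per function named in the text.
    TRAINING_UPLOAD = "training:upload"
    INFERENCE = "inference:invoke"
    MODEL_MANAGEMENT = "model:manage"


# Hypothetical routes; each requires exactly one scope.
ROUTE_SCOPES = {
    ("POST", "/v1/datasets"): Scope.TRAINING_UPLOAD,
    ("POST", "/v1/predict"): Scope.INFERENCE,
    ("PUT", "/v1/models"): Scope.MODEL_MANAGEMENT,
}


def is_authorised(token_scopes, method, path):
    """Return True only if the token carries the scope the route requires."""
    required = ROUTE_SCOPES.get((method, path))
    if required is None:
        return False  # unknown routes are denied by default
    return required.value in token_scopes
```

A token issued only for inference then fails the check on the model-management route, even if it is otherwise valid.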

The Layered Security Architecture for Deep Learning APIs

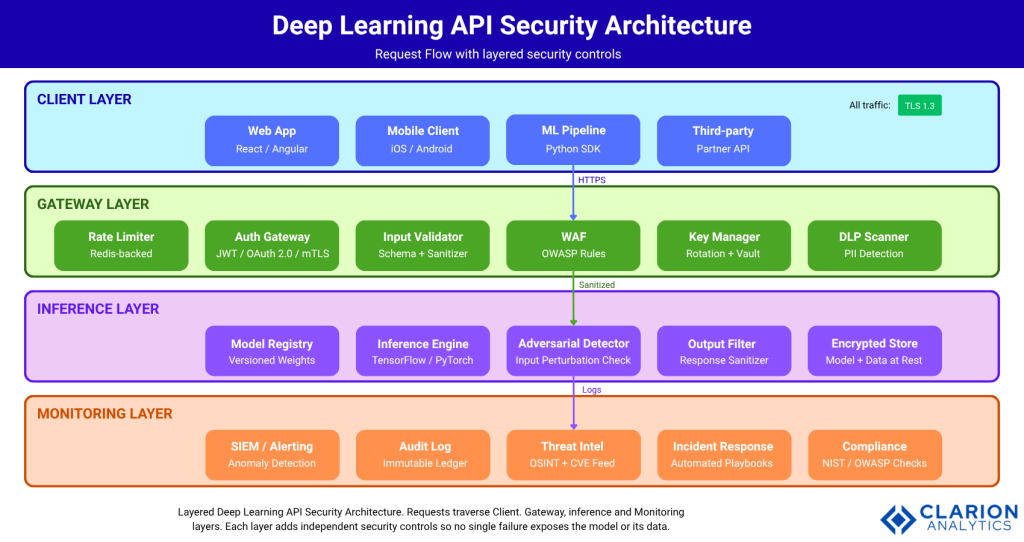

A secure deep learning API is never a single control; it is four coordinated layers that each operate independently so no single failure exposes the model.

Figure 1: Layered Deep Learning API Security Architecture. Requests traverse Client, Gateway, Inference, and Monitoring layers. Each layer adds independent security controls so no single failure exposes the model or its data.

Layer 1 – Client Layer enforces TLS 1.3 for all traffic regardless of client type.

Layer 2 – Gateway Layer combines rate limiting (Redis-backed), OAuth 2.0/JWT authentication, schema-based input validation, a Web Application Firewall (WAF), secrets rotation via vault integration, and a Data Loss Prevention (DLP) scanner in a sequential pipeline. Every request that reaches Layer 3 has passed all six controls.

Layer 3 – Inference Layer wraps the model with an adversarial-input detector and an output filter before returning results. This layer also enforces model versioning and maintains an encrypted store for model weights and data at rest.

Layer 4 – Monitoring Layer collects immutable audit logs, feeds a SIEM with ML-based anomaly detection, correlates against threat-intel feeds, and triggers automated incident-response playbooks.
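One common way to make audit logs tamper-evident is to chain each entry to the hash of the previous one. The sketch below assumes an in-memory list and hypothetical event fields; on its own a hash chain only makes tampering detectable, so real immutability still depends on write-once storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry


def _digest(prev_hash, event):
    # Serialise with sorted keys so the same event always hashes the same way.
    body = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(log, event):
    """Append an event, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"event": event, "prev": prev_hash, "hash": _digest(prev_hash, event)}
    log.append(entry)
    return entry


def verify_chain(log):
    """Recompute every hash; any edited or removed entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Anchoring the latest hash somewhere outside the log (for example in the SIEM) lets a reviewer detect truncation as well as edits.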

“Security must be layered: each control assumes the one before it can fail.”

Authentication and Authorization: The First Gate

Every request to a deep learning API must be authenticated and scoped. OAuth 2.0 with short-lived JWT access tokens (15–60 minutes) is the recommended pattern for public-facing endpoints. Machine-to-machine calls should use mutual TLS (mTLS), which authenticates both sides with certificates, so there is no bearer token to leak; the client private keys still need to be protected.

The following code snippet, adapted from the andifalk/api-security repository and OWASP Top 10 2025 implementation examples, shows a secure FastAPI inference endpoint with API-key gating and CORS lockdown:

Source: andifalk/api-security / juanrodriguezmonti.github.io

This pattern keeps the inference call fully isolated behind the authentication gate. Even if an attacker discovers the endpoint path, no inference executes without a valid, scoped key. Teams should store keys in a dedicated secrets manager such as HashiCorp Vault or AWS Secrets Manager and rotate them automatically on a 90-day cycle.
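Independent of the web framework, the gate itself can be sketched with the standard library. Everything here is illustrative: `KEY_STORE`, the demo key, the scope name, and `run_model` are hypothetical stand-ins, and a real deployment would load key digests from the secrets manager rather than from source code.

```python
import hashlib
import hmac


def hash_key(raw_key):
    # Only digests are stored, so a leaked store does not reveal usable keys.
    return hashlib.sha256(raw_key.encode("utf-8")).hexdigest()


# Hypothetical key store mapping key digest -> granted scopes.
KEY_STORE = {hash_key("demo-key-123"): {"inference:invoke"}}


def run_model(features):
    # Placeholder for the real inference call.
    return {"score": sum(features) / len(features)}


def guarded_predict(api_key, features, key_store=KEY_STORE):
    """Return (status, body); inference runs only after the key check passes."""
    presented = hash_key(api_key or "")
    granted = None
    for stored_digest, scopes in key_store.items():
        # compare_digest avoids leaking match position through timing.
        if hmac.compare_digest(presented, stored_digest):
            granted = scopes
    if granted is None:
        return 401, {"error": "invalid or missing API key"}
    if "inference:invoke" not in granted:
        return 403, {"error": "key lacks inference scope"}
    return 200, run_model(features)
```

The model call sits on the last line of the function, so every rejection path returns before any inference work is done.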

Rate Limiting, Input Validation, and Adversarial Defence

Rate limiting and input validation protect the model from both volumetric abuse and crafted-input attacks.

Model extraction attacks work by querying a public API thousands of times with carefully designed inputs to reverse-engineer the model’s decision boundary. Rate limiting per API key, enforced at the gateway with Redis-backed sliding-window counters, caps how many of those queries reach the model and raises the cost of the attack. CyCognito (2026) recommends automating key rotation and combining rate limits with anomaly-detection alerts for traffic spikes.
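The sliding-window idea can be sketched in a few lines. This version keeps counters in process memory purely for illustration; a gateway serving several instances would hold the same per-key timestamps in a shared store such as Redis. The class name and parameters are assumptions, not taken from any particular gateway.

```python
import time
from collections import defaultdict, deque
from typing import Optional


class SlidingWindowLimiter:
    """Allow at most max_requests per key within any window_seconds span."""

    def __init__(self, max_requests: int, window_seconds: float):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits = defaultdict(deque)  # api_key -> timestamps of accepted requests

    def allow(self, api_key: str, now: Optional[float] = None) -> bool:
        now = time.monotonic() if now is None else now
        hits = self._hits[api_key]
        # Drop timestamps that have slid out of the window.
        while hits and hits[0] <= now - self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False  # caller would respond with HTTP 429
        hits.append(now)
        return True
```

For example, `SlidingWindowLimiter(max_requests=100, window_seconds=60)` limits each key to 100 accepted requests in any 60-second span; the right numbers depend on legitimate client behaviour.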

Input validation at the schema level (OpenAPI/Swagger spec enforcement) is the second gate. Any request body that does not conform to the expected tensor shape, data type, or value range is rejected at the gateway; it never reaches the inference engine. The Cloud Security Alliance (2025) calls this a “positive security model”: define what good traffic looks like and reject everything else.
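As a rough illustration of the positive security model, the check below accepts only a nested list with an exact shape, numeric leaves, and values inside a fixed range. The shape and range are hypothetical; in practice they would come from the API’s OpenAPI schema.

```python
import math

EXPECTED_SHAPE = (2, 3)   # hypothetical input shape
VALUE_RANGE = (0.0, 1.0)  # hypothetical allowed value range


def validate_tensor(payload, shape=EXPECTED_SHAPE, value_range=VALUE_RANGE):
    """Return a list of error strings; an empty list means the payload is accepted."""
    low, high = value_range
    errors = []

    def check(node, dims, path):
        if not dims:  # leaf level: must be a finite number within range
            if isinstance(node, bool) or not isinstance(node, (int, float)):
                errors.append(f"{path}: expected a number")
            elif not math.isfinite(node) or not low <= node <= high:
                errors.append(f"{path}: value outside [{low}, {high}]")
            return
        if not isinstance(node, list) or len(node) != dims[0]:
            errors.append(f"{path}: expected a list of length {dims[0]}")
            return
        for index, child in enumerate(node):
            check(child, dims[1:], f"{path}[{index}]")

    check(payload, tuple(shape), "input")
    return errors
```

Anything that produces a non-empty error list would be rejected at the gateway with a 4xx response and never forwarded to the inference engine.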

At the inference layer, adversarial-input detection applies a secondary check against known attack patterns. Chowdhury (arXiv, 2025) demonstrates that adversarial training with FGSM-generated perturbations substantially improves model robustness against gradient-based attacks on CIFAR-10, ImageNet, and MNIST. The trade-off is a modest accuracy reduction on clean inputs, typically 2–5%, which most production teams accept as the price of robustness.
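To make the FGSM step concrete, the sketch below applies it to a toy logistic-regression model in pure Python; it is not the setup from the cited paper. FGSM perturbs an input as x_adv = x + ε · sign(∇ₓL), and for binary cross-entropy on a logistic model that gradient is (p − y) · w. The weights and inputs in the usage note are made up.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, x):
    """Probability of the positive class under a logistic model."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


def sign(value):
    return (value > 0) - (value < 0)


def fgsm_example(weights, bias, x, y, epsilon):
    """Return x + epsilon * sign(dL/dx) for binary cross-entropy loss L."""
    p = predict(weights, bias, x)
    grad = [(p - y) * w for w in weights]  # dL/dx for logistic regression
    return [xi + epsilon * sign(g) for xi, g in zip(x, grad)]
```

With `weights=[2.0, -1.0]`, `bias=0.0`, `x=[1.0, 0.5]`, `y=1`, and `epsilon=0.3`, the perturbed input lowers the model’s confidence in the true class. Adversarial training adds such perturbed inputs, with their original labels, to each training batch.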

A comprehensive 2025 review in MDPI Technologies surveyed attacks across all major ML tasks and confirmed that defence mechanisms combining adversarial training with feature-level detection outperform single-method defences, particularly against adaptive adversaries who know the defence strategy.

Source (Snippet 2): OWASP Top 10 2025 examples — SSRF-safe URL validation

This pattern prevents Server-Side Request Forgery (SSRF), a critical risk when deep learning pipelines fetch remote resources (model checkpoints, datasets) via URL parameters. Any URL that resolves to a private or loopback address is rejected before the pipeline makes a network call.
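A minimal sketch of such a check, using only the Python standard library, might look as follows. It is not the snippet from the cited source: the function names, the HTTPS-only rule, and the injectable `resolver` parameter (included so the check can be exercised without network access) are assumptions.

```python
import ipaddress
import socket
from urllib.parse import urlsplit

ALLOWED_SCHEMES = {"https"}  # assumption: remote artefacts are fetched over HTTPS only


def resolve_host(host):
    """Resolve a hostname to the IP addresses it currently maps to."""
    infos = socket.getaddrinfo(host, None, proto=socket.IPPROTO_TCP)
    return [info[4][0] for info in infos]


def is_safe_url(url, resolver=resolve_host):
    """Reject URLs whose host resolves to any non-public address."""
    try:
        parts = urlsplit(url)
        host = parts.hostname
    except ValueError:
        return False
    if parts.scheme not in ALLOWED_SCHEMES or not host:
        return False
    try:
        addresses = resolver(host)
    except OSError:
        return False  # unresolvable hosts are rejected
    if not addresses:
        return False
    for address in addresses:
        try:
            ip = ipaddress.ip_address(address.split("%")[0])  # strip IPv6 zone id
        except ValueError:
            return False
        if not ip.is_global:  # private, loopback, link-local, reserved, ...
            return False
    return True
```

Validation alone does not stop DNS rebinding, where a hostname resolves differently between the check and the fetch. The fetch should therefore connect to the address that was validated, and redirects should be disabled or re-validated.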

“Input validation at the gateway is your cheapest and most impactful security control; implement it before anything else.”

Choosing the Right Control: A Comparison

No single control secures a deep learning API. The table below compares the primary approaches so teams can prioritise based on their deployment context:

| Approach / Tool | Key Strength | Best Used When |
|---|---|---|
| OAuth 2.0 + JWT (short-lived tokens) | Stateless, scalable, industry-standard auth | Public-facing deep learning APIs serving multiple client types |
| Mutual TLS (mTLS) | Bidirectional cert verification; no token leakage risk | Machine-to-machine calls between internal ML microservices |
| API Gateway (Kong / APISIX) | Centralised rate limiting, auth, and DLP enforcement | High-traffic inference APIs needing policy at the edge |
| Adversarial Training (PGD / FGSM) | Hardened model resists input-level perturbation attacks | Vision or NLP models exposed to untrusted user inputs |
| Zero Trust Architecture | Every request verified regardless of network origin | Multi-cloud or hybrid deployments with sensitive model IP |
| SIEM + ML Anomaly Detection | Real-time detection of unusual traffic and exfiltration | Production APIs handling PII or proprietary model outputs |

Monitoring, Zero Trust, and Compliance

Security controls that cannot be observed cannot be trusted.

Runtime monitoring for deep learning APIs must go beyond HTTP error codes. A SIEM with ML-based anomaly detection identifies unusual query patterns: a sudden increase in unique input diversity often signals a model extraction attempt, and an unusual spike in response payload size can signal data exfiltration. Gartner (2025) found that organisations deploy an average of 45 cybersecurity tools and recommends consolidating observability into a unified platform to avoid alert fatigue.
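The input-diversity signal can be approximated very crudely by tracking, per API key, what fraction of recent payloads were unique. The class below is a hypothetical sketch: the window size and alert ratio are placeholders, and many legitimate workloads also send mostly unique inputs, so a real detector would compare against each key’s own baseline rather than a fixed threshold.

```python
import hashlib
from collections import defaultdict, deque


class DiversityMonitor:
    """Flag keys whose recent requests are almost all distinct payloads."""

    def __init__(self, window_size=100, alert_ratio=0.9):
        self.window_size = window_size
        self.alert_ratio = alert_ratio
        # api_key -> digests of the most recent payloads (bounded window)
        self._recent = defaultdict(lambda: deque(maxlen=window_size))

    def observe(self, api_key, payload):
        """Record a payload; return True if the key's window looks suspicious."""
        recent = self._recent[api_key]
        recent.append(hashlib.sha256(payload).hexdigest())
        if len(recent) < self.window_size:
            return False  # not enough history yet
        unique_ratio = len(set(recent)) / len(recent)
        return unique_ratio >= self.alert_ratio
```

A `True` result would feed an alert or a tighter rate limit for that key instead of blocking it outright.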

The NSA’s 2024 guide on deploying AI systems securely advocates Zero Trust architecture for all AI deployments: every request is verified regardless of network origin, and sensitive model data is processed inside secure enclaves. Digital signatures on training datasets, using quantum-resistant standards such as FIPS 204, make tampering detectable and so help guard against training-data poisoning.

For compliance, the OWASP API Security Top 10 remains the baseline framework. The 2025 OWASP Top 10 for general web applications adds a category directly relevant to ML pipelines, Software Supply Chain Failures (A03). AI-specific threats such as prompt injection and agent attack surfaces are covered separately by the OWASP Top 10 for LLM Applications. Map your controls to these categories before any compliance audit.

“Zero Trust is not a product; it is the discipline of assuming breach and verifying everything, including your own inference services.”

Frequently Asked Questions

How do I secure a deep learning API from model extraction attacks?

Apply Redis-backed rate limiting at the API gateway to cap query volume per key. Combine this with output perturbation: add small calibrated noise to responses to degrade the signal an attacker can use to reconstruct your model. Monitor for abnormal query diversity patterns in your SIEM and rotate API keys automatically on a fixed schedule.
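Output perturbation can be as simple as adding small Gaussian noise to the returned probabilities and renormalising. This is a sketch under assumptions: the function name and the default `noise_scale` are hypothetical, and the scale needs tuning so that legitimate clients still see the correct top prediction.

```python
import random


def perturb_probabilities(probs, noise_scale=0.01, rng=None):
    """Add zero-mean Gaussian noise to each probability, then renormalise to sum to 1."""
    if rng is None:
        rng = random.Random()
    # Clamp to a tiny positive value so no probability becomes negative.
    noisy = [max(p + rng.gauss(0.0, noise_scale), 1e-9) for p in probs]
    total = sum(noisy)
    return [p / total for p in noisy]
```

Returning only the top label, or rounding confidences to one or two decimal places, are coarser alternatives that also reduce the information available per query.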

What authentication method is best for ML inference APIs?

Use short-lived JWTs (15–60 min) with OAuth 2.0 scopes for user-facing APIs. For service-to-service calls within your data platform, mutual TLS (mTLS) is preferable because no bearer token is exchanged that could be stolen. Store all keys and secrets in a dedicated vault such as HashiCorp Vault and never hardcode credentials in API client code.
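To show what the short lifetime means mechanically, the sketch below issues and verifies an HS256-signed JWT with a 15-minute expiry using only the standard library. It is for illustration only: the shared secret, claim values, and function names are hypothetical, OAuth 2.0 authorisation servers usually sign with asymmetric keys, and production code should use a maintained JWT library.

```python
import base64
import hashlib
import hmac
import json
import time


def _b64url(data):
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def _b64url_decode(text):
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def issue_token(secret, subject, scope, ttl_seconds=900, now=None):
    """Create an HS256 JWT that expires ttl_seconds after issue (default 15 minutes)."""
    issued = int(time.time() if now is None else now)
    header = {"alg": "HS256", "typ": "JWT"}
    claims = {"sub": subject, "scope": scope, "iat": issued, "exp": issued + ttl_seconds}
    signing_input = (_b64url(json.dumps(header).encode("utf-8")) + "."
                     + _b64url(json.dumps(claims).encode("utf-8")))
    signature = hmac.new(secret, signing_input.encode("ascii"), hashlib.sha256).digest()
    return signing_input + "." + _b64url(signature)


def verify_token(secret, token, now=None):
    """Return the claims if the signature is valid and the token is unexpired, else None."""
    try:
        header_b64, claims_b64, sig_b64 = token.split(".")
        signing_input = f"{header_b64}.{claims_b64}".encode("ascii")
        expected = hmac.new(secret, signing_input, hashlib.sha256).digest()
        if not hmac.compare_digest(expected, _b64url_decode(sig_b64)):
            return None
        header = json.loads(_b64url_decode(header_b64))
        claims = json.loads(_b64url_decode(claims_b64))
    except ValueError:  # malformed token, bad base64, or bad JSON
        return None
    if header.get("alg") != "HS256":
        return None  # never accept an unexpected algorithm
    current = time.time() if now is None else now
    if claims.get("exp", 0) <= current:
        return None  # expired
    return claims
```

A stolen token is useful only until its `exp` time passes, which is why the lifetime is kept to minutes.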

How do adversarial inputs threaten deep learning APIs?

Adversarial inputs are carefully crafted payloads designed to trigger incorrect model outputs. Attackers submit slightly perturbed images or text to bypass classifiers or extract confidence scores. Defend by enforcing strict input schema validation at the gateway, deploying a secondary adversarial-input detector at the inference layer, and retraining your model periodically with adversarial examples.

What is the OWASP API Security Top 10 and why does it matter for AI?

The OWASP API Security Top 10 is the industry-standard risk framework for API vulnerabilities. For deep learning APIs, the most critical categories are Broken Object-Level Authorization and Unrestricted Resource Consumption (model extraction via query flooding). AI-specific threats such as prompt injection and agent manipulation are covered by the separate OWASP Top 10 for LLM Applications. Together they provide a compliance baseline accepted by auditors worldwide.

How do I detect API abuse targeting my ML model in real time?

Deploy a SIEM with ML-based anomaly detection that baselines normal query patterns per API key. Configure alerts for sudden increases in request volume, high entropy in input payloads, unusually large response payloads, and geographic anomalies. Automate incident-response playbooks to throttle or block suspicious keys within seconds, not minutes.
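The payload-entropy signal mentioned above can be computed with Shannon entropy over the request bytes. In this sketch the helper names and the threshold of 7.5 bits per byte are hypothetical; compressed images and similar inputs are naturally high-entropy, so the threshold only makes sense relative to each endpoint’s normal traffic.

```python
import math
from collections import Counter


def shannon_entropy(payload):
    """Entropy of a bytes payload in bits per byte (0.0 to 8.0)."""
    if not payload:
        return 0.0
    total = len(payload)
    counts = Counter(payload)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def is_high_entropy(payload, threshold=7.5):
    # Hypothetical threshold; tune against a per-endpoint baseline.
    return shannon_entropy(payload) >= threshold
```

A constant payload scores 0 bits per byte, while a payload that uses every byte value equally often scores the maximum of 8.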

Three Principles That Must Drive Your API Security Strategy

Every CTO and engineering lead should carry forward three insights. First, deep learning APIs expand the attack surface in qualitatively new ways; model theft, adversarial manipulation, and training-data poisoning are threats that traditional API security programmes were never designed to address. Second, layered controls beat single-control solutions: a JWT gate without rate limiting is vulnerable to model extraction; rate limiting without adversarial-input detection leaves the model itself exposed. Third, observability is not optional; a control you cannot monitor is a control you cannot trust.

The question is not whether your deep learning APIs will be targeted. The question is whether your security architecture will detect and contain that targeting before it becomes a breach. Which of the four layers in your current stack would fail first?