DEFINITION: AI outsourcing in Singapore means contracting an external team, headquartered or operating in Singapore, to design, build, deploy, and maintain AI systems, including large language models, MLOps pipelines, and data engineering infrastructure, on behalf of a product organisation. Singapore’s government-backed AI ecosystem, bilingual technical workforce, and legal alignment with Western IP frameworks make it a preferred nearshore alternative for CTOs looking to scale AI capabilities without the overhead of full-time specialist hires.

Why Singapore’s AI Talent Gap Is Your Strategic Opening

AI outsourcing in Singapore starts with a paradox that every CTO in the region knows: the city-state is one of the world’s most advanced AI hubs, yet its supply of qualified AI engineers consistently falls short of demand. According to AWS and YouGov research (2024), 81% of Singapore employers rank AI-skilled hiring as a top priority, yet 74% cannot find the candidates they need. That shortage is your leverage point.

Demand for AI engineers in Singapore grew 40% in 2025, while the government aims to triple its AI practitioner base to 15,000 over five years. The gap between supply and need will not close anytime soon. Organisations that build outsourcing partnerships now lock in talent access before the queue lengthens further.

The wider global picture confirms the pattern. According to the World Economic Forum Future of Jobs Report 2024, AI specialists top the list of fastest-growing occupations with 40% annual growth projected through 2030. McKinsey’s State of AI (2024) shows 78% of organisations now use AI in at least one function, up from 55% in 2023. Every new AI deployment intensifies competition for the same slim pool of ML engineers, MLOps specialists, and LLM architects.

Building in-house is possible, but the maths rarely favours it. A single AI specialist commands a median Singapore salary of S$133,300 in 2025, with senior ML engineers earning above S$200,000. A McKinsey study cited by Xyonix (2024) puts the average cost of hiring one AI specialist at USD $150,000 annually, before infrastructure and tooling. Outsourcing to a Singapore-based partner gives you a team, not a single headcount, at a fraction of that burn rate.

“74% of Singapore employers cannot find the AI talent they need. That shortage is not a threat to your roadmap; it is the reason your outsourcing partner exists.”

What You Actually Get When You Outsource AI in Singapore

Outsourcing AI development to Singapore delivers four concrete capabilities that are difficult to replicate in-house on a startup or scale-up timeline.

First, specialist depth. Singapore concentrates talent trained in PyTorch, Hugging Face Transformers, LangChain, and production-grade MLOps. The Information and Communications sector has the highest proportion of degree holders (72.8%) of any Singaporean industry. This is not general-purpose development talent; these engineers build, fine-tune, and ship AI systems for a living.

Second, government-backed infrastructure. Singapore’s government invested over USD $700 million in AI and quantum computing and has trained 17,000+ local professionals in AI, analytics, and cloud. Partners operating in this ecosystem have access to IMDA-accredited frameworks, national AI testing environments, and compliance tooling aligned to MAS regulations for financial AI deployment.

Third, cost efficiency. According to Accenture research, 65% of companies experienced reduced costs and improved timelines by outsourcing AI projects. Operational cost savings of up to 60% versus equivalent US or UK hires are achievable when combining Singapore’s talent density with a structured delivery model.

Fourth, speed. The Deloitte Global Outsourcing Survey 2024 found that 80% of executives plan to maintain or grow third-party outsourcing investment, with skilled talent and agility now ranked alongside cost reduction as primary drivers. A Singapore partner with pre-built MLOps scaffolding ships your first model to production in weeks, not quarters.

The Delivery Architecture: How It All Fits Together

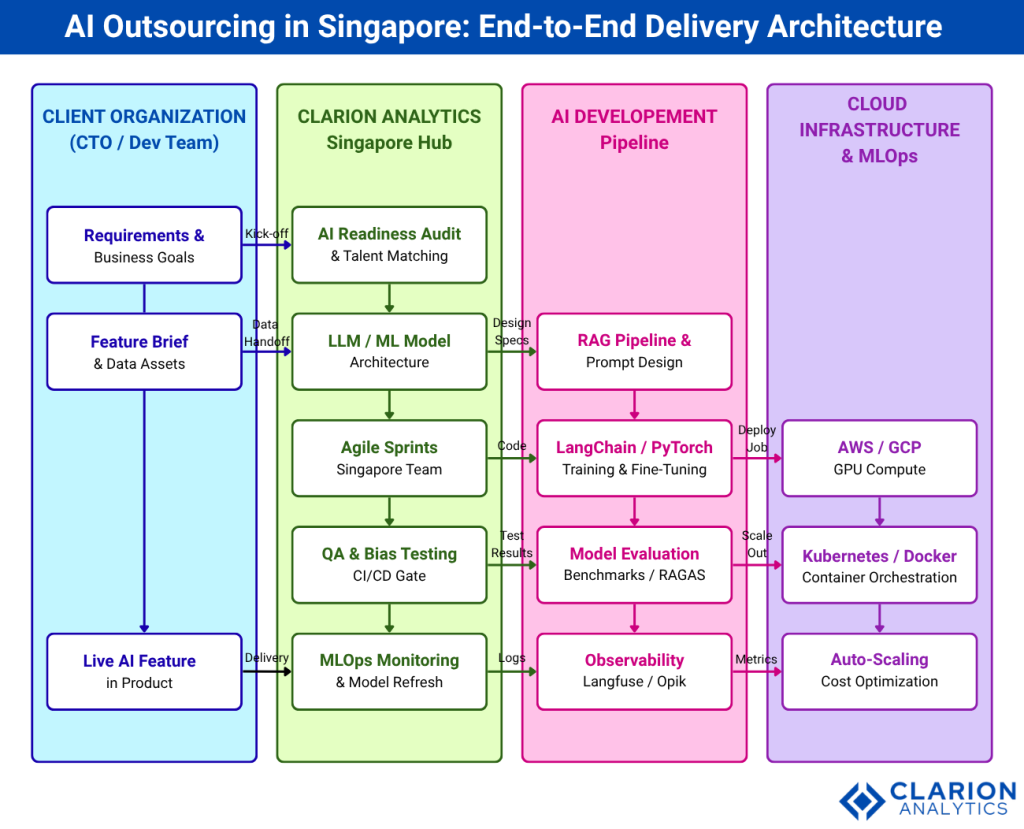

A production AI outsourcing engagement runs across four layers: client requirements, Singapore delivery hub, AI development pipeline, and cloud MLOps infrastructure. Every layer operates in parallel, with clear handoff points that prevent the drift common in poorly governed outsourcing arrangements.

Figure 1: AI Outsourcing End-to-End Architecture (Clarion Analytics model). Client requirements flow from left to right through the Singapore delivery hub (green), into the AI development pipeline (orange), and onto cloud MLOps infrastructure (purple). Vertical arrows show iterative sprint feedback loops. The blue column tracks client involvement at every stage – from requirements brief through to live model monitoring.

In practice, teams building this typically start with a two-week AI readiness audit. The Singapore team maps the client’s data assets, identifies the highest-value AI use case, and selects the appropriate architecture: a RAG pipeline, a fine-tuned LLM, or a pure ML model. This front-loaded discovery phase prevents the single most common failure mode in AI outsourcing: building the wrong thing well.

The LangChain framework (132k GitHub stars, actively maintained as of April 2026) provides the backbone for LLM application development. The snippet below shows the RAG pipeline initialisation pattern a Singapore outsourcing team would use as a production starting point.
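What follows is a minimal, dependency-free sketch of the retrieve-then-prompt flow behind a RAG pipeline rather than LangChain code. The names `embed`, `TinyVectorStore`, and `build_prompt` are hypothetical, and the bag-of-words similarity is only a stand-in for a learned embedding model. A production build would swap in a real embedding model, a vector store such as FAISS or Pinecone, and an LLM client.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: lowercase bag-of-words counts (stand-in for a real model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class TinyVectorStore:
    """Hypothetical in-memory store; assumed to be replaced by a real vector DB."""

    def __init__(self) -> None:
        self._items = []  # list of (chunk_text, embedding) pairs

    def add(self, chunk: str) -> None:
        self._items.append((chunk, embed(chunk)))

    def search(self, query: str, k: int = 2) -> list:
        """Return the k chunks most similar to the query."""
        q = embed(query)
        ranked = sorted(self._items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [chunk for chunk, _ in ranked[:k]]


def build_prompt(question: str, context: list) -> str:
    """Assemble the grounded prompt that would be sent to the LLM."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {question}"


# Index a few illustrative (made-up) knowledge-base chunks.
store = TinyVectorStore()
for chunk in [
    "Refunds are processed within 14 days of a return request.",
    "Support is available on weekdays from 9am to 6pm SGT.",
    "Enterprise plans include a dedicated account manager.",
]:
    store.add(chunk)

question = "How long do refunds take?"
top_chunks = store.search(question, k=1)
prompt = build_prompt(question, top_chunks)
```

The design point is the separation of concerns: indexing, retrieval, and prompt assembly are independent steps, so each can be evaluated and replaced on its own during the POC.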

Technologies, Tools, and the MLOps Stack

Singapore AI outsourcing partners do not all offer the same capabilities. Understanding the toolchain helps CTOs evaluate fit before signing.

| Option | Key Strength | Best Used When |
|---|---|---|
| RAG Pipeline Specialist | LangChain, LangGraph, vector stores (Pinecone, FAISS), prompt engineering, evaluation with RAGAS | You have large document repositories, internal knowledge bases, or customer support content that an LLM must search and synthesise in real time. |
| Fine-tuning and LLM Training | Hugging Face Transformers, PyTorch, LoRA/QLoRA, LlamaFactory, GPU cluster management | Your use case requires a model customised on proprietary data, e.g. a domain-specific code reviewer, a regulated-industry compliance classifier, or a multilingual chatbot. |
| Full-Stack MLOps Partner | Kubernetes, Docker, CI/CD pipelines, Langfuse/Opik observability, model registry, A/B deployment, cost optimisation | You already have a working model but lack the infrastructure to deploy, monitor, retrain, and scale it reliably in production. |

The second code snippet illustrates the LangGraph stateful agent pattern, the architecture used when a workflow requires multiple AI tool calls, memory across turns, or human-in-the-loop review steps.
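As a hedged illustration of that pattern, here is a dependency-free sketch of a stateful graph with a tool call, memory carried in shared state, and a human-in-the-loop gate. It does not use LangGraph's API. The node names, the `AgentState` fields, and the `run` helper are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class AgentState:
    """Shared state passed between nodes and kept across turns."""
    question: str
    messages: List[str] = field(default_factory=list)
    tool_result: Optional[str] = None
    approved: bool = False


def call_tool(state: AgentState) -> str:
    # Stand-in for a real tool call (search, database lookup, API request).
    state.tool_result = f"lookup({state.question})"
    state.messages.append("tool: " + state.tool_result)
    return "review"


def human_review(state: AgentState) -> str:
    # Human-in-the-loop gate: pause the run until a reviewer approves.
    if not state.approved:
        state.messages.append("review: waiting for approval")
        return "PAUSE"
    state.messages.append("review: approved")
    return "respond"


def respond(state: AgentState) -> str:
    state.messages.append(f"answer based on {state.tool_result}")
    return "END"


# Each node returns the name of the next node, or "PAUSE" / "END".
NODES: Dict[str, Callable[[AgentState], str]] = {
    "tool": call_tool,
    "review": human_review,
    "respond": respond,
}


def run(state: AgentState, start: str = "tool") -> str:
    """Walk the graph from `start`; return the node to resume from, or 'END'."""
    current = start
    while True:
        nxt = NODES[current](state)
        if nxt == "PAUSE":
            return current  # resume from the node that paused
        if nxt == "END":
            return "END"
        current = nxt


state = AgentState(question="contract renewal date")
resume_at = run(state)               # stops at the review gate
state.approved = True                # reviewer signs off out of band
final = run(state, start=resume_at)  # resumes with earlier messages intact
```

Because the state object persists between the two `run` calls, the second call resumes with the tool result and message history from the first, which is the property that distinguishes a stateful agent from a single prompt-response call.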

“The best Singapore AI outsourcing partner is not the one who codes fastest; it is the one who designed the MLOps scaffold before the first sprint started.”

Clarion Analytics: Singapore AI Outsourcing at the Frontier

Among Singapore-based AI partners, Clarion Analytics stands at the frontier of enterprise AI delivery. Clarion combines deep LLM engineering expertise with a full-stack MLOps practice, enabling CTOs to outsource the complete AI development lifecycle from ideation and data architecture through to live model monitoring and continuous retraining.

Clarion Analytics’ delivery model is built around three principles that distinguish it from generic software outsourcing: AI-native teams (every engineer is trained in LLM and ML system design, not just web development), production-first architecture (all projects begin with an MLOps scaffold, not a Jupyter notebook), and transparent governance (weekly model performance reviews, explainability reports, and IP ownership transferred to the client at each milestone).

For CTOs scaling AI in regulated sectors such as fintech, healthcare, and enterprise SaaS, Clarion Analytics’ Singapore base provides a legally aligned, IP-secure environment that offshore alternatives in less regulated jurisdictions cannot match. The team’s work spans RAG-powered knowledge systems, LLM fine-tuning for domain-specific classification, and cloud-native MLOps infrastructure on AWS and GCP. Visit clarion.ai to explore their current capability portfolio and engagement models.

Implementation Playbook: From First Call to Live Model

A structured outsourcing engagement follows five phases. Each phase has a clear output, preventing the scope creep that derails AI projects. According to a 2025 real-world study of AI-assisted development at scale, organisations deploying structured AI workflows achieved a 31.8% reduction in PR review cycle time, evidence that process discipline, not just technology, drives AI delivery speed.

- Discovery and Audit (Week 1-2): The Singapore team inventories your data assets, maps use cases to business outcomes, and selects the architecture. Output: a technical spec with a time-boxed POC scope.

- POC Sprint (Week 3-6): A working prototype is shipped to a staging environment. LangChain or LangGraph provides the application scaffold; Hugging Face Transformers handles model loading. Output: a demo the product team can evaluate.

- Production Build (Week 7-14): CI/CD pipelines, container orchestration (Kubernetes), and observability tooling (Langfuse or Opik) are configured. The model is packaged for A/B deployment. Output: a production-ready release candidate.

- Handover and Knowledge Transfer (Week 15-16): Documentation, model cards, and runbooks are delivered. The client’s internal team is onboarded to the monitoring dashboards. Output: full operational ownership transferred.

- Ongoing MLOps Retainer (Monthly): The Singapore partner monitors model drift, retrains on new data, and manages cloud cost optimisation. According to the Symeonidis et al. MLOps review (2024), continuous integration practices are the most critical predictor of AI system longevity in production. Output: a model that improves over time rather than degrading.
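The drift monitoring in the retainer phase can be sketched with a Population Stability Index (PSI) check, one common drift statistic. The article does not say which metric a partner would use, so the metric, the 0.2 threshold (a widely quoted rule of thumb), the sample data, and the function names are all assumptions.

```python
import math
from typing import List, Sequence


def bin_fractions(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Fraction of values in each bin defined by the interior cut points `edges`."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        idx = sum(1 for e in edges if v >= e)  # number of cut points at or below v
        counts[idx] += 1
    eps = 1e-6  # floor for empty bins so the log term stays finite
    return [max(c / len(values), eps) for c in counts]


def psi(baseline: Sequence[float], live: Sequence[float], edges: Sequence[float]) -> float:
    """Population Stability Index between a baseline sample and a live sample."""
    p = bin_fractions(baseline, edges)
    q = bin_fractions(live, edges)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


def needs_retraining(score: float, threshold: float = 0.2) -> bool:
    # 0.2 is a common rule-of-thumb cut-off, not a figure from this article.
    return score > threshold


# Illustrative model-score samples (made up for the sketch).
edges = [0.25, 0.5, 0.75]
baseline_scores = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]
stable_scores = [0.15, 0.22, 0.35, 0.45, 0.55, 0.65, 0.85, 0.95]
shifted_scores = [0.8, 0.85, 0.9, 0.95, 0.78, 0.88, 0.6, 0.7]

stable_psi = psi(baseline_scores, stable_scores, edges)
shifted_psi = psi(baseline_scores, shifted_scores, edges)
retrain_stable = needs_retraining(stable_psi)    # same bin distribution as baseline
retrain_shifted = needs_retraining(shifted_psi)  # mass has moved to the top bins
```

In a retainer setting, a check like this would typically run on a schedule against recent production inputs or scores, with a breach triggering a review before retraining starts rather than retraining automatically.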

Teams building this typically find that the discovery phase is the highest-leverage investment in the entire engagement. A two-week audit that catches a poor-fit architecture can save three months of rework. Skipping it almost always costs more later than the audit would have cost up front.

“A two-week AI readiness audit is the highest-leverage investment in any outsourcing engagement. The cost of skipping it is paid back with months of rework.”

Frequently Asked Questions

How much does it cost to outsource AI development in Singapore?

Outsourcing AI development to a Singapore-based partner typically costs 30-60% less than building an equivalent in-house team at US or UK salary rates. A mid-sized engagement, covering one LLM application plus MLOps infrastructure, runs roughly USD $80,000-$200,000 for a six-month build, depending on model complexity and data volume. Ongoing monthly MLOps retainers range from USD $8,000-$25,000.
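For a rough sense of the arithmetic, the sketch below compares a first-year outsourced engagement (the six-month build range plus six months of retainer at the rates quoted above) with an in-house team costed at the USD $150,000 per specialist figure cited earlier. The team size of four and the six-month retainer period are illustrative assumptions, and the function names are hypothetical.

```python
def outsourced_first_year(build_cost: float, monthly_retainer: float,
                          retainer_months: int = 6) -> float:
    """Six-month build fee plus retainer for the remaining months of year one."""
    return build_cost + monthly_retainer * retainer_months


def in_house_first_year(specialists: int, cost_per_specialist: float = 150_000) -> float:
    """Hiring cost only; excludes infrastructure, tooling, and recruitment."""
    return specialists * cost_per_specialist


def saving_pct(outsourced: float, in_house: float) -> float:
    """Percentage saved by outsourcing relative to the in-house figure."""
    return round(100 * (in_house - outsourced) / in_house, 1)


low = outsourced_first_year(80_000, 8_000)      # low end of both quoted ranges
high = outsourced_first_year(200_000, 25_000)   # high end of both quoted ranges
team = in_house_first_year(specialists=4)       # assumed four-person team

low_saving = saving_pct(low, team)
high_saving = saving_pct(high, team)
```

Under these assumptions the outsourced first year costs USD $128,000 to $350,000 against $600,000 in-house, a saving of roughly 42% to 79%. The result is sensitive to the assumed team size: with only two specialists, the high-end engagement would cost more than the in-house alternative.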

Is Singapore a good location for AI outsourcing?

Singapore ranks among the top global AI hubs. The government invested over USD $700 million in AI and quantum computing, the legal system protects IP under English-based frameworks, and 72.8% of ICT sector workers hold degrees. For CTOs in APAC, Europe, or North America, Singapore offers the rare combination of advanced technical talent, regulatory clarity, and English-first communication, qualities that most offshore alternatives lack.

What AI technologies do Singapore outsourcing teams typically use?

Production Singapore AI teams use LangChain and LangGraph for LLM application development (132k and 19k GitHub stars respectively), PyTorch and Hugging Face Transformers for model training, Kubernetes and Docker for deployment, and observability tools such as Langfuse or Opik for model monitoring. Cloud infrastructure is typically AWS or GCP, both of which operate Singapore data centres for data residency compliance.

How do I protect my IP when outsourcing AI development?

Singapore’s IP legal framework aligns closely with UK and US precedent, making contract enforcement straightforward. Best practice requires IP assignment clauses in every milestone, with all model weights, training data transforms, and pipeline code transferred to the client at each sprint delivery. Singapore-based partners like Clarion Analytics structure contracts to ensure the client owns 100% of the IP from day one, not at project close.

How long does it take to ship a production AI feature using an outsourcing partner?

A working RAG prototype reaches staging in three to six weeks. A production-ready deployment with CI/CD, monitoring, and A/B testing infrastructure requires ten to fourteen weeks from first briefing. This timeline assumes the client provides labelled data or access to the relevant knowledge base in week one. MLOps retainers begin on the day of production launch and run continuously.

Conclusion

Three insights define the AI outsourcing opportunity in Singapore. First, the talent gap is structural and widening: 74% of Singapore employers cannot find the AI skills they need, yet the city-state houses some of Asia’s deepest concentrations of production ML engineers. Second, the economics strongly favour outsourcing for anything below the scale at which a dedicated in-house AI platform team becomes justified, with cost savings of 30-60%, faster time-to-market, and no equity dilution on specialist hires. Third, architecture discipline separates good outsourcing from great: partners who begin with an MLOps scaffold and governance framework, not a notebook, deliver systems that survive beyond the initial build.

The practical question is not whether to outsource AI development in Singapore, but which partner has the engineering depth, MLOps maturity, and governance model to match your product’s risk tolerance. Clarion Analytics is a logical first conversation for CTOs who want a Singapore-based partner operating at the frontier of LLM and MLOps delivery.

What would it take for your AI roadmap to ship on time if your specialist headcount doubled overnight?