AI vehicle claim management automation refers to the use of machine learning, computer vision, and natural language processing to handle every stage of the auto insurance claims lifecycle, from first notice of loss through damage assessment, fraud scoring, and settlement, with minimal human intervention. Modern systems combine image analysis models, NLP-driven document processing, and graph-based fraud detection into a single orchestrated pipeline that connects to existing claims management systems via REST APIs.

Why Manual Claims Processing Is Costing Insurers Billions

AI vehicle claims automation is no longer an experimental investment. According to McKinsey (2025), AI-driven claims processing can reduce resolution time by 50 to 70 percent, in the best cases compressing what once took 30 days into under 8 days. For engineering teams at P&C carriers and insurtechs, the question has shifted from whether to automate to how to do it at production scale.

Manual claims processing carries structural defects that AI directly addresses. Error rates in manual assessments range from 7 to 12 percent of all claims, and standard claims cost $40 to $60 each to process by hand. Datagrid (2025) reports that the share of insurers with AI in full deployment jumped from 8 percent to 34 percent between 2024 and 2025 alone, a clear signal that the engineering community has moved past proof-of-concept.

The sections below unpack each layer of a production AI claims pipeline: what it does, the models involved, and how teams typically wire them together.

Standard claims processing costs fell 30 to 40 percent once AI pipelines replaced manual data entry, and that saving scales with every spike in claim volume.

How AI Claims Automation Actually Works: The Full Pipeline

An AI claims pipeline is not a single model. It is an orchestrated sequence of five specialised services (FNOL intake, document extraction, damage assessment, fraud scoring, and settlement routing), each powered by dedicated ML models that pass structured outputs downstream.
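The hand-off between stages can be sketched as a list of functions that each enrich one structured record. This is a minimal illustration only: the `Claim` fields, the stage function names, and the stubbed scores are hypothetical placeholders, and in a real deployment each stage would call its own model service.

```python
from dataclasses import dataclass, field


@dataclass
class Claim:
    """Structured record that each stage enriches and passes downstream."""
    claim_id: str
    raw_text: str
    fields: dict = field(default_factory=dict)
    trace: list = field(default_factory=list)  # stage names, in execution order


def fnol_intake(claim):
    claim.fields["channel"] = "mobile"  # placeholder for real intake metadata
    return claim


def document_extraction(claim):
    claim.fields["narrative_length"] = len(claim.raw_text)
    return claim


def damage_assessment(claim):
    claim.fields["damage_confidence"] = 0.91  # stub for a vision model score
    return claim


def fraud_scoring(claim):
    claim.fields["fraud_proba"] = 0.04  # stub for a classifier score
    return claim


def settlement_routing(claim):
    # Illustrative cut-offs only; real thresholds are a business decision.
    ok = claim.fields["damage_confidence"] >= 0.85 and claim.fields["fraud_proba"] < 0.5
    claim.fields["route"] = "auto_settlement" if ok else "adjuster_queue"
    return claim


# Order matters: later stages read fields written by earlier ones.
PIPELINE = [fnol_intake, document_extraction, damage_assessment,
            fraud_scoring, settlement_routing]


def run_pipeline(claim):
    for stage in PIPELINE:
        claim = stage(claim)
        claim.trace.append(stage.__name__)
    return claim
```

Because every stage has the same signature, one model can be replaced by swapping a single entry in `PIPELINE` without touching its neighbours.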

McKinsey’s July 2025 analysis describes how UK insurer Aviva deployed more than 80 AI models across its claims domain, cutting complex liability assessment time by 23 days and reducing customer complaints by 65 percent, all while saving £60 million ($82 million) in its motor claims domain. Aviva’s architecture is the clearest public example of what a full pipeline looks like at enterprise scale.

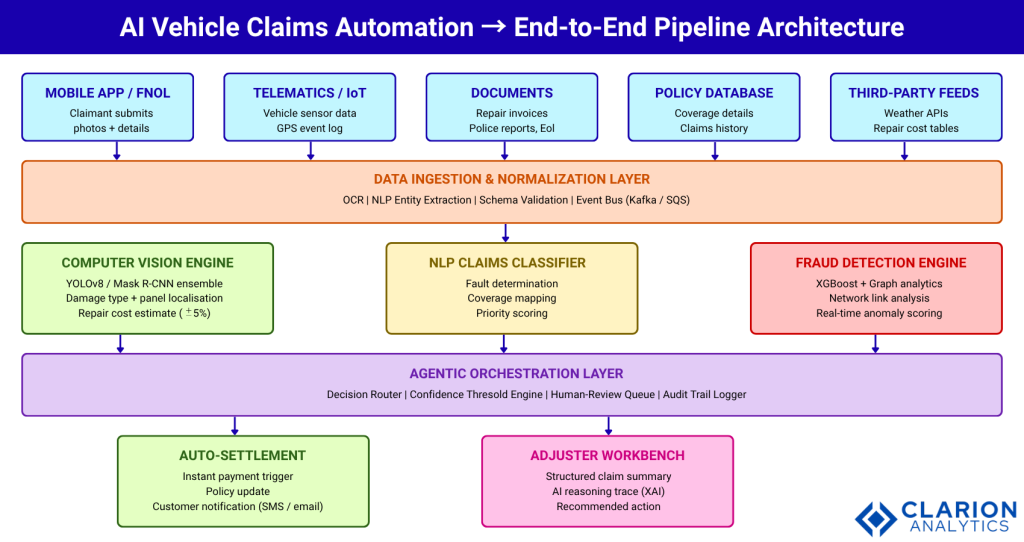

Figure 1: End-to-End AI Vehicle Claims Automation Pipeline Architecture. Inputs from mobile FNOL, telematics, and documents enter a normalisation layer powered by OCR and NLP. Three parallel AI engines (computer vision, NLP classification, and fraud detection) process claims simultaneously before an agentic orchestration layer routes decisions to auto-settlement or an adjuster workbench. Each component exposes REST APIs, allowing teams to swap individual models without re-architecting the full system. All decisions are logged with reasoning traces for regulatory audit.

Computer Vision for Vehicle Damage Assessment

Computer vision is the highest-impact module in the claims pipeline. It replaces the physical inspection step, the one that historically took days to schedule, with a near-instant automated assessment triggered the moment a claimant uploads photos through a mobile app.

Modern implementations use ensemble architectures. A 2024 paper in Applied Sciences (MDPI) demonstrated that an ensemble of 10 YOLOv5 detectors outperforms single-model approaches on both accuracy and inference speed, scaling to thousands of assessments per minute. The CarDD dataset (Wang et al., 2023) published in IEEE Transactions on Intelligent Transportation Systems provides the primary public benchmark for training and evaluating these models.

In practice, teams building this typically find that damage localisation (identifying which panels are affected) is more useful than simple classification (dent vs. scratch) because it feeds directly into repair cost estimation. A PwC case study (2024) confirmed this: the insurer’s first model classified damage, the second localised it, and the third estimated cost. Separating those concerns improved trust with adjusters and made explainability tractable.
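To show why localised output feeds cost estimation so directly, here is a small sketch of the third stage consuming the first two. The `Detection` record, the panel names, and every figure in `REPAIR_RATES` are invented for illustration; a carrier would substitute its own parts-and-labour pricing data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """One localised finding: which panel, what kind of damage, how sure."""
    panel: str
    damage_type: str
    confidence: float


# Hypothetical repair rates per (panel, damage type).
REPAIR_RATES = {
    ("front_bumper", "dent"): 450.0,
    ("front_bumper", "scratch"): 180.0,
    ("left_door", "dent"): 600.0,
    ("left_door", "scratch"): 220.0,
}


def estimate_cost(detections, min_confidence=0.5):
    """Sum line-item costs and keep the per-panel breakdown for explainability."""
    line_items = []
    unpriced = []
    for det in detections:
        if det.confidence < min_confidence:
            continue  # too uncertain to price
        rate = REPAIR_RATES.get((det.panel, det.damage_type))
        if rate is None:
            unpriced.append(det)  # needs an adjuster to price by hand
        else:
            line_items.append((det.panel, det.damage_type, rate))
    total = sum(rate for _, _, rate in line_items)
    return total, line_items, unpriced
```

Returning the line items alongside the total is what lets an adjuster see which panel drove which part of the estimate.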

Separating damage classification, panel localisation, and cost estimation into three discrete models is the architectural pattern that earns adjuster trust and regulatory compliance.

Code Snippet 1 – Mask R-CNN Training Invocation. Source: louisyuzhe/car-damage-detector, custom.py

This snippet illustrates the three training entry points engineering teams use when adapting Mask R-CNN to proprietary vehicle damage datasets. Starting from COCO weights is typically the fastest path to production-quality accuracy. The last flag enables incremental retraining on newly closed claims, which is critical for preventing model drift in live deployments.

NLP and OCR for Intelligent Document Extraction

Every claim arrives with unstructured documents: police reports, repair invoices, medical bills, and written statements. NLP and OCR services transform that chaos into structured records that downstream models can reason over.

A Nordic insurer documented by Decerto (2025) automated 70 percent of its claims tasks using NLP to extract relevant data and prioritise claims by urgency, reducing processing time by 30 percent and operational costs by 20 percent. The system extracted fields like claim number, vehicle number, incident date, and type of loss from unstructured documents with no manual template setup.

The key engineering decision is whether to use a general-purpose large language model for extraction or a fine-tuned encoder model like a domain-specific BERT. In production, teams typically run a lightweight encoder for structured fields (policy number, dates, amounts) and fall back to an LLM call for ambiguous narrative content. This hybrid approach balances latency, cost, and accuracy across the document variety a real claims queue generates.
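The fast-path and fallback split can be sketched as follows. Note the substitution: regular expressions stand in here for the fine-tuned encoder, purely to keep the example dependency-free. The field patterns are hypothetical formats, and `llm_fallback` is an assumed callable that a team would implement against whichever LLM service it uses.

```python
import re

# Hypothetical patterns; real documents would need carrier-specific formats.
FIELD_PATTERNS = {
    "policy_number": re.compile(
        r"\bPolicy\s*(?:No\.?|Number)?\s*[:#]?\s*([A-Z]{2}\d{6})\b"
    ),
    "incident_date": re.compile(r"\b(\d{4}-\d{2}-\d{2})\b"),
    "amount": re.compile(r"\$\s?(\d[\d,]*(?:\.\d{2})?)"),
}


def extract_fields(text, llm_fallback=None):
    """Return (found_fields, fields_missed_by_fast_path)."""
    found = {}
    for name, pattern in FIELD_PATTERNS.items():
        match = pattern.search(text)
        if match:
            found[name] = match.group(1)

    missing = [name for name in FIELD_PATTERNS if name not in found]
    if missing and llm_fallback is not None:
        # Only the unresolved fields go to the slower, costlier path.
        for name, value in llm_fallback(text, missing).items():
            if name in missing:  # ignore anything we did not ask for
                found[name] = value
    return found, missing
```

Returning the list of fields the fast path missed makes it easy to monitor how often the fallback fires, which is the number that drives latency and cost.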

AI-Powered Fraud Detection at Scale

Insurance fraud is commonly estimated to cost about $308 billion a year in the US across all lines of insurance, auto included. AI changes the detection equation by analysing patterns across millions of claims simultaneously, patterns no human adjuster team could spot at speed.

The most effective production architectures layer two distinct model types: a gradient-boosted classifier (typically XGBoost) that scores structured claim features, and a graph analytics engine that maps relationships between claimants, repair shops, and legal representatives to identify fraud rings. The Car.ly repository (abhisheks008, 2023) demonstrates this combined approach with open-source tooling.
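The graph side of that pairing can be illustrated with a connected-components pass: claimants who share a repair shop or a legal representative end up in the same cluster. This is a simplified sketch using a union-find structure, not the approach of any particular product or of the repository cited above; the tuple layout and the `min_claimants` cut-off are assumptions.

```python
from collections import defaultdict


def find_rings(claims, min_claimants=3):
    """Cluster claimants linked through shared shops or attorneys.

    `claims` is an iterable of (claimant, repair_shop, attorney) tuples.
    Returns sorted lists of claimants for clusters at or above the cut-off.
    """
    parent = {}

    def find(node):
        parent.setdefault(node, node)
        while parent[node] != node:
            parent[node] = parent[parent[node]]  # path halving
            node = parent[node]
        return node

    def union(a, b):
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    for claimant, shop, attorney in claims:
        # Prefix each node with its type so a shop and a person never collide.
        union(("claimant", claimant), ("shop", shop))
        union(("claimant", claimant), ("attorney", attorney))

    clusters = defaultdict(set)
    for node in list(parent):
        if node[0] == "claimant":
            clusters[find(node)].add(node[1])

    rings = [sorted(m) for m in clusters.values() if len(m) >= min_claimants]
    return sorted(rings, key=lambda ring: ring[0])
```

A cluster is only a candidate for review: a busy, legitimate repair shop will link many honest claimants, so a production system would weight edges or combine the cluster with the classifier score before flagging anything.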

Code Snippet 2 – XGBoost Fraud Classification Pipeline. Source: abhisheks008/Car.ly – Vehicle Insurance Claim Fraud Detection

This snippet shows the core fraud classification pipeline that feeds a claims routing engine. Note the scale_pos_weight parameter: fraud datasets are highly imbalanced (roughly 2-5% fraud rate), and this parameter directly compensates for that. In production, the output fraud_proba score is passed to the orchestration layer, which flags claims above a threshold for adjuster review rather than auto-settlement.
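As a separate illustration, not taken from the repository, the value of scale_pos_weight is commonly derived from the training labels as the ratio of negative to positive examples, which is the heuristic the XGBoost documentation suggests. The helper name `xgb_params_for` is hypothetical, and the other parameter choices are assumptions.

```python
def xgb_params_for(labels, base_params=None):
    """Build an XGBoost parameter dict with scale_pos_weight = negatives / positives.

    `labels` holds 1 for fraud and 0 for legitimate claims.
    """
    positives = sum(1 for y in labels if y == 1)
    negatives = sum(1 for y in labels if y == 0)
    if positives == 0:
        raise ValueError("no fraud examples in training labels")

    # Assumed defaults: a binary objective and a precision-recall metric,
    # which tends to be more informative than accuracy on imbalanced data.
    params = {"objective": "binary:logistic", "eval_metric": "aucpr"}
    params.update(base_params or {})
    params["scale_pos_weight"] = negatives / positives
    return params
```

With a 4 percent fraud rate the ratio comes out at 24, meaning each fraud example counts roughly as much as 24 legitimate ones during training. In recent XGBoost versions the resulting dict could be passed as keyword arguments to `xgboost.XGBClassifier`.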

Agentic AI and the Touchless Claims Workflow

The latest development in auto insurance AI claims processing is agentic orchestration: systems that autonomously coordinate multiple AI services to complete an end-to-end workflow without human intervention for routine claims.

Lemonade’s AI system handles roughly 40 percent of claims instantly, with the fastest settled in under 3 seconds. McKinsey (2025) projects that agentic AI, which accounted for 21 percent of public insurance AI deployments in Q4 2025, will become the dominant architecture for claims management as model reliability improves.

The agentic layer’s job is decision routing: it reads the outputs from the computer vision, NLP, and fraud modules, applies confidence thresholds, and either triggers an auto-settlement payment or escalates to an adjuster workbench with a structured summary and reasoning trace. This trace is what makes the system defensible under insurance regulations that require explainable AI decisions.
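The routing rule described above can be sketched in a few lines. The function name, the three input scores, and the 0.30 fraud cut-off are assumptions for illustration; the 0.85 confidence gate mirrors the figure used in the implementation guidance later in this article.

```python
from dataclasses import dataclass, field


@dataclass
class Decision:
    route: str  # "auto_settlement" or "adjuster_workbench"
    trace: list = field(default_factory=list)  # one human-readable line per check


def route_claim(damage_conf, extraction_conf, fraud_proba,
                min_conf=0.85, max_fraud=0.30):
    """Apply every threshold, record each outcome, and pick a route."""
    checks = [
        ("damage_confidence", damage_conf, ">=", min_conf, damage_conf >= min_conf),
        ("extraction_confidence", extraction_conf, ">=", min_conf,
         extraction_conf >= min_conf),
        ("fraud_proba", fraud_proba, "<", max_fraud, fraud_proba < max_fraud),
    ]
    trace = [
        f"{name}={value:.2f} {op} {limit:.2f}: {'pass' if passed else 'fail'}"
        for name, value, op, limit, passed in checks
    ]
    # A single failed check is enough to send the claim to a human.
    all_passed = all(passed for *_, passed in checks)
    route = "auto_settlement" if all_passed else "adjuster_workbench"
    return Decision(route=route, trace=trace)
```

Every check is evaluated and logged even after one fails, so the adjuster sees the full picture rather than only the first reason for escalation.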

The agentic orchestration layer is not the AI; it is the trust layer. Without confidence thresholds and explainable reasoning traces, regulators and adjusters will reject the system regardless of accuracy.

Comparing Automation Approaches: Which Architecture Fits?

Not every carrier needs the same solution. The right architecture depends on claim volume, document complexity, and regulatory environment.

| Approach | Key Strength | Best Used When |
|---|---|---|
| Rule-Based Automation (RPA) | Predictable, fully auditable, easy to govern and roll back | Claims follow rigid structured formats; no image analysis required; regulatory environment requires total transparency |
| ML + Computer Vision Pipeline | High accuracy on damage photos; scales to thousands of assessments per minute; integrates with mobile FNOL | High-volume collision or comprehensive claims where images are submitted; carrier wants 30-40% cost reduction and faster resolution |
| Agentic AI (LLM-Orchestrated) | Handles unstructured inputs and multi-step reasoning; communicates with customers; routes complex edge cases dynamically | Complex claims requiring document synthesis, adjuster coordination, and dynamic customer dialogue; carrier has mature data infrastructure |

Implementation Guidance: Building Your First AI Claims Pipeline

Start with FNOL automation and computer vision damage triage as your minimum viable pipeline. Both problems have strong open-source foundations, clear input-output contracts, and measurable business outcomes that justify continued investment.

Use pretrained YOLO or Mask R-CNN weights fine-tuned on your own closed claims image dataset. You need roughly 5,000 labelled damage images to achieve production-grade accuracy; below that, transfer learning from the public CarDD or TQVCD datasets closes the gap. Gate every model decision with a confidence threshold: claims above 0.85 confidence route to auto-settlement; everything else goes to the adjuster queue. This hybrid approach lets you ship value immediately while the model improves on live data.

KPMG research cited by Vega IT (2025) shows that insurers with unified data platforms and disciplined governance scale AI with fewer failures. Before deploying models, establish a shared claims data pipeline that all AI services consume, rather than separate data contracts per team. This single investment reduces deployment time from months to weeks once you start shipping additional models.

Build the explainability layer from day one, not as an afterthought. Every auto-settlement decision should produce a reasoning trace: which damage areas triggered what cost estimate, what fraud signals were checked, and which policy terms applied. That trace is your compliance asset and your adjuster training tool simultaneously.

Build the explainability layer from day one: it is your compliance asset, your adjuster training tool, and the only thing standing between a rejected pilot and a production rollout.
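One way to make the reasoning trace concrete is to treat it as a small, serialisable record written at decision time. The sketch below covers the three elements named above (damage areas and their estimates, fraud signals checked, policy terms applied); the function names and field names are hypothetical, not a regulatory schema.

```python
import json
from datetime import datetime, timezone


def build_trace(claim_id, damage_items, fraud_signals, policy_terms, decided_at=None):
    """Assemble one audit record for an auto-settlement decision.

    `damage_items` is a list of (panel, estimate) pairs.
    """
    decided_at = decided_at or datetime.now(timezone.utc)
    return {
        "claim_id": claim_id,
        "decided_at": decided_at.isoformat(),
        "damage": [{"panel": p, "estimate": e} for p, e in damage_items],
        "estimated_total": sum(e for _, e in damage_items),
        "fraud_signals_checked": sorted(fraud_signals),
        "policy_terms_applied": list(policy_terms),
    }


def to_audit_line(trace):
    """Serialise with sorted keys so identical decisions give identical log lines."""
    return json.dumps(trace, sort_keys=True, separators=(",", ":"))
```

Writing one such line per decision to append-only storage gives compliance teams something they can query, and gives adjusters worked examples to learn from.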

Frequently Asked Questions

How accurate is AI vehicle damage assessment compared to human adjusters?

AI systems achieve over 95 percent accuracy in damage assessment, matching or exceeding human adjusters in most standard cases, according to industry benchmarks (2025). Computer vision models process damage photos in seconds with expert-level precision. Complex or unusual claims, such as multi-vehicle pile-ups or highly modified vehicles, still benefit from human oversight, which is why leading implementations use AI to augment adjusters rather than replace them entirely.

What data does an AI claims pipeline need to get started?

The minimum viable dataset is 5,000 labelled vehicle damage images for the computer vision module, plus 12 months of structured claims records (incident type, severity, repair cost, fraud outcome) for the fraud detection model. Public datasets like CarDD (IEEE, 2023) and TQVCD (Heliyon, 2024) reduce the image labelling burden significantly for teams building their first model.

How does AI fraud detection in vehicle insurance actually work?

AI fraud detection typically layers two models: a gradient-boosted classifier (XGBoost or LightGBM) that scores individual claim attributes, and a graph analytics engine that maps relationships between claimants, repair shops, and attorneys to detect fraud ring patterns. The classifier flags suspicious features, such as multiple claims shortly after policy purchase or inflated repair estimates, while the graph layer identifies coordinated fraud that single-claim analysis would miss.

Can AI claims automation integrate with legacy policy management systems?

Yes, and REST API design makes this practical without a full system replacement. Each AI module (FNOL intake, computer vision, fraud scoring) exposes a standard API endpoint. The orchestration layer queries your existing policy database via API and posts structured outputs back to your claims management system. Teams at carriers using 20-year-old core systems have achieved full pipeline integration in under 6 months using this approach.

What percentage of vehicle claims can be fully automated today?

McKinsey analysis suggests that more than 50 percent of claims activities could be automated by 2030, with straight-through processing already standard for simple collision claims at leading carriers. In 2025, leading insurtechs like Lemonade auto-settle approximately 40 percent of claims instantly. For traditional carriers, a realistic first-year target is 20 to 30 percent straight-through processing, rising as model confidence thresholds are refined.

Three Things Engineering Teams Should Take Away

First, AI vehicle claims automation delivers its strongest ROI at the computer vision damage assessment layer. Start there, measure cycle time reduction and cost-per-claim, and use those results to justify expanding the pipeline.

Second, the orchestration and explainability layer is not optional. Regulators and adjusters will reject any system that issues decisions without a human-readable reasoning trace. Build it alongside the models, not after them.

Third, data architecture precedes model architecture. A shared, governed claims data pipeline reduces future model deployment time from months to weeks. Every dollar invested in data infrastructure compounds across every subsequent AI model your team ships.

The question for every CTO in this space is no longer whether to automate vehicle claims. McKinsey’s 2025 analysis shows AI leaders are compounding shareholder returns 6 times faster than laggards. The question is whether your engineering team will build the foundational pipeline this year or spend next year watching competitors close the gap.