DEFINITION: Digital privacy is the right of individuals and organisations to control how personal data is collected, processed, and shared across connected systems. It spans technical controls such as encryption, access management, and anonymisation, as well as governance and regulatory frameworks including GDPR, CCPA, and HIPAA. For software developers, digital privacy means engineering systems where data protection is a design constraint, not a post-release patch.

Introduction: The $4.88 Million Question

Every decision about digital privacy in your system carries a dollar sign. According to IBM (2024), the global average cost of a data breach hit $4.88 million in 2024, a 10% spike and the largest year-over-year jump since the pandemic. That number is not a headline for the security team. It is a product decision.

For software developers and CTOs, digital privacy has moved from a compliance line item to a core architecture requirement. The organisations that treat it as an afterthought are the ones funding breach remediation, GDPR fines, and customer-trust recovery campaigns simultaneously.

The regulatory environment has raised the stakes. Gartner (2024) reports that 75% of the global population now has personal data covered by modern privacy regulations, and the EU imposed EUR 2.1 billion in GDPR fines in 2024 alone. The question is not whether your system will be audited. It is whether it will pass.

“In a connected world, data privacy is not a feature; it is the load-bearing wall of your architecture.”

Understanding the Regulatory Landscape

Compliance is the map, not the territory. Developers who build to the minimum regulatory requirement are consistently surprised by enforcement. GDPR requires notifying the supervisory authority within 72 hours of becoming aware of a personal data breach. CCPA mandates opt-out rights and data portability. HIPAA governs health data with strict audit trail requirements. Layering these obligations simultaneously across a multi-cloud stack is where most engineering teams hit their first wall.
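To make the GDPR window concrete, here is a minimal Python sketch that computes the notification deadline from the moment a team becomes aware of a breach. The function and constant names are hypothetical, and the sketch assumes the window is counted as 72 elapsed clock hours, weekends included. It requires timezone-aware timestamps so the deadline does not shift between offices.

```python
from datetime import datetime, timedelta, timezone

# GDPR Art. 33: notify the supervisory authority without undue delay and, where
# feasible, no later than 72 hours after becoming aware of a personal data breach.
NOTIFICATION_WINDOW = timedelta(hours=72)


def notification_deadline(became_aware_at: datetime) -> datetime:
    """Latest notification time (UTC) for a breach discovered at `became_aware_at`."""
    if became_aware_at.tzinfo is None:
        raise ValueError("use a timezone-aware timestamp")
    return (became_aware_at + NOTIFICATION_WINDOW).astimezone(timezone.utc)


def hours_remaining(became_aware_at: datetime, now: datetime) -> float:
    """Hours left in the window; negative once the deadline has passed."""
    return (notification_deadline(became_aware_at) - now).total_seconds() / 3600


if __name__ == "__main__":
    aware = datetime(2025, 3, 7, 16, 30, tzinfo=timezone.utc)  # a Friday afternoon
    print(notification_deadline(aware))  # 2025-03-10 16:30:00+00:00
```

A breach discovered on a Friday afternoon therefore has to be reported by Monday afternoon, which is why this clock belongs to the on-call runbook rather than to office hours.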

Privacy laws now cover 79% of the world’s population (6.3 billion people), and 144 countries enforce data protection statutes as of 2025, according to Usercentrics (2025). For teams building global products, the design assumption must be that every user is covered by at least one major regulation.

In practice, teams building multi-tenant SaaS products find that the hardest compliance challenge is not understanding the regulation; it is mapping data flows accurately enough to answer the question: where does each user’s data live, and who can access it? Without a data catalogue and classification schema in place, this question is unanswerable.
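A data catalogue does not have to start as a product purchase. The Python sketch below, with hypothetical class, store, and service names, records one entry per column (store, region, sensitivity, and permitted readers), so that both halves of the question become queries rather than investigations.

```python
from dataclasses import dataclass, field
from enum import Enum


class Sensitivity(Enum):
    PUBLIC = "public"
    PII = "pii"
    FINANCIAL = "financial"
    HEALTH = "health"


@dataclass(frozen=True)
class CatalogueEntry:
    store: str                  # hypothetical store identifier, e.g. "pg-eu"
    table: str
    column: str
    sensitivity: Sensitivity
    region: str                 # where the data physically lives
    readers: frozenset[str]     # roles or services allowed to read this column


@dataclass
class DataCatalogue:
    entries: list[CatalogueEntry] = field(default_factory=list)

    def register(self, entry: CatalogueEntry) -> None:
        self.entries.append(entry)

    def personal_data_by_region(self) -> dict[str, set[str]]:
        """Where does personal data live? Region -> stores holding non-public data."""
        result: dict[str, set[str]] = {}
        for e in self.entries:
            if e.sensitivity is not Sensitivity.PUBLIC:
                result.setdefault(e.region, set()).add(e.store)
        return result

    def readers_of(self, sensitivity: Sensitivity) -> set[str]:
        """Who can access it? Every role or service that reads this sensitivity level."""
        return {r for e in self.entries if e.sensitivity is sensitivity for r in e.readers}


if __name__ == "__main__":
    catalogue = DataCatalogue()
    catalogue.register(CatalogueEntry("pg-eu", "users", "email", Sensitivity.PII,
                                      "eu-west-1", frozenset({"auth-service", "support"})))
    catalogue.register(CatalogueEntry("s3-us", "exports", "diagnosis_code", Sensitivity.HEALTH,
                                      "us-east-1", frozenset({"analytics"})))
    print(catalogue.personal_data_by_region())  # {'eu-west-1': {'pg-eu'}, 'us-east-1': {'s3-us'}}
    print(catalogue.readers_of(Sensitivity.PII))
```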

Privacy-by-Design: Building It In, Not Bolting It On

Privacy-by-Design means threat-modelling for privacy at the architecture phase, before a single endpoint is written. The principle is simple: data you never collect cannot be breached.

McKinsey’s research on consumer data protection found that leading enterprises build security and privacy requirements directly into software-development policies, assigning responsibility to the development team from the design phase. This approach achieved compliance in more than 90% of applications developed, cutting downstream rework and accelerating time to market.

The practical implementation for developers includes: data minimisation (collect only what the feature requires), retention policies enforced at the schema level, consent management integrated into the data pipeline rather than handled by a third-party banner, and deletion requests processed through automated workflows rather than manual ops tickets.
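Two of these practices, minimisation and retention enforced at write time, fit in a few lines of Python. The feature names, field allowlists, and retention periods below are hypothetical illustrations. In a real system the expiry would typically live in a database column or TTL policy rather than in a dictionary.

```python
from datetime import datetime, timedelta

# Hypothetical per-feature allowlists: each feature declares the only fields it needs.
FEATURE_FIELDS = {
    "newsletter_signup": {"email", "locale"},
    "checkout": {"email", "shipping_address", "order_total"},
}

# Hypothetical retention periods, declared next to the schema rather than in a runbook.
RETENTION = {
    "newsletter_signup": timedelta(days=730),
    "checkout": timedelta(days=365 * 7),
}


def minimise(feature: str, payload: dict) -> dict:
    """Drop every field the feature has not declared; unknown features get nothing."""
    allowed = FEATURE_FIELDS.get(feature, set())
    return {k: v for k, v in payload.items() if k in allowed}


def with_expiry(feature: str, record: dict, now: datetime) -> dict:
    """Stamp the deletion deadline on a record at write time."""
    return {**record, "_expires_at": now + RETENTION[feature]}


def purge_expired(records: list[dict], now: datetime) -> list[dict]:
    """Keep only records whose retention period has not elapsed (run on a schedule)."""
    return [r for r in records if r["_expires_at"] > now]
```

Defaulting unknown features to an empty allowlist means a new feature collects nothing until someone decides, explicitly, what it needs.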

“Data you never collect cannot be breached. Data minimisation is a security control, not just a compliance checkbox.”

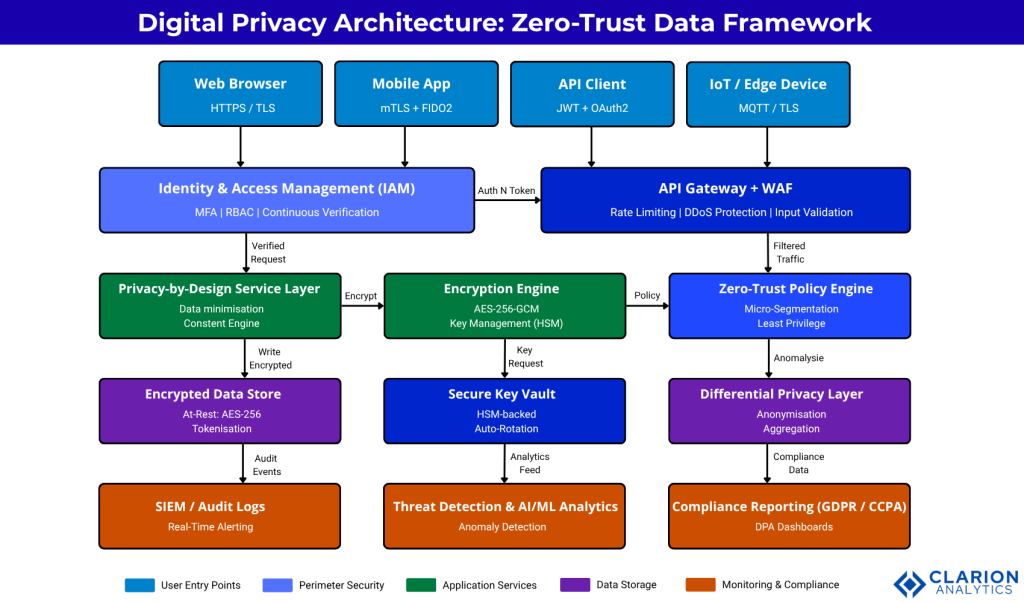

Zero-Trust Architecture: The New Security Default

Zero-trust operates on a single premise: no user, device, or network segment is trusted by default. Every request is authenticated, authorised, and continuously validated. This directly addresses the most common initial attack vector identified in IBM’s 2024 breach report: stolen or compromised credentials, which accounted for 16% of all breaches and took an average of 292 days to identify and contain.
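The per-request checks can be sketched as a single policy function that authenticates the caller, rejects expired credentials, checks device posture, and authorises the specific action. Network location is deliberately absent from its inputs. The HMAC token format, secret, and function names are hypothetical and for illustration only; production systems should use a standard token format (for example, signed JWTs issued by an OIDC provider) validated by a vetted library.

```python
import hashlib
import hmac
import json
from base64 import urlsafe_b64decode, urlsafe_b64encode

SECRET = b"demo-only-secret"  # hypothetical; a real key comes from a vault and is rotated


def issue_token(subject: str, roles: list[str], ttl_s: int, now: float) -> str:
    """Sign a short-lived claim set (illustrative format, not a standard)."""
    body = json.dumps({"sub": subject, "roles": roles, "exp": now + ttl_s}).encode()
    sig = hmac.new(SECRET, body, hashlib.sha256).digest()
    return urlsafe_b64encode(body).decode() + "." + urlsafe_b64encode(sig).decode()


def verify_request(token: str, required_role: str, device_compliant: bool, now: float) -> bool:
    """Re-check every request: nothing is trusted because of where it came from."""
    try:
        body_b64, sig_b64 = token.split(".")
        body, sig = urlsafe_b64decode(body_b64), urlsafe_b64decode(sig_b64)
    except ValueError:                          # malformed token (binascii.Error included)
        return False
    expected = hmac.new(SECRET, body, hashlib.sha256).digest()
    if not hmac.compare_digest(sig, expected):  # authenticate: constant-time comparison
        return False
    claims = json.loads(body)
    if claims["exp"] <= now:                    # short-lived credentials expire
        return False
    if not device_compliant:                    # contextual signal: device posture
        return False
    return required_role in claims["roles"]     # authorise this specific action
```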

Gartner’s 2024 IT survey found that 63% of organisations worldwide have fully or partially implemented a Zero Trust strategy. The arXiv systematic review by Gambo and Almulhem (2025) confirms the four pillars driving adoption: continuous authentication, conditional access, dynamic trust evaluation, and least-privilege enforcement.

For engineering teams, micro-segmentation is the most impactful starting point. Instead of flat networks where a compromised service can traverse laterally, micro-segmentation isolates services at the API or container boundary. Combined with identity and access management (IAM) and role-based access control (RBAC), this limits the blast radius of any single credential compromise to a single service or data scope.
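Micro-segmentation and least-privilege RBAC both reduce to default-deny allowlists. The Python sketch below uses hypothetical service names and scopes. Its `blast_radius` helper follows the allowed flows from a compromised service to show which services it could reach laterally.

```python
# Hypothetical segmentation policy: explicit service-to-service edges; anything absent is denied.
ALLOWED_FLOWS = {
    ("api-gateway", "orders"),
    ("orders", "payments"),
    ("orders", "orders-db"),
    ("payments", "payments-db"),
}

# Hypothetical RBAC: each service identity maps to the narrowest data scopes it needs.
ROLE_SCOPES = {
    "orders": {"orders:read", "orders:write"},
    "payments": {"payments:write"},
}


def may_call(source: str, destination: str) -> bool:
    return (source, destination) in ALLOWED_FLOWS


def may_access(service: str, scope: str) -> bool:
    return scope in ROLE_SCOPES.get(service, set())


def blast_radius(compromised: str) -> set[str]:
    """Every service reachable from a compromised one by following allowed flows."""
    reachable: set[str] = set()
    frontier = [compromised]
    while frontier:
        node = frontier.pop()
        for src, dst in ALLOWED_FLOWS:
            if src == node and dst not in reachable:
                reachable.add(dst)
                frontier.append(dst)
    return reachable
```

In a flat network every service is reachable from every other, so the blast radius is the whole estate; here a compromised `payments` service can reach only `payments-db`.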

The diagram illustrates five security layers: user entry points (web, mobile, API, IoT) authenticate through IAM and the API gateway before reaching the application service layer. An encryption engine handles AES-256-GCM operations with keys managed in a hardware security module (HSM). A Zero-Trust Policy Engine enforces micro-segmentation and least-privilege access. At the data layer, encrypted storage and a secure key vault protect data at rest, while a differential privacy module limits what aggregate outputs reveal. All layers feed a centralised monitoring tier for SIEM alerting, AI-powered threat detection, and GDPR/CCPA compliance reporting.

“Zero Trust is not a product you buy; it is an architecture posture you enforce continuously across identity, network, and data.”

Encryption in Practice: At Rest, in Transit, in Use

Encryption is only as strong as its implementation. The three domains (at rest, in transit, and in use) each require different approaches, and teams often over-invest in one while leaving another exposed.

At rest, AES-256-GCM is the current standard for symmetric encryption of stored data. The OWASP Cryptographic Storage Cheat Sheet recommends authenticated encryption modes (GCM or CCM) as a first preference, combined with automated key rotation policies. Keys must be stored separately from the data they encrypt in a managed key vault or HSM.
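Here is a minimal sketch of envelope encryption with AES-256-GCM, assuming the third-party `cryptography` package (`pip install cryptography`). A fresh data key encrypts each value, and a key-encryption key (KEK) wraps that data key, so only the wrapped form is stored beside the data. The KEK here stands in for a key held in a managed vault or HSM. The `context` bytes are passed as GCM associated data, binding each ciphertext to its record. The function names and blob layout are hypothetical.

```python
import os

from cryptography.hazmat.primitives.ciphers.aead import AESGCM

NONCE_BYTES = 12  # 96-bit nonces, the recommended size for GCM; never reuse one per key


def encrypt_field(kek: bytes, plaintext: bytes, context: bytes) -> dict:
    """Envelope encryption: a fresh data key encrypts the value; the KEK wraps the data key."""
    data_key = AESGCM.generate_key(bit_length=256)
    nonce = os.urandom(NONCE_BYTES)
    ciphertext = AESGCM(data_key).encrypt(nonce, plaintext, context)  # context = associated data
    key_nonce = os.urandom(NONCE_BYTES)
    wrapped_key = AESGCM(kek).encrypt(key_nonce, data_key, None)
    # Only the wrapped data key is stored next to the data; the KEK stays in the vault/HSM.
    return {"nonce": nonce, "ciphertext": ciphertext,
            "key_nonce": key_nonce, "wrapped_key": wrapped_key}


def decrypt_field(kek: bytes, blob: dict, context: bytes) -> bytes:
    """Unwrap the data key, then decrypt; raises InvalidTag on tampering or a wrong context."""
    data_key = AESGCM(kek).decrypt(blob["key_nonce"], blob["wrapped_key"], None)
    return AESGCM(data_key).decrypt(blob["nonce"], blob["ciphertext"], context)
```

With a real KMS or HSM the wrap and unwrap operations happen inside the key service, so the KEK never enters application memory. Rotating the KEK then means re-wrapping data keys rather than re-encrypting every record.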

In transit, TLS 1.3 is the minimum bar. The OWASP User Privacy Protection Cheat Sheet recommends HTTPS with HSTS headers, and mutual TLS (mTLS) should secure service-to-service communication so that both endpoints authenticate each other. Any service that still allows HTTP in production, even on internal networks, is operating with an open window.
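Python's standard `ssl` module is enough to express this transport policy. The sketch builds server and client contexts that refuse anything below TLS 1.3 and require certificates on both sides of a service-to-service connection. The certificate paths are hypothetical parameters, and the HSTS value (one year, including subdomains) is an example setting rather than a mandated one.

```python
import ssl
from typing import Optional

# Example HSTS policy for public HTTPS responses: one year, subdomains included.
HSTS_HEADER = ("Strict-Transport-Security", "max-age=31536000; includeSubDomains")


def mtls_server_context(certfile: Optional[str] = None, keyfile: Optional[str] = None,
                        client_ca: Optional[str] = None) -> ssl.SSLContext:
    """Server side of a service-to-service link: TLS 1.3 only, client certificate required."""
    ctx = ssl.create_default_context(ssl.Purpose.CLIENT_AUTH)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    ctx.verify_mode = ssl.CERT_REQUIRED              # the "mutual" part of mTLS
    if certfile and keyfile:
        ctx.load_cert_chain(certfile, keyfile)       # this service's own identity
    if client_ca:
        ctx.load_verify_locations(cafile=client_ca)  # internal CA that issues service certs
    return ctx


def mtls_client_context(server_ca: Optional[str] = None, certfile: Optional[str] = None,
                        keyfile: Optional[str] = None) -> ssl.SSLContext:
    """Client side: verifies the server's chain and hostname, and presents its own certificate."""
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH, cafile=server_ca)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    if certfile and keyfile:
        ctx.load_cert_chain(certfile, keyfile)
    return ctx
```

Sockets are then wrapped with `ctx.wrap_socket(sock, server_side=True)` on the server and `ctx.wrap_socket(sock, server_hostname=...)` on the client; a peer without a valid certificate fails the handshake instead of falling back to plaintext.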

In use is where most teams have the largest gap. Privacy-enhancing computation (PEC) techniques, such as differential privacy, homomorphic encryption, and secure multi-party computation, allow processing of sensitive data without exposing plaintext values. Gartner (2022) projected that 60% of large organisations would use at least one PEC technique by 2025. The gap between projection and actual adoption is where competitive differentiation now lives.
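Differential privacy is the most approachable of these techniques. The sketch below applies the Laplace mechanism to a counting query: because adding or removing one person changes a count by at most 1, adding noise drawn from Laplace(0, 1/ε) makes the released count ε-differentially private. It is a teaching sketch with hypothetical function names. Naive floating-point noise has known side-channel weaknesses, so production pipelines should rely on a vetted library such as OpenDP.

```python
import random
from typing import Callable


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Laplace(0, scale) sample: the difference of two i.i.d. exponentials with mean `scale`."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def dp_count(values: list, predicate: Callable[[object], bool],
             epsilon: float, rng: random.Random) -> float:
    """Epsilon-differentially private count via the Laplace mechanism.

    A counting query has sensitivity 1 (one person changes the count by at most 1),
    so the noise scale is sensitivity / epsilon = 1 / epsilon.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1.0 / epsilon, rng)
```

Smaller ε means more noise and stronger privacy. Every released query spends part of the privacy budget, so repeated queries over the same data have to be accounted for.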

Comparison: Data Privacy Approaches for Engineering Teams

| Approach | Key Strength | Key Trade-off | Best Used When |
|---|---|---|---|
| Privacy-by-Design | Eliminates risk at source; reduces future rework; cleanest compliance posture | Requires upfront design discipline and longer sprint cycles | Greenfield builds, new product lines, or systems undergoing major re-architecture |
| Zero-Trust Architecture | Limits blast radius of any breach; continuous verification reduces insider threat | Complex to retrofit; adds latency per request; requires IAM and policy engine investment | Distributed microservices, multi-cloud environments, or post-breach remediation |
| Privacy-Enhancing Computation (PEC) | Enables data utility without exposing plaintext; supports cross-org collaboration | Higher compute overhead; steep learning curve; nascent tooling ecosystem | Analytics on sensitive datasets, federated ML, or regulated cross-border data sharing |

“The average breach involving shadow data (files stored in unmanaged sources) cost 16% more than a standard breach. Visibility is a privacy control.”

Implementation Roadmap for CTOs and Engineering Teams

A pragmatic privacy programme has three horizons. Teams building such a programme typically find that Horizon 1 delivers the fastest compliance wins, while Horizons 2 and 3 generate a measurable reduction in breach costs over 12 to 24 months.

Horizon 1 (0 to 3 months): Data inventory and classification. Map every data store, classify fields by sensitivity (PII, financial, health), enforce TLS 1.3 everywhere, enable automated secrets rotation using a vault, and run a GDPR/CCPA gap assessment.
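Field classification can begin with a naive, name-based pass that tags obvious columns and routes everything else to human review. The patterns, labels, and their ordering below are hypothetical. Dedicated discovery tools also sample column contents, because names alone miss free-text fields and misfire on columns like `product_name`.

```python
import re

# Hypothetical name-based rules, ordered from most to least sensitive.
RULES = [
    ("health", re.compile(r"diagnos|icd|prescription|blood|medical", re.I)),
    ("financial", re.compile(r"iban|card|account_?number|salary|tax", re.I)),
    ("pii", re.compile(r"email|phone|name|address|birth|ssn|passport|ip_addr", re.I)),
]


def classify(column: str) -> str:
    """Return the first (most sensitive) matching label, or flag the column for review."""
    for label, pattern in RULES:
        if pattern.search(column):
            return label
    return "unclassified"  # never silently treated as public


def classify_schema(schema: dict[str, list[str]]) -> dict[str, dict[str, str]]:
    """Tag every column of every table in a {table: [columns]} schema description."""
    return {table: {col: classify(col) for col in cols} for table, cols in schema.items()}
```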

Horizon 2 (3 to 9 months): Encrypt all PII at rest with AES-256-GCM, implement IAM with least-privilege RBAC, deploy micro-segmentation between services, integrate consent management into the data pipeline, and instrument audit logging.
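Audit logging is easiest to instrument when every access to personal data emits one structured record. The sketch below uses a hypothetical `audit_event` helper that writes JSON lines through Python's standard `logging` module. It assumes a handler configured elsewhere ships the `audit` logger to an append-only store or SIEM. Each record holds a reference to the resource, never the personal data itself.

```python
import json
import logging
from datetime import datetime, timezone
from typing import Optional

audit_log = logging.getLogger("audit")  # route this logger to an append-only sink or SIEM


def audit_event(actor: str, action: str, resource: str, purpose: str,
                allowed: bool, now: Optional[datetime] = None) -> str:
    """Emit one structured audit record per access to personal data and return it."""
    record = {
        "ts": (now or datetime.now(timezone.utc)).isoformat(),
        "actor": actor,        # authenticated identity, not a shared account
        "action": action,      # e.g. "read", "update", "export"
        "resource": resource,  # a reference to the data, never the data itself
        "purpose": purpose,    # links the access to a processing purpose or ticket
        "allowed": allowed,    # log denials too; they are often the first warning sign
    }
    line = json.dumps(record, sort_keys=True)
    audit_log.info(line)
    return line
```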

Horizon 3 (9 to 18 months): Adopt Privacy-Enhancing Computation for sensitive analytics workloads, implement differential privacy for reporting pipelines, automate DSAR (Data Subject Access Request) workflows, and begin a Zero Trust maturity assessment against the CISA Zero Trust Maturity Model.
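DSAR automation is largely fan-out and bookkeeping: send the request to every store that holds the subject's data, collect the results, and surface failures before the deadline. The sketch below uses hypothetical handler and result types and simplifies GDPR's one-month response period to 30 days.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Callable

RESPONSE_WINDOW = timedelta(days=30)  # simplification of GDPR's "one month"


@dataclass
class StoreHandler:
    export: Callable[[str], dict]  # returns this store's data about the subject
    erase: Callable[[str], int]    # deletes it and returns the number of records removed


@dataclass
class DSARResult:
    subject_id: str
    kind: str
    due_by: datetime
    per_store: dict = field(default_factory=dict)
    failures: list = field(default_factory=list)


def process_dsar(subject_id: str, kind: str, handlers: dict[str, StoreHandler],
                 received_at: datetime) -> DSARResult:
    """Fan an access or erasure request out to every registered store."""
    if kind not in ("access", "erasure"):
        raise ValueError(f"unsupported request type: {kind}")
    result = DSARResult(subject_id, kind, received_at + RESPONSE_WINDOW)
    for name, handler in handlers.items():
        try:
            action = handler.export if kind == "access" else handler.erase
            result.per_store[name] = action(subject_id)
        except Exception as exc:  # a failing store must be surfaced, not skipped
            result.failures.append((name, repr(exc)))
    return result
```

Recording failures instead of skipping them matters: an erasure that silently misses one legacy store is still a compliance failure.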

IBM’s 2024 breach data makes the ROI clear: organisations that deploy AI and automation extensively in prevention workflows reduce breach costs by an average of $2.2 million per incident. Security staffing shortages, meanwhile, drive breach costs $1.76 million higher on average. Tooling investment is not optional; it is a cost offset.

“Teams that automate prevention workflows reduce breach costs by $2.2M on average. In privacy, automation is not a convenience; it is financial risk management.”

Frequently Asked Questions

1. What is the difference between data security and digital privacy?

Data security focuses on protecting systems and data from unauthorised access through technical controls such as encryption and firewalls. Digital privacy is broader: it covers an individual’s right to control how their data is collected, used, and shared. Security is a necessary component of privacy, but privacy requires governance, consent, and transparency in addition to technical protection. Teams often get security right while still violating privacy principles by over-collecting data or sharing it without appropriate consent.

2. How do I make my application GDPR and CCPA compliant at the same time?

Start by implementing the stricter of the two standards in each area. GDPR requires a legal basis for processing and a 72-hour breach notification window. CCPA requires opt-out rights and data portability. Build a unified consent management system that captures user preferences, implement data subject request (DSR) automation, create a data inventory with classification labels, and engage legal counsel to validate your privacy notice language. Most technical GDPR requirements satisfy CCPA simultaneously.
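The "stricter of the two" rule can be encoded directly in the consent check. In this sketch, processing is denied unless the user's most recent choice for that purpose is an affirmative opt-in (the GDPR-style default), and a Global Privacy Control signal (`Sec-GPC: 1`) is honoured as an opt-out of sale or sharing (the CCPA-style right). The purpose names and ledger structure are hypothetical. Because the ledger is append-only, it also serves as evidence of when consent was given or withdrawn.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Optional


@dataclass(frozen=True)
class ConsentEvent:
    purpose: str      # hypothetical purposes, e.g. "marketing_email", "sale_or_share"
    granted: bool     # True = opt-in, False = opt-out or withdrawal
    at: datetime


@dataclass
class ConsentLedger:
    events: list[ConsentEvent] = field(default_factory=list)  # append-only history

    def record(self, purpose: str, granted: bool, at: datetime) -> None:
        self.events.append(ConsentEvent(purpose, granted, at))

    def latest(self, purpose: str) -> Optional[ConsentEvent]:
        matching = [e for e in self.events if e.purpose == purpose]
        return max(matching, key=lambda e: e.at) if matching else None


def may_process(ledger: ConsentLedger, purpose: str, gpc_signal: bool = False) -> bool:
    """Deny by default; allow only an unwithdrawn opt-in that no opt-out signal overrides."""
    if gpc_signal and purpose == "sale_or_share":
        return False
    latest = ledger.latest(purpose)
    return latest is not None and latest.granted
```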

3. What encryption standard should developers use for data at rest in 2025?

AES-256-GCM is the current production standard for symmetric encryption of data at rest. It provides authenticated encryption protecting against both eavesdropping and tampering in a single operation. Keys should be stored separately from encrypted data, ideally in a managed key vault or hardware security module (HSM) with automated rotation policies. Never use ECB mode. Prefer GCM or CCM over CBC unless the platform explicitly requires otherwise. Key rotation should trigger on schedule and on any suspected compromise.
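The rotation rule in the last sentence can be sketched as a small policy check that a scheduler runs against every active key version. The 90-day maximum age and the types below are hypothetical; the interval should come from your own risk assessment and key-management tooling.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

MAX_KEY_AGE = timedelta(days=90)  # hypothetical policy value


@dataclass(frozen=True)
class KeyVersion:
    key_id: str
    created_at: datetime
    suspected_compromise: bool = False


def rotation_due(key: KeyVersion, now: datetime,
                 max_age: timedelta = MAX_KEY_AGE) -> Optional[str]:
    """Return why a key must rotate now, or None if it can stay active."""
    if key.suspected_compromise:
        return "suspected compromise"  # rotate immediately, regardless of age
    if now - key.created_at >= max_age:
        return "scheduled"
    return None
```

A suspected compromise also calls for re-encrypting the data protected by the exposed key, not just issuing a new key for future writes.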

4. How does zero-trust architecture differ from traditional perimeter security?

Traditional perimeter security trusts anything inside the network boundary. Once an attacker is inside through a phishing attack, credential theft, or a compromised third-party integration, they can move laterally with few controls. Zero trust removes the concept of a trusted perimeter. Every user, device, and service must authenticate and be authorised for every request, regardless of network location. Continuous verification, least-privilege access, and micro-segmentation limit the blast radius of any single compromise to the smallest possible scope.

5. What are privacy-enhancing technologies (PETs) and should my team adopt them?

PETs are a family of techniques that allow data to be used or analysed without exposing the underlying raw values. The most relevant for software teams include differential privacy (adds calibrated noise to query results), homomorphic encryption (allows computation on encrypted data), and federated learning (trains ML models across distributed data without centralising it). Adoption should be prioritised for analytics pipelines processing sensitive health or financial data, cross-organisation data sharing, or any system where ML models are trained on PII. The tooling ecosystem is maturing rapidly.
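Federated learning is the easiest of these to sketch end to end. Below, each client runs a few passes of stochastic gradient descent on its own data for a toy linear model, and the server combines the results with federated averaging (FedAvg), weighting each client by its sample count. The model and function names are hypothetical. FedAvg alone does not guarantee privacy, because model updates can still leak information, so it is usually combined with secure aggregation or differential privacy.

```python
def local_update(weights: list[float], data: list[tuple[float, float]],
                 lr: float = 0.01, epochs: int = 5) -> tuple[list[float], int]:
    """Client side: SGD on y = w*x + b using only this client's local records."""
    w, b = weights
    for _ in range(epochs):
        for x, y in data:
            err = (w * x + b) - y  # prediction error for one record
            w -= lr * err * x      # gradient of 0.5 * err**2 with respect to w
            b -= lr * err          # ... and with respect to b
    return [w, b], len(data)       # only parameters and a count leave the client


def federated_average(updates: list[tuple[list[float], int]]) -> list[float]:
    """Server side (FedAvg): average client parameters weighted by sample count."""
    total = sum(n for _, n in updates)
    if total == 0:
        raise ValueError("no training samples reported")
    dim = len(updates[0][0])
    return [sum(params[i] * n for params, n in updates) / total for i in range(dim)]


def train_round(global_weights: list[float],
                client_datasets: list[list[tuple[float, float]]]) -> list[float]:
    """One round: broadcast the global model, train locally, aggregate the results."""
    return federated_average([local_update(global_weights, d) for d in client_datasets])
```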

The Path Forward: Privacy as a Competitive Advantage

Three insights should shape every engineering decision on this topic. First, breach costs are rising faster than most security budgets; the IBM (2024) average of $4.88 million per breach is not a ceiling. Second, privacy regulations keep expanding their coverage globally. Third, AI-assisted breach prevention is demonstrably one of the highest-return security investments available.

The teams that will differentiate in the next 24 months are not those who implement the regulatory minimum. They are the ones who treat digital privacy as a product quality attribute: something users can feel and companies can demonstrate.

Clarion Analytics helps engineering teams and CTOs operationalise these principles through privacy-by-design consulting, data classification tooling, and compliance workflow automation. Visit clarion.ai to explore how we help organisations build privacy-first architecture at scale.

“Privacy is not a feature shipped in v2. It is a load-bearing constraint that shapes every architectural decision from day one.”