What is LLM knowledge graph construction? A knowledge graph built with LLMs is a structured, machine-readable network of entities and relationships automatically extracted from unstructured text using large language models. It replaces manual annotation pipelines with prompt-driven extraction, enabling enterprises to convert documents, databases, and communications into a queryable semantic layer that grounds AI reasoning, reduces hallucinations, and accelerates multi-hop insight discovery.

Why Your Enterprise AI Is Flying Blind Without a Knowledge Graph

Vector search retrieves similar text chunks. It does not understand relationships between entities, cannot traverse a supply chain across three hops, and collapses entirely when asked what the main themes of a million-token corpus are. That is where LLM knowledge graph construction changes the game.

According to Gartner (2025), integrating a knowledge graph improves LLM response accuracy by 54.2 percent, more than three times the performance of SQL alone. McKinsey (2024) reports that 65% of organizations now use generative AI in at least one business function, yet data professionals still spend 25–30% of their time searching for information that should already be connected. The gap between AI capability and AI usefulness lives in unstructured data, and a knowledge graph closes it.

The problem has a name: context collapse. LLMs are reasoning engines without memory. Without a structured knowledge layer, every query starts from scratch. Teams building RAG pipelines find that vector search works for simple retrieval, but the moment a question requires connecting dots across departments, documents, or time periods, performance degrades fast.

“Knowledge graphs improve LLM response accuracy by 54.2 percent, more than three times the performance of SQL alone.” – Gartner (2025)

Gartner’s 2024 Hype Cycle placed knowledge graphs on the “Slope of Enlightenment”, a signal of growing production maturity after years of early-adopter noise. The market agrees: the enterprise knowledge graph segment is projected to grow at a CAGR of 33.4% through 2030, driven directly by demand for structured context in GenAI deployments.

How LLMs Construct Knowledge Graphs: The Three-Stage Pipeline

LLM-driven KG construction follows three stages: ontology engineering (defining entity types and relation schemas), knowledge extraction (prompting the LLM to identify entities and triples from text), and knowledge fusion (resolving duplicates and linking entities to a canonical store).

A 2025 survey by Bian et al. (arXiv) provides the most comprehensive current map of this pipeline. The authors identify two overarching paradigms:

Schema-based: A predefined ontology constrains the LLM’s output. Entity types, relation labels, and hierarchies are defined up front, and the LLM populates the schema. This delivers high consistency and is usually required in regulated domains like healthcare and financial services. Competency Questions (CQs) are often used to define scope before extraction begins.

Schema-free: No external ontology is provided. The LLM autonomously discovers entities, infers relation types, and builds the schema from patterns in the data. Techniques like Chain-of-Thought prompting replace formal schema supervision. This approach is faster to stand up and better suited for open-domain knowledge discovery.

In practice, teams building this typically find that a hybrid approach works best: define four to eight top-level entity types for your domain, then let the LLM discover sub-types and relation labels freely within those boundaries. This keeps the graph consistent where it matters while avoiding the maintenance overhead of a fully hand-crafted ontology.
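A minimal sketch of that hybrid rule, assuming a hypothetical `Root/SubType` naming convention for LLM-invented sub-types: the top-level type must come from a fixed list, while anything after the slash is accepted as-is. The type list and entity data are illustrative only.

```python
# Hypothetical hybrid schema: fixed top-level entity types, free-form sub-types.
TOP_LEVEL_TYPES = {"Person", "Organization", "Product", "Regulation", "Location"}


def accept_entity_type(raw_type: str) -> bool:
    """Accept 'Organization' or 'Organization/Pharma'; reject unknown roots.

    The 'Root/SubType' convention is an assumption for this sketch; the LLM
    is free to invent whatever follows the slash.
    """
    root, _, _subtype = raw_type.partition("/")
    return root.strip() in TOP_LEVEL_TYPES


def filter_entities(entities: list[dict]) -> tuple[list[dict], list[dict]]:
    """Split extracted entities into (kept, rejected) by their top-level type."""
    kept, rejected = [], []
    for entity in entities:
        (kept if accept_entity_type(entity["type"]) else rejected).append(entity)
    return kept, rejected


extracted = [
    {"name": "Pfizer", "type": "Organization/Pharma"},
    {"name": "FDA", "type": "Organization/Regulator"},
    {"name": "mRNA", "type": "Technology"},  # root type not in the fixed list
]
kept, rejected = filter_entities(extracted)
print([e["name"] for e in kept])      # ['Pfizer', 'FDA']
print([e["name"] for e in rejected])  # ['mRNA']
```

Rejected entities are better queued for human review than silently dropped: a recurring rejected root is a signal that the top-level list needs another type.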

The three pipeline stages break down as follows:

Stage 1 – Ontology Engineering. Define entity types (person, organization, drug, regulation) and core relation labels (works-for, treats, governs). Use Competency Questions to scope the knowledge boundary. Tools: NeOn-GPT, LKD-KGC, or a custom GPT-4 prompt chain.
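One way to make Competency Questions actionable is to record, for each question, the entity types and relation labels it needs, then check that the ontology covers them before extraction starts. A minimal sketch; the `Ontology` and `CompetencyQuestion` classes, the domain/range pairs, and the example questions are hypothetical, built from the example types and relations above.

```python
from dataclasses import dataclass, field


@dataclass
class Ontology:
    entity_types: set[str]
    relations: dict[str, tuple[str, str]]  # relation label -> (domain type, range type)


@dataclass
class CompetencyQuestion:
    text: str
    needs_types: set[str] = field(default_factory=set)
    needs_relations: set[str] = field(default_factory=set)


def uncovered(cq: CompetencyQuestion, onto: Ontology) -> set[str]:
    """Return the types and relation labels a question needs but the ontology lacks."""
    missing = {t for t in cq.needs_types if t not in onto.entity_types}
    missing |= {r for r in cq.needs_relations if r not in onto.relations}
    return missing


def triple_fits(onto: Ontology, head_type: str, relation: str, tail_type: str) -> bool:
    """Check an extracted triple against the relation's declared domain and range."""
    return onto.relations.get(relation) == (head_type, tail_type)


onto = Ontology(
    entity_types={"Person", "Organization", "Drug", "Regulation"},
    relations={"works-for": ("Person", "Organization"), "governs": ("Regulation", "Drug")},
)
cqs = [
    CompetencyQuestion("Who works for the manufacturer of drug X?",
                       {"Person", "Organization", "Drug"}, {"works-for", "manufactures"}),
    CompetencyQuestion("Which regulations govern drug X?", {"Regulation", "Drug"}, {"governs"}),
]
for cq in cqs:
    print(cq.text, "->", sorted(uncovered(cq, onto)) or "covered")
```

Here the first question reports `manufactures` as missing, which is exactly the kind of gap worth catching before thousands of chunks are extracted.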

Stage 2 – Knowledge Extraction. Chunk your documents into TextUnits. For each chunk, prompt the LLM to output entities, their types, descriptions, and relations as structured JSON. Few-shot prompting (5–7 examples) consistently outperforms zero-shot for relation extraction, as Zhu et al. (2024) demonstrate across eight benchmark datasets.
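A sketch of the per-chunk extraction step, assuming a generic `call_llm(prompt) -> str` function (hypothetical; swap in your provider’s client) and a JSON output contract chosen for illustration. The stub returns a canned response so the prompt-building and validation logic can run offline.

```python
import json

FEW_SHOT_EXAMPLES = [
    {
        "text": "Dr. Lee joined Acme Pharma in 2021.",
        "output": {
            "entities": [
                {"name": "Dr. Lee", "type": "Person", "description": "Researcher"},
                {"name": "Acme Pharma", "type": "Organization", "description": "Drug maker"},
            ],
            "relations": [{"source": "Dr. Lee", "target": "Acme Pharma", "label": "works-for"}],
        },
    },
    # In practice, add 5-7 examples covering your main relation labels.
]


def build_prompt(chunk: str, entity_types: list[str]) -> str:
    """Assemble a few-shot extraction prompt for one TextUnit."""
    parts = [
        "Extract entities and relations as JSON with keys 'entities' and 'relations'.",
        f"Allowed entity types: {', '.join(entity_types)}.",
    ]
    for ex in FEW_SHOT_EXAMPLES:
        parts.append(f"Text: {ex['text']}\nJSON: {json.dumps(ex['output'])}")
    parts.append(f"Text: {chunk}\nJSON:")
    return "\n\n".join(parts)


def parse_extraction(raw: str) -> dict:
    """Parse model output and drop relations whose endpoints were not extracted."""
    data = json.loads(raw)
    names = {e["name"] for e in data.get("entities", [])}
    data["relations"] = [
        r for r in data.get("relations", []) if r["source"] in names and r["target"] in names
    ]
    return data


def call_llm(prompt: str) -> str:
    """Hypothetical stub standing in for a real LLM API call."""
    return json.dumps({
        "entities": [{"name": "Metformin", "type": "Drug", "description": "Diabetes drug"},
                     {"name": "Type 2 diabetes", "type": "Disease", "description": ""}],
        "relations": [{"source": "Metformin", "target": "Type 2 diabetes", "label": "treats"},
                      {"source": "Metformin", "target": "Insulin", "label": "interacts-with"}],
    })


prompt = build_prompt("Metformin is a first-line treatment for type 2 diabetes.", ["Drug", "Disease"])
result = parse_extraction(call_llm(prompt))
print([r["label"] for r in result["relations"]])  # ['treats']
```

The validation step matters as much as the prompt: the `interacts-with` relation is dropped because its target, `Insulin`, never appears as an extracted entity.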

Stage 3 – Knowledge Fusion. Entity resolution removes duplicates (“Apple Inc.”, “Apple”, “AAPL” are one node). Relation normalization maps semantically equivalent labels to a canonical form. Vector similarity between entity embeddings, combined with LLM disambiguation, is the current production standard.
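A minimal sketch of the fusion step under two stated assumptions: a hand-written alias table stands in for ticker and abbreviation lookups, and tiny hand-made vectors stand in for real entity embeddings. Mentions that normalize to the same name, or whose vectors pass a cosine-similarity threshold, are merged with union-find. In production, borderline pairs would go to the LLM for disambiguation rather than being merged automatically.

```python
import math

ALIASES = {"aapl": "apple inc."}  # hypothetical alias table (tickers, abbreviations)


def normalize(name: str) -> str:
    """Lowercase, trim, and map known aliases to a canonical form."""
    key = name.strip().lower()
    return ALIASES.get(key, key)


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def resolve(embeddings: dict[str, list[float]], threshold: float = 0.9) -> list[set[str]]:
    """Group mentions whose normalized names match or whose vectors are close."""
    names = list(embeddings)
    parent = {n: n for n in names}

    def find(n: str) -> str:
        while parent[n] != n:
            parent[n] = parent[parent[n]]  # path halving keeps trees shallow
            n = parent[n]
        return n

    for i, a in enumerate(names):
        for b in names[i + 1:]:
            if normalize(a) == normalize(b) or cosine(embeddings[a], embeddings[b]) >= threshold:
                parent[find(a)] = find(b)

    groups: dict[str, set[str]] = {}
    for n in names:
        groups.setdefault(find(n), set()).add(n)
    return list(groups.values())


mentions = {
    "Apple Inc.": [0.90, 0.10, 0.00],
    "Apple": [0.88, 0.15, 0.02],
    "AAPL": [0.20, 0.90, 0.10],   # embedding is far off; merged via the alias table
    "Apple Records": [0.10, 0.20, 0.95],
}
print(resolve(mentions))
```

“Apple Records” stays separate even though its name contains “Apple”, which is why naive substring matching is not enough for entity resolution.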

GraphRAG: The Production Architecture You Should Know

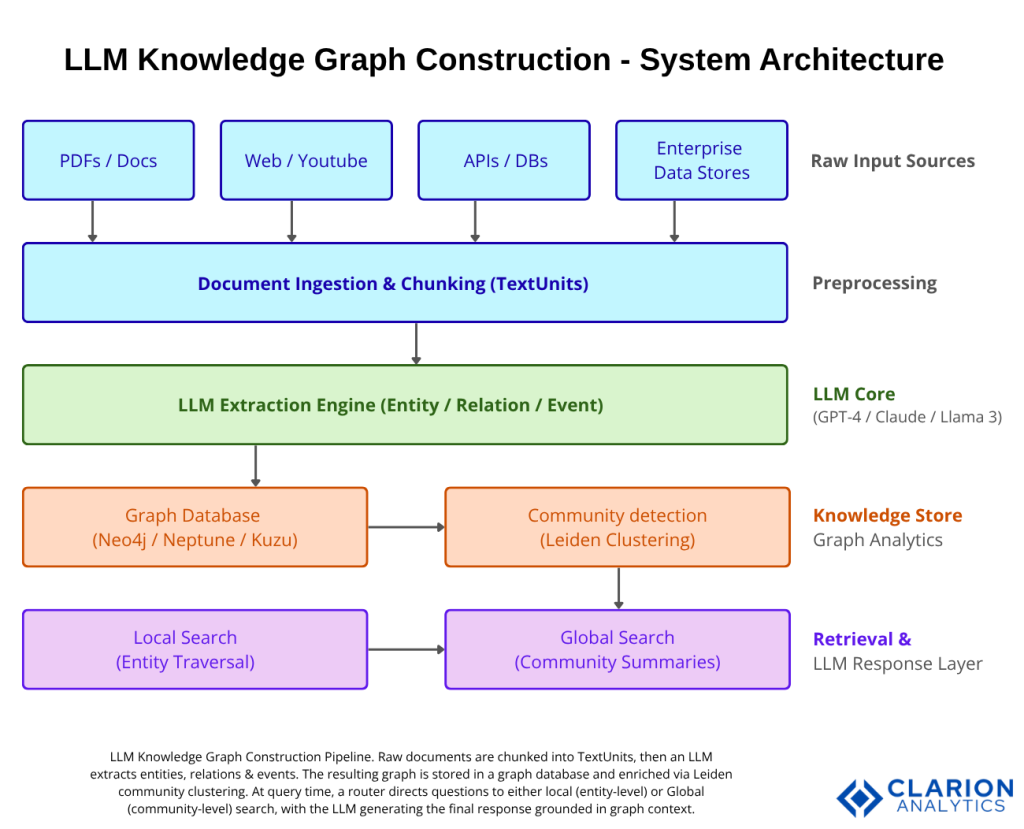

GraphRAG is Microsoft’s graph-based retrieval-augmented generation architecture. It builds an entity knowledge graph from documents in two LLM passes, first extracting entities and relations, then generating community summaries via Leiden clustering, enabling both local (entity-level) and global (whole-corpus) question answering.

The foundational paper, Edge et al. (Microsoft Research, 2024), demonstrated that standard RAG fails systematically on global questions: “What are the main themes in this dataset?” produces misleading answers because vector search retrieves chunks that are semantically similar to the wording of the question, not to the actual themes of the corpus. GraphRAG solves this by pre-indexing community summaries at multiple levels of granularity.

The pipeline operates in five stages: raw document chunking into TextUnits, LLM-driven entity and relation extraction, storage in a graph database, Leiden community detection to identify clusters of related entities, and a query-time router that directs questions to local search (entity traversal) or global search (community summaries). The LLM generates the final answer grounded in graph context, not raw chunks.
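The query-time router can be illustrated with a classifier that sends corpus-wide questions to global search and entity-specific questions to local search. The keyword heuristic below is a hypothetical stand-in (production routers often use an LLM classifier instead), and `known_entities` is assumed to come from the graph’s entity index.

```python
import re

GLOBAL_CUES = ("main themes", "overall", "across the dataset", "summarize", "trends")


def route(question: str, known_entities: set[str]) -> tuple[str, list[str]]:
    """Return ('local', matched_entities) or ('global', []) for a question."""
    q = question.lower()
    mentioned = [e for e in known_entities if re.search(rf"\b{re.escape(e.lower())}\b", q)]
    if mentioned and not any(cue in q for cue in GLOBAL_CUES):
        return "local", sorted(mentioned)  # entity traversal starts from these nodes
    return "global", []                    # answer from community summaries


entities = {"Acme Pharma", "Metformin", "FDA"}
print(route("Which trials did Acme Pharma run with Metformin?", entities))
# ('local', ['Acme Pharma', 'Metformin'])
print(route("What are the main themes in this dataset?", entities))
# ('global', [])
```

Returning the matched entities alongside the route is a deliberate choice: local search needs them as entry points into the graph.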

“Combining RAG with a knowledge graph cut LinkedIn’s median per-issue resolution time by 28.6%.”

LinkedIn’s engineering team published results showing that combining RAG with knowledge graphs raised retrieval accuracy and reduced the median per-issue resolution time by 28.6% in customer service. That is not a benchmark result; it comes from a system deployed with LinkedIn’s customer service team.

Real-World Use Cases Across Industries

Enterprises deploy LLM-built knowledge graphs for customer service (reducing resolution times via GraphRAG), drug discovery (linking genes, compounds, and trials), supply chain intelligence (tracing multi-tier vendor relationships), and fraud detection (surfacing hidden entity connections across transaction graphs).

Customer Service and Support. Connect product documentation, CRM tickets, engineering runbooks, and historical resolutions into one graph. When a support agent or AI assistant asks “What caused similar outages last quarter?”, the graph traverses entity relationships across silos rather than guessing from keyword similarity.

Life Sciences and Pharma. Drug discovery requires linking genes, proteins, compounds, disease pathways, and clinical trial outcomes, exactly the multi-hop relationship structure knowledge graphs handle natively. Organizations implementing KGs in this domain report that graph infrastructure enables multi-hop research queries that would be prohibitively expensive to run over flat data.

Financial Services and Fraud. Knowledge graph implementations reduce compliance investigation costs by 40% in banking, and businesses using knowledge graphs report a 20% increase in cross-selling efficiency. Fraud rings are relationship structures; graph traversal finds them where SQL joins stop.
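To make the fraud-ring point concrete: accounts that share identifiers (a phone number, device, or address) form connected components in an account-identifier graph, and a component with several accounts is a candidate ring. A stdlib-only sketch on made-up data; a real system would add edge weights, time windows, and the transaction edges themselves.

```python
from collections import defaultdict, deque

# Hypothetical account -> identifiers map (phones, devices, addresses).
accounts = {
    "acct1": {"phone:555-0101", "device:D9"},
    "acct2": {"device:D9", "addr:12 Elm St"},
    "acct3": {"addr:12 Elm St"},
    "acct4": {"phone:555-0199"},
}


def candidate_rings(accounts: dict[str, set[str]], min_size: int = 3) -> list[set[str]]:
    """Connected components of accounts linked through shared identifiers."""
    by_identifier = defaultdict(set)
    for acct, ids in accounts.items():
        for ident in ids:
            by_identifier[ident].add(acct)

    seen, rings = set(), []
    for start in accounts:
        if start in seen:
            continue
        component, queue = set(), deque([start])
        seen.add(start)
        while queue:  # breadth-first traversal: account -> identifier -> account
            acct = queue.popleft()
            component.add(acct)
            for ident in accounts[acct]:
                for neighbor in by_identifier[ident]:
                    if neighbor not in seen:
                        seen.add(neighbor)
                        queue.append(neighbor)
        if len(component) >= min_size:
            rings.append(component)
    return rings


print([sorted(r) for r in candidate_rings(accounts)])  # [['acct1', 'acct2', 'acct3']]
```

No single pair of these accounts looks suspicious in a row-by-row join; acct1 and acct3 share nothing directly and are linked only through acct2.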

Supply Chain and Manufacturing. LLM-constructed KGs built from supplier contracts, logistics reports, and news feeds provide early warning of disruptions by surfacing second- and third-tier supplier dependencies that no single database tracks.
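Surfacing second- and third-tier dependencies is a bounded breadth-first traversal over “supplies” edges. A minimal sketch with hypothetical supplier names; in a real graph these edges would come from the extracted contracts and reports.

```python
from collections import deque

# Hypothetical extracted edges: company -> its direct suppliers.
SUPPLIES = {
    "OurCo": ["BoardMaker", "CaseMaker"],
    "BoardMaker": ["ChipFab"],
    "CaseMaker": ["ResinCo"],
    "ChipFab": ["WaferCo", "ResinCo"],
}


def supplier_tiers(root: str, max_tier: int = 3) -> dict[str, int]:
    """Map each upstream supplier to its shortest tier distance from root."""
    tiers, queue = {}, deque([(root, 0)])
    while queue:
        company, tier = queue.popleft()
        if tier == max_tier:
            continue  # do not expand beyond the requested depth
        for supplier in SUPPLIES.get(company, []):
            if supplier not in tiers and supplier != root:
                tiers[supplier] = tier + 1
                queue.append((supplier, tier + 1))
    return tiers


tiers = supplier_tiers("OurCo")
print(tiers)
# {'BoardMaker': 1, 'CaseMaker': 1, 'ChipFab': 2, 'ResinCo': 2, 'WaferCo': 3}
```

ResinCo appears at tier 2 behind CaseMaker and again behind ChipFab: a shared upstream dependency that neither direct supplier’s records would reveal on their own.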

Code Snippet 1 – GraphRAG Entity Extraction Prompt

Source: microsoft/graphrag – graphrag/index/graph/extractors/graph/graph_extractor.py

What this shows: The exact prompt template that drives GraphRAG’s entity and relation extraction step. Each document chunk is passed to an LLM with this instruction, and the structured delimited output is then parsed into graph triples. The tuple_delimiter pattern makes parsing deterministic and avoids JSON parsing failures on long outputs. For developers, this is the template to adapt when building domain-specific extraction for healthcare, legal, or financial data; simply replace the entity_types list.
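The parsing side of that delimited-output design can be sketched as below. It assumes GraphRAG-style records, `("entity"<|>NAME<|>TYPE<|>DESCRIPTION)` and `("relationship"<|>SOURCE<|>TARGET<|>DESCRIPTION<|>STRENGTH)`, separated by `##` and terminated by `<|COMPLETE|>`. Treat those delimiter values and field orders as assumptions to check against the prompt version you actually use; this is an illustration, not GraphRAG’s own parser.

```python
TUPLE_DELIM, RECORD_DELIM, COMPLETION_DELIM = "<|>", "##", "<|COMPLETE|>"


def parse_records(output: str) -> tuple[list[dict], list[dict]]:
    """Turn delimited LLM output into entity and relationship dicts."""
    entities, relationships = [], []
    body = output.replace(COMPLETION_DELIM, "")
    for record in body.split(RECORD_DELIM):
        record = record.strip().removeprefix("(").removesuffix(")")
        fields = [f.strip().strip('"') for f in record.split(TUPLE_DELIM)]
        if fields[0] == "entity" and len(fields) >= 4:
            entities.append({"name": fields[1], "type": fields[2], "description": fields[3]})
        elif fields[0] == "relationship" and len(fields) >= 5:
            try:
                strength = float(fields[4])
            except ValueError:
                strength = 1.0  # assumption: default weight when the model omits a number
            relationships.append({"source": fields[1], "target": fields[2],
                                  "description": fields[3], "strength": strength})
        # Blank or malformed records are skipped rather than failing the whole chunk.
    return entities, relationships


sample = (
    '("entity"<|>ACME PHARMA<|>ORGANIZATION<|>Maker of drug X)##'
    '("entity"<|>DRUG X<|>DRUG<|>Experimental compound)##'
    '("relationship"<|>ACME PHARMA<|>DRUG X<|>Acme develops drug X<|>9)'
    "<|COMPLETE|>"
)
ents, rels = parse_records(sample)
print([e["name"] for e in ents], rels[0]["strength"])  # ['ACME PHARMA', 'DRUG X'] 9.0
```

Because each record is parsed independently, one malformed line costs one triple instead of the entire chunk, which is the practical advantage over a single JSON document.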

Choosing Your Stack – Tools, Frameworks, and Databases

The tooling landscape matured rapidly in 2024–2025. Your choice depends on document volume, budget, schema requirements, and team familiarity.

| Approach / Tool | Key Strength | Best Used When |
|---|---|---|
| Microsoft GraphRAG | Global + local query modes; community-aware hierarchical summaries | Large private document corpora; enterprise Q&A over internal knowledge bases |
| Neo4j LLM Graph Builder | End-to-end UI + API; multi-source ingestion (PDF, YouTube, S3, web); LangChain native | Rapid prototyping; teams already running Neo4j; need a polished user interface |
| LightRAG | 10× token reduction vs. GraphRAG; comparable accuracy via dual-level retrieval | High-volume pipelines (1,500+ docs/month); cost-sensitive production environments |
| Schema-based custom pipeline | Maximum semantic consistency; human-aligned ontology; full auditability | Regulated industries (healthcare, finance) where schema compliance is non-negotiable |

“LLM knowledge graph construction reached production maturity in 2024–2025, with organizations achieving 300–320% ROI across finance, healthcare, and manufacturing.”

The average ROI for enterprise graph database projects is 348% over three years. That figure reflects production deployments, not pilots. But ROI depends heavily on matching the tool to the use case: GraphRAG’s upfront indexing cost is prohibitive for small, frequently updated datasets, where LightRAG or a custom schema-based pipeline can deliver comparable results at a fraction of the cost.
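Assuming these figures use the standard ROI definition (net benefit divided by cost), a 348% three-year ROI means total benefits of roughly 4.5 times the project cost:

```latex
\mathrm{ROI} = \frac{B - C}{C}, \qquad \mathrm{ROI} = 3.48 \;\Longrightarrow\; B = 4.48\,C
```

where $B$ is the total benefit over the period and $C$ the total cost.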

Code Snippet 2 – Neo4j LLM Graph Builder: Extraction + Storage Chain

Source: neo4j-labs/llm-graph-builder – backend/src/graph_query.py

What this shows: The complete LangChain extraction-to-storage pipeline in under 30 lines. The LLMGraphTransformer handles the structured prompting; Neo4jGraph.add_graph_documents writes the result via Cypher with full provenance tracking. For a CTO evaluating build-vs-buy: this is what “buy and configure” looks like on the Neo4j stack. The allowed_nodes list is where you inject your domain ontology.
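For readers without a Neo4j instance at hand, the write side can be approximated as a function that filters extracted nodes by an `allowed_nodes` set and turns the rest into parameterized Cypher `MERGE` statements. This is a hypothetical illustration of the idea, not LangChain’s `add_graph_documents` implementation. One detail it does reflect: Cypher cannot take labels or relationship types as parameters, so they are validated before being interpolated into the query string.

```python
import re

SAFE_IDENT = re.compile(r"^[A-Za-z_][A-Za-z0-9_]*$")


def to_cypher(nodes: list[dict], relationships: list[dict],
              allowed_nodes: set[str]) -> list[tuple[str, dict]]:
    """Build (query, params) pairs; values are parameters, labels are validated text."""
    statements = []
    kept = {n["id"]: n["type"] for n in nodes if n["type"] in allowed_nodes}
    for node_id, label in kept.items():
        if not SAFE_IDENT.match(label):
            raise ValueError(f"unsafe label: {label!r}")
        statements.append((f"MERGE (n:`{label}` {{id: $id}})", {"id": node_id}))
    for rel in relationships:
        if rel["source"] not in kept or rel["target"] not in kept:
            continue  # an endpoint was filtered out by allowed_nodes
        if not SAFE_IDENT.match(rel["type"]):
            raise ValueError(f"unsafe relationship type: {rel['type']!r}")
        src_label, dst_label = kept[rel["source"]], kept[rel["target"]]
        query = (f"MATCH (a:`{src_label}` {{id: $src}}), (b:`{dst_label}` {{id: $dst}}) "
                 f"MERGE (a)-[:`{rel['type']}`]->(b)")
        statements.append((query, {"src": rel["source"], "dst": rel["target"]}))
    return statements


nodes = [{"id": "Acme Pharma", "type": "Organization"},
         {"id": "Drug X", "type": "Drug"},
         {"id": "Q3 report", "type": "Document"}]
rels = [{"source": "Acme Pharma", "target": "Drug X", "type": "DEVELOPS"},
        {"source": "Q3 report", "target": "Drug X", "type": "MENTIONS"}]
for query, params in to_cypher(nodes, rels, allowed_nodes={"Organization", "Drug"}):
    print(query, params)
```

`MERGE` rather than `CREATE` makes re-ingestion idempotent: running the same chunk twice does not duplicate nodes. In a live setup each pair would be executed through the Neo4j driver, for example with `session.run(query, params)`.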

Implementation Guidance – From Prototype to Production

A production LLM knowledge graph pipeline requires four components: a document ingestion layer, an LLM extraction step, a graph database, and a query interface with hybrid retrieval. Start with a schema-guided approach in regulated domains; use schema-free for open discovery.

Step 1: Define your ontology. For a regulated domain, list 6–10 entity types and 8–15 relation labels. For open discovery, define only the 3–4 types you are certain about and let the LLM generate the rest. Validate the list against your Competency Questions, then pass the types as allowed_nodes (and the relation labels as allowed_relationships) in your transformer config.

Step 2: Chunk and embed source documents. Chunk size matters. GraphRAG uses 300–600 token chunks by default. Smaller chunks increase entity precision; larger chunks capture more contextual relation evidence. Overlap of 10–15% reduces boundary truncation errors.
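A minimal chunker sketch, using whitespace-separated words as a stand-in for model tokens (an assumption: real pipelines count tokens with the model’s tokenizer). Overlap is expressed as a fraction, so values of 0.10–0.15 match the range above.

```python
def chunk_text(text: str, chunk_size: int = 400, overlap_ratio: float = 0.1) -> list[str]:
    """Split text into overlapping windows of roughly chunk_size 'tokens'."""
    if not 0 <= overlap_ratio < 1:
        raise ValueError("overlap_ratio must be in [0, 1)")
    tokens = text.split()  # stand-in tokenizer: whitespace-separated words
    step = max(1, round(chunk_size * (1 - overlap_ratio)))
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(" ".join(tokens[start:start + chunk_size]))
        if start + chunk_size >= len(tokens):
            break  # this window already reaches the end of the document
    return chunks


doc = " ".join(f"w{i}" for i in range(1000))
chunks = chunk_text(doc, chunk_size=400, overlap_ratio=0.1)
print(len(chunks), chunks[1].split()[0])  # 3 w360
```

With 400-token windows and 10% overlap, each chunk starts 360 tokens after the previous one, so the last 40 tokens of one chunk reappear at the start of the next and a relation stated across the boundary is still seen whole at least once.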

Step 3: Configure LLM extraction. GPT-4o or Claude deliver the best relation accuracy, but they are expensive at volume. Zhu et al. (2024) found that GPT-4 is “more suited as an inference assistant rather than a few-shot information extractor”, which suggests a split: fine-tune a smaller model (Llama 3.1 8B via QLoRA) for high-volume extraction, and reserve GPT-4 class models for reasoning and disambiguation. This combination cuts extraction costs by 60–80% at scale.
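How large the saving is depends on how much of the token volume the small model absorbs. A back-of-the-envelope sketch, assuming the fine-tuned model costs about one-tenth as much per token as the large model (the 10× figure quoted in this article’s FAQ); the routing shares are illustrative.

```python
def blended_cost_ratio(small_share: float, small_relative_cost: float) -> float:
    """Cost of a mixed pipeline relative to sending every token to the large model.

    small_share: fraction of extraction tokens routed to the fine-tuned small model.
    small_relative_cost: small-model cost per token divided by large-model cost per token.
    """
    return small_share * small_relative_cost + (1 - small_share)


for share in (0.7, 0.8, 0.9):
    saving = 1 - blended_cost_ratio(share, 0.1)
    print(f"{share:.0%} of tokens on the small model -> {saving:.0%} saving")
# prints savings of 63%, 72% and 81%
```

Under this assumption, the 60–80% range corresponds to routing roughly 70–90% of extraction tokens to the small model.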

Step 4: Load to graph database and run community detection. Neo4j, Amazon Neptune, Kuzu, and FalkorDB all support LangChain integration. After initial ingestion, run the Leiden algorithm to detect communities, then prompt the LLM to summarize each one. This is a one-time cost per indexing cycle, not per query.
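The shape of this step can be sketched without a graph library. Leiden itself is more involved (it refines Louvain-style modularity optimization so that communities are guaranteed to be well connected), so this sketch swaps in simple synchronous label propagation as a stand-in and passes each community to a hypothetical `summarize` function where the LLM call would go. A production pipeline would call a real Leiden implementation instead.

```python
from collections import Counter, defaultdict
from itertools import combinations


def label_propagation(edges: list[tuple[str, str]], max_iter: int = 20) -> list[set[str]]:
    """Synchronous label propagation, used here as a simple stand-in for Leiden.

    Each node adopts the most common label among itself and its neighbors;
    ties go to the alphabetically smallest label so results are deterministic.
    """
    neighbors = defaultdict(set)
    for a, b in edges:
        neighbors[a].add(b)
        neighbors[b].add(a)
    labels = {node: node for node in neighbors}
    for _ in range(max_iter):
        new_labels = {}
        for node in sorted(neighbors):
            counts = Counter(labels[n] for n in neighbors[node])
            counts[labels[node]] += 1  # a node's own label also gets a vote
            best = max(counts.values())
            new_labels[node] = min(label for label, c in counts.items() if c == best)
        if new_labels == labels:
            break  # converged: no node changed its label
        labels = new_labels
    communities = defaultdict(set)
    for node, label in labels.items():
        communities[label].add(node)
    return sorted(communities.values(), key=min)


def summarize(members: set[str]) -> str:
    """Hypothetical placeholder: a real pipeline would prompt the LLM here."""
    return f"Community of {len(members)} entities: {', '.join(sorted(members))}"


# Two tightly connected groups joined by a single bridge edge (D-E).
edges = list(combinations("ABCD", 2)) + list(combinations("EFGH", 2)) + [("D", "E")]
for community in label_propagation(edges):
    print(summarize(community))
```

On this toy graph the two groups separate cleanly despite the bridge edge; the summaries are computed once per indexing run and reused by every global query.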

Step 5: Deploy query interface with hybrid retrieval. Combine semantic vector search (for initial entity lookup) with graph traversal (for relationship hops) and BM25 keyword search (for high-precision named entity retrieval). Graphiti by Zep implements exactly this three-mode hybrid retrieval with incremental graph updates, making it well-suited for AI agent memory that evolves in real time.
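One common way to merge the three result lists is reciprocal rank fusion (RRF), which scores each item by the sum of 1/(k + rank) over the lists it appears in. The sketch uses RRF with the conventional k = 60 as an illustrative fusion choice; the three input rankings are made up, and nothing here reproduces Graphiti’s API.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[tuple[str, float]]:
    """Fuse several ranked lists of IDs; higher fused score ranks first."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, item in enumerate(ranking, start=1):
            scores[item] = scores.get(item, 0.0) + 1.0 / (k + rank)
    return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))  # ties broken by ID


vector_hits = ["acme_pharma", "drug_x", "fda"]   # semantic similarity
bm25_hits = ["drug_x", "trial_042"]              # exact keyword match
graph_hits = ["trial_042", "drug_x", "dr_lee"]   # hops from the entry-point entity
fused = reciprocal_rank_fusion([vector_hits, bm25_hits, graph_hits])
print([item for item, _ in fused][:3])  # ['drug_x', 'trial_042', 'acme_pharma']
```

RRF only uses ranks, so it sidesteps the problem that cosine similarities, BM25 scores, and hop counts live on incompatible scales. Items that several retrievers agree on, like `drug_x`, rise to the top.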

Frequently Asked Questions

How does LLM knowledge graph construction differ from traditional NLP pipelines? Traditional pipelines use rule-based or supervised models trained on labeled data for each entity type and relation. LLM-based construction uses prompt engineering and few-shot examples to extract entities and relations from raw text without retraining. This reduces time-to-first-graph from months to days and generalizes across domains without annotated datasets, though it requires careful prompt design to maintain consistency.

What is GraphRAG and how does it reduce LLM hallucinations? GraphRAG is Microsoft’s graph-based retrieval-augmented generation approach. It pre-builds a knowledge graph and community summaries from a document corpus. At query time, the LLM receives structured graph context (extracted entities, their relationships, and community summaries) rather than raw text chunks. This grounds the model’s response in structured facts that trace back to source documents, reducing hallucinations by supplying a verifiable source of truth that vector search alone cannot provide.

Should I use a knowledge graph or a vector database for enterprise AI? Use both. Vector databases excel at semantic similarity retrieval for unstructured content. Knowledge graphs excel at relationship traversal, multi-hop reasoning, and whole-corpus understanding. Production GraphRAG implementations use vector search to find the entry-point entity, then traverse the graph to gather contextual relationships. The two are complementary layers, not competing alternatives.

Which LLM works best for knowledge graph extraction? GPT-4o and Claude 3.5/4 class models deliver the best relation extraction accuracy for schema-constrained pipelines. For high-volume extraction at lower cost, fine-tuned Llama 3.1 8B (via QLoRA on domain-specific triples) achieves 80–90% of GPT-4 quality at 10× lower inference cost. The Frontiers in Big Data study (2025) found seven-shot prompting consistently outperforms zero-shot for relation extraction across open-source and proprietary models.

How long does it take to build a production knowledge graph with LLMs? A working prototype on a 1,000-document corpus takes two to three days with Neo4j LLM Graph Builder or GraphRAG. Production deployment, including schema validation, entity disambiguation, community detection, and a query interface, typically requires four to eight weeks for a team of two engineers. Ongoing maintenance is minimal if you use an incremental update system like Graphiti, which adds new data to the graph in real time without full reindexing.

The Knowledge Graph Is the Foundation Your AI Stack Needs

Three insights define where this technology stands in 2026. First, the productivity case is settled: LLM-built knowledge graphs cut annotation time, reduce hallucinations, and deliver measurable ROI (300–320% for mature deployments). Second, the tooling is production-ready: GraphRAG, Neo4j LLM Graph Builder, and LightRAG give teams a clear path from prototype to production without building pipelines from scratch. Third, the architecture choice (schema-based versus schema-free, GraphRAG versus LightRAG) depends entirely on your document volume, domain rigidity, and cost tolerance. Get that match right early, and you avoid expensive refactoring.

The question every CTO should now be asking is not “should we build a knowledge graph?”; it is “how many months of unstructured data value are we leaving on the table without one?”