What is Generative AI?

Generative AI refers to machine learning systems that produce original content (text, code, images, or structured data) by learning statistical patterns from large datasets. In enterprise contexts, it applies across use cases from automated code generation and clinical decision support to financial risk modeling and customer service automation. Unlike traditional AI that classifies or predicts, generative AI creates, reasons, and increasingly acts.

The $4.4 Trillion Reshaping Nobody Planned For

Generative AI is reshaping industries by automating knowledge work at scale. McKinsey (2024) estimates the technology could add between $2.6 trillion and $4.4 trillion annually to global economic output, a range whose upper end is roughly the size of Germany’s entire GDP. The critical shift happening in 2025 is from experimentation to enterprise-wide deployment.

The numbers support this urgency. Gartner (2025) forecasts worldwide gen AI spending will hit $644 billion in 2025, a 76.4% year-over-year jump. Meanwhile, McKinsey’s State of AI survey reports that 78% of organizations now deploy AI in at least one business function, up from 55% just one year earlier. Adoption is no longer a differentiator. How you deploy is.

The performance gap is already visible. Companies that have redesigned workflows around generative AI achieve measurable gains in revenue and productivity. Those stuck in pilot mode are falling behind. For software teams and CTOs, the question is not whether to build but what to build first.

“The companies winning with generative AI are not the ones with the best models. They are the ones with the clearest workflows.”

Five Industries Where Generative AI Is Creating a Performance Gap

Generative AI is delivering measurable advantage in healthcare, financial services, software engineering, retail, and legal services. Accenture (2024) found that companies with fully modernized, AI-led processes achieve 2.5x higher revenue growth and 3.3x greater success scaling gen AI use cases compared to their peers.

Healthcare: From Drug Discovery Compression to Clinical Summarization

AI-assisted drug discovery is compressing timelines that once took decades. Accenture’s research shows that generative AI could dramatically shorten drug development cycles by surfacing molecular structures and clinical correlations faster than human researchers alone. On the operational side, large hospital systems are deploying gen AI to auto-summarize clinical notes, reducing documentation time by 30–40% per shift. The constraint is not the technology; it is data governance and regulatory approval pathways.

Financial Services: Risk Modeling, Fraud Detection, and Contract Automation

Financial institutions are using gen AI to synthesize regulatory documents, generate risk scenario narratives, and flag anomalous transaction patterns in near real time. Accenture’s 2024 financial services analysis shows that generative AI now accounts for roughly 12% of technology budget in leading firms, with that share expected to grow to 16% by end of 2025. JPMorgan Chase’s coding assistant boosted engineering productivity by 10–20% across tens of thousands of developers.

Software Engineering: Code Generation, Test Automation, and Incident Response

Software engineering is the function where gen AI delivers the most immediate, measurable output. Accenture (2024) reports that generative AI reduces software development time by up to 55% in early deployments. GitHub Copilot and similar tools accelerate code completion, but the real gains come from AI-assisted test generation, PR review automation, and incident triage, work that used to require senior engineers.

The Architecture Stack Every Engineering Team Needs to Understand

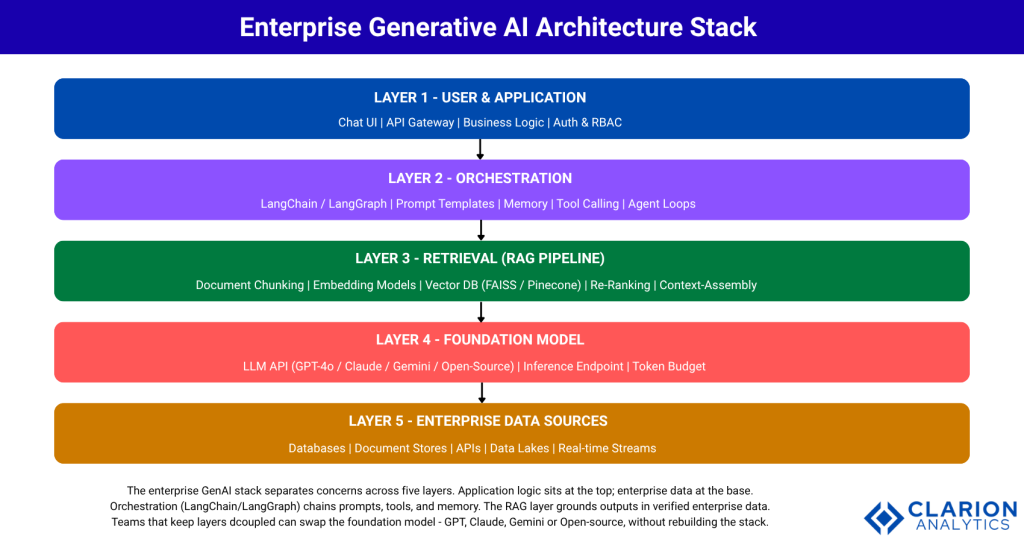

Enterprise generative AI deployments rely on a layered architecture: a user and application layer, an orchestration layer, a retrieval layer (RAG or fine-tuning), a foundation model layer, and enterprise data sources. Choosing the right combination of these layers determines both output accuracy and total cost of ownership.

In practice, teams building this typically find that the orchestration and retrieval layers are where most of the engineering effort lands. The foundation model itself is almost a commodity decision. What differentiates a good enterprise system is how cleanly the retrieval pipeline grounds model outputs in verified data, and how robustly the orchestration layer handles errors, tool calls, and memory.

Code Snippet 1 – Provider-agnostic model initialization (Source: langchain-ai/langchain)

This snippet demonstrates LangChain’s provider-agnostic interface. A CTO evaluating vendor lock-in risk can see immediately that swapping from GPT to Claude to an open-source model requires changing a single string, not rewriting the application layer.
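The single-string swap can be sketched without any framework. The example below is a minimal, dependency-free illustration and not LangChain's API: `ChatModel`, `init_model`, the `provider:model` string format, and the model names are all hypothetical, and `invoke` only echoes its input where a real client would call a provider.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class ChatModel:
    """Hypothetical stand-in for a chat model client (not a real SDK)."""
    provider: str
    name: str

    def invoke(self, prompt: str) -> str:
        # A real client would call the provider's API here; this stub echoes.
        return f"[{self.provider}/{self.name}] {prompt}"


# Registry mapping a provider prefix to a factory. All entries are illustrative.
_PROVIDERS: Dict[str, Callable[[str], ChatModel]] = {
    "openai": lambda name: ChatModel("openai", name),
    "anthropic": lambda name: ChatModel("anthropic", name),
    "local": lambda name: ChatModel("local", name),
}


def init_model(spec: str) -> ChatModel:
    """Build a model from a 'provider:model-name' string."""
    provider, sep, name = spec.partition(":")
    if not sep or not name:
        raise ValueError(f"expected 'provider:model', got {spec!r}")
    if provider not in _PROVIDERS:
        raise ValueError(f"unknown provider {provider!r}")
    return _PROVIDERS[provider](name)


def summarize(model: ChatModel, text: str) -> str:
    """Application code depends only on .invoke(), never on the provider."""
    return model.invoke(f"Summarize: {text}")


if __name__ == "__main__":
    # Swapping vendors changes only the spec string, not summarize().
    for spec in ("openai:model-a", "anthropic:model-b", "local:model-c"):
        print(summarize(init_model(spec), "Q3 incident report"))
```

The design point is that `summarize` never imports or names a vendor, so the lock-in surface is confined to the registry and the configuration string.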

The enterprise GenAI stack separates concerns across five layers. Application logic sits at the top; enterprise data at the base. The orchestration layer (LangChain/LangGraph) chains prompts, tools, and memory. The RAG layer grounds outputs in verified enterprise data, reducing hallucinations without requiring model retraining. Teams that keep layers decoupled can swap the foundation model (GPT, Claude, Gemini, or any open-source alternative) without rebuilding the full system.

“Architecture choice (RAG, fine-tuning, or agentic) determines value realization. Model selection is a secondary decision.”

RAG vs Fine-Tuning vs Agentic AI: Choosing the Right Pattern

RAG is best when enterprise knowledge changes frequently. Fine-tuning suits narrow, stable tasks requiring a specific output style. Agentic AI is the right choice when completing a goal requires multiple decisions, tool calls, and conditional logic. Gartner (2025) projects that by 2028, 33% of enterprise software will incorporate agentic capabilities, up from less than 1% in 2024.

| Approach | Key Strength | Best Used When |
|---|---|---|
| RAG (Retrieval-Augmented Generation) | Grounds outputs in live enterprise data; reduces hallucinations without retraining | Knowledge is large, dynamic, and requires source attribution (legal, finance, support) |
| Fine-Tuning | Produces highly consistent style and format; lower inference latency per call | Task is narrow, stable, and requires specific tone or structured output (code completions, form extraction) |
| Agentic AI (Multi-step Orchestration) | Autonomously plans, calls tools, and completes multi-step goals across systems | Workflows span multiple systems, require sequential decisions, or involve real-world actions (procurement, DevOps automation) |

Code Snippet 2 – Minimal RAG pipeline (Source: langchain-ai/langchain)

This snippet shows a complete minimal RAG pipeline in roughly 12 lines. Documents are chunked, embedded into FAISS, and the retriever pulls the top-4 most relevant chunks before passing them to the LLM. The model answers using only the retrieved context, reducing hallucinations and grounding every response in enterprise data.
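The chunk, embed, retrieve, and answer steps can be sketched with the standard library alone. This is a toy under stated assumptions, not the LangChain and FAISS pipeline described above: the word-count `embed` function stands in for a real embedding model, a plain Python list stands in for the vector index, and the final LLM call is left out, so the sketch stops at the grounded prompt. All function names and sample documents are invented for illustration.

```python
import math
import re
from collections import Counter
from typing import List, Tuple


def chunk(text: str, size: int = 12) -> List[str]:
    """Split text into chunks of at most `size` words."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (stand-in for a real model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_index(docs: List[str], size: int = 12) -> List[Tuple[str, Counter]]:
    """Chunk every document and store each chunk with its embedding."""
    return [(c, embed(c)) for d in docs for c in chunk(d, size)]


def retrieve(query: str, index: List[Tuple[str, Counter]], k: int = 4) -> List[str]:
    """Return the k chunks most similar to the query."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


def build_prompt(query: str, context: List[str]) -> str:
    """Assemble a prompt that restricts the model to the retrieved context."""
    joined = "\n".join(f"- {c}" for c in context)
    return (
        "Answer using only the context below. "
        "If the answer is not there, say so.\n"
        f"Context:\n{joined}\nQuestion: {query}"
    )


if __name__ == "__main__":
    docs = [
        "Refunds are processed within 14 days of a return request.",
        "VPN access requires a hardware security key.",
        "Expense reports are due on the fifth business day of each month.",
    ]
    index = build_index(docs)
    question = "How long do refunds take?"
    print(build_prompt(question, retrieve(question, index, k=2)))
```

In a production system the list scan in `retrieve` would be replaced by a vector store lookup and `build_prompt`'s output would be sent to the model; the shape of the pipeline stays the same.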

“The RAG layer is where most enterprise gen AI value is won or lost, not in model selection.”

From Pilot to Production: The Implementation Playbook

Most organizations fail to scale gen AI because they treat it as a technology project rather than a workflow redesign. Deloitte (2024) found that only organizations that fundamentally redesign workflows achieve sustainable EBIT impact. Of the 2,773 leaders surveyed, nearly three-quarters reported their most advanced gen AI initiative meeting or exceeding ROI expectations, but only when the effort extended beyond the proof-of-concept phase.

The FACTS framework (Freshness, Architectures, Cost, Testing, Security), developed by NVIDIA researchers (2024) from building three internal enterprise chatbots, identifies 15 control points where RAG pipelines fail in production. The most common failure modes are stale data in the vector index, prompt injection vulnerabilities, and the absence of latency budgets, none of which surface in a demo environment.

Four principles consistently separate teams that ship from those that stay in pilot: (1) pick one high-value, narrow workflow and automate it end-to-end before expanding; (2) treat the retrieval pipeline as production infrastructure, not a notebook; (3) instrument every model call with token-cost and latency metrics from day one; (4) define a human-in-the-loop escalation path before anything goes live. Johnson & Johnson’s experience, where just 10–15% of nearly 900 gen AI projects delivered 80% of the value, confirms that focus beats breadth.
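Principle (3) can be sketched as a thin wrapper around each model call. The example is a minimal illustration under loud assumptions: tokens are approximated by whitespace-separated words (real tokenizers count differently), `PRICE_PER_1K_TOKENS` is a made-up flat rate, and `Meter`, `CallRecord`, and `fake_model` are hypothetical names.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, List

PRICE_PER_1K_TOKENS = 0.002  # hypothetical flat price in USD, not a real rate


def count_tokens(text: str) -> int:
    """Rough token proxy: whitespace-separated words."""
    return len(text.split())


@dataclass
class CallRecord:
    label: str
    tokens: int
    cost_usd: float
    latency_s: float


@dataclass
class Meter:
    """Collects cost and latency for every model call routed through it."""
    records: List[CallRecord] = field(default_factory=list)

    def track(self, label: str, fn: Callable[[str], str], prompt: str) -> str:
        start = time.perf_counter()
        output = fn(prompt)
        latency = time.perf_counter() - start
        tokens = count_tokens(prompt) + count_tokens(output)
        cost = tokens / 1000 * PRICE_PER_1K_TOKENS
        self.records.append(CallRecord(label, tokens, cost, latency))
        return output

    def total_cost(self) -> float:
        return sum(r.cost_usd for r in self.records)

    def over_budget(self, latency_budget_s: float) -> List[CallRecord]:
        """Calls that exceeded the latency budget."""
        return [r for r in self.records if r.latency_s > latency_budget_s]


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call."""
    return "ok done"


if __name__ == "__main__":
    meter = Meter()
    meter.track("summarize", fake_model, "one two three")
    print(meter.records[0], meter.total_cost())
```

Routing every call through one meter makes per-workflow cost and latency budgets visible before a pilot scales, rather than after the first invoice.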

“Organizations that treat gen AI as a workflow redesign, not a technology project, are the ones achieving sustainable EBIT impact.”

Frequently Asked Questions

How is generative AI reshaping industries right now?

Generative AI is reshaping industries by automating knowledge work across healthcare, finance, software, retail, and legal services. Companies with AI-led processes already achieve 2.5x higher revenue growth than peers (Accenture, 2024). The shift from pilot to enterprise-wide deployment, driven by workflow redesign rather than model adoption alone, is the defining pattern of 2025.

What is the difference between RAG and fine-tuning for enterprise AI?

RAG grounds model outputs in live enterprise data without retraining, making it ideal for dynamic knowledge bases like legal or support documentation. Fine-tuning trains the model on domain-specific examples, producing consistent style and faster inference for narrow, stable tasks. Most enterprise teams start with RAG and add fine-tuning selectively where latency or format consistency is critical.

How do I choose between building a custom LLM and using a hosted API?

For the large majority of enterprise use cases, a hosted API (GPT, Claude, Gemini) combined with a robust RAG pipeline outperforms a custom-trained model on cost, speed, and maintenance burden. Custom models make sense only when data cannot leave your infrastructure, output format is highly specialized, or inference volume is large enough to justify the infrastructure cost, typically millions of requests per day.

How long does it take to go from a generative AI pilot to production?

A well-scoped gen AI pilot can reach production in 6–12 weeks if the workflow is narrow, the data is clean, and the team has vector database infrastructure in place. Most delays come from data preparation (chunking, cleaning, embedding), not model integration. Deloitte (2024) found that more than two-thirds of respondents reported that 30% or fewer of their experiments would be fully scaled in the next three to six months, largely due to data and governance friction.

What are the biggest risks of deploying generative AI in regulated industries?

The top risks in regulated industries are hallucinations that produce incorrect outputs which then inform decisions, data leakage via prompt injection or retrieval of unauthorized documents, and regulatory non-compliance around explainability and audit trails. Mitigations include Self-RAG architectures with output verification, role-based access control on the retrieval layer, and human-review workflows for high-stakes outputs. Governance must be designed in, not bolted on.
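Role-based access control on the retrieval layer can be sketched as a filter applied before ranking, so that unauthorized text never reaches the prompt. This is a minimal illustration, not a reference design: the `Doc` type, the role names, and the keyword-overlap scoring are all hypothetical, and a real system would enforce the same filter inside the vector store query.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Doc:
    text: str
    allowed_roles: FrozenSet[str]  # roles permitted to read this document


def authorized(docs: List[Doc], user_roles: FrozenSet[str]) -> List[Doc]:
    """Keep only documents that share at least one role with the user."""
    return [d for d in docs if d.allowed_roles & user_roles]


def retrieve_for_user(
    query: str, docs: List[Doc], user_roles: FrozenSet[str], k: int = 4
) -> List[Doc]:
    """Filter by role first, then rank what remains by keyword overlap."""
    visible = authorized(docs, user_roles)
    terms = set(query.lower().split())
    ranked = sorted(
        visible,
        key=lambda d: len(terms & set(d.text.lower().split())),
        reverse=True,
    )
    return ranked[:k]


if __name__ == "__main__":
    corpus = [
        Doc("salary bands for 2025", frozenset({"hr"})),
        Doc("refund policy for customers", frozenset({"support", "hr"})),
    ]
    # A support agent asking about salaries still sees only the refund policy.
    for doc in retrieve_for_user("salary refund policy", corpus, frozenset({"support"})):
        print(doc.text)
```

Filtering before ranking, rather than after, matters: a post-hoc filter still lets restricted documents influence which results are returned and risks leaking them through logs or caches.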

Three Things to Build Before Your Competitor Does

Three insights should drive your gen AI roadmap. First, the performance gap between AI-led and laggard organizations is already measurable and widening; 2.5x revenue growth is not a rounding error. Second, architecture choice, specifically how you design the retrieval and orchestration layers, determines value realization far more than which foundation model you choose. Third, workflow redesign is the non-negotiable unlock: organizations that bolt gen AI onto existing processes capture a fraction of the available value.

The open question is not whether your industry will be reshaped by generative AI. It already is. The question is whether your engineering team is building the retrieval pipelines, orchestration frameworks, and governance structures that turn a prototype into a production system that compounds in value over time.