Industry 5.0 is the next phase of industrial development that follows Industry 4.0. It reintegrates human creativity, judgment, and social value alongside advanced automation, rather than optimising for efficiency alone. Three interlocking pillars define it: human-centricity, resilience, and sustainability. Cobots, digital twins, edge AI, and explainable AI (XAI) form the enabling technology stack. Unlike its predecessor, Industry 5.0 measures success not only in throughput, but in worker wellbeing, product personalisation, and supply-chain anti-fragility.

The $255 Billion Shift: Why Human-Centricity Is the New Efficiency

Industry 5.0 human-machine collaboration is not a philosophical aspiration; it is a market reality. The global Industry 5.0 market is forecast to grow from $65.8 billion in 2024 to $255.7 billion by 2029 (31.2% CAGR). That growth is driven by one insight: machines working alongside humans outperform either working alone.
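The quoted growth rate can be checked from the two endpoint figures. The short sketch below assumes the forecast spans five compounding years (2024 to 2029); the function name `cagr` is illustrative.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.31 means 31%)."""
    return (end_value / start_value) ** (1 / years) - 1


# Endpoints taken from the forecast quoted above, in billions of dollars.
rate = cagr(65.8, 255.7, years=2029 - 2024)
print(f"Implied CAGR: {rate:.1%}")
```

The result agrees with the 31.2% figure to one decimal place, so the two endpoints and the stated rate are internally consistent.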

McKinsey (2025) estimates that AI agents and robots could generate roughly $2.9 trillion in US economic value per year by 2030, but only if organisations redesign workflows rather than simply bolt automation onto existing processes. That redesign is exactly what Industry 5.0 demands.

For software developers and CTOs, this creates both an urgent mandate and a concrete opportunity. The question is no longer whether to adopt AI and robotics. The question is how to architect systems that keep human judgment at the centre while letting machines handle scale, speed, and precision.

“Machines working alongside humans outperform either working alone, and the $255B market forecast reflects it.”

Industry 4.0 vs. Industry 5.0: What Actually Changed for Your Stack

Industry 4.0 optimised processes; Industry 5.0 optimises outcomes. The shift is from automation-first to human-first design, and that changes nearly every architectural decision.

Industry 4.0 gave us cyber-physical systems, IoT sensor networks, cloud analytics, and the connected factory. It was enormously productive. It was also, as ISG Research (2025) notes, somewhat silent on the human element. Robots replaced assembly workers; algorithms replaced middle managers. The implicit assumption was that efficiency and headcount reduction were the same thing.

Industry 5.0 rejects that assumption. A systematic review of 98 academic articles (Tandfonline, 2025) identifies three consensus themes: human-centricity, resilience, and sustainability. Cobots work beside humans rather than instead of them. Digital twins give operators a live virtual model they can interrogate. XAI dashboards explain model decisions in plain language so engineers can override them when context demands it.

| Dimension | Industry 4.0 | Industry 5.0 | Best Used When |
|---|---|---|---|
| Primary Goal | Throughput and cost reduction | Human wellbeing and sustainable value | Greenfield factory design or major re-fit |
| Human Role | Monitor and maintain machines | Strategic collaborator with machines | Complex, variable, or high-stakes tasks |
| Robot Type | Industrial arms (caged) | Cobots (force-limited, open floor) | Wherever human proximity is required |
| Data Use | Optimise machine KPIs | Augment human decision-making | When operator insight is scarce or slow |
| AI Approach | Predictive analytics, black-box ML | Explainable AI (XAI), human-in-the-loop | High-stakes or regulated environments |
| Risk Model | Reliability engineering | Anti-fragility and ethical governance | Supply chains or safety-critical processes |

The Technology Stack That Makes It Real

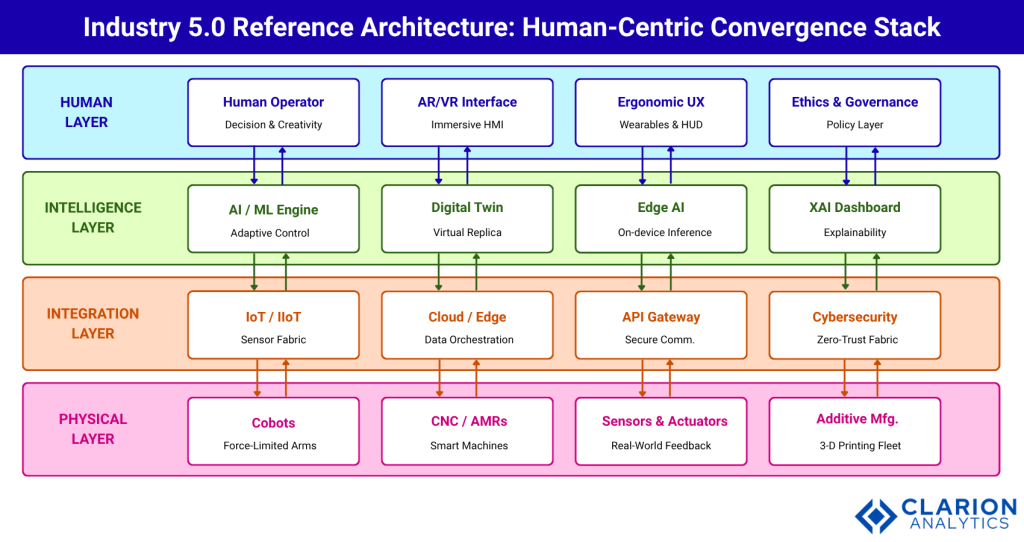

Four technology families define the Industry 5.0 stack: cobots, digital twins, edge AI, and XAI dashboards. Each layer amplifies, rather than replaces, human decision-making.

Collaborative robots (cobots) carry force-torque sensors that cut power on contact with a human hand. They share a workspace rather than occupying a cage. Digital twins create real-time virtual replicas of machines, cells, and entire factories, allowing operators to test interventions in simulation before applying them physically. Edge AI runs inference locally on the shop floor, eliminating cloud round-trip latency for safety-critical decisions. XAI dashboards translate model outputs into language that engineers can evaluate and, when necessary, override.

The architecture spans four layers: Physical (cobots, CNC machines, sensors, additive manufacturing), Integration (IoT fabric, cloud orchestration, API gateway, cybersecurity), Intelligence (AI/ML engine, digital twin, edge AI, XAI dashboard), and Human (operators, AR/VR interfaces, ergonomic UX, ethics and governance). Data flows bidirectionally between all four layers.

Figure 1: Industry 5.0 Reference Architecture, Human-Centric Convergence Stack. Physical-layer sensors feed real-time telemetry upward to the Integration Layer, which routes it to the Intelligence Layer. The Intelligence Layer surfaces decisions and alerts to the Human Layer via AR/VR interfaces and XAI dashboards. Human operators close the loop with override commands that flow back down the stack.
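The upward and downward paths through the four layers can be sketched in a few functions. This is a toy illustration, not a reference implementation: every name (`Telemetry`, `integrate`, `infer`, `human_decide`, `actuate`), the payload keys, and the 80 °C limit are assumptions made for the example, and a fixed threshold rule stands in for a real model.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Telemetry:
    """Physical layer output after normalisation."""
    machine_id: str
    temperature_c: float


@dataclass
class Recommendation:
    """Intelligence layer output shown to the operator."""
    machine_id: str
    action: str
    reason: str


def integrate(raw: dict) -> Telemetry:
    """Integration layer: normalise a raw sensor payload (keys are hypothetical)."""
    return Telemetry(machine_id=raw["id"], temperature_c=float(raw["temp_c"]))


def infer(t: Telemetry, limit_c: float = 80.0) -> Recommendation:
    """Intelligence layer: a placeholder rule standing in for a model."""
    if t.temperature_c > limit_c:
        reason = f"{t.temperature_c:.1f} C exceeds {limit_c:.1f} C"
        return Recommendation(t.machine_id, "slow_down", reason)
    return Recommendation(t.machine_id, "continue", "within limits")


def human_decide(rec: Recommendation, override: Optional[str] = None) -> str:
    """Human layer: an explicit operator override always wins."""
    return override if override is not None else rec.action


def actuate(machine_id: str, command: str) -> str:
    """Downward path: the final command returns to the physical layer."""
    return f"{machine_id}:{command}"


rec = infer(integrate({"id": "cnc-1", "temp_c": "85.5"}))
print(rec.reason)
print(actuate(rec.machine_id, human_decide(rec, override="continue")))
```

The point of the shape is that `human_decide` sits between inference and actuation, so no recommendation reaches the machine without passing through the human layer.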

Real-World Use Cases: Where Developers Are Shipping Today

Leading Industry 5.0 deployments appear in automotive cobot lines, pharmaceutical digital twins, and logistics AMR fleets. Every production deployment shares one trait: a human remains the final decision authority.

Automotive: Cobot-Assisted Final Assembly

BMW and similar OEMs deploy force-limited cobots for seat installation and wiring harness routing, tasks that require both strength and tactile judgment. The cobot carries the part; the human guides placement. Error rates drop and repetitive-strain injuries fall in parallel. The cobot adapts its path in real time when the human repositions the component.

Pharmaceutical: Digital Twin Quality Control

Biopharma manufacturers run virtual replicas of bioreactors alongside physical batches. Process scientists monitor pH drift in the twin before it registers on the physical vessel. When the twin signals an anomaly, a scientist reviews the AI recommendation and authorises a corrective feed adjustment. The FDA increasingly expects this kind of audit trail.

Logistics: Human-AMR Fleet Coordination

In e-commerce fulfilment centres, autonomous mobile robots (AMRs) handle bin transport while human pickers focus on exception handling, fragile items, and returns processing. Edge AI assigns tasks to AMRs based on picker location and workload. In practice, teams building such systems typically find that the handoff protocol (how the AMR signals that it is waiting) requires as much design effort as the routing algorithm.
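A minimal sketch of location-and-workload assignment with an explicit handoff state follows. The cost function, the 5 m workload penalty, the 1 m arrival radius, and all names are hypothetical choices for illustration; a production scheduler would also account for aisle topology, battery state, and priorities.

```python
import math
from dataclasses import dataclass
from enum import Enum


class AmrState(Enum):
    EN_ROUTE = "en_route"
    WAITING_FOR_PICKER = "waiting_for_picker"  # the explicit handoff signal


@dataclass
class Picker:
    name: str
    x: float
    y: float
    open_tasks: int


def assignment_cost(amr_xy, picker, workload_penalty_m=5.0):
    """Travel distance plus a penalty (in metres) per task the picker already holds."""
    distance = math.hypot(picker.x - amr_xy[0], picker.y - amr_xy[1])
    return distance + workload_penalty_m * picker.open_tasks


def choose_picker(amr_xy, pickers):
    """Pick the lowest-cost picker for this AMR."""
    return min(pickers, key=lambda p: assignment_cost(amr_xy, p))


def handoff_state(amr_xy, picker, arrival_radius_m=1.0):
    """The AMR announces it is waiting once it is within the arrival radius."""
    distance = math.hypot(picker.x - amr_xy[0], picker.y - amr_xy[1])
    if distance <= arrival_radius_m:
        return AmrState.WAITING_FOR_PICKER
    return AmrState.EN_ROUTE


pickers = [Picker("near_busy", 1.0, 0.0, open_tasks=3),
           Picker("far_idle", 10.0, 0.0, open_tasks=0)]
chosen = choose_picker((0.0, 0.0), pickers)
print(chosen.name, handoff_state((9.5, 0.0), chosen).value)
```

Making the waiting state a first-class value, rather than an implicit "robot has stopped", gives the picker-facing UI something unambiguous to display.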

“Every production Industry 5.0 deployment shares one trait: a human remains the final decision authority.”

Implementation Playbook: Getting from Zero to a Working Human-AI Loop

A three-phase Sense-Infer-Act approach lets teams ship a minimal human-in-the-loop system in under eight weeks, without waiting for a complete factory overhaul.

Phase 1: Sense – Connect Your Physical Assets

Attach IIoT sensors to the two or three machines most critical to throughput. Stream telemetry via MQTT or OPC-UA to an edge gateway. Store raw time-series data in a lightweight TSDB (TimescaleDB or InfluxDB). Validate data quality before building any model. "Garbage in, garbage out" applies doubly when human safety depends on the output.
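The data-quality step can start as three simple checks: range, gaps, and stuck sensors. The sketch below uses only the standard library; the `Reading` type, the issue labels, and the default run length of five identical values are assumptions for illustration, and real limits would come from the sensor datasheet.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    timestamp_s: float
    value: float


def quality_issues(readings, lo, hi, max_gap_s, stuck_run=5):
    """Return (index, issue) pairs for out-of-range values, time gaps, and stuck sensors."""
    issues = []
    run = 1  # length of the current run of identical values
    for i, r in enumerate(readings):
        # NaN fails both comparisons, so it is also reported as out of range.
        if not lo <= r.value <= hi:
            issues.append((i, "out_of_range"))
        if i > 0:
            prev = readings[i - 1]
            if r.timestamp_s - prev.timestamp_s > max_gap_s:
                issues.append((i, "gap"))
            run = run + 1 if r.value == prev.value else 1
            if run == stuck_run:  # report once, when the run first reaches the limit
                issues.append((i, "stuck"))
    return issues


sample = [Reading(0, 20.0), Reading(1, 20.5), Reading(5, 21.0), Reading(6, 150.0)]
print(quality_issues(sample, lo=0.0, hi=100.0, max_gap_s=2.0))
```

Running checks like these at the edge gateway, before data reaches the TSDB, keeps bad readings out of both the training set and the live inference path.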

Phase 2: Infer – Build the Digital Twin and Anomaly Detector

Start with a physics-informed baseline (e.g., a degradation curve from the equipment manufacturer’s spec sheet). Overlay a lightweight ML model trained on historical sensor data. The code snippet below, adapted from the open-source Javihaus/Digital-Twin-in-python framework, shows this hybrid pattern in practice.

Source: Javihaus/Digital-Twin-in-python – hybrid_digital_twin/core.py

The twin combines a physics degradation coefficient (physics_k) with a three-layer neural network. The predict_future() call returns a capacity trajectory that can trigger a maintenance alert 200+ cycles before failure, giving a human technician actionable lead time.
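The referenced listing is not reproduced in this text. As an independent illustration of the hybrid idea, and explicitly not the Javihaus code or its API, the sketch below pairs an exponential physics baseline with a data-driven residual correction. It swaps the three-layer neural network for a least-squares line to stay dependency-free; the function names, the exponential fade law, and the synthetic data are all assumptions.

```python
import math


def physics_capacity(cycle, c0, physics_k):
    """Physics baseline: exponential capacity fade (assumed form)."""
    return c0 * math.exp(-physics_k * cycle)


def fit_residual(cycles, measured, c0, physics_k):
    """Least-squares line through the (cycle, measured - physics) residuals."""
    res = [m - physics_capacity(c, c0, physics_k) for c, m in zip(cycles, measured)]
    n = len(cycles)
    mean_c, mean_r = sum(cycles) / n, sum(res) / n
    var = sum((c - mean_c) ** 2 for c in cycles)
    slope = sum((c - mean_c) * (r - mean_r) for c, r in zip(cycles, res)) / var
    return slope, mean_r - slope * mean_c


def predict_capacity(cycle, c0, physics_k, slope, intercept):
    """Hybrid prediction: physics baseline plus learned residual."""
    return physics_capacity(cycle, c0, physics_k) + slope * cycle + intercept


def cycles_until(threshold, start, c0, physics_k, slope, intercept, horizon=5000):
    """Cycles of lead time before predicted capacity drops below threshold, or None."""
    for c in range(start, start + horizon):
        if predict_capacity(c, c0, physics_k, slope, intercept) < threshold:
            return c - start
    return None


# Synthetic history: the asset fades slightly faster than the spec-sheet curve.
C0, K = 1.0, 0.0005
history = list(range(0, 501, 50))
measured = [physics_capacity(c, C0, K) - 0.0001 * c for c in history]
slope, intercept = fit_residual(history, measured, C0, K)
lead = cycles_until(0.6, start=500, c0=C0, physics_k=K, slope=slope, intercept=intercept)
print(f"Maintenance alert lead time: {lead} cycles")
```

The residual term is where site-specific behaviour enters: the physics curve supplies a sane prior when data is sparse, and the fitted correction pulls the forecast toward what the sensors actually report.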

Phase 3: Act – Close the Human-in-the-Loop Cycle

Surface inferences to operators via a dashboard or AR heads-up display. Provide one-click override. Log every override with a timestamp and operator ID; this data becomes the training signal that makes your next model iteration more accurate. The FABRIC cobot pattern below shows how runtime scenario switching works in a ROS-based system.

Source: cangorur/human_robot_collaboration – config/scenario_manager.py

Setting ROS parameters at runtime lets the operator change the collaboration mode without stopping the robot. The FABRIC node re-reads parameters on its next planning cycle and adjusts its Bayesian policy to match the new human context; no cold restart is required.
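The referenced listing is not reproduced in this text. The re-read-on-each-cycle pattern itself can be shown without ROS: in the sketch below, `ParameterStore` is a hypothetical in-process stand-in for a runtime parameter server, and the mode names and speed limits are invented for illustration. None of this is the FABRIC code or a ROS API.

```python
import threading


class ParameterStore:
    """Hypothetical stand-in for a runtime parameter server."""

    def __init__(self, **initial):
        self._lock = threading.Lock()
        self._params = dict(initial)

    def set(self, name, value):
        with self._lock:
            self._params[name] = value

    def get(self, name, default=None):
        with self._lock:
            return self._params.get(name, default)


# Illustrative speed limits per collaboration mode, in m/s (not from any standard).
SPEED_LIMITS = {"handover": 0.25, "coexistence": 0.5, "autonomous": 1.0}


class Planner:
    def __init__(self, store):
        self.store = store

    def plan_cycle(self):
        """Re-read the mode every cycle so operator changes apply without a restart."""
        mode = self.store.get("collaboration_mode", "coexistence")
        # Unknown modes fall back to the most conservative limit.
        limit = SPEED_LIMITS.get(mode, min(SPEED_LIMITS.values()))
        return mode, limit


store = ParameterStore(collaboration_mode="autonomous")
planner = Planner(store)
print(planner.plan_cycle())
store.set("collaboration_mode", "handover")  # operator switches mode at runtime
print(planner.plan_cycle())
```

Reading the parameter at the top of each cycle, rather than caching it at start-up, is what removes the need for a restart; the cost is one lookup per cycle.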

The Risks Developers Must Design Around

The three biggest risks in Industry 5.0 systems are automation bias, cybersecurity surface expansion, and skill atrophy in operators.

Automation bias is the tendency for humans to trust algorithmic output even when their own judgment would catch an error. Counter it with confidence-interval visualisation and mandatory override prompts on high-consequence decisions. Every XAI dashboard should display not just what the model recommends, but how certain it is.
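One way to wire these countermeasures together is to gate every recommendation on its consequence level and interval width, and to log what the operator actually chose. The sketch below is a minimal version under assumed names (`ModelOutput`, `needs_confirmation`, `record_decision`) and an assumed width threshold; it is not drawn from any particular XAI toolkit.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class ModelOutput:
    action: str
    estimate: float
    ci_low: float
    ci_high: float
    high_consequence: bool


def needs_confirmation(out: ModelOutput, max_ci_width: float) -> bool:
    """Require an explicit operator decision when stakes are high or the model is unsure."""
    return out.high_consequence or (out.ci_high - out.ci_low) > max_ci_width


def record_decision(log: list, out: ModelOutput, operator_id: str, chosen_action: str) -> dict:
    """Append an audit entry; overrides are flagged for later retraining."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "operator_id": operator_id,
        "recommended": out.action,
        "chosen": chosen_action,
        "overridden": chosen_action != out.action,
        "ci": (out.ci_low, out.ci_high),
    }
    log.append(entry)
    return entry


audit_log = []
out = ModelOutput("replace_bearing", estimate=0.72, ci_low=0.40, ci_high=0.95,
                  high_consequence=False)
if needs_confirmation(out, max_ci_width=0.30):
    # In a real dashboard this branch would block until the operator responds.
    entry = record_decision(audit_log, out, operator_id="op-17", chosen_action="inspect_first")
    print(entry["overridden"], entry["ci"])
```

Storing the interval alongside the override makes it possible to ask later whether operators disagree with the model mostly when it is uncertain, which is the healthy pattern.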

Cybersecurity surface area grows every time you connect a cobot or sensor to a network. The Integration Layer must implement a zero-trust fabric: mutual TLS between all nodes, signed firmware updates, and network segmentation that isolates OT from IT.

“A compromised cobot is not a software bug – it is a physical-safety incident.”
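For the mutual-TLS part of the zero-trust fabric described above, Python's standard `ssl` module can build a server context that refuses clients without a valid certificate. This is a minimal sketch: the function name and the optional path arguments are illustrative (paths are optional only so the configuration can be inspected without certificates on disk), and firmware signing and network segmentation are out of scope here.

```python
import ssl
from typing import Optional


def make_mtls_server_context(certfile: Optional[str] = None,
                             keyfile: Optional[str] = None,
                             cafile: Optional[str] = None) -> ssl.SSLContext:
    """Build a server-side TLS context that requires a client certificate."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # CERT_REQUIRED makes the handshake fail unless the client presents a
    # certificate that chains to a CA loaded below.
    ctx.verify_mode = ssl.CERT_REQUIRED
    if cafile:
        ctx.load_verify_locations(cafile=cafile)   # CA that signs node certificates
    if certfile:
        ctx.load_cert_chain(certfile=certfile, keyfile=keyfile)  # this node's identity
    return ctx


ctx = make_mtls_server_context()
print(ctx.verify_mode, ctx.minimum_version)
```

In deployment, each node would pass its own certificate, key, and the plant's private CA bundle; with no CA loaded, every client handshake is rejected, which is the safe failure mode.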

Skill atrophy is the subtlest risk. When a cobot handles all repetitive assembly, operators may lose the motor memory to step in during a robot fault. Design rotation schedules and simulation-based refreshers into the operating model from day one.

Frequently Asked Questions

How does Industry 5.0 human-machine collaboration differ from automation?

Automation replaces human tasks; Industry 5.0 collaboration augments them. In an Industry 5.0 system, machines handle repetitive or physically demanding work while humans retain final authority on complex, context-dependent decisions. The goal is higher combined output, not lower headcount.

What programming languages and frameworks are most used in Industry 5.0 systems?

Python dominates the Intelligence Layer for digital twins, ML models, and XAI tooling. ROS 2 (C++ and Python) is the standard for cobot integration. Node-RED and OPC-UA connectors handle IIoT data ingestion. Rust and C++ appear in latency-critical edge inference modules.

How is a digital twin different from a simulation?

A simulation runs a model of a system in isolation. A digital twin is a live replica that receives real-time sensor data from its physical counterpart and updates continuously. The twin diverges from the simulation exactly when it matters most: under anomalous real-world conditions.

What is the minimum viable Industry 5.0 project a team can ship in 8 weeks?

Connect two to three IIoT sensors to an edge gateway, stream telemetry to a time-series database, train a lightweight anomaly detector on historical data, and surface alerts to a human operator via a web dashboard with a one-click override. That is a working human-in-the-loop system.

How do cobots stay safe when working next to humans?

Modern cobots (Universal Robots, KUKA LBR, FANUC CRX) use force-torque sensors that cut motor power within milliseconds of detecting unexpected resistance. Speed-and-separation monitoring via computer vision adds a second safety layer. Both mechanisms must be validated against ISO/TS 15066 before deployment.
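The two safety layers can be pictured as a pair of checks evaluated on every control tick. The sketch below is a simplified illustration only: the separation formula is a reduced form in the spirit of speed-and-separation monitoring, and every number (force limit, speeds, timings, margin) is a placeholder. Real limits come from a risk assessment against ISO/TS 15066 and run on certified safety hardware, not application code.

```python
def protective_distance_m(human_speed, robot_speed, reaction_s, stop_s,
                          stop_dist_m, margin_m):
    """Simplified separation needed for the robot to stop before contact.

    Human travel during reaction and stopping, plus robot travel during
    reaction, plus robot stopping distance, plus a fixed margin.
    """
    return (human_speed * (reaction_s + stop_s)
            + robot_speed * reaction_s
            + stop_dist_m
            + margin_m)


def safety_action(force_n, separation_m, *, force_limit_n, required_separation_m):
    """Force limiting takes priority; separation monitoring is the second layer."""
    if force_n > force_limit_n:
        return "protective_stop"
    if separation_m < required_separation_m:
        return "reduce_speed"
    return "continue"


# Placeholder values for illustration only.
required = protective_distance_m(human_speed=1.6, robot_speed=0.5,
                                 reaction_s=0.1, stop_s=0.3,
                                 stop_dist_m=0.1, margin_m=0.1)
print(f"Required separation: {required:.2f} m")
print(safety_action(force_n=12.0, separation_m=0.5,
                    force_limit_n=140.0, required_separation_m=required))
```

Ordering the checks this way means a contact event always produces a stop, regardless of what the vision system reports about separation.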

Conclusion: Three Truths and One Question

Three insights should anchor every Industry 5.0 architecture decision. First, the market is moving fast: a forecast of $255.7 billion by 2029 means first-mover advantages will compound quickly. Second, the technology stack is mature enough to ship: cobots, digital twins, edge AI, and XAI are production-ready today, not research prototypes. Third, the hardest problem is organisational, not technical. Automation bias, operator skill atrophy, and cybersecurity governance require design attention from day one.

The question worth sitting with: in five years, will your organisation look back and describe AI as something that replaced your best people, or something that made them ten times more effective?