Computer vision worker safety is the use of AI-powered camera systems to detect, classify, and alert on occupational hazards in real time without human observers watching every feed. These systems combine object detection, pose estimation, and predictive analytics to identify missing PPE, unauthorised zone entry, unsafe postures, and equipment proximity risks the moment they appear, not after an incident report is filed.

The Problem with Waiting for the Incident Report

Computer vision worker safety systems shift organisations from lagging indicators to real-time, predictive hazard detection, reducing the gap between a risk appearing and a human being alerted.

According to the U.S. Bureau of Labor Statistics (2024), a U.S. worker died every 104 minutes from a work-related injury in 2024. Construction and extraction recorded 1,032 fatalities that year. Manufacturing facilities reported 220,000 injuries. Transportation and warehousing remains the most dangerous occupational group, with 1,391 fatalities in 2024 alone.

The hard truth: most of those deaths were preceded by a visible, detectable hazard. A missing hard hat, a worker in a forklift exclusion zone, a collapse risk that no human happened to be watching at that exact moment. Traditional safety programs excel at documenting what went wrong. They struggle to catch what is about to go wrong.

That gap is what computer vision closes. And it does so using infrastructure most operations leaders already own.

“The camera was always there. The intelligence was not.”

How Computer Vision Safety Systems Actually Work

A computer vision safety system ingests live video from existing cameras, runs inference on each frame using a trained deep-learning model, and triggers an alert when a defined safety rule is violated, typically within 200 milliseconds.

The system does not require a human to watch every feed. It watches all feeds simultaneously, applies detection models trained on tens of thousands of labelled images, and fires an alert only when a threshold is crossed. The supervisor acts. The model monitors.
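The "alert only when a threshold is crossed" behaviour can be sketched in a few lines. This is a minimal illustration, not any vendor's implementation: the `Detection` structure, the `AlertGate` class, the 0.6 confidence threshold, and the three-frame persistence requirement are all assumptions chosen for the example. Requiring a violation to persist across consecutive frames is one common way to suppress single-frame false positives.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    """One model output for a single frame (hypothetical structure)."""
    rule: str          # e.g. "missing_hard_hat"
    confidence: float  # model score in [0, 1]


class AlertGate:
    """Fires once when a rule stays above threshold for N consecutive frames."""

    def __init__(self, threshold: float = 0.6, min_frames: int = 3):
        self.threshold = threshold    # assumed value, tune per site
        self.min_frames = min_frames  # assumed value, tune per site
        self._streak = {}             # rule name -> consecutive frame count

    def update(self, detections: list) -> list:
        """Feed one frame's detections; return the rules that alert now."""
        active = {d.rule for d in detections if d.confidence >= self.threshold}
        # Reset the streak of any rule not seen above threshold this frame.
        for rule in list(self._streak):
            if rule not in active:
                del self._streak[rule]
        alerts = []
        for rule in active:
            self._streak[rule] = self._streak.get(rule, 0) + 1
            # Alert exactly once, on the frame where the streak reaches N.
            if self._streak[rule] == self.min_frames:
                alerts.append(rule)
        return sorted(alerts)
```

Calling `update` once per frame keeps the supervisor's inbox quiet until a violation has persisted, and a continuing violation does not re-alert on every subsequent frame.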

The Four Core Detection Tasks

PPE compliance detection checks for the presence or absence of hard hats, high-visibility vests, gloves, and safety glasses per worker per frame. Zone and perimeter enforcement flags workers who enter exclusion zones around heavy plant, excavations, or energised equipment. Pose and ergonomics analysis estimates body joint positions to identify unsafe lifting postures or fatigue indicators before musculoskeletal injury occurs. Equipment proximity and struck-by risk tracking measures the distance between pedestrians and moving vehicles in real time.

From a technical standpoint, the core engine is object detection, most commonly a YOLO (You Only Look Once) architecture. A 2024 peer-reviewed systematic review in Artificial Intelligence Review (Springer) found that YOLO-based detectors deliver the highest frames-per-second throughput for PPE compliance, with several production deployments exceeding 95% detection accuracy.

Code Snippet 1 – Source: ciber-lab/pictor-ppe / Detection-Example.ipynb

Minimum-viable PPE detector: load a pre-trained YOLO-v3 model, run inference on a site image, and classify each detected worker as compliant or non-compliant.

A technical lead can adapt this notebook with site-specific training images in under a day. The ciber-lab/pictor-ppe repository pairs this code with the Pictor-v3 dataset of 1,472 crowd-sourced construction site images, giving teams a labelled starting point for fine-tuning.
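The compliant or non-compliant decision that follows detection can be illustrated separately from the model. The sketch below is not the code from Detection-Example.ipynb. It assumes a detector has already returned labelled bounding boxes, and the class names (`worker`, `hard_hat`, `vest`) and the centre-inside-box matching rule are hypothetical choices made for this example.

```python
from typing import NamedTuple


class Box(NamedTuple):
    label: str   # "worker", "hard_hat" or "vest" (hypothetical class names)
    x1: float
    y1: float
    x2: float
    y2: float


def center_inside(item: Box, worker: Box) -> bool:
    """True if the PPE item's box centre falls inside the worker's box."""
    cx = (item.x1 + item.x2) / 2
    cy = (item.y1 + item.y2) / 2
    return worker.x1 <= cx <= worker.x2 and worker.y1 <= cy <= worker.y2


def classify_workers(boxes, required=("hard_hat", "vest")):
    """Return (worker_box, is_compliant, missing_items) for each worker."""
    workers = [b for b in boxes if b.label == "worker"]
    items = [b for b in boxes if b.label != "worker"]
    results = []
    for w in workers:
        # PPE items whose centre lies within this worker's bounding box.
        worn = {i.label for i in items if center_inside(i, w)}
        missing = [r for r in required if r not in worn]
        results.append((w, not missing, missing))
    return results
```

The matching rule is deliberately simple. In crowded scenes with overlapping workers, one item can fall inside two worker boxes, so a production system would likely need a stricter assignment step.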

Manufacturing: From Conveyor Belt to Control Room

In manufacturing, AI safety monitoring flags unsafe postures at assembly stations, detects workers entering hazardous machine zones without lockout-tagout confirmation, and monitors ergonomic risk in real time, reducing musculoskeletal injuries, which account for nearly one-third of serious workplace injuries.

The scale of the problem is significant. The Manufacturers Alliance member survey (2024) found that 72% of manufacturing leaders believe AI will make plants safer. Nonfatal injuries in the sector fell to 326,400 cases in 2023, a 6.15% reduction, but the rate of cases requiring days away from work remained stubbornly flat. Injury frequency is falling. Injury severity is not.

Computer vision changes that equation by catching the posture or the moment before the injury, not documenting it afterward. Pose estimation models track the angle of a worker’s spine during a lifting task. If it exceeds a calibrated threshold, the system flags it as an ergonomic risk event, triggering either a real-time alert or a coaching notification.
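The spine-angle check can be approximated from two keypoints that most pose models provide. The sketch below is an assumption-laden simplification: it uses 2D image coordinates for the shoulder and hip midpoints, measures the trunk's angle from vertical, and compares it with a placeholder threshold of 60 degrees. The function names and the threshold are hypothetical, and a real deployment would calibrate the limit with an ergonomist and account for camera angle.

```python
import math


def trunk_flexion_deg(shoulder, hip):
    """Angle in degrees between the hip-to-shoulder line and vertical.

    Points are (x, y) pixel coordinates with y increasing downward,
    as in most image conventions. 0 means upright, 90 means horizontal.
    """
    dx = shoulder[0] - hip[0]
    dy = hip[1] - shoulder[1]  # flip sign because image y grows downward
    return math.degrees(math.atan2(abs(dx), dy))


def is_ergonomic_risk(shoulder, hip, threshold_deg=60.0):
    """Flag a posture whose trunk flexion exceeds the (assumed) threshold."""
    return trunk_flexion_deg(shoulder, hip) > threshold_deg
```

Because the angle is computed in the image plane, a worker bending toward or away from the camera will appear more upright than they are. That is one reason camera placement matters for ergonomic monitoring.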

“Musculoskeletal injuries are invisible until they are debilitating. Computer vision makes the body’s risk posture visible before the damage is done.”

Amazon warehouses using technology-enabled safety programs achieved a 34% improvement in recordable incident rates and a 65% drop in lost-time incidents over five years. Amazon performed 7.8 million facility inspections in 2024, a 24% increase from 2023. That scale is only possible with automated vision systems.

In practice, manufacturing teams building this typically find that the highest-value first deployment is not the most complex one. Starting with PPE compliance monitoring on a single production line generates immediate data, builds internal confidence in the system, and creates the incident-reduction evidence needed to justify a broader rollout.

Construction: Watching What Humans Cannot Watch Fast Enough

On construction sites, AI-powered cameras monitor the “Fatal Four” hazards (falls, struck-by, electrocution, and caught-in incidents) simultaneously across the full site, delivering alert accuracy above 90% in validated deployments. According to the Construction Industry Institute (2023), maintaining alert accuracy above 90% is critical to prevent alert fatigue and keep worker responsiveness high.

Construction is among the most lethal civilian occupations in the U.S. In 2023, the fatal injury rate for construction workers was 9.6 per 100,000 workers. The Fatal Four categories account for 60% of construction deaths. Falls alone are responsible for 38.4% of all construction fatalities, according to OSHA enforcement data. Computer vision addresses all four categories simultaneously from a fixed camera position, something no human observer can replicate at scale.

Skanska’s early AI drone pilot reduced inspection time by 50% and cut human error in hazard identification by 30%. Companies using active AI safety systems on construction sites now report incident reductions of 40 to 50%, driven by earlier detection and faster supervisor response.

The academic evidence supports this. A 2023 paper published in Sensors (MDPI) from the Korea Electronics Technology Institute proposed a framework combining computer vision with semantic rule inference, validating real-time helmet and safety gear detection alongside worker pose estimation on active construction sites.

Code Snippet 2 – Source: ultralytics/ultralytics / ultralytics/solutions/object_counter.py

Zone-entry counter: turns any existing camera feed into a real-time worker and vehicle tracker for exclusion zone enforcement.

A site team can deploy this in under an afternoon to enforce exclusion zones around excavations or heavy plant. The ultralytics/ultralytics repository has over 130,000 GitHub stars and receives weekly updates, making it one of the most production-ready CV frameworks available to industrial teams.
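The geometric test behind exclusion zone enforcement is small enough to show in plain Python. This sketch is not the code from object_counter.py. It assumes worker bounding boxes arrive as `(x1, y1, x2, y2)` pixel tuples and that the zone is a polygon drawn in the same image coordinates. The helper names are hypothetical, and using the bottom-centre of the box as the worker's ground position is a simplifying assumption.

```python
def point_in_polygon(point, polygon):
    """Ray-casting test: is the point inside the polygon?

    polygon is a list of (x, y) vertices in order, at least three.
    """
    x, y = point
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Does this edge straddle the horizontal line through the point?
        if (y1 > y) != (y2 > y):
            # x-coordinate where the edge crosses that horizontal line.
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def foot_point(box):
    """Bottom-centre of a bounding box, used as the ground contact point."""
    x1, y1, x2, y2 = box
    return ((x1 + x2) / 2, y2)


def workers_in_zone(worker_boxes, zone):
    """Return the boxes whose foot point lies inside the exclusion zone."""
    return [b for b in worker_boxes if point_in_polygon(foot_point(b), zone)]
```

Testing the foot point rather than the box centre avoids flagging a worker whose upper body overlaps the zone in the image while they are standing outside it.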

Logistics and Warehousing: Speed, Forklifts, and Invisible Risks

Warehousing combines high vehicle density, repetitive motion, and variable lighting conditions where human observation fails at scale. Computer vision monitors forklift proximity, pedestrian lane compliance, and ergonomic risk simultaneously across hundreds of thousands of square feet.
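Forklift proximity monitoring reduces to a distance check once positions are expressed on the floor plane. The sketch below assumes tracked pedestrians and vehicles have already been mapped from pixels to floor coordinates in metres, for example through a one-off camera calibration. The function name, the dictionary layout, and the 3-metre minimum separation are hypothetical values for illustration.

```python
import math


def proximity_alerts(pedestrians, vehicles, min_distance_m=3.0):
    """Return (pedestrian_id, vehicle_id, distance) for every unsafe pair.

    pedestrians and vehicles map a track id to an (x, y) floor position
    in metres. min_distance_m is an assumed site-specific separation.
    """
    alerts = []
    for ped_id, (px, py) in pedestrians.items():
        for veh_id, (vx, vy) in vehicles.items():
            distance = math.hypot(px - vx, py - vy)
            if distance < min_distance_m:
                alerts.append((ped_id, veh_id, round(distance, 2)))
    return alerts
```

A fixed distance ignores vehicle speed and heading. A fuller version would likely scale the threshold with closing speed so that a fast-approaching forklift triggers earlier than a parked one.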

“A forklift moving at 8 mph covers the length of a warehouse aisle in seconds. No safety manager can watch every aisle. An AI system can.”

Manufacturing facilities reported 220,000 injuries in 2024. Musculoskeletal disorders (MSDs) account for nearly one-third of serious workplace injuries, and Amazon data shows MSDs represent 57% of recordable incidents in warehouse operations. Warehouses that have adopted automation technologies have seen a 25% reduction in workplace injuries, according to warehouse automation analysis (Sellers Commerce, 2024).

The competitive pressure is also sharpening. BCG’s 2026 logistics AI survey found that more than 40% of shippers now consider an LSP’s AI capabilities when selecting a logistics partner. AI safety is no longer just an HSE decision. It is a commercial differentiator.

McKinsey’s November 2024 analysis of distribution operations found that AI-powered tools can unlock 7 to 15 percent additional warehouse capacity by identifying spare capacity, adjusting workflows, and balancing workloads. A major logistics provider used a digital twin powered by AI to increase warehouse capacity by nearly 10% without adding new real estate. Safety and efficiency improvements are increasingly inseparable.

System Architecture: What You Actually Need to Deploy This

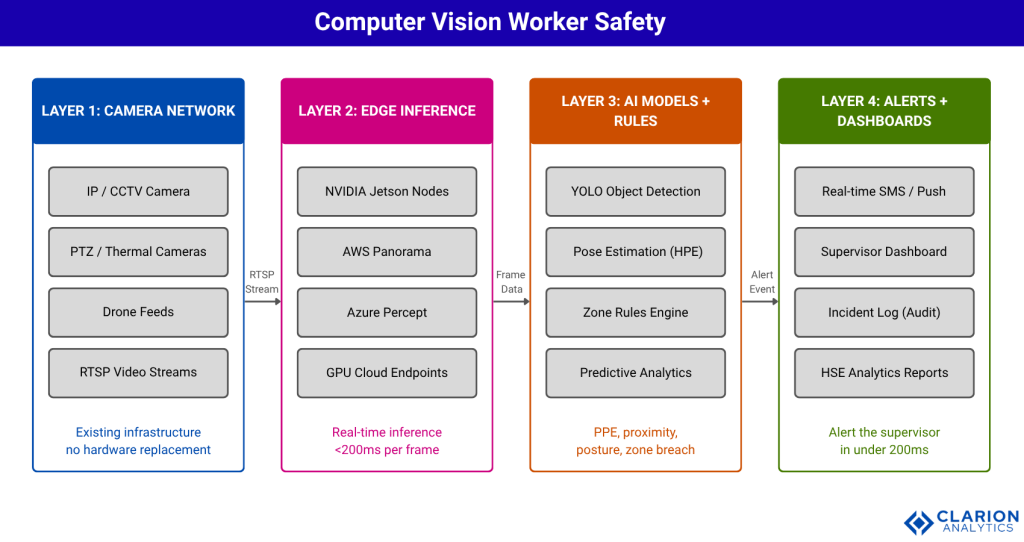

A production computer vision safety system has four layers: edge devices (cameras and inference hardware), an AI inference engine running object detection and pose models, a rules and alerting layer, and a safety analytics dashboard for HSE teams.

Most organisations already own Layer 1. Cameras are pervasive in industrial environments. The question is whether they are generating intelligence or just storage costs. Connecting existing RTSP streams to an inference node requires no new cabling and no camera replacement.

Comparing Deployment Approaches

| Approach | Key Strength | Best Used When |
|---|---|---|
| Rule-based CCTV analytics | Low cost; fast deployment on existing cameras | Basic zone enforcement and occupancy counting in low-complexity environments |
| YOLO-based real-time CV | Sub-second multi-class detection; >95% PPE accuracy in benchmarks | Active production floors and construction sites needing continuous monitoring |
| Pose estimation + ergonomics AI | Quantifies body posture risk; prevents MSDs before injury occurs | Manufacturing assembly lines and warehouses with high repetitive-motion injury rates |
| Predictive analytics layer | Combines historical near-miss data with live CV signals to forecast risk windows | Sites with rich historical safety data seeking pre-scheduled interventions |
| Drone + CV inspection | Accesses heights and hard-to-reach areas; cuts inspection time up to 50% | Large-footprint construction or infrastructure sites needing aerial hazard mapping |

Implementation: What Teams Building This Actually Learn

Successful deployments start with a single camera zone and a narrow detection task, typically PPE compliance, before expanding to multi-site rollouts. The biggest barrier is not the technology. It is employee trust and change management.

The Manufacturers Alliance (2024) found that employee acceptance was the most-cited challenge in AI safety implementation, flagged by over half of survey respondents. Workers need to understand that CV systems generate coaching data, not surveillance records for discipline. Partnering with HR and legal to establish a clear data-use policy before deployment is not optional. It is the foundation on which adoption is built.

In practice, teams building this typically find three phases: a two-week baseline period where the system logs events without alerting, giving HSE teams clean data on actual hazard frequency; a supervised alert phase where supervisors receive notifications but verify them manually, calibrating confidence thresholds; and a full production phase where high-confidence detections trigger automated workflows and lower-confidence detections go to a human review queue.
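The three phases translate naturally into a routing rule for each detection. The sketch below is one hypothetical way to encode them: the phase names, the action strings, and the 0.85 high-confidence cut-off are assumptions for illustration, and in practice the cut-off would come out of the calibration done during the supervised phase.

```python
from enum import Enum


class Phase(Enum):
    BASELINE = "baseline"      # log events, send no alerts
    SUPERVISED = "supervised"  # notify, supervisor verifies manually
    PRODUCTION = "production"  # route by model confidence


def route_detection(phase, confidence, high_confidence=0.85):
    """Decide what happens to one detection in the current rollout phase."""
    if phase is Phase.BASELINE:
        return "log_only"
    if phase is Phase.SUPERVISED:
        return "notify_for_manual_verification"
    # Production: automate only what the model is confident about.
    if confidence >= high_confidence:
        return "automated_workflow"
    return "human_review_queue"
```

Keeping the phase as an explicit setting means a site can move a single camera zone back to the supervised phase after a model update without touching the rest of the rollout.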

The ROI case emerges from the baseline data. One construction firm documented 2,400 PPE compliance violations in the first two weeks of baseline monitoring. Those were events that would have generated zero incident reports under the previous system because no injury had occurred. That data becomes the business case for expansion.

“Start with one camera, one hazard class, and one week of baseline data. The ROI case builds itself.”

Frequently Asked Questions

How does computer vision improve worker safety?

Computer vision analyses live video to detect hazards the moment they appear, such as missing PPE, unauthorised zone entry, unsafe postures, and forklift proximity risks. It alerts supervisors in real time, shifting safety from a reactive, paperwork-driven process to a continuous, automated prevention layer. No human observer needs to watch every feed.

Can AI safety monitoring work with my existing cameras?

Yes, in most cases. Modern CV safety platforms ingest standard RTSP video streams from IP cameras already on your site. You add edge inference hardware or connect to a cloud inference endpoint. This eliminates the need to replace existing camera infrastructure and lowers the capital barrier significantly.

What is the ROI of computer vision safety systems in manufacturing?

Successful AI safety implementations have demonstrated ROI of 150 to 200 percent within two years, driven by lower injury costs, fewer production stoppages, and reduced insurance premiums. Every dollar invested in workplace safety returns four to six dollars in avoided costs, according to construction safety benchmarks.

How accurate are AI hazard detection systems on construction sites?

The Construction Industry Institute found alert accuracy above 90% is needed to maintain worker responsiveness. Leading YOLO-based PPE detection models now exceed 95% accuracy in peer-reviewed benchmarks. Accuracy drops in occlusion-heavy or low-light conditions, making camera placement a critical design decision.

What are the biggest challenges when deploying AI safety monitoring?

The three most common challenges are worker acceptance and privacy concerns (flagged by over 50% of manufacturing survey respondents), fragmented data infrastructure, and scaling from a pilot to enterprise-wide deployment. Transparency about footage use and a clear governance policy matter more than model selection.

Three Truths That Should Change Your Next Capital Decision

Three insights from this analysis should land with any operations or HSE leader. First, the infrastructure cost of computer vision worker safety is lower than most organisations assume because most sites already own the cameras. Second, the ROI is measurable within weeks, not quarters: baseline monitoring reveals the true frequency of near-miss events that legacy systems never capture. Third, the technology is production-ready. YOLO-based detection models now exceed 95% accuracy on PPE compliance, and frameworks like ultralytics receive weekly updates and have over 130,000 GitHub stars.

The deployment risk is not technical. It is organisational. Facilities that treat AI safety monitoring as a surveillance program will face resistance. Facilities that position it as a coaching and prevention tool, stay transparent about data use, and focus on systemic risk patterns rather than individual blame consistently achieve faster adoption and stronger safety outcomes.

The single question worth sitting with: if your organisation experienced a preventable fatality next week, and the safety camera footage showed the hazard clearly 30 seconds before impact, what would you wish your system had done with that footage in real time?

“The technology is no longer the barrier. The decision to start is.”