Construction site safety AI refers to the deployment of computer vision models, edge computing hardware, and machine learning algorithms to automatically detect unsafe conditions, PPE non-compliance, and proximity hazards on active job sites, providing real-time alerts to site supervisors within milliseconds of detection.

The Fatality Numbers That Make the Status Quo Indefensible

In 2024, construction and extraction workers suffered 1,032 fatalities in the U.S. alone, according to BLS data, with falls representing the single largest cause. Manual audits catch these risks after injuries, not before.

Construction remains among the most dangerous industries on earth, which is why construction site safety AI is becoming a survival issue. According to the U.S. Bureau of Labor Statistics (2026), the sector logged 1,032 worker fatalities in 2024, with falls, slips, and trips accounting for 370 of those deaths. That is roughly one fatality every eight and a half hours, in a single industry.

OSHA data (2024) shows Fall Protection remains the single most-cited construction standard, year after year. The pattern is predictable, yet prevention using manual inspection has stalled. A site with 200 workers, multiple floors of activity, and rotating subcontractors cannot be monitored by a single HSE officer walking rounds.

The lagging-indicator problem is structural. Paper-based safety audits generate records of hazards that already passed unchallenged. Walk-through inspections happen once per shift. Near-misses go unreported. The data that could prevent the next incident exists on-site but is invisible to the people who need it.

“Every inspection that happens on paper is a hazard that already passed unchallenged.”

The business case compounds the moral one. The National Safety Council estimates a single injured worker costs $42,000 in direct medical costs and lost time. A single fatality, including legal exposure and project delays, can cost seven figures. Deloitte’s 2024 Engineering and Construction Outlook found that AI-driven inspection and real-time hazard detection deliver a 20% boost in overall site productivity. Safety investment and operational efficiency are the same investment.

How Computer Vision Detects Hazards in Real Time

Computer vision safety systems process live video frames through object-detection models such as YOLO, identifying missing PPE, unauthorized zone entry, and machinery proximity in under 50 milliseconds, then pushing alerts to supervisors via SMS, email, or dashboard.

Computer vision construction safety works by treating every camera frame as a structured data source. A trained deep learning model, most commonly a variant of the You Only Look Once (YOLO) architecture, scans the frame and draws bounding boxes around recognized objects: hard hats, safety vests, persons, vehicles, machinery, and zone boundaries.
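
To make the bounding-box idea concrete, here is a minimal sketch of how detector output could be turned into a PPE rule. It assumes the detector emits separate `person` and `hardhat` boxes in pixel coordinates; the `Box` type, the label names, and the 25% head-region fraction are illustrative assumptions, not part of any specific model or library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned bounding box in pixel coordinates (hypothetical type)."""
    label: str
    x1: float
    y1: float
    x2: float
    y2: float


def head_region(person: Box, fraction: float = 0.25) -> Box:
    """Return the top slice of a person box, where a hard hat is expected."""
    height = person.y2 - person.y1
    return Box("head", person.x1, person.y1, person.x2, person.y1 + fraction * height)


def overlaps(a: Box, b: Box) -> bool:
    """True when the two boxes share any area."""
    return a.x1 < b.x2 and b.x1 < a.x2 and a.y1 < b.y2 and b.y1 < a.y2


def persons_without_hardhat(boxes: list[Box]) -> list[Box]:
    """Flag every person whose head region overlaps no hard-hat box."""
    persons = [b for b in boxes if b.label == "person"]
    hats = [b for b in boxes if b.label == "hardhat"]
    return [p for p in persons if not any(overlaps(head_region(p), h) for h in hats)]


frame = [
    Box("person", 0, 0, 50, 200),
    Box("hardhat", 10, 0, 40, 30),     # sits on the first person's head
    Box("person", 100, 0, 150, 200),   # no hard hat nearby
]
violations = persons_without_hardhat(frame)
```

Some PPE models skip this step by predicting a dedicated "no hard hat" class directly; the geometric rule above is only one plausible way to pair gear with people.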

The speed is what separates this from CCTV. Wang et al. (2021, Sensors) demonstrated that YOLOv5s runs at 52 frames per second on GPU, with a mean average precision (mAP) of 86.55% on a construction-specific dataset. That means a violation can be flagged, and an alert dispatched, before a supervisor would have finished scanning the same area with the naked eye.

More recent architectures push the boundary further. A 2025 study published in Scientific Reports (Wang, 2025) showed that YOLOv10 with transformer attention layers delivers superior handling of partial occlusions and multi-scale objects, which are both endemic problems on cluttered job sites where a worker’s hard hat may be partially obscured by scaffolding.

The YOLO model does not work in isolation. Post-processing layers apply non-maximum suppression to eliminate duplicate detections and a confidence threshold filter to reduce false alarms. Zone enforcement uses spatial clustering algorithms such as HDBSCAN to map safety cones into monitored exclusion zones. The combined system produces actionable, specific, timestamped alerts rather than raw motion-detection flags.
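
The two post-processing steps named above can be sketched in a few lines of plain Python. This is an illustrative version, assuming detections arrive as `(box, score, label)` tuples with boxes in `(x1, y1, x2, y2)` form; the function names and the 0.5 and 0.45 defaults are assumptions, and production stacks normally rely on the detector framework's built-in implementation.

```python
def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def filter_detections(detections, conf_threshold=0.5, iou_threshold=0.45):
    """Apply a confidence filter, then greedy per-class non-maximum suppression."""
    # Step 1: drop low-confidence detections and sort the rest by score.
    candidates = sorted(
        (d for d in detections if d[1] >= conf_threshold),
        key=lambda d: d[1],
        reverse=True,
    )
    # Step 2: keep a box only if it does not heavily overlap a
    # higher-scoring box of the same class that was already kept.
    kept = []
    for box, score, label in candidates:
        duplicate = any(
            label == k_label and iou(box, k_box) > iou_threshold
            for k_box, _, k_label in kept
        )
        if not duplicate:
            kept.append((box, score, label))
    return kept


raw = [
    ((0, 0, 10, 10), 0.9, "hardhat"),
    ((1, 1, 11, 11), 0.8, "hardhat"),    # near-duplicate of the first box
    ((0, 0, 10, 10), 0.3, "person"),     # below the confidence threshold
    ((50, 50, 60, 60), 0.7, "hardhat"),  # separate object, kept
]
clean = filter_detections(raw)
```

Suppressing per class is a deliberate choice here: a hard hat box and a person box overlap by design, so suppressing across classes would delete legitimate detections.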

Five High-Value Use Cases on Active Job Sites

The five most proven uses are PPE compliance monitoring, restricted zone enforcement, proximity detection near heavy machinery, fall-risk posture analysis, and multi-site centralized dashboards for HSE directors.

1. PPE Compliance Monitoring. The system detects missing hard hats, absent safety vests, and uncovered faces across all camera feeds simultaneously. Deloitte’s 2026 E&C Outlook specifically cites computer vision safety analytics as a transformative layer for compliance, noting that hazards are now identified in seconds rather than at shift-end walk-through reviews.

2. Restricted Zone Enforcement. Safety cones define exclusion boundaries around open trenches, crane swing radii, and live electrical work. The AI maps those coordinates and triggers an alert the moment a worker steps inside.

3. Proximity Detection Near Heavy Machinery. Excavators and telehandlers create strike zones that shift as equipment moves. The system monitors person-to-machine distance in real time and alerts operators before a struck-by incident occurs. OSHA lists struck-by incidents as one of the Fatal Four.

“A single prevented fall incident can pay for an entire site’s AI safety platform for the duration of the project.”

4. Fall-Risk Posture and Edge Proximity Analysis. Skeleton-pose estimation models identify workers within a threshold distance of unguarded edges or working at height without visible harness attachment points. This is the most difficult detection class but also the most consequential: falls caused 370 of the 1,032 construction deaths in 2024.

5. Multi-Site Centralized HSE Dashboard. For HSE Directors overseeing multiple concurrent projects, a centralized dashboard aggregates violation counts, heatmaps of high-frequency hazard zones, and compliance trend lines. This converts scattered site data into portfolio-level risk intelligence.
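
Use cases 2 and 3 both reduce to simple geometry once the detector has produced boxes. The sketch below is a minimal illustration, assuming an exclusion zone is already available as a polygon in image coordinates and that distances are measured in pixels; a real deployment would need camera calibration to convert pixels to metres. All function names are hypothetical.

```python
import math


def foot_point(box):
    """Bottom-centre of an (x1, y1, x2, y2) box, a proxy for where the worker stands."""
    x1, y1, x2, y2 = box
    return ((x1 + x2) / 2, y2)


def point_in_polygon(point, polygon):
    """Ray-casting test: True if the point lies inside the polygon."""
    x, y = point
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through the point count.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def too_close(person_box, machine_box, min_distance_px):
    """True if the worker's foot point is within min_distance_px of the machine box."""
    px, py = foot_point(person_box)
    mx1, my1, mx2, my2 = machine_box
    # Distance from a point to an axis-aligned rectangle (0 if inside it).
    dx = max(mx1 - px, 0.0, px - mx2)
    dy = max(my1 - py, 0.0, py - my2)
    return math.hypot(dx, dy) < min_distance_px


trench_zone = [(0, 0), (100, 0), (100, 100), (0, 100)]
worker = (40, 10, 60, 50)           # foot point at (50, 50)
excavator = (80, 0, 200, 100)

in_zone = point_in_polygon(foot_point(worker), trench_zone)
near_machine = too_close(worker, excavator, min_distance_px=35)
```

Using the foot point rather than the box centre is a common heuristic for overhead-angled cameras, since it approximates the worker's position on the ground plane.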

System Architecture: From Camera Feed to Supervisor Alert

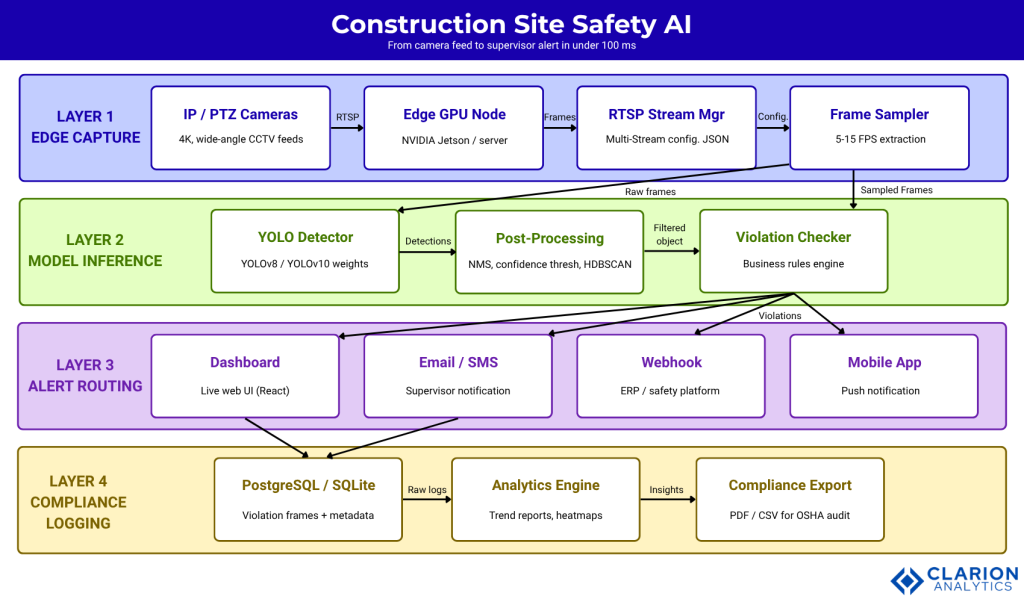

A construction CV safety stack has four layers: edge capture (IP cameras + edge GPU nodes), model inference (YOLO-based detection running on-device), alert routing (webhooks, email, SMS, dashboard), and compliance logging (database recording every violation frame).

Figure 1 maps the complete data flow from camera to compliance log. Four layers operate in sequence, with latency budgets that keep the system responsive in under 100 milliseconds for alert-critical events.

Figure 1: Construction Site Safety AI Architecture. Layer 1 (Edge Capture) ingests RTSP streams from IP cameras into an on-site edge GPU node, where a frame sampler extracts 5-15 FPS for inference. Layer 2 (Model Inference) runs the YOLO detector, applies post-processing (NMS, confidence filtering, HDBSCAN zone mapping), and passes confirmed violations to the violation checker. Layer 3 (Alert Routing) simultaneously dispatches to a live web dashboard, email/SMS to the supervisor, webhooks to ERP or safety platforms, and mobile push notifications. Layer 4 (Compliance Logging) stores timestamped violation frames and metadata in a relational database, feeds an analytics engine for trend reporting, and exports compliance records for OSHA audit.
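
The frame sampler in Layer 1 can be approximated with a simple stride calculation. The sketch below assumes the camera's native frame rate is known and constant; `sample_indices` is a hypothetical helper that decides which frame numbers go to inference, and it is independent of whichever video library actually decodes the RTSP stream.

```python
def sample_indices(source_fps, target_fps, n_frames):
    """Yield the indices of frames to run inference on.

    Frames are picked at an even stride so the output rate approximates
    target_fps. If the target exceeds the source rate, every frame is used.
    """
    if source_fps <= 0 or target_fps <= 0:
        raise ValueError("frame rates must be positive")
    step = max(source_fps / target_fps, 1.0)
    next_pick = 0.0
    for i in range(n_frames):
        if i >= next_pick:
            yield i
            next_pick += step


# One second of a 30 FPS stream, sampled down to 10 FPS for inference.
picked = list(sample_indices(source_fps=30, target_fps=10, n_frames=30))
```

Sampling before inference is what keeps a single edge GPU able to serve several streams: at 10 FPS, the detector only sees a third of the frames a 30 FPS camera produces.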

In practice, teams building this typically find that edge-node placement is the first critical decision. A node must have line-of-sight to the network switch feeding camera streams, adequate cooling in site-trailer conditions, and a UPS battery for brief power interruptions. The software stack itself is more forgiving: Docker Compose deployments (as used in the Ansarimajid/Construction-PPE-Detection reference architecture) spin up a FastAPI inference service, PostgreSQL database, and web dashboard with a single command.

Choosing the Right Approach: A Tool Comparison

Rule-based CCTV systems flag motion but cannot classify hazard types. Cloud-only CV models add latency and bandwidth cost. Edge-deployed AI systems deliver sub-100ms detection with offline resilience, making them the leading choice for large active sites.

Not every site or budget warrants the same deployment model. The comparison below covers the five primary approaches currently in use.

| Approach / Tool | Key Strength | Key Limitation | Best Used When |
|---|---|---|---|
| Traditional CCTV (Rule-based Motion) | Low cost; no AI training needed | Cannot classify hazard type; high false-positive rate | Small sites with minimal dynamic hazards |
| Cloud-only Computer Vision | No edge hardware; easy central management | Latency 500ms+; bandwidth cost; offline failure | Low-risk zones with reliable connectivity |
| Edge-deployed AI (YOLO + GPU node) | Sub-100ms inference; works offline; scalable | Higher upfront hardware cost | High-risk active sites; remote locations |
| Hybrid Edge + Cloud Sync | Real-time edge alerts + cloud analytics | More complex integration; dual maintenance | Enterprise multi-site rollouts requiring central dashboards |
| Wearable Sensor + AI | Individual tracking; biometric data | Worker adoption friction; battery management | High-value workers in extreme-risk zones |

“Teams that deploy CV safety on a pilot zone first consistently build faster stakeholder buy-in than those who push for site-wide rollout from day one.”

The edge-deployed model dominates for large active sites, but the hybrid edge + cloud approach is gaining ground for enterprise clients who need portfolio-level analytics. McKinsey research (2022) found that AI safety implementations in construction deliver 150 to 200 percent ROI within two years. The hybrid model makes that ROI visible to senior leadership through centralized dashboards rather than siloed site reports.

Implementation Roadmap: From Pilot to Multi-Site Rollout

A successful rollout follows four steps: site survey and camera placement planning (weeks 1-2), model fine-tuning on site-specific PPE and machinery classes (weeks 3-4), alert workflow integration with existing safety management platforms (weeks 5-6), and phased expansion with ROI review at 90 days.

Phase 1: Site Survey and Camera Placement (Weeks 1-2). Map high-risk zones: crane swing radii, fall edges, equipment staging areas, and site entry points. Place PTZ and wide-angle 4K cameras to maximize field-of-view coverage. Define exclusion zones using physical safety cones, whose coordinates the AI will later cluster into monitored boundaries.

Phase 2: Model Fine-Tuning (Weeks 3-4). The base YOLO weights are pre-trained on large construction PPE datasets. Fine-tune on 200 to 500 site-specific annotated images to capture your unique gear colors, machinery profiles, and lighting conditions. This step is what takes detection accuracy from acceptable to production-grade.

Phase 3: Alert Workflow Integration (Weeks 5-6). Configure notification channels. Set alert cooldown thresholds to prevent supervisor fatigue from repeated identical alerts. Connect the violation log database to your safety management platform via webhook or API.

Phase 4: Phased Expansion and 90-Day ROI Review. Expand camera coverage zone by zone. At 90 days, compare violation frequency data against pre-deployment incident records to produce the ROI case for board-level reporting.
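
The alert cooldown from Phase 3 is straightforward to prototype. This is a minimal sketch, assuming alerts are keyed by camera and violation type and that the caller supplies the current time in seconds; the `AlertCooldown` class and its method names are hypothetical rather than taken from any platform.

```python
class AlertCooldown:
    """Suppress repeats of the same alert inside a cooldown window."""

    def __init__(self, cooldown_seconds: float):
        self.cooldown_seconds = cooldown_seconds
        self._last_sent = {}  # (camera_id, violation) -> time of last alert

    def should_send(self, camera_id: str, violation: str, now: float) -> bool:
        """Return True and record the send time if the alert is not a repeat."""
        key = (camera_id, violation)
        last = self._last_sent.get(key)
        if last is not None and now - last < self.cooldown_seconds:
            return False
        self._last_sent[key] = now
        return True


cooldown = AlertCooldown(cooldown_seconds=10)
first = cooldown.should_send("cam1", "no_hardhat", now=0.0)
repeat = cooldown.should_send("cam1", "no_hardhat", now=5.0)
other_type = cooldown.should_send("cam1", "no_vest", now=5.0)
after_window = cooldown.should_send("cam1", "no_hardhat", now=10.0)
```

Keying on the pair of camera and violation type means a new kind of hazard on the same camera still gets through immediately, while an ongoing one is throttled. In a live service, `now` would typically come from `time.monotonic()`.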

Code Snippet 1: Multi-Stream Site Configuration

Source: yihong1120/Construction-Hazard-Detection — config/configuration.json (GitHub)

This configuration block governs one camera stream. The detection_items flags control exactly which hazard classes the model actively monitors on that stream. Limiting detection to relevant hazard types for each zone reduces false-positive volume and keeps supervisor attention focused on actionable alerts. The work-hours parameters prevent night-shift camera artifacts from triggering daytime violation thresholds.

Code Snippet 2: Production Deployment Environment Variables

Source: Ansarimajid/Construction-PPE-Detection — .env.example (GitHub)

```env
MODEL_PATH=Model/ppe.pt
DETECTION_CONFIDENCE=0.5
ALERT_COOLDOWN_SECONDS=10
DATABASE_URL=postgresql+asyncpg://user:pass@db/ppe_detection
SENDER_EMAIL=safety@yourfirm.com
RECEIVER_EMAIL=hse.director@yourfirm.com
WEBHOOK_URL=https://hooks.yoursafetyplatform.com/alerts
```

These seven variables control the entire behavioral profile of a production PPE detection service. The DETECTION_CONFIDENCE threshold (0.0 to 1.0) is the single most impactful tuning parameter: lowering it increases recall at the cost of more false positives; raising it tightens precision. ALERT_COOLDOWN_SECONDS prevents the same ongoing violation from flooding the supervisor’s inbox. The WEBHOOK_URL enables direct integration with enterprise safety management platforms such as Procore or Intelex.
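
Because a mistyped threshold silently changes alert behavior, it is worth validating these values at startup. The sketch below shows one way to do that with the standard library only; the `Settings` dataclass and `load_settings` function are hypothetical and are not how the referenced repository parses its environment. The defaults simply mirror the example values above.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class Settings:
    """Typed view of three of the variables shown above (hypothetical)."""
    model_path: str
    detection_confidence: float
    alert_cooldown_seconds: int


def load_settings(env=None) -> Settings:
    """Read and validate settings from a mapping, defaulting to os.environ."""
    env = os.environ if env is None else env
    confidence = float(env.get("DETECTION_CONFIDENCE", "0.5"))
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("DETECTION_CONFIDENCE must be between 0.0 and 1.0")
    cooldown = int(env.get("ALERT_COOLDOWN_SECONDS", "10"))
    if cooldown < 0:
        raise ValueError("ALERT_COOLDOWN_SECONDS must not be negative")
    return Settings(
        model_path=env.get("MODEL_PATH", "Model/ppe.pt"),
        detection_confidence=confidence,
        alert_cooldown_seconds=cooldown,
    )


settings = load_settings(
    {"DETECTION_CONFIDENCE": "0.65", "ALERT_COOLDOWN_SECONDS": "30"}
)
```

Passing a plain dictionary instead of reading `os.environ` directly keeps the loader easy to test and makes per-site overrides explicit.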

Frequently Asked Questions

How accurate is AI in detecting PPE on construction sites?

State-of-the-art models such as YOLOv8 achieve mAP50 scores above 87% on construction-specific datasets (Wang et al., 2024, Cogent Engineering). Edge-deployed systems with site-specific fine-tuning consistently exceed 90% precision in controlled deployments. False-positive rates drop significantly when models are trained on site-specific gear colors and lighting conditions.

Can computer vision work with my existing site cameras?

Yes. Modern CV safety platforms accept RTSP streams from most IP cameras, including legacy CCTV. You typically need a local edge GPU node (such as an NVIDIA Jetson) to run inference, but the cameras themselves do not need to be replaced. Platforms like the open-source Construction-Hazard-Detection framework support multi-stream RTSP configuration out of the box.

What does it cost to implement AI safety monitoring on a construction site?

Entry-level deployments covering 10 to 20 cameras typically run $15,000 to $60,000 for hardware and first-year software. Full-platform solutions on large sites can reach $2,000 to $10,000 per project per month. A mid-size project with meaningful fall and struck-by risk typically sees ROI within 12 to 18 months through prevented incidents and reduced insurance premiums.

How does real-time hazard detection differ from standard CCTV surveillance?

Standard CCTV records footage for later review. Real-time AI hazard detection processes each video frame in under 100 milliseconds, classifies specific hazard types (missing hard hat, worker in exclusion zone, proximity to machinery), and pushes an actionable alert to a supervisor before the hazard escalates. It is the difference between a record of an incident and prevention of one.

How long does it take to see ROI from construction site safety AI?

According to McKinsey analysis, successful AI safety implementations in construction deliver 150 to 200 percent ROI within two years, driven primarily by reduced incident costs and productivity gains. The National Safety Council estimates a single injured worker costs $42,000 in direct medical costs and lost time, and a single fatality can reach seven figures once legal exposure and project delays are included, so one prevented serious incident can exceed the full annual platform cost on a mid-size project.

“AI doesn’t replace your HSE director. It gives them eyes on every corner of the site, simultaneously.”

Three Things Every CXO Should Take Away

Construction site safety AI has crossed from experimental to production-ready. Three conclusions stand up to scrutiny from the research, the code, and the deployment data.

First, the ROI case is now empirical, not speculative. McKinsey documents 150 to 200 percent returns within two years. A single prevented fatal incident exceeds the annual platform cost for most sites. The financial argument for AI safety monitoring is stronger than the argument against it.

Second, the technology is deployable on existing infrastructure. RTSP-compatible IP cameras, an edge GPU node, and open-source YOLO weights provide a working foundation. Full-stack reference implementations exist in open source and production SaaS platforms. The integration barrier is real but not prohibitive.

Third, adoption without change management fails. Supervisors need to trust the alerts. Workers need to understand why cameras are there. HSE teams need to own the alert workflow. Technology without people-process alignment produces ignored dashboards, not safer sites.

The question is not whether construction site safety AI works. The question is which incident you are willing to let happen before you find out.
