Multilingual voice AI in APAC refers to enterprise conversational AI systems that process speech, understand intent, and generate responses across two or more regional languages, including Bahasa Indonesia, Bahasa Malaysia, Mandarin Chinese, and Tamil, within a single contact centre deployment. These systems combine automatic speech recognition (ASR), natural language understanding (NLU), and dialogue management to serve linguistically diverse customer bases without routing to separate language queues.

Why Generic Voice AI Fails APAC’s Contact Centres

Standard ASR models trained on Western data show word error rates exceeding 120% on Tamil and degrade significantly on Bahasa and code-switched Mandarin-English, making direct deployment in APAC contact centres commercially unviable without regional fine-tuning.

If your board has approved a multilingual voice AI budget and your vendor just showed you a demo in clean, studio-recorded English, you have a problem. According to Gartner (December 2024), 85% of customer service leaders will explore or pilot a customer-facing conversational GenAI solution in 2025. Over 75% feel executive pressure to implement. The momentum is real. In APAC, the language readiness behind it often is not.

Research published in 2026 on Singapore’s four official languages found that Qwen3-ASR, despite achieving sub-3% word error rate on English, produced Tamil WER exceeding 120% before targeted fine-tuning. That is not a minor accuracy issue. That is a system that cannot function. Two-stage balanced upsampling reduced Tamil WER by 72% without degrading Mandarin or English performance. The fix exists, but it requires deliberate engineering, not default deployment.
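A WER above 100% can look like a typo, but it follows from the definition: WER counts substitutions, deletions, and insertions against the number of words in the reference transcript, so a model that hallucinates extra words can make more errors than there are words to get right. The sketch below uses a plain word-level edit distance and an invented English example purely to show the arithmetic; it is not the evaluation code from the research cited above.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")
    # prev[j] is the edit distance between the reference words seen so far and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion
                curr[j - 1] + 1,     # insertion
                prev[j - 1] + cost,  # substitution or match
            )
        prev = curr
    return prev[-1] / len(ref)


# Invented example: 4 reference words, 6 hypothesis words, none in common.
reference = "please check my balance"
hypothesis = "police chick may ball and so"
print(f"WER: {word_error_rate(reference, hypothesis):.0%}")  # prints "WER: 150%"
```

Four substitutions plus two insertions against four reference words gives 6 / 4 = 150%. Whitespace splitting suits Tamil, Malay, and English; Mandarin is usually scored at the character level instead.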

The core problem is that most enterprise voice AI platforms were built for high-resource language markets. The training data skews toward English, then Mandarin, then everything else. Tamil, Malay, and Bahasa Indonesia are systematically underrepresented. When your contact centre in Singapore, Kuala Lumpur, or Jakarta routes a call from a Tamil-speaking customer to an AI that has never been trained on Tamil speech, the failure is invisible in your vendor’s demo environment but painfully visible to your customer.

“APAC enterprises that treat multilingual voice AI as a translation problem will fail. It is a code-switching problem, and those are not the same thing.”

The Code-Switching Reality That Breaks Most Platforms

In APAC, agents and customers routinely switch between languages mid-sentence, a behaviour called code-switching. A caller in Kuala Lumpur may open in English, clarify in Bahasa Malaysia, and close in Mandarin, all within 90 seconds.

Speechmatics research published in July 2025 describes a typical Singapore finance meeting where a speaker presents in English, switches to Mandarin for a key point, and a colleague follows up partially in Malay before reverting to English for the summary. This is not an edge case. It is the everyday rhythm of business communication across the region.

Voice AI systems that handle one language at a time, even those marketed as multilingual, typically require the caller to stay within a single language for the entire interaction. The moment a Singlish-speaking customer says “Boleh help me check my balance or not?” the system fails at transcription, fails at intent detection, and escalates to a human agent for a query that should have been deflected cleanly.

Research published on arXiv (2025) found that fine-tuning Whisper and SeamlessM4T on code-switching pairs, specifically Malay-English, Mandarin-Malay, and Tamil-English, consistently outperforms purely multilingual training. Importantly, Singaporean code-switching occurs primarily at the phrase level rather than the word level. That structural difference matters: your NLU engine must be configured to handle phrase-level switches, not just token-level language identification.
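One way to picture the difference between token-level and phrase-level handling is a smoothing step that sits after token-level language identification. The sketch below assumes some upstream component has already tagged each token with a language code; the function name, the `min_phrase_len` parameter, and the example sentence are all hypothetical, and this is an illustration rather than the method used in the cited research.

```python
from itertools import groupby


def phrase_segments(tagged_tokens, min_phrase_len=2):
    """Group (token, language) pairs into phrase-level language segments.

    Runs shorter than min_phrase_len are folded into the preceding segment,
    so a single borrowed word does not count as a language switch.
    """
    segments = []
    for lang, run in groupby(tagged_tokens, key=lambda pair: pair[1]):
        words = [token for token, _ in run]
        if segments and len(words) < min_phrase_len:
            # Too short to be a switch: keep it inside the current phrase.
            segments[-1] = (segments[-1][0], segments[-1][1] + words)
        elif segments and segments[-1][0] == lang:
            # Same language as the current phrase (after a folded loanword).
            segments[-1] = (lang, segments[-1][1] + words)
        else:
            segments.append((lang, words))
    return [(lang, " ".join(words)) for lang, words in segments]


# Invented Malay-English utterance with per-token language tags.
tagged = [
    ("saya", "ms"), ("nak", "ms"), ("check", "en"), ("baki", "ms"), ("akaun", "ms"),
    ("then", "en"), ("transfer", "en"), ("to", "en"),
    ("my", "en"), ("savings", "en"), ("account", "en"),
]
for language, phrase in phrase_segments(tagged):
    print(language, "->", phrase)
```

Token-level identification would report four language switches here. The phrase-level view reports one: a Malay phrase containing the borrowed word "check", followed by an English phrase.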

In practice, teams building this architecture typically find that language identification latency is their first bottleneck. If the model takes 800 milliseconds to detect that a caller has switched from Bahasa to Mandarin, and then another 600 milliseconds to re-initialize the correct acoustic model, the natural rhythm of conversation is broken. The caller notices the pause. Trust erodes.
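The arithmetic is worth writing down when reviewing a vendor's numbers. The sketch below adds up the two stage latencies from the example above and compares each against a budget. The 200 ms detection budget mirrors the language-detection target in the reference architecture later in this article; the 300 ms reload budget is a placeholder assumption to replace with your own figure.

```python
# Stage latencies from the example above, in milliseconds.
switch_latency_ms = {
    "language_detection": 800,
    "acoustic_model_reload": 600,
}

# Assumed per-stage budgets (the reload figure is a placeholder).
budget_ms = {
    "language_detection": 200,
    "acoustic_model_reload": 300,
}

total_ms = sum(switch_latency_ms.values())
over_budget = {
    stage: latency - budget_ms[stage]
    for stage, latency in switch_latency_ms.items()
    if latency > budget_ms[stage]
}

print(f"Added pause on a language switch: {total_ms} ms")
for stage, excess in over_budget.items():
    print(f"{stage}: {excess} ms over budget")
```

In this example the caller waits an extra 1,400 ms on every switch, and both stages exceed their budget.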

Four Enterprise Use Cases Driving Adoption Right Now

The four highest-ROI use cases for multilingual voice AI in APAC are inbound call deflection, agent assist with real-time transcription, post-call analytics, and IVR modernisation across language channels.

Inbound call deflection is where most APAC deployments start and where ROI is most measurable. AirAsia’s conversational AI implementation reduced its live agent workforce from 1,500 to fewer than 100 while maintaining customer satisfaction. The airline deployed AI-driven chatbots and predictive interaction models across booking, rebooking, refunds, and flight updates. The key enabler was multilingual support, handling queries in English, Bahasa Malaysia, Mandarin, and Thai through the same system.

Agent assist has become the second major deployment pattern. DBS Bank, serving customers across Singapore, Hong Kong, and Taiwan, built the CSO Assistant, a generative AI-powered virtual assistant handling voice and digital interactions in multiple languages. The system transcribes calls in real time, surfaces relevant knowledge base articles, and suggests response templates in the agent’s working language. Average handle time falls because agents stop searching and start resolving.

Post-call analytics applies multilingual transcription to the entire call archive, not just live calls. A contact centre receiving 200,000 monthly calls across three language markets has been, until recently, analytically blind to the non-English portion of that volume. Multilingual ASR makes all calls searchable, categorisable, and scorable. Compliance teams in financial services benefit particularly, as regulatory monitoring requirements apply regardless of the language spoken.

IVR modernisation replaces static, language-coded phone trees with dynamic language detection at the point of call ingestion. The caller does not press 1 for English or 2 for Mandarin. The system detects language within the first two seconds of speech and routes accordingly, or, in a full multilingual deployment, handles the entire interaction without routing.
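The routing decision itself can be sketched in a few lines. The example assumes a language-ID model has already produced a probability per language for the caller's opening speech (Whisper's `detect_language` is one possible source). The flow names, the 0.6 confidence floor, and the keypad fallback are hypothetical choices for illustration.

```python
def route_call(language_probs, supported_flows, min_confidence=0.6):
    """Pick a handling path from language-ID probabilities on the opening speech.

    language_probs: mapping of language code -> probability, from any language-ID model.
    supported_flows: mapping of language code -> flow name for languages the AI handles.
    Returns (flow_name, language_code). Falls back to a keypad menu when the
    detector is unsure or the detected language is not supported.
    """
    if not language_probs:
        return ("keypad_menu", None)
    language, confidence = max(language_probs.items(), key=lambda item: item[1])
    if confidence < min_confidence or language not in supported_flows:
        return ("keypad_menu", language)
    return (supported_flows[language], language)


flows = {"en": "ai_flow_en", "zh": "ai_flow_zh", "ms": "ai_flow_ms", "ta": "ai_flow_ta"}

# Clear Tamil detection: handled by the Tamil flow.
print(route_call({"ta": 0.82, "ms": 0.10, "en": 0.08}, flows))
# Malay and Indonesian are close; a split vote falls back to the menu.
print(route_call({"ms": 0.45, "id": 0.40, "en": 0.15}, flows))
```

Keeping a keypad fallback means a low-confidence detection degrades to the old experience instead of sending the caller into the wrong language.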

“Gartner projects agentic AI will autonomously resolve 80% of common service issues by 2029, but only if the underlying ASR can hear the customer correctly in the first place.”

Architecture of a Production-Ready Multilingual Voice AI Stack

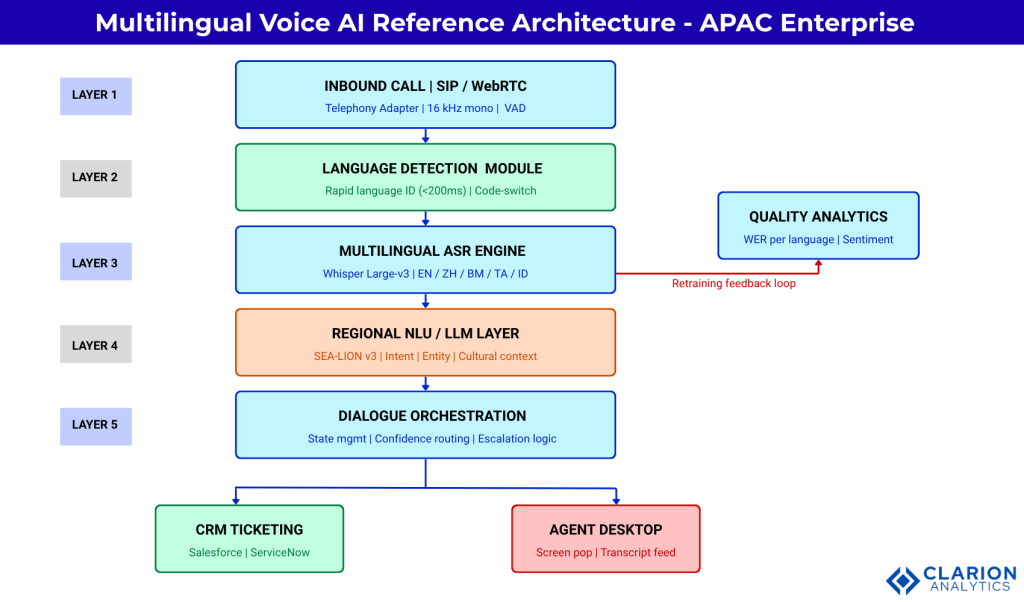

A production-grade multilingual voice AI stack for APAC requires five layers: telephony ingestion, a fine-tuned multilingual ASR engine, a regionally-tuned NLU/LLM layer, a dialogue orchestration layer, and a CRM integration bus.

Figure 1: Five-layer APAC Multilingual Voice AI Reference Architecture. A call enters via a SIP/WebRTC telephony adapter, passes through language detection (<200ms), and routes to a fine-tuned ASR engine (Whisper Large-v3 or equivalent) with balanced training across English, Mandarin, Bahasa Malaysia, Tamil, and Indonesian. ASR output feeds a regionally-tuned NLU/LLM layer (SEA-LION v3 or fine-tuned GPT-4o). The dialogue orchestrator manages state, confidence thresholds, and escalation. CRM integration and agent desktop receive the final output. A quality analytics feedback loop continuously re-trains the ASR and NLU layers on real production data.
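The data flow in Figure 1 can be sketched as a chain of pluggable stages. Every class and field name below is hypothetical, and the stages are stubbed with lambdas so the flow is visible without any real telephony, ASR, or CRM system. The analytics feedback loop is left out for brevity.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Turn:
    """One caller utterance as it moves through the stack."""
    audio: bytes
    language: str = ""
    transcript: str = ""
    intent: str = ""
    confidence: float = 0.0
    action: str = ""


@dataclass
class VoicePipeline:
    detect_language: Callable[[bytes], str]              # language detection
    transcribe: Callable[[bytes, str], str]              # fine-tuned ASR
    understand: Callable[[str, str], tuple[str, float]]  # regional NLU/LLM
    decide: Callable[[Turn], str]                        # dialogue orchestrator
    push_to_crm: Callable[[Turn], None]                  # CRM / agent desktop

    def handle(self, audio: bytes) -> Turn:
        turn = Turn(audio=audio)
        turn.language = self.detect_language(audio)
        turn.transcript = self.transcribe(audio, turn.language)
        turn.intent, turn.confidence = self.understand(turn.transcript, turn.language)
        turn.action = self.decide(turn)
        self.push_to_crm(turn)
        return turn


# Stub stages standing in for real components.
crm_log = []
pipeline = VoicePipeline(
    detect_language=lambda audio: "ms",
    transcribe=lambda audio, lang: "boleh tolong semak baki akaun saya",
    understand=lambda text, lang: ("check_balance", 0.91),
    decide=lambda turn: "answer" if turn.confidence >= 0.8 else "escalate",
    push_to_crm=crm_log.append,
)
turn = pipeline.handle(b"\x00\x01")
print(turn.language, turn.intent, turn.action)
```

Because each stage is a plain callable, the ASR or NLU stage can be swapped per market while the orchestrator and CRM stages stay unchanged.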

The confidence threshold at the dialogue orchestration layer is the component most teams underinvest in at deployment. Set it too high and you over-escalate to human agents, destroying your deflection rate. Set it too low and the AI keeps handling calls it has misunderstood, so queries end without resolution, destroying your CSAT. A per-language threshold, typically higher for Tamil and Bahasa than for Mandarin and English, reflects real-world accuracy differences and prevents the system from treating all languages as equally reliable.
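A per-language threshold can be as simple as a lookup table consulted before every automated response. The values below are illustrative assumptions, not recommendations; in practice they would be tuned against measured accuracy for each language.

```python
# Hypothetical thresholds: stricter where ASR and NLU are less reliable.
ESCALATION_THRESHOLDS = {
    "en": 0.70,
    "zh": 0.70,
    "ms": 0.80,
    "id": 0.80,
    "ta": 0.85,
}
DEFAULT_THRESHOLD = 0.85  # unlisted languages get the strictest setting


def should_escalate(language: str, confidence: float) -> bool:
    """Escalate to a human agent when confidence is below the language's threshold."""
    return confidence < ESCALATION_THRESHOLDS.get(language, DEFAULT_THRESHOLD)


# The same confidence score leads to different outcomes per language.
for language in ("en", "ta"):
    outcome = "escalate" if should_escalate(language, 0.78) else "handle with AI"
    print(f"{language} at 0.78 confidence: {outcome}")
```

An English call at 0.78 confidence stays with the AI, while a Tamil call at the same score goes to an agent, which is the asymmetry the paragraph above describes.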

Choosing the Right Platform: Build, Buy, or Hybrid

Enterprises with five or more language-market combinations and over 500,000 monthly calls typically benefit from a hybrid approach, combining a globally-supported ASR engine such as Whisper or Google Speech-to-Text with a regionally-fine-tuned NLU layer such as SEA-LION.

| Option | Key Strength | Best Used When |
|---|---|---|
| Global Platform (Google CCAI, Amazon Connect) | Broad language support, managed SLAs, fast time-to-production | 5 languages or fewer, prefer managed infrastructure, limited in-house NLP capacity |
| Open-Source Build (Whisper + SEA-LION) | Full customisation, no per-call licensing costs, data sovereignty | In-house ML engineers, strict data residency requirements, or dialect-specific customisation needs |
| Hybrid (Global ASR + Regional NLU) | Best accuracy for code-switching, balanced cost model, production-grade reliability | High call volumes (500K+ monthly) across 5 or more language-market combinations |

AI Singapore’s SEA-LION, released as an open-source family of LLMs, supports 11 languages used across Southeast Asia, including Mandarin, Tamil, Malay, and Indonesian. It was pre-trained on one trillion tokens and fine-tuned to outperform global models on the SEA-HELM benchmark. BCG’s 2025 AI Radar data shows that Asia-Pacific companies allocate the highest share of IT budget to AI at 5.2%, and expect a revenue increase of 10% and cost reduction of 12% by 2028. The investment environment supports a hybrid build.

“The fastest path to ROI is not the most languages, it is the highest accuracy in the two or three languages your largest call volumes arrive in.”

Code Snippet 1: Multilingual ASR with Whisper

Source: openai/whisper, GitHub README, Python usage section

This snippet demonstrates the minimum viable ASR configuration for APAC language validation. Load the large-v3 model (the most accurate for low-resource languages), specify the target language, and transcribe. Before committing to any enterprise platform purchase, run your actual production call recordings through this code. The gap between vendor-demo accuracy and real-call accuracy will be immediately visible, and it will determine your implementation strategy.
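A minimal sketch of that configuration, assuming the `openai-whisper` package and `ffmpeg` are installed. `whisper.load_model` and `model.transcribe(..., language=...)` follow the usage shown in the Whisper README; the helper names, the language table, and the file name in the comment are illustrative.

```python
# Whisper language codes for the languages discussed in this article.
APAC_LANGUAGE_CODES = {
    "English": "en",
    "Mandarin": "zh",
    "Malay": "ms",
    "Indonesian": "id",
    "Tamil": "ta",
}


def load_asr_model(model_name: str = "large-v3"):
    """Load a Whisper checkpoint once and reuse it for every recording."""
    import whisper  # pip install openai-whisper (requires ffmpeg on the PATH)

    return whisper.load_model(model_name)


def transcribe_call(model, audio_path: str, language_name: str) -> str:
    """Transcribe one recording with the target language fixed, not auto-detected."""
    result = model.transcribe(audio_path, language=APAC_LANGUAGE_CODES[language_name])
    return result["text"].strip()


# Example usage (hypothetical file name; point it at a real production recording):
#   model = load_asr_model()
#   print(transcribe_call(model, "sample_call_tamil.wav", "Tamil"))
```

Fixing the language per recording isolates transcription accuracy from language-detection accuracy, which keeps a first validation pass easier to interpret. Code-switched calls need a separate test where the language is left for the model to detect.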

Implementation Roadmap: From Pilot to Regional Scale

A realistic APAC multilingual voice AI rollout moves through three phases: a 90-day language-specific proof of concept, a 6-month single-market production deployment, and a 12-to-18-month regional expansion with shared model governance.

Phase 1 (Days 1-90): Language-Specific Pilot. Select one language and one intent cluster (for example, Mandarin balance enquiries). Run production call recordings through candidate ASR engines. Measure WER against human transcripts. Set an acceptance threshold; most contact centre programmes use a WER below 15% for production readiness. If Tamil is your priority language, expect to invest in fine-tuning before this threshold is achievable.
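The acceptance check at the end of the pilot can be sketched as a small gate over scored recordings. The sketch assumes each recording has already been scored against its human transcript, giving a word-error count and a reference word count; the function name and the sample figures are invented for illustration.

```python
from collections import defaultdict

ACCEPTANCE_WER = 0.15  # the production-readiness threshold described above


def pilot_gate(scored_calls, threshold=ACCEPTANCE_WER):
    """Pool word errors per language and compare each language to the threshold.

    scored_calls: iterable of (language, word_errors, reference_words), where
    word_errors = substitutions + deletions + insertions for one recording.
    Returns {language: (pooled_wer, passed)}.
    """
    errors = defaultdict(int)
    words = defaultdict(int)
    for language, word_errors, reference_words in scored_calls:
        errors[language] += word_errors
        words[language] += reference_words

    report = {}
    for language, total_words in words.items():
        if total_words == 0:
            continue  # nothing to score for this language
        wer = errors[language] / total_words
        report[language] = (wer, wer < threshold)
    return report


# Invented pilot results: two recordings per language.
scored = [
    ("zh", 12, 100), ("zh", 10, 120),
    ("ta", 90, 100), ("ta", 70, 100),
]
for language, (wer, passed) in pilot_gate(scored).items():
    print(f"{language}: WER {wer:.0%} -> {'ready' if passed else 'needs fine-tuning'}")
```

Pooling errors across recordings, instead of averaging per-call WER, stops a handful of very short calls from dominating the result.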

Phase 2 (Months 4-9): Single-Market Production. Deploy the highest-accuracy language pair in one market. Instrument every call with per-language quality metrics. Track deflection rate, CSAT, and average handle time (AHT) weekly against your pre-AI baseline. Gartner (March 2025) projects a 30% reduction in operational costs from agentic AI by 2029. Single-market data will tell you if you are on track.
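The weekly comparison can be sketched as three ratios and a difference against the baseline. The field names and all figures below are invented; the baseline would come from your own pre-AI reporting.

```python
from dataclasses import dataclass


@dataclass
class WeeklyStats:
    total_calls: int
    ai_resolved_calls: int       # resolved with no human escalation
    total_handle_seconds: float  # summed across all calls
    csat_scores: list            # one score per surveyed call


def kpis(stats: WeeklyStats) -> dict:
    """Deflection rate, average handle time, and mean CSAT for one week."""
    return {
        "deflection_rate": stats.ai_resolved_calls / stats.total_calls,
        "aht_seconds": stats.total_handle_seconds / stats.total_calls,
        "csat": sum(stats.csat_scores) / len(stats.csat_scores),
    }


def delta_vs_baseline(current: dict, baseline: dict) -> dict:
    """Absolute change per KPI; positive means the metric went up."""
    return {name: current[name] - baseline[name] for name in baseline}


# Invented figures for one market and one week.
baseline = {"deflection_rate": 0.20, "aht_seconds": 400.0, "csat": 4.0}
week = WeeklyStats(
    total_calls=1000,
    ai_resolved_calls=300,
    total_handle_seconds=360_000,
    csat_scores=[4, 5, 4, 3],
)
for name, change in delta_vs_baseline(kpis(week), baseline).items():
    print(f"{name}: {change:+.2f}")
```

Running the same calculation per language, not only per market, shows whether one language is dragging the blended numbers down.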

Phase 3 (Months 10-18): Regional Expansion. Add language markets using the shared model governance framework built in Phase 2. Reuse the telephony adapter, dialogue orchestrator, and CRM integration. Customise only the ASR fine-tuning layer and NLU language model for each new market. This is where the hybrid architecture pays back its initial build cost: new language markets are added at marginal cost rather than through full system re-implementation.

Code Snippet 2: SEA-LION Regional NLU Integration

Source: aisingapore/sealion, HuggingFace Transformers integration, GitHub

This snippet shows the architectural join between the ASR layer and the NLU layer. Whisper produces the transcript. SEA-LION classifies intent. The model understands that “Boleh tolong semak baki akaun saya?” means “Can you please check my account balance?” without requiring translation to English first. This is the difference between a multilingual contact centre that processes regional languages and one that genuinely understands them.
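A minimal sketch of that join, assuming the Hugging Face `transformers` library and a recent version whose `text-generation` pipeline accepts chat-style message lists. The model ID is an assumption to verify against AI Singapore's Hugging Face page, and the intent labels, prompt wording, and helper names are hypothetical.

```python
INTENTS = ["check_balance", "report_lost_card", "transfer_funds", "other"]

# Assumed model ID; confirm the current name on the Hugging Face hub before use.
SEA_LION_MODEL_ID = "aisingapore/Llama-SEA-LION-v3-8B-IT"


def build_messages(transcript: str, intents=INTENTS) -> list:
    """Chat messages asking the model to pick exactly one intent label."""
    instruction = (
        "Classify the customer's request into exactly one of these intents: "
        + ", ".join(intents)
        + ". Reply with the intent label only."
    )
    return [{"role": "user", "content": f"{instruction}\n\nCustomer: {transcript}"}]


def parse_intent(reply: str, intents=INTENTS) -> str:
    """Map free-text model output onto a known label, defaulting to 'other'."""
    reply = reply.strip().lower()
    for intent in intents:
        if intent in reply:
            return intent
    return "other"


def load_generator(model_id: str = SEA_LION_MODEL_ID):
    """Load the model once; reuse the returned pipeline for every transcript."""
    from transformers import pipeline  # pip install transformers torch

    return pipeline("text-generation", model=model_id)


def classify_intent(generator, transcript: str) -> str:
    """Send the ASR transcript, untranslated, to the regional model."""
    output = generator(build_messages(transcript), max_new_tokens=16)
    # With chat-style input, generated_text is the message list plus the reply.
    return parse_intent(output[0]["generated_text"][-1]["content"])


# Example usage (downloads several gigabytes of model weights):
#   generator = load_generator()
#   print(classify_intent(generator, "Boleh tolong semak baki akaun saya?"))
```

Constraining the model to a fixed label set and parsing its reply defensively keeps the downstream dialogue orchestrator independent of how the model happens to phrase its answer.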

“AirAsia reduced its live agent workforce from 1,500 to fewer than 100 not by replacing people with automation, but by deploying AI that could actually understand its multilingual customer base.”

Frequently Asked Questions

How does voice AI handle code-switching between Bahasa and English in the same call?

Modern multilingual ASR systems address code-switching using phrase-level language models trained on mixed-language corpora. The system detects a language shift within the current utterance and applies the appropriate acoustic model in near real time. Fine-tuned models using paired Bahasa-English training data reduce code-switching word error rates substantially compared to monolingual systems. Production accuracy depends heavily on training data quality and volume for each language pair.

What word error rates should I expect for Tamil and Mandarin in production?

Out-of-the-box models such as Whisper large-v3 achieve roughly 10-15% WER on Mandarin in clean audio. Tamil is considerably harder: baseline WER on untuned models ranges from 80% to more than 120%. After balanced fine-tuning on domain-specific Tamil speech data, production WER can reach 30-45% for contact centre audio. Mandarin and Bahasa typically achieve under 15% WER post-tuning. Tamil requires more investment, but a usable Tamil deployment is achievable within a reasonable pilot timeline.

How long does it take to deploy multilingual voice AI in a Southeast Asian contact centre?

A two-language proof of concept, covering Mandarin and English, can be completed in 90 days using existing platforms and pre-trained models. Adding Tamil or Bahasa as a third language with custom fine-tuning extends this to approximately 6 months for production readiness. Full regional deployment across five or more language markets typically requires 12 to 18 months, with the majority of time spent on data collection, labelling, and model governance rather than infrastructure.

Which platforms support Bahasa Indonesia, Mandarin, and Tamil simultaneously?

Google Cloud Speech-to-Text and Amazon Transcribe offer broad commercial language coverage including all three. For open-source deployments, OpenAI Whisper large-v3 covers all three languages but requires fine-tuning for Tamil accuracy. AI Singapore’s SEA-LION provides a regionally-tuned NLU layer for downstream understanding. A hybrid stack combining Whisper for ASR with SEA-LION for intent and entity understanding currently offers the strongest combination of accuracy and regional cultural awareness.

How do I measure ROI for multilingual voice AI in an APAC contact centre?

The four primary KPIs are: call deflection rate (percentage of calls fully resolved by AI without human escalation), average handle time (AHT) reduction on AI-assisted calls, CSAT score on AI-handled interactions versus human-handled baselines, and cost per contact. BCG’s 2025 research shows AI leaders focusing on 3.5 use cases generate 2.1 times the ROI of peers spreading across 6. Apply the same discipline: instrument one language market deeply before scaling.

The Competitive Window Is Closing

Three insights from this analysis deserve to sit at the top of your 2026 planning agenda. First, code-switching is the defining technical challenge for APAC voice AI, not language coverage. A system that speaks ten languages but breaks when a caller mixes two of them is not a multilingual system. Second, regional fine-tuning is mandatory, not optional. Tamil WER above 100% on an untuned model is not a configuration problem. It is a training data problem that requires deliberate investment to fix. Third, ROI is measurable within 90 days when you instrument correctly.

BCG projects AI and GenAI will contribute $120 billion to Southeast Asia’s GDP by 2027. The contact centre is one of the most direct channels for that value capture. But only if the AI can actually hear and understand the customer.

If your contact centre still routes Tamil-speaking customers to a separate queue, you are not running multilingual voice AI. You are running parallel monolingual systems at multilingual cost. The architecture exists. The models are available. The question is whether your organisation moves with the urgency the market requires.