What Is NVIDIA DeepStream?

NVIDIA DeepStream is a GPU-accelerated streaming analytics SDK built on GStreamer. It provides hardware-optimised plugins for video decode, multi-camera batching, TensorRT inference, object tracking, and cloud messaging assembled into a single pipeline. Developers use DeepStream to build production-grade Intelligent Video Analytics (IVA) applications for edge devices, on-premises GPUs, and cloud infrastructure, without managing each underlying NVIDIA library directly.

Why AI Video Analytics Can No Longer Rely on CPU-Bound Pipelines

Traditional CPU-based video pipelines cannot sustain real-time inference across multiple simultaneous streams. NVIDIA DeepStream addresses this by running decode, preprocessing, and inference on GPU hardware within a single unified framework.

The numbers are hard to ignore. According to Grand View Research (2024), the global AI video analytics market was valued at $12.6 billion in 2024 and is forecast to reach $71.3 billion by 2033 at a CAGR of 21.4%. That growth is not driven by dashboards and quarterly reports; it is driven by cameras running inference at the edge, right now, in warehouses, retail floors, and city streets.

The bottleneck teams hit first is not model accuracy. It is throughput. A single H.264 1080p stream decoded on CPU burns roughly 30–40% of one core. Add inference and you are out of headroom before you process a second stream. GPU-accelerated pipelines change that equation entirely.

“The bottleneck in AI video is never the model, it is the pipeline. Fix the pipeline first.”

What Is the NVIDIA DeepStream SDK? Architecture and Core Components

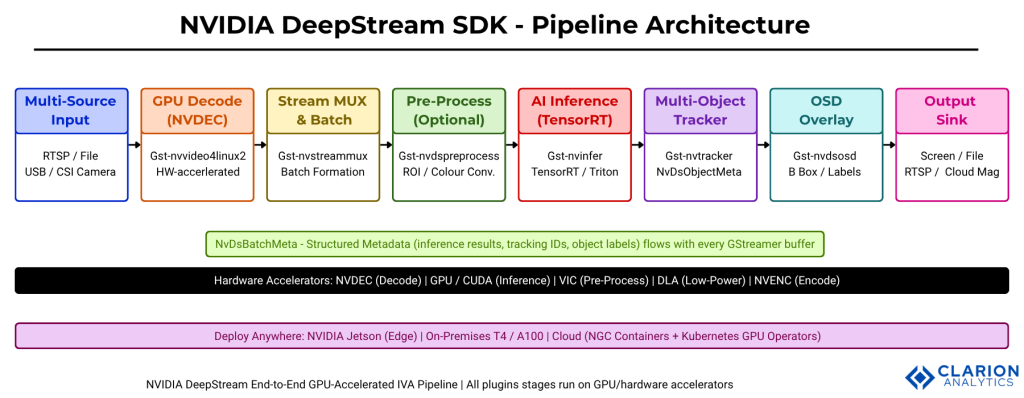

DeepStream is built on GStreamer and exposes more than 40 hardware-accelerated plugins. The core pipeline flows from source input through NVDEC decode, stream muxing, TensorRT inference, object tracking, OSD overlay, and output sink.

At its foundation, DeepStream’s documentation describes the SDK as an “optimized graph architecture built using the open source GStreamer framework.” Every component is a GStreamer plugin. Every frame carries a NvDsBatchMeta structure that accumulates inference results, object bounding boxes, tracking IDs, and class labels as it moves through the graph.

The hardware acceleration stack underneath each plugin is worth understanding. Video decode uses NVDEC. Color conversion and dewarping use VIC (Vision Image Compositor). Inference accelerates through TensorRT or NVIDIA Triton Inference Server. Encoding for output uses NVENC. The CPU handles only I/O and orchestration.

According to NVIDIA’s DeepStream overview (2024), the SDK now supports generative AI agents that can create complete video analytics pipelines from natural language prompts, reducing development time from weeks to hours.

Figure 1: NVIDIA DeepStream End-to-End Pipeline Architecture. The pipeline begins with multi-source inputs (RTSP, file, USB/CSI camera) decoded on NVDEC hardware. Gst-nvstreammux batches frames from all sources. Gst-nvinfer runs TensorRT or Triton inference. Gst-nvtracker assigns persistent object IDs. Gst-nvdsosd renders overlays. The output sink routes to screen, file, RTSP, or cloud via Gst-nvmsgbroker. NvDsBatchMeta carries all results between plugins. DeepStream containers deploy identically on Jetson edge devices, on-premises GPUs, and cloud infrastructure.

Key Use Cases – Where Teams Deploy DeepStream Today

DeepStream is deployed in smart cities for traffic monitoring, in retail for shopper analytics, in manufacturing for defect detection, and in healthcare for patient safety monitoring: any domain where video must become structured data in real time.

Smart Cities and Traffic Management

City traffic systems use DeepStream to count vehicles, detect incidents, and monitor pedestrian flow across dozens of intersection cameras simultaneously. The multi-camera batching model means a single GPU processes feeds from multiple junctions with one shared inference engine, which reduces hardware cost compared with per-camera processing.

Retail Analytics and Loss Prevention

Retailers run DeepStream pipelines to track customer dwell time, queue length, and product interaction without storing raw video. The metadata-only output model reduces storage requirements and sidesteps many privacy concerns, since NvDsBatchMeta logs coordinates and classifications, not pixels.
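As a rough illustration of metadata-only analytics, the sketch below derives per-object dwell time from tracker IDs and timestamps alone, with no pixel data involved. The `Observation` record and the `dwell_times` helper are hypothetical names introduced for this example, not part of the DeepStream API; in a real pipeline the values would be read from each object's tracking ID and the frame timestamp.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    """Hypothetical record: one tracked object seen in one frame."""
    object_id: int      # persistent ID assigned by the tracker
    timestamp_s: float  # frame time in seconds


def dwell_times(observations):
    """Return {object_id: seconds between first and last sighting}."""
    first_seen = {}
    last_seen = {}
    for obs in observations:
        oid, ts = obs.object_id, obs.timestamp_s
        # Track the earliest and latest timestamp per object, in any input order.
        first_seen[oid] = min(ts, first_seen.get(oid, ts))
        last_seen[oid] = max(ts, last_seen.get(oid, ts))
    return {oid: last_seen[oid] - first_seen[oid] for oid in first_seen}
```

Because only IDs and timestamps are kept, the stored record per sighting is a few bytes rather than a video frame.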

Industrial Quality Inspection

In manufacturing, DeepStream feeds conveyor belt cameras into defect detection models at 60fps without dropped frames. The NVIDIA Technical Blog (Nov 2024) documents a real-time visual inspection pipeline for defect detection using TAO 6 and DeepStream 8, achieving sub-30ms end-to-end latency on Jetson edge hardware.

Healthcare and Safety Monitoring

Hospital and care facility systems use DeepStream for fall detection and patient safety monitoring. The secondary classifier pattern, where the primary model detects persons and a secondary model classifies posture, is a clean fit for this use case with minimal pipeline modification.

DeepStream vs. Alternative Approaches – Which Should You Choose?

DeepStream is the best choice when you need multi-stream GPU-accelerated inference at the edge or in a data centre. Custom OpenCV pipelines suit simpler, single-stream applications; cloud-only solutions add latency that makes real-time response impractical.

| Option | Key Strength | Best Used When | Latency Profile |
|---|---|---|---|
| NVIDIA DeepStream SDK | Full GPU acceleration; 40+ plugins; TensorRT + Triton; edge-to-cloud deployment | Multi-stream, low-latency production inference on NVIDIA hardware | < 30ms |
| Custom OpenCV + PyTorch | Full flexibility; no SDK dependency; wide community support | Single-stream prototyping or non-NVIDIA GPU environments | 50–200ms |
| Cloud-only (AWS Rekognition, GCP Video AI) | Zero infrastructure; fully managed service | Non-real-time workloads where 200–500ms latency is acceptable | 200–500ms |
| NNStreamer (open source) | Hardware-agnostic; supports ARM and non-NVIDIA devices | Deployments on ARM/embedded devices without NVIDIA GPUs | Varies |

“DeepStream is not just an inference wrapper, it is a complete production pipeline runtime that happens to include GPU acceleration.”

System Architecture Deep Dive – Plugins, Metadata, and NvDsBatchMeta

DeepStream passes inference results between plugins using NvDsBatchMeta, a structured metadata object attached to every GStreamer buffer. Developers probe this metadata in Python or C++ to access bounding boxes, class labels, tracking IDs, and confidence scores.

The Gst-nvinfer plugin documentation describes how the plugin attaches NvDsInferTensorMeta to each frame’s metadata list, giving downstream probes direct access to raw tensor output when needed. For most applications, the parsed NvDsObjectMeta (bounding box, class ID, confidence) is sufficient.

In practice, teams building custom analytics find that the metadata probe pattern is the key architectural decision. A Python pad probe sits between any two plugins and intercepts NvDsBatchMeta on every buffer without blocking the GPU pipeline. All analytics logic (counting, zone crossing, event emission) lives in probe callbacks, not in forked pipeline branches.
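The probe pattern described above can be sketched as follows. The metadata traversal mirrors the loop used in the deepstream-test1 Python sample (`pyds.gst_buffer_get_nvds_batch_meta`, `NvDsFrameMeta.cast`, `NvDsObjectMeta.cast`); the `count_by_class` helper is a hypothetical name added here so the analytics step can be exercised without DeepStream installed. Treat it as a minimal sketch rather than a complete application.

```python
from collections import Counter


def count_by_class(class_ids):
    """Pure-Python analytics step: tally detections per class ID."""
    return Counter(class_ids)


def osd_sink_pad_buffer_probe(pad, info, u_data):
    """Pad probe callback: walk NvDsBatchMeta and count objects per frame.

    Imports are deferred so this module still loads on machines
    without DeepStream; the probe itself needs pyds and GStreamer.
    """
    import pyds
    from gi.repository import Gst

    gst_buffer = info.get_buffer()
    if not gst_buffer:
        return Gst.PadProbeReturn.OK

    # Batch metadata attached to the buffer by upstream DeepStream plugins.
    batch_meta = pyds.gst_buffer_get_nvds_batch_meta(hash(gst_buffer))
    l_frame = batch_meta.frame_meta_list
    while l_frame is not None:
        try:
            frame_meta = pyds.NvDsFrameMeta.cast(l_frame.data)
        except StopIteration:
            break

        class_ids = []
        l_obj = frame_meta.obj_meta_list
        while l_obj is not None:
            try:
                obj_meta = pyds.NvDsObjectMeta.cast(l_obj.data)
            except StopIteration:
                break
            class_ids.append(obj_meta.class_id)
            try:
                l_obj = l_obj.next
            except StopIteration:
                break

        print(frame_meta.frame_num, dict(count_by_class(class_ids)))
        try:
            l_frame = l_frame.next
        except StopIteration:
            break

    # Returning OK lets the buffer continue downstream unchanged.
    return Gst.PadProbeReturn.OK
```

In the sample application the callback is attached to the sink pad of the OSD element with `pad.add_probe(Gst.PadProbeType.BUFFER, osd_sink_pad_buffer_probe, 0)`. Keeping the counting logic in a separate pure function makes it straightforward to unit-test away from the GPU.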

Code Snippet 1 – Building a Minimal DeepStream Object Detection Pipeline in Python

Source: NVIDIA-AI-IOT/deepstream_python_apps – apps/deepstream-test1/deepstream_test_1.py

The deepstream-test1 sample constructs a GStreamer pipeline in Python with four main stages (source, GPU decode, TensorRT inference, and OSD render) using only a short block of setup code. For developers coming from OpenCV, the key shift is that you declare a pipeline graph rather than writing a frame-processing loop. Inference, decode, and rendering all happen on dedicated GPU hardware without any explicit frame extraction in Python.
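A minimal sketch of that pipeline shape, written as a gst-launch style description rather than copied from deepstream_test_1.py, could look like the following. The element names (`filesrc`, `h264parse`, `nvv4l2decoder`, `nvstreammux`, `nvinfer`, `nvvideoconvert`, `nvdsosd`) are the ones the sample uses; the `build_pipeline_description` helper, the default resolution, and the choice of `nveglglessink` as the display sink are assumptions made for illustration, and the right sink varies by platform.

```python
def build_pipeline_description(h264_path, pgie_config, width=1920, height=1080):
    """Return a gst-launch style description of a single-stream detection pipeline.

    Paths are inserted verbatim, so this sketch assumes they contain no spaces.
    """
    # Source and hardware decode, linked to request pad sink_0 of the muxer.
    decode_chain = " ! ".join([
        f"filesrc location={h264_path}",
        "h264parse",
        "nvv4l2decoder",
        "mux.sink_0",
    ])
    # Batching, TensorRT inference, overlay rendering, and display.
    infer_chain = " ! ".join([
        f"nvstreammux name=mux batch-size=1 width={width} height={height}",
        f"nvinfer config-file-path={pgie_config}",
        "nvvideoconvert",
        "nvdsosd",
        "nveglglessink",  # assumption: an EGL display sink is available
    ])
    return f"{decode_chain} {infer_chain}"


if __name__ == "__main__":
    # On a machine with DeepStream installed, the string could be handed to
    # Gst.parse_launch() after Gst.init(None) to obtain a runnable pipeline.
    print(build_pipeline_description("sample_720p.h264", "dstest1_pgie_config.txt"))
```

The sample application itself creates and links each element individually, which gives finer control over pads and properties; the description string is simply a compact way to see the graph at a glance.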

Implementation Guide – From Installation to Your First Pipeline

DeepStream installs via a Debian package or Docker container on Ubuntu. Full pipeline configuration requires only a .txt config file for standard models. Custom models require ONNX export and TensorRT engine generation via trtexec.

Installation Prerequisites

Per the NVIDIA DeepStream Installation Guide, DeepStream 9.0 requires Ubuntu 24.04, NVIDIA driver 590.48.01+, TensorRT 10.14+, and CUDA. Install dependencies first:

```bash
sudo apt install libssl3 libssl-dev libgstreamer1.0-0 \
    gstreamer1.0-tools gstreamer1.0-plugins-good \
    gstreamer1.0-plugins-bad gstreamer1.0-plugins-ugly \
    libjansson4 libyaml-cpp-dev libjsoncpp-dev

# Install DeepStream package
sudo tar -xvf deepstream_sdk_v9.0.0_x86_64.tbz2 -C /
cd /opt/nvidia/deepstream/deepstream-9.0/ && sudo ./install.sh
```

Integrating Custom YOLO Models

The DeepStream-Yolo repository by marcoslucianops demonstrates the standard custom model integration pattern: export YOLO to ONNX, call trtexec to generate a TensorRT engine, and reference it in the nvinfer config file.
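The export-then-reference flow can be sketched in a few lines of Python. The `trtexec` flags (`--onnx`, `--saveEngine`, `--fp16`) and the nvinfer `[property]` keys shown are standard ones, but the helper names, file names, and chosen values are hypothetical, and this is not the DeepStream-Yolo repository's own tooling. YOLO models generally also need a custom output parser configured in the same file, which this sketch omits.

```python
import configparser
import io


def trtexec_command(onnx_path, engine_path, fp16=True):
    """Build the argv list for generating a TensorRT engine from an ONNX file."""
    cmd = ["trtexec", f"--onnx={onnx_path}", f"--saveEngine={engine_path}"]
    if fp16:
        cmd.append("--fp16")
    return cmd


def nvinfer_config_text(onnx_path, engine_path, labels_path, num_classes, fp16=True):
    """Render a minimal nvinfer [property] section as key=value text."""
    config = configparser.ConfigParser(interpolation=None)
    config["property"] = {
        "gpu-id": "0",
        "onnx-file": onnx_path,
        "model-engine-file": engine_path,
        "labelfile-path": labels_path,
        "batch-size": "1",
        "network-mode": "2" if fp16 else "0",  # 0 = FP32, 2 = FP16
        "num-detected-classes": str(num_classes),
        "gie-unique-id": "1",
    }
    buffer = io.StringIO()
    config.write(buffer, space_around_delimiters=False)
    return buffer.getvalue()


if __name__ == "__main__":
    # File names below are placeholders for illustration only.
    print(" ".join(trtexec_command("yolo.onnx", "yolo.engine")))
    print(nvinfer_config_text("yolo.onnx", "yolo.engine", "labels.txt", 80))
```

The command list can be passed to `subprocess.run` on the target machine. Building the engine on the same GPU model that will run it is the safer default, since TensorRT engines are tuned for the device they are generated on.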

Code Snippet 2 – Adding Multi-Object Tracking and Secondary Classification

Source: NVIDIA-AI-IOT/deepstream_python_apps – apps/deepstream-test2/deepstream_test_2.py

The deepstream-test2 sample extends the basic pipeline with Gst-nvtracker between inference and the OSD, assigning each detected object a persistent object_id across frames. The secondary GIE runs attribute classification only on tracked objects, avoiding redundant inference on already-classified items. For retail or traffic use cases, this combination of tracker and secondary classifier is the production baseline.
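The ordering of those elements can be sketched as a gst-launch style fragment. The `nvtracker` properties `ll-lib-file` and `ll-config-file` are real plugin properties; the `build_tracking_chain` helper and all file paths are hypothetical placeholders. The sketch also assumes the second `nvinfer` instance is switched to secondary mode inside its own config file rather than on the command line.

```python
def build_tracking_chain(pgie_config, sgie_config, tracker_lib, tracker_config):
    """Return the inference section of a pipeline: detect, track, classify, draw."""
    return " ! ".join([
        # Primary detector: produces bounding boxes for every batched frame.
        f"nvinfer config-file-path={pgie_config}",
        # Tracker: attaches a persistent object ID to each detection.
        f"nvtracker ll-lib-file={tracker_lib} ll-config-file={tracker_config}",
        # Secondary classifier: operates on objects found by the detector.
        f"nvinfer config-file-path={sgie_config}",
        "nvvideoconvert",
        "nvdsosd",
    ])


if __name__ == "__main__":
    # Placeholder file names; substitute the ones shipped with your install.
    print(build_tracking_chain("pgie.txt", "sgie.txt", "libtracker.so", "tracker.yml"))
```

Placing the tracker before the secondary classifier is what lets classification results be associated with a stable ID instead of being recomputed from scratch for every frame.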

“Teams building DeepStream pipelines typically find that tracker configuration, not model integration, is where most tuning time goes.”

Deploying DeepStream – Edge, On-Premises, and Cloud

DeepStream containers run identically on NVIDIA Jetson edge devices, on-premises T4/A100 GPUs, and cloud instances. Kubernetes with NVIDIA GPU Operator handles orchestration at scale.

The 2025 survey on Cloud-Edge-Terminal Collaborative Systems (arXiv:2502.06581) confirms that distributing video processing across cloud, edge, and terminal devices is now the dominant architecture for production IVA, enabling adaptive analytics and privacy-preserving analysis that cloud-only approaches cannot achieve.

DeepStream runs on NVIDIA T4, Hopper, Blackwell, Ampere, Ada, and Jetson AGX Thor platforms, per NVIDIA’s installation documentation (2025). Docker containers from NGC allow teams to build once and deploy to any supported platform without recompiling.

For multi-site deployments, Kubernetes with a GPU Operator and NVIDIA DCGM handles GPU resource scheduling and monitoring. A sample Helm chart is available on NGC for teams starting with Kubernetes orchestration.
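As a starting point before adopting a Helm chart, a single-pod manifest that requests one GPU can be generated programmatically. This is a minimal sketch, assuming the cluster already exposes GPUs through the `nvidia.com/gpu` resource name; the `deepstream_pod_manifest` helper, the pod name, and the image tag are placeholders, not values from NVIDIA's sample chart.

```python
import json


def deepstream_pod_manifest(name, image, gpus=1):
    """Return a Kubernetes Pod manifest (as a dict) requesting NVIDIA GPUs."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "restartPolicy": "OnFailure",
            "containers": [{
                "name": "deepstream",
                "image": image,
                # GPU limit that the scheduler uses to place the pod.
                "resources": {"limits": {"nvidia.com/gpu": gpus}},
            }],
        },
    }


if __name__ == "__main__":
    # "<tag>" is a placeholder; pick the container tag that matches your platform.
    manifest = deepstream_pod_manifest("ds-demo", "nvcr.io/nvidia/deepstream:<tag>")
    print(json.dumps(manifest, indent=2))
```

The JSON output can be saved to a file and applied with `kubectl apply -f`, since Kubernetes accepts JSON manifests as well as YAML.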

Frequently Asked Questions About NVIDIA DeepStream

What hardware does NVIDIA DeepStream run on?

DeepStream runs on any NVIDIA GPU supporting CUDA, including Jetson edge devices (Nano through AGX Thor), data centre GPUs (T4, A100, H100), and consumer RTX cards for development. For production, NVIDIA recommends enterprise-grade GPUs designed for 24/7 operation. The SDK supports NVIDIA T4, Hopper, Blackwell, Ampere, Ada, and Jetson platforms.

Can I use DeepStream with PyTorch or TensorFlow models?

Yes. DeepStream integrates with NVIDIA Triton Inference Server via the Gst-nvinferserver plugin, which supports PyTorch, TensorFlow, ONNX, and TensorRT backends. For maximum throughput, exporting your model to ONNX and generating a TensorRT engine with trtexec is the recommended path. See the NVIDIA Triton integration docs for configuration details.

How many video streams can DeepStream process simultaneously?

Stream density depends on resolution, frame rate, model size, and GPU. On a single NVIDIA T4 GPU running 1080p streams with a lightweight detection model at 30fps, teams typically achieve 16–32 simultaneous streams. Setting the nvinfer interval property so that inference runs only on every second batch, and letting the tracker carry objects through the skipped frames, can roughly double stream density without significant accuracy loss.
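The arithmetic behind that doubling can be made explicit. In the sketch below, `interval` follows the nvinfer meaning (the number of consecutive batches skipped between inferences), while the detector budget of 480 frames per second is a hypothetical figure chosen to match 16 streams at 30fps, not a measured T4 benchmark.

```python
def inferred_frames_per_second(streams, fps, interval=0):
    """Frames per second that actually reach the detector.

    interval=0 infers on every batch; interval=1 infers on every other batch.
    """
    return streams * fps / (interval + 1)


def max_streams(detector_fps_budget, fps, interval=0):
    """Largest stream count whose inference load fits the detector budget."""
    return int(detector_fps_budget * (interval + 1) // fps)


if __name__ == "__main__":
    budget = 480  # hypothetical detector throughput in frames per second
    for interval in (0, 1):
        print(interval, max_streams(budget, fps=30, interval=interval))
```

Decode and tracking still run on every frame, so the detector budget is only one of several limits; in practice decoder capacity or memory bandwidth may cap density before inference does.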

What is the difference between DeepStream and NVIDIA Metropolis?

NVIDIA Metropolis is the end-to-end platform for building vision AI services. DeepStream is the streaming analytics SDK within Metropolis. Metropolis also includes TAO Toolkit for model training, Triton for serving, and the VSS Blueprint for video search and summarisation. Per NVIDIA’s official documentation, DeepStream is the core runtime; Metropolis is the surrounding ecosystem.

Is NVIDIA DeepStream free to use?

DeepStream SDK is free to download and use under NVIDIA’s SDK license agreement. Commercial deployment on enterprise GPU hardware is covered by that license. Containers are available on NGC for both free and enterprise tiers. Review the current NVIDIA DeepStream Software License Agreement on the DeepStream Getting Started page before production deployment.

Key Takeaways and Next Steps

Three things matter most when evaluating NVIDIA DeepStream. First, the pipeline model, connecting hardware-accelerated plugins as a GStreamer graph rather than writing frame loops, is the mental shift that unlocks real throughput gains. Second, NvDsBatchMeta is the integration surface: everything your application needs (detections, tracks, classifications) flows through that metadata object, and your analytics logic lives in probe callbacks. Third, the container-first deployment model means the same pipeline code runs on a Jetson at the edge and on a T4 in the cloud, removing the environment mismatch that slows production rollouts.

The AI video analytics market (Grand View Research, 2024) is growing at over 21% annually. The teams capturing that opportunity are the ones with production-ready pipelines today, not the ones still benchmarking CPU alternatives.

Start with the deepstream-test1 Python sample to validate your environment, then extend to test2 for tracking. From there, swap in your own TensorRT engine file and you have a production-ready baseline in a few hours, not weeks.

“The fastest path from a trained model to a live, multi-stream production deployment runs through DeepStream.”