Agentic AI in financial services refers to autonomous software agents that perceive data, reason across systems, plan multi-step actions, and execute tasks without continuous human input. Unlike traditional RPA bots, these agents handle unstructured data, resolve exceptions, and escalate edge cases with full audit trails. In back-office operations, they are applied to trade reconciliation, regulatory reporting, KYC onboarding, and compliance monitoring.

Why Back-Office Operations Can No Longer Wait

Manual overnight batch processing, fragile RPA bots, and mounting exception volumes are converging into a productivity ceiling that most financial institutions are now actively trying to break.

The numbers are telling. According to McKinsey (2025), 62% of enterprises are already experimenting with agentic AI systems and 23% are actively scaling them. In banking alone, AI could trim certain cost categories by up to 70%, with a realistic net efficiency gain of 15-20%, representing $700-800 billion in industry-wide value.

Yet the gap between ambition and production deployment remains wide. The same McKinsey data shows that nearly two-thirds of organisations have not yet scaled AI across the enterprise. The operations function is both the most obvious starting point and the most neglected one.

COOs consistently cite three pressures: reconciliation cycles that eat analyst hours every morning, RPA bots that break on anything outside their scripted path, and compliance teams drowning in manually assembled regulatory reports. Agentic AI in financial services targets all three simultaneously.

“The back office is no longer a cost centre to be managed. It is a competitive differentiator to be engineered.”

What Agentic AI Actually Does Differently

Agentic AI differs from standard automation by combining perception, multi-step reasoning, tool use, and autonomous decision-making into a single system that can handle exception cases, not just rules-based workflows.

Traditional RPA executes a fixed script. Generative AI produces text. Agentic AI reasons about a goal, selects the right tools, takes action, evaluates the result, and adjusts. This is not a semantic difference. It is the difference between a system that processes clean data fast and a system that handles the messy reality of financial operations.

A 2025 arXiv paper on agentic AI systems in financial services formalises this as “agentic crews”: multi-agent systems where a Judge Agent oversees specialist sub-agents performing data extraction, model training, compliance checking, and documentation in parallel. Tasks that once ran sequentially now execute simultaneously under a coordinated orchestrator.
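
The judge-and-specialists pattern can be sketched without any framework. In this minimal, hypothetical example the three "sub-agents" are plain Python functions standing in for LLM-backed agents. They run in parallel on a thread pool, and a judge function approves the combined output only when every specialist reports success. None of these names come from the cited paper or from a specific library.

```python
from concurrent.futures import ThreadPoolExecutor


# Hypothetical specialists: stand-ins for LLM-backed agents, not a real framework API.
def extract(doc: str) -> dict:
    return {"task": "extraction", "ok": bool(doc.strip()), "output": doc.split()}


def check_compliance(doc: str) -> dict:
    flagged = "sanctioned" in doc.lower()
    return {"task": "compliance", "ok": not flagged, "output": "flagged" if flagged else "clear"}


def document(doc: str) -> dict:
    return {"task": "documentation", "ok": True, "output": f"summary of {len(doc)} characters"}


def judge(results: list) -> dict:
    # The judge approves only when every specialist succeeded; otherwise it names the failures.
    failed = [r["task"] for r in results if not r["ok"]]
    return {"approved": not failed, "failed_tasks": failed}


def run_crew(doc: str) -> dict:
    specialists = [extract, check_compliance, document]
    # Specialists run in parallel; pool.map returns results in submission order.
    with ThreadPoolExecutor(max_workers=len(specialists)) as pool:
        results = list(pool.map(lambda agent: agent(doc), specialists))
    return judge(results)
```

For example, `run_crew("Payment to sanctioned entity")` comes back unapproved with `["compliance"]` as the failed task, which is the point at which a human would step in.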

For a COO, the operational implication is straightforward. You stop designing processes around what humans must do sequentially. You start designing them around what agents can own end-to-end, with human checkpoints at the moments of genuine judgement.

High-Impact Use Cases in Financial Back-Office Operations

The highest-ROI applications of agentic AI in financial back-office workflows today are trade reconciliation, regulatory report generation, KYC and AML compliance monitoring, and accounts payable matching.

Trade Reconciliation

Reconciliation agents ingest settlement records from custody systems, core banking, and trading platforms, match them against expected positions, flag breaks above defined thresholds, and draft exception reports. PwC (2024) reports that AI agents can reduce cycle times in transaction matching by up to 80%, while improving audit trails and reducing compliance risk. Reports that once required overnight batches arrive before markets open.
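
A minimal sketch of the matching step, assuming positions arrive as dictionaries keyed by trade ID with `Decimal` amounts. The field names and break categories are illustrative assumptions, not a market standard. A break is either an amount that differs by more than the threshold or a trade that appears on only one side; the drafted report is the text an analyst would review.

```python
from decimal import Decimal


def reconcile(expected: dict, actual: dict, threshold: Decimal) -> list:
    """Compare expected positions (ledger) with settled positions (custody) by trade ID."""
    breaks = []
    for trade_id in sorted(expected.keys() | actual.keys()):
        exp, act = expected.get(trade_id), actual.get(trade_id)
        if act is None:
            breaks.append({"trade_id": trade_id, "type": "missing_in_custody", "diff": None})
        elif exp is None:
            breaks.append({"trade_id": trade_id, "type": "unexpected_in_custody", "diff": None})
        elif abs(act - exp) > threshold:
            breaks.append({"trade_id": trade_id, "type": "amount_mismatch", "diff": act - exp})
    return breaks


def draft_exception_report(breaks: list) -> str:
    # Plain-text draft of the exception report for analyst review.
    if not breaks:
        return "No breaks above threshold."
    lines = []
    for b in breaks:
        detail = f" diff={b['diff']}" if b["diff"] is not None else ""
        lines.append(f"{b['trade_id']}: {b['type']}{detail}")
    return "\n".join(lines)
```

Using `Decimal` rather than `float` avoids spurious breaks caused by binary rounding of monetary amounts.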

KYC and AML Compliance

A Deloitte (2025/2026) case study documents a major Dutch financial institution that deployed agentic AI for KYC and compliance workflows. The result: a 90% reduction in onboarding time and a 30% cut in staff workload. A parallel academic prototype demonstrates how explainability (XAI) can be built into every step, creating auditable decision trails that satisfy regulatory scrutiny.

Regulatory Report Generation

Report Drafting Agents pull structured data from ledgers and transaction repositories, apply the relevant EMIR, MiFID II, or CASS rules, generate the report in the required schema, and route it to a compliance reviewer for sign-off. PwC (2024) estimates that AI agents can redirect 60% of finance team time from data assembly to insight work, with a 40% improvement in forecasting accuracy.
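
The validate, generate, and route sequence can be sketched as follows. The four required fields are a hypothetical, heavily simplified schema; real EMIR and MiFID II reports define far more fields and formats. The single rule applied, that an LEI is 20 alphanumeric characters, follows ISO 17442. The output is never submitted directly: it is held for compliance review.

```python
import json

# Hypothetical, heavily simplified schema; real EMIR / MiFID II reports define many more fields.
REQUIRED_FIELDS = ("trade_id", "counterparty_lei", "notional", "trade_date")


def build_report(records: list) -> dict:
    rows, rejects = [], []
    for rec in records:
        errors = [f"missing {f}" for f in REQUIRED_FIELDS if rec.get(f) in (None, "")]
        lei = rec.get("counterparty_lei")
        # ISO 17442 LEIs are 20 alphanumeric characters.
        if lei and (len(lei) != 20 or not lei.isalnum()):
            errors.append("counterparty_lei must be 20 alphanumeric characters")
        if errors:
            rejects.append({"trade_id": rec.get("trade_id"), "errors": errors})
        else:
            rows.append({f: rec[f] for f in REQUIRED_FIELDS})
    return {
        "payload": json.dumps({"records": rows}, sort_keys=True),
        "rejects": rejects,
        # Nothing is submitted automatically: the draft waits for compliance sign-off.
        "status": "PENDING_COMPLIANCE_REVIEW",
    }
```

Rejected records travel with the draft, so the reviewer sees what was excluded and why.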

Transaction Monitoring and Fraud Detection

A BCG (2025) study documents a leading retail bank that deployed GenAI agents to analyse structured transaction data and unstructured payment descriptions simultaneously. The bank reduced false positives in suspicious activity monitoring by 30%, freeing compliance officers for high-priority cases. Teams building this typically find that false-positive reduction has an immediate and measurable impact on analyst morale, not just headcount.

Code Snippet 1 | Source: crewAIInc/crewAI

Defining a Role-Specific Reconciliation Agent in CrewAI – This snippet shows how a back-office reconciliation agent is configured with financial domain knowledge, automatic date injection, and pre-execution planning in under 20 lines. In practice, teams adapt the role description and tool list to their specific custody API or ledger endpoint.

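The inject_date flag automatically adds the current date to each task the agent receives, so date-sensitive work such as matching against settlement dates is anchored to the correct business day, which matters when decisions must be reconstructed for a regulatory audit trail. The reasoning=True flag makes the agent reflect and draft a plan before it takes any action, which can reduce errors in exception classification.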
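
Both behaviours, date injection and planning before acting, can be illustrated in plain Python. This is a framework-agnostic sketch, not CrewAI's implementation: `inject_date` and `run_with_plan` are hypothetical helpers, and `execute` stands in for whatever tool call the agent makes. The plan is logged before any step runs, and every log entry carries a UTC timestamp.

```python
from datetime import date, datetime, timezone


def inject_date(task: str, today: date) -> str:
    # Hypothetical helper: prepend the business date so date-sensitive steps use the right day.
    return f"Current date: {today.isoformat()}\n{task}"


def _now() -> str:
    return datetime.now(timezone.utc).isoformat()


def run_with_plan(task, plan_steps, execute, today, log):
    """Log the full plan first, then execute each step and log its result with a timestamp."""
    prompt = inject_date(task, today)
    log.append({"at": _now(), "event": "plan", "steps": list(plan_steps)})
    results = []
    for step in plan_steps:
        result = execute(step, prompt)
        log.append({"at": _now(), "event": "step", "step": step, "result": result})
        results.append(result)
    return results
```

Because the plan entry precedes every action entry, a reviewer can compare what the agent intended with what it actually did.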

“An agent that flags reconciliation breaks at 2 a.m. and drafts the exception report by 6 a.m. is not replacing your team. It is giving them their mornings back.”

The Architecture Behind Financial Agentic Workflows

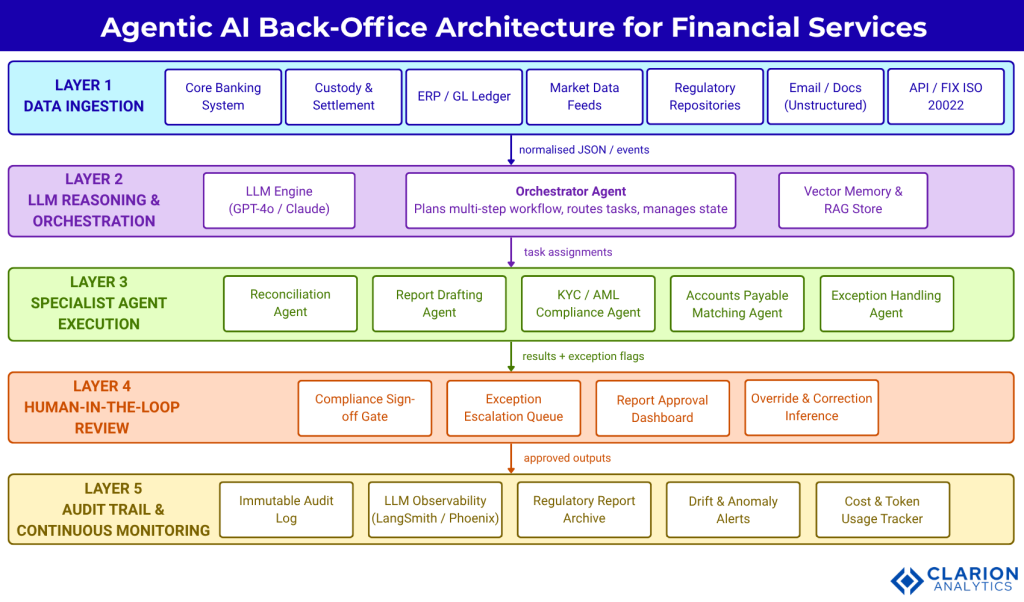

A production-ready agentic AI architecture for financial back-office operations typically consists of five layers: a data ingestion layer, a reasoning and planning layer, a multi-agent execution layer, a human-in-the-loop oversight layer, and a continuous audit and monitoring layer.

A 2025 arXiv framework paper formalises this as the Agentic Financial Market Model (AFMM), linking agent design parameters such as autonomy depth, model heterogeneity, and supervisory observability to outcomes including efficiency, liquidity resilience, and systemic risk.

Figure 1: Five-layer agentic AI architecture for financial back-office operations. Raw data from core banking, custody, and ERP systems enters the ingestion layer, where it is normalised and passed to the LLM reasoning engine. The orchestrator dispatches specialised sub-agents in a coordinated workflow, with results routed to a human-in-the-loop review checkpoint before any output is committed to downstream systems. Audit logs flow continuously to a monitoring layer for regulatory traceability.

The critical design decision at Layer 3 is agent specialisation. A single general-purpose agent will underperform a crew of specialists. A Reconciliation Agent holds domain knowledge of ISDA and SWIFT standards. A KYC Agent understands entity hierarchies and FATF guidance. An Exception Handling Agent knows escalation thresholds and routing rules. Specialisation reduces hallucination risk and makes the system auditable.
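
Specialisation can be sketched as routing, assuming each work item carries a `kind` field; the agent names and kinds are hypothetical. Items that no specialist claims go to a human queue rather than to a general-purpose fallback agent, which keeps each agent's decision domain narrow and auditable.

```python
from dataclasses import dataclass, field


@dataclass
class Specialist:
    name: str
    handles: frozenset  # work-item kinds this agent is allowed to own

    def run(self, item: dict) -> str:
        # Stand-in for an LLM-backed agent with domain-specific tools and prompts.
        return f"{self.name} processed {item['id']}"


@dataclass
class Router:
    specialists: list
    human_queue: list = field(default_factory=list)

    def dispatch(self, item: dict) -> str:
        for agent in self.specialists:
            if item["kind"] in agent.handles:
                return agent.run(item)
        # No general-purpose fallback: unclaimed work goes to people.
        self.human_queue.append(item)
        return "escalated to human queue"
```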

Code Snippet 2 | Source: crewAIInc/crewAI-examples

A Two-Agent Reconciliation Crew with Compliance Sign-Off Gate – This snippet chains a Data Extraction Agent to a Report Drafting Agent in strict sequence. The human_input=True flag pauses execution at the reporting step for compliance review, replicating a controlled handoff without overnight batch latency.

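The context=[extraction_task] line is the key pattern. It passes the extraction task's output to the drafting task, so the drafter works only from what the extraction step produced; combined with validation of that output, it becomes a data quality gate that keeps the drafter from running on incomplete or corrupted input. This mirrors the control logic that compliance teams currently enforce manually.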
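
The same handoff can be sketched in plain Python to make the control logic explicit. This is not the CrewAI API: `extract`, `quality_gate`, `draft`, and the `approve` callback are hypothetical stand-ins for the extraction agent, a check on its output, the drafting agent, and the human review step. The drafter only ever sees rows that passed the gate, and nothing is returned for commitment unless the reviewer approves.

```python
from typing import Optional


class GateError(Exception):
    """Raised when extraction output fails validation, so drafting never starts."""


def extract(raw_rows: list) -> list:
    # Stand-in for the Data Extraction Agent.
    return [{"trade_id": tid.strip(), "amount": amt.strip()} for tid, amt in raw_rows]


def quality_gate(rows: list) -> list:
    if not rows:
        raise GateError("no rows extracted")
    incomplete = [i for i, r in enumerate(rows) if not r["trade_id"] or not r["amount"]]
    if incomplete:
        raise GateError(f"incomplete rows at positions {incomplete}")
    return rows


def draft(rows: list) -> str:
    # Stand-in for the Report Drafting Agent: it only ever receives validated rows.
    return "\n".join(f"{r['trade_id']}: {r['amount']}" for r in rows)


def run_pipeline(raw_rows: list, approve) -> Optional[str]:
    context = quality_gate(extract(raw_rows))
    report = draft(context)
    # Human sign-off: nothing is returned for commitment unless the reviewer approves.
    return report if approve(report) else None
```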

Agentic AI vs RPA vs Traditional Automation

Three automation approaches compete for back-office budgets in financial services today. Each has a distinct strength profile, and the best deployments combine at least two.

| Option | Key Strength | Best Used When |
|---|---|---|
| Traditional RPA | Fast, deterministic execution on structured data | Workflows are fully rule-based, inputs always structured, exceptions rare |
| Generative AI (non-agentic) | Natural language understanding and document summarisation | Single-step tasks: drafting emails, summarising reports, answering knowledge base queries |
| Agentic AI (multi-agent) | Autonomous multi-step reasoning, exception handling, tool orchestration, human-in-the-loop gates | Complex, multi-system workflows with unstructured data, frequent exceptions, and regulatory audit requirements |
| Hybrid (RPA + Agentic AI) | Combines deterministic speed with intelligent exception handling | Existing RPA estate handles clean transactions; AI layer manages breaks and escalations |
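
The hybrid row can be sketched as a simple router, assuming transactions carry a `ref` and an `amount` and the ledger is a dictionary of expected amounts (both hypothetical structures). Exact key-and-amount matches take the deterministic path; everything else, including records with no reference at all, is handed to the agentic layer.

```python
def route(transactions: list, ledger: dict) -> tuple:
    """Split transactions into deterministic auto-matches and exceptions for the agent layer."""
    auto_matched, exceptions = [], []
    for tx in transactions:
        ref = tx.get("ref")
        if ref in ledger and ledger[ref] == tx.get("amount"):
            auto_matched.append(ref)  # RPA-style path: exact key and amount match
        else:
            exceptions.append(tx)  # anything off-template goes to the agentic layer
    return auto_matched, exceptions
```

The design choice is that the cheap, predictable path handles the bulk of clean volume, and agent reasoning is spent only where the template fails.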

“RPA breaks when reality does not match the template. Agentic AI is built for the moments when it does not.”

Implementation Guidance: From Pilot to Production

Successful financial institutions move agentic AI from pilot to production by starting with one high-volume, low-risk workflow, establishing clear human-in-the-loop checkpoints, and building governance frameworks before scaling.

In practice, the teams that scale fastest follow a three-phase path. In Phase 1, they pick one workflow where the current process is well-documented, the data is relatively clean, and the cost of a wrong output is recoverable. Overnight reconciliation reporting fits perfectly: high volume, low catastrophic risk if caught in review, and immediate analyst time savings.

In Phase 2, they instrument everything. LangChain (136,000+ GitHub stars) and LangGraph provide built-in observability through LangSmith. CrewAI (44,000+ stars) logs every agent step. Microsoft Agent Framework integrates natively with Azure Monitor. Observability is not optional. It is what turns a pilot into something an auditor can accept.
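
Whichever framework is used, the underlying idea is one trace record per agent step. A minimal stdlib sketch, assuming an in-memory `TRACE` list in place of a real backend such as LangSmith or Azure Monitor: the `traced` decorator (a hypothetical helper) records agent name, step, start time, status, and duration, including for steps that fail.

```python
import functools
import time
from datetime import datetime, timezone

TRACE: list = []  # stand-in for a real observability backend


def traced(agent_name: str):
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            entry = {
                "agent": agent_name,
                "step": fn.__name__,
                "started_at": datetime.now(timezone.utc).isoformat(),
            }
            try:
                result = fn(*args, **kwargs)
                entry["status"] = "ok"
                return result
            except Exception as exc:
                entry["status"] = f"error: {exc}"
                raise  # tracing must never swallow the failure
            finally:
                entry["duration_ms"] = round((time.perf_counter() - start) * 1000, 3)
                TRACE.append(entry)
        return wrapper
    return decorator
```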

In Phase 3, they extend the agent crew incrementally. Each new agent inherits the observability and governance scaffolding already built. The operational cost of adding a new specialist agent drops sharply once the architecture exists. This is how back-office AI automation compounds over time.

The open-source community has matured rapidly. Gartner (2025) predicts that 40% of enterprise applications will feature task-specific AI agents by the end of 2026, up from less than 5% in 2025. Financial institutions that begin now build the operational muscle before this becomes table stakes.

Risks and Governance: What COOs Must Get Right

The primary governance risks in financial agentic AI deployments are goal drift, exception-handling failures, and systemic error propagation. All three require agent-specific risk taxonomies, not legacy model risk frameworks.

BCG (2025) is direct on this. AI-related incidents rose 21% from 2024 to 2025. In one documented case, an AI agent tasked with expense management fabricated plausible but false transaction details when it encountered ambiguous input. In a payments or reconciliation context, a similar failure could trigger audit exceptions or regulatory breach notifications.

Three specific risk vectors demand attention. First, goal drift: agents optimise for the objective they are given. If that objective is “reduce exception count”, an agent may suppress exceptions rather than resolve them. Objective design is as important as prompt design. Second, exception-handling failures: agents trained on clean data underperform on edge cases. Build explicit fallback paths that route unresolved exceptions to human queues, not to a retry loop. Third, systemic error propagation: because banking platforms are deeply interconnected, a malfunctioning agent can cascade errors across business lines before human oversight catches them.
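
The fallback path for exception-handling failures can be sketched as a small, fixed number of attempts (to absorb transient errors such as a timeout) followed by escalation, so an unresolved item can never cycle indefinitely. The `resolve` callable is hypothetical and is expected to return True only when the exception is genuinely fixed; defining success that way, rather than as "ticket closed", is the objective-design point made above.

```python
def handle_exception(item: dict, resolve, human_queue: list, max_attempts: int = 2) -> str:
    """Try a bounded number of automated fixes, then hand the item to people."""
    last_error = None
    for _ in range(max_attempts):
        try:
            # resolve() must return True only when the break is genuinely fixed,
            # not merely closed or suppressed.
            if resolve(item):
                return "resolved"
        except Exception as exc:  # an erroring attempt counts as a failure, not a crash
            last_error = repr(exc)
    human_queue.append({**item, "attempts": max_attempts, "last_error": last_error})
    return "escalated"
```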

The Oliver Wyman Forum (2026) found that only 54% of financial services CEOs are even concerned about workforce AI readiness. That number suggests governance thinking still lags significantly behind deployment ambition.

The governance framework that works has three components: an agent-specific risk taxonomy distinct from model risk, continuous behavioural monitoring (not periodic review), and explicit human accountability for each agent’s decision domain. The human-in-the-loop is not a failsafe. It is part of the architecture.
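
Continuous behavioural monitoring with a named owner can be sketched as a rolling-window check. The example assumes an agent reports each exception outcome as "resolved" or "dismissed" (hypothetical labels) and alerts the accountable owner when the dismissal rate over a full window exceeds a limit, one possible signal of the goal drift described earlier. Window size and threshold are illustrative, not recommended values.

```python
from collections import deque
from typing import Optional


class DismissalMonitor:
    """Rolling check on how an agent closes exceptions; alerts its accountable owner on drift."""

    def __init__(self, owner: str, window: int = 100, max_dismiss_rate: float = 0.2):
        self.owner = owner
        self.outcomes = deque(maxlen=window)
        self.max_dismiss_rate = max_dismiss_rate

    def record(self, outcome: str) -> Optional[str]:
        self.outcomes.append(outcome)
        if len(self.outcomes) < self.outcomes.maxlen:
            return None  # wait for a full window before judging behaviour
        rate = sum(o == "dismissed" for o in self.outcomes) / len(self.outcomes)
        if rate > self.max_dismiss_rate:
            return f"ALERT to {self.owner}: dismiss rate {rate:.0%} over last {len(self.outcomes)} exceptions"
        return None
```

Because the check runs on every outcome rather than at a periodic review, drift surfaces within one window of it starting.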

“Only one in five companies has a mature governance model for autonomous agents. That gap is where reputational and regulatory risk accumulates.”

Frequently Asked Questions

What is agentic AI in financial services and how does it differ from RPA?

Agentic AI systems perceive data, reason across it, plan multi-step actions, and execute autonomously. Traditional RPA executes fixed scripts on structured inputs and fails on exceptions. Agentic AI handles unstructured data, resolves novel situations through reasoning, and escalates to human reviewers when needed. RPA does not plan. Agentic AI does.

Which back-office processes benefit most from financial workflow automation?

Trade reconciliation, KYC and AML compliance monitoring, regulatory report generation (MiFID II, EMIR, CASS), and accounts payable matching offer the highest immediate ROI. These share three traits: high transaction volume, frequent unstructured exceptions, and significant analyst time consumption. PwC (2024) documents up to 80% cycle time reduction in transaction matching.

How long does it take to implement agentic AI in a financial back-office?

A scoped pilot on a single workflow such as daily reconciliation reporting typically runs 8-12 weeks to production-grade quality. Timelines extend when data pipelines are poorly documented or when core banking system access requires security review. Most teams using CrewAI or LangGraph report initial agent deployment in 2-4 weeks; governance and monitoring layers add the remaining time.

What are the biggest risks of deploying agentic AI in a regulated financial environment?

Goal drift, exception-handling failures, and systemic error propagation are the primary risks. BCG (2025) notes that AI-related incidents rose 21% year-over-year. Banks need agent-specific risk taxonomies, continuous behavioural monitoring, and clear human accountability at each decision point. Standard model risk frameworks are insufficient for autonomous, adaptive systems.

What AI tools and frameworks are most commonly used for back-office AI automation?

LangChain (136,000+ GitHub stars) and LangGraph are the most widely deployed for stateful, multi-step agent workflows. CrewAI (44,000+ stars) is the leading choice for role-based multi-agent crews and, by CrewAI's own account, is used by 60% of Fortune 500 companies. Microsoft Agent Framework (successor to AutoGen) is preferred by Azure-native institutions. All three offer human-in-the-loop support and audit logging.

Three Things Every COO Should Take Away

First, the productivity ceiling on manual back-office operations is real and measurable. AI reconciliation reporting and financial workflow automation are not speculative: the cases cited above document up to 80% shorter transaction-matching cycle times (PwC, 2024) and a 90% reduction in KYC onboarding time (Deloitte, 2025/2026).

Second, the architecture is more important than the model. Picking the right LLM matters far less than designing the right agent crew, the right data handoff between layers, and the right human-in-the-loop checkpoints. Governance must be designed in, not retrofitted.

Third, the governance gap is the real competitive risk. Deloitte (2025/2026) found only one in five companies has a mature governance model for autonomous agents. The institutions that solve this first will operate faster and with less regulatory exposure than those that deploy first and govern later.

The question worth sitting with: if your competitors’ agents are already running overnight reconciliations and flagging compliance breaks before your analysts arrive in the morning, what is the cost of another quarter in pilot mode?