Definition: Generative computer vision foundation models are large neural networks pre-trained on hundreds of millions to billions of images and image-text pairs, capable of performing multiple visual tasks including image generation, segmentation, and zero-shot recognition without task-specific retraining. Models such as CLIP, SAM, and Stable Diffusion represent this category. They serve as base layers that developers fine-tune, prompt, or extend to build production vision systems across healthcare, manufacturing, and autonomous systems.

A Market Worth $38 Billion and Why Developers Need to Pay Attention

The generative AI computer vision market is growing from $7.8 billion in 2024 to a projected $38.67 billion by 2029, driven by demand for synthetic data, model-based inspection, and zero-shot visual understanding across industries. Developers and CTOs who plan their technology stack without accounting for foundation models risk building pipelines that will require expensive rewrites within 18 months.

According to The Business Research Company (2025), the generative AI computer vision market is growing at a 38% CAGR, one of the fastest expansion rates across any AI segment. That growth is not speculative. It reflects manufacturers already running vision-based defect inspection, hospitals using synthetic imaging data, and product teams shipping features powered by generative vision models.

The broader AI vision market tells a similar story. Grand View Research (2024) sized the global AI vision market at $15.85 billion and projects it will reach $108.99 billion by 2033. The generative AI segment within that market is projected to grow at the highest CAGR of any sub-segment, driven specifically by synthetic data generation and zero-shot capabilities.

For software developers and engineering leaders, this is not a future consideration. It is the decision in front of you today.

“Foundation models have shifted computer vision from task-specific pipelines to general-purpose visual intelligence, and that changes how every engineering team should plan its roadmap.”

What Makes a Model a Foundation Model in Computer Vision?

A vision foundation model is pre-trained on massive, diverse datasets and designed to generalize across tasks. Unlike narrow models trained for one job, foundation models can segment, classify, generate, and describe images with minimal or zero additional training.

McKinsey (2023) defines the distinction clearly: unlike previous deep learning models built for a single task, foundation models can process extremely large and varied sets of unstructured data and perform more than one task. This makes them structurally different from every CNN or detection network that came before.

Three characteristics define a vision foundation model. First, scale: these models are trained on datasets measured in billions of image-text pairs. Second, generality: they transfer to new tasks with little or no fine-tuning. Third, promptability: developers interact with them through natural language, points, boxes, or masks rather than retraining the entire network.

Gartner (2025) predicts that by 2027, more than half of GenAI models used by enterprises will be domain-specific, up from 1% in 2024. Foundation models are the starting point for each of those specialized deployments.

The Core Models Every Team Should Know

The three models that anchor generative computer vision today are CLIP (semantic understanding via contrastive learning), SAM 2 (promptable zero-shot segmentation), and Stable Diffusion (latent-space image generation). Each solves a different part of the vision problem.

CLIP – Bridging Language and Vision

CLIP, developed by OpenAI, learns visual representations by training on 400 million image-text pairs using contrastive learning. It maps images and text into a shared embedding space, enabling zero-shot image classification without a single labeled example. The research paper SAM-CLIP: Merging Vision Foundation Models (Wang et al., 2024) demonstrates that CLIP excels in semantic understanding while SAM specializes in spatial understanding, confirming these models are complementary rather than competitive.
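The scoring step behind zero-shot classification can be sketched in a few lines. This is a minimal illustration, not CLIP itself: the three-dimensional vectors are toy stand-ins for real image and text embeddings, the label prompts are hypothetical, and the temperature of 0.01 is an assumption chosen to mimic a logit scale of roughly 100.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def zero_shot_classify(image_embedding, text_embeddings, temperature=0.01):
    """Return a probability per label via softmax over scaled similarities.

    text_embeddings maps each candidate label prompt to its embedding.
    temperature is an assumed value, not read from any real checkpoint.
    """
    labels = list(text_embeddings)
    logits = [
        cosine_similarity(image_embedding, text_embeddings[label]) / temperature
        for label in labels
    ]
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(value - peak) for value in logits]
    total = sum(exps)
    return {label: e / total for label, e in zip(labels, exps)}


# Toy embeddings (hypothetical): a real system would obtain these from
# the CLIP image encoder and text encoder respectively.
toy_image = [0.9, 0.1, 0.2]
toy_text = {
    "a photo of a scratch": [1.0, 0.0, 0.1],
    "a photo of a dent": [0.0, 1.0, 0.0],
    "a photo of a clean surface": [0.1, 0.2, 1.0],
}

probs = zero_shot_classify(toy_image, toy_text)
best_label = max(probs, key=probs.get)
```

No labeled images are involved: changing the set of classes only means changing the text prompts, which is what makes the approach zero-shot.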

SAM and SAM 2 – Segment Anything, Anywhere

Meta’s Segment Anything Model is the most-starred open-source vision foundation model on GitHub, with over 52,000 stars. A comprehensive survey of SAM (Zhang et al., 2024) covering 192 papers confirms that SAM 2, released July 2024, extends promptable segmentation to video through a streaming memory architecture. Teams can run zero-shot segmentation on arbitrary objects — no labeled dataset, no fine-tuning.

Stable Diffusion – Latent Diffusion for Image Synthesis

Stable Diffusion operates by compressing images into a latent space, running the diffusion process there, then decoding back to pixels. Po et al. (2024) in Computer Graphics Forum confirm that diffusion models have become the architecture of choice for visual computing, with exponential growth in production tools for image generation, video synthesis, and 3D reconstruction.
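To make the compression concrete, the sketch below computes the latent tensor shape for a given image size. It assumes the configuration commonly used by Stable Diffusion 1.x (a spatial downsampling factor of 8 and 4 latent channels); other model families use different values, so treat the defaults as illustrative.

```python
def latent_shape(height, width, downsample_factor=8, latent_channels=4):
    """Shape (channels, height, width) of the latent for an input image.

    The defaults are assumptions matching the commonly cited SD 1.x setup.
    """
    if height % downsample_factor or width % downsample_factor:
        raise ValueError("image size must be divisible by the downsample factor")
    return (
        latent_channels,
        height // downsample_factor,
        width // downsample_factor,
    )


def compression_ratio(height, width, image_channels=3,
                      downsample_factor=8, latent_channels=4):
    """How many pixel-space values correspond to one latent-space value."""
    channels, h, w = latent_shape(height, width, downsample_factor, latent_channels)
    return (height * width * image_channels) / (channels * h * w)


shape_512 = latent_shape(512, 512)
ratio_512 = compression_ratio(512, 512)
```

Under these assumptions a 512x512 RGB image becomes a 4x64x64 latent, so the denoising network works on 48 times fewer values than it would in pixel space.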

Code Snippet 1: Stable Diffusion image generation via Hugging Face Diffusers

Source: huggingface/diffusers – pipeline_stable_diffusion.py
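A minimal sketch of the pattern, assuming the `torch` and `diffusers` packages are installed and that the checkpoint named in `model_id` is one you have access to (the default below is an assumption; substitute your own). The heavy imports live inside the function so that defining it costs nothing; calling it downloads weights and needs a capable GPU to run at practical speed.

```python
def pick_device_and_dtype(cuda_available):
    """Half precision on GPU, full precision on CPU."""
    return ("cuda", "float16") if cuda_available else ("cpu", "float32")


def generate_image(prompt,
                   model_id="stable-diffusion-v1-5/stable-diffusion-v1-5",
                   steps=30,
                   guidance_scale=7.5):
    """Load a checkpoint, move it to the device, and generate one image.

    model_id, steps and guidance_scale are illustrative defaults,
    not recommendations from the Diffusers project.
    """
    import torch
    from diffusers import StableDiffusionPipeline

    device, dtype_name = pick_device_and_dtype(torch.cuda.is_available())

    # 1. Load the checkpoint.
    pipe = StableDiffusionPipeline.from_pretrained(
        model_id, torch_dtype=getattr(torch, dtype_name)
    )
    # 2. Move it to the GPU (or stay on CPU if none is available).
    pipe = pipe.to(device)
    # 3. Generate; the pipeline returns an object with a list of PIL images.
    result = pipe(
        prompt,
        num_inference_steps=steps,
        guidance_scale=guidance_scale,
    )
    return result.images[0]


# Example usage (not executed here because it downloads model weights):
# image = generate_image("a macro photo of a scratched aluminium panel")
# image.save("synthetic_defect.png")
```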

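This pattern (load a checkpoint, move it to the GPU, generate) is the usual entry point for teams evaluating generative vision models for synthetic data or content pipelines. The Diffusers library abstracts many model architecture differences: when a checkpoint is loaded through the generic DiffusionPipeline or AutoPipelineForText2Image classes, switching from Stable Diffusion 1.5 to SDXL or FLUX often requires changing little more than the model string, although memory requirements and recommended generation parameters differ between models.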

“Three lines of Python now produce photorealistic images from a text prompt; the real challenge has moved from generation to controlled, production-safe deployment.”

Real-World Use Cases: Where These Models Are Already Working

Generative vision foundation models are being deployed in medical imaging augmentation, autonomous vehicle perception, industrial defect detection, retail virtual try-on, and content creation pipelines, solving data scarcity and reducing labeling cost in each domain.

Medical Imaging and Synthetic Data

McKinsey (2024) reports that 65% of organizations are regularly using generative AI, nearly double 2023 adoption. In healthcare, generative models solve a fundamental bottleneck: acquiring large labeled medical imaging datasets is expensive and often impossible at scale. Teams use diffusion models to generate synthetic radiology scans for rare conditions, then train diagnostic classifiers on augmented datasets.

Autonomous Vehicles and Robotics

SAM 2’s streaming memory architecture processes video frames in real time, making it viable for robotics perception pipelines. Autonomous vehicle teams use SAM to generate pixel-accurate segmentation masks for new road scenarios without manually labeling thousands of frames. CLIP handles the semantic classification layer, identifying road signs and obstacles in zero-shot mode.

Industrial Quality Control

In practice, teams building manufacturing inspection systems find that fine-tuning a vision foundation model costs 10x less in data and compute than training a custom detection network from scratch, but only if the base model was chosen to match the sensor and lighting conditions of the specific deployment environment. A model pre-trained on natural photography will struggle on infrared or X-ray data without domain-specific fine-tuning.

“In practice, teams building vision systems find that fine-tuning a foundation model costs 10x less in data and compute than training from scratch, but only if the base model was chosen to match the deployment domain.”

Architecture Deep Dive – How These Systems Are Built

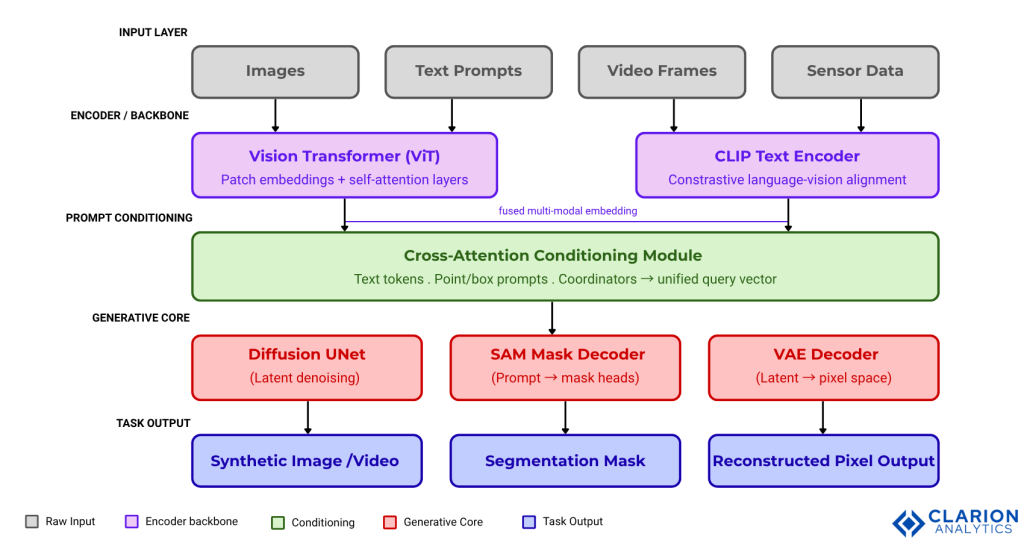

A production generative vision system combines a backbone encoder (Vision Transformer or CNN), a generative decoder (diffusion or autoregressive), and a task-specific head, connected through prompt conditioning layers that bridge language and spatial understanding.

Architecture Caption: The generative computer vision pipeline moves from raw input (images, text prompts, video frames) through a shared encoder backbone (Vision Transformer + CLIP text encoder), fusing embeddings in a cross-attention conditioning module. The generative core branches into three parallel paths: a diffusion UNet for image synthesis, a SAM mask decoder for segmentation, and a VAE decoder for pixel reconstruction. Each path delivers a distinct output type, allowing a single system to serve generation, segmentation, and editing tasks from a unified foundation.

Code Snippet 2: SAM zero-shot segmentation via SamPredictor API

Source: facebookresearch/segment-anything – predictor_example.ipynb
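A minimal sketch of point-prompted segmentation with the `segment_anything` package, assuming it is installed along with `numpy` and that a SAM checkpoint file has been downloaded to `checkpoint_path` (a hypothetical local path you supply). The helper `best_mask_index` is introduced here for illustration and is not part of the library.

```python
def best_mask_index(scores):
    """Index of the highest-scoring candidate mask."""
    return max(range(len(scores)), key=lambda i: scores[i])


def segment_from_point(image_rgb, x, y, checkpoint_path, model_type="vit_h"):
    """Return one boolean mask for the object under pixel (x, y).

    image_rgb is expected to be an HxWx3 uint8 array in RGB order.
    Imports are deferred so that defining this function does not require
    the model weights or a GPU.
    """
    import numpy as np
    from segment_anything import SamPredictor, sam_model_registry

    # Load the checkpoint and wrap it in the predictor.
    sam = sam_model_registry[model_type](checkpoint=checkpoint_path)
    predictor = SamPredictor(sam)

    # Compute the image embedding once; further prompts reuse it.
    predictor.set_image(image_rgb)

    # A single foreground point (label 1) is the whole prompt.
    masks, scores, _ = predictor.predict(
        point_coords=np.array([[x, y]]),
        point_labels=np.array([1]),
        multimask_output=True,
    )
    return masks[best_mask_index(list(scores))]
```

With `multimask_output=True` the predictor returns several candidate masks for an ambiguous click, which is why the sketch picks the one with the highest predicted quality score.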

Four lines of code load the SAM checkpoint and produce segmentation masks from a single pixel coordinate: no training data, no annotation pipeline, no fine-tuning. This illustrates zero-shot inference: the model generalizes to objects it was never explicitly trained on. CTOs evaluating build vs. buy decisions can use this pattern as a baseline for estimating annotation cost savings.

Foundation Model Comparison – Choosing the Right Tool

CLIP is best for zero-shot classification and retrieval; SAM is best for interactive and automated segmentation; Stable Diffusion is best for synthetic data generation and creative image pipelines. Choosing incorrectly often means retraining, not just retuning.

| Model | Key Strength | Best Used When |
|---|---|---|
| CLIP (OpenAI) | Zero-shot text-image alignment and semantic retrieval across unlabeled datasets | You need to classify or retrieve images without any labeled training data |
| SAM 2 (Meta) | Promptable, zero-shot segmentation across images and video with streaming memory | You need object masks without annotating a training set |
| Stable Diffusion / SDXL | Photorealistic image and video generation via latent diffusion | You need synthetic training data, creative content, or image editing at scale |
| DALL-E 3 (OpenAI) | High prompt fidelity and detail-rich generation via GPT-4 conditioning | You need precise, instruction-following image generation integrated into a product |

“The model you choose is not just a technical decision; it determines your data pipeline, your hardware budget, and your compliance posture for the next two years.”

Implementation Guidance – Moving from Prototype to Production

Successful production deployment of generative vision models requires choosing between API access, self-hosted inference, and fine-tuned checkpoints, then addressing latency via quantization, edge deployment, and batched inference pipelines.

Three deployment paths exist. The first is API access: services like OpenAI’s DALL-E 3 API or Hugging Face Inference Endpoints abstract hardware management but introduce latency, cost-per-call, and data privacy constraints. The second is self-hosted inference: teams run Stable Diffusion or SAM on A100 or H100 GPUs, gaining control over throughput and data governance. The third is fine-tuned checkpoints: starting from a foundation model and adapting it to proprietary imagery using LoRA or full fine-tuning.
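The appeal of LoRA over full fine-tuning comes down to parameter counts. Instead of updating a full weight matrix of size d_out x d_in, LoRA trains two small matrices of rank r whose product is added to the frozen weights. The sketch below works through that arithmetic; the 768x768 layer size and rank 8 are illustrative assumptions, not values taken from any particular model.

```python
def full_param_count(d_in, d_out):
    """Trainable parameters when the whole weight matrix is updated."""
    return d_in * d_out


def lora_param_count(d_in, d_out, rank):
    """Trainable parameters for a rank-r update: B (d_out x r) and A (r x d_in)."""
    return rank * (d_in + d_out)


def trainable_fraction(d_in, d_out, rank):
    """Share of the layer's parameters that LoRA actually trains."""
    return lora_param_count(d_in, d_out, rank) / full_param_count(d_in, d_out)


# Illustrative layer: a 768x768 projection adapted at rank 8.
lora_params = lora_param_count(768, 768, 8)
full_params = full_param_count(768, 768)
fraction = trainable_fraction(768, 768, 8)
```

For this example layer, LoRA trains 12,288 parameters instead of 589,824, or about 2% of the layer. That is why adapters for proprietary imagery can often be trained and stored far more cheaply than a fully fine-tuned checkpoint.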

Latency is the most common production blocker. Teams typically find that quantizing diffusion models to 8-bit or 4-bit precision cuts VRAM usage by 40-60% with minimal quality loss. For segmentation workloads, SAM 2’s streaming memory design enables real-time processing at 30+ fps on high-end GPUs. Edge deployments require additional optimization: ONNX export, TensorRT compilation, or model distillation.
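The effect of precision on weight memory is simple arithmetic, sketched below. The parameter count of 860 million is an approximate, illustrative figure for a Stable Diffusion 1.x denoising network and should be treated as an assumption; substitute the count for your own model.

```python
def weight_memory_gb(num_params, bits_per_param):
    """Memory needed to store the weights alone, in gigabytes (10^9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


def savings_vs_fp16(bits_per_param):
    """Fractional reduction in weight memory relative to 16-bit storage."""
    return 1 - bits_per_param / 16


ASSUMED_PARAMS = 860_000_000  # illustrative, approximate

fp16_gb = weight_memory_gb(ASSUMED_PARAMS, 16)
int8_gb = weight_memory_gb(ASSUMED_PARAMS, 8)
int4_gb = weight_memory_gb(ASSUMED_PARAMS, 4)
```

Weight memory halves at 8-bit and quarters at 4-bit relative to 16-bit storage. End-to-end VRAM savings are usually smaller than that, because activations and any components left unquantized still occupy memory.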

The huggingface/diffusers repository (33,000+ stars) is the production standard for diffusion-based pipelines, supporting Stable Diffusion, FLUX, video generation, and audio synthesis through a unified API that handles model loading, memory optimization, and scheduler configuration.

FAQ – Generative Computer Vision Foundation Models

1. What is a vision foundation model and how does it differ from a traditional CV model?

A vision foundation model is pre-trained on very large, diverse image datasets and can perform multiple tasks (classification, segmentation, generation) with no retraining. Traditional CV models are trained from scratch for one specific task and tend to fail outside that narrow domain. Foundation models transfer to new problems through prompting or lightweight fine-tuning.

2. How do I choose between SAM, CLIP, and Stable Diffusion for my use case?

Choose CLIP when you need zero-shot text-image retrieval or classification without labeled data. Choose SAM when you need object masks for arbitrary visual content. Choose Stable Diffusion when you need to generate or edit images at scale. These models are often combined: CLIP provides semantic labels, SAM generates masks, and Stable Diffusion fills or transforms masked regions.

3. Can I fine-tune a foundation model on proprietary image data without sharing it externally?

Yes. All three major models (SAM, CLIP, and Stable Diffusion) can be fine-tuned on-premises using open-source weights. Hugging Face Diffusers and the SAM 2 training scripts support custom dataset fine-tuning without data leaving your infrastructure. For CLIP, fine-tuning on domain-specific image-text pairs typically requires 24-48 hours or more on a GPU cluster.

4. What hardware do I need to run Stable Diffusion or SAM 2 in production?

Stable Diffusion 1.5 runs on a consumer GPU with 4GB VRAM. SDXL and FLUX models need 8-12GB VRAM for inference. SAM 2 with its largest Hiera backbone (or the original SAM with ViT-H) requires 8GB+ VRAM for single-image inference. For throughput at scale, teams use A100 or H100 GPUs. Edge deployments use quantized models on NVIDIA Jetson or Apple Silicon with Core ML conversion.

5. How do generative vision models solve the problem of limited labeled training data?

Generative models create synthetic images of rare conditions, diverse lighting scenarios, or failure modes that are expensive to photograph and annotate. SAM removes much of the need for manual pixel-level annotation by generating masks from point prompts. Pre-training without manual labels, whether through the web-scraped captions that CLIP learns from or the self-supervised objectives behind many modern encoders, reduces the need for labeled data by up to 80% compared to fully supervised approaches.

Conclusion – Three Insights That Should Change How You Build

First: Foundation models are infrastructure, not features. Building a custom CV pipeline without evaluating SAM, CLIP, or Stable Diffusion first is the equivalent of writing a database from scratch: technically possible, almost never the right decision.

Second: Model selection is a data governance decision as much as a technical one. API-based models are fastest to prototype but create data exposure risks. Self-hosted open-source models trade operational complexity for control. Align your choice to your compliance posture early.

Third: The combination beats any individual model. Production systems increasingly chain CLIP for semantic retrieval, SAM for spatial grounding, and diffusion models for generation, treating each as a specialist module in a shared pipeline.

The question worth sitting with: if your team started a new vision project tomorrow, how many of your annotation hours could a foundation model eliminate, and what would you build with that time instead?
