Running LLMs locally means deploying large language models directly on your own hardware, whether a developer laptop or an on-premises server, without routing prompts through external cloud APIs. The model weights, inference engine, and all prompt data remain on your machine. This approach eliminates per-token API costs, prevents proprietary data from leaving your infrastructure, and enables offline operation with zero network dependency.

Why Running LLMs Locally Is No Longer a Fringe Choice

McKinsey (2025) reports that more than 70 percent of organizations now regularly use generative AI, up from roughly one-third just two years earlier. IDC estimates organizations will spend over $370 billion on GenAI implementation between 2024 and 2027. That scale of adoption brings a problem cloud providers would prefer you overlook: every prompt you send to an external API leaves your infrastructure. For regulated industries (healthcare, finance, legal, defense), that is not a configuration issue. It is a compliance failure.

The tools for running LLMs locally have matured fast. Three platforms now dominate: Ollama, LM Studio, and GPT4All. Each takes a different philosophy to the same goal. This guide breaks down exactly what each tool does, where it excels, and how to choose the one that fits your stack.

“Local LLMs have quietly moved from weekend experiment to practical production infrastructure, and the tooling finally matches the ambition.”

What You Actually Need to Run an LLM on Your Own Machine

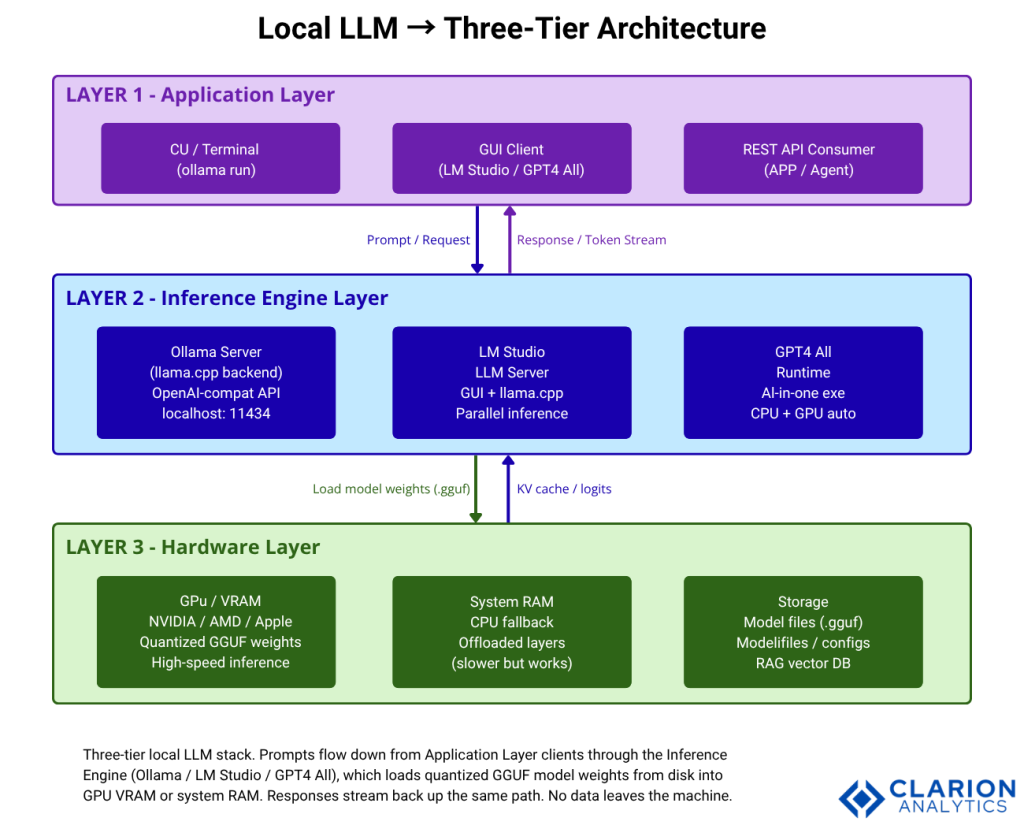

A local LLM deployment has three layers: hardware, inference engine, and model format. Understanding all three saves you hours of debugging.

On hardware, the minimum practical setup for a 7-8B parameter model is 8GB of dedicated VRAM (GPU memory) or 16GB of system RAM for CPU-only inference. A 2025 study published in the IEEE IPDPS PAISE Workshop (Arya & Simmhan, arXiv:2506.09554) found that sequence length and batch size strongly determine throughput on edge accelerators; longer contexts consume memory non-linearly. Apple Silicon (M2/M3/M4) is a compelling option because it uses unified memory: the same pool serves both CPU and GPU, allowing 70B parameter quantized models to run on a MacBook Pro with 64GB RAM.

The dominant model format is GGUF (GPT-Generated Unified Format), the standard created by the llama.cpp project. GGUF files store quantized weights: model weights compressed from 16-bit floats to 4-bit integers with minimal quality loss. A 2024 arXiv paper (Jin et al., arXiv:2402.16775) found that 4-bit quantized LLMs retain performance comparable to full-precision counterparts across most benchmarks. Practical result: a download of roughly 4-5GB runs a capable 8B parameter model, against about 16GB for the same weights at FP16.
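The size arithmetic behind that claim is simple enough to sketch. The helper below is illustrative only (the function name is hypothetical), and it counts raw weight bits: real GGUF files come out somewhat larger because they carry metadata and keep some tensors at higher precision.

```python
def estimate_weight_size_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the raw model weights in gigabytes (10^9 bytes)."""
    total_bits = params_billions * 1e9 * bits_per_weight
    return total_bits / 8 / 1e9


if __name__ == "__main__":
    # Compare 16-bit floats against 4-bit integers for two model sizes.
    for params in (8, 70):
        for label, bits in (("FP16", 16), ("4-bit", 4)):
            size = estimate_weight_size_gb(params, bits)
            print(f"{params}B at {label}: ~{size:.0f} GB")
```

Treat the result as a lower bound when sizing hardware: the KV cache and runtime buffers need memory on top of the weights.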

The inference engine layer sits between hardware and your application. It handles model loading, tokenization, KV-cache management, and the REST API your code calls. Ollama, LM Studio, and GPT4All all build on llama.cpp at their core, but expose very different surfaces to developers.

Ollama – The Developer’s Default

Ollama is the Docker of local LLMs. It manages model downloads, versioning, and serving through a CLI that feels immediately familiar to any developer who has typed docker pull. A persistent background server exposes an OpenAI-compatible REST API on localhost:11434, meaning any application that already calls GPT-4 can point to Ollama instead by changing one URL.

The star count on GitHub tells the story: 163,000+ stars as of early 2026, with active development through February 2026 (v0.16.3). Teams building on Ollama typically find that the zero-config API compatibility is the single biggest time-saver. There is no SDK to install, no authentication layer to configure for local use, and no format translation required.

The Modelfile system is Ollama’s most underrated feature for developers. A Modelfile is a plain-text configuration, similar to a Dockerfile, that bakes a system prompt, temperature, and context length into a named, versioned model variant. Teams version-control Modelfiles alongside application code and share the models built from them with ollama push.

Source: github.com/ollama/ollama – CLI Reference

bash

# One-line install (macOS / Linux)

curl -fsSL https://ollama.com/install.sh | sh

# Pull a quantized model (~4GB download)

ollama pull llama3.1:8b

# Run interactively

ollama run llama3.1:8b

# Call via Ollama's native REST API

curl http://localhost:11434/api/generate \
  -d '{"model":"llama3.1:8b","prompt":"Explain GGUF quantization."}'

Apart from the model download, the snippet above runs in under 60 seconds on a modern machine. Note that /api/generate is Ollama's native endpoint. The OpenAI-compatible endpoint is served from the same port at /v1/chat/completions and accepts the OpenAI request format, so existing application code needs little more than a new base URL and model name. This is the fastest path from zero to a working private LLM API.
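By default, /api/generate streams its reply as newline-delimited JSON, one object per line, each carrying a "response" fragment and a "done" flag. A minimal sketch of reassembling that stream follows. The helper name is hypothetical, and the sample lines are hand-written in the streamed shape rather than captured from a real run.

```python
import json
from typing import Iterable


def join_stream(lines: Iterable[str]) -> str:
    """Concatenate the "response" fragments from a streamed /api/generate reply."""
    parts = []
    for line in lines:
        line = line.strip()
        if not line:
            continue  # ignore empty lines between JSON objects
        chunk = json.loads(line)
        parts.append(chunk.get("response", ""))
        if chunk.get("done"):
            break  # the final object marks the end of the reply
    return "".join(parts)


# Hand-written sample lines (illustrative, not real model output).
sample = [
    '{"response": "GGUF ", "done": false}',
    '{"response": "stores weights.", "done": false}',
    '{"response": "", "done": true}',
]
print(join_stream(sample))
```

If you would rather receive a single JSON object, the request body accepts "stream": false.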

Source: github.com/ollama/ollama – Modelfile Customization

dockerfile

# Modelfile — version-control this alongside your app

FROM llama3.1:8b

SYSTEM """You are a senior Python engineer.

Be concise. Always include working code examples."""

PARAMETER temperature 0.3

PARAMETER num_ctx 8192

# Build and run the custom model variant

# ollama create python-expert -f Modelfile

# ollama run python-expert

A Modelfile creates a named, reusable model variant without fine-tuning. The SYSTEM directive sets the role; PARAMETER controls inference behavior; FROM pins the base model version. The resulting model artifact is portable: share it with ollama push and any team member can pull the exact same configured model.
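When several variants share one base model, it can be convenient to generate their Modelfiles from code rather than copy them by hand. The sketch below uses only the three directives shown above (FROM, SYSTEM, PARAMETER). The render_modelfile helper is hypothetical and not part of Ollama.

```python
def render_modelfile(base: str, system: str, **parameters) -> str:
    """Render Modelfile text from a base model tag, a system prompt, and parameters."""
    lines = [f"FROM {base}", f'SYSTEM """{system}"""']
    # Each keyword argument becomes one PARAMETER line, in the order given.
    lines += [f"PARAMETER {name} {value}" for name, value in parameters.items()]
    return "\n".join(lines) + "\n"


print(
    render_modelfile(
        "llama3.1:8b",
        "You are a senior Python engineer. Be concise.",
        temperature=0.3,
        num_ctx=8192,
    )
)
```

Write the returned text to a file named Modelfile and build it with ollama create as shown in the comments above.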

“A Modelfile bakes a system prompt, temperature, and context length into a named artifact: versioned, shareable, and reproducible without any fine-tuning required.”

LM Studio – Visual Control for Power Users

LM Studio is the graphical power tool. It wraps llama.cpp in a polished GUI with a built-in model browser connected directly to Hugging Face, a chat interface, and real-time parameter controls (temperature, top-p, repeat penalty, GPU layer count), all adjustable without touching a config file.

The tool targets engineers who understand LLMs but prefer visual workflows for experimentation. Running two models side by side to compare output quality on a specific prompt takes seconds in LM Studio; in a CLI environment it requires scripting. The built-in performance comparison panel shows tokens-per-second across hardware configurations, making GPU selection and quantization-level decisions data-driven rather than guesswork.

LM Studio v0.4.3 (released early 2026) added an llmster daemon for headless deployment and parallel inference supporting up to four simultaneous requests, bridging the gap between desktop tool and lightweight server. The quantization study cited earlier (Jin et al., 2024) supports the quantized formats it runs: 4-bit quantization largely preserves benchmark performance while cutting weight memory by roughly 75 percent versus FP16 (4 bits per weight instead of 16).

In practice, teams typically use LM Studio during model selection, comparing a Q4_K_M quantized 13B model against a Q5_K_M variant to find the speed-quality tradeoff that fits their hardware budget, then hand the winning GGUF file to Ollama for production serving.

GPT4All – Zero-Friction Local AI for Teams

GPT4All removes every decision point between a user and a running model. There is no GGUF file management, no quantization level to select, and no configuration required. The installer bundles all dependencies. A product manager or legal analyst can be running private AI in under five minutes on a Windows laptop, no IT ticket required.

The model library ships with human-readable descriptions, RAM requirements, and community ratings. LocalDocs, GPT4All’s built-in RAG system, indexes PDF, Word, and text files locally and makes them queryable without any vector database setup. This addresses the most common non-developer use case: “let me ask questions about this document without uploading it anywhere.”

The GitHub repository at nomic-ai/gpt4all holds 73,000+ stars as of mid-2025, reflecting genuine adoption rather than hype. The trade-off is developer flexibility: GPT4All’s API surface is limited compared to Ollama, and the plugin ecosystem extends functionality within the application rather than building separate tools that call it.

“GPT4All closes the gap between AI capability and non-technical adoption: local, private, and genuinely usable in under five minutes.”

Architecture: The Three-Tier Local LLM Stack

Figure 1: Three-tier local LLM stack. Prompts flow down from Application Layer clients (CLI, GUI, REST consumer) through the Inference Engine layer (Ollama, LM Studio, or GPT4All runtime), which loads quantized GGUF model weights from disk into GPU VRAM or system RAM. Responses stream back up the same path. No data leaves the machine at any point.

Tool Comparison: Ollama vs LM Studio vs GPT4All

| Tool | Key Strength | Best Used When | API Support | License |
|---|---|---|---|---|
| Ollama | OpenAI-compatible API, Docker-like model management, Modelfile system | Building apps that call local LLMs via code; CI/CD pipeline integration | OpenAI-compatible REST (localhost:11434) | MIT |
| LM Studio | GUI model browser, real-time parameter tuning, multi-GPU support, parallel inference | Experimenting with quantization levels; comparing models visually | OpenAI-compatible local server (v0.4.3+) | Proprietary (free tier) |
| GPT4All | Zero-config install, curated model library, built-in LocalDocs RAG, Windows-first UX | Non-technical teams handling sensitive documents; rapid deployment without IT support | Basic REST API (limited vs Ollama) | MIT |

How to Pick the Right Tool for Your Stack

The decision is simpler than the feature lists suggest. Start from your primary use case, not the tool’s feature matrix.

If you are writing code that calls a language model (a chatbot, a document summarizer, a code reviewer), choose Ollama. Its persistent API server, OpenAI-compatible endpoint, and Modelfile versioning fit developer workflows exactly. Gartner (2025) projects that 40 percent of enterprise applications will include task-specific AI agents by end of 2026; Ollama is one way to run those agents locally.

If you are evaluating which model to use for a specific task (say, comparing Llama 3.1, Qwen 3, and a fine-tuned variant on your actual prompts), use LM Studio. Visual side-by-side comparison and live parameter adjustment turn what would be a scripting project into a thirty-minute evaluation session.

If your goal is giving a non-engineering team access to private AI (a legal department asking questions about contract PDFs, a finance team summarizing internal reports), GPT4All’s installer-and-go approach is unmatched. IDC (2024) found 60 percent of enterprises view on-premises AI infrastructure as costing less than or about the same as public cloud; GPT4All is the lowest-friction entry point to that calculation.

“The question is not which local LLM tool is best; it is which philosophy matches your workflow: API-first, GUI-first, or installer-first.”

Frequently Asked Questions

Can I run LLMs locally on a laptop without a GPU?

Yes. CPU-only inference works for models up to 7-8B parameters using quantized GGUF files. Expect 3-8 tokens per second on a modern Intel or AMD CPU; slower than GPU inference but usable for many tasks. Apple Silicon MacBooks perform significantly faster than x86 CPUs because their unified memory architecture removes the GPU-RAM transfer bottleneck.
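To check where your own machine falls in that range, you can compute throughput from the statistics Ollama attaches to the final object of an /api/generate reply. The sketch below assumes the eval_count (generated tokens) and eval_duration (nanoseconds) fields as described in Ollama's API documentation; confirm them against the version you run. The sample values are invented for illustration.

```python
def tokens_per_second(final_chunk: dict) -> float:
    """Generation throughput from the final object of an /api/generate reply."""
    eval_count = final_chunk["eval_count"]  # number of tokens generated
    eval_duration_ns = final_chunk["eval_duration"]  # generation time, nanoseconds
    if eval_duration_ns <= 0:
        raise ValueError("eval_duration must be positive")
    return eval_count / (eval_duration_ns / 1e9)


# Invented sample: 120 tokens generated in 20 seconds.
sample = {"eval_count": 120, "eval_duration": 20_000_000_000}
print(f"{tokens_per_second(sample):.1f} tokens/s")
```

Run the same prompt a few times and average the results, since the first request also pays the cost of loading the model.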

How does running an LLM locally compare to using the OpenAI API for cost?

Cloud API costs scale linearly with usage: 50,000 tokens per day is roughly 1.5 million tokens per month, billed at the provider's per-million-token rate. A one-time hardware investment in a machine capable of local inference pays for itself once cumulative API spend passes the purchase price, so the payback period shortens as volume grows. For teams with sensitive data, the compliance risk reduction of keeping data on-premises carries independent financial value beyond compute savings.
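A rough break-even estimate takes only a few lines. Every number in the sketch below is a placeholder assumption (the per-million-token rate, the hardware price, and the volumes), not a quoted price; substitute your provider's current rates and your own hardware quote. The sketch also ignores electricity and maintenance.

```python
def monthly_api_cost(tokens_per_day: int, usd_per_million_tokens: float, days: int = 30) -> float:
    """Monthly cloud spend for a given daily token volume and blended rate."""
    return tokens_per_day * days / 1_000_000 * usd_per_million_tokens


def breakeven_months(hardware_usd: float, monthly_cost_usd: float) -> float:
    """Months until cumulative API spend equals the one-time hardware price."""
    if monthly_cost_usd <= 0:
        raise ValueError("monthly_cost_usd must be positive")
    return hardware_usd / monthly_cost_usd


# Placeholder assumptions: $10 per million tokens, $1,500 of hardware.
RATE_USD_PER_MILLION = 10.0
HARDWARE_USD = 1500.0

for tokens_per_day in (50_000, 5_000_000):
    cost = monthly_api_cost(tokens_per_day, RATE_USD_PER_MILLION)
    months = breakeven_months(HARDWARE_USD, cost)
    print(f"{tokens_per_day:>9,} tokens/day: ${cost:,.2f}/month, break-even in {months:.0f} months")
```

The payback period is inversely proportional to volume, which is why the comparison depends so heavily on how many tokens you actually process.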

Which local LLM tool works best for privacy-sensitive business data?

All three tools keep data on-device by default. For technical teams requiring programmatic access and audit trails, Ollama is the strongest choice because its open-source architecture is fully inspectable. GPT4All is the best option for non-technical staff because it requires no configuration to guarantee data stays local. Verify that telemetry settings are disabled in any tool before handling regulated data.

What is GGUF and why does it matter for local LLM deployment?

GGUF (GPT-Generated Unified Format) is the standard file format for quantized LLM weights, introduced by the llama.cpp project. It enables 4-bit quantization that reduces a 70B parameter model from roughly 140GB (FP16) to around 40GB without significant quality loss. All three tools covered in this guide use GGUF as their primary model format. When you see Q4_K_M in a model filename, it describes the quantization method (4-bit, K-quant, medium variant).
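As a small illustration of that naming scheme, the sketch below pulls the K-quant tag out of a model filename. The helper and the example filenames are hypothetical, and the pattern covers only K-quant tags such as Q4_K_M or Q6_K, not every scheme llama.cpp supports.

```python
import re
from typing import Optional

# Matches tags like Q4_K_M, Q5_K_S, or Q6_K (the size suffix is optional).
_K_QUANT_RE = re.compile(r"Q(?P<bits>\d)_K(?:_(?P<size>[SML]))?", re.IGNORECASE)
_SIZE_NAMES = {"S": "small", "M": "medium", "L": "large"}


def parse_k_quant(filename: str) -> Optional[dict]:
    """Return the bit width and size variant of a K-quant tag, or None if absent."""
    match = _K_QUANT_RE.search(filename)
    if match is None:
        return None
    size = match.group("size")
    return {
        "bits": int(match.group("bits")),
        "variant": _SIZE_NAMES[size.upper()] if size else None,
    }


# Hypothetical filenames for illustration.
for name in ("example-8b.Q4_K_M.gguf", "example-8b.Q6_K.gguf", "example-8b.f16.gguf"):
    print(name, "->", parse_k_quant(name))
```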

Can Ollama integrate with existing applications that already use the OpenAI API?

Yes, with a single configuration change. Ollama’s server responds to the same endpoint structure as the OpenAI API. Change your base URL from https://api.openai.com/v1 to http://localhost:11434/v1 and update the model name. No SDK change, no request format change. Applications using LangChain, LlamaIndex, or any OpenAI-compatible client connect to Ollama with no code changes beyond that configuration.
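To make the base-URL switch concrete, here is a minimal sketch using only the Python standard library. The function names are hypothetical, and the request shape is the standard chat-completions format. The hosted OpenAI API would also require an Authorization header, which is omitted here because a local Ollama server does not need one.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, user_prompt: str) -> urllib.request.Request:
    """Build a chat-completions POST request against any OpenAI-compatible base URL."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(base_url: str, model: str, user_prompt: str, timeout: float = 120.0) -> str:
    """Send the request and return the assistant's reply text."""
    request = build_chat_request(base_url, model, user_prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


# Usage (requires a running Ollama server with the model pulled):
#   chat("http://localhost:11434/v1", "llama3.1:8b", "Explain GGUF quantization.")
```

Only the base URL and model name are specific to Ollama; the request body and the response parsing stay the same across OpenAI-compatible servers.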

Local LLMs Are Production-Ready – Here Is What to Do Next

Three insights drive the decision in 2025 and 2026. First, hardware has caught up: quantized 8B models run at interactive speed on consumer GPUs, and Apple Silicon makes a 70B model viable on a laptop. Second, the tooling has standardized: GGUF format and OpenAI-compatible APIs mean your application code is portable across tools. Third, the business case has closed: more than 70 percent of organizations already use generative AI regularly, and most enterprises in IDC's survey see on-premises AI infrastructure as costing no more than public cloud.

Ollama is the right starting point for most developers. Run the install command from the Ollama section above, pull llama3.1:8b, and your application has a private, free, OpenAI-compatible LLM endpoint in under five minutes. If you need to evaluate models visually first, open LM Studio. If you need to hand a working tool to a non-technical colleague today, send them the GPT4All installer.

The harder question is not which tool to use. It is: what does your team build once the cloud dependency is gone?
