DSPy (Declarative Self-improving Python) is an open-source framework from Stanford NLP that replaces hand-written prompts with modular Python programs. Developers declare what a language model should do using typed signatures, then compile the program; DSPy automatically optimizes prompts and, optionally, model weights using algorithms like MIPROv2. The result is a reproducible, maintainable AI pipeline that can outperform manually crafted prompts without hand-tuning prompt strings.

Why Prompt Engineering Is Breaking Production AI

Prompt engineering fails at scale because hard-coded prompt strings break every time a model, dataset, or pipeline step changes, a problem DSPy solves by compiling typed programs into optimized prompts automatically.

According to Gartner (2025), by 2027 at least 55% of software engineering teams will be actively building LLM-based features. But the prompts holding those pipelines together are fragile. Change the underlying LLM, swap a retrieval index, or add a reasoning step, and every hand-tuned string in the system can silently degrade.

Gartner (2024) notes that RAG and prompt engineering skills are now rated as the most essential upskilling priorities for software engineers, yet the dominant approach to those skills remains trial, error, and copying prompts from blog posts. That is the exact problem DSPy was built to solve.

DSPy LLM pipeline optimization is not a minor workflow tweak. It is a paradigm shift from strings to programs, from guesswork to metrics, from brittle to compiled.

“The moment you change your LLM or your data, every manually crafted prompt in your pipeline is a liability.”

What Is DSPy and How Does It Work?

DSPy abstracts LM pipelines as text transformation graphs composed of parameterized modules (Predict, ChainOfThought, ReAct). A built-in compiler then optimizes each module’s prompts and demonstrations against a developer-defined metric.

According to the official DSPy documentation, DSPy is a declarative framework for building modular AI software. It allows you to iterate fast on structured code rather than brittle strings, and offers algorithms that compile AI programs into effective prompts and weights for your language models, whether building simple classifiers, sophisticated RAG pipelines, or agent loops.

Think of it as the shift from assembly to a high-level language. You stop specifying how the model should be prompted and start specifying what it needs to accomplish.

Signatures – The Typed Interface

A Signature is a typed function declaration written in natural language. For example, the signature question -> answer tells DSPy what goes in and what comes out. Field names carry semantic meaning; the compiler interprets question differently from query, and infers how to construct the prompt accordingly. The compiler uses in-context learning to interpret field names and iteratively refines its usage of each field.
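A minimal sketch of a signature in its two usual forms, the inline string and a class with described fields. The names `GroundedAnswer` and `inline_fields` are assumptions made for illustration, and `inline_fields` is only a toy splitter that shows how the string reads; it is not DSPy's own parser.

```python
def inline_fields(spec: str) -> tuple[list[str], list[str]]:
    """Toy helper (not DSPy's parser): split 'a, b -> c' into input and output names."""
    inputs, outputs = spec.split("->")
    return (
        [name.strip() for name in inputs.split(",")],
        [name.strip() for name in outputs.split(",")],
    )


def build_signature():
    """Return a class-based signature equivalent to 'context, question -> answer'."""
    import dspy  # deferred so the toy helper above runs without DSPy installed

    class GroundedAnswer(dspy.Signature):
        """Answer the question using only the given context."""

        context: str = dspy.InputField(desc="retrieved passages")
        question: str = dspy.InputField()
        answer: str = dspy.OutputField(desc="a short factual answer")

    return GroundedAnswer
```

The class form is usually worth the extra lines once a field needs a description or the task needs a docstring-level instruction; for quick experiments the inline string tends to be enough.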

Modules – Reusable Reasoning Blocks

DSPy provides built-in modules like dspy.Predict (basic completion), dspy.ChainOfThought (step-by-step reasoning), and dspy.ReAct (agent with tools). Modules can be composed together in code, similar to layers in PyTorch, allowing multi-step pipelines.
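As a rough sketch of what composition looks like, the program below chains a `dspy.Predict` step into a `dspy.ChainOfThought` step inside a custom `dspy.Module`. The class name `TwoStepQA` and the intermediate `search_query` field are hypothetical, chosen only for this example.

```python
def build_two_step_qa():
    """Build a two-step program: rewrite the question, then answer with reasoning."""
    import dspy  # deferred import; calling the program also needs a configured LM

    class TwoStepQA(dspy.Module):
        def __init__(self):
            super().__init__()
            # Each sub-module owns its own prompt, so each can be optimized separately.
            self.rewrite = dspy.Predict("question -> search_query")
            self.answer = dspy.ChainOfThought("question, search_query -> answer")

        def forward(self, question: str):
            # Plain Python control flow wires the steps together.
            query = self.rewrite(question=question).search_query
            return self.answer(question=question, search_query=query)

    return TwoStepQA()
```

Calling `build_two_step_qa()(question="...")` would run both steps in order, much as calling a PyTorch model runs its layers.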

The Compiler / Optimizer Loop

The compiler takes your program, a small training set, and a metric function. It runs the program repeatedly, bootstraps high-scoring examples as demonstrations, proposes new instructions, and uses search algorithms to find the best combination. DSPy modules are parameterized, meaning they can learn how to apply compositions of prompting, finetuning, augmentation, and reasoning techniques.
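To make the loop concrete, here is a toy, library-free sketch of bootstrap-then-search. Everything in it is an assumption made for illustration: `toy_program` stands in for an LM call, the exhaustive search stands in for the smarter search a real optimizer uses, and scoring on the training set itself is a simplification.

```python
from itertools import product

# Toy stand-in for what a language model "knows" (illustrative only).
FACTS = {
    "capital of France?": "Paris",
    "capital of Japan?": "Tokyo",
    "opposite of hot?": "cold",
}


def toy_program(instruction: str, demos: list[tuple[str, str]], question: str) -> str:
    """Pretend LM call: lowercases its answer if told to, or if its demos are lowercase."""
    answer = FACTS.get(question, "unknown")
    imitates_demos = any(demo_answer.islower() for _, demo_answer in demos)
    return answer.lower() if "lowercase" in instruction or imitates_demos else answer


def exact_match(expected: str, predicted: str) -> float:
    """Metric: 1.0 for a case-sensitive exact match, else 0.0."""
    return float(expected == predicted)


def average_score(instruction, demos, trainset) -> float:
    scores = [exact_match(gold, toy_program(instruction, demos, q)) for q, gold in trainset]
    return sum(scores) / len(scores)


def toy_compile(trainset, instructions):
    """Return the best (instruction, demos) pair found by exhaustive search."""
    # 1) Bootstrap: run the unoptimized program and keep outputs that pass the metric.
    demos = []
    for question, gold in trainset:
        predicted = toy_program(instructions[0], [], question)
        if exact_match(gold, predicted) == 1.0:
            demos.append((question, predicted))
    # 2) Search: score every (instruction, number of demos) pair and keep the best.
    candidates = list(product(instructions, range(len(demos) + 1)))
    best_instruction, n_demos = max(
        candidates, key=lambda c: average_score(c[0], demos[: c[1]], trainset)
    )
    return best_instruction, demos[:n_demos]


TRAINSET = [
    ("capital of France?", "paris"),
    ("capital of Japan?", "tokyo"),
    ("opposite of hot?", "cold"),
]
INSTRUCTIONS = ["Answer the question.", "Answer the question in lowercase."]

best_instruction, best_demos = toy_compile(TRAINSET, INSTRUCTIONS)
```

In this toy setup the baseline passes only one of three examples, that passing trace becomes a demonstration, and the search then finds a configuration that passes all three. The shape of the loop is the point; the numbers mean nothing beyond the toy.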

“DSPy is to prompt engineering what PyTorch was to hand-coded backpropagation: it makes optimization systematic, not artisanal.”

DSPy Architecture: From Signature to Optimized Pipeline

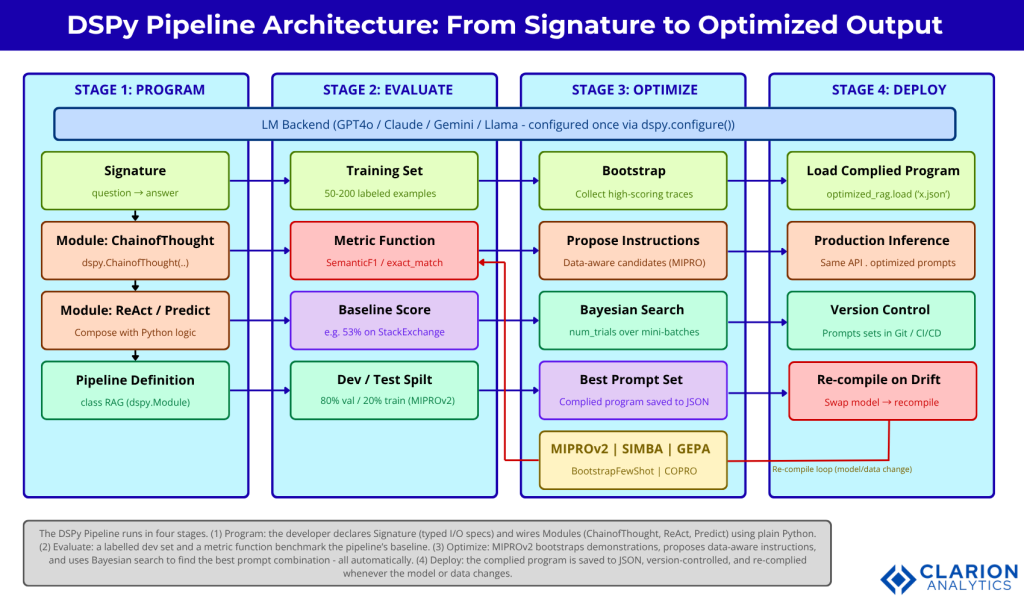

A DSPy architecture has five layers: the LM backend, Signatures (typed I/O specs), Modules (prompting strategies), a Metric function, and an Optimizer that compiles everything into an optimal prompt set.

Figure 1: The DSPy pipeline runs in four stages. (1) Program: the developer declares Signatures and wires Modules. (2) Evaluate: a labelled dev set and metric function benchmark the baseline. (3) Optimize: MIPROv2 bootstraps demonstrations, proposes instructions, and uses Bayesian search. (4) Deploy: the compiled program is version-controlled and re-compiled on model/data changes.

The pipeline runs in four stages. First, Program: the developer defines Signatures and wires Modules using plain Python control flow. Second, Evaluate: a dev set and metric function establish a baseline score. Third, Optimize: MIPROv2 bootstraps demonstrations, proposes candidate instructions, and uses Bayesian search to find the highest-scoring prompt combination. Fourth, Deploy: the compiled program ships with all optimized prompts embedded and version-controlled, and is re-compiled when the model or data changes.

According to the DSPy roadmap, DSPy takes inspiration from PyTorch, but with one major difference: PyTorch was introduced after DNNs were mature ML concepts, whereas DSPy seeks to establish and advance core LM program research from programming abstractions to NLP systems to prompt optimizers. In PyTorch you define layers and call an optimizer; in DSPy you define modules and call a compiler.

Real-World Use Cases for DSPy

DSPy excels at multi-hop question answering, RAG pipeline optimization, ReAct agent loops, and classification tasks where consistent output quality matters more than one-off prompt tricks.

Multi-Hop Question Answering

Multi-hop QA requires the model to retrieve and synthesize information from several sources before answering. Hard-coded prompts rarely handle this gracefully; one reasoning failure cascades through the chain. DSPy treats each retrieval and reasoning step as a parameterized module, then optimizes all steps jointly against an end-to-end accuracy metric.

According to the foundational DSPy paper (Khattab et al., ICLR 2024), a few lines of DSPy allow GPT-3.5 and Llama2-13b-chat to self-bootstrap pipelines that outperform standard few-shot prompting generally by over 25% and 65%, respectively, and pipelines with expert-created demonstrations by up to 5-46% and 16-40%, respectively.

RAG Pipeline Optimization

Source: stanfordnlp/dspy – RAG Tutorial

The tutorial's program defines a RAG module with a single ChainOfThought step, then hands it to MIPROv2. The optimizer runs Bayesian search over instruction and demonstration combinations. According to the DSPy RAG tutorial, this improves pipeline quality from 53% to 61% on StackExchange QA, costing around $1.50 for the medium auto setting and taking some 20-30 minutes. In practice, the first compilation often surfaces prompt flaws that were not previously visible, because the metric makes failures measurable rather than silent.
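A minimal sketch of that kind of program, under stated assumptions: `CORPUS` and `retrieve` are hypothetical stand-ins for a real retriever (a simple word-overlap ranker over three in-memory passages), and the caller is assumed to supply a training set and metric. Running `optimize_rag` requires a configured LM and will make paid API calls.

```python
# Hypothetical in-memory corpus; a real pipeline would query a vector index.
CORPUS = [
    "DSPy compiles programs into prompts.",
    "Paris is the capital of France.",
    "MIPROv2 searches over instructions and demonstrations.",
]


def retrieve(query: str, k: int = 2) -> list[str]:
    """Toy retriever: rank passages by how many words they share with the query."""
    query_words = set(query.lower().split())
    ranked = sorted(
        CORPUS,
        key=lambda passage: len(query_words & set(passage.lower().split())),
        reverse=True,
    )
    return ranked[:k]


def build_rag():
    """Build a RAG program with a single ChainOfThought step."""
    import dspy  # deferred so the retriever above can be tried without DSPy

    class RAG(dspy.Module):
        def __init__(self):
            super().__init__()
            self.respond = dspy.ChainOfThought("context, question -> response")

        def forward(self, question: str):
            context = retrieve(question)
            return self.respond(context=context, question=question)

    return RAG()


def optimize_rag(trainset, metric):
    """Hand the RAG program to MIPROv2 using the medium auto setting."""
    import dspy

    optimizer = dspy.MIPROv2(metric=metric, auto="medium")
    return optimizer.compile(build_rag(), trainset=trainset)
```

Keeping retrieval as a plain function means the same program can later be pointed at a production index without touching the module that gets optimized.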

Agentic Loops with ReAct

Source: stanfordnlp/dspy – Optimizers

The full DSPy loop fits in about nine lines: a ReAct agent is configured with a Wikipedia search tool, then MIPROv2 optimizes its prompts against 500 HotPotQA examples. According to the DSPy documentation, an informal run like this raises ReAct accuracy from 24% to 51% by teaching gpt-4o-mini more about the specifics of the task. No prompt is written manually.
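A sketch of that loop, assuming a local stub in place of a live Wikipedia index: `ABSTRACTS` and `search_wikipedia` are hypothetical, and the training set is assumed to be a list of `dspy.Example` objects with `question` marked as the input. Running `optimize_agent` needs a configured LM.

```python
# Hypothetical stand-in for a Wikipedia abstracts index.
ABSTRACTS = {
    "Stanford University": "Stanford University is a private research university in Stanford, California.",
    "HotPotQA": "HotPotQA is a multi-hop question answering dataset.",
}


def search_wikipedia(query: str) -> list[str]:
    """Return abstracts of articles whose title is mentioned in the query."""
    return [text for title, text in ABSTRACTS.items() if title.lower() in query.lower()]


def build_agent():
    """Create a ReAct agent that may call the search tool while answering."""
    import dspy  # deferred so the tool above can be tested on its own

    # ReAct reads the tool's name, type hints, and docstring to describe it to the LM.
    return dspy.ReAct("question -> answer", tools=[search_wikipedia])


def optimize_agent(trainset):
    """Optimize the agent's prompts against an exact-match metric."""
    import dspy

    optimizer = dspy.MIPROv2(metric=dspy.evaluate.answer_exact_match, auto="light")
    return optimizer.compile(build_agent(), trainset=trainset)
```

Because the tool is an ordinary Python function, a retriever from another framework can be exposed the same way, as long as it has a clear docstring and typed arguments.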

DSPy vs LangChain vs LlamaIndex – Which Should You Use?

DSPy optimizes how prompts are generated and tested; LangChain orchestrates how components connect; LlamaIndex excels at retrieval plumbing. Many production stacks combine all three.

According to Cohorte’s practical DSPy guide (2025), LangChain is a “lego box” of integrations and chains, while DSPy is system-programming plus auto-optimization. You can compose them, for example, wrapping LangChain tools as functions for a DSPy ReAct agent.

| Framework | Key Strength | Best Used When |
|---|---|---|
| DSPy | Automatic prompt/weight optimization via compiler + Bayesian search | You need reproducible, high-accuracy multi-step LLM pipelines |
| LangChain | Broad integrations, agent orchestration, rapid prototyping | You need to connect LLMs to many external data sources quickly |
| LlamaIndex | Deep retrieval, document indexing, and structured RAG | Your use case is primarily document search and retrieval indexing |

From a performance standpoint, a standardized 100-query RAG benchmark (AI Multiple, 2025) shows DSPy at the lowest framework overhead of approximately 3.53 ms, compared to LangChain at approximately 10 ms and LangGraph at approximately 14 ms.

“DSPy does not replace LangChain; it replaces the prompt engineering that LangChain left for you to do manually.”

Implementing DSPy: A Step-by-Step Guide

A basic DSPy implementation has four steps: install and configure an LM backend, define a Signature and Module, collect a small training set, and compile with MIPROv2, a process that typically costs around $2 and takes 20 minutes.

Installation and Setup

    pip install dspy
    export OPENAI_API_KEY=your_key_here

DSPy supports any model accessible via LiteLLM. For OpenAI-compatible endpoints, add an openai/ prefix to your full model name.
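A short sketch of the configuration step in Python. The `DSPY_MODEL` environment variable and the `model_name` helper are assumptions of this example, not DSPy conventions; the default model string is simply the one mentioned elsewhere in this guide.

```python
import os


def model_name(default: str = "openai/gpt-4o-mini") -> str:
    """Read the model from the (hypothetical) DSPY_MODEL variable, else use the default."""
    return os.environ.get("DSPY_MODEL", default)


def configure_lm():
    """Create the LM client once and make it the default for every module."""
    import dspy  # deferred so model_name() works without DSPy installed

    lm = dspy.LM(model_name())  # the provider key, e.g. OPENAI_API_KEY, is read from the environment
    dspy.configure(lm=lm)
    return lm
```

Reading the model name from one place keeps the later swap to a different provider down to a single setting.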

Define Your Signature and Module

Start with the simplest module, dspy.Predict, and upgrade to dspy.ChainOfThought for tasks that require reasoning.
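One way this upgrade path might look in code; the `build_qa` helper and its `needs_reasoning` flag are hypothetical names used only for this sketch.

```python
def build_qa(needs_reasoning: bool = False):
    """Return a question-answering module for the signature 'question -> answer'."""
    import dspy  # deferred import; calling the module also needs a configured LM

    signature = "question -> answer"
    if needs_reasoning:
        # Same signature, but the model is asked to reason step by step first.
        return dspy.ChainOfThought(signature)
    return dspy.Predict(signature)
```

Since both modules share the signature, callers use them identically, for example `build_qa(True)(question="...").answer`, so switching strategy does not ripple through the rest of the pipeline.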

Run the Optimizer

Collect 50-200 labeled input-output examples. Define a metric function (exact match, SemanticF1, or a custom Python callable). Then compile:
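A hedged sketch of the compile step, assuming the labeled data arrives as `(question, answer)` pairs and that a case-insensitive exact match is an acceptable metric. The helper names `exact_match` and `compile_qa` are illustrative, and the run needs a configured LM.

```python
def exact_match(example, pred, trace=None) -> bool:
    """Metric: case-insensitive exact match between gold and predicted answers."""
    return example.answer.strip().lower() == pred.answer.strip().lower()


def compile_qa(program, labeled_pairs, save_path: str = "optimized_qa.json"):
    """Optimize `program` on (question, answer) pairs and save the result."""
    import dspy  # deferred so the metric above can be tested without DSPy

    # Mark `question` as the input; `answer` stays as the label the metric checks.
    trainset = [
        dspy.Example(question=question, answer=answer).with_inputs("question")
        for question, answer in labeled_pairs
    ]
    optimizer = dspy.MIPROv2(metric=exact_match, auto="light")
    optimized = optimizer.compile(program, trainset=trainset)
    optimized.save(save_path)
    return optimized
```

The metric is the part most worth iterating on: whatever it rewards is what the optimizer will find, so a too-lenient or too-strict check shows up directly in the compiled prompts.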

According to the MIPROv2 documentation, MIPROv2 starts with a bootstrapping stage, running the program many times to collect high-scoring traces. It then enters a grounded proposal stage, using traces and program code to draft candidate instructions. Finally it launches a discrete search stage using Bayesian optimization to find the best combination.

Evaluate and Deploy

Save the compiled program to a file such as optimized_qa.json and load it in production. Re-compile whenever the underlying model or dataset changes; the process can be fully automated.
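A small sketch of the evaluate-and-load side, assuming the program was saved with its `save` method and that production code rebuilds a program of the same structure before loading. The helper names are hypothetical.

```python
from pathlib import Path


def evaluate(program, devset, metric):
    """Score a program on a held-out dev set (requires a configured LM)."""
    import dspy  # deferred so load_optimized() below works without DSPy

    evaluator = dspy.Evaluate(devset=devset, metric=metric, display_progress=True)
    return evaluator(program)


def load_optimized(program, path: str = "optimized_qa.json"):
    """Load saved prompts and demonstrations into a freshly built program."""
    if not Path(path).exists():
        raise FileNotFoundError(f"{path} not found; run the optimizer first")
    program.load(path)
    return program
```

Comparing `evaluate` scores before and after compilation, and again after any model change, gives a simple signal for when a re-compile is due.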

“A typical MIPROv2 optimization run costs around $2 and takes about 20 minutes; the ROI on eliminating weeks of manual prompt iteration is immediate.”

Frequently Asked Questions

What does DSPy stand for and how is it pronounced?

DSPy stands for Declarative Self-improving Python, pronounced “dee-es-pie.” It was created at Stanford NLP and is now backed by Databricks. The name reflects its core design: programs declare intent, and the framework self-improves prompts automatically through compilation against a metric.

Can I use DSPy with GPT-4, Claude, or open-source models?

Yes. DSPy supports any model accessible via LiteLLM, which includes OpenAI, Anthropic, Google Gemini, Azure, and most open-source models via Ollama or Hugging Face endpoints. You configure the LM once with dspy.configure() and all modules use it automatically; swapping models requires changing one line.

How is DSPy different from prompt engineering?

Prompt engineering is manual and brittle: you write strings, test them, and repeat. DSPy is programmatic and systematic: you define typed signatures, collect examples, and let the optimizer find the best prompt automatically. DSPy programs are version-controlled and reproducible, and when the model changes they are re-compiled rather than rewritten by hand.

Do I need a large dataset to use DSPy optimizers?

No. MIPROv2 can bootstrap useful few-shot examples with as few as 20-50 labeled examples. For better generalization, 200 examples are recommended. A light optimization run (auto="light") is a practical starting point for any new pipeline, even with minimal data.

Can I use DSPy alongside LangChain or LlamaIndex?

Yes, and many teams do. A common pattern is to use LlamaIndex or a vector database for document retrieval, expose the retriever as a plain Python function, and pass it to a DSPy ReAct module as a tool. LangChain integrations can similarly be wrapped as DSPy-compatible callables; the frameworks complement rather than replace each other.

The Future of LLM Programming Is Declarative

Three insights from this guide are worth carrying forward. First, prompt brittleness is an architecture problem, not a skill gap, and DSPy addresses it at the architecture level. Second, optimization is not optional for production AI. Third, DSPy and LangChain are not competitors; they operate at different layers, and the best production stacks use both.

Gartner (2025) projects that by 2027 organizations will use small, task-specific models at least three times more than general-purpose LLMs, making systematic fine-tuning and prompt optimization a core competency rather than a research concern.

DSPy has grown to well over 28,000 GitHub stars and over 160,000 monthly pip downloads, with hundreds of projects already using it in production, according to community tracking data (2025). The optimizer lineup is expanding (GEPA, SIMBA, MIPROv2), and DSPy 3.0 is on the horizon.

The question is not whether your team should adopt declarative LLM programming; it is how quickly your current prompt-engineering debt will force you to.