DEFINITION: Semantic segmentation is a computer vision technique that assigns a class label such as “road,” “pedestrian,” or “tumor” to every pixel in an image. Unlike object detection, which draws bounding boxes, semantic segmentation produces a dense, pixel-level map of the entire scene. Modern implementations use deep learning, specifically encoder-decoder architectures and vision transformers, to achieve real-time accuracy across autonomous driving, medical imaging, and industrial inspection.
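To make "a class label for every pixel" concrete, here is a minimal sketch in plain Python. It assumes a model has already produced one score plane per class; the class list and the score values are made up for illustration. The label map is the per-pixel argmax over those planes.

```python
# Hypothetical class list and scores, for illustration only.
CLASSES = ["road", "pedestrian", "sky"]

def label_map(scores):
    """scores[c][y][x] -> per-pixel class index (argmax over the class axis)."""
    num_classes = len(scores)
    height = len(scores[0])
    width = len(scores[0][0])
    return [
        [max(range(num_classes), key=lambda c: scores[c][y][x]) for x in range(width)]
        for y in range(height)
    ]

# A 2x3 "image": one score plane per class.
scores = [
    [[0.90, 0.80, 0.10], [0.70, 0.20, 0.10]],  # road
    [[0.05, 0.10, 0.10], [0.20, 0.70, 0.10]],  # pedestrian
    [[0.05, 0.10, 0.80], [0.10, 0.10, 0.80]],  # sky
]

mask = label_map(scores)                             # dense map of class indices
named = [[CLASSES[i] for i in row] for row in mask]  # same map, human-readable
```

The output has the same height and width as the input, which is what separates a segmentation mask from a list of bounding boxes.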

Why Semantic Segmentation Is Now a Board-Level Priority

The global semantic image segmentation market is projected to grow from $7.1 billion to $14.6 billion by 2030, at a 12.4% CAGR (Valuates Reports, 2024). That growth does not come from academic curiosity. It comes from production demands across three converging sectors: autonomous vehicles that cannot navigate without pixel-perfect scene parsing, radiology platforms that need to segment tumors faster than a radiologist can read, and industrial lines where defects invisible to the human eye cost millions per shift.

Simultaneously, Gartner projects total GenAI spending to reach $644 billion in 2025, a 76.4% jump year-over-year (Gartner, 2025). Vision AI, with semantic segmentation chief among its capabilities, is one of the primary beneficiaries. For CTOs, the question is no longer whether to adopt semantic segmentation, but which architecture to adopt and how fast to deploy it.

The good news: the tooling has never been more accessible. Foundation models, transformer-based encoders, and high-quality open-source frameworks have collapsed the gap between research and production. This guide covers everything you need to make informed architecture decisions in 2025.

“Semantic segmentation is no longer a research benchmark exercise. It is the perception layer that makes autonomous systems production-viable.”

From CNNs to Vision Transformers: The Architecture Shift

Convolutional architectures dominated semantic segmentation for nearly a decade. U-Net brought skip connections. DeepLab brought atrous convolutions and ASPP (Atrous Spatial Pyramid Pooling). PSPNet introduced pyramid pooling for global context. Each was a genuine advance. Each also shared a structural ceiling: CNNs build global context by stacking local operations, which limits their ability to capture long-range spatial relationships in one pass.
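The "stacking local operations" point can be checked with a little arithmetic. The sketch below uses the standard receptive-field recurrence for a chain of convolutions; the layer configurations are illustrative and are not taken from any specific published network. It shows how atrous (dilated) convolutions widen the view at the same depth, and why even then the context grows layer by layer rather than being global from the start.

```python
def receptive_field(layers):
    """layers: ordered list of (kernel_size, stride, dilation) tuples.
    Returns the receptive field, in input pixels, of one output unit."""
    rf = 1    # receptive field accumulated so far
    jump = 1  # spacing, in input pixels, between adjacent units at this depth
    for kernel, stride, dilation in layers:
        rf += (kernel - 1) * dilation * jump
        jump *= stride
    return rf

# Four ordinary 3x3 convolutions, stride 1.
plain = [(3, 1, 1)] * 4
# Same depth, but with dilation rates growing 1, 2, 4, 8 (illustrative choice).
atrous = [(3, 1, d) for d in (1, 2, 4, 8)]

rf_plain = receptive_field(plain)    # 1 + 4 * 2 = 9 pixels
rf_atrous = receptive_field(atrous)  # 1 + 2 * (1 + 2 + 4 + 8) = 31 pixels
```

On a 1024x2048 street scene, a unit that sees 31 pixels is still far from seeing the whole frame, which is the structural ceiling described above.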

Vision Transformers (ViTs) broke that ceiling. By applying self-attention across the entire image, treated as a sequence of patches, a ViT lets every patch attend to every other patch within a single layer. Xie et al. (NeurIPS 2021) introduced SegFormer, which combines a hierarchical Mix Transformer encoder with a lightweight MLP decode head. SegFormer-B5 achieves 84.0% mIoU on the Cityscapes validation set, and the same paper reports SegFormer-B4 as roughly 5x smaller than the previous best method on ADE20K while scoring higher. Crucially, the design avoids fixed positional encoding, so it does not need to interpolate positional codes when the test resolution differs from the training resolution.
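As a rough illustration of why attention is global within one layer, the sketch below implements single-head self-attention over a handful of patch embeddings in plain Python. It is deliberately simplified: the query, key, and value projections are taken to be the identity, and the patch vectors are invented. It is not SegFormer's efficient attention, only the basic mechanism.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(tokens):
    """Single-head attention with identity Q/K/V projections (a simplification)."""
    dim = len(tokens[0])
    scale = math.sqrt(dim)
    # One row of weights per query token, covering every token in the sequence.
    weights = [
        softmax([sum(a * b for a, b in zip(q, k)) / scale for k in tokens])
        for q in tokens
    ]
    # Each output is a weighted mix of all token vectors.
    outputs = [
        [sum(w * v[d] for w, v in zip(row, tokens)) for d in range(dim)]
        for row in weights
    ]
    return outputs, weights

# Four hypothetical patch embeddings (a 2x2 grid of patches, 2-dim features).
patches = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, 0.5]]
outputs, weights = self_attention(patches)
```

Every row of `weights` has a non-zero entry for every patch, so each output vector already mixes information from the whole image after one layer.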

Research published in 2025 on urban point-cloud segmentation confirms the transformer trend: PTv3, a point-based transformer model, leads the SemanticKITTI benchmark at 75.5% mIoU, outperforming all CNN-based baselines. In practice, teams building new segmentation systems rarely start from a CNN backbone today.

Foundation Models and Zero-Shot Segmentation

The biggest shift in the last 18 months is the arrival of promptable foundation models. Meta’s Segment Anything Model 2 (SAM 2) extends the original SAM to handle both images and video with a streaming memory module. You give it a point, a bounding box, or a mask as a prompt, and it segments the target object, then propagates that mask through subsequent video frames in real time. No retraining required.

Code Snippet 1 – SAM 2 Video Inference

Source: facebookresearch/sam2 (GitHub)

This snippet initialises a SAM 2 video predictor, accepts a point or box prompt on a single frame, and streams segmentation masks across the entire video. What previously required a full annotation pipeline and a custom-trained model now resolves in three method calls. The torch.autocast context runs inference in reduced precision, which lowers GPU memory use and helps make this viable on a single A100 or even a high-end consumer card.

“With SAM 2, three lines of code replace what previously required a full annotation and training pipeline.”

Where Semantic Segmentation Creates Real Business Value

Real-world ROI from semantic segmentation falls into four domains that should map directly to your roadmap planning.

Autonomous Driving. Segmentation identifies every pixel as road, lane marking, pedestrian, vehicle, or obstacle. Systems like Tesla Autopilot and Waymo’s stack run multiple segmentation heads per camera feed at 30+ fps. Without pixel-accurate scene parsing, planning and control layers cannot function safely.

Medical Imaging. A 2025 systematic review in IET Image Processing confirms that deep learning segmentation models consistently match or exceed radiologist-level accuracy on tasks such as tumor delineation, organ contouring, and polyp detection. U-Net variants remain dominant in clinical deployment due to their small-data efficiency.

Precision Agriculture. Satellite and drone imagery segmentation enables crop-health monitoring, weed identification, and yield estimation at per-plant granularity. Models trained on multispectral bands can classify plant stress before it is visible to the naked eye.

Industrial Inspection. Pixel-level defect detection on semiconductor wafers, welding seams, and fabric surfaces reduces false-negative rates to near zero while eliminating manual inspection overhead. Teams at tier-1 automotive suppliers run SegFormer-B1 on NVIDIA Jetson Orin edge hardware with sub-30ms latency per frame.

Production Architecture: How a Modern Segmentation Pipeline Works

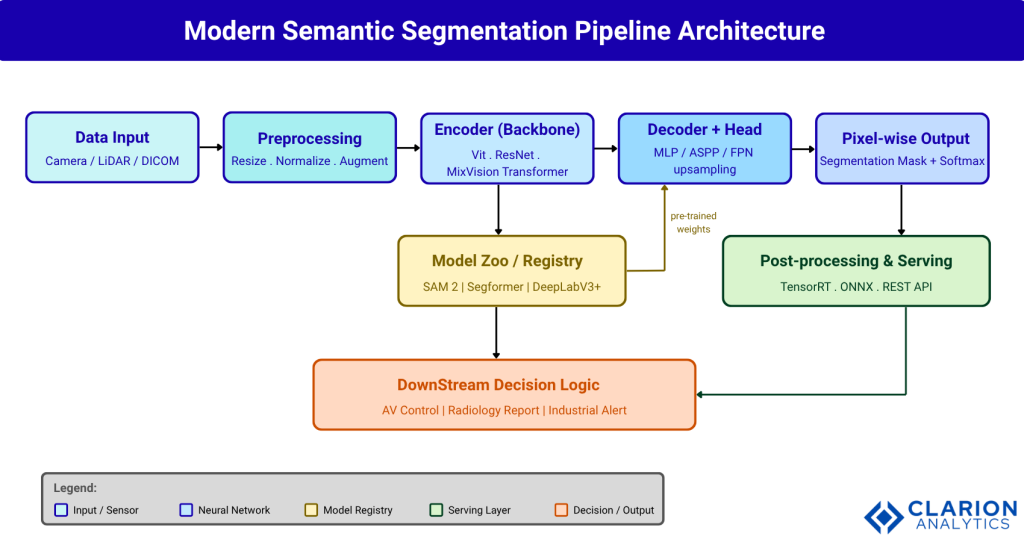

A production segmentation pipeline has five stages: data ingestion, preprocessing, encoder-decoder inference, post-processing, and downstream decision logic. GPU-optimized model serving with TensorRT or ONNX is standard for achieving latency under 50ms per frame.

Figure 1: Modern Semantic Segmentation Pipeline Architecture. Raw sensor data (camera, LiDAR, or DICOM) enters a preprocessing stage for resizing, normalisation, and augmentation. The encoder extracts feature representations using a ViT or CNN backbone. The decoder upsamples features back to the original resolution via MLP, ASPP, or FPN heads. Outputs are pixel-wise masks served through TensorRT or a REST API to downstream application logic. Pre-trained weights from the model registry accelerate training by up to 10x versus random initialisation.
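The five stages can be sketched as a chain of small functions. Everything below is a hypothetical skeleton: `stub_model` stands in for real encoder-decoder inference (which in production would be a TensorRT or ONNX call), the threshold in `decide` is arbitrary, and resizing and augmentation are left out. The point is only the shape of the pipeline and where latency would be measured.

```python
import time

def preprocess(image, mean=0.5, std=0.5):
    """Normalise pixel values; resizing and augmentation are omitted here."""
    return [[(p - mean) / std for p in row] for row in image]

def stub_model(tensor):
    """Stand-in for encoder-decoder inference: returns two score planes
    (background, foreground). A real system would call a served model here."""
    foreground = tensor
    background = [[-p for p in row] for row in tensor]
    return [background, foreground]

def postprocess(scores):
    """Per-pixel argmax over the class planes."""
    height, width = len(scores[0]), len(scores[0][0])
    return [
        [max(range(len(scores)), key=lambda c: scores[c][y][x]) for x in range(width)]
        for y in range(height)
    ]

def decide(mask, min_pixels=2):
    """Toy downstream logic: flag the frame if enough foreground pixels appear."""
    return sum(sum(row) for row in mask) >= min_pixels

def run_pipeline(image):
    start = time.perf_counter()
    mask = postprocess(stub_model(preprocess(image)))
    alert = decide(mask)
    latency_ms = (time.perf_counter() - start) * 1000.0
    return mask, alert, latency_ms

# Data ingestion: a tiny grayscale frame with values in [0, 1].
frame = [[0.1, 0.9, 0.8], [0.2, 0.1, 0.7]]
mask, alert, latency_ms = run_pipeline(frame)
```

Timing the whole call, rather than only the model, matters because preprocessing and post-processing count against the same per-frame budget.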

Code Snippet 2 – SegFormer Fine-Tuning via MMSegmentation

Source: open-mmlab/mmsegmentation (GitHub)

This snippet loads the SegFormer-B5 configuration from MMSegmentation, redirects it to a custom dataset, and runs single-image inference. Changing the config filename from ‘b5’ to ‘b0’ switches to the lightweight backbone, useful for edge deployment, without touching any model code. MMSegmentation’s unified API means the same inference call works for DeepLabV3+, Mask2Former, or PSPNet.

“The teams that ship fastest are not training from scratch. They are fine-tuning foundation models on domain-specific data.”

Choosing the Right Model: A Practical Comparison

Not every architecture fits every production constraint. The table below maps the leading models to the scenarios where they create the most value.

| Model | Key Strength | Best Used When | mIoU (Cityscapes) | Edge-Ready |
|---|---|---|---|---|
| SegFormer-B5 | Accuracy-speed balance; MLP decoder; no positional encoding | Production systems needing strong accuracy and moderate inference speed | 84.0% | Yes (B0/B1) |
| SAM 2 | Zero-shot, promptable; images + video; no retraining needed | Low-annotation budgets or novel domains; interactive tools | N/A (zero-shot) | No (cloud GPU) |
| DeepLabV3+ | Atrous convolutions; excellent boundary detail; well-documented | Established pipelines requiring interpretability and fine-grained edges | ~82.1% | With TensorRT |
| Mask2Former | Unified semantic + instance + panoptic; transformer-based | Panoptic segmentation tasks requiring full scene understanding | ~83.3% | Partial |
| U-Net | Encoder-decoder with skip connections; small data friendly | Medical imaging and satellite tasks with limited labeled data | Domain-specific | Yes |

Note: mIoU figures are benchmark results and will vary on domain-specific datasets. Always profile on your own data before committing to an architecture.
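For profiling on your own data, mIoU is straightforward to compute yourself. The sketch below is a minimal version for a single predicted mask and its ground truth, averaging over the classes that appear in either. Benchmark tooling typically accumulates the intersection and union counts over the whole dataset before dividing, so treat this as an illustration of the metric rather than a drop-in replacement for an official evaluation script.

```python
def mean_iou(pred, target, num_classes):
    """Mean intersection-over-union across classes present in pred or target."""
    intersection = [0] * num_classes
    union = [0] * num_classes
    for pred_row, target_row in zip(pred, target):
        for p, t in zip(pred_row, target_row):
            if p == t:
                # Pixel counted once in both intersection and union.
                intersection[p] += 1
                union[p] += 1
            else:
                # Disagreement enlarges the union of both classes involved.
                union[p] += 1
                union[t] += 1
    ious = [i / u for i, u in zip(intersection, union) if u > 0]
    return sum(ious) / len(ious)

target = [[0, 0, 1],
          [0, 1, 1]]
pred = [[0, 1, 1],
        [0, 1, 1]]

score = mean_iou(pred, target, num_classes=2)  # (2/3 + 3/4) / 2
```

One mislabeled pixel out of six drops the score to about 0.71 here, which shows how sensitive the metric is on small regions.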

Frequently Asked Questions

What is the difference between semantic segmentation and instance segmentation?

Semantic segmentation assigns a class label to every pixel, but treats all instances of a class as one region. Instance segmentation goes further, distinguishing between separate objects of the same class. For example, semantic segmentation labels all cars as “car,” while instance segmentation labels them “car_1” and “car_2.” Panoptic segmentation combines both approaches in one pass.
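The difference is easy to see on a toy mask. The sketch below takes a semantic mask in which every car pixel carries the same label and splits it into numbered regions with a 4-connected flood fill. This is only an approximation of instance segmentation, used here for illustration: connected-component labeling merges objects that touch, which real instance models are designed to avoid.

```python
from collections import deque

def instances_from_semantic(mask, class_id):
    """Label 4-connected regions of class_id as 1, 2, ...; other pixels stay 0."""
    height, width = len(mask), len(mask[0])
    labels = [[0] * width for _ in range(height)]
    next_id = 0
    for y in range(height):
        for x in range(width):
            if mask[y][x] != class_id or labels[y][x]:
                continue
            # Found an unlabeled pixel of the class: start a new region.
            next_id += 1
            labels[y][x] = next_id
            queue = deque([(y, x)])
            while queue:
                cy, cx = queue.popleft()
                for ny, nx in ((cy - 1, cx), (cy + 1, cx), (cy, cx - 1), (cy, cx + 1)):
                    if (0 <= ny < height and 0 <= nx < width
                            and mask[ny][nx] == class_id and not labels[ny][nx]):
                        labels[ny][nx] = next_id
                        queue.append((ny, nx))
    return labels, next_id

CAR = 1  # hypothetical class index
semantic = [
    [1, 1, 0, 0, 1],
    [1, 1, 0, 0, 1],
    [0, 0, 0, 0, 0],
]
labels, count = instances_from_semantic(semantic, CAR)
```

The semantic mask says "car" in two places; the labeled output says "car 1" and "car 2".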

Which semantic segmentation model should I use in 2025?

SegFormer-B3 or B5 is the best default for production: it balances accuracy and inference speed, has excellent community support, and is fine-tunable via MMSegmentation in hours. Use SAM 2 when labeled data is scarce or you need zero-shot generalization. Use DeepLabV3+ when fine-grained boundary detail, interpretability, and proven stability matter more than peak benchmark performance.

How do I run semantic segmentation in real time on edge hardware?

Export your trained model to ONNX or use TensorRT quantization (INT8) to cut inference latency by 3 to 5x on NVIDIA Jetson. For CPU-only edge devices, use lightweight backbones such as SegFormer-B0 or MobileNetV3-based architectures. Profile with torch.profiler before optimizing: the bottleneck is usually the decoder upsampling, not the backbone.
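To show what INT8 quantization does to a tensor, here is a minimal sketch of symmetric per-tensor quantization in plain Python. It is an assumption-laden illustration, not TensorRT's calibration procedure: real toolchains choose scales from calibration data and often per channel, and the weight values here are invented.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]

weights = [0.02, -1.27, 0.64, 0.0]  # hypothetical layer weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Rounding error is bounded by half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Each value now fits in one byte instead of four, which is where the latency and memory savings come from; the cost is the small rounding error captured in `max_error`, so accuracy should be re-measured after quantizing.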

Can semantic segmentation work without labeled training data?

Yes, in two ways. First, foundation models like SAM 2 perform zero-shot segmentation using point or bounding-box prompts, requiring no pixel-level labels. Second, open-vocabulary models like CLIPSeg accept text prompts. For high-accuracy domain-specific work, a small set of labeled images (as few as 50 to 200) combined with fine-tuning usually outperforms pure zero-shot approaches.

How do vision transformers improve semantic segmentation over CNNs?

Vision Transformers use self-attention to capture global context across the entire image in a single pass, whereas CNNs build context progressively through stacked local convolutions. This gives transformers a structural advantage for understanding large regions like sky, roads, and organs. SegFormer’s hierarchical ViT encoder also avoids fixed positional encodings, making it robust to resolution changes at test time.

What the Next 12 Months Will Bring

Three insights should guide your semantic segmentation roadmap for the next year.

First, the foundation model layer has stabilised. SAM 2, SegFormer, and Mask2Former have all reached production maturity. Your architecture decision should focus on fine-tuning strategy and inference optimisation, not on which research model is newest.

Second, edge deployment pressure is intensifying. As autonomous systems move from data-center inference to on-device processing, lightweight transformer backbones (SegFormer-B0/B1) and INT8 quantization via TensorRT will become standard requirements rather than optional optimisations.

Third, the annotation bottleneck is dissolving. Zero-shot models like SAM 2 and open-vocabulary segmenters like CLIPSeg are reducing the labeling cost for new domains from months to days. Teams that build annotation pipelines around these tools will iterate significantly faster than those still relying on manual pixel-level labeling.

The question worth asking your team today: for which of your five highest-value computer vision tasks could replacing a CNN with a fine-tuned SegFormer or a SAM 2 prompt pipeline cut your time-to-production in half?