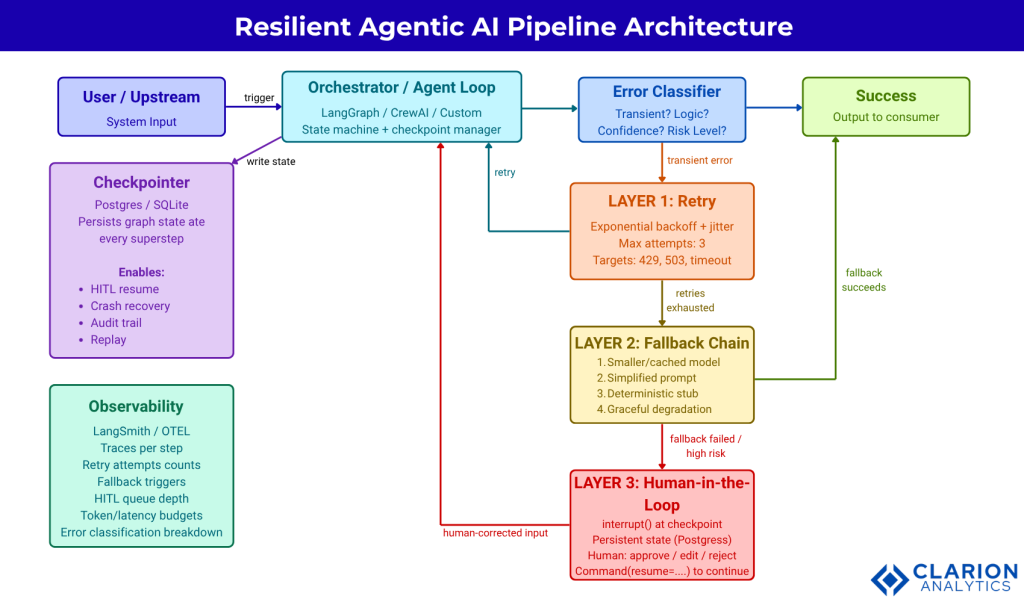

A resilient agentic AI pipeline is a multi-step LLM-driven workflow designed to continue producing correct outputs even when individual components fail. It combines three defensive layers: retry logic (automatic re-attempts with backoff), fallback chains (alternative execution paths when retries are exhausted), and human-in-the-loop checkpoints (deliberate pause-and-review gates for high-stakes or ambiguous steps).

Why Most Agentic AI Projects Fail Before They Ship

Most agentic AI projects fail in production not because the models are bad, but because the orchestration layer has no plan for failure. Without retry logic, fallback chains, and human-in-the-loop gates, a single transient error cascades into full pipeline collapse.

Building a resilient agentic AI pipeline is not optional once your agents touch real systems. Gartner’s June 2025 forecast predicts that over 40% of agentic AI projects will be cancelled by the end of 2027. The three failure drivers cited are escalating costs, unclear business value, and, critically, inadequate risk controls. Risk controls are not a governance checkbox. They are the retry policies, fallback chains, and human gates that keep a multi-step LLM workflow from silently producing garbage or taking an irreversible action.

The problem is architectural. LLM calls are non-deterministic. APIs rate-limit. Models hallucinate. Downstream tools fail. A pipeline that handles none of these realities is not a production system. It is a demo waiting to break.

This post maps the three defensive layers every agentic pipeline needs: what they are, when to use each, how to implement them with production tooling, and how to layer them correctly so failures stay local instead of cascading.

A pipeline that cannot fail gracefully is not production-ready. It is a demo waiting to break.

The Anatomy of an Agentic Pipeline Failure

Agentic pipelines fail in three predictable categories: system design flaws (bad routing, missing error handlers), inter-agent misalignment (conflicting goals, message corruption), and task verification failures (unvalidated outputs propagating downstream as facts).

The 2025 arXiv paper “Where LLM Agents Fail and How They Can Learn From Failures” by Zhu et al. introduces AgentErrorTaxonomy, a modular classification built from 1,600+ annotated real-world failure trajectories. The taxonomy identifies five failure domains: memory, reflection, planning, action, and system-level operations.

Each domain fails differently. Memory failures corrupt the context that downstream steps rely on. Planning failures produce multi-step paths that hit dead ends mid-execution. Action failures (calling the wrong tool with the wrong parameters) are often the most visible, while system-level failures are the most silent. A rate-limited API call that returns a 429 can look like success if the client never checks the status code, and the problem surfaces only when the empty response reaches your parser.

Teams building these systems typically find that most pipeline breaks trace back to one absent design decision: no explicit error type classification. Retry logic that retries a hallucination just generates three confident wrong answers. Fallback logic that re-routes a confidence failure to a simpler model is useful. Routing a transient HTTP timeout through a human approval queue is wasteful. The classifier comes first.

Before writing any retry or fallback code, map your failure modes into at least three buckets: transient infrastructure errors (retry-eligible), logic and quality failures (fallback-eligible), and risk or confidence failures (HITL-eligible). Everything else follows from that classification.
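The three buckets can be sketched as a small classifier that maps an exception to the recovery layer that should handle it. This is a minimal illustration, not a complete taxonomy: the exception classes `HttpStatusError`, `LowConfidenceError`, and `OutputValidationError` are hypothetical names introduced here, and the set of transient status codes is an assumption based on the errors this post discusses.

```python
from enum import Enum


class FailureKind(Enum):
    TRANSIENT = "retry"     # infrastructure flaps: rate limits, timeouts
    QUALITY = "fallback"    # logic or output-quality failures
    RISK = "hitl"           # low confidence or high-risk decisions


class HttpStatusError(Exception):
    """Hypothetical wrapper around a non-2xx HTTP response."""

    def __init__(self, status: int):
        super().__init__(f"HTTP {status}")
        self.status = status


class LowConfidenceError(Exception):
    """Hypothetical: a step's confidence score fell below its threshold."""


class OutputValidationError(Exception):
    """Hypothetical: a model output failed schema or sanity checks."""


# Assumption: only these statuses are treated as retry-eligible.
TRANSIENT_STATUSES = {429, 503}


def classify(exc: Exception) -> FailureKind:
    """Map an exception to the recovery layer that should handle it."""
    if isinstance(exc, (TimeoutError, ConnectionError)):
        return FailureKind.TRANSIENT
    if isinstance(exc, HttpStatusError) and exc.status in TRANSIENT_STATUSES:
        return FailureKind.TRANSIENT
    if isinstance(exc, LowConfidenceError):
        return FailureKind.RISK
    # Validation errors, parse errors, and anything unrecognized:
    # never retry blindly, hand the step to the fallback chain instead.
    return FailureKind.QUALITY
```

Defaulting unknown errors to the fallback bucket is a design choice for this sketch: it keeps an unclassified failure from being retried as if it were transient.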

Retry logic applied to a hallucination does not fix the problem. It produces three confident wrong answers instead of one.

Building the Retry Layer: Backoff, Jitter, and Selective Retries

Effective retry logic for LLM pipelines combines exponential backoff, random jitter (to prevent thundering herds), and error-type discrimination, retrying only transient failures like rate limits and timeouts, not logic or hallucination errors.

The most common LLM production failure is a transient one: an HTTP 429 rate-limit, a 503 service unavailable, or a connection timeout. These errors resolve with time. The correct response is to wait and retry with exponential backoff and added jitter.

Tenacity (4.9k stars, Apache 2.0) is the standard Python library for this pattern. The decorator API wraps any function call and handles stop conditions, wait strategies, and async support without changing your application logic.

Code Snippet 1: LLM call with exponential backoff and jitter (source: jd/tenacity, doc/source/index.rst)

This snippet wraps an async LLM API call with three defenses: a maximum of three attempts, exponential backoff starting at two seconds (doubling each retry, capped at thirty), and a random jitter of up to two seconds. The retry predicate targets only HTTP errors and timeouts, not ValueError or parsing errors, which should not be retried. Jitter prevents multiple concurrently failing agents from retrying in lockstep and spiking the upstream API simultaneously.
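The same policy can be sketched with the standard library alone, which makes the arithmetic visible. This is a dependency-free illustration under stated assumptions, not the Tenacity snippet itself: `TransientError`, `backoff_delay`, and `call_with_retry` are hypothetical names, and the injectable `sleep` parameter exists only so the waits can be skipped in tests. In Tenacity, the equivalent pieces are typically `stop_after_attempt`, `wait_exponential`, `wait_random`, and `retry_if_exception_type`.

```python
import asyncio
import random


class TransientError(Exception):
    """Hypothetical: stands in for a 429, a 503, or a timeout from the LLM API."""


def backoff_delay(attempt: int, base: float = 2.0, cap: float = 30.0,
                  max_jitter: float = 2.0) -> float:
    """Seconds to wait after failed attempt number `attempt` (1-based)."""
    exponential = min(cap, base * 2 ** (attempt - 1))  # 2s, 4s, 8s, ... capped at 30s
    return exponential + random.uniform(0.0, max_jitter)


async def call_with_retry(fn, *, max_attempts: int = 3, sleep=asyncio.sleep):
    """Await fn(); retry only on TransientError, up to max_attempts in total."""
    for attempt in range(1, max_attempts + 1):
        try:
            return await fn()
        except TransientError:
            if attempt == max_attempts:
                raise  # budget spent: let the fallback layer take over
            await sleep(backoff_delay(attempt))
```

Any other exception, such as a `ValueError` from a parser, propagates on the first attempt because only `TransientError` is caught.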

Three is a reasonable default retry budget for API calls. If you’ve tried three times on a transient failure, you are likely facing an outage, not a flap. The correct response is to trigger the fallback layer, not to retry indefinitely.

Three is a sensible retry budget for LLM API calls. Beyond three, you are likely facing an outage: escalate to the fallback layer instead of retrying forever.

Designing Fallback Chains That Actually Work

A fallback chain routes a failed pipeline step through a sequence of progressively simpler alternatives (for example, a frontier model, then a smaller local model, then a cached result) before escalating to a human or returning a graceful error.

When retries are exhausted, the pipeline should not crash. It should try a cheaper, simpler, or pre-computed alternative. A well-designed fallback chain reads like a decision tree that degrades gracefully.

A typical four-level chain for an LLM generation step: (1) primary model with full context, (2) a smaller or cached version of the same model with a simplified prompt, (3) a deterministic rule-based stub that covers the most common cases without using an LLM at all, and (4) a graceful degradation response that returns a partial result or a user-facing message. Only after all four levels are exhausted should the pipeline escalate to a human.

The 2026 arXiv paper “Graph-Based Self-Healing Tool Routing for Cost-Efficient LLM Agents” by Bholani formalizes this as a routing problem. When a tool fails, its edges are reweighted to infinity in a cost-weighted tool graph, and Dijkstra’s algorithm recomputes the shortest path automatically. The agent recovers without invoking the LLM again, a pattern that removes both latency and hallucination risk from the recovery path.
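The rerouting idea can be illustrated with a small cost-weighted graph and a textbook Dijkstra implementation. This is a sketch of the general technique only, not the paper's implementation: the function names, the graph layout, and the tool names in the usage note are all hypothetical.

```python
import heapq
import math


def shortest_path(graph: dict[str, dict[str, float]], start: str, goal: str):
    """Dijkstra over a cost-weighted graph; returns (cost, path) or (inf, [])."""
    best = {start: 0.0}
    prev: dict[str, str] = {}
    heap = [(0.0, start)]
    while heap:
        cost, node = heapq.heappop(heap)
        if node == goal:
            path = [goal]
            while path[-1] != start:
                path.append(prev[path[-1]])
            return cost, path[::-1]
        if cost > best.get(node, math.inf):
            continue  # stale heap entry
        for nxt, weight in graph.get(node, {}).items():
            new_cost = cost + weight
            # An infinite weight never beats the current best, so edges
            # touching a failed tool are skipped automatically.
            if new_cost < best.get(nxt, math.inf):
                best[nxt] = new_cost
                prev[nxt] = node
                heapq.heappush(heap, (new_cost, nxt))
    return math.inf, []


def mark_failed(graph: dict[str, dict[str, float]], tool: str) -> None:
    """Reweight every edge into and out of the failed tool to infinity."""
    for edges in graph.values():
        if tool in edges:
            edges[tool] = math.inf
    for target in graph.get(tool, {}):
        graph[tool][target] = math.inf
```

As a usage example, take a graph where `start` reaches `summarize` through either `web_search` (cheap) or `local_index` (more expensive). Calling `mark_failed(graph, "web_search")` and then `shortest_path(graph, "start", "summarize")` returns the route through `local_index` with no model call in the recovery path.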

In practice, the biggest mistake teams make with fallbacks is conflating them with retries. A retry repeats the same operation with the same input, hoping the transient condition has resolved. A fallback changes the operation entirely. Calling the same frontier model three more times after retries are exhausted is not a fallback. It is a fourth retry with extra latency.

Fallback chains also need their own budgets. Cap each fallback level at one attempt. If level two fails, move to level three immediately. The chain should complete in under the total latency budget allocated to the step, not compound latency across multiple slow failures.
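A chain with a one-attempt budget per level and a shared latency budget might look like the sketch below. The names `run_fallback_chain` and `FallbackExhausted` are hypothetical, and each level is assumed to be a plain callable that takes the prompt and either returns a result or raises.

```python
import time
from typing import Callable, Sequence


class FallbackExhausted(Exception):
    """Raised when every level failed or the step's latency budget ran out."""


def run_fallback_chain(
    levels: Sequence[tuple[str, Callable[[str], str]]],
    prompt: str,
    budget_s: float,
    clock: Callable[[], float] = time.monotonic,
) -> tuple[str, str]:
    """Try each level exactly once, in order; return (level_name, result)."""
    deadline = clock() + budget_s
    errors = []
    for name, handler in levels:
        # The budget is checked between levels only; a slow handler is not
        # interrupted, so each handler should also enforce its own timeout.
        if clock() >= deadline:
            errors.append(f"{name}: skipped, latency budget spent")
            break
        try:
            return name, handler(prompt)
        except Exception as exc:  # one attempt per level, then move on
            errors.append(f"{name}: {exc!r}")
    raise FallbackExhausted("; ".join(errors))
```

Returning the level name alongside the result lets the caller record which level actually served the request, which feeds the fallback trigger rate metric.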

Human-in-the-Loop: When Agents Must Stop and Ask

Human-in-the-loop checkpoints are triggered by confidence thresholds, risk flags, or explicit failure exhaustion. Production systems use persistent state checkpointers so the workflow can pause indefinitely and resume exactly where it stopped when human input arrives.

When all automated recovery paths are exhausted or when an action is irreversible enough to require explicit authorization, the pipeline must pause and route to a human. The HULA framework paper (Atlassian, 2024) demonstrates HITL deployed inside Atlassian JIRA: engineers could review and correct LLM-generated coding plans before execution, materially reducing overall development time and effort.

The engineering challenge with HITL is not the interrupt itself. It is the state management. A paused graph may sit idle for minutes, hours, or days before a human responds. The execution context must survive that gap intact. Without a persistent checkpointer, the pipeline restarts from scratch when resumed, losing all intermediate work.
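To make the state-management point concrete, here is a minimal sketch of a persistent checkpointer built on the standard library's `sqlite3`. It is a toy model of the idea, not LangGraph's checkpointer: the class `SqliteCheckpointer`, the `run` function, the `"PAUSE"` sentinel, and the three example steps are all hypothetical, and state is assumed to be JSON-serializable.

```python
import json
import sqlite3


class SqliteCheckpointer:
    """Persists (next_step, state) per workflow thread in a SQLite file."""

    def __init__(self, path: str):
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS checkpoints ("
            "thread_id TEXT PRIMARY KEY, next_step INTEGER, state TEXT)"
        )
        self.conn.commit()

    def save(self, thread_id: str, next_step: int, state: dict) -> None:
        self.conn.execute(
            "INSERT OR REPLACE INTO checkpoints VALUES (?, ?, ?)",
            (thread_id, next_step, json.dumps(state)),
        )
        self.conn.commit()

    def load(self, thread_id: str):
        row = self.conn.execute(
            "SELECT next_step, state FROM checkpoints WHERE thread_id = ?",
            (thread_id,),
        ).fetchone()
        return (row[0], json.loads(row[1])) if row else None


def run(steps, thread_id: str, store: SqliteCheckpointer, decision=None) -> str:
    """Run steps in order; a step returning "PAUSE" halts the run until resumed."""
    index, state = store.load(thread_id) or (0, {})
    if decision is not None:
        state["decision"] = decision  # human input arrives on resume
    while index < len(steps):
        if steps[index](state) == "PAUSE":
            store.save(thread_id, index, state)  # resume re-enters this same step
            return "paused"
        index += 1
        store.save(thread_id, index, state)  # checkpoint after every completed step
    return "done"


# Example steps (hypothetical): draft a plan, wait for approval, then act.
def draft(state):
    state["plan"] = "delete 3 stale records"


def approve(state):
    return None if "decision" in state else "PAUSE"


def execute(state):
    state["result"] = "executed" if state["decision"] == "approve" else "skipped"
```

Calling `run([draft, approve, execute], "t1", store)` returns `"paused"` after the draft step. A later call with `decision="approve"`, even from a new process that reopens the same database file, skips the completed draft step and finishes the run.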

Code Snippet 2: LangGraph human-in-the-loop interrupt with checkpoint resume (source: langchain-ai/langgraph, LangChain official docs)

This snippet configures per-tool interrupt policies: irreversible actions (write_file, delete_record) always pause, SQL execution pauses with a restricted decision set, and safe reads auto-approve. The AsyncPostgresSaver persists full graph state to Postgres, so the interrupt can last for hours without losing context. Command(resume='approve') resumes execution from the exact node where it paused.
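Independent of any framework, a per-tool interrupt policy is a lookup table plus a gate. The sketch below is a plain-Python illustration under stated assumptions, not LangGraph's API: `InterruptPolicy`, `needs_human`, and `validate_decision` are hypothetical names, `execute_sql` and `read_file` are assumed tool names, and the decision labels and the 0.8 confidence threshold are placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InterruptPolicy:
    pause: bool                              # always pause before this tool runs?
    allowed_decisions: tuple[str, ...] = ()  # what the reviewer may answer


POLICIES = {
    # Irreversible actions always pause.
    "write_file": InterruptPolicy(True, ("approve", "edit", "reject")),
    "delete_record": InterruptPolicy(True, ("approve", "edit", "reject")),
    # SQL pauses with a restricted decision set: no free-form edits.
    "execute_sql": InterruptPolicy(True, ("approve", "reject")),
    # Safe reads auto-approve.
    "read_file": InterruptPolicy(False),
}

# Assumption: a tool with no explicit policy is treated as risky.
DEFAULT_POLICY = InterruptPolicy(True, ("approve", "reject"))


def needs_human(tool: str, confidence: float, threshold: float = 0.8) -> bool:
    """Pause if the tool's policy says so or the confidence is below threshold."""
    policy = POLICIES.get(tool, DEFAULT_POLICY)
    return policy.pause or confidence < threshold


def validate_decision(tool: str, decision: str) -> str:
    """Reject reviewer answers that the tool's policy does not allow."""
    policy = POLICIES.get(tool, DEFAULT_POLICY)
    allowed = policy.allowed_decisions or DEFAULT_POLICY.allowed_decisions
    if decision not in allowed:
        raise ValueError(f"{decision!r} is not allowed for {tool}; choose from {allowed}")
    return decision
```

Treating unknown tools as pause-by-default means a newly added tool cannot bypass review just because nobody wrote a policy for it.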

LangGraph (31.2k stars) is the most widely adopted framework for this pattern, trusted in production by Klarna, Replit, and Elastic.

Persistent checkpointing is not a nice-to-have. It is the foundation that makes human-in-the-loop patterns production-viable.

Choosing the Right Pattern: Retry, Fallback, or HITL

The choice between pure retry, fallback chain, and human-in-the-loop depends on error type, step reversibility, latency tolerance, and risk level. Most production pipelines use all three in a layered sequence.

| Pattern | Key Strength | Best Used When |
|---|---|---|
| Retry + Backoff (Tenacity) | Zero latency overhead on success path; handles flaps invisibly; no code changes to application logic | Error is transient: rate limit (429), timeout, 503. Step is idempotent and safe to repeat. |
| Fallback Chain | Maintains pipeline progress at lower quality/cost; avoids latency stacking from repeated identical failures; resilient to model outages | Primary model is unavailable or over-budget. A simpler model or cached result is acceptable. Step does not require full capability. |
| Human-in-the-Loop (LangGraph) | Catches judgment failures automated systems cannot resolve; prevents irreversible mistakes; builds operator trust for high-stakes deployments | Action is irreversible (write, delete, send). Confidence is below threshold. All automated recovery paths are exhausted. Regulatory review is required. |
| Layered (all three) | Maximum resilience; self-heals transient issues silently; degrades gracefully under load; escalates only what humans need to see | Production systems with real users and real consequences. Any multi-step pipeline touching external systems or data stores. |

Production pipelines should layer all three patterns, retry, fallback, and HITL, in sequence, not choose between them.
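The layered sequence can be sketched as a single step runner. This is a deliberately compressed illustration: `run_step` and `TransientError` are hypothetical names, backoff waits are omitted for brevity, and the `"hitl"` return value stands in for whatever pause-and-checkpoint mechanism the pipeline actually uses.

```python
class TransientError(Exception):
    """Hypothetical: a rate limit, timeout, or 503 from an upstream service."""


def run_step(primary, fallbacks, *, irreversible=False, max_attempts=3):
    """Return (layer, result), where layer is 'primary', 'fallback', or 'hitl'."""
    if irreversible:
        return "hitl", None  # irreversible actions go to a human before anything runs

    # Layer 1: retry the same operation, but only for transient failures.
    for _ in range(max_attempts):
        try:
            return "primary", primary()
        except TransientError:
            continue  # backoff and jitter would go here
        except Exception:
            break  # not transient: repeating the same call will not help

    # Layer 2: switch to a different operation, one attempt per level.
    for fallback in fallbacks:
        try:
            return "fallback", fallback()
        except Exception:
            continue

    # Layer 3: every automated path is exhausted, so escalate.
    return "hitl", None
```

The ordering is the point of the sketch: a transient failure never reaches a human while a retry or a fallback can still resolve it, and a non-transient failure skips the remaining retries entirely.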

Production Readiness: An Implementation Checklist

A production-ready agentic pipeline must have typed error classification, per-step retry budgets, model fallback sequences, persistent state checkpointing, and at least one HITL gate for irreversible or high-risk actions.

Teams building this typically find that the checklist reveals three missing items: there is no error taxonomy (every failure is caught by a generic except clause), there is no fallback model configured (retries are the only recovery), and the HITL gate exists in the code but the checkpoint backend is InMemorySaver, meaning any restart loses the paused state.

Work through this checklist before your next production deployment:

- Error taxonomy is defined: transient, logic, confidence, and risk buckets.

- Retry decorator targets transient errors only, with exponential backoff and jitter. Max attempts is three.

- Fallback chain has at least two levels below the primary model. Each level has a one-attempt budget.

- Deterministic stub or cached-response layer is the final automated fallback before HITL.

- LangGraph (or equivalent) checkpointer is backed by Postgres or SQLite, NOT InMemorySaver in production.

- HITL interrupt_on is configured per tool, based on reversibility and risk level.

- Observability captures: retry attempt count, fallback trigger rate, HITL queue depth, and error type distribution per step.

- SLA budget is allocated explicitly: X seconds for retries, Y seconds for fallback, Z hours maximum for HITL.

- Runbook documents how to handle HITL queue backlogs and what to do when all three layers fail.

- Load test includes simulated rate-limit responses and tool failures to verify recovery paths work end-to-end.

Frequently Asked Questions

How do I handle LLM failures in a production pipeline?

Classify the failure first. Transient errors (429, 503, timeout) should trigger an exponential backoff retry with jitter. Tenacity handles this with a single decorator. Logic or quality failures should route to a fallback chain. Confidence or risk failures should pause at a human checkpoint. Never apply the same recovery strategy to all error types.

When should I retry vs. escalate to a human in an agentic workflow?

Retry when the failure is transient and the step is idempotent: the same input will produce a correct output once the infrastructure recovers. Escalate to a human when all automated recovery paths are exhausted, when the action is irreversible (a delete or a send), or when the agent’s confidence score falls below a defined threshold for the risk level of the decision.

What is the difference between a fallback chain and a retry loop?

A retry loop repeats the same operation with the same input, expecting a transient condition to resolve. A fallback chain substitutes a different operation entirely, typically a simpler or cheaper alternative. Calling the same frontier model four times is a retry. Routing to a smaller local model after three failed attempts is a fallback. They address different failure modes and should not be conflated.

Which tools should I use to add human-in-the-loop to my AI agent?

LangGraph (31.2k stars) is the most widely adopted option. It provides interrupt() primitives, per-tool interrupt policies, and Postgres-backed checkpointing so workflows can pause indefinitely and resume exactly where they stopped. For pure retry logic, Tenacity is the standard Python library and supports async code.

How many retries should an agentic AI step attempt before failing?

Three is the correct default for LLM API calls. Attempt one hits the failure. Attempt two often succeeds during a brief rate-limit window. Attempt three covers a second flap. Beyond three, you are likely facing an outage, not a transient error, and continuing to retry burns latency budget and masks the real issue. Set stop_after_attempt(3) and transition to the fallback chain after the third failure.

An agentic pipeline is only as reliable as its least-defended step. Harden each step before you trust the whole chain.

Build for Failure, Design for Recovery

A resilient agentic AI pipeline is not built by adding a try/except block and hoping for the best. It is built by classifying failures precisely, layering three complementary recovery mechanisms, and backing them all with persistent state.

Three insights matter above all others. First: error classification precedes everything. Retry logic applied to a logic failure is worse than no retry at all, because it consumes budget and produces confidently wrong outputs. Build your taxonomy before you write your first decorator.

Second: the three layers, retry, fallback, and HITL, are not alternatives. They are a sequence. Most failures resolve at layer one. Persistent outages hit layer two. High-stakes or irreducible failures reach layer three. A pipeline that only has retry is brittle. A pipeline with all three is defensible in production.

Third: persistent checkpointing is the load-bearing infrastructure for the entire stack. Without it, a HITL pause that lasts more than the process lifetime loses all intermediate state. With it, agents can pause for hours, resume from any step, and maintain a full audit trail.

Gartner’s 2025 forecast predicts that over 40% of agentic AI projects will be cancelled by the end of 2027, citing escalating costs, unclear business value, and inadequate risk controls. The teams that ship to production and stay there will be the ones that treated reliability as a first-class architectural concern from the start.

The question worth carrying forward: which step in your current agentic pipeline has no recovery path, and what breaks downstream when it fails?