Model Context Protocol (MCP) is an open standard that defines how AI agents discover and invoke external tools, read data sources, and exchange structured context using a JSON-RPC client-server architecture. Introduced by Anthropic in November 2024 and donated to the Linux Foundation’s Agentic AI Foundation in December 2025, MCP replaces bespoke per-tool integrations with a single protocol that works across any compliant model or host.

Why Every AI Platform Team Is Standardising on MCP

MCP solves the MxN integration problem: instead of building one custom connector per model per tool, teams implement the protocol once on each side and gain universal interoperability. The math shifts from multiplicative to additive, and it compounds as tool counts grow.

Before MCP, connecting an AI agent to ten enterprise systems meant ten bespoke integrations, each with its own authentication logic, error handling, and versioning contract. Add a second AI model and the problem doubled. According to analysis from Bonjoy (2026), MCP reduces integration cost by 60-70% compared to that custom-connector approach.
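The arithmetic behind that shift can be sketched in a few lines. This is an illustrative count only: the function names are hypothetical, and it assumes one connector per model-tool pair in the bespoke case and one client or server implementation per side under MCP.

```python
def custom_connectors(models: int, tools: int) -> int:
    """Bespoke approach: one connector for every (model, tool) pair."""
    return models * tools


def mcp_implementations(models: int, tools: int) -> int:
    """MCP approach: one client per model host plus one server per tool."""
    return models + tools


# Compare the two counts as the estate grows.
for models, tools in [(1, 10), (2, 10), (5, 50)]:
    print(
        f"{models} models x {tools} tools: "
        f"{custom_connectors(models, tools)} custom connectors vs "
        f"{mcp_implementations(models, tools)} MCP implementations"
    )
```

With a single model the two counts are close; the gap opens as soon as a second model or host enters the picture.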

The urgency is real. Gartner (2026) predicts 40% of enterprise applications will embed task-specific AI agents by end of 2026, up from fewer than 5% in 2025. MCP is the interoperability layer that makes that scale possible.

By March 2026, the protocol reached 97 million monthly SDK downloads and over 10,000 public servers. OpenAI, Google DeepMind, Microsoft, and AWS have all adopted it. The protocol race is over. The implementation race has just begun.

“MCP transforms AI integration from an engineering bottleneck into a platform capability that scales linearly, not quadratically.”

How MCP Works: The Client-Server Architecture

An MCP deployment consists of three components: the host (the LLM application), the client (the protocol intermediary running inside the host), and the server (the tool or data source). Client and server exchange JSON-RPC messages over stdio or Streamable HTTP.

The host, such as Claude Desktop or a custom agent, runs the MCP client. That client negotiates capabilities with one or more MCP servers on startup. Each server advertises its tools (executable functions), resources (data endpoints), and prompts (reusable templates). The LLM then requests tool calls through the client, which routes them to the correct server and returns structured results.
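To make the message flow concrete, here is a rough sketch of the JSON-RPC envelopes a client might send for tool discovery and invocation. The method names tools/list and tools/call come from the MCP specification; the tool name query_tickets, its arguments, and the response text are made up for illustration.

```python
import json
from itertools import count
from typing import Optional

_ids = count(1)  # JSON-RPC requests carry an id so responses can be matched


def request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request envelope."""
    message = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


# 1. Ask a server which tools it advertises.
list_tools = request("tools/list")

# 2. Invoke one tool by name (hypothetical tool and arguments).
call_tool = request(
    "tools/call",
    {"name": "query_tickets", "arguments": {"status": "open"}},
)

# 3. Shape of a structured result the server might return (illustrative).
response = {
    "jsonrpc": "2.0",
    "id": call_tool["id"],
    "result": {
        "content": [{"type": "text", "text": "3 open tickets"}],
        "isError": False,
    },
}

print(json.dumps(call_tool, indent=2))
```

The client matches each response to its request by id, which is what lets one host multiplex calls across several servers.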

Transport matters in production. Local agents typically use stdio, which avoids network overhead entirely. Remote deployments use Streamable HTTP, which replaced the earlier SSE transport in the March 2025 spec update. JSON-RPC serialization adds roughly 10-15ms of latency compared to a direct API call, so caching and connection pooling at the gateway layer are important at scale.

Enterprise Use Cases: Where MCP Delivers Real ROI

Enterprise teams use MCP to wire AI agents into CRMs, code repositories, databases, and ticketing systems without rebuilding connectors each time the model or tool changes.

Software Development Workflows

The modelcontextprotocol/servers GitHub repository ships reference MCP servers for GitHub, Postgres, Slack, Google Drive, and Git. Teams at Block and Bloomberg have used these as the foundation for agents that can read open pull requests, query internal databases, and post summaries to Slack, all without custom glue code.

Regulated Industries and Compliance

Wing Venture Capital’s 2025 enterprise AI analysis identifies MCP as the missing identity and permissioning layer for multi-tool AI workflows. In financial services, agents now query Workday for HR data, Salesforce for customer records, and Jira for project status, with each call scoped to the user’s permissions and fully logged for audit.

Multi-Model Orchestration

In practice, teams building multi-agent pipelines often find that MCP’s model-neutrality is its most underrated feature. Switching a Claude-based agent to GPT-4o for a specific task becomes a configuration change, not a rebuild, because the MCP servers do not care which model is calling them.

“Organisations adopting MCP report 40-60% faster agent deployment times, and the economics only improve as the tool count grows.”

Code Snippet 1: Minimal MCP Server (Tool, Resource, and Prompt)

Source: modelcontextprotocol/python-sdk, examples/snippets/servers/fastmcp_quickstart.py

This 25-line server registers all three MCP primitives. The @mcp.tool() decorator auto-generates JSON Schema from Python type hints, so the client can discover and validate the tool without a separate spec file. Running with transport="streamable-http" exposes this server over HTTP, making it accessible to any MCP-compliant host, not just local stdio connections.
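The schema generation described above can be illustrated without the SDK. The following is a standard-library sketch of the idea only, not the SDK's implementation: input_schema and the type mapping are hypothetical helpers, and only a handful of primitive types are handled.

```python
import inspect
from typing import get_type_hints

# Minimal mapping from Python types to JSON Schema type names (assumption:
# a real implementation covers far more, including nested models).
_JSON_TYPES = {int: "integer", float: "number", str: "string", bool: "boolean"}


def input_schema(func) -> dict:
    """Derive a JSON Schema object for a function's parameters."""
    hints = get_type_hints(func)
    hints.pop("return", None)
    properties = {}
    required = []
    for name, param in inspect.signature(func).parameters.items():
        json_type = _JSON_TYPES.get(hints.get(name))
        # Unknown or missing annotations are left unconstrained.
        properties[name] = {"type": json_type} if json_type else {}
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return {"type": "object", "properties": properties, "required": required}


def add(a: int, b: int = 0) -> int:
    """Add two numbers."""
    return a + b


schema = input_schema(add)
print(schema)
```

Parameters without a default end up in required, which is how a client can reject an incomplete call before it ever reaches the tool.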

Production Architecture: The MCP Gateway Pattern

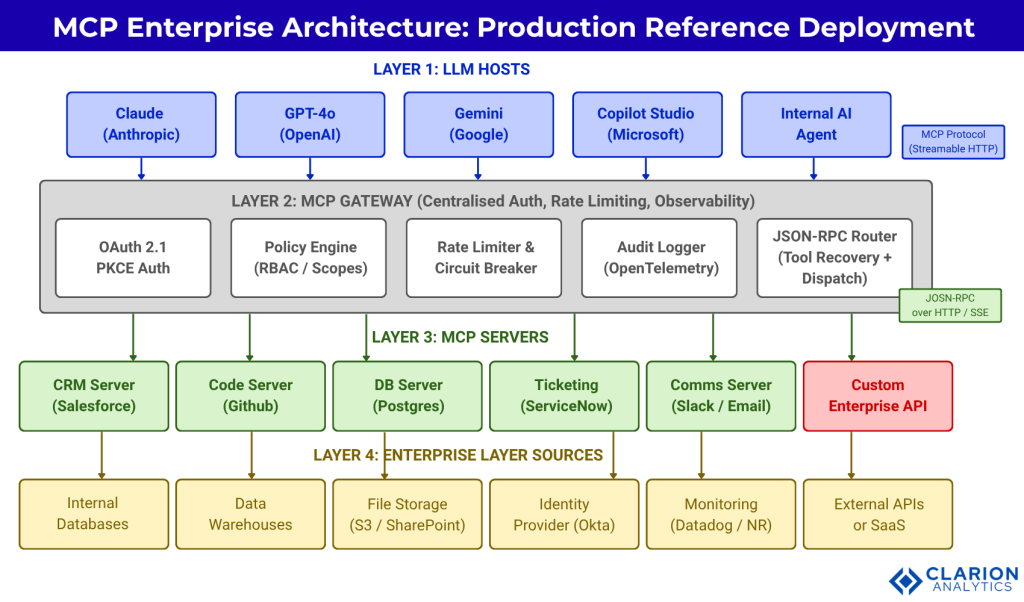

In production, teams centralise auth, rate limiting, and observability in a single MCP gateway rather than distributing these concerns across individual servers, a pattern that has also been formalised in research published on arXiv.

The Brett (2025) paper on MCP gateways formalises what engineering teams have discovered independently: putting a dedicated gateway between the LLM hosts and the MCP servers is the most maintainable production pattern. The gateway handles OAuth token introspection, enforces RBAC policies, applies rate limits per agent identity, and ships traces to OpenTelemetry once, for all servers.

Without a gateway, each MCP server must independently implement these concerns. That creates as many auth implementations as there are servers, each one a potential security gap and maintenance burden.
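A toy version of the gateway's decision path helps show why centralising these checks pays off. This is a minimal in-memory sketch under stated assumptions: the Gateway class, its field names, and the sliding 60-second window are all hypothetical, token validation is assumed to have happened upstream, and the audit list stands in for a real tracing backend.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set, Tuple


@dataclass
class Gateway:
    routes: Dict[str, Callable[[dict], dict]]   # tool name -> server handler
    required_scopes: Dict[str, Set[str]]        # tool name -> scopes needed
    limit_per_minute: int = 60
    audit_log: List[Tuple[str, str, str]] = field(default_factory=list)
    _calls: Dict[str, List[float]] = field(default_factory=dict, repr=False)

    def handle(self, agent_id: str, scopes: Set[str], tool: str, arguments: dict) -> dict:
        if tool not in self.routes:
            return self._finish(agent_id, tool, "unknown_tool")
        # Access control: every required scope must have been granted.
        if self.required_scopes.get(tool, set()) - scopes:
            return self._finish(agent_id, tool, "forbidden")
        # Rate limit per agent identity over a sliding 60-second window.
        now = time.monotonic()
        recent = [t for t in self._calls.get(agent_id, []) if now - t < 60.0]
        if len(recent) >= self.limit_per_minute:
            self._calls[agent_id] = recent
            return self._finish(agent_id, tool, "rate_limited")
        self._calls[agent_id] = recent + [now]
        result = self.routes[tool](arguments)
        return self._finish(agent_id, tool, "ok", result)

    def _finish(self, agent_id: str, tool: str, outcome: str, result=None) -> dict:
        # One audit record per request, whatever the outcome.
        self.audit_log.append((agent_id, tool, outcome))
        return {"result": result} if outcome == "ok" else {"error": outcome}


gateway = Gateway(
    routes={"read_ticket": lambda args: {"ticket": args["id"], "status": "open"}},
    required_scopes={"read_ticket": {"tickets:read"}},
)
print(gateway.handle("agent-a", {"tickets:read"}, "read_ticket", {"id": 7}))
print(gateway.handle("agent-b", set(), "read_ticket", {"id": 7}))
```

Every server behind the gateway inherits the same scope check, limiter, and audit trail, which is the maintenance argument in code form.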

Figure 1: LLM hosts (Layer 1) connect to all enterprise tools through a centralised MCP Gateway (Layer 2), which enforces OAuth 2.1 PKCE authentication, policy-based access control, rate limiting, and OpenTelemetry-based audit logging before routing JSON-RPC requests to individual MCP Servers (Layer 3). Each server exposes one or more enterprise data sources (Layer 4) via standardised tool, resource, and prompt primitives.

Security in Production MCP Deployments

The three highest-priority MCP security risks are prompt injection via malicious tool descriptions, token mis-redemption, and tool-squatting attacks that replace trusted servers with lookalikes. Hou et al. (2025) present the first systematic academic taxonomy of MCP threats, covering four attacker types across the full server lifecycle.

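The June 2025 MCP specification update made PKCE mandatory under OAuth 2.1, introduced Resource Indicators (RFC 8707) to ensure tokens are only valid for their intended server, and explicitly prohibited token passthrough. These changes directly address token mis-redemption and limit what a lookalike server can do with a captured token. They do not by themselves stop prompt injection through tool descriptions, which still requires schema review and human approval of tool calls.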
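For readers who have not implemented PKCE before, the S256 method is small enough to show in full. This sketch follows RFC 7636 (the challenge is the unpadded base64url encoding of the SHA-256 hash of the verifier); the function names are my own, and a production deployment would normally rely on an OAuth library rather than hand-rolled helpers.

```python
import base64
import hashlib
import secrets


def make_code_verifier() -> str:
    """Generate a high-entropy verifier (32 random bytes -> 43 URL-safe chars)."""
    return secrets.token_urlsafe(32)


def code_challenge_s256(verifier: str) -> str:
    """Compute the S256 challenge: BASE64URL(SHA256(verifier)) without padding."""
    digest = hashlib.sha256(verifier.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


def verifier_matches(verifier: str, challenge: str) -> bool:
    """Server-side check at token exchange, using a constant-time comparison."""
    return secrets.compare_digest(code_challenge_s256(verifier), challenge)


# Client: send the challenge with the authorization request, keep the verifier.
verifier = make_code_verifier()
challenge = code_challenge_s256(verifier)

# Authorization server: only the party holding the verifier can redeem the code.
print(verifier_matches(verifier, challenge))
```

An intercepted authorization code is useless without the verifier, which never leaves the client until the token exchange.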

An empirical study by Hasan et al. (2025/2026), analysing 1,899 open-source MCP servers, found that MCP servers exhibit the same code smell and bug patterns as traditional software. Auto-approval of tool calls was particularly dangerous: research found that tool poisoning attacks succeed 84.2% of the time when auto-approval is enabled.

“Security is not a layer you add to an MCP deployment after the fact; it is a protocol-level design decision made before the first server goes live.”

Code Snippet 2: OAuth-Secured MCP Server for Enterprise

Source: rb58853/simple-mcp-server, private_server/server.py

This snippet adds OAuth 2.1 token introspection and scope enforcement. The token_verifier checks that every inbound request carries a token issued specifically for this server (Resource Indicator validation from RFC 8707), blocking token mis-redemption attacks. The required_scopes field ensures agents only call tools within their permission boundary. For regulated enterprise environments, this is the minimum viable auth configuration.
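The checks described above can be sketched with the standard library alone. This is not the code from the referenced repository: verify_token is a hypothetical helper, the claim names (active, aud, scope) follow the OAuth token introspection response format in RFC 7662, and the resource URL and scope strings are invented for the example.

```python
from typing import Iterable, Mapping


def verify_token(claims: Mapping, resource: str, required_scopes: Iterable[str]) -> bool:
    """Accept a token only if it is active, issued for this server, and in scope."""
    if not claims.get("active", False):
        return False
    # "aud" may be a single string or a list of audiences.
    aud = claims.get("aud", [])
    audiences = [aud] if isinstance(aud, str) else list(aud)
    if resource not in audiences:
        return False  # token was issued for a different server
    # "scope" is a space-separated string in introspection responses.
    granted = set(claims.get("scope", "").split())
    return set(required_scopes) <= granted


# Hypothetical introspection response for a token minted for this server.
claims = {
    "active": True,
    "aud": "https://mcp.example.com/hr",
    "scope": "hr:read tickets:read",
}

print(verify_token(claims, "https://mcp.example.com/hr", ["hr:read"]))
print(verify_token(claims, "https://mcp.example.com/crm", ["hr:read"]))
```

The second call fails on audience alone, which is the mis-redemption case: a perfectly valid token presented to a server it was never issued for.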

Integration Patterns Compared

Three patterns dominate enterprise MCP architecture: direct server connections, centralised gateways, and federated multi-agent meshes, each with distinct tradeoffs in latency, control, and complexity.

| Pattern | Key Strength | Best Used When | Main Risk |
|---|---|---|---|
| Direct Server Connection | Lowest latency; simplest mental model; no gateway hop | Internal PoC / single-agent, low-risk tools | Auth/observability must be rebuilt per server |
| Centralised MCP Gateway | Single auth, audit, and rate-limiting enforcement point; easiest to govern | Production enterprise; regulated industries; multi-model deployments | Gateway becomes single point of failure if not HA |
| Federated Multi-Agent Mesh | Horizontal scalability; each sub-agent specialises; no central bottleneck | Complex orchestration; agent-to-agent delegation workflows (Q3 2026+ spec) | Trust propagation and scope creep between agents |
| Custom API Connectors (pre-MCP) | Full control; no protocol dependency | Legacy systems not yet MCP-compliant | Multiplicative maintenance burden (MxN problem) |

Table 1: MCP Integration Pattern Comparison. The gateway pattern is recommended for all production enterprise deployments.

Implementation Roadmap for Platform Engineers

Most teams move through four phases: a two-to-four week pilot with one tool, a department-wide expansion, an org-wide rollout with governance controls, and ongoing optimisation.

“The teams that succeed with MCP in production treat it as infrastructure, not a one-time integration project.”

Phase 1: Pilot (weeks 1-4)

Pick one low-risk internal tool, such as a read-only Postgres MCP server or a GitHub read-only server. Wire it to one agent. Measure latency, validate the JSON Schema tool discovery, and confirm your identity provider can issue tokens the server trusts. The official modelcontextprotocol/servers reference implementations are the fastest starting point.

Phase 2: Expansion (months 2-3)

Deploy the MCP Gateway. Centralise OAuth 2.1 token introspection, add rate limiting, and connect OpenTelemetry to your observability stack. Add two to five more MCP servers behind the gateway. Run the MCP Inspector tool to validate tool schemas before each deployment.
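A lightweight pre-deployment check can complement the Inspector in CI. The sketch below is an assumption-laden example, not an official validator: tool_definition_problems is a hypothetical helper, it checks only the name, description, and inputSchema fields of a tool definition, and requiring a description is a local policy choice rather than a protocol rule.

```python
from typing import List


def tool_definition_problems(tool: dict) -> List[str]:
    """Return a list of human-readable problems found in one tool definition."""
    problems = []
    name = tool.get("name")
    if not isinstance(name, str) or not name:
        problems.append("missing name")
    if not tool.get("description"):
        problems.append("missing description")  # local policy, see lead-in
    schema = tool.get("inputSchema")
    if not isinstance(schema, dict) or schema.get("type") != "object":
        problems.append("inputSchema must be a JSON Schema object")
    else:
        properties = schema.get("properties", {})
        for field_name in schema.get("required", []):
            if field_name not in properties:
                problems.append(f"required field {field_name!r} not in properties")
    return problems


# Hypothetical tool definition with a deliberate mistake in "required".
tool = {
    "name": "query_tickets",
    "description": "List tickets by status.",
    "inputSchema": {
        "type": "object",
        "properties": {"status": {"type": "string"}},
        "required": ["status", "assignee"],
    },
}

for problem in tool_definition_problems(tool):
    print(problem)
```

Failing the build on a non-empty list keeps malformed tool definitions from reaching the gateway in the first place.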

Phase 3: Enterprise Rollout (months 3-6)

Onboard enterprise identity (Okta, Azure AD) to the OAuth 2.1 flow. Enforce required_scopes per tool category. The PrefectHQ/fastmcp framework (25K stars, actively maintained) includes built-in SSO and RBAC controls, a private registry, and rollback support that simplifies this phase.

Phase 4: Optimisation (ongoing)

Monitor JSON-RPC latency at the gateway. Add caching for read-heavy resource endpoints. Review the MCP spec roadmap: agent-to-agent coordination lands in Q3 2026 and will require trust propagation policies before you enable it.
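Caching read-heavy resources does not need much machinery to start with. Here is a minimal time-to-live cache sketch; the TTLCache class and the resource URI are hypothetical, it is single-process and not thread-safe, and a real gateway would more likely use a shared cache with explicit invalidation.

```python
import time
from typing import Any, Callable, Dict, Tuple


class TTLCache:
    """Cache fetched values for a fixed number of seconds."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without sleeping
        self._entries: Dict[str, Tuple[float, Any]] = {}

    def get_or_fetch(self, key: str, fetch: Callable[[], Any]) -> Any:
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and now - entry[0] < self._ttl:
            return entry[1]  # still fresh: skip the round trip to the server
        value = fetch()
        self._entries[key] = (now, value)
        return value


cache = TTLCache(ttl_seconds=30.0)
fetch_count = 0


def read_resource() -> str:
    """Stand-in for a resource read routed to an MCP server."""
    global fetch_count
    fetch_count += 1
    return "org chart v12"


cache.get_or_fetch("hr://org-chart", read_resource)
cache.get_or_fetch("hr://org-chart", read_resource)
print(fetch_count)  # the second call is served from the cache
```

Keep the TTL short for anything permission-sensitive, since a cached result can outlive a revoked scope.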

Frequently Asked Questions About MCP in Enterprise

What is Model Context Protocol and why does it matter for enterprise AI?

Model Context Protocol is an open standard for connecting AI agents to external tools and data sources using a JSON-RPC client-server architecture. It matters for enterprise AI because it replaces bespoke per-tool connectors with a single protocol, reducing integration cost by 60-70% and making AI deployments portable across Claude, GPT, and Gemini without rebuilding integrations.

How does an MCP server differ from a REST API or OpenAPI spec?

A REST API is a general-purpose interface designed for human-built clients. An MCP server is specifically designed for LLM agents: it advertises capabilities dynamically at runtime, uses JSON-RPC for structured bidirectional communication, and supports the three AI-specific primitives of tools (executable actions), resources (data sources), and prompts (interaction templates) in a single protocol.

How do I secure an MCP server in a production enterprise environment?

The June 2025 MCP spec mandates OAuth 2.1 with PKCE and Resource Indicators (RFC 8707). Implement token introspection at the gateway layer to verify each token was issued specifically for your server. Use required_scopes to enforce least-privilege access. Disable auto-approval of tool calls. Never allow token passthrough from the host to backend services.

Can MCP work with multiple LLM providers at the same time?

Yes. This is MCP’s core value proposition. Anthropic, OpenAI, Google DeepMind, Microsoft, and AWS all support the protocol as of 2025-2026. An MCP server does not know or care which model is calling it. A multi-model agent architecture can route different tasks to different LLMs while all models share the same MCP server infrastructure.

What are the biggest risks of running MCP servers in production?

The top three risks, according to recent research: prompt injection via malicious tool descriptions that hijack agent behaviour, token mis-redemption attacks that reuse a valid token across servers it was not issued for, and tool-squatting attacks that insert a malicious server into the discovery path. Mitigate with the gateway pattern, mandatory PKCE, Resource Indicator validation, and tool schema verification before deployment.

Three Things Every Platform Team Should Do This Quarter

The strategic picture is clear. MCP has achieved in 18 months what most standards take a decade to accomplish: genuine cross-vendor adoption, vendor-neutral governance under the Linux Foundation, and a growing ecosystem of 10,000+ servers that platform teams can consume rather than build.

Three insights should drive your next 90 days. First, the MxN integration problem is solved at the protocol level. Your job now is to implement the gateway pattern that makes MCP production-safe at scale. Second, security is a first-class engineering concern from day one: mandatory PKCE, Resource Indicators, and required_scopes are not optional hardening; they are the minimum spec-compliant configuration. Third, multi-model AI strategies and MCP are mutually reinforcing: the more models you run, the more MCP’s vendor-neutral integration layer saves you.

The question worth sitting with: if every enterprise system your agents will ever need has an MCP server available today, what is actually stopping you from starting your pilot this week?