What is edge AI computer vision? Edge AI computer vision is the practice of running image and video inference models directly on industrial IoT hardware, without routing data to a cloud backend. It combines optimized neural networks, hardware-specific runtimes such as TensorRT or ONNX Runtime, and purpose-built silicon to deliver single-digit-millisecond inference latency and continuous operation in air-gapped or bandwidth-constrained factory environments.

Why the Factory Floor Cannot Wait for the Cloud

Cloud-routed inference adds 50-200ms of round-trip latency that breaks real-time requirements in manufacturing QA, robotics, and safety monitoring. On-device edge inference eliminates this dependency entirely.

Real-time vision inference at the industrial edge is not a performance preference. It is an operational requirement.

According to Deloitte’s 2024 Manufacturing Industry Outlook, 67% of manufacturers cite latency as the primary barrier to cloud-dependent AI in production environments. A press brake operating at 120 cycles per minute cannot pause while a JPEG travels to a data center and returns a defect classification.

IDC’s 2024 Worldwide Edge Spending Guide projects global edge computing spend will exceed $378 billion by 2028, with industrial IoT as the largest single segment. The edge AI computer vision opportunity is large, and the implementations are already live on factory floors worldwide.

“A defect caught at the press is 100x cheaper to fix than one caught at the end of the line.”

McKinsey research from 2024 shows real-time vision-based quality inspection reduces defect escape rates by up to 90% compared with manual methods. The business case is settled. The engineering challenge is execution.

Industrial Use Cases Driving Edge Vision Adoption

The leading industrial edge computer vision use cases are PCB and surface defect detection, robotic pick-and-place guidance, worker safety monitoring, and predictive maintenance via thermal imaging.

Defect detection on production lines is the highest-volume application. A camera mounted above a conveyor belt runs a quantized object detection model locally, flagging dimensional anomalies or surface scratches in under 10ms per frame. No image ever leaves the factory network.

Robotic bin-picking and guidance uses pose estimation models running on the robot’s onboard compute module. Latency here is mechanical: if the inference result arrives after the robot arm has already committed to a trajectory, the result is useless. Edge inference keeps the control loop tight.
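One way to enforce that rule in code is to timestamp each frame at capture and discard any pose that arrives after the arm's commit point. A minimal sketch, assuming a hypothetical `PoseResult` record and a per-cycle `commit_deadline_ms` budget; neither comes from a specific robot SDK:

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PoseResult:
    # Hypothetical record: grasp pose plus the monotonic capture time of its frame.
    frame_captured_s: float
    x_mm: float
    y_mm: float
    yaw_deg: float


def usable_pose(result: PoseResult, now_s: float, commit_deadline_ms: float) -> Optional[PoseResult]:
    """Return the pose only if it arrived before the arm commits to a trajectory."""
    age_ms = (now_s - result.frame_captured_s) * 1000.0
    # A stale pose describes where the part *was*; skipping the pick beats acting on it.
    return result if age_ms <= commit_deadline_ms else None
```

In a live loop, both `frame_captured_s` and `now_s` should come from the same clock, such as `time.monotonic()`, so wall-clock adjustments cannot corrupt the age check.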

Worker PPE and safety zone monitoring requires sustained 30-fps inference across multiple camera feeds simultaneously. A single Jetson AGX Orin can handle four to six such streams in parallel at FP16 precision.
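The stream count translates directly into a per-inference time budget. Assuming every stream is served by a single accelerator queue (a simplification; batching and concurrent CUDA streams change the picture), the arithmetic is:

```python
def per_frame_budget_ms(streams: int, fps: float) -> float:
    """Time available per inference if one accelerator serves every stream in turn."""
    total_inferences_per_s = streams * fps
    return 1000.0 / total_inferences_per_s


for n in (4, 6):
    print(f"{n} streams @ 30 fps -> {n * 30} inferences/s, "
          f"{per_frame_budget_ms(n, 30):.2f} ms each")
```

Four streams leave about 8.3 ms per inference and six leave about 5.6 ms, which is why reduced precision such as FP16 is part of the claim rather than an afterthought.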

System Architecture for Edge Vision Pipelines

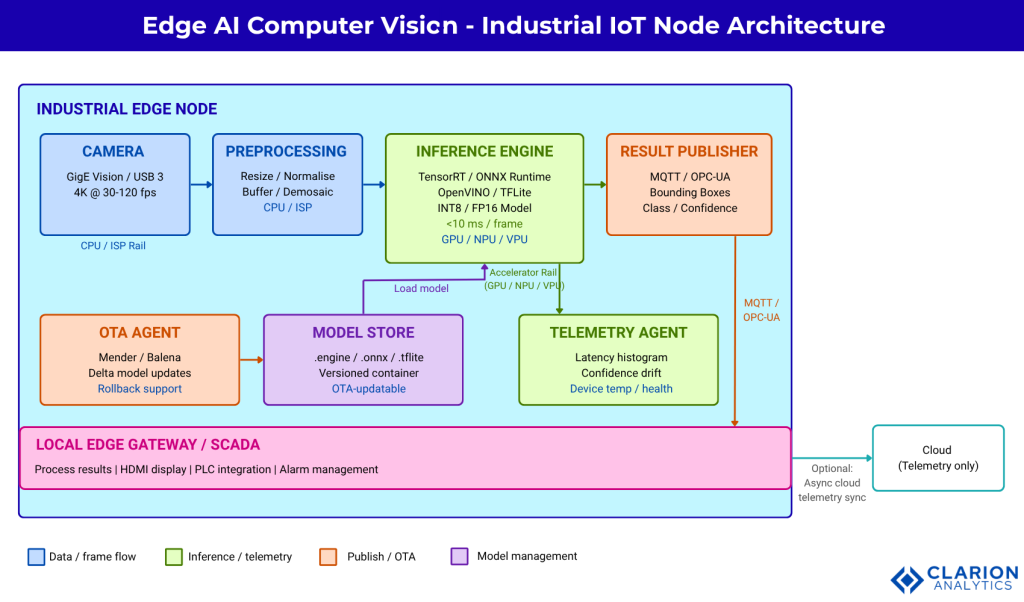

A production edge vision pipeline has four core layers: sensor/camera input, an on-device preprocessing stage, a quantized inference engine, and a lightweight result-publishing layer using MQTT or OPC-UA. An optional cloud link carries model telemetry only.

Figure 1: Edge AI Vision Pipeline Architecture. The diagram shows a five-component edge vision pipeline for an industrial IoT node. Camera frames enter a preprocessing buffer where resolution scaling and normalization run on the CPU or ISP. The quantized model runs on the dedicated accelerator (GPU, NPU, or VPU), producing bounding boxes or classification scores in under 10ms. Results publish over MQTT to a local SCADA system or edge gateway, with optional cloud sync for model telemetry only, keeping production data on-premises.
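The publishing layer stays lightweight because it ships detections, not pixels. A minimal sketch, assuming the paho-mqtt client library and a hypothetical topic scheme `factory/<line>/<camera>/detections`; the payload field names are illustrative, not a standard:

```python
import json
import time


def build_detection_message(line: str, camera: str, detections: list, latency_ms: float):
    """Return (topic, payload) for one frame's results; no image data is included."""
    topic = f"factory/{line}/{camera}/detections"  # hypothetical topic scheme
    payload = json.dumps({
        "ts": time.time(),
        "latency_ms": round(latency_ms, 2),
        # Each detection: class label, confidence, and [x1, y1, x2, y2] box in pixels.
        "detections": [
            {"label": d["label"], "conf": round(d["conf"], 3), "box": d["box"]}
            for d in detections
        ],
    })
    return topic, payload


def publish_to_local_broker(topic: str, payload: str, host: str = "localhost") -> None:
    # Imported lazily so the payload builder works without paho-mqtt installed.
    import paho.mqtt.publish as publish
    publish.single(topic, payload, qos=1, hostname=host)
```

The broker runs on the factory network, so a SCADA system or edge gateway can subscribe to these topics without any frame leaving the premises.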

“The edge inference stack is not a trimmed-down cloud stack. It is a different architecture built for determinism, not elasticity.”

In practice, teams building this architecture often underestimate the preprocessing step. Resizing a 4K GigE camera frame to 640×640, normalizing pixel values, and batching for the inference engine can consume 3-5ms on a CPU. Offloading preprocessing to the device ISP or using CUDA-accelerated preprocessing in OpenCV reclaims that budget.
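The resize is usually a letterbox: scale the frame to fit 640×640 while preserving aspect ratio, then pad. The geometry is cheap; the per-pixel work is what costs milliseconds and is the part worth moving to the ISP or to OpenCV's CUDA module. A minimal sketch of the geometry and the [0, 1] normalization, assuming a 3840×2160 (4K UHD) source frame; the actual pixel resize and padding would be done by `cv2.resize` or the ISP:

```python
def letterbox_params(src_w: int, src_h: int, dst: int = 640):
    """Scale factor, resized size, and (left, top) padding for an aspect-preserving fit."""
    scale = min(dst / src_w, dst / src_h)
    new_w, new_h = round(src_w * scale), round(src_h * scale)
    pad_left = (dst - new_w) // 2
    pad_top = (dst - new_h) // 2
    return scale, (new_w, new_h), (pad_left, pad_top)


def normalize(pixels):
    """Map 8-bit values to [0, 1] floats, as most detection models expect."""
    return [p / 255.0 for p in pixels]


def to_source_coords(x: float, y: float, scale: float, pad):
    """Undo the letterbox so a detection point maps back onto the original frame."""
    return (x - pad[0]) / scale, (y - pad[1]) / scale
```

For a 4K frame, `letterbox_params(3840, 2160)` gives a 640×360 image with 140 px of padding above and below, and `to_source_coords` maps boxes back to full-resolution pixel positions for dimensional checks.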

Choosing Your Runtime: TensorRT, ONNX Runtime, and OpenVINO

TensorRT delivers the highest throughput on NVIDIA Jetson hardware; ONNX Runtime provides the broadest hardware compatibility across ARM and x86 edge platforms; OpenVINO is the best choice for Intel Movidius and Core Ultra edge targets.

| Runtime | Key Strength | Best Used When |
|---|---|---|
| NVIDIA TensorRT | Highest FPS on NVIDIA GPUs; FP16/INT8 kernel fusion | You deploy exclusively on Jetson Orin or T4 edge servers |
| ONNX Runtime | Hardware-agnostic; 30+ execution providers | Your fleet spans ARM, Intel, and NVIDIA devices |
| Intel OpenVINO | Optimized for Intel Movidius VPU and Core Ultra NPU | You deploy on Intel-based edge gateways or NUCs |
| TFLite / LiteRT | Smallest binary footprint; MCU-friendly | You target microcontrollers or constrained ARM Cortex-M |

The NVIDIA/TensorRT GitHub repository provides official OSS samples and plugins and is the authoritative reference for kernel-level optimization on Jetson platforms. For cross-platform fleets, the microsoft/onnxruntime repository supports every major edge execution provider and is actively maintained with regular releases.
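With ONNX Runtime, serving a mixed fleet largely comes down to the ordered provider list passed to `InferenceSession`. A minimal sketch, assuming a preference order of TensorRT, then CUDA, then OpenVINO, then CPU; the ordering is a judgment call, and which providers exist on a device depends on the onnxruntime package installed there:

```python
PREFERRED = [
    "TensorrtExecutionProvider",
    "CUDAExecutionProvider",
    "OpenVINOExecutionProvider",
    "CPUExecutionProvider",
]


def choose_providers(available, preferred=PREFERRED):
    """Keep the preferred order, drop what this device lacks, always keep CPU as fallback."""
    chosen = [p for p in preferred if p in available]
    if "CPUExecutionProvider" not in chosen:
        chosen.append("CPUExecutionProvider")
    return chosen


def open_session(model_path: str):
    # Imported lazily so the selection logic can be tested without onnxruntime.
    import onnxruntime as ort
    providers = choose_providers(ort.get_available_providers())
    return ort.InferenceSession(model_path, providers=providers)
```

The same `.onnx` file then runs on a Jetson (TensorRT or CUDA), an Intel gateway (OpenVINO), or a plain ARM box (CPU), with the fallback decided per device at startup.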

The EdgeYOLO architecture (Xu et al., 2023, arXiv) achieves 34.6% mAP at 256 frames per second on a Jetson AGX Xavier, outperforming YOLOv5s by 4.5% accuracy with 20% fewer parameters, making it a strong baseline for defect detection tasks.

Model Optimization: Quantization, Pruning, and Architecture Selection

INT8 post-training quantization typically reduces model size by 60-75% and increases throughput by 2-4x with under 3% accuracy degradation on industrial classification tasks when a representative calibration dataset is used.
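The size figure follows from storage: an FP32 weight takes 4 bytes and an INT8 weight takes 1, so weight storage shrinks by up to 75%; the lower end of the range is consistent with some layers staying at higher precision. The accuracy cost comes from rounding each value onto 255 levels. A minimal sketch of symmetric per-tensor INT8 quantization, the simplest scheme; TensorRT and ONNX Runtime add per-channel scales and calibrated activation ranges on top of this idea:

```python
def quantize_int8(values):
    """Symmetric per-tensor INT8: q = round(x / scale), clamped to [-127, 127]."""
    max_abs = max(abs(v) for v in values) or 1.0  # avoid a zero scale for all-zero tensors
    scale = max_abs / 127.0
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


def dequantize(q, scale):
    return [qi * scale for qi in q]


weights = [0.82, -0.41, 0.003, -1.27, 0.5]
q, scale = quantize_int8(weights)
# Because scale comes from max_abs, nothing clips; the worst-case error is scale / 2.
max_err = max(abs(w - d) for w, d in zip(weights, dequantize(q, scale)))
```

Note how the small weight 0.003 rounds to zero: values far below the tensor's maximum lose the most relative precision, which is exactly what a representative calibration set is meant to manage for activations.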

Research from Li et al. (2024, IEEE IoT Journal) demonstrates a structured pruning and INT8 quantization pipeline that retains 97.3% of baseline accuracy while reducing model size by 62%. That result is achievable in production with careful calibration dataset curation.

Code Snippet 1: Exporting YOLOv8 to TensorRT with INT8 Quantization

Source: ultralytics/ultralytics – ultralytics/engine/exporter.py
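A minimal sketch of such an export, assuming a recent `ultralytics` release in which `YOLO.export()` accepts `format="engine"`, `int8=True`, and a `data` dataset YAML that supplies calibration images. The weights file and `coco8.yaml` are placeholders; on a production line, point `data` at a YAML describing your own representative images:

```python
def export_int8_engine(weights: str = "yolov8n.pt", calib_data: str = "coco8.yaml", imgsz: int = 640) -> str:
    """Build a TensorRT INT8 .engine on the current device and return its path."""
    # Imported inside the function so this file loads on machines without ultralytics.
    from ultralytics import YOLO

    model = YOLO(weights)
    # format="engine" drives the TensorRT builder; int8=True enables INT8 calibration
    # using the images referenced by calib_data.
    return model.export(format="engine", int8=True, data=calib_data, imgsz=imgsz)
```

Run it on the Jetson itself, for example `export_int8_engine("best.pt", "line3_calib.yaml")`, where both file names are hypothetical.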

This three-line export call wraps the full TensorRT builder API: it fuses layers, runs INT8 calibration against the supplied dataset, and serializes a hardware-specific .engine file. The resulting engine is not portable across GPU architectures or TensorRT versions, so build it on the target device class with the TensorRT version it will run.

“Quantization is not a last resort. It is the first optimization decision every edge vision team should make.”

Code Snippet 2: Static INT8 Quantization with ONNX Runtime

Source: microsoft/onnxruntime – onnxruntime/python/tools/quantization/
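A minimal sketch of that flow, assuming the `onnxruntime.quantization` module's `quantize_static` and `CalibrationDataReader`. The input tensor name `images` and the list of preprocessed NCHW float32 NumPy batches are assumptions; read the real input name from your model, for example via `InferenceSession(...).get_inputs()[0].name`:

```python
def quantize_static_int8(fp32_path: str, int8_path: str, calib_batches, input_name: str = "images") -> str:
    """Static INT8 PTQ: activation ranges come from running calib_batches through the model."""
    # Imported lazily so this module loads without onnxruntime installed.
    from onnxruntime.quantization import (
        CalibrationDataReader, QuantFormat, QuantType, quantize_static,
    )

    class BatchReader(CalibrationDataReader):
        """Feeds one preprocessed production batch per call; None ends calibration."""

        def __init__(self, batches):
            self._batches = iter(batches)

        def get_next(self):
            batch = next(self._batches, None)
            return None if batch is None else {input_name: batch}

    quantize_static(
        fp32_path,
        int8_path,
        BatchReader(calib_batches),
        quant_format=QuantFormat.QDQ,  # QDQ is the format hardware EPs such as TensorRT expect
        activation_type=QuantType.QInt8,
        weight_type=QuantType.QInt8,
        per_channel=True,  # per-channel weight scales often help convolutional models
    )
    return int8_path
```

The calibration batches must go through exactly the same preprocessing as live frames; otherwise the computed ranges describe data the model will never see.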

This snippet runs static INT8 post-training quantization on any ONNX model, independent of the target hardware. The CalibrationDataReader feeds representative production images through the model so the quantizer can choose scale factors for each tensor. The quantized model then runs on ARM, Intel, and NVIDIA targets without modification.

Canziani et al. (arXiv, 2023 update) confirm that MobileNetV3 and EfficientNet-Lite families sit on the Pareto front for accuracy per watt in the 5-15W power envelope common to industrial edge nodes.

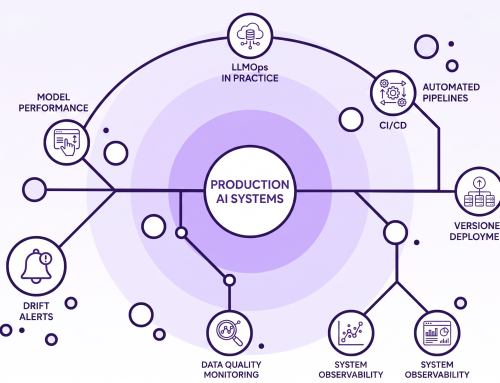

Deployment, Monitoring, and Model Updates at Scale

Production edge vision deployments require an OTA update mechanism, a lightweight telemetry agent for inference latency and confidence score drift, and a rollback capability, all operable without remote SSH access to each device.

“Shipping a model to one Jetson is engineering. Shipping to 500 Jetsons reliably is operations.”

Teams building this typically find that model drift is the silent killer of production accuracy. A model trained on summer lighting conditions degrades when the factory shifts to winter lighting in December. Monitoring average confidence scores per camera zone, not just aggregate accuracy, surfaces this drift weeks before it causes line stoppages.
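A minimal sketch of per-zone confidence monitoring, assuming each inference reports a camera-zone ID and its top detection confidence. The window size and the 0.10 drop threshold are illustrative placeholders, not tuned values:

```python
from collections import defaultdict, deque
from statistics import fmean


class ZoneConfidenceMonitor:
    """Flags zones whose rolling mean confidence falls well below their baseline."""

    def __init__(self, baselines: dict, window: int = 500, max_drop: float = 0.10):
        self.baselines = baselines  # zone -> mean confidence measured at commissioning
        self.max_drop = max_drop    # illustrative threshold
        self.windows = defaultdict(lambda: deque(maxlen=window))

    def record(self, zone: str, confidence: float) -> None:
        self.windows[zone].append(confidence)

    def drifting_zones(self) -> list:
        flagged = []
        for zone, scores in self.windows.items():
            baseline = self.baselines.get(zone)
            if baseline is None or len(scores) < scores.maxlen:
                continue  # no reference yet, or window not full
            if baseline - fmean(scores) > self.max_drop:
                flagged.append(zone)
        return sorted(flagged)
```

Keeping one window per zone matters because a lighting change at a single station can hide inside an otherwise healthy line-wide average.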

A practical MLOps stack for industrial edge vision includes three components. First, a container registry (Harbor or AWS ECR) stores versioned model containers. Second, an OTA agent (Mender, Balena, or NVIDIA Fleet Command) handles delta updates to the inference container on each node. Third, a lightweight MQTT telemetry agent ships inference latency, confidence score histograms, and device temperature back to a central dashboard without exposing raw camera frames.
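The telemetry agent's job is to compress a window of inferences into a few numbers. A minimal sketch of one such summary, assuming ten equal-width confidence bins and nearest-rank percentiles; the field names are hypothetical, and the JSON string would be published over MQTT in place of any image data:

```python
import json
import math


def percentile(sorted_vals, p):
    """Nearest-rank percentile on a pre-sorted, non-empty list (p in 0-100)."""
    k = max(1, math.ceil(p / 100 * len(sorted_vals)))
    return sorted_vals[k - 1]


def telemetry_summary(device_id, latencies_ms, confidences, temp_c, bins=10):
    """Summarize one non-empty window of inferences; hypothetical field names."""
    lat = sorted(latencies_ms)
    hist = [0] * bins
    for c in confidences:
        # Confidence 1.0 falls into the top bin rather than overflowing.
        hist[min(int(c * bins), bins - 1)] += 1
    return json.dumps({
        "device": device_id,
        "latency_p50_ms": percentile(lat, 50),
        "latency_p95_ms": percentile(lat, 95),
        "confidence_hist": hist,
        "temp_c": temp_c,
    })
```

A histogram rather than a mean lets the dashboard see a shift toward mid-range confidences, which often precedes a visible accuracy drop.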

Accenture’s Industry X research from 2023 found industrial AI deployments with on-device inference achieve 3-5x lower total cost of ownership over five years compared with cloud-routed architectures. A robust edge MLOps pipeline is a major contributor to that TCO advantage.

Frequently Asked Questions

What hardware is best for edge AI computer vision in industrial settings?

NVIDIA Jetson Orin NX and AGX Orin lead for GPU-accelerated workloads requiring 30+ FPS across multiple streams. Intel NUC with Core Ultra NPU suits lower-power, single-stream applications. Hailo-8 and Hailo-8L offer the best performance-per-watt for dedicated inference accelerator cards in existing x86 industrial PCs. Hardware choice depends on stream count, model complexity, and available board power.

How do I convert a PyTorch model to run on a Jetson edge device?

Export the PyTorch model to ONNX first using torch.onnx.export(). Then build a TensorRT .engine file, either from the ONNX model with the trtexec CLI tool or, for Ultralytics models, directly with the Ultralytics export API (format="engine"), which performs the ONNX step internally. Build the engine file on the target Jetson device so TensorRT selects kernels for that specific GPU architecture. The entire pipeline typically takes under 30 minutes for a YOLOv8-scale model.
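The two steps can be sketched as follows, assuming a vision model with a single 1×3×640×640 input, ONNX opset 17, and `trtexec` on the device's PATH (it ships with TensorRT, typically under `/usr/src/tensorrt/bin` on JetPack). File names and tensor names are placeholders:

```python
import subprocess


def export_onnx(model, onnx_path: str = "model.onnx", imgsz: int = 640) -> str:
    """Step 1 (any machine): trace the PyTorch model to ONNX."""
    import torch  # imported lazily so the command builder below is usable on its own

    model.eval()
    dummy = torch.zeros(1, 3, imgsz, imgsz)  # fixed-shape example input for tracing
    torch.onnx.export(model, dummy, onnx_path, opset_version=17,
                      input_names=["images"], output_names=["output"])
    return onnx_path


def trtexec_cmd(onnx_path: str, engine_path: str, fp16: bool = True) -> list:
    """Step 2 (on the target Jetson): arguments for the trtexec engine build."""
    cmd = ["trtexec", f"--onnx={onnx_path}", f"--saveEngine={engine_path}"]
    if fp16:
        cmd.append("--fp16")
    return cmd


def build_engine(onnx_path: str, engine_path: str = "model.engine") -> None:
    subprocess.run(trtexec_cmd(onnx_path, engine_path), check=True)
```

Splitting the steps this way lets the ONNX export run on a workstation while the engine build, which must match the device's GPU and TensorRT version, runs on the Jetson.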

What is the difference between TensorRT and ONNX Runtime for edge inference?

TensorRT fuses layers, selects CUDA kernels, and builds a hardware-locked binary optimized for a specific NVIDIA GPU. It delivers the highest possible throughput on NVIDIA targets but is not portable. ONNX Runtime uses swappable execution providers (CUDA, TensorRT, OpenVINO, CoreML) to run the same model file across different hardware. Choose TensorRT for maximum performance on homogeneous NVIDIA fleets. Choose ONNX Runtime for heterogeneous industrial device pools.

How much accuracy do I lose when quantizing a computer vision model to INT8?

With a well-curated calibration dataset of 200-500 representative images, INT8 post-training quantization typically costs 1-3% mAP or less on standard detection benchmarks. Li et al. (2024) demonstrated 97.3% accuracy retention with 62% model size reduction using structured pruning combined with INT8 quantization. Accuracy loss above 5% usually signals a poor calibration set rather than a fundamental quantization limit.
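One way to keep a calibration set representative is to sample it evenly across the conditions the line actually sees. A minimal sketch, assuming each candidate image is tagged with a condition key such as camera zone or shift; the tagging scheme and the 300-image target are hypothetical:

```python
import random
from collections import defaultdict


def stratified_calibration_set(images, target=300, seed=0):
    """Pick at most `target` images, spread evenly over condition keys.

    images: non-empty iterable of (path, condition) pairs,
    e.g. ("cam2/0001.png", "zone2-night"); the tags are hypothetical.
    """
    by_condition = defaultdict(list)
    for path, condition in images:
        by_condition[condition].append(path)

    rng = random.Random(seed)  # fixed seed so the calibration set is reproducible
    per_condition = max(1, target // len(by_condition))
    chosen = []
    for condition in sorted(by_condition):
        paths = by_condition[condition]
        chosen.extend(rng.sample(paths, min(per_condition, len(paths))))
    return chosen
```

A rare condition, such as a night shift with only 50 images, is taken in full instead of being drowned out by thousands of daytime frames.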

Can I run edge inference without an internet connection in a factory?

Yes, this is a primary design goal of edge AI computer vision deployments. TensorRT engine files and ONNX Runtime models run entirely on-device with no network dependency during inference. OPC-UA and MQTT result publishing operate on local factory networks. Internet connectivity is only required for initial model deployment and optional telemetry sync, both of which can be batched during scheduled maintenance windows.
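Because the uplink may be absent for days, telemetry is typically buffered on the device and flushed in bulk when a maintenance window opens. A minimal sketch of a bounded store-and-forward buffer, assuming a hypothetical `send_batch` callable, supplied by whatever uplink the site allows, that returns True on success; when the buffer is full, the oldest records are dropped first:

```python
from collections import deque
from itertools import islice


class TelemetryBuffer:
    """Bounded on-device queue; inference never waits on the network."""

    def __init__(self, capacity: int = 10_000):
        self._queue = deque(maxlen=capacity)  # oldest entries evicted when full

    def add(self, record: dict) -> None:
        self._queue.append(record)

    def flush(self, send_batch, batch_size: int = 500) -> int:
        """Send in batches via the hypothetical send_batch; keep the rest if a send fails."""
        sent = 0
        while self._queue:
            batch = list(islice(self._queue, batch_size))
            if not send_batch(batch):
                break  # uplink dropped; retry in the next window
            for _ in batch:
                self._queue.popleft()
            sent += len(batch)
        return sent

    def __len__(self) -> int:
        return len(self._queue)
```

Records are removed only after a successful send, so a link that drops mid-flush loses nothing that was still queued.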

Building for the Edge Means Building for Reality

Three insights define successful industrial edge vision deployments. First, model optimization is not optional: INT8 quantization and architecture selection determine whether a design fits the hardware budget. Second, runtime choice must match fleet topology: TensorRT for NVIDIA-only fleets, ONNX Runtime for mixed hardware. Third, MLOps at the edge is a discipline of its own: OTA updates, confidence drift monitoring, and rollback capability are non-negotiable in production.

The engineers who succeed at this work treat the edge node not as a small cloud server but as a deterministic embedded system with its own operational contract.

The question worth sitting with: as edge AI computer vision matures from pilot to fleet-scale production, which layer of your stack will break first, the model, the runtime, or the operations pipeline?