Data augmentation for computer vision is the practice of programmatically expanding a training dataset by applying label-preserving transformations to existing images, including geometric distortions, colour-space shifts, and synthetic generation via diffusion models. Its twin purpose is to improve generalisation by exposing a model to greater visual variety, and to correct dataset imbalances that encode demographic or contextual bias into the model’s predictions. When applied correctly, augmentation is the single highest-leverage intervention available to a team before the first training epoch begins.

Why Most CV Models Fail in the Real World

The gap between test-set performance and real-world performance in computer vision is almost always a data distribution problem, not a model problem.

The global AI vision market reached USD 15.85 billion in 2024 and is projected to surpass USD 108 billion by 2033 at a 24.1% CAGR, driven by demand for automation, deep learning, and edge-computing deployments. Yet despite this investment, production failure remains stubbornly common. A model scoring 97% on a held-out test set can degrade badly the moment it encounters an ethnic demographic, lighting condition, or camera angle it rarely saw during training. The test set, drawn from the same distribution as the training data, masked the problem entirely.

Training data, and how it is augmented, sits at the centre of almost every production failure post-mortem. According to McKinsey (May 2024), 70% of high-performing AI organisations still report significant difficulty integrating data into models effectively. The data problem, not the algorithm problem, is what keeps CTOs up at night.

Researchers found that free image databases used to train skin-cancer detection AI contained very few images of people with darker skin tones. The downstream consequence was models that performed poorly for the populations who needed them most. This is not an edge case. It is a pattern repeated across facial recognition, hiring tools, autonomous navigation, and medical diagnostics.

“The best augmentation strategy is not the one with the most transforms; it is the one designed around the gaps in your dataset.”

Understanding the Augmentation-Bias Paradox

Standard augmentation multiplies what is already in your dataset: if the source data is skewed, every flip and crop makes the skew worse.

This is the augmentation-bias paradox, and it catches most teams off-guard. Bias propagation is a known limitation: if the original dataset is biased, augmentation replicates and reinforces that bias. Label integrity risk is a related problem, as certain transformations may invalidate the label entirely.
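The arithmetic behind the paradox can be sketched in a few lines. The group names and counts below are hypothetical, and the sketch assumes every image receives the same number of augmented copies: the minority share stays exactly where it was, while the absolute gap between groups grows with every copy.

```python
def augment_uniformly(counts, copies_per_image):
    """Class counts after adding `copies_per_image` augmented copies of every image."""
    return {label: n * (1 + copies_per_image) for label, n in counts.items()}


def shares(counts):
    """Fraction of the dataset that each class occupies."""
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()}


# Hypothetical skewed dataset: 900 images of one group, 100 of another.
raw = {"group_a": 900, "group_b": 100}
augmented = augment_uniformly(raw, copies_per_image=4)

raw_gap = raw["group_a"] - raw["group_b"]              # 800 images
aug_gap = augmented["group_a"] - augmented["group_b"]  # 4000 images

print(shares(raw)["group_b"], shares(augmented)["group_b"])
print(raw_gap, aug_gap)
```

Five times the data, the same 10% minority share: uniform augmentation alone does not rebalance anything.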

A 2024 study by Angelakis et al. examined class-specific bias introduced by standard augmentation operations like random cropping. The researchers found that while residual architectures such as ResNet exhibited consistent DA-induced bias across datasets, Vision Transformers showed meaningfully greater robustness. Architecture choice, not just data choice, is a bias variable that most teams overlook.

Bias in a CV model originates from three distinct sources, each requiring a different fix. Label-collection bias emerges when annotators apply inconsistent standards across demographic groups. Sampling bias occurs when one subgroup, scenario, or environment is systematically under-represented in the raw data. Augmentation-induced bias is created by the pipeline itself when transforms distort statistical relationships between classes.

According to a 2025 comprehensive review in Artificial Intelligence Review (Springer), there is a real danger of producing biased synthetic data that further biases downstream models, creating a vicious cycle. Breaking that cycle requires intentional, audited augmentation design, not random transform stacking.

Core Augmentation Techniques: From Geometry to Generative AI

The most robust CV pipelines layer three augmentation tiers: geometric transforms for invariance, photometric transforms for lighting variation, and diffusion-model synthesis for demographic and scenario coverage gaps. Each tier serves a distinct purpose.

Tier 1: Geometric Transforms

Flipping, rotation, scaling, and affine shear teach the model that object identity does not depend on orientation or position. These operations are cheap, well-understood, and appropriate for almost every CV task. One critical constraint is label integrity. Rotating a digit “6” by 180 degrees produces a “9”, and the same rotation applied to a chest X-ray changes its clinical interpretation. Always confirm that a transform preserves label semantics for your specific domain before including it in the pipeline.
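One way to make the label-integrity check explicit is a per-task allowlist, so that a transform can only run once someone has reviewed it for that domain. The sketch below is illustrative only: the task names, the `LABEL_SAFE` table, and the `augment` helper are hypothetical, and images are plain row-major lists rather than arrays.

```python
def hflip(image):
    """Mirror a row-major 2D image left to right."""
    return [row[::-1] for row in image]


def rot180(image):
    """Rotate a row-major 2D image by 180 degrees."""
    return [row[::-1] for row in image[::-1]]


# Hypothetical allowlist: only transforms reviewed as label-preserving per task.
LABEL_SAFE = {
    "street_objects": {"hflip": hflip},  # a mirrored car is still a car
    "handwritten_digits": {},            # a rotated 6 reads as a 9, so nothing is allowed yet
}


def augment(image, task, transform_name):
    """Apply a transform only if it is on the allowlist for this task."""
    allowed = LABEL_SAFE.get(task, {})
    if transform_name not in allowed:
        raise ValueError(f"{transform_name!r} is not label-safe for task {task!r}")
    return allowed[transform_name](image)


tiny = [[1, 2],
        [3, 4]]
print(augment(tiny, "street_objects", "hflip"))
```

Failing loudly on an unreviewed transform is a design choice: a silent skip would hide the fact that the pipeline is doing less than its configuration suggests.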

Tier 2: Photometric Transforms

Brightness jitter, contrast adjustment, Gaussian noise, and colour-space perturbations teach the model invariance to lighting and sensor variation. These transforms are the primary tool for closing the gap between controlled training environments and messy production deployments: warehouse floors, outdoor surveillance cameras, and mobile devices with inconsistent white balance.
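A minimal sketch of brightness and contrast jitter on an 8-bit greyscale image, assuming a simple linear model (scale around mid-grey, then shift). The function names and default ranges are illustrative, not taken from any particular library.

```python
import random


def adjust_brightness_contrast(image, brightness, contrast):
    """Apply out = contrast * (pixel - 128) + 128 + brightness, clamped to [0, 255]."""
    def clamp(value):
        return max(0, min(255, int(round(value))))

    return [
        [clamp(contrast * (pixel - 128) + 128 + brightness) for pixel in row]
        for row in image
    ]


def random_photometric(image, rng, max_brightness=30, contrast_range=(0.8, 1.2)):
    """Sample one brightness shift and one contrast factor, then apply both."""
    brightness = rng.uniform(-max_brightness, max_brightness)
    contrast = rng.uniform(*contrast_range)
    return adjust_brightness_contrast(image, brightness, contrast)


image = [[0, 128, 255],
         [100, 128, 200]]
rng = random.Random(0)  # seeded so a training run can be reproduced
jittered = random_photometric(image, rng)
print(jittered)
```

Clamping matters: without it, a bright pixel pushed past 255 would wrap or overflow once the image is stored back as 8-bit data.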

Tier 3: Generative and Synthetic Augmentation

Diffusion models have emerged as a powerful tool for image data augmentation, capable of generating realistic and diverse images by learning the underlying data distribution. Conditional diffusion models can generate counterfactual images that balance the distribution of sensitive attributes such as gender and skin tone across the dataset, while preserving all other image properties unchanged. This is qualitatively different from oversampling; you are generating genuinely novel variations that expand the distribution.
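Before generating anything, it helps to know how many synthetic images each group actually needs. The sketch below assumes the simplest balancing target, bringing every group up to the size of the largest one; the `synthesis_budget` helper and the skin-tone bucket names are hypothetical.

```python
def synthesis_budget(group_counts, target=None):
    """Number of synthetic images to request per group to reach `target`.

    If no target is given, every group is raised to the size of the largest group.
    Groups already at or above the target get a budget of zero.
    """
    if target is None:
        target = max(group_counts.values())
    return {group: max(0, target - count) for group, count in group_counts.items()}


# Hypothetical audit result: image counts per skin-tone bucket.
counts = {"tone_1_2": 700, "tone_3_4": 250, "tone_5_6": 50}
budget = synthesis_budget(counts)
print(budget)
```

The budget is only a starting point: generated images still need the same label and quality review as real ones before they enter training.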

USC researchers demonstrated that quality-diversity algorithms combined with generative models produced a balanced dataset of roughly 50,000 images in 17 hours, approximately 20 times more efficiently than traditional rejection-sampling approaches. That puts synthetic diversity augmentation within reach of teams without massive data budgets.

According to Gartner (2025), synthetic data now sits adjacent to foundation models on the maturity curve and is positioned as essential infrastructure for bias correction and model training. It is no longer experimental.

“A model that passes your test suite but fails on a demographic it rarely saw in training is not a test problem; it is a data problem.”

Pipeline Architecture: From Raw Data to Fair Deployment

A production-ready CV pipeline runs a bias audit before augmentation, not after training; the ordering determines whether bias is corrected or amplified.

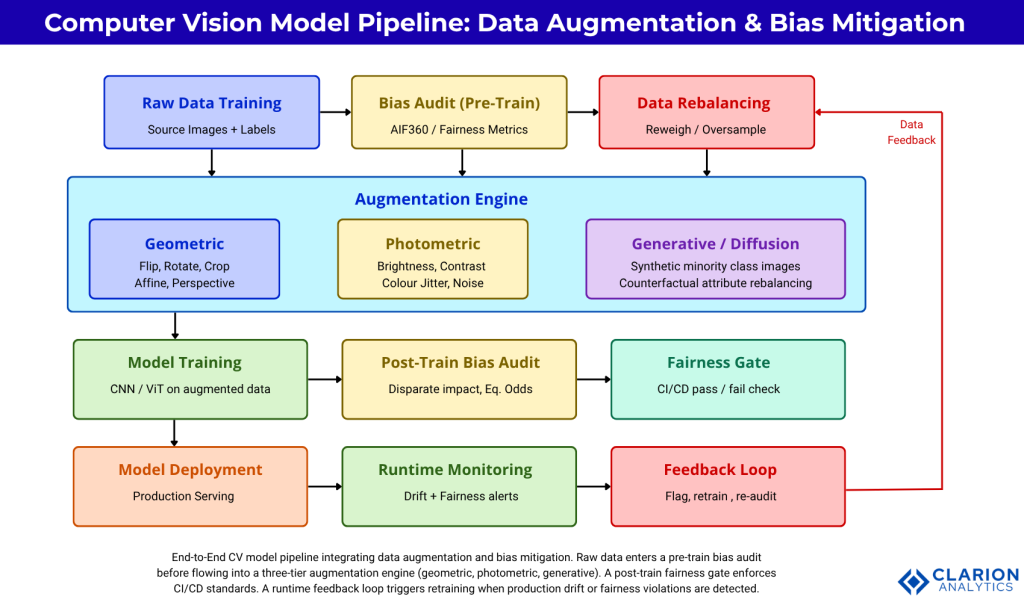

Figure 1. End-to-end CV model pipeline integrating data augmentation and bias mitigation. Raw data enters a pre-train bias audit before flowing into a three-tier augmentation engine (geometric, photometric, generative). A post-train fairness gate enforces CI/CD standards. A runtime feedback loop triggers retraining when production drift or fairness violations are detected.
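The post-train fairness gate in Figure 1 can be as small as one function that a CI job calls after evaluation. This is a sketch under stated assumptions: the metric (per-group recall), the thresholds, and the `fairness_gate` name are all hypothetical and would be set by your own deployment requirements.

```python
def fairness_gate(per_group_recall, max_gap=0.05, min_recall=0.80):
    """Return a list of human-readable violations; an empty list means the gate passes."""
    best = max(per_group_recall.values())
    failures = []
    for group, recall in sorted(per_group_recall.items()):
        if recall < min_recall:
            failures.append(f"{group}: recall {recall:.2f} is below the floor {min_recall:.2f}")
        elif best - recall > max_gap:
            failures.append(f"{group}: recall {recall:.2f} trails the best group by more than {max_gap:.2f}")
    return failures


# Hypothetical evaluation results for three subgroups.
results = {"group_a": 0.93, "group_b": 0.91, "group_c": 0.78}
violations = fairness_gate(results)
for line in violations:
    print("FAIRNESS GATE:", line)
# In a CI job, a non-empty list would typically end the run with a non-zero exit code.
```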

Diagnosing and Correcting Bias in Your Training Data

Bias in CV models originates from three sources: label-collection bias, sampling bias, and augmentation-induced class-accuracy skew, each requiring a different technical fix.

Start with dataset-level auditing before writing a single line of training code. Compute class distribution, demographic distribution where labels permit, and inter-annotator agreement scores. The question is not whether bias exists (it always does) but whether its distribution will produce model failures that matter in your deployment context.
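Two of these audit numbers need nothing beyond the standard library. The sketch below computes class shares and Cohen's kappa, one common inter-annotator agreement score for two raters; the label lists are made-up examples.

```python
from collections import Counter


def class_shares(labels):
    """Fraction of samples carrying each label."""
    counts = Counter(labels)
    return {label: count / len(labels) for label, count in counts.items()}


def cohens_kappa(rater_a, rater_b):
    """Agreement between two annotators, corrected for agreement expected by chance."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:  # both raters used a single identical label throughout
        return 1.0
    return (observed - expected) / (1 - expected)


annotator_1 = ["cat", "cat", "dog", "dog"]
annotator_2 = ["cat", "dog", "dog", "dog"]
print(class_shares(annotator_1))
print(cohens_kappa(annotator_1, annotator_2))
```

Computing kappa separately per demographic slice, where labels permit, is what surfaces the inconsistent annotation standards described earlier.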

The AI Fairness 360 toolkit from IBM Research is an extensible open-source library containing techniques developed by the research community to help detect and mitigate bias throughout the AI application lifecycle. Its 70+ fairness metrics and nine mitigation algorithms cover pre-processing, in-processing, and post-processing interventions. Disparate impact ratio, equal opportunity difference, and statistical parity difference provide three different lenses on the same bias question. No single metric tells the whole story.
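To see why the three metrics can disagree, it helps to compute them by hand once. The sketch below uses plain Python rather than the AIF360 API, follows the usual unprivileged-versus-privileged convention, and assumes binary labels and predictions with at least one positive example in each group; the data is invented.

```python
def rate(values):
    """Mean of a list of 0/1 values."""
    return sum(values) / len(values)


def fairness_metrics(y_true, y_pred, group, privileged):
    """Three group-fairness metrics for binary predictions."""
    rows = list(zip(y_true, y_pred, group))
    priv_pred = [p for _, p, g in rows if g == privileged]
    unpriv_pred = [p for _, p, g in rows if g != privileged]
    # True-positive rate: predictions restricted to samples whose true label is 1.
    priv_tpr = rate([p for t, p, g in rows if g == privileged and t == 1])
    unpriv_tpr = rate([p for t, p, g in rows if g != privileged and t == 1])
    return {
        "statistical_parity_difference": rate(unpriv_pred) - rate(priv_pred),
        "disparate_impact": rate(unpriv_pred) / rate(priv_pred),
        "equal_opportunity_difference": unpriv_tpr - priv_tpr,
    }


group = ["a"] * 4 + ["b"] * 4
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
metrics = fairness_metrics(y_true, y_pred, group, privileged="a")
print(metrics)
```

Here group "b" is selected a third as often as group "a" and has half its true-positive rate, so all three metrics flag a problem; on other data they can point in different directions.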

In practice, teams building medical imaging or hiring-decision systems find that disparate impact alone is insufficient. Bellamy et al. (2018/2019) showed that applying AIF360’s Reweighing algorithm as a pre-processing step consistently reduces bias metrics across datasets at minimal performance cost: subsequent evaluations using the Fairlearn and AIF360 frameworks put the average AUC drop below 1.5%.

Augmentation Approaches: A Practical Comparison

| Approach | Key Strength | Best Used When |
|---|---|---|
| Geometric transforms (flip, rotate, crop) | Zero cost, universal invariance | Object detection or classification with orientation-independent classes |
| Photometric transforms (brightness, noise, colour jitter) | Sensor and lighting robustness | Outdoor, multi-camera, or cross-device deployments |
| Conditional Diffusion Model synthesis | Fills demographic or scenario gaps; generates genuinely novel distributions | Dataset has demographic under-representation or rare-event scarcity |
| AIF360 Reweighing (pre-processing) | Corrects label bias without changing data volume | Protected-attribute bias identified at audit; cannot collect more data |
| AutoAugment / RandAugment (policy search) | Automated, task-specific policy optimisation | Sufficient compute budget; highest benchmark accuracy required |

Tooling and Implementation: Building the Pipeline

Three open-source libraries cover the complete augmentation-to-fairness pipeline: Albumentations for transforms, AIF360 for bias metrics, and diffusion-model wrappers for synthetic data generation. You do not need to build any of this infrastructure from scratch.

Code Snippet 1: Albumentations Augmentation Pipeline

Source: albumentations-team/albumentations – README.md

This snippet defines a composable six-step pipeline that simultaneously transforms the image and its associated bounding boxes. Passing bbox_params ensures that when the image flips or rotates, bounding box coordinates update automatically, preventing the label integrity failures described earlier. Albumentations benchmarks at up to 5.68x the speed of equivalent torchvision transforms on a single CPU thread, keeping the data loader from bottlenecking training.
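What "bounding boxes update automatically" means can be shown for a single transform without any library. The sketch below is not the Albumentations API; it is a stand-in that assumes pixel-coordinate boxes of the form `(x_min, y_min, x_max, y_max)` with exclusive maximum edges, and `hflip_with_boxes` is a hypothetical helper.

```python
def hflip_with_boxes(image, boxes):
    """Mirror a row-major 2D image left to right and move its boxes with it."""
    width = len(image[0])
    flipped_image = [row[::-1] for row in image]
    # A box's left edge becomes its right edge, measured from the opposite side.
    flipped_boxes = [
        (width - x_max, y_min, width - x_min, y_max)
        for x_min, y_min, x_max, y_max in boxes
    ]
    return flipped_image, flipped_boxes


def crop(image, box):
    """Pixels covered by a box, used here to check that the box still fits the object."""
    x_min, y_min, x_max, y_max = box
    return [row[x_min:x_max] for row in image[y_min:y_max]]


# A 2x4 image with an "object" (value 9) in the first column.
image = [[9, 0, 0, 0],
         [9, 0, 0, 0]]
boxes = [(0, 0, 1, 2)]

flipped_image, flipped_boxes = hflip_with_boxes(image, boxes)
print(flipped_boxes)
print(crop(flipped_image, flipped_boxes[0]))
```

If the image were flipped and the box left alone, the annotation would point at empty background, which is exactly the silent label corruption a box-aware pipeline prevents.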

“Bias auditing should be a gate in your CI pipeline, not a post-deployment fire drill.”

Code Snippet 2: AIF360 Reweighing for Pre-Processing Bias Mitigation

Source: Trusted-AI/AIF360 – aif360/sklearn/preprocessing/reweighing.py

This snippet implements the Reweighing pre-processing algorithm. Rather than modifying or deleting training samples, it assigns a per-sample weight so that the joint distribution of labels and protected attributes becomes more statistically uniform before training begins. The weight array passes directly into scikit-learn’s fit(), with no custom training loop required. It is the least invasive bias-mitigation intervention available and the correct first step for most production teams.
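The idea behind Reweighing fits in a few lines of plain Python. This is a sketch of the weighting rule, not the AIF360 source: each sample gets the weight P(group) × P(label) / P(group, label), which is the ratio between how often that combination would occur if group and label were independent and how often it actually occurs. The data is invented and every group-label combination is assumed to appear at least once.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Per-sample weights that make label and group statistically independent."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]


def weighted_positive_rate(groups, labels, weights, group):
    """Share of a group's total weight that sits on positive labels."""
    total = sum(w for g, w in zip(groups, weights) if g == group)
    positive = sum(w for g, y, w in zip(groups, labels, weights) if g == group and y == 1)
    return positive / total


groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
labels = [1, 1, 1, 0, 1, 0, 0, 0]  # group "a" is 75% positive, group "b" 25%
weights = reweighing_weights(groups, labels)

print(weights)
print(weighted_positive_rate(groups, labels, weights, "a"))
print(weighted_positive_rate(groups, labels, weights, "b"))
```

Once weighted, both groups have the same positive rate. For scikit-learn estimators whose `fit()` accepts it, a list like this is what gets passed as `sample_weight`.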

Real-World Applications and Architecture Patterns

From autonomous vehicles to medical imaging, the same architecture applies: domain-specific augmentation at ingestion, bias measurement at dataset freeze, and continuous fairness monitoring at deployment. The specific transforms change; the pipeline structure does not.

Autonomous vehicle teams apply weather-simulation augmentations such as fog and rain overlays, sensor noise models, and time-of-day colour shifts to ensure the object detector generalises across conditions that are dangerous to collect at real-world scale. The bias concern is geographic and contextual: a model trained primarily on North American urban roads may perform poorly in narrow European streets or monsoon conditions in Southeast Asia.

Medical imaging presents a higher-stakes version of the same problem. A dermatology model trained on lighter skin tones risks missing a common form of skin cancer on a darker-skinned patient; the model is only as good as its data. Diffusion-model augmentation, generating counterfactual images of the same lesion across the skin-tone spectrum, is one of the few techniques capable of addressing this gap when collecting additional real data is not possible.

Retail and manufacturing quality control present a different profile. The bias risk is contextual rather than demographic: models trained on pristine factory lighting fail on production floors with variable illumination, different camera orientations, or packaging variants not present in training. Photometric augmentation is the primary tool here, layered with geometric augmentation for fixture and camera mount variation.

According to Gartner (2025), organisations face significant governance challenges around bias and fairness, and emerging regulations may impede AI applications where models cannot demonstrate equitable performance across demographic groups. Fairness is no longer a nice-to-have; it is an architecture requirement.

“The organisations that will win the next decade of computer vision are building bias-aware data pipelines today, not accuracy leaderboards.”

Frequently Asked Questions

What is data augmentation in computer vision and why does it matter?

Data augmentation in computer vision is the programmatic creation of additional training images by applying controlled transformations to existing ones. It matters because deep learning models require large, diverse datasets to generalise well. When real data is scarce, expensive, or biased, augmentation is the primary mechanism for expanding dataset size, improving robustness, and reducing overfitting without collecting new images.

How does data augmentation introduce or amplify bias?

If your source dataset under-represents a demographic group, geographic context, or lighting scenario, standard augmentation multiplies that under-representation across your training set. A 2024 arXiv study showed that random cropping can skew class accuracy unevenly depending on model architecture. Auditing dataset distribution before and after augmentation is essential to catch both inherited and augmentation-induced variants of the problem.

Which augmentation library should I use for a production computer vision pipeline?

Albumentations is the leading choice for CPU-based augmentation: it supports 70+ transforms, handles images, masks, bounding boxes, and keypoints in a single call, and benchmarks consistently faster than torchvision and imgaug. For GPU-accelerated augmentation on large uniform batches, Kornia is the best alternative. Albumentations operates on NumPy arrays, so it slots into both PyTorch and TensorFlow data loaders; Kornia is built on PyTorch and operates on tensors. For teams already using torchvision transforms, switching to either is usually a small change.

How do I measure and reduce demographic bias in a CV model?

Start with IBM’s AIF360 toolkit to measure disparate impact ratio, statistical parity difference, and equal opportunity difference across your protected attributes. A disparate impact ratio below 0.8 fails the “four-fifths rule” used in US employment-discrimination guidance and is commonly used as a warning threshold in fairness tooling. For mitigation, apply Reweighing as a pre-processing step before training. If data is too sparse to reweight effectively, use a conditional diffusion model to generate synthetic images for under-represented groups.

What is the difference between geometric augmentation and synthetic data generation?

Geometric augmentation creates variants of existing images by transforming them; every output derives from real data already in your dataset. Synthetic data generation, via GANs or diffusion models, creates entirely new images by sampling from a learned distribution. Synthetic generation can introduce genuinely novel diversity not present in the source data, making it the only tool capable of filling demographic or scenario gaps when real data cannot be collected.

Conclusion

Three insights should drive your next CV project. First, augmentation strategy must be designed around dataset gaps, not applied as a default checklist; the type of bias in your data determines whether geometric, photometric, or generative augmentation is the right lever. Second, bias auditing must happen before training, not after deployment; AIF360 makes pre-train auditing a 30-line pipeline addition, not a research project. Third, synthetic data generation has crossed from experimental to production-ready; when demographic or scenario gaps cannot be filled with real data, conditional diffusion models are now fast and accessible enough for most engineering teams.

The question worth sitting with: does your current test set actually measure model performance for every subgroup your model will encounter in production, or does it just confirm that your model has memorised your training distribution?