Deep learning model deployment via REST API is the process of packaging a trained neural network as a versioned, stateless HTTP service that accepts structured inference requests and returns predictions in real time. An optimized deployment pipeline combines model compression, dynamic batching, and scalable serving infrastructure to minimize response latency, maximize hardware utilization, and make model outputs reliably accessible to any client over standard web protocols.

Why Production Inference Is Now a Business-Critical Problem

Inference workloads are directly tied to revenue generation. As Deloitte (2025) projects, inference will account for roughly two-thirds of all AI compute by 2026, up from one-third in 2023, making every API call’s cost and speed a direct factor in unit economics and user experience.

Serving a deep learning model via a REST API is no longer a nice-to-have engineering exercise. According to Gartner (2024), more than 30% of all API demand growth by 2026 will come from AI and LLM-powered tools. Separately, Gartner (2023) projected that over 80% of enterprises will have GenAI applications in production by 2026, up from less than 5% in early 2023. The ramp is steep, and infrastructure must keep pace.

Teams building production AI systems quickly discover a painful gap. A model that achieves 95% accuracy in a notebook may struggle to serve 500 simultaneous requests without timing out. The bottleneck is almost never the model itself; it is the serving infrastructure around it.

“Getting a model to 95% accuracy is the research problem. Getting it to serve 10,000 concurrent requests at under 100ms is the engineering problem.”

Real-World Use Cases: Where REST-Served Models Deliver Value

REST-served deep learning models power recommendation engines, fraud detection, real-time NLP, medical imaging, and autonomous vehicle perception: in short, any domain where a client application needs a low-latency prediction over a network.

Fraud detection APIs at financial institutions process thousands of transactions per second, each requiring sub-100ms inference. NLP sentiment and classification models are embedded inside customer support pipelines, executing synchronously on every incoming ticket. Computer vision APIs score product images the moment they are uploaded to e-commerce platforms.

What these use cases share: the model is a stateless function, the REST API is the contract, and latency SLAs are non-negotiable. In practice, teams building such systems typically find that meeting a 50ms P99 requirement forces a complete rethink of how batching, hardware, and model precision are configured.

Architecture of an Optimized Model Serving System

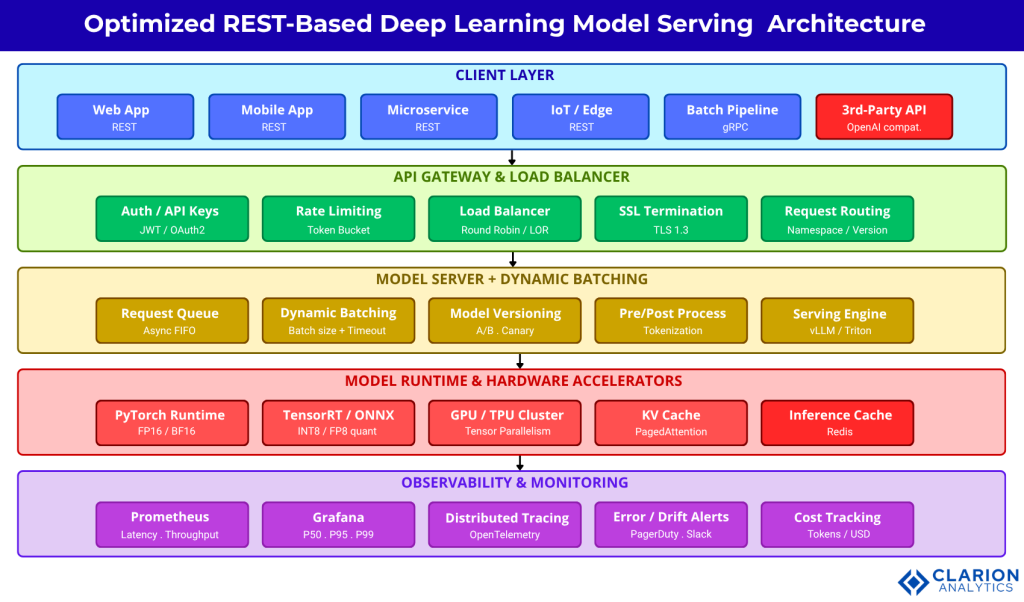

A production-grade model serving architecture routes HTTP requests through a load balancer to a model server such as vLLM, Triton, or BentoML, which applies dynamic batching before calling the model runtime, then returns predictions through a caching and monitoring layer.

Figure 1 shows the five-layer reference architecture. Each layer has distinct responsibilities and distinct failure modes. Teams should treat Layers 3 and 4 as the primary optimization surface, where batching, quantization, and parallelism decisions create the largest impact.

Figure 1: Five-layer architecture for optimized REST-based deep learning deployment. (1) Client Layer sends HTTP/gRPC requests. (2) API Gateway handles auth, rate limiting, and load balancing. (3) Model Server applies dynamic batching, versioning, and pre/post-processing via vLLM, Triton, or BentoML. (4) Model Runtime executes inference on GPU/TPU with quantized precision and KV-cache optimization. (5) Observability layer captures latency, throughput, and drift metrics via Prometheus and Grafana. A telemetry feedback loop flows back through all layers.

Key Technologies: Choosing Your Serving Stack

The three dominant open-source serving frameworks are vLLM (best for high-throughput LLM inference), NVIDIA Triton (best for multi-framework, multi-GPU production environments), and BentoML (best for rapid prototyping and framework-agnostic REST API generation). The table below also lists FastAPI with Ray Serve as a fourth option for teams that need full control over the API layer. Choosing the wrong tool at this stage costs weeks of re-architecture.

| Option | Key Strength | Best Used When |
|---|---|---|
| vLLM | PagedAttention memory management; 40,000+ GitHub stars; OpenAI-compatible REST interface out of the box | You serve large language models and need maximum GPU throughput with built-in horizontal scaling |
| NVIDIA Triton | Multi-framework support (TensorRT, PyTorch, ONNX, TensorFlow); enterprise-grade dynamic batching; HTTP and gRPC | You have heterogeneous model types across your org and need a single production inference standard |
| BentoML | Fastest path from model to REST API; Python-native; batchable decorator; auto Docker generation | Your team is iterating quickly and needs a framework-agnostic wrapper without heavy DevOps overhead |
| FastAPI + Ray Serve | Maximum API flexibility; async/await; scales from 1 to 100+ GPU nodes via Ray cluster | You need custom pre/post-processing, multi-model pipelines, or a non-standard request format |

“Quantization and batching are not optional polish; they are the difference between a model that costs $0.001 per inference and one that costs $0.06.”

Five Optimization Techniques That Cut Inference Latency

The five highest-impact optimizations for a REST-served deep learning model are: (1) dynamic request batching, (2) model quantization, (3) KV-cache management, (4) model compilation via ONNX or TorchScript, and (5) async non-blocking API handlers.

1. Dynamic Request Batching

Sending one request at a time to a GPU is deeply wasteful. Dynamic batching groups concurrent API calls into a single forward pass. Research from Delavande et al. (2026) confirms that structured request timing can reduce per-request energy consumption by up to 100x on H100 GPUs, particularly during memory-bound decoding phases.

In BentoML (source: github.com/bentoml/BentoML), the single decorator @bentoml.api(batchable=True) instructs the framework to group concurrent API requests into a single batched forward pass. Teams using this pattern typically see 3 to 5x GPU utilization improvement over per-request inference. The underlying model code requires no modification.
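The batching behaviour described above can be sketched without any serving framework. The following is a minimal, standard-library illustration of the idea, not BentoML's implementation: `MicroBatcher`, `fake_model`, `max_batch_size`, and `max_wait_s` are hypothetical names chosen for this example, and the "model" simply doubles its inputs.

```python
import asyncio
from typing import Callable, List


class MicroBatcher:
    """Collects concurrent requests and runs them through one batched call."""

    def __init__(self, batch_fn: Callable[[List[float]], List[float]],
                 max_batch_size: int = 8, max_wait_s: float = 0.01) -> None:
        self.batch_fn = batch_fn
        self.max_batch_size = max_batch_size
        self.max_wait_s = max_wait_s
        self.queue: asyncio.Queue = asyncio.Queue()
        self.batch_sizes: List[int] = []  # recorded only so the demo can inspect it

    async def predict(self, x: float) -> float:
        """Called once per request; resolves when the batch containing x finishes."""
        fut = asyncio.get_running_loop().create_future()
        await self.queue.put((x, fut))
        return await fut

    async def run(self) -> None:
        """Wait for a first item, then fill the batch until it is full or the deadline passes."""
        loop = asyncio.get_running_loop()
        while True:
            items = [await self.queue.get()]
            deadline = loop.time() + self.max_wait_s
            while len(items) < self.max_batch_size:
                timeout = deadline - loop.time()
                if timeout <= 0:
                    break
                try:
                    items.append(await asyncio.wait_for(self.queue.get(), timeout))
                except asyncio.TimeoutError:
                    break
            outputs = self.batch_fn([x for x, _ in items])  # one "forward pass"
            self.batch_sizes.append(len(items))
            for (_, fut), y in zip(items, outputs):
                fut.set_result(y)


def fake_model(batch: List[float]) -> List[float]:
    """Hypothetical stand-in for a batched forward pass."""
    return [2.0 * x for x in batch]


async def demo() -> tuple:
    batcher = MicroBatcher(fake_model, max_batch_size=4, max_wait_s=0.05)
    worker = asyncio.create_task(batcher.run())
    results = await asyncio.gather(*(batcher.predict(float(i)) for i in range(8)))
    worker.cancel()
    try:
        await worker
    except asyncio.CancelledError:
        pass
    return results, batcher.batch_sizes
```

Calling `asyncio.run(demo())` submits eight concurrent requests and returns both the per-request results and the sizes of the batches that were formed. The two tuning knobs trade latency for throughput: a longer wait window fills larger batches but delays the first request in each batch.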

2. Model Quantization (INT8 and FP8)

Quantization converts model weights from FP32 or FP16 to INT8 or FP8, reducing memory footprint and bandwidth demands during decoding. The survey by Zhou et al. (2024) identifies quantization as one of the three primary techniques for reducing LLM inference cost without materially degrading accuracy. Post-training quantization requires no retraining and can be applied directly to most existing checkpoints.

At scale, the cost difference is substantial. According to McKinsey (2025), reasoning-capable model inference costs six times more than standard model inference. Leading companies are responding with sparse activations and distillation; INT8 quantization is the fastest first step any team can take.
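To make the weight conversion concrete, here is a minimal sketch of symmetric per-tensor INT8 quantization in plain Python. It is an illustration under simplifying assumptions (one scale for the whole tensor, no calibration data, no per-channel scales), and the function names are hypothetical; production toolchains such as TensorRT use more elaborate schemes.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the INT8 range.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integers and the scale."""
    return [v * scale for v in q]


weights = [0.5, -1.27, 0.003, 1.0, -0.25]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
# The rounding error per weight is bounded by half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Each weight now needs one byte instead of four (FP32) or two (FP16), which is where the memory and bandwidth savings come from. Small weights such as 0.003 fall below half a step and collapse to zero, which is the kind of precision loss that has to be checked against task accuracy.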

3. KV-Cache and Memory Management

Key-value caching stores and reuses attention key/value pairs from previous tokens during auto-regressive decoding. vLLM implements this via PagedAttention, treating GPU memory like virtual memory pages. Because cache blocks are allocated on demand rather than reserved contiguously per request, memory fragmentation is largely eliminated, which enables significantly higher concurrent request throughput than contiguous KV-cache allocation.
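The paging idea can be illustrated with a toy block allocator. This is a sketch of the general concept only, not vLLM's actual data structures: `PagedKVAllocator`, its fields, and the block sizes are all hypothetical, and no real tensors are stored.

```python
from typing import Dict, List


class PagedKVAllocator:
    """Toy block allocator: each sequence owns a list of fixed-size blocks."""

    def __init__(self, num_blocks: int, block_size: int) -> None:
        self.block_size = block_size
        self.free_blocks: List[int] = list(range(num_blocks))
        self.block_tables: Dict[str, List[int]] = {}  # sequence id -> physical blocks
        self.seq_lens: Dict[str, int] = {}            # sequence id -> tokens cached

    def append_token(self, seq_id: str) -> int:
        """Reserve space for one more token; returns the physical block used."""
        length = self.seq_lens.get(seq_id, 0)
        table = self.block_tables.setdefault(seq_id, [])
        if length % self.block_size == 0:  # last block is full, or none allocated yet
            if not self.free_blocks:
                raise MemoryError("no free KV-cache blocks")
            table.append(self.free_blocks.pop())
        self.seq_lens[seq_id] = length + 1
        return table[-1]

    def free(self, seq_id: str) -> None:
        """Return all blocks of a finished sequence to the free pool."""
        self.free_blocks.extend(self.block_tables.pop(seq_id, []))
        self.seq_lens.pop(seq_id, None)


alloc = PagedKVAllocator(num_blocks=4, block_size=2)
for _ in range(3):
    alloc.append_token("a")  # three tokens need two blocks of size 2
for _ in range(2):
    alloc.append_token("b")  # two tokens fit in one block
blocks_a = len(alloc.block_tables["a"])
free_before = len(alloc.free_blocks)
alloc.free("a")
free_after = len(alloc.free_blocks)
```

Because a sequence only ever wastes the unused tail of its last block, memory that a contiguous allocator would have reserved up front for the maximum sequence length stays available to other requests.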

4. Model Compilation (ONNX and TorchScript)

Exporting a model to ONNX or TorchScript format removes Python-runtime overhead and enables hardware-optimized kernel execution via TensorRT. A compiled model runs inference without the Python GIL and supports parallel execution across multiple instances on the same GPU.

5. Async API Handlers

Blocking synchronous request handlers waste CPU time while GPU inference runs. Using Python’s async/await with FastAPI or Ray Serve allows the server to accept new connections while existing ones wait for inference results. The arXiv inference engines survey (2025) catalogues 20+ production inference engines and consistently identifies async scheduling as a baseline requirement for production throughput.

In the standard FastAPI + PyTorch deployment pattern, Pydantic’s BaseModel validates incoming request schemas at the API layer, catching malformed inputs before they reach the model. Using Depends(get_model) with lru_cache ensures a single model instance is loaded once and reused across all requests. This pattern eliminates the most common class of cold-start latency spikes in small to medium deployments.

“The biggest latency wins almost always come from the serving layer, not from re-training the model.”

Implementation Guide: Going From Notebook to Production API

Moving a model from notebook to production REST API requires four steps: (1) export the model to a portable format, (2) wrap it in a serving framework with batching enabled, (3) containerize with Docker and add a health-check endpoint, and (4) deploy behind a load balancer with horizontal auto-scaling.

Step 1: Export to ONNX or TorchScript. This removes Python-runtime overhead and enables multi-instance parallelism. For PyTorch models, torch.onnx.export() or torch.jit.script() accomplishes this in a few lines and produces a model artifact that can be loaded without the original Python model code.

Step 2: Wrap in a serving framework. For rapid iteration, BentoML provides the lowest configuration overhead. For multi-model enterprise environments, NVIDIA Triton is the production standard. For maximum LLM throughput, vLLM provides an OpenAI-compatible REST interface out of the box.

Step 3: Containerize and add observability. BentoML generates a Docker image automatically. For Triton, use the official NGC containers; for vLLM, use the project's official container images. Add a /health endpoint, a /metrics Prometheus endpoint, and structured JSON logging from day one.
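A health endpoint and JSON logging need very little code. The sketch below uses only the standard library to show the shape of both; the readiness flag `MODEL_READY`, the response bodies, and the log field names are assumptions made for this example, and a real service would expose the same route through its serving framework.

```python
import json
import logging
from http.server import BaseHTTPRequestHandler, HTTPServer
from typing import Tuple

MODEL_READY = {"loaded": False}  # hypothetical flag, set to True after model load


def health_response(model_loaded: bool) -> Tuple[int, bytes]:
    """Return 200 when the model is ready to serve, 503 otherwise."""
    status = 200 if model_loaded else 503
    body = json.dumps({"status": "ok" if model_loaded else "loading"}).encode()
    return status, body


class JsonFormatter(logging.Formatter):
    """Emit one JSON object per log record."""

    def format(self, record: logging.LogRecord) -> str:
        return json.dumps({
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        })


class Handler(BaseHTTPRequestHandler):
    def do_GET(self) -> None:
        if self.path == "/health":
            status, body = health_response(MODEL_READY["loaded"])
        else:
            status, body = 404, b'{"error": "not found"}'
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def make_server(port: int = 0) -> HTTPServer:
    """Bind to localhost; port 0 asks the OS for any free port."""
    return HTTPServer(("127.0.0.1", port), Handler)
```

Returning 503 until the model has finished loading lets a load balancer or orchestrator keep traffic away from a container that has started but cannot yet serve predictions. To try it, call `make_server(8080).serve_forever()` and request `/health`.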

Step 4: Deploy with auto-scaling. The Kubernetes Horizontal Pod Autoscaler scales on CPU utilization out of the box, and on GPU utilization or queue depth when those are exposed as custom metrics. Set resource requests and limits explicitly to prevent noisy-neighbour latency degradation across model versions.
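The scaling decision itself is simple arithmetic. The sketch below applies the core ratio that the Kubernetes documentation gives for the Horizontal Pod Autoscaler, desired = ceil(current replicas × current metric / target metric), to a per-pod queue depth. It deliberately omits the autoscaler's tolerance band and stabilization window, and the replica bounds and metric values are made up for illustration.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, min_replicas: int = 1,
                     max_replicas: int = 10) -> int:
    """Core HPA ratio, clamped to the configured replica bounds."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


# Average queue depth per pod is 15 against a target of 10: scale 3 pods up.
scale_up = desired_replicas(3, current_metric=15, target_metric=10)
# Queue depth has dropped to 5 against a target of 10: scale 4 pods down.
scale_down = desired_replicas(4, current_metric=5, target_metric=10)
# Demand far above target is capped by max_replicas.
capped = desired_replicas(4, current_metric=30, target_metric=10)
```

Queue depth is often a better signal than CPU for GPU-bound services, because the CPU can sit idle while requests pile up waiting for the accelerator.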

Frequently Asked Questions

How do I reduce inference latency for deep learning models served via REST API?

Start with dynamic batching and async API handlers; these deliver the largest gains with the least effort. Then apply INT8 quantization using NVIDIA TensorRT or Hugging Face Optimum. Export the model to ONNX or TorchScript to remove Python-runtime overhead. For LLMs specifically, deploy vLLM with PagedAttention to maximize GPU throughput without code changes.

What is the difference between FastAPI and TorchServe for model deployment?

FastAPI is a general-purpose async Python web framework that gives full control over request handling and suits single-model or small-scale deployments with custom logic. TorchServe is a PyTorch-specific server with built-in batching, versioning, multiple model instances, and gRPC support, better suited for multi-model production environments where standardization and operational consistency matter.

When should I use quantization for a production deep learning model?

Apply quantization when memory bandwidth is your primary bottleneck, typically at low batch sizes during auto-regressive decoding. Post-training INT8 quantization reduces model size by 2 to 4x (2x from FP16, 4x from FP32) with under 1% accuracy loss on most classification and NLP tasks. Avoid quantizing models for tasks that require high numerical precision, such as continuous-output regression in narrow value ranges.

How does dynamic batching improve GPU utilization in a model serving API?

A GPU can often process a batch of 32 samples in close to the wall-clock time of a single sample, because matrix multiplications execute in parallel and a single request leaves most of the hardware idle. Dynamic batching groups individual API requests arriving within a configurable time window into one forward pass, raising GPU utilization from typical single-request levels of 10 to 20% up to 70 to 90%. This directly reduces cost per inference.

What monitoring metrics should I track for a production deep learning API?

Track five groups of metrics: P50, P95, and P99 inference latency (to surface tail latency that averages hide); requests per second and error rate (for capacity planning); GPU utilization and memory saturation (for hardware efficiency); input and output distribution drift (to detect upstream data pipeline changes); and cost per 1,000 predictions (for unit economics). Export all metrics in Prometheus format and alert when P99 latency exceeds your SLA threshold.
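Two of these metrics are easy to compute by hand, which helps when sanity-checking a dashboard. The sketch below uses the nearest-rank definition of a percentile and a simple cost formula; the latency samples, the GPU price, and the function names are hypothetical values chosen for illustration.

```python
import math
from typing import Dict, List


def percentile(samples: List[float], p: float) -> float:
    """Nearest-rank percentile: smallest sample with at least p% of values at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(p * len(ordered) / 100.0))
    return ordered[rank - 1]


def latency_summary(latencies_ms: List[float]) -> Dict[str, float]:
    """Summarize latency with the mean and the three percentiles named above."""
    return {
        "mean": sum(latencies_ms) / len(latencies_ms),
        "p50": percentile(latencies_ms, 50),
        "p95": percentile(latencies_ms, 95),
        "p99": percentile(latencies_ms, 99),
    }


def cost_per_1000(gpu_hourly_usd: float, requests_per_second: float) -> float:
    """Cost of 1,000 predictions on one GPU running at a steady request rate."""
    return gpu_hourly_usd / (requests_per_second * 3600.0) * 1000.0


# 98 fast requests and 2 slow ones: the mean looks healthy, P99 does not.
latencies = [10.0] * 98 + [500.0] * 2
summary = latency_summary(latencies)
cost = cost_per_1000(gpu_hourly_usd=3.6, requests_per_second=100.0)
```

In this sample the mean and P95 both sit far below the slow requests, while P99 lands on one of them, which is why alerting on P99 catches problems that an average would hide.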

“Production inference is now where the real AI engineering happens, not in the training notebook.”

Conclusion

Three insights define this domain right now. First, the serving layer, not the model, is where most production latency and cost problems live. Dynamic batching alone typically raises GPU utilization by 3 to 5x with no model changes, which lowers cost per inference accordingly. Second, the choice of serving framework is a long-term architectural decision. vLLM, Triton, and BentoML each make different trade-offs between flexibility, performance, and operational complexity. Match the tool to your team and your scale. Third, quantization is no longer experimental: INT8 and FP8 inference are production-ready for most classification, NLP, and vision tasks, and they are the primary lever that leading AI companies are using to manage inference cost growth.

Gartner (2023) projects that over 80% of enterprises will have GenAI applications in production by 2026. What does your serving infrastructure need to look like to serve those applications profitably and at scale?