Code quality metrics are quantifiable measurements of a codebase’s maintainability, reliability, and functionality. They translate subjective notions of “good code” into objective scores, covering complexity, duplication, test coverage, defect density, and technical debt. Engineering teams and CTOs use these metrics to make data-driven decisions about refactoring, release readiness, and technical investment, reducing the hidden cost of poor software before it reaches production.

Why Code Quality Metrics Directly Impact Your Bottom Line

Code quality metrics sit at the center of every sustainable engineering team’s decision-making. Without them, engineering velocity feels like an estimate, and budget conversations about technical debt become impossible to win.

McKinsey’s research on technical debt found that CIOs estimate it at 20-40% of the value of their entire technology estate, and roughly 30% of CIOs report that more than a fifth of their technology budget for new products is diverted to resolving debt-related issues. Gartner’s 2025 data adds that around 40% of infrastructure systems carry meaningful technical debt concerns, and that companies using structured debt management approaches report 50% fewer obsolete systems.

These are not abstract engineering concerns. They are cost, speed, and competitive risk in numbers a board can read.

“You cannot improve what you do not measure, but in software, measuring the wrong thing is as dangerous as measuring nothing.”

The five code quality metrics below are the ones proven to predict delivery problems, guide refactoring priorities, and give CTOs language for explaining investment to stakeholders.

Cyclomatic Complexity: The Metric That Predicts Defects Before They Occur

Cyclomatic complexity counts the number of independent logical paths through a function. It is the fastest single indicator of how likely a piece of code is to contain defects and how hard it will be to test.

Thomas McCabe introduced the formula in 1976. Its core insight, that more decision points mean more paths and more paths mean a heavier testing burden, holds across languages and paradigms. A 2023 IEEE study (Odeh et al.) trained a machine learning model on 3,270 Python, Java, and C++ samples to predict cyclomatic complexity ratings and reported 96% accuracy, a sign of how consistently the metric behaves across languages.
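
McCabe defined the score on a function’s control-flow graph, where E is the number of edges, N the number of nodes, and P the number of connected components (1 for a single function). As a worked example, take a function containing one if/else, modeled with a condition node, one node per branch, and an exit node:

```latex
\begin{aligned}
M &= E - N + 2P \\
N &= 4 \quad (\text{condition, then-branch, else-branch, exit}) \\
E &= 4 \quad (\text{condition}\to\text{then},\ \text{condition}\to\text{else},\ \text{then}\to\text{exit},\ \text{else}\to\text{exit}) \\
M &= 4 - 4 + 2 \cdot 1 = 2
\end{aligned}
```

The two independent paths are the branch taken and the branch skipped. For a single structured function this is equivalent to counting binary decision points and adding one, which is how tools such as radon compute it in practice.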

The standard scoring guide: 1-5 is simple and easy to test; 6-10 is moderate and worth flagging in code review; 11-20 is complex and should be refactored before merge; 21+ is effectively untestable and requires mandatory refactoring.
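
To make the counting rule concrete, here is a minimal Python sketch that approximates the score by walking a function’s syntax tree and adding one per decision point, then maps the result onto the scoring guide above. The decision-point rules are a simplified assumption, close to but not identical to radon’s; `cyclomatic_complexity`, `complexity_band`, and the sample function are hypothetical, not part of any library.

```python
import ast

# Simplified McCabe count: start at 1 and add one per decision point.
# These rules approximate common tool behavior; they are not radon's exact code.
_SINGLE_BRANCH = (ast.If, ast.IfExp, ast.For, ast.AsyncFor, ast.While, ast.ExceptHandler)


def cyclomatic_complexity(source):
    """Approximate the complexity of the first function defined in `source`."""
    tree = ast.parse(source)
    func = next(
        node for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
    )
    score = 1  # straight-line code has exactly one path
    for node in ast.walk(func):
        if isinstance(node, _SINGLE_BRANCH):
            score += 1  # if/elif, ternary, loop, or except clause
        elif isinstance(node, ast.BoolOp):
            score += len(node.values) - 1  # each extra `and`/`or` operand
        elif isinstance(node, ast.comprehension):
            score += 1 + len(node.ifs)  # the implicit loop plus any `if` filters
    return score


def complexity_band(score):
    """Map a score onto the 1-5 / 6-10 / 11-20 / 21+ guide."""
    if score <= 5:
        return "simple"
    if score <= 10:
        return "moderate: flag in code review"
    if score <= 20:
        return "complex: refactor before merge"
    return "untestable: mandatory refactor"


SAMPLE = '''
def shipping_cost(weight, express, country):
    if weight <= 0:
        raise ValueError("weight must be positive")
    cost = 5.0 if weight < 1 else 5.0 + weight * 2
    if express and country != "US":
        cost *= 3
    elif express:
        cost *= 2
    return cost
'''

score = cyclomatic_complexity(SAMPLE)
print(score, complexity_band(score))  # 6 moderate: flag in code review
```

The sample scores 6: one for the base path, plus the `if`, the conditional expression, the second `if`, its `and`, and the `elif`.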

Radon, an open-source Python tool that platforms such as Codacy and Code Climate have integrated for Python analysis, computes cyclomatic complexity in under a second per file. The snippet below shows illustrative output for a module with two functions that need attention.

Source: rubik/radon CLI

```bash
# Compute cyclomatic complexity for one module:
#   -nc  show only blocks ranked C or worse
#   -s   print each block's score next to its rank
#   -a   print the average complexity of the blocks shown
$ radon cc mymodule.py -a -s -nc
mymodule.py
    F 12:0 calculate_price - C (12)
    F 45:0 process_orders - C (17)

2 blocks (classes, functions, methods) analyzed.
Average complexity: C (14.5)
```

The -nc flag surfaces only blocks ranked C or worse. Both functions score above 10, which the scoring guide above places in refactor-before-merge territory; process_orders, at 17, is the more urgent of the two. Teams building production systems often find that one or two such hot spots consume most of their bug-fix time, because high-complexity paths are exactly where edge cases hide.
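
Radon’s letter grades come from fixed score bands (A: 1-5, B: 6-10, C: 11-20, D: 21-30, E: 31-40, F: 41 and above). The sketch below mimics that ranking, the `-nc` filter, and an `-a` average over the blocks shown, for a hypothetical list of function scores. It reproduces the CLI’s behavior for illustration only and does not call radon; `rank` and `filter_and_average` are hypothetical helpers.

```python
# Radon's rank bands: inclusive upper bound -> letter; anything above 40 is F.
RADON_BANDS = [(5, "A"), (10, "B"), (20, "C"), (30, "D"), (40, "E")]


def rank(score):
    """Return a radon-style letter grade for a complexity score."""
    for upper, letter in RADON_BANDS:
        if score <= upper:
            return letter
    return "F"


def filter_and_average(blocks, min_rank="C"):
    """Keep blocks ranked min_rank or worse and average only those (like -nc -a)."""
    # Letters compare alphabetically, so "A" < "B" < ... < "F".
    shown = [(name, score, rank(score)) for name, score in blocks
             if rank(score) >= min_rank]
    average = sum(score for _, score, _ in shown) / len(shown) if shown else 0.0
    return shown, average


# Hypothetical scores for the functions in one module.
blocks = [("format_label", 3), ("calculate_price", 12), ("process_orders", 17)]
shown, average = filter_and_average(blocks)
for name, score, letter in shown:
    print(f"{name} - {letter} ({score})")
print(f"Average complexity: {rank(average)} ({average})")
```

With these inputs, `format_label` (rank A) is filtered out and the remaining two average to 14.5, which still falls in band C.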

“A cyclomatic complexity score above 10 is not a style preference; it is a statistical predictor of defects.”

A 2024 IEEE paper on Enhanced Cyclomatic Complexity proposed enriching the base score with structural factors, further improving defect prediction accuracy beyond raw path counts alone.

Test Coverage: What the Percentage Actually Tells You (and What It Doesn’t)

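Test coverage measures the share of source code executed during your automated test suite. Read the number as a bound, not a guarantee of quality: uncovered lines are certainly untested, but covered lines are only known to have run, not to have been checked by a meaningful assertion.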
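
To see what the percentage counts, here is a toy line-coverage measurer built on Python’s standard `sys.settrace` hook. It records which lines of one function execute across a set of inputs and divides by the function’s executable lines. Real tools such as coverage.py also handle branches, multiple files, and exclusions; `line_coverage` and `classify` are hypothetical names used only for illustration.

```python
import dis
import sys


def classify(n):
    if n < 0:
        return "negative"
    return "non-negative"


def line_coverage(func, inputs):
    """Fraction of func's executable lines that run when func is called on inputs."""
    code = func.__code__
    # Executable lines come from the bytecode line table; skip the `def` line itself.
    executable = {line for _, line in dis.findlinestarts(code)
                  if line is not None and line != code.co_firstlineno}
    executed = set()

    def tracer(frame, event, arg):
        if frame.f_code is not code:
            return None  # ignore every other function
        if event == "line":
            executed.add(frame.f_lineno)
        return tracer  # keep receiving line events for this frame

    previous = sys.gettrace()
    sys.settrace(tracer)
    try:
        for value in inputs:
            func(value)
    finally:
        sys.settrace(previous)  # always restore any existing tracer
    return len(executed & executable) / len(executable)


print(line_coverage(classify, [5]))      # the "negative" branch never runs
print(line_coverage(classify, [5, -1]))  # both branches run: 1.0
```

Note that a test calling `classify(5)` without asserting on the result would still raise coverage, which is exactly why the percentage alone says nothing about assertion quality.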

High coverage correlates with fewer production defects, but coverage without meaningful assertions is false comfort. Codacy’s 2024 State of Software Quality report found that 53% of developers treat code reviews as mandatory, yet over 40% of teams still run unit and frontend testing manually. That gap between mandatory reviews and manual testing is precisely where incomplete coverage hides.

The practical target thresholds most teams use: below 60% represents significant business risk for payment or security-critical paths; 60-80% is acceptable for internal tooling; 80%+ is the target for production services with SLAs; 100% is rarely worth pursuing since test quality matters more than count.

Test coverage belongs in your CI/CD pipeline as a hard gate, not a dashboard metric read after a release. If a pull request drops coverage below your threshold, it does not merge. That rule is the simplest quality enforcement mechanism most teams under-use.
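
A gate of this kind can be as small as a script that reads a coverage report and exits non-zero. The sketch below assumes the JSON report written by coverage.py’s `coverage json` command, whose `totals` section includes a `percent_covered` field, and encodes the thresholds above under hypothetical tier names. For a single fixed threshold, coverage.py’s built-in `coverage report --fail-under=80` is the simpler option.

```python
import json
import sys

# Minimum line coverage per service tier, following the thresholds above.
# The tier names are hypothetical; adapt them to your own policy.
THRESHOLDS = {"internal-tooling": 60.0, "production-sla": 80.0}


def coverage_gate(report, tier, baseline=None):
    """Return failure messages for a parsed coverage.py JSON report; empty means pass."""
    percent = report["totals"]["percent_covered"]
    minimum = THRESHOLDS[tier]
    failures = []
    if percent < minimum:
        failures.append(f"coverage {percent:.1f}% is below the {minimum:.0f}% floor for {tier}")
    if baseline is not None and percent < baseline:
        # Block pull requests that lower coverage relative to the main branch.
        failures.append(f"coverage dropped from {baseline:.1f}% to {percent:.1f}%")
    return failures


def main(path, tier, baseline=None):
    with open(path) as fh:
        failures = coverage_gate(json.load(fh), tier, baseline)
    for message in failures:
        print(f"quality gate failed: {message}", file=sys.stderr)
    return 1 if failures else 0  # a non-zero exit code fails the CI job


if __name__ == "__main__" and len(sys.argv) >= 3:
    # Hypothetical usage: python coverage_gate.py coverage.json production-sla 81.5
    baseline_arg = float(sys.argv[3]) if len(sys.argv) > 3 else None
    sys.exit(main(sys.argv[1], sys.argv[2], baseline_arg))
```

Checking against a baseline as well as a floor catches the slow erosion that a fixed threshold alone misses.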

Technical Debt Ratio: Translating Code Quality Into Business Language

The technical debt ratio expresses remediation cost as a percentage of total development cost. It is the metric that converts code quality conversations into language finance teams understand.

“Technical debt is like dark matter: you know it exists, you can infer its impact, but you cannot see or measure it without the right metrics.” ~ McKinsey, paraphrased

McKinsey’s Tech Debt Score framework, built from analysis of 220 companies across seven industries, found a direct correlation between lower debt scores and higher revenue growth. Companies with actively managed debt freed engineers to spend up to 50% more time on innovation rather than maintenance. Deloitte’s 2024 Tech Trends report benchmarks leading companies as targeting 15% of IT budgets for debt management; those that wait for crisis typically spend 30-40%.

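In practice, SonarQube computes the technical debt ratio by summing the estimated remediation effort for open maintainability issues and dividing it by an estimated development cost, derived from lines of code and a configurable cost per line. A ratio of 5% or less earns an “A” rating on SonarQube’s scale; “B”, “C”, and “D” cover ratios up to 10%, 20%, and 50%, and anything above 50% is rated “E”, the lowest grade.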
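
As a worked example, the sketch below applies the ratio assuming SonarQube’s default development cost of 30 minutes per line of code and its default rating grid (A up to 5%, B up to 10%, C up to 20%, D up to 50%, E above). Both defaults are configurable per instance, and the remediation figure is a hypothetical input rather than something this code measures.

```python
# Default SonarQube-style maintainability grid: inclusive upper bound -> rating.
RATING_GRID = [(0.05, "A"), (0.10, "B"), (0.20, "C"), (0.50, "D")]


def technical_debt_ratio(remediation_minutes, lines_of_code, minutes_per_line=30):
    """Remediation cost divided by the estimated cost of writing the code."""
    development_minutes = lines_of_code * minutes_per_line  # assumed default: 30 min/line
    return remediation_minutes / development_minutes


def debt_rating(ratio):
    """Map a ratio onto the A-E grid; anything above 50% is an E."""
    for upper, rating in RATING_GRID:
        if ratio <= upper:
            return rating
    return "E"


# Hypothetical project: 10,000 lines with 200 hours of estimated remediation.
ratio = technical_debt_ratio(remediation_minutes=200 * 60, lines_of_code=10_000)
print(f"{ratio:.1%} -> {debt_rating(ratio)}")  # 4.0% -> A
```

The same 200 hours of debt in a 2,000-line service would give a 20% ratio and a “C”, which is why the ratio, not the raw hour count, is what belongs in a budget conversation.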

One CIO quoted in McKinsey’s research reduced engineering time spent paying the “tech debt tax” from 75% to 25% after systematic measurement and management. That shift directly enabled the company’s growth phase.

Defect Density and Code Duplication: The Fast Indicators

Defect density and code duplication are the two metrics that give the fastest signal that something is wrong in a codebase, even before deep analysis.

Defect density measures bugs per thousand lines of code (KLOC). A figure above 1.0 per KLOC in production code typically indicates inadequate pre-merge testing. Capers Jones’s study of over 12,000 software projects found formal code reviews detected 60-65% of hidden defects, while informal reviews caught fewer than 50%. Tracking defect density by module or team pinpoints exactly where review discipline breaks down.
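
Computing the metric per module is simple arithmetic. The sketch below takes hypothetical defect and line counts per module, converts them to defects per KLOC, and flags anything above the 1.0 heuristic; the module names and figures are invented for illustration.

```python
def defect_density(defects, lines_of_code):
    """Defects per thousand lines of code (KLOC)."""
    return defects / (lines_of_code / 1000)


def flag_modules(stats, limit=1.0):
    """Return (module, density) pairs above `limit`, worst first."""
    densities = {name: defect_density(d, loc) for name, (d, loc) in stats.items()}
    flagged = [(name, round(value, 2)) for name, value in densities.items() if value > limit]
    return sorted(flagged, key=lambda item: item[1], reverse=True)


# Hypothetical counts: module -> (production defects, lines of code)
stats = {
    "billing": (18, 12_000),   # 1.5 per KLOC
    "search": (4, 8_000),      # 0.5 per KLOC
    "checkout": (11, 5_000),   # 2.2 per KLOC
}
print(flag_modules(stats))  # [('checkout', 2.2), ('billing', 1.5)]
```

Normalizing by size matters: billing has the most bugs in absolute terms, but checkout is the module where review discipline is breaking down fastest.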

Code duplication tracks the percentage of the codebase that is copied rather than abstracted. GitClear’s 2025 analysis of 211 million changed code lines found that copy-pasted code rose from 8.3% to 12.3% between 2021 and 2024, partly driven by AI coding assistant adoption. Duplicated code multiplies the remediation burden: the same fix must be applied to every copy.

“Teams that embed quality gates into CI/CD pipelines ship faster because they stop defects at the door instead of hunting them in production.”

Keep duplication below 3% for core business logic. Flag anything above 5% as a refactoring sprint candidate.
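
Duplication detectors generally work by normalizing code and looking for repeated runs of lines or tokens. The sketch below is a deliberately simple line-window version of that idea, assuming a window of four non-blank, whitespace-stripped lines; production tools use token-based matching and their own minimum block sizes, so their numbers will differ. The 3% and 5% cut-offs come from the guidance above, and labeling the range between them “watch” is an assumption.

```python
from collections import defaultdict


def duplicated_ratio(files, window=4):
    """Share of non-blank lines that sit inside a window repeated elsewhere.

    files: mapping of file name -> source text.
    """
    normalized = {
        name: [line.strip() for line in text.splitlines() if line.strip()]
        for name, text in files.items()
    }
    occurrences = defaultdict(list)  # window contents -> [(file, start index)]
    for name, lines in normalized.items():
        for start in range(len(lines) - window + 1):
            occurrences[tuple(lines[start:start + window])].append((name, start))

    duplicated = set()  # (file, line index) pairs inside any repeated window
    for places in occurrences.values():
        if len(places) > 1:
            for name, start in places:
                duplicated.update((name, i) for i in range(start, start + window))

    total = sum(len(lines) for lines in normalized.values())
    return len(duplicated) / total if total else 0.0


def duplication_status(ratio):
    if ratio < 0.03:
        return "ok"
    if ratio <= 0.05:
        return "watch"  # assumption: the band between the two cut-offs
    return "refactoring sprint candidate"


# Hypothetical files sharing one copy-pasted function.
block = ("def total_price(items):\n"
         "    total = sum(item.price for item in items)\n"
         "    tax = total * 0.2\n"
         "    return total + tax\n")
files = {"a.py": block + "REGION = 'eu'\n", "b.py": block + "REGION = 'us'\n"}
ratio = duplicated_ratio(files)
print(f"{ratio:.0%} -> {duplication_status(ratio)}")  # 80% -> refactoring sprint candidate
```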

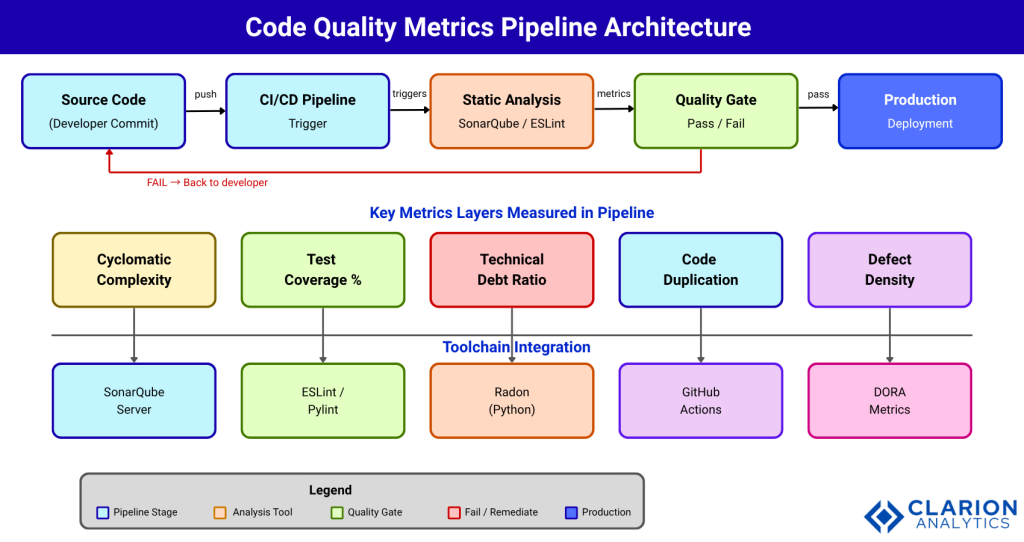

Building a Quality Pipeline: Tools, Architecture, and Implementation

A code quality pipeline runs continuously, not as a quarterly audit. Every commit triggers analysis; every pull request must pass a quality gate before merge.

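The snippet below shows how to embed a SonarQube quality gate into a GitHub Actions workflow. Every pull request runs the scan, and because the scanner is told to wait for the quality gate verdict (sonar.qualitygate.wait=true), the job fails automatically if configured thresholds are breached.

Source: SonarSource/sonarqube-scan-action

```yaml
# .github/workflows/quality.yml
name: Code Quality Gate

on: [push, pull_request]

jobs:
  sonarqube:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
        with:
          fetch-depth: 0  # full history so SonarQube can read blame data and detect new code
      - name: SonarQube Scan
        # Consider pinning a released tag instead of master
        uses: SonarSource/sonarqube-scan-action@master
        env:
          SONAR_TOKEN: ${{ secrets.SONAR_TOKEN }}
          SONAR_HOST_URL: ${{ secrets.SONAR_HOST_URL }}
        with:
          # Wait for the quality gate result and fail the job if the gate fails
          args: >
            -Dsonar.qualitygate.wait=true
```

fetch-depth: 0 gives the scanner the full git history. Without it, the shallow clone carries no blame data, so SonarQube cannot reliably attribute issues to commits or tell new code from old, which undermines new-issues-only tracking.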

Tool Comparison

| Tool | Key Strength | Best Used When |
|---|---|---|
| SonarQube | 30+ language support, quality gates, CI/CD integration | Enterprise codebases needing unified dashboards and policy enforcement |
| radon | Cyclomatic complexity + Halstead + Maintainability Index | Python teams tracking complexity trends over time |
| Pylint | Deep PEP 8 and code smell detection with a quality score | Python teams wanting a single linter score alongside static analysis |
| ESLint | Configurable JS/TS linting with a large plugin ecosystem | Frontend teams enforcing style and catching logic errors early |
| GitHub Code Scanning | Native PR integration, zero infrastructure overhead | Teams on GitHub wanting frictionless security and quality gates |

According to Sonar, SonarQube analyzes over 750 billion lines of code daily and is used by 75% of Fortune 100 companies. A 2024 arXiv study (Jin et al.) analyzed 36,460 open-source repositories and found that distribution-based scoring of code quality metrics predicts real-world maintainability better than fixed threshold comparisons alone.
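
The difference between the two approaches is easy to illustrate. Below is a generic percentile-rank sketch, not the method from Jin et al.: a fixed threshold only says whether a score crosses a line, while a percentile says where a repository sits relative to a reference population. The reference values and the threshold of 5 are hypothetical.

```python
from bisect import bisect_left


def percentile_rank(value, population):
    """Percentage of the reference population with a strictly lower value."""
    ordered = sorted(population)
    return 100.0 * bisect_left(ordered, value) / len(ordered)


# Hypothetical average cyclomatic complexity across a reference set of repositories.
reference = [2.1, 2.8, 3.0, 3.4, 3.9, 4.2, 4.8, 5.5, 6.3, 9.7]

for repo_score in (4.5, 8.0):
    fixed = "fail" if repo_score > 5 else "pass"  # fixed-threshold view
    pct = percentile_rank(repo_score, reference)  # distribution view
    print(f"score {repo_score}: threshold={fixed}, more complex than {pct:.0f}% of peers")
```

A repository that passes the fixed threshold can still be more complex than most of its peers (here, 60% of them), which is the kind of signal a pass/fail check hides.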

Frequently Asked Questions About Code Quality Metrics

What is the most important code quality metric for a software team?

Cyclomatic complexity is the highest-signal single metric because it directly predicts both defect likelihood and testing difficulty. Pair it with test coverage to get a complete picture: complexity tells you where risk lives; coverage tells you whether those risky paths are tested. Together, they cover a large share of pre-release quality risk.

How do you measure code quality without slowing developers down?

Embed measurement into the CI/CD pipeline so it runs automatically on every commit. Static analysis tools like SonarQube and ESLint return results in under two minutes for most projects. Developers see feedback inside the pull request, the same place they already read code review comments, without switching tools or waiting for a separate report.

What is a good technical debt ratio target?

A technical debt ratio of 5% or less earns an “A” rating on SonarQube’s scale and is a common target for production software. Anything above 20% signals that remediation is consuming enough development time to warrant a dedicated debt-reduction sprint before the next feature cycle begins.

Which tools are best for tracking code quality metrics automatically?

SonarQube covers the broadest language range and integrates with GitHub, GitLab, Bitbucket, and Jenkins. For Python teams, radon and Pylint complement SonarQube with deeper language-specific analysis. For JavaScript and TypeScript, ESLint with appropriate plugins enforces both style and logic standards. Most CI/CD platforms support all three with minimal configuration.

Can AI-generated code still have poor code quality metrics?

Yes — and measurably so. GitClear’s 2025 research found that code duplication rose from 8.3% to 12.3% between 2021 and 2024, partly driven by AI coding assistant adoption. Gartner’s Predicts 2026 report warned that citizen-developer prompt-to-app approaches could increase software defects by 2,500% by 2028 without corresponding quality governance. AI tools accelerate output; quality gates enforce standards.

Three Insights Worth Taking into Your Next Sprint

Code quality metrics work only when they are embedded in the development workflow, not reviewed after the fact. The three takeaways that matter most:

Cyclomatic complexity above 10 is a quantified defect risk; refactor before merge, not after the bug report.

Technical debt ratio translates code quality into the language of cost and speed: use it to justify refactoring investment to stakeholders who do not read code.

Quality gates in CI/CD pipelines enforce standards automatically, removing the social friction of code-review debates and making quality a pipeline constraint rather than a personal judgment.

The question for every team is not whether to track these metrics, but at what threshold to act. Run radon or SonarQube today, start with the three most complex modules it reports, and set a pull request policy around the results this sprint.