Computer vision is the branch of artificial intelligence that enables machines to interpret visual data (images, video frames, and point clouds) with human-like accuracy. Models range from simple edge detectors to billion-parameter transformers. The history of computer vision models traces four major eras: hand-crafted features (1960–2011), deep convolutional networks (2012–2019), attention-based Vision Transformers (2020–2023), and hybrid architectures and foundation models (2024–present).

Why Computer Vision Milestones Still Matter Today

The global computer vision market reached $19.82 billion in 2024 and analysts project it will exceed $58 billion by 2030. Behind that growth lies a 60-year chain of architectural decisions. Every team shipping a CV system today inherits those decisions, whether they know it or not. The history of computer vision models is not academic; it is a map of which trade-offs were made and why.

Developers who skip this history tend to reach for a model because it ranked highest on a leaderboard, not because it fits their data distribution, latency budget, or hardware constraints. That mismatch is expensive.

“The architecture you choose should fit your constraints, not the other way around.”

Era 1 (1960–2011): Hand-Crafted Features and the Pre-Deep-Learning Age

Computer vision before 2012 was a field of rules, not learning. Engineers manually designed feature descriptors to capture what a machine should notice in a pixel array.

The foundational idea that a visual cortex works through layered feature detectors came from Hubel and Wiesel’s 1959 neuroscience research. Kunihiko Fukushima formalised this into the Neocognitron in 1980, the direct ancestor of today’s CNNs. Yann LeCun’s LeNet-5 (1998) proved the concept on handwritten digit recognition and introduced the convolutional and pooling pattern that remains canonical today.

The 2000s produced powerful hand-crafted descriptors: SIFT (Scale-Invariant Feature Transform), HOG (Histogram of Oriented Gradients), and SURF. Support vector machines classified these features. The approach worked well on controlled datasets but collapsed under real-world variation in lighting, occlusion, and scale.
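To make "hand-crafted" concrete, here is a minimal sketch of a gradient-orientation histogram, the idea at the core of HOG. It is a simplified single-cell version written for illustration, not the full HOG pipeline (no cell grid, no block normalisation, no bin interpolation), and the function name and 9-bin default are assumptions chosen for the example.

```python
import math

def orientation_histogram(image, bins=9):
    """Unsigned gradient-orientation histogram (0-180 degrees) over one cell."""
    height, width = len(image), len(image[0])
    hist = [0.0] * bins
    bin_width = 180.0 / bins
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            # Central differences approximate the horizontal and vertical gradient.
            gx = image[y][x + 1] - image[y][x - 1]
            gy = image[y + 1][x] - image[y - 1][x]
            magnitude = math.hypot(gx, gy)
            if magnitude == 0:
                continue
            angle = math.degrees(math.atan2(gy, gx)) % 180.0
            # Each pixel votes for its orientation bin, weighted by edge strength.
            hist[int(angle // bin_width) % bins] += magnitude
    return hist

# Synthetic 6x6 image with a vertical edge: dark left half, bright right half.
image = [[0, 0, 0, 255, 255, 255] for _ in range(6)]
hist = orientation_histogram(image)
print(hist)  # all of the gradient energy lands in the first (0-degree) bin
```

Every choice here (the difference operator, the number of bins, the weighting) is a rule written by a person, which is exactly the bottleneck the next paragraph describes.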

The fundamental limitation was human bandwidth. Writing rules for every visual concept the model might encounter was not scalable.

Era 2 (2012–2019): The CNN Revolution – AlexNet Changes Everything

In 2012, Krizhevsky, Sutskever, and Hinton’s AlexNet slashed the ImageNet top-5 error rate from about 26% to 15.3% in a single year. The field never returned to its pre-2012 assumptions.

AlexNet proved that deep convolutional networks, trained on GPUs with large labelled datasets, learned better features than any engineer could hand-craft. What followed was a decade of CNN refinement. VGGNet deepened the network, GoogLeNet widened it, and ResNet (2015) introduced residual skip connections that made networks with more than 100 layers stable to train. EfficientNet (2019) paired a baseline found by neural architecture search with compound scaling of width, depth, and resolution, achieving top accuracy at a fraction of the parameter cost.

The CNN architecture emerged from a clear inductive bias: images have local structure. Adjacent pixels relate to each other. Convolution exploits this by scanning the same learned filter across all spatial positions. Pooling discards irrelevant spatial detail. Together they build a hierarchy: edges at layer one, textures at layer three, object parts at layer ten.
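The two operations above can be sketched in plain Python. This is a minimal illustration, not how a framework implements them: the kernel is set by hand here (in a CNN its weights are learned), and the function names are chosen for the example.

```python
def conv2d(image, kernel):
    """Valid 2-D cross-correlation: slide one shared kernel over every position."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[y + i][x + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for x in range(out_w)
        ]
        for y in range(out_h)
    ]

def max_pool2d(feature_map, size=2):
    """Non-overlapping max pooling: keep the strongest response per window."""
    return [
        [
            max(feature_map[y + i][x + j]
                for i in range(size) for j in range(size))
            for x in range(0, len(feature_map[0]) - size + 1, size)
        ]
        for y in range(0, len(feature_map) - size + 1, size)
    ]

# 6x6 image with a vertical edge between columns 2 and 3.
image = [[0, 0, 0, 1, 1, 1] for _ in range(6)]
# Hand-set vertical-edge filter; the same nine weights are reused everywhere.
kernel = [[-1, 0, 1], [-1, 0, 1], [-1, 0, 1]]

feature_map = conv2d(image, kernel)  # 4x4 map, each row is [0, 3, 3, 0]
pooled = max_pool2d(feature_map)     # 2x2 map, [[3, 3], [3, 3]]
print(feature_map)
print(pooled)
```

The filter responds only where the edge is, and pooling keeps that response while halving the resolution. Stacking such layers is what produces the edge-to-texture-to-part hierarchy.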

“AlexNet in 2012 did not just win a competition; it invalidated a decade of feature engineering overnight.”

In practice, teams building production CV systems in this era typically faced one recurring challenge: how far down the ImageNet pre-trained hierarchy should they fine-tune? Too shallow and domain-specific features never adapt. Too deep and the model overfits a small target dataset. The answer was almost always: freeze the first half, fine-tune the second.

Code Example: Loading a Pre-trained ViT for Transfer Learning

Source: pytorch/vision, torchvision/models/vision_transformer.py

The snippet below shows how a team can migrate from a CNN baseline to a ViT in a few lines of torchvision code. The head replacement is the only change required for a custom classification task.

The 768-dimensional embedding is the ViT’s learned global representation of the image. Replacing only the final linear layer leaves the entire pre-trained backbone intact (the head accounts for under 1% of ViT-B/16’s roughly 86 million parameters), which is the core advantage of transfer learning.

Era 3 (2020–2023): Vision Transformers Rewrite the Rules

Dosovitskiy et al.’s “An Image is Worth 16×16 Words” (ViT, 2020) transplanted the transformer architecture, dominant in NLP since “Attention Is All You Need” (Vaswani et al., 2017), directly into computer vision.

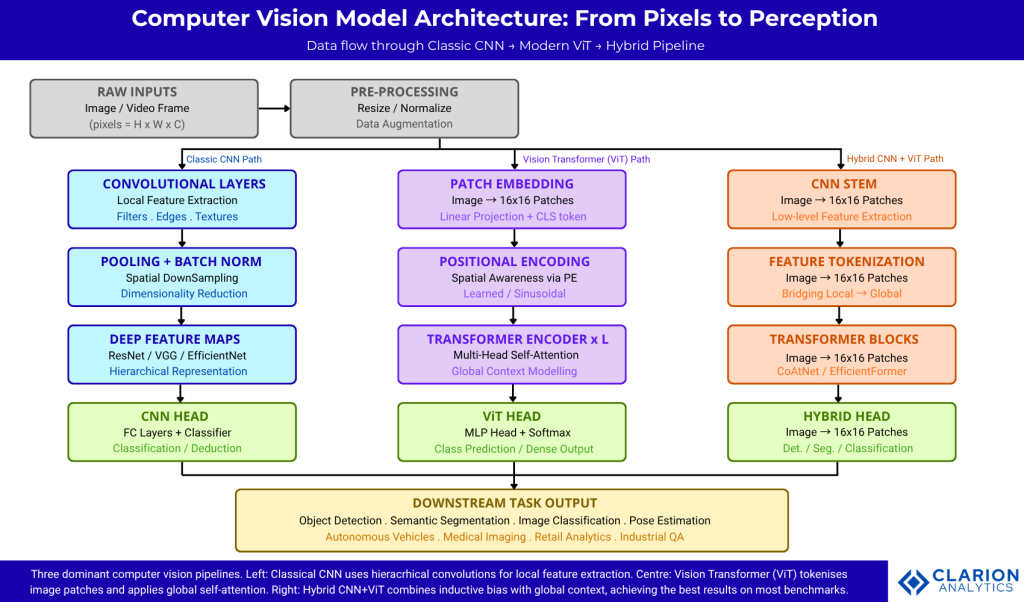

ViT’s key insight was simple: slice an image into fixed-size patches, treat each patch as a token, and apply multi-head self-attention across the entire sequence. Unlike CNNs, which build context through successive layers of local filtering, ViT computes global relationships within a single layer. Every patch attends to every other patch from layer one.
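The patch-to-token step can be sketched in a few lines of plain Python. This is an illustration of the slicing only; a real ViT then applies a learned linear projection to each flattened patch and adds a class token and position embeddings, none of which is shown here. The `patchify` name is chosen for the example.

```python
def patchify(image, patch_size):
    """Split an H x W x C image (nested lists) into flattened patch tokens."""
    height, width = len(image), len(image[0])
    assert height % patch_size == 0 and width % patch_size == 0
    tokens = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            # Flatten all pixels and channels of one patch into one vector.
            token = [
                channel
                for y in range(top, top + patch_size)
                for x in range(left, left + patch_size)
                for channel in image[y][x]
            ]
            tokens.append(token)
    return tokens

# Tiny 4x4 RGB image with 2x2 patches: 4 tokens of 2 * 2 * 3 = 12 values each.
image = [[[y, x, 0] for x in range(4)] for y in range(4)]
tokens = patchify(image, patch_size=2)
print(len(tokens), len(tokens[0]))

# The same arithmetic at ViT-B/16 scale: 224x224 RGB input, 16x16 patches.
num_tokens = (224 // 16) ** 2  # 196 patch tokens
token_dim = 16 * 16 * 3        # 768 raw values per patch
print(num_tokens, token_dim)
```

Self-attention then operates on this sequence of 196 tokens exactly as it would on a sentence of 196 words.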

A 2024 systematic review of ViTs vs CNNs in medical imaging found that ViTs outperformed CNNs in 59% of the 36 reviewed studies, but almost always when pre-training was applied. Without large-scale pre-training, CNNs still held the edge on smaller or lower-resolution datasets.

The ViT era also introduced a new class of challenges: self-attention cost that grows quadratically with the number of patches, and the need for massive pre-training datasets. Efficient ViT variants addressed these: DeiT with distillation training, Swin Transformer with local attention windows, and PVT with hierarchical token reduction.
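A back-of-the-envelope sketch shows why local windows help. It counts query-key pairs per attention layer, assuming Swin-style settings (4x4 patches, 7x7-token windows); it ignores heads, channels, and the shifted-window mechanism, so treat the numbers as relative rather than as FLOP counts.

```python
def global_attention_pairs(num_tokens):
    """Every token attends to every token: cost grows with N squared."""
    return num_tokens ** 2

def windowed_attention_pairs(num_tokens, window_tokens):
    """Every token attends only within its window: cost grows linearly in N."""
    return num_tokens * window_tokens

PATCH = 4       # assumed patch size in pixels
WINDOW = 7 * 7  # assumed tokens per local window

for side in (224, 448):
    tokens = (side // PATCH) ** 2
    print(side, tokens,
          global_attention_pairs(tokens),
          windowed_attention_pairs(tokens, WINDOW))

tokens_224 = (224 // PATCH) ** 2                          # 3136 tokens
global_224 = global_attention_pairs(tokens_224)           # 9,834,496 pairs
local_224 = windowed_attention_pairs(tokens_224, WINDOW)  # 153,664 pairs
```

Doubling the image side quadruples the token count, which multiplies the global cost by 16 but the windowed cost by only 4.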

“ViT sees the entire image at once from layer one. CNN sees the full image only after stacking many local layers.”

Era 4 (2024–Present): Hybrid Architectures and Foundation Models

The dominant research finding of 2024, confirmed by Haruna et al. (arXiv 2024), is that hybrid CNN-ViT architectures outperform pure versions of either on most benchmark tasks. Models like CoAtNet, EfficientFormer, and MobileViT combine CNN stems (for efficient local feature extraction with inductive bias) with transformer layers (for global context modelling).

Meta’s Segment Anything Model (SAM) demonstrated a new paradigm: foundation models pre-trained on massive visual datasets (SA-1B, with roughly 11 million images and 1.1 billion masks, in SAM’s case) that can zero-shot segment objects from a click or box prompt. This mirrors GPT-style scaling laws applied to vision.

Alonso-Fernandez et al. (arXiv, July 2024) showed empirically that CNNs and ViTs are “highly complementary”: combining their feature vectors consistently boosts accuracy over either alone. Teams should treat them as ensemble candidates, not competitors.
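The text above does not say how the feature vectors were combined. One common option, assumed here purely for illustration, is feature-level fusion: L2-normalise each embedding so neither model dominates by scale, then concatenate. The embeddings below are hypothetical toy values.

```python
import math

def l2_normalize(vector):
    """Scale a vector to unit length (left unchanged if it is all zeros)."""
    norm = math.sqrt(sum(v * v for v in vector))
    return [v / norm for v in vector] if norm > 0 else list(vector)

def fuse_features(cnn_features, vit_features):
    """Concatenate two unit-length embeddings into one joint descriptor."""
    return l2_normalize(cnn_features) + l2_normalize(vit_features)

cnn_features = [3.0, 4.0]        # hypothetical CNN embedding
vit_features = [0.0, 5.0, 12.0]  # hypothetical ViT embedding

fused = fuse_features(cnn_features, vit_features)
print(fused)  # 5 values: the 2 CNN dimensions followed by the 3 ViT dimensions
```

A downstream classifier is then trained on the fused vector. Score-level fusion (averaging the two models' predictions) is an alternative when the embeddings are not accessible.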

In manufacturing, AI-driven CV achieved 99% accuracy in truck and trailer identification in a 2024 Ryder/Terminal Industries pilot. The models behind that pilot were hybrid YOLO-family detectors, precisely the convergence point of the CNN and ViT eras.

Code Example: YOLOv8 ONNX Inference via OpenCV DNN

Source: ultralytics/ultralytics — examples/YOLOv8-OpenCV-ONNX-Python/main.py

This four-step snippet (load, preprocess, infer, postprocess) is the standard production inference loop for edge-deployed YOLO models. It requires no PyTorch runtime, only OpenCV, making it ideal for resource-constrained environments.

The output tensor shape [1, 84, 8400] encodes 8,400 candidate predictions, each with 4 box coordinates and 80 class scores. Non-maximum suppression then selects the highest-confidence, non-overlapping predictions.
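The post-processing step can be sketched in plain Python on synthetic data. This is an illustrative reimplementation, not the Ultralytics code: it assumes the box rows hold centre x, centre y, width, and height, uses class-agnostic suppression, and uses four made-up candidates in place of 8,400. The thresholds are assumed defaults.

```python
def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0

def decode(output, score_threshold=0.25):
    """output: 84 rows x N columns; each column is cx, cy, w, h + 80 scores."""
    detections = []
    for column in zip(*output):  # one column per candidate
        cx, cy, w, h = column[:4]
        scores = column[4:]
        class_id = max(range(len(scores)), key=scores.__getitem__)
        if scores[class_id] >= score_threshold:
            box = (cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2)
            detections.append((box, scores[class_id], class_id))
    return detections

def nms(detections, iou_threshold=0.45):
    """Greedy class-agnostic NMS: keep the best box, drop heavy overlaps."""
    kept = []
    for det in sorted(detections, key=lambda d: d[1], reverse=True):
        if all(iou(det[0], k[0]) < iou_threshold for k in kept):
            kept.append(det)
    return kept

def make_column(cx, cy, w, h, class_id, score):
    scores = [0.0] * 80
    scores[class_id] = score
    return [cx, cy, w, h] + scores

# Four synthetic candidates: two near-duplicates, one weak, one distinct.
columns = [
    make_column(100, 100, 50, 50, 0, 0.9),
    make_column(102, 101, 50, 50, 0, 0.8),  # overlaps the first box
    make_column(500, 500, 30, 30, 5, 0.1),  # below the score threshold
    make_column(300, 300, 40, 40, 2, 0.7),
]
output = [list(row) for row in zip(*columns)]  # 84 x 4, batch axis dropped

kept = nms(decode(output))
print([(class_id, score) for _, score, class_id in kept])

# Where 8,400 comes from for a 640x640 input with strides 8, 16, and 32.
num_candidates = sum((640 // stride) ** 2 for stride in (8, 16, 32))
```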

Architecture Comparison: CNN vs ViT vs Hybrid

Use this table to map architecture type to use case. The most common decision teams get wrong is choosing a ViT when a fine-tuned ResNet would ship twice as fast with comparable accuracy.

| Architecture | Key Strength | Limitation | Best Used When |
|---|---|---|---|
| Classical CNN (ResNet, EfficientNet, VGG) | Local feature extraction, low latency, data-efficient | Struggles to model long-range dependencies | Edge deployment, small datasets, real-time detection on CPU/GPU |
| Vision Transformer – ViT (ViT-B, Swin, DeiT) | Global self-attention; superior on large-scale pre-training | Requires massive data; expensive compute | High-accuracy classification on large datasets; NLP-vision multimodal tasks |
| Hybrid CNN+ViT (CoAtNet, EfficientFormer, YOLO11) | Best-of-both-worlds: local bias + global context | More complex to tune | Production systems needing accuracy AND efficiency; autonomous vehicles; medical imaging |
| Foundation / SAM Models (SAM, CLIP, DINO) | Zero-shot generalisation; no task-specific labels needed | Very high compute cost; too slow for real-time edge | Open-world segmentation, dataset labelling automation, research prototyping |

Choosing the Right Architecture for Your Project

Architecture selection is not a prestige exercise. It is an engineering decision with latency, memory, and accuracy trade-offs. Start from your constraints, not from a leaderboard.

Start with a pre-trained CNN (ResNet-50 or EfficientNet-B0) if your dataset has fewer than 50,000 labelled samples, your inference target is below 30 ms, or your hardware lacks a GPU. CNNs still achieve excellent results and deploy easily to ONNX, CoreML, and TFLite.

Upgrade to a ViT or Swin Transformer if you have access to large labelled or unlabelled data, your task benefits from long-range scene understanding (e.g., video captioning, dense prediction across large medical scans), and you have GPU memory to spare.

Use a Hybrid or YOLO-family model for real-world production systems that need to run in near-real-time with high accuracy. The Ultralytics YOLO11 ecosystem (github.com/ultralytics/ultralytics) provides a battle-tested starting point for detection, segmentation, and classification tasks.

Consider SAM or CLIP only when you genuinely need zero-shot generalisation or when the labelling cost of a supervised approach is prohibitive.
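The four rules above can be written down as a small decision function. This is a sketch of the article's heuristics, not a substitute for benchmarking: the class and field names are hypothetical, and the order in which the rules are checked is an assumption, since the text does not rank them.

```python
from dataclasses import dataclass

@dataclass
class ProjectConstraints:
    labelled_samples: int
    latency_budget_ms: float
    has_gpu: bool
    needs_zero_shot: bool = False           # or labelling cost is prohibitive
    needs_long_range_context: bool = False  # e.g. dense prediction on large scans
    has_large_dataset: bool = False         # labelled or unlabelled

def recommend_architecture(c):
    """Map project constraints to a starting architecture family."""
    if c.needs_zero_shot:
        return "foundation model (SAM or CLIP)"
    if c.labelled_samples < 50_000 or c.latency_budget_ms < 30 or not c.has_gpu:
        return "pre-trained CNN (ResNet-50 or EfficientNet-B0)"
    if c.has_large_dataset and c.needs_long_range_context:
        return "ViT or Swin Transformer"
    return "hybrid or YOLO-family model"

small_project = ProjectConstraints(
    labelled_samples=10_000, latency_budget_ms=50, has_gpu=True)
scan_project = ProjectConstraints(
    labelled_samples=200_000, latency_budget_ms=100, has_gpu=True,
    needs_long_range_context=True, has_large_dataset=True)
production = ProjectConstraints(
    labelled_samples=200_000, latency_budget_ms=40, has_gpu=True)

for project in (small_project, scan_project, production):
    print(recommend_architecture(project))
```

Encoding the rules this way forces a team to state its constraints explicitly before anyone opens a leaderboard.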

“In 2025, hybrid models are the practical default. Pure ViTs win on benchmarks; hybrid models win in production.”

Frequently Asked Questions

What is the history of computer vision models in brief?

Computer vision evolved through four eras: hand-crafted features (1960–2011), deep CNNs led by AlexNet (2012–2019), Vision Transformers that apply self-attention to image patches (2020–2023), and today’s hybrid CNN-ViT and foundation model era (2024–present). Each era solved the limitations of the one before it.

How does a Vision Transformer differ from a CNN?

A CNN extracts local features with sliding filters and builds global context by stacking many layers. A ViT splits the image into patches, treats them as tokens, and computes global relationships in every layer via self-attention. ViTs need more data to train from scratch; CNNs generalise better with small datasets.

Which computer vision architecture should I use?

For most production projects, start with a hybrid model from the Ultralytics YOLO family or EfficientFormer. Reserve pure ViTs for tasks with large datasets and strong compute. Use SAM or CLIP only when zero-shot flexibility outweighs inference cost.

Did Vision Transformers replace CNNs completely?

No. CNNs remain superior for edge deployment, small datasets, and real-time detection. A 2024 PMC systematic review found ViTs outperformed CNNs in only 59% of medical imaging studies, and almost always when pre-training was applied. For resource-constrained settings, CNNs still dominate.

What is a foundation model in computer vision?

A foundation model is trained on massive visual datasets and can be adapted to multiple downstream tasks with minimal fine-tuning. Meta’s Segment Anything Model (SAM) is the benchmark example; it segments objects in an image from a click or box prompt, without task-specific training.

Conclusion

Three insights deserve permanent space in your architecture decision framework. First, every major CV breakthrough solved a specific failure mode of its predecessor: hand-crafted features could not scale; pure CNNs lacked global context; pure ViTs lacked data efficiency. Second, the current frontier is hybrid: CNN local bias combined with transformer global attention. Third, no architecture is universally best; the correct choice depends on data volume, latency budget, and compute availability.

The global AI-in-computer-vision market is growing at 22.1% annually through 2030. The teams that ship reliable production systems will be those that understand the architectural trade-offs, not just the benchmark rankings.

One question worth sitting with: if the next architectural breakthrough follows the same pattern as the last four, what problem in today’s hybrid models will it solve first?