LoRA (Low-Rank Adaptation) is a parameter-efficient fine-tuning technique for large language models. It freezes the pretrained model’s weights and injects two small trainable matrices, A and B, alongside selected weight matrices in each transformer layer. Their product approximates the full weight update. On GPT-3 175B, this reduces trainable parameters by up to 10,000x and GPU memory by about 3x, while matching or exceeding full fine-tuning accuracy on the benchmark tasks reported in the original paper.

Why Full Fine-Tuning Is a Dead End for Most Teams

Full fine-tuning an LLM means updating every one of its billions of parameters on your domain data. For most engineering teams, that is not a realistic option.

Training costs for frontier models are staggering. According to Epoch AI and Stanford’s 2025 AI Index, GPT-4 required approximately $78 million in compute to train. Google’s Gemini Ultra cost an estimated $191 million. Those are pretraining figures, but adaptation is heavy too: full fine-tuning of a 175B-parameter model demands about 1.2TB of VRAM once weights, gradients, and optimizer states are counted (Hu et al., 2021). LoRA fine-tuning changes that equation fundamentally.

The enterprise direction is unmistakable. Gartner (2025) predicts that by 2027 organizations will deploy task-specific models at three times the volume of general-purpose LLMs, up from just 1% domain-specific adoption in 2024. The implication is clear: teams need a way to specialize models cheaply and quickly.

That method is LoRA. This post explains how it works, when to use it, how to implement it, and how it compares to alternatives.

How LoRA Works: The Math Behind the Magic

LoRA reduces trainable parameters by up to 10,000x relative to full fine-tuning while matching its accuracy on most benchmark tasks. It achieves this through a single elegant insight about how neural networks learn.

During pretraining, a large language model learns dense weight matrices that encode general world knowledge. LoRA rests on the hypothesis that when you fine-tune for a specific task, the change in those weights has a low intrinsic rank, meaning the delta can be closely approximated by the product of two much smaller matrices.

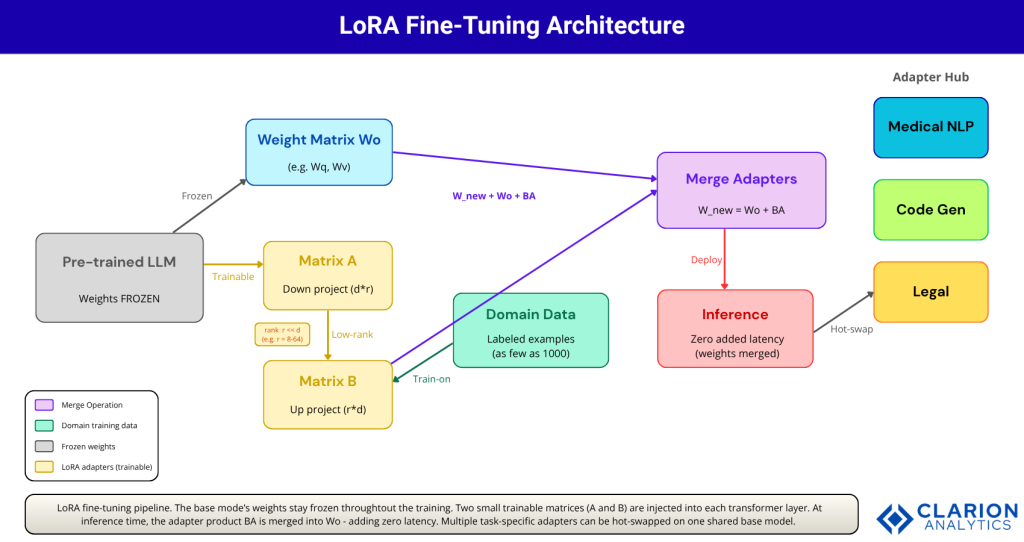

The LoRA equation is: W_new = W₀ + BA, where W₀ is the frozen pretrained weight matrix (d × k), A is a down-projection matrix (r × k), B is an up-projection matrix (d × r), and r is the rank, typically 8 to 64, far smaller than the full matrix dimensions. The product BA therefore has the same d × k shape as W₀. According to the foundational LoRA paper (Hu et al., 2021), on GPT-3 175B this reduces the checkpoint size from 350GB to just 35MB while cutting VRAM from 1.2TB to 350GB.
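To make the savings concrete, here is a rough back-of-the-envelope count in Python. The shapes are assumptions chosen to resemble GPT-3 175B (96 layers, hidden size 12288, square query and value projections) with rank 4; the function name `lora_param_count` is purely illustrative and not part of any library.

```python
def lora_param_count(d_out: int, d_in: int, r: int) -> int:
    """Trainable parameters for one adapted matrix: B is d_out x r, A is r x d_in."""
    return r * (d_out + d_in)


# Assumed GPT-3 175B-like shapes; adjust for your own model.
N_LAYERS, D_MODEL, RANK = 96, 12288, 4
ADAPTED_PER_LAYER = 2  # query and value projections only

full_per_matrix = D_MODEL * D_MODEL                      # one dense weight matrix
lora_per_matrix = lora_param_count(D_MODEL, D_MODEL, RANK)
total_lora = lora_per_matrix * ADAPTED_PER_LAYER * N_LAYERS
checkpoint_mb = total_lora * 2 / 1e6                     # 2 bytes per parameter in 16-bit

print(f"full matrix:  {full_per_matrix:,} params")
print(f"LoRA pair:    {lora_per_matrix:,} params")
print(f"all adapters: {total_lora:,} params, ~{checkpoint_mb:.1f} MB in 16-bit")
```

Under these assumptions the adapters total just under 19 million parameters, which lands in the same tens-of-megabytes range as the checkpoint size quoted above.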

Only A and B are updated during training. The base model weights never change. At inference time, BA is merged back into W₀, producing a single weight matrix with no additional computation cost and no added latency.
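The merge step is plain matrix algebra, and a toy example shows why it costs nothing at inference. The sketch below uses tiny integer matrices (d = 3, r = 1) and hand-rolled helpers so it runs without any dependencies; the values are arbitrary and only there to illustrate the identity (W₀ + BA)x = W₀x + B(Ax).

```python
def matmul(X, Y):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*Y)] for row in X]


def add(X, Y):
    """Element-wise sum of two same-shaped matrices."""
    return [[a + b for a, b in zip(rx, ry)] for rx, ry in zip(X, Y)]


# Toy sizes: d = 3, r = 1. Integer entries keep the comparison exact.
W0 = [[1, 0, 2], [0, 1, 0], [3, 0, 1]]  # frozen weight, 3 x 3
B = [[1], [2], [0]]                      # up-projection, 3 x 1
A = [[2, 0, 1]]                          # down-projection, 1 x 3
x = [[1], [2], [3]]                      # input column vector

# Unmerged: base path plus the low-rank side path (two extra small matmuls).
y_unmerged = add(matmul(W0, x), matmul(B, matmul(A, x)))

# Merged: fold BA into the weight once, then run a single matmul per request.
W_merged = add(W0, matmul(B, A))
y_merged = matmul(W_merged, x)

print(y_unmerged, y_merged)
```

Both paths produce the same output, so after merging the model runs exactly one matrix multiply per layer, as the base model did.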

LoRA doesn’t just cut costs; it unlocks fine-tuning for teams that were priced out of AI customization entirely.

QLoRA: Taking LoRA to Consumer Hardware

LoRA still requires loading the full base model in 16-bit precision, which remains memory-intensive for very large models. QLoRA, introduced by Dettmers et al. (2023, NeurIPS), solves that by quantizing the base model to 4-bit NF4 precision while keeping the LoRA adapter weights in 16-bit.

The result: QLoRA fine-tunes a 65B-parameter model on a single 48GB GPU while preserving full 16-bit fine-tuning task performance. The Guanaco model family, fine-tuned with QLoRA, reached 99.3% of ChatGPT’s performance level on the Vicuna benchmark after just 24 hours of training on one GPU. A 33B model can be fine-tuned on a single consumer-grade 24GB GPU such as an RTX 4090. The official QLoRA code is available on GitHub.

QLoRA introduces three innovations: 4-bit NormalFloat (NF4) quantization, double quantization to reduce the memory footprint further, and paged optimizers to handle memory spikes during training. Together they reduce the memory required to fine-tune a 65B-parameter model from over 780GB to under 48GB without performance degradation.
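The two memory figures can be sanity-checked with simple arithmetic. The per-parameter byte costs below are assumptions (a common accounting for 16-bit training with Adam, and a flat 0.5 bytes per weight for 4-bit storage), not numbers taken from the QLoRA paper itself.

```python
PARAMS = 65e9  # 65B-parameter model, as in the figures above

# Assumed cost of full 16-bit fine-tuning with Adam, per parameter:
# 2 bytes weight + 2 bytes gradient + 8 bytes optimizer state (two fp32 moments).
FULL_FT_BYTES_PER_PARAM = 2 + 2 + 8

# Assumed cost of the frozen base under 4-bit quantization: 0.5 bytes per weight.
# Quantization constants, adapter weights, and activations are ignored here.
NF4_BYTES_PER_PARAM = 0.5

full_ft_gb = PARAMS * FULL_FT_BYTES_PER_PARAM / 1e9
nf4_base_gb = PARAMS * NF4_BYTES_PER_PARAM / 1e9

print(f"full 16-bit fine-tuning: ~{full_ft_gb:.0f} GB")
print(f"4-bit frozen base:       ~{nf4_base_gb:.1f} GB")
```

The quantized base takes roughly 32.5GB under these assumptions, which leaves headroom on a 48GB card for adapters, activations, and optimizer state for the small trainable set.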

Real-World Use Cases: Where LoRA Is Already Winning

LoRA fine-tuning is deployed in production across a wide range of industries, driven by the enterprise shift toward domain-specific models that Gartner (2025) forecasts will account for over half of enterprise GenAI models by 2027.

Medical NLP. Hospitals use LoRA to fine-tune models on clinical notes, discharge summaries, and ICD coding, sensitive data that cannot leave the premises. A LoRA adapter of a few MB rides on a shared 7B base model, so training and serving can stay on-premises, which supports HIPAA-compliant deployment without retraining the base model.

Legal document processing. Law firms and compliance teams fine-tune on contract templates and regulatory guidance. A single base model hosts dozens of jurisdiction-specific adapters, each addressable per-request via adapter hot-swapping.

Code generation. According to research by Anyscale (2023), LoRA-based fine-tuning on Llama-2 outperforms GPT-4 on specialized tasks like SQL generation. The trade-off: roughly 2 percentage points of accuracy given up relative to full fine-tuning, in exchange for dramatically lower serving cost.

Multi-tenant SaaS. Platforms serving multiple enterprise customers load one base model into GPU memory and hot-swap LoRA adapters per tenant. This is now a standard pattern on cloud platforms including OpenAI, Together.AI, and RunPod.
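The hot-swapping pattern can be sketched as a toy router: one shared frozen weight, plus a small (B, A) pair looked up per request. `AdapterRouter` and the tenant names are hypothetical, invented for illustration; production serving stacks are considerably more involved (batching, GPU residency, eviction).

```python
from typing import Dict, List, Tuple

Matrix = List[List[int]]


def matmul(X: Matrix, Y: Matrix) -> Matrix:
    """Multiply two matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*Y)] for row in X]


def add(X: Matrix, Y: Matrix) -> Matrix:
    """Element-wise sum of two same-shaped matrices."""
    return [[a + b for a, b in zip(rx, ry)] for rx, ry in zip(X, Y)]


class AdapterRouter:
    """Hypothetical registry: one shared frozen weight, many small (B, A) pairs."""

    def __init__(self, base_weight: Matrix) -> None:
        self.base_weight = base_weight
        self.adapters: Dict[str, Tuple[Matrix, Matrix]] = {}

    def register(self, tenant: str, B: Matrix, A: Matrix) -> None:
        self.adapters[tenant] = (B, A)

    def forward(self, tenant: str, x: Matrix) -> Matrix:
        y = matmul(self.base_weight, x)        # shared base path
        if tenant in self.adapters:            # unknown tenants get the base model
            B, A = self.adapters[tenant]
            y = add(y, matmul(B, matmul(A, x)))  # tenant-specific low-rank path
        return y


router = AdapterRouter(base_weight=[[1, 0], [0, 1]])
router.register("legal", B=[[1], [0]], A=[[0, 1]])
router.register("medical", B=[[0], [1]], A=[[1, 0]])

x = [[2], [3]]
print(router.forward("legal", x), router.forward("medical", x), router.forward("other", x))
```

The base weight is stored once no matter how many tenants register, which is the property that makes per-tenant customization cheap.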

The real shift isn’t technical; it’s organizational. LoRA means a two-person ML team can now do what previously required a GPU cluster.

LoRA System Architecture: From Training to Inference

The LoRA pipeline consists of four distinct stages: freeze the base model, inject rank-decomposition adapters into attention layers, train only those adapters on domain data, then either merge them at inference time with zero latency penalty or keep them separate and hot-swap them at a small per-layer overhead.

Figure 1: LoRA fine-tuning pipeline. The base model’s weights stay frozen throughout training. Two small trainable matrices (A and B) are injected into each transformer layer. At inference time, the adapter product BA is merged into W₀, adding zero latency. Multiple task-specific adapters can be hot-swapped on one shared base model.

The adapter size matters. On a 7B model with rank r=16 targeting query and value projections, the adapter contains roughly 8.4M trainable parameters, about 0.12% of the base model. That figure assumes a Llama-2-7B-style layout with 32 layers and hidden size 4096: 16 × (4096 + 4096) parameters per projection, two projections per layer. The resulting checkpoint is typically under 50MB. The HuggingFace PEFT library (20,000+ GitHub stars, actively maintained through 2025) handles this entire workflow with a unified API.

Choosing the Right LoRA Variant: LoRA vs QLoRA vs AdaLoRA

Not every fine-tuning scenario is identical. LoRA is the right default for most cases. QLoRA is best when GPU memory is the bottleneck. AdaLoRA dynamically allocates rank based on layer importance, useful for complex tasks where different transformer layers need different update budgets.

| Method | Key Strength | Best Used When |
|---|---|---|
| LoRA | Memory-efficient; no inference latency when merged; widely supported via PEFT library | General domain adaptation; instruction tuning; adapting 7B–70B models on A100/H100 |
| QLoRA | Fine-tunes 65B models on a single 48GB GPU; 4-bit NF4 quantization preserves quality | Consumer hardware (RTX 3090/4090); budget-constrained teams; rapid prototyping |
| AdaLoRA | Dynamically allocates rank budget per layer based on weight importance; more efficient than fixed rank | Tasks where some layers need more capacity (e.g. complex reasoning, code generation) |
| Full Fine-Tuning | Maximum expressiveness; all weights updated; best ceiling performance | When budget allows and catastrophic forgetting is not a concern; small base models (< 3B) |
| Prefix Tuning | No weight modification; adapts via soft prompts; minimal storage overhead | Very fast task switching; deployment where model weights cannot be modified |

In practice, according to Databricks’ fine-tuning guide, LoRA can even outperform full fine-tuning in some cases by avoiding catastrophic forgetting, the phenomenon where fine-tuning overwrites general knowledge. Because the base model stays frozen, LoRA acts as a natural regularizer.

Implementing LoRA in Practice: Code and Configuration

The HuggingFace PEFT library sets up LoRA in under 10 lines. Set rank (r=16), lora_alpha (scaling factor, typically 2x rank), target the query and value projection layers, then wrap your model. The snippet below shows the complete setup for a causal LM.

Snippet 1: Basic LoRA Configuration with PEFT

Source: HuggingFace PEFT Documentation / huggingface/peft
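A minimal sketch of this setup, assuming the `transformers` and `peft` packages are installed, might look like the following. The target module names `q_proj` and `v_proj` assume a Llama-style architecture, the dropout value is an assumption not stated in the text, and `build_lora_model` is an illustrative helper rather than a PEFT function. The heavy imports are deferred into the function so the settings can be inspected on a machine without GPU libraries.

```python
# Plain-dict LoRA settings, kept separate so they are easy to inspect and log.
LORA_SETTINGS = {
    "r": 16,                                 # adapter rank
    "lora_alpha": 32,                        # scaling factor, 2x rank
    "target_modules": ["q_proj", "v_proj"],  # assumed Llama-style module names
    "lora_dropout": 0.05,                    # assumption: not specified in the text
    "bias": "none",
    "task_type": "CAUSAL_LM",
}


def build_lora_model(model_id: str):
    """Load a causal LM and wrap it with LoRA adapters.

    Requires `transformers` and `peft`; `model_id` is whatever checkpoint
    name or local path you intend to adapt.
    """
    from transformers import AutoModelForCausalLM
    from peft import LoraConfig, get_peft_model

    base = AutoModelForCausalLM.from_pretrained(model_id)
    model = get_peft_model(base, LoraConfig(**LORA_SETTINGS))
    model.print_trainable_parameters()  # reports trainable vs. total parameters
    return model
```

Calling `build_lora_model` with your own checkpoint name returns a model whose base weights are frozen and whose adapters are the only trainable tensors.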

Applied to a Llama-2-7B-style model, this configuration leaves roughly 8.4 million parameters, about 0.124% of the total, trainable. Rank 16 targeting only query and value projections is a sensible starting point for most instruction-tuning tasks.

Snippet 2: QLoRA 4-bit Configuration

Source: Dettmers et al., QLoRA (2023) / artidoro/qlora
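A hedged sketch of the 4-bit variant, assuming `torch`, `transformers`, `peft`, and `bitsandbytes` are installed on a CUDA machine, could look like this. As before, the module names assume a Llama-style model, the bfloat16 compute dtype and dropout value are assumptions, and `build_qlora_model` is an illustrative helper rather than a library function.

```python
# 4-bit loading options passed to BitsAndBytesConfig.
QUANT_SETTINGS = {
    "load_in_4bit": True,
    "bnb_4bit_quant_type": "nf4",        # 4-bit NormalFloat
    "bnb_4bit_use_double_quant": True,   # also quantize the quantization constants
}

# Same adapter settings as plain LoRA.
LORA_SETTINGS = {
    "r": 16,
    "lora_alpha": 32,
    "target_modules": ["q_proj", "v_proj"],  # assumed Llama-style module names
    "lora_dropout": 0.05,                    # assumption: not specified in the text
    "bias": "none",
    "task_type": "CAUSAL_LM",
}


def build_qlora_model(model_id: str):
    """Load a causal LM in 4-bit NF4 and attach 16-bit LoRA adapters."""
    import torch
    from transformers import AutoModelForCausalLM, BitsAndBytesConfig
    from peft import LoraConfig, get_peft_model, prepare_model_for_kbit_training

    bnb_config = BitsAndBytesConfig(
        bnb_4bit_compute_dtype=torch.bfloat16,  # assumed compute dtype
        **QUANT_SETTINGS,
    )
    base = AutoModelForCausalLM.from_pretrained(
        model_id, quantization_config=bnb_config, device_map="auto"
    )
    base = prepare_model_for_kbit_training(base)  # readies the quantized model for training
    model = get_peft_model(base, LoraConfig(**LORA_SETTINGS))
    model.print_trainable_parameters()
    return model
```

The third QLoRA ingredient, paged optimizers, is selected on the training side rather than here, for example through the optimizer option of whichever trainer you use.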

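This QLoRA configuration loads the base model in 4-bit NF4 precision. By the figures above, that puts a 33B model within reach of a single 24GB consumer GPU and a 65B-class model within reach of a single 48GB GPU; a 70B model needs about 35GB for its 4-bit weights alone, so it does not fit on a 24GB card. The BitsAndBytesConfig block is the main addition over standard LoRA; PEFT handles the adapter setup identically.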

Frequently Asked Questions About LoRA Fine-Tuning

Does LoRA fine-tuning add inference latency?

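No, not once the adapter is merged. Merging folds the adapter into the base weights (W_new = W₀ + BA), so the final model has exactly the same architecture and per-token compute as the base model, and there is zero additional inference latency. Unmerged adapters, which hot-swapping setups typically use, add a small extra matrix multiplication per adapted layer, and swapping one in only requires loading a few megabytes of weights.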

How do I choose the right LoRA rank?

Start with r=8 for simple classification or extraction tasks. Use r=16 to r=64 for instruction following or complex generation. Higher rank means more trainable parameters but not always better performance. Research by Anyscale suggests minimal gains beyond r=8 for many tasks, while the original LoRA paper found r=1 or r=2 often sufficient.

Can I fine-tune a 70B model on consumer hardware?

Nearly, with QLoRA. Combining 4-bit NF4 quantization with LoRA reduces the memory requirement for a 65B-parameter model from over 780GB to under 48GB. A single consumer RTX 4090 (24GB) can fine-tune a 33B model. Models in the 65B to 70B range need a 48GB workstation-class GPU such as an A6000, which can fine-tune a 65B model in 24 hours, as demonstrated by the Guanaco experiments in Dettmers et al. (2023).

What is the accuracy difference between LoRA and full fine-tuning?

The gap is small. Anyscale’s benchmark (2023) on Llama-2 shows LoRA trades roughly 2% accuracy (95% vs 97%) against full fine-tuning on the 13B model. For most production use cases, that trade-off is well worth the 10,000x reduction in trainable parameters and the cost savings.

How many training examples do I need for LoRA fine-tuning?

Far fewer than you might expect. QLoRA’s Guanaco model achieved near-ChatGPT performance using a few thousand high-quality labeled examples. Practitioners often report that 1,000 carefully curated examples outperform 100,000 noisy ones. Quality of labeling and instruction format matters more than raw dataset size, so data curation deserves as much attention as technique.

The Bottom Line: LoRA Is the New Baseline for LLM Adaptation

Three takeaways stand out from the evidence. First: LoRA makes enterprise fine-tuning economically viable. By cutting trainable parameters by up to 10,000x and GPU memory by about 3x, it moves domain-specific model adaptation from a capital project to a sprint-level task. Second: accuracy is not materially compromised. On most benchmarks, LoRA matches full fine-tuning within 1–2 percentage points, and QLoRA’s Guanaco reached 99.3% of ChatGPT performance after one day on one GPU.

Third: the enterprise tailwind is real. Gartner (2025) forecasts that task-specific model deployments will outpace general-purpose LLMs 3:1 by 2027. The teams that master efficient fine-tuning now will be the ones shipping differentiated AI products two years from now. The question worth sitting with: what proprietary data do you have today that could become a competitive moat with 1,000 fine-tuned examples and a 24GB GPU?