A multi-agent AI system (MAS) is an architecture in which multiple specialized LLM-powered agents coordinate through a shared orchestration layer to plan, execute, and verify complex tasks. Each agent holds a discrete role, calls external tools, and reads from or writes to a shared memory store. Unlike single-model pipelines, MAS distribute cognitive load across agents, allowing parallel execution, role specialization, and failure isolation. The orchestrator routes tasks, manages state, and enforces termination conditions.

Why Most Agentic AI Projects Stall Before Production

Multi-agent AI systems have attracted explosive interest: Gartner (2025) recorded a 1,445% surge in enterprise inquiries about MAS between Q1 2024 and Q2 2025. Yet Gartner also issues a sobering warning: it predicts that over 40% of agentic AI projects will be canceled by the end of 2027, primarily due to escalating costs, unclear ROI, and inadequate risk controls.

McKinsey’s 2025 State of AI report adds further context: fewer than 10% of deployed AI use cases make it past the pilot stage. Only 23% of organizations are currently scaling an agentic system anywhere in their enterprise. The gap between demo and production is real, and it is architectural.

Most agentic AI projects fail not because the models are weak, but because orchestration, memory, and tool use are designed without production constraints in mind. This guide covers the patterns, decisions, and code primitives that close that gap.

The Three-Layer Architecture of a Production Multi-Agent System

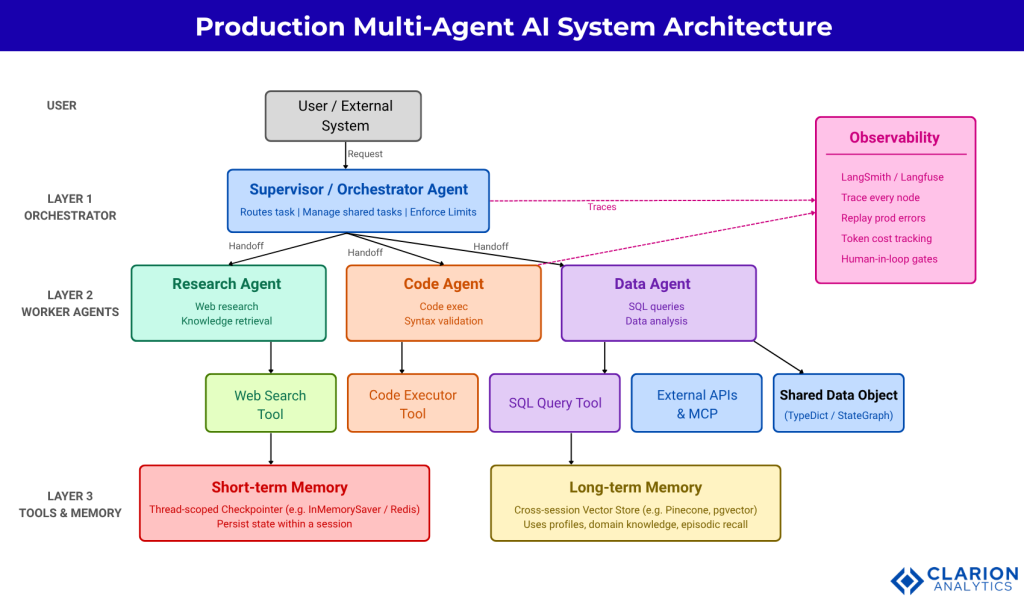

A production multi-agent system separates concerns across three layers: an orchestrator that routes and supervises, specialized worker agents that execute, and a unified memory-and-tool layer that persists state and surfaces external data.

Layer 1: The orchestrator receives incoming tasks, decomposes them into subtasks, selects the appropriate worker agent, and monitors completion. It owns the termination condition and the recursion limit. If the orchestrator is poorly designed, every downstream agent inherits the failure.

Layer 2: Worker agents are narrow specialists. A research agent runs web searches. A code agent executes Python. A data agent queries SQL. Each agent has its own system prompt, its own tool set, and its own failure mode. Keeping them narrow improves both accuracy and debuggability.

Layer 3: Tools and memory make the system stateful. Tools are external integrations that the agents can invoke. Memory tiers persist information across turns and sessions. This layer is where most teams under-invest, and where most production failures originate.

“The orchestration layer is the nervous system of a multi-agent system. Get it wrong, and every agent downstream inherits the failure.”

Orchestration Patterns You Can Actually Deploy

The supervisor pattern works best when tasks have clear owner-agent boundaries; the swarm pattern excels at dynamic handoffs; the planner-executor pattern suits multi-step research or coding tasks requiring sequential verification.

Research by Renney et al. (2026) formalizes three dominant orchestration patterns for LLM-enabled MAS. The supervisor / hierarchical pattern uses a central controller that selects and delegates to specialized subagents via tool-call handoffs. The swarm pattern allows agents to dynamically hand off control to peers based on specialization, with the system remembering which agent was last active. The planner-executor pattern generates a full plan upfront and passes subtasks to executor agents sequentially, making it well-suited for tasks with known structure such as report generation or code review pipelines.

Separately, Zhou and Chan (2026) show that deterministic orchestration, where routing decisions follow stable, reproducible rules rather than emergent LLM-to-LLM negotiation, yields higher accuracy and lower inference cost in production. This is a critical design principle: prefer explicit routing logic over fully emergent behaviour whenever the task structure is known.
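To make the idea of explicit routing concrete, here is a minimal, framework-free sketch. It is not the method from either paper: the agent functions, the `Route` class, and the keyword rules are all hypothetical stand-ins, and a real supervisor would route on richer signals than keywords. The point is only that the same task always reaches the same agent.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical worker agents: plain functions standing in for LLM-backed agents.
def research_agent(task: str) -> str:
    return f"research: {task}"

def code_agent(task: str) -> str:
    return f"code: {task}"

def data_agent(task: str) -> str:
    return f"data: {task}"

@dataclass(frozen=True)
class Route:
    keywords: tuple  # lowercase words that trigger this route
    agent: Callable[[str], str]

# Rules are checked in order and the first match wins, so routing is reproducible.
ROUTES = (
    Route(("sql", "query", "table"), data_agent),
    Route(("python", "bug", "refactor"), code_agent),
)
DEFAULT_AGENT = research_agent

def route(task: str) -> Callable[[str], str]:
    """Pick a worker with fixed rules instead of asking a model to decide."""
    words = set(task.lower().split())
    for rule in ROUTES:
        if words & set(rule.keywords):
            return rule.agent
    return DEFAULT_AGENT

def supervise(task: str) -> str:
    """Route the task, then run the selected worker."""
    return route(task)(task)
```

Because the rules live in code, a routing regression shows up in a unit test rather than in a production trace.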

In practice, teams building supervisor-pattern systems with LangGraph commonly use the create_supervisor() primitive paired with compile-time memory injection. The snippet below shows the production pattern.

Source: langchain-ai/langgraph-supervisor-py (README, supervisor compile section)

This snippet illustrates compile-time memory injection: the two-tier memory architecture (short-term checkpointer for session state, long-term store for cross-session knowledge) is declared once at compile time and automatically propagated to every agent in the graph. Swapping InMemorySaver for RedisCheckpointSaver requires a single-line change, a clear advantage of framework-level abstraction over hand-rolled orchestration.
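The injection idea itself can be shown without any framework. The sketch below is not the README code and does not use LangGraph: `DictCheckpointer`, `DictStore`, `Agent`, and `compile_graph` are hypothetical names invented for illustration. It only demonstrates the shape of the pattern, where two memory tiers are created once and handed to every agent in a single step.

```python
from dataclasses import dataclass
from typing import Any, Optional

class DictCheckpointer:
    """Short-term tier (hypothetical): messages keyed by thread (session) id."""
    def __init__(self) -> None:
        self._threads: dict = {}

    def append(self, thread_id: str, message: str) -> None:
        self._threads.setdefault(thread_id, []).append(message)

    def history(self, thread_id: str) -> list:
        return list(self._threads.get(thread_id, []))

class DictStore:
    """Long-term tier (hypothetical): facts keyed by user, shared across sessions."""
    def __init__(self) -> None:
        self._facts: dict = {}

    def put(self, user_id: str, key: str, value: Any) -> None:
        self._facts.setdefault(user_id, {})[key] = value

    def get(self, user_id: str, key: str, default: Any = None) -> Any:
        return self._facts.get(user_id, {}).get(key, default)

@dataclass
class Agent:
    name: str
    checkpointer: Optional[DictCheckpointer] = None
    store: Optional[DictStore] = None

def compile_graph(agents: list, checkpointer: DictCheckpointer, store: DictStore) -> dict:
    """Inject the same two memory tiers into every agent, once."""
    for agent in agents:
        agent.checkpointer = checkpointer
        agent.store = store
    return {agent.name: agent for agent in agents}
```

Swapping the backing implementation then means changing the object passed to `compile_graph`, with no change to any agent.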

Memory Architecture: The Tier That Breaks Most Agents

Production agents need at minimum two memory tiers: a short-term checkpointer that persists conversation state within a session and a long-term store that retrieves user-specific or domain knowledge across sessions.

Most teams start with in-context memory, storing everything in the model’s active prompt window, and discover the hard way that this fails at scale. At turn 30 of a long task, the context fills, the model starts hallucinating earlier context, and tool calls begin returning inconsistent results. The fix is architectural, not a better prompt.
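One way to picture the architectural fix is a bounded prompt window backed by a durable log. The sketch below is an assumption-laden illustration, not a library API: `WorkingMemory` is a hypothetical class, the budget is counted in turns rather than tokens, and a plain list stands in for a real checkpointer.

```python
from collections import deque

class WorkingMemory:
    """Keep only the newest `max_turns` messages in the prompt window.

    Every message is also appended to `archive`, a list standing in for a
    durable checkpointer, so nothing is lost when the window evicts it.
    """
    def __init__(self, max_turns: int) -> None:
        self.window = deque(maxlen=max_turns)
        self.archive = []

    def add(self, message: str) -> None:
        self.archive.append(message)  # full history survives outside the prompt
        self.window.append(message)   # oldest entry is evicted automatically

    def prompt_context(self) -> list:
        """Messages that would actually be sent to the model."""
        return list(self.window)
```

With a window of three turns, the model at turn 30 sees only the three most recent messages, while all 30 remain retrievable from the archive.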

Research on agent memory now identifies four distinct tiers. In-context (working) memory lives in the active prompt window and resets per session. Short-term (episodic) memory uses a thread-scoped checkpointer (Redis, SQLite, or an in-memory saver) to persist the full conversation state within a session, enabling fault tolerance and human-in-the-loop interrupts. Long-term (semantic) memory uses a vector store or graph database to retrieve user profiles, domain facts, and historical interactions across sessions. Procedural memory encodes tool schemas and agent role definitions: the “how to act” knowledge that lives in system prompts and tool registries.

Xu et al.’s A-MEM paper (2025) proposes a Zettelkasten-inspired dynamic memory system that generates interconnected knowledge networks for LLM agents, creating structured notes with contextual descriptions, keywords, and cross-links whenever a new memory is added. This outperforms static retrieval approaches and points to where production memory systems are heading: away from flat vector stores toward graph-structured, self-organizing memory.

“Agents that rely on context-window memory alone will hallucinate at turn 30. The fix is architectural, not a better prompt.”

Tool Use That Does Not Break in Production

Reliable tool use in production requires three guarantees: schema validation before dispatch, idempotent execution so retries do not corrupt state, and a hard exit condition in the graph that prevents infinite tool-call loops.
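The first guarantee, schema validation before dispatch, can be sketched in a few lines. Everything here is hypothetical: the `TOOLS` registry, the `get_weather` tool, and `ToolValidationError` are illustrative names, and a production system would more likely use a schema library than hand-written type checks.

```python
from typing import Any

def get_weather(city: str, days: int) -> str:
    """Hypothetical tool implementation."""
    return f"{days}-day forecast for {city}"

# Hypothetical registry: tool name -> (argument schema, implementation).
TOOLS = {
    "get_weather": ({"city": str, "days": int}, get_weather),
}

class ToolValidationError(ValueError):
    """Raised when a model-proposed tool call does not match the schema."""

def dispatch(name: str, args: dict) -> Any:
    """Validate a tool call against its schema, then execute it."""
    if name not in TOOLS:
        raise ToolValidationError(f"unknown tool: {name}")
    schema, func = TOOLS[name]
    missing = schema.keys() - args.keys()
    extra = args.keys() - schema.keys()
    if missing or extra:
        raise ToolValidationError(f"missing={sorted(missing)} extra={sorted(extra)}")
    for key, expected in schema.items():
        value = args[key]
        # bool is a subclass of int in Python, so reject it for int fields.
        if not isinstance(value, expected) or (expected is int and isinstance(value, bool)):
            raise ToolValidationError(f"{key} must be {expected.__name__}")
    return func(**args)
```

A malformed call fails fast with a message the orchestrator can feed back to the model, instead of reaching the external system.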

The most common tool-use failure in production is an agent that enters an infinite loop of tool calls because no graph-level termination condition exists. The model keeps calling tools, receiving results, and deciding it needs more information, burning tokens and never returning. The fix is a conditional routing edge that inspects each LLM output before dispatching to the tool node.

Teams building this typically find that the routing logic itself is simple (“does this message contain tool_calls?”), but it is invisible in many higher-level frameworks. LangGraph exposes it explicitly as a conditional edge, which is one of the reasons it is so widely used in production deployments.

Source: FareedKhan-dev/Multi-Agent-AI-System (state.py / graph assembly)

This conditional edge is the single most important production guard in any agent graph. It ensures the agent loop terminates when the model stops requesting tools, regardless of how many intermediate tool-call cycles occurred. Combine this with a recursion_limit set in the configuration passed when the compiled graph is invoked, and you have two independent guardrails against runaway cost.
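The two guardrails can be illustrated without any framework. The sketch below is not the code from the cited repository and does not use LangGraph: `should_continue`, `run_graph`, and `fake_agent` are hypothetical names, messages are plain dicts, and `fake_agent` is a stub standing in for a real LLM call.

```python
END = "end"

def should_continue(state: dict) -> str:
    """Conditional edge: go to the tool node only if the last message requests tools."""
    last = state["messages"][-1]
    return "tools" if last.get("tool_calls") else END

def run_graph(state: dict, agent, tools: dict, recursion_limit: int = 25) -> dict:
    """Alternate agent and tool steps until the router says END or the limit is hit."""
    for _ in range(recursion_limit):
        state["messages"].append(agent(state))
        if should_continue(state) == END:
            return state
        for call in state["messages"][-1]["tool_calls"]:
            result = tools[call["name"]](**call["args"])
            state["messages"].append({"role": "tool", "content": result})
    raise RuntimeError("recursion limit reached without a final answer")

def fake_agent(state: dict) -> dict:
    """Hypothetical stand-in for an LLM: request one tool call, then answer."""
    if not any(m["role"] == "tool" for m in state["messages"]):
        call = {"name": "add", "args": {"a": 2, "b": 3}}
        return {"role": "assistant", "content": "", "tool_calls": [call]}
    total = state["messages"][-1]["content"]
    return {"role": "assistant", "content": f"The sum is {total}"}
```

The router ends the loop in the normal case; the step cap only fires when the model never stops asking for tools, which is exactly the runaway-cost scenario.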

Beyond loop control, production tool schemas must be deterministic and idempotent. A tool that writes a database record should check for existence before inserting. A tool that sends an email must not resend on retry. Document each tool’s side-effect contract explicitly in the tool description string; the LLM reads it, and so does every engineer who debugs a production failure.
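A small sketch of what an idempotent tool might look like, assuming the caller supplies a stable idempotency key per logical action. `EmailOutbox` is a hypothetical class; it records emails in a list instead of sending them, and a real implementation would persist the seen keys in durable storage.

```python
class EmailOutbox:
    """Hypothetical email tool that is safe to retry."""

    def __init__(self) -> None:
        self.sent = []          # (recipient, body) pairs actually "sent"
        self._seen_keys = set()  # idempotency keys already processed

    def send(self, idempotency_key: str, to: str, body: str) -> str:
        """Send an email at most once per idempotency_key.

        Side-effect contract: the first call with a given key appends to
        `sent`; any retry with the same key is a no-op.
        """
        if idempotency_key in self._seen_keys:
            return "duplicate-skipped"
        self._seen_keys.add(idempotency_key)
        self.sent.append((to, body))
        return "sent"
```

The docstring doubles as the side-effect contract: it is what the model sees in the tool description and what an engineer reads during an incident.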

“Production is where agent systems earn their keep. It is also where they fail most spectacularly if the foundation was built for demos, not durability.”

Choosing Your Framework: LangGraph, CrewAI, or Microsoft Agent Framework

LangGraph is the strongest choice for stateful, graph-controlled workflows; CrewAI offers the fastest path to role-based multi-agent pipelines; Microsoft Agent Framework (the successor to AutoGen) is optimized for Azure-native enterprise deployments.

LangGraph reached general availability in May 2025 and now powers agents at nearly 400 companies, including LinkedIn, Uber, Replit, Elastic, and BlackRock. Its directed-graph model with built-in cyclical execution, state persistence, and LangSmith observability makes it the benchmark for production-grade orchestration. Monthly downloads exceed 34.5 million. The tradeoff is the learning curve: LangGraph’s low-level primitives require explicit state schema design and graph construction.

CrewAI launched in early 2024 and has grown to over 44,000 GitHub stars with 60 million agent executions monthly, used by 60% of Fortune 500 companies for agentic pipelines. Its role-based abstraction (Crew = team, Flows = event-driven pipelines) is the fastest path to a working multi-agent system. Independent of LangChain, it runs 5.76x faster than LangGraph in certain benchmarks. The tradeoff: control over state transitions is less granular, and workflows that diverge from the role-based mental model are harder to customize.

Microsoft Agent Framework (MAF), merged from AutoGen and Semantic Kernel in October 2025, targets organizations in the Azure ecosystem. It brings AutoGen’s multi-agent conversation patterns together with Semantic Kernel’s enterprise features: type safety, observability middleware, plugin connectors, and multi-language support (Python, C#, Java). AutoGen v0.4 remains in maintenance mode for existing projects.

Framework Comparison

| Framework | Key Strength | Best Used When |
|---|---|---|
| LangGraph (langchain-ai) | Graph-based state control, cycles, conditional routing, built-in checkpointing; 34.5M monthly downloads | You need fine-grained orchestration with loops, branching, human-in-the-loop checkpoints, or fault-tolerant long-running workflows |
| CrewAI (crewAIInc) | Role-based abstraction, 5.76x faster in select benchmarks, 44K+ stars, 60M+ executions/month; independent of LangChain | You need rapid multi-agent pipeline prototyping with clear role separation and minimal orchestration boilerplate |
| Microsoft Agent Framework (AutoGen successor) | Deep Azure/OpenAI integration, enterprise compliance, multi-language (Python, C#, Java), AutoGen conversation patterns plus Semantic Kernel enterprise middleware | Your org is Azure-native and needs enterprise SLAs, compliance controls, and integration with Copilot Studio or existing .NET services |

System Architecture Reference

Figure 1: Production multi-agent AI system with three layers. The Supervisor routes requests to specialized Worker Agents via tool-call handoffs. Each agent reads from a shared Tool Registry and writes results to a centralized State Object. Two memory tiers handle persistence: a short-term thread-scoped Checkpointer and a long-term cross-session Vector Store. All node executions emit traces to an Observability backend (e.g., LangSmith) for debugging and cost governance. Solid arrows = request/task flow; dashed arrows = memory read/write and trace emission.

Observability and Human-in-the-Loop Controls

No production multi-agent system should run without observability. Without traces, debugging a failure that occurred 14 tool-call steps into an agent graph is effectively impossible.

McKinsey (2025) notes that agentic AI can automate 60 to 80% of routine infrastructure work over time, but achieving those gains requires governance infrastructure from day one, not bolted on after the first production incident. The two minimum viable observability requirements for any production MAS are a tracing platform that captures every node execution, state transition, tool call, and token count, and human-in-the-loop checkpoints at high-stakes decision points.
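As a rough picture of what a trace captures per node, here is a framework-free sketch. It is not the LangSmith, Langfuse, or OpenTelemetry API: the `traced` decorator, the in-memory `TRACES` list, and the convention that a node returns its token usage under a `tokens` key are all assumptions made for illustration.

```python
import time
from functools import wraps

# Hypothetical in-memory trace sink; a real system would export spans to a backend.
TRACES = []

def traced(node_name: str):
    """Record status, duration, and token count for every execution of a node."""
    def decorator(func):
        @wraps(func)
        def wrapper(state: dict) -> dict:
            start = time.perf_counter()
            span = {"node": node_name, "status": "error", "tokens": 0}
            try:
                result = func(state)
                span["status"] = "ok"
                # Assumption: nodes report token usage under a "tokens" key.
                span["tokens"] = result.get("tokens", 0)
                return result
            finally:
                # Runs on success and on failure, so failed steps are traced too.
                span["duration_s"] = time.perf_counter() - start
                TRACES.append(span)
        return wrapper
    return decorator

@traced("research")
def research_node(state: dict) -> dict:
    """Hypothetical node standing in for an LLM-backed agent step."""
    return {"answer": f"notes on {state['topic']}", "tokens": 42}
```

Because the span is written in a `finally` block, the step that failed 14 calls deep still leaves a record of where and how long it ran.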

LangSmith (for LangGraph), Langfuse, and OpenTelemetry-compatible backends all support the trace-emit pattern. Configure your graph to checkpoint before any irreversible action (sending an email, writing a database record, or calling a paid API) and route those checkpoints to a human-approval queue. This single architectural decision guards against the most severe class of production failures.
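The approval-queue idea can be sketched as a gate that sits between the agent and its tools. This is an illustration under stated assumptions, not a framework feature: `ApprovalGate`, the `IRREVERSIBLE` set, and the action names are hypothetical, and the queue is an in-memory list rather than a durable checkpoint.

```python
from dataclasses import dataclass, field

# Hypothetical set of action names that must never run without human sign-off.
IRREVERSIBLE = {"send_email", "write_record", "call_paid_api"}

@dataclass
class ApprovalGate:
    """Hold irreversible actions in a queue until a human approves them."""
    pending: list = field(default_factory=list)

    def submit(self, action: str, args: dict, execute):
        """Run reversible actions immediately; park irreversible ones."""
        if action in IRREVERSIBLE:
            self.pending.append((action, args, execute))
            return "awaiting_approval"
        return execute(**args)

    def approve(self, index: int = 0):
        """Called by the human reviewer: execute the queued action."""
        _action, args, execute = self.pending.pop(index)
        return execute(**args)

    def reject(self, index: int = 0) -> str:
        """Called by the human reviewer: drop the queued action unexecuted."""
        action, _args, _execute = self.pending.pop(index)
        return f"rejected:{action}"
```

The agent receives `awaiting_approval` as a normal tool result, so the graph can pause or continue with other work while the reviewer decides.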

Frequently Asked Questions

How does LLM orchestration work in a multi-agent system?

A central orchestrator agent receives a task, decomposes it into subtasks, and routes each subtask to a specialized worker agent via tool calls or message handoffs. The orchestrator monitors outputs, manages shared state, and decides when a task is complete or when to escalate. This loop continues until a termination condition or recursion limit is reached.

What is the difference between in-context memory and external memory for AI agents?

In-context memory lives inside the model’s active prompt window and is erased when the session ends. External memory persists beyond the context window using databases or vector stores, enabling agents to recall information across sessions. Production agents typically combine both: the prompt window holds recent reasoning steps while a vector store handles long-term facts and user history.

Which multi-agent AI framework is best for production deployments?

LangGraph is the most widely adopted for complex production workflows, used by LinkedIn, Uber, and Replit. CrewAI is faster to implement for role-based pipelines. The Microsoft Agent Framework suits Azure-centric enterprises. Framework choice should match your workflow’s statefulness requirements and your team’s operational model, not GitHub star count. See the firecrawl.dev agent framework comparison (2026) for current benchmarks.

How do I prevent AI agents from looping indefinitely when calling tools?

Set a recursion limit at the graph level and use a conditional edge function that inspects each LLM output. If no tool calls are present in the last message, the graph routes to END rather than back to the tool node. Additionally, configure a max_consecutive_auto_reply on any conversational agents and add an explicit “done” signal the model can use to terminate the loop.

What are the biggest reasons agentic AI projects fail in production?

According to Gartner (2025), over 40% of agentic AI projects face cancellation by 2027, primarily due to escalating costs, unclear ROI, and inadequate risk controls. In engineering terms, these tend to surface as three root causes: uncontrolled inference costs from runaway agent loops, zero observability into agent behavior (no tracing), and security gaps such as prompt injection via tool outputs. The fix requires framework-level recursion limits, a tracing platform like LangSmith, and schema-validated tool calls before dispatch.

Three Things That Separate Production Systems from Demos

First: deterministic orchestration patterns beat emergent ones in production. Define routing rules explicitly in your graph edges. Do not rely on the model to self-organize without guardrails. The ORCH research by Zhou and Chan (2026) validates this empirically: deterministic routing achieves higher accuracy and lower cost than non-deterministic alternatives.

Second: memory architecture is a design decision, not a default. Every production agent system needs at minimum two memory tiers: session-scoped checkpointing and a cross-session retrieval store. Choose and wire them at compile time. Retrofitting memory architecture after deployment is expensive and usually incomplete.

Third: tool reliability requires graph-level contracts, not model-level assumptions. The conditional edge that routes on tool_calls presence, the recursion limit that caps the loop, and the idempotent tool schema that survives retries are engineering decisions that belong in the code, not in the system prompt.

The real question for any team building agentic systems is not “can we ship a demo?” It is “what breaks at scale, and have we designed for it?”