LLM fine-tuning for enterprise is the process of continuing the training of a pre-trained large language model on a curated, domain-specific dataset to adapt its outputs to proprietary workflows, terminology, and compliance requirements. Unlike prompt engineering or retrieval-augmented generation (RAG), fine-tuning modifies the model’s weights, producing persistent behavioral changes that survive across sessions and require no retrieval infrastructure at inference time.

Why Generic LLMs Fall Short in Enterprise Contexts

Generic LLMs trained on public data lack domain vocabulary, internal process context, and compliance alignment. Fine-tuning on proprietary datasets corrects this by encoding enterprise-specific knowledge directly into model weights, producing consistent behavior without retrieval infrastructure.

Gartner (2025) projects that by 2027, more than 50% of enterprise GenAI models will be domain-specific, up from just 1% in 2024. The message is clear: general-purpose LLMs are the starting point, not the destination.

LLM fine-tuning for enterprise solves a problem that prompting cannot. A foundation model trained on public internet data does not know your internal product taxonomy, regulatory classification rules, or preferred response format. Prompt engineering helps at the margins, but it does not change what the model has learned. Fine-tuning does.

McKinsey (2025) found that while AI adoption has accelerated broadly, only around one-third of organizations report scaling AI across the enterprise. The bottleneck is not model capability; it is model fit. Generic models underperform on specialized tasks that require domain vocabulary, process context, and compliance alignment.

“Your proprietary data is not just an input; it is the competitive moat that a fine-tuned model encodes permanently into its weights.”

Choosing the Right Strategy: RAG, Fine-Tuning, or Both

RAG retrieves facts dynamically and suits frequently changing knowledge bases. Fine-tuning bakes behavior and style into weights and suits stable domain tasks. Hybrid approaches (fine-tuning for behavior, RAG for knowledge) typically outperform either approach alone on knowledge-heavy enterprise workflows.

The choice between RAG and fine-tuning is not binary. It depends on two dimensions: how often the knowledge changes, and how critical it is that the model behaves consistently. Use this framework:

- Fine-tune for stable behavior: output format, tone, classification rules, domain terminology.

- Use RAG for dynamic knowledge: product catalogs, policy documents, real-time pricing.

- Use both when you need consistent behavior and current knowledge: a fine-tuned model querying a curated retrieval store.
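The framework above can be sketched as a small decision helper. The function name, the enum, and the two boolean inputs are illustrative assumptions; the fallback to prompting when neither dimension applies is also an assumption rather than something the framework states.

```python
from enum import Enum


class Strategy(Enum):
    PROMPT_ONLY = "prompt engineering only"
    FINE_TUNE = "fine-tune"
    RAG = "RAG"
    HYBRID = "fine-tune + RAG"


def choose_strategy(knowledge_changes_often: bool,
                    needs_consistent_behavior: bool) -> Strategy:
    """Map the two dimensions of the framework to a strategy."""
    if knowledge_changes_often and needs_consistent_behavior:
        return Strategy.HYBRID
    if knowledge_changes_often:
        return Strategy.RAG
    if needs_consistent_behavior:
        return Strategy.FINE_TUNE
    # Assumed fallback: neither dimension applies, so prompting may be enough.
    return Strategy.PROMPT_ONLY


# Example: real-time pricing answers that must follow a fixed response format.
print(choose_strategy(knowledge_changes_often=True,
                      needs_consistent_behavior=True).value)
```

In practice the two inputs are rarely clean booleans, so a helper like this is most useful as a checklist during project scoping.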

Below is a comparison of the main fine-tuning approaches available to enterprise teams.

| Approach | Key Strength | Best Used When |
|---|---|---|
| Full Fine-Tuning | Maximum accuracy; all weights updated | You have large labelled datasets (100k+), unlimited GPU budget, and need peak task performance |
| LoRA (Low-Rank Adaptation) | Updates less than 1% of parameters; adapter is portable and mergeable with base model | You have moderate data (1k-50k examples), standard GPU budget, and want reusable adapters per task |
| QLoRA (4-bit Quantized LoRA) | 70% less VRAM vs LoRA; fine-tunes 65B models on a single 48GB GPU | You have constrained hardware, need to fine-tune large models, or want rapid iteration on a budget |
| Instruction Tuning (SFT) | Aligns model behavior to enterprise task format and tone | You need to control output style, format, and instruction-following without changing factual knowledge |
| RAG + Fine-Tuning (Hybrid) | Combines static weight-encoded behavior with dynamic knowledge retrieval | Knowledge changes frequently but behavior and tone must remain consistent |

“QLoRA’s 4-bit quantization is not a quality compromise; it is the enterprise team’s ticket to fine-tuning a 65B model on a single GPU.”

The PEFT Toolkit: LoRA, QLoRA, and Instruction Tuning Explained

LoRA injects trainable low-rank matrices into frozen model layers, updating less than 1% of total parameters. QLoRA adds 4-bit quantization on top, enabling fine-tuning of 65B-parameter models on a single 48GB GPU with no meaningful accuracy loss.

LoRA: Low-Rank Adaptation

LoRA, introduced by Hu et al. (2021), addresses the core problem of enterprise fine-tuning: updating billions of parameters is expensive, but skipping fine-tuning leaves the model unfit for domain tasks. LoRA freezes the pre-trained weights and injects small, trainable matrices into the transformer layers. These low-rank matrices are combined with the frozen weights during inference, adding zero latency overhead once merged.
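Written out in the notation of Hu et al., the adapted layer looks as follows. The symbols d, k, r, and α follow the paper's conventions rather than anything defined in this article, and the α/r scaling is the one used there.

```latex
\[
h = W_0 x + \frac{\alpha}{r}\, B A x, \qquad
W_0 \in \mathbb{R}^{d \times k},\quad
B \in \mathbb{R}^{d \times r},\quad
A \in \mathbb{R}^{r \times k},\quad
r \ll \min(d, k)
\]
\[
W_{\text{merged}} = W_0 + \frac{\alpha}{r}\, B A
\]
```

Only A and B are trained, which is r(d + k) parameters per layer instead of dk. Because the merged matrix has the same shape as the original weight, a merged model runs with the same inference cost as the base model.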

In practice, LoRA updates fewer than 1% of model parameters. For a 7B parameter model, that means training roughly 4 to 40 million parameters instead of 7 billion, cutting GPU memory requirements by roughly 10 to 15x compared to full fine-tuning.
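The 4 to 40 million range can be reproduced with a short calculation. The layer shapes below are an assumption (a Llama-7B-style architecture with 32 blocks, hidden size 4096, and MLP size 11008); other 7B models will give slightly different numbers.

```python
# Assumed Llama-7B-style shapes (not stated in the article): 32 transformer
# blocks, hidden size 4096, MLP intermediate size 11008.
NUM_LAYERS = 32
HIDDEN = 4096
INTERMEDIATE = 11008

# (in_features, out_features) of each linear projection in one block.
PROJECTIONS = {
    "q_proj": (HIDDEN, HIDDEN),
    "k_proj": (HIDDEN, HIDDEN),
    "v_proj": (HIDDEN, HIDDEN),
    "o_proj": (HIDDEN, HIDDEN),
    "gate_proj": (HIDDEN, INTERMEDIATE),
    "up_proj": (HIDDEN, INTERMEDIATE),
    "down_proj": (INTERMEDIATE, HIDDEN),
}


def lora_param_count(rank: int, target_modules: list[str]) -> int:
    """Trainable parameters added by LoRA: rank * (in + out) per adapted layer."""
    per_block = sum(rank * sum(PROJECTIONS[name]) for name in target_modules)
    return per_block * NUM_LAYERS


small = lora_param_count(8, ["q_proj", "v_proj"])   # low rank, two projections
large = lora_param_count(16, list(PROJECTIONS))     # higher rank, all projections
print(f"r=8, q/v only:         {small:,} trainable parameters")
print(f"r=16, all projections: {large:,} trainable parameters")
```

Under these assumptions the low end is about 4.2 million parameters and the high end about 40 million, both well under 1% of 7 billion.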

QLoRA: Memory-Efficient Fine-Tuning at Scale

Dettmers et al. demonstrated in QLoRA (NeurIPS 2023) that combining 4-bit quantization with LoRA allows fine-tuning a 65B parameter model on a single 48GB GPU; their best model, Guanaco, reached 99.3% of ChatGPT’s performance level on the Vicuna benchmark after 24 hours of fine-tuning. QLoRA achieves this through three mechanisms: 4-bit NormalFloat (NF4) quantization for model weights, Double Quantization to compress quantization constants, and Paged Optimizers that handle memory spikes during training.
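A rough back-of-the-envelope calculation shows why 4-bit storage brings a 65B model within reach of a 48GB card. This is a sketch of weight memory only: it ignores activations, adapter weights, and optimizer state, and the block sizes (64 weights per block, 256 constants per second-level block) are taken from the QLoRA paper's description.

```python
def bits_per_param_for_constants(block_size: int = 64,
                                 double_quant: bool = True,
                                 second_level_block: int = 256) -> float:
    """Overhead of storing quantization constants, in bits per weight."""
    if not double_quant:
        # One 32-bit constant per block of weights.
        return 32 / block_size
    # 8-bit constants per block, plus 32-bit second-level constants.
    return 8 / block_size + 32 / (block_size * second_level_block)


def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Memory needed to hold the weights alone, in gigabytes (1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


PARAMS_65B = 65e9
fp16_gb = weight_memory_gb(PARAMS_65B, 16)
nf4_gb = weight_memory_gb(PARAMS_65B, 4 + bits_per_param_for_constants())

print(f"16-bit weights: {fp16_gb:.1f} GB")
print(f"NF4 + double quantization: {nf4_gb:.1f} GB")
```

Double Quantization trims the constant overhead from 0.5 to roughly 0.127 bits per weight, which is small per parameter but adds up to a few gigabytes at this scale.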

Instruction Tuning: Aligning Model Behavior to Enterprise Tasks

Instruction tuning, also called supervised fine-tuning (SFT), trains the model on (instruction, output) pairs rather than raw text. As surveyed by Zhang et al. (2023), instruction tuning bridges the gap between a model’s next-token prediction objective and the structured behavior enterprise tasks require. It controls output format, tone, domain terminology, and task-following behavior.

Code Snippet 1 – Source: huggingface/peft – README example

This five-line configuration wraps any causal LM with LoRA adapters, reducing trainable parameters to well under 1% of the total model. The rank parameter (r=16) controls adapter expressiveness: larger ranks capture more complex domain patterns but require more memory. Enterprise teams typically start at r=8 or r=16 and tune from there.
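A configuration along these lines might look like the sketch below, assuming the `transformers` and `peft` packages are installed. The `lora_alpha` and `lora_dropout` values and the helper name `wrap_with_lora` are assumptions for illustration, and the base model name is left to the caller.

```python
# r comes from the text; lora_alpha and lora_dropout are assumed starting values.
LORA_KWARGS = {"r": 16, "lora_alpha": 32, "lora_dropout": 0.05}


def wrap_with_lora(base_model_name: str):
    """Load a causal LM and attach LoRA adapters (needs transformers and peft)."""
    # Imported lazily so this module can be read without the heavy dependencies.
    from transformers import AutoModelForCausalLM
    from peft import LoraConfig, TaskType, get_peft_model

    model = AutoModelForCausalLM.from_pretrained(base_model_name)
    config = LoraConfig(task_type=TaskType.CAUSAL_LM, **LORA_KWARGS)
    model = get_peft_model(model, config)
    model.print_trainable_parameters()  # reports trainable vs. total parameters
    return model
```

No `target_modules` are given here, so PEFT falls back to its built-in defaults for architectures it recognizes; for other architectures the target layers need to be listed explicitly.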

“Spending 80% of your time on data quality and 20% on training is not inefficiency; it is the only sequence that produces a production-ready model.”

Data Preparation: The Make-or-Break Phase

Enterprise fine-tuning datasets should contain 1,000 to 10,000+ high-quality instruction-output pairs. Data sources include internal documentation, SOPs, support tickets, and expert-validated Q&A pairs. Quality consistently outweighs quantity; a dataset of 1,000 clean examples outperforms 50,000 noisy ones.

Teams building this typically find that data preparation consumes 60 to 80% of the total project timeline. Mathav Raj J. et al. (2024) propose three proven data formatting strategies for enterprise proprietary data: paragraph chunks for dense documentation, question-answer pairs for knowledge-heavy tasks, and summary-function pairs for code repositories.

The minimum viable dataset for a focused enterprise task is 1,000 high-quality examples. For complex domains such as legal contract analysis, medical coding, and financial report generation, target 10,000 or more. Always reserve 10 to 20% of examples as a held-out evaluation set that is never used in training.

- Clean your data before formatting it. Remove duplicates, correct label inconsistencies, and validate that outputs actually match the instructions.

- Format training data as JSON chat-style objects with system, user, and assistant roles.

- Include negative examples (outputs to avoid) alongside positive ones to improve calibration.

- Version your datasets alongside your model checkpoints. Reproducibility depends on it.
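The checklist above can be sketched in a few lines of standard-library Python. The `messages` key, the function names, and the fingerprint scheme are assumptions chosen for illustration; adapt the record layout to whatever your training framework expects.

```python
import hashlib
import json
import random


def to_chat_record(system: str, instruction: str, output: str) -> dict:
    """Format one instruction-output pair as a chat-style training object."""
    return {"messages": [
        {"role": "system", "content": system},
        {"role": "user", "content": instruction.strip()},
        {"role": "assistant", "content": output.strip()},
    ]}


def prepare_dataset(pairs, system, eval_fraction=0.1, seed=0):
    """Deduplicate pairs, format them, and split off a held-out evaluation set."""
    seen, records = set(), []
    for instruction, output in pairs:
        normalized = instruction.strip().lower() + "\x00" + output.strip().lower()
        key = hashlib.sha256(normalized.encode("utf-8")).hexdigest()
        if key in seen or not instruction.strip() or not output.strip():
            continue  # skip duplicates (ignoring case/whitespace) and empty examples
        seen.add(key)
        records.append(to_chat_record(system, instruction, output))
    random.Random(seed).shuffle(records)  # fixed seed keeps the split reproducible
    n_eval = max(1, int(len(records) * eval_fraction))
    return records[n_eval:], records[:n_eval]  # (train, held-out)


def dataset_fingerprint(records) -> str:
    """Short stable hash of the dataset, to store alongside a model checkpoint."""
    blob = json.dumps(records, sort_keys=True, ensure_ascii=False).encode("utf-8")
    return hashlib.sha256(blob).hexdigest()[:12]
```

Recording the fingerprint of the training split in each checkpoint's metadata is one lightweight way to tie a model back to the exact data it saw.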

Enterprise Fine-Tuning Architecture

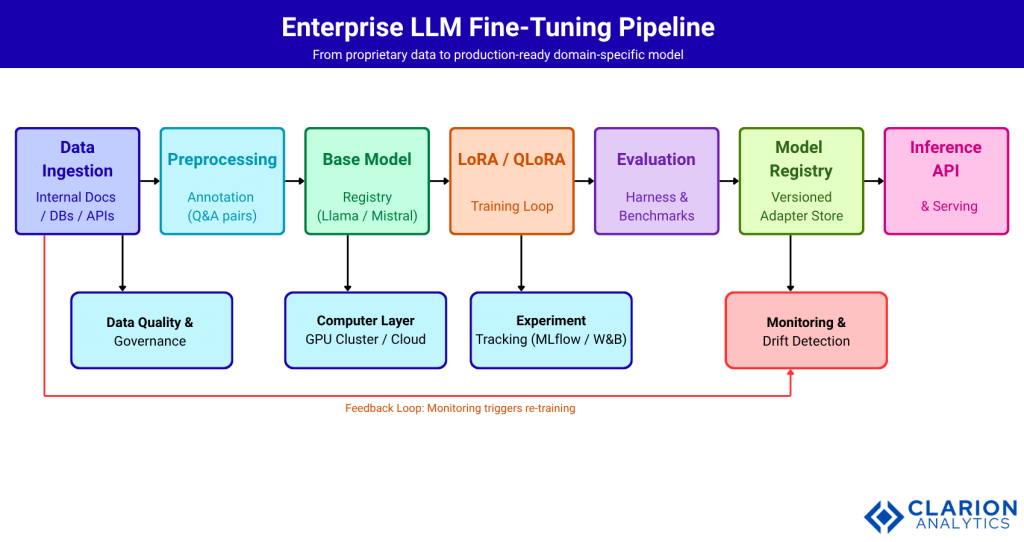

An enterprise fine-tuning pipeline connects a data ingestion layer through preprocessing, a base model registry, a PEFT training loop, an evaluation harness, and a model registry to a production inference API. A monitoring and drift detection layer feeds signals back to re-trigger training when model quality degrades.

Fig: Enterprise LLM fine-tuning pipeline. Data flows left to right from ingestion through preprocessing, PEFT training (LoRA/QLoRA), evaluation, and model registry to a serving API. The feedback loop from the monitoring layer back to data ingestion triggers re-training when production drift is detected.

Implementation Walkthrough: Fine-Tuning with QLoRA

A QLoRA fine-tuning run on a 7B model with 1,000 domain examples typically completes in 2 to 4 hours on a single A100 GPU and costs under $20 in cloud compute at standard on-demand pricing, making it accessible for most enterprise engineering teams.

Teams building this typically find that the first run is rarely the final run. Treat the first fine-tuning experiment as a baseline. Establish your evaluation metrics before you train, not after. Then iterate on data quality and hyperparameters.

Code Snippet 2 – Source: unslothai/unsloth – QLoRA fine-tuning setup

This snippet loads a 4-bit quantized Mistral-7B model using Unsloth’s optimized kernels, attaches LoRA adapters to the attention and MLP projection layers, and launches a three-epoch supervised fine-tuning run. Rank-Stabilized LoRA (rsLoRA) is enabled, which improves gradient stability at higher ranks. On a single A100 80GB GPU, this configuration trains at roughly 2x the speed of standard Hugging Face PEFT with 70% less VRAM usage.
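A setup of this kind might be sketched as follows, assuming the `unsloth` and `transformers` packages and a CUDA GPU. The rank, batch size, learning rate, and helper names are assumptions, and the sketch targets all seven linear projections in a Mistral block (four attention, three MLP), which is also an assumption about the intended layer set.

```python
# All seven linear projections in a Mistral block (four attention, three MLP).
TARGET_MODULES = ["q_proj", "k_proj", "v_proj", "o_proj",
                  "gate_proj", "up_proj", "down_proj"]


def load_qlora_model(model_name: str = "unsloth/mistral-7b-bnb-4bit",
                     max_seq_length: int = 2048):
    """Load a 4-bit base model and attach rsLoRA adapters (needs unsloth + GPU)."""
    from unsloth import FastLanguageModel  # imported lazily: heavy, GPU-only

    model, tokenizer = FastLanguageModel.from_pretrained(
        model_name=model_name,
        max_seq_length=max_seq_length,
        load_in_4bit=True,
    )
    model = FastLanguageModel.get_peft_model(
        model,
        r=16,
        lora_alpha=16,
        lora_dropout=0,
        target_modules=TARGET_MODULES,
        use_rslora=True,  # rank-stabilized LoRA scaling
    )
    return model, tokenizer


def training_arguments(output_dir: str = "outputs"):
    """Three-epoch run; batch size and learning rate are assumed starting points."""
    from transformers import TrainingArguments

    return TrainingArguments(
        output_dir=output_dir,
        num_train_epochs=3,
        per_device_train_batch_size=2,
        gradient_accumulation_steps=4,
        learning_rate=1e-4,
        logging_steps=10,
    )
```

The model, tokenizer, and arguments would then be passed to a supervised fine-tuning trainer such as TRL's `SFTTrainer`; that wiring is omitted here because its constructor arguments differ between TRL versions.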

“A fine-tuned 7B model with clean domain data routinely outperforms a 70B general-purpose model on the specific task it was trained for.”

Evaluating Your Fine-Tuned Model Before Production

Evaluation should combine a held-out task-specific benchmark, automated metrics (accuracy, ROUGE, perplexity), and human evaluation on 50 to 100 representative prompts. Shadow-mode A/B testing against the base model in a staging environment confirms production readiness before full rollout.

Evaluation is where most enterprise fine-tuning projects fail, not because the model is bad, but because the evaluation criteria were never defined. Define your success metrics before writing a single training example:

- Task accuracy: does the model produce the correct answer or classification on your held-out set?

- Format compliance: does every output match the required structure (JSON, table, specific section headers)?

- Domain language: does the model use correct terminology without hallucinating domain-specific terms?

- Regression on general tasks: does the model still handle off-topic queries reasonably, or has fine-tuning caused catastrophic forgetting?

A practical evaluation stack for enterprise teams: use an automated harness (EleutherAI lm-eval or a custom benchmark) for scalable testing, and reserve 50 to 100 prompts for human expert review. When you see conflicting signals between automated and human evaluation, trust the humans for final deployment decisions.
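Two of the metrics above, task accuracy and format compliance, are easy to automate. The sketch below assumes the model is exposed as a plain `generate(prompt) -> str` callable and that outputs are JSON objects with a `label` field; both are illustrative assumptions, not requirements of any particular harness.

```python
import json


def is_valid_format(output: str, required_keys: set) -> bool:
    """Format compliance: output must be a JSON object with the required keys."""
    try:
        parsed = json.loads(output)
    except json.JSONDecodeError:
        return False
    return isinstance(parsed, dict) and required_keys.issubset(parsed)


def evaluate(generate, eval_set, required_keys, label_key="label"):
    """Score a model callable on task accuracy and format compliance."""
    if not eval_set:
        raise ValueError("eval_set must contain at least one example")
    correct = compliant = 0
    for example in eval_set:
        output = generate(example["prompt"])
        if is_valid_format(output, required_keys):
            compliant += 1
            # Only well-formed outputs can count as correct.
            if json.loads(output).get(label_key) == example["expected"]:
                correct += 1
    n = len(eval_set)
    return {"accuracy": correct / n, "format_compliance": compliant / n}
```

Running the same function against both the base model and the fine-tuned model on the held-out set gives a like-for-like comparison before any human review.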

Frequently Asked Questions

When should I fine-tune instead of using RAG? Fine-tune when behavior, tone, or format must be baked in permanently and does not change frequently. Use RAG when facts update often. Use both when you need consistent behavior and current knowledge: a fine-tuned model querying a curated retrieval store.

How much data do I need to fine-tune an enterprise LLM? Start with 1,000 high-quality instruction-output pairs per task. Complex domains such as legal or medical benefit from 10,000 or more examples. Quality consistently matters more than raw volume.

What is the difference between LoRA and QLoRA? LoRA injects trainable low-rank matrices into frozen model layers, updating under 1% of parameters. QLoRA adds 4-bit quantization on top, cutting VRAM usage by up to 70% with negligible accuracy loss compared to 16-bit LoRA.

How long does LLM fine-tuning take on a single GPU? A QLoRA run on a 7B model with 1,000 to 5,000 examples typically completes in 2 to 4 hours on a single A100 80GB GPU, costing roughly $10 to $20 in cloud compute at standard on-demand pricing.

How do I prevent my fine-tuned model from forgetting general capabilities? Use LoRA or QLoRA rather than full fine-tuning, as frozen base weights preserve general knowledge. Keep the learning rate low (1e-4 or below) and include a small sample of general instruction data in your training mix.

Conclusion

Three insights should guide any enterprise LLM fine-tuning initiative. First, the technique you choose matters less than the quality of your training data. Clean, structured, domain-specific examples always outperform larger volumes of noisy ones. Second, QLoRA has effectively removed the compute barrier; any team with access to a single A100-class GPU can fine-tune a 7B or 13B model at a cost that is trivial compared to the downstream value. Third, evaluation is not a final step; it is the foundation that must be defined before any training begins.

“Domain specialization is not the end state; it is the starting point for compounding competitive advantage.”

The question worth asking before you start: what would your organization do differently if your internal LLM actually understood your business as well as your best subject matter expert? The answer to that question is your fine-tuning roadmap.
