LLMOps (Large Language Model Operations) is the set of practices, tools, and workflows used to deploy, monitor, evaluate, and continuously improve large language models in production. It extends MLOps to address challenges unique to LLMs: non-deterministic outputs, prompt sensitivity, semantic drift, and the absence of ground-truth labels. A mature LLMOps stack covers model observability, automated drift detection, prompt versioning, and CI/CD pipelines adapted for language model behaviour.

Why Traditional MLOps Breaks Down for Language Models

LLMOps sits at the centre of the production AI crisis. According to Gartner research cited in 2025, 85% of AI projects fail, yet organizations with structured monitoring and measurement frameworks are significantly more likely to reach successful outcomes. That number should stop engineers in their tracks. The models are not the problem. The operations are.

Traditional MLOps was designed for deterministic, label-supervised models: you feed structured data in, a numeric prediction comes out, and accuracy tells you whether the model is working. LLMs break every part of that contract. Their outputs are probabilistic, context-sensitive, and evaluated through human preference rather than a ground-truth label. You cannot compute F1 on a customer-support response.

The LLMOps market reflects the urgency. According to Research and Markets (2025), the LLMOps software market will reach $15.59 billion by 2030 at a 21.6% CAGR, driven by enterprise-scale LLM deployments and growing investment in AI observability infrastructure.

“An LLM can degrade silently for weeks before any alert fires, because the metrics that catch it do not exist in a standard MLOps dashboard.”

Meanwhile, McKinsey’s 2025 State of AI report found that only about one-third of organizations are scaling AI across the enterprise despite 65% reporting regular gen-AI use. Workflow blockers and data issues are the primary obstacles. LLMOps is the operational discipline that bridges that gap.

The Three Forms of LLM Drift You Must Monitor

LLM drift is not a single phenomenon. Teams that monitor for one type often miss the other two entirely. A 2025 industry analysis identifies three distinct forms of LLM degradation that manifest silently in production.

Prompt drift occurs when user query patterns evolve beyond the model’s training distribution. New slang, domain terminology, or interaction patterns accumulate in production logs while the model stays frozen. The model does not fail loudly. It just becomes less relevant.

Output drift is the regression in response quality or consistency that results from model updates, upstream provider changes, or infrastructure variance. Research from Khatchadourian and Franco (arXiv, November 2025) quantified output drift across five model architectures with 7B to 120B parameters. Larger models showed dramatically higher inconsistency: GPT-OSS-120B achieved only 12.5% output consistency at temperature zero, compared to 100% for Granite-3-8B and Qwen2.5-7B. Larger does not mean more reliable.
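One simple way to put a number on output consistency is to replay the same prompt several times and measure how often the most common response comes back. The sketch below assumes that definition, which may differ from the one used in the cited paper. `fake_generate` is a hypothetical stand-in for a real model call at temperature zero.

```python
from collections import Counter
from typing import Callable


def output_consistency(generate: Callable[[str], str], prompt: str, runs: int = 8) -> float:
    """Fraction of runs that return the most common response for one prompt."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    # Collapse whitespace so trivial formatting differences are not counted as drift.
    responses = [" ".join(generate(prompt).split()) for _ in range(runs)]
    most_common_count = Counter(responses).most_common(1)[0][1]
    return most_common_count / runs


# Hypothetical stand-in for a model call: stable on 7 of 8 runs.
_canned = iter(["Refunds take 5 days."] * 7 + ["Refunds take 7 days."])


def fake_generate(prompt: str) -> str:
    return next(_canned)


score = output_consistency(fake_generate, "How long do refunds take?", runs=8)
print(f"consistency: {score:.3f}")
```

Exact string matching is deliberately strict. For free-form answers, an embedding-based comparison may be a better fit than equality.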

Concept drift occurs when the real-world facts the model references become stale. A model trained before a product pricing change will confidently quote the wrong price. A RAG system built on an outdated knowledge base will answer confidently from its own stale context. According to a 2025 study cited by OneReach.ai, LLM hallucinations cost businesses over $67.4 billion during 2024. These are not edge cases.

Drift Detection Methods Comparison

| Approach / Tool | Key Strength | Best Used When |
|---|---|---|
| Statistical Drift Detection (KL Divergence, Jensen-Shannon) | Fast, lightweight, no LLM dependency; works on embedding distributions | You have high query volume and need real-time, low-latency drift alerts on input distributions |
| Semantic Similarity (Embedding Cosine Diff) | Captures meaning-level output drift that lexical metrics miss; model-agnostic | Output quality matters more than input distribution; RAG or summarisation pipelines |
| Activation Delta Analysis (TaskTracker / arXiv 2406.00799) | Detects task drift and prompt injection without model modification; generalises to unseen attack types | RAG pipelines with untrusted external data sources where prompt injection is a security concern |
| LLM-as-Judge Evaluation (GPT-4o / Claude scorer) | Captures semantic correctness and tone at scale; aligns with human preference | CI/CD quality gates, regression testing before deployment, or when ground-truth labels are unavailable |
| Langfuse + MLflow (Observability Platform) | End-to-end tracing, prompt versioning, experiment comparison, and CI/CD hooks in one platform | Teams that need a unified observability and experimentation workspace across multiple LLM apps |

Building an LLM Monitoring Stack from Scratch

A production LLM monitoring stack needs four distinct layers working together. Each layer handles a different failure mode and feeds signals to the next.

Layer 1: Tracing. Every LLM call should emit an OpenTelemetry-compatible trace capturing the prompt, completion, token count, latency, and model version. This is the raw material everything else depends on. Tools like MLflow Tracing (20,000+ GitHub stars) and Langfuse (25,170+ stars, YC W23) provide this natively, often with zero-configuration auto-instrumentation.

Layer 2: Evaluation. Raw traces become useful only when passed through evaluators: semantic similarity scorers, hallucination detectors, LLM-as-judge pipelines, and task-specific rubrics. The evaluators run on sampled production traffic continuously, not just at deployment time.

Layer 3: Metrics store and dashboards. Evaluation scores flow into time-series storage (Prometheus) and visualisation (Grafana). You are looking for sustained degradation trends, not single-point anomalies. A single low score is noise. A sliding-window average trending down is a signal.

Layer 4: Alerting. Alerts fire when drift scores exceed configurable thresholds for a sustained window. The alert routes to PagerDuty for human review or triggers an automated retraining pipeline depending on the severity class.
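The "sustained degradation, not single-point anomalies" rule from Layers 3 and 4 can be sketched in a few lines. This is a minimal illustration, not a replacement for Prometheus alert rules. The class name, the threshold of 0.8, the window of 5, and the patience of 2 are all assumptions chosen for the example.

```python
from collections import deque
from typing import List


class SustainedDriftAlert:
    """Fires only when the rolling mean stays below a threshold for several checks."""

    def __init__(self, threshold: float, window: int, patience: int) -> None:
        self.threshold = threshold
        self.window = window
        self.patience = patience
        self.scores = deque(maxlen=window)
        self.breaches = 0  # consecutive checks with a rolling mean below threshold

    def observe(self, score: float) -> bool:
        """Record one evaluation score and return True if the alert should fire."""
        self.scores.append(score)
        if len(self.scores) < self.window:
            return False  # not enough data for a full window yet
        rolling_mean = sum(self.scores) / len(self.scores)
        self.breaches = self.breaches + 1 if rolling_mean < self.threshold else 0
        return self.breaches >= self.patience


def replay(scores: List[float]) -> List[bool]:
    alert = SustainedDriftAlert(threshold=0.8, window=5, patience=2)
    return [alert.observe(s) for s in scores]


# One bad score surrounded by good ones: treated as noise.
noise_alerts = replay([0.9, 0.9, 0.9, 0.6, 0.9, 0.9, 0.9])
# A steady decline: treated as a signal once it persists.
trend_alerts = replay([0.9, 0.88, 0.85, 0.8, 0.76, 0.72, 0.7, 0.68])
print(any(noise_alerts), trend_alerts[-1])
```

The patience counter is what separates a signal from noise here: a single outlier can pull the rolling mean down briefly, but it has to stay down for consecutive checks before anyone gets paged.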

“Smaller models often outperform larger ones on output consistency in production, which means model selection for LLMOps is a reliability decision, not just a capability decision.”

Code Example 1: MLflow Auto-Instrumentation for LLM Tracing

Source: mlflow/mlflow, mlflow/tracing. The snippet below shows the fastest path from zero to full LLM observability. One call to mlflow.openai.autolog() captures every prompt, completion, token count, and latency automatically.
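A minimal sketch of this setup follows. It assumes the `mlflow` and `openai` packages are installed, that the MLflow release in use includes tracing support, and that `OPENAI_API_KEY` is set. The experiment name, the `ask` helper, and the model name are illustrative choices, not taken from the MLflow repository.

```python
def enable_llm_tracing(experiment_name: str = "llm-monitoring") -> None:
    """Turn on MLflow auto-instrumentation for OpenAI client calls."""
    # Imported lazily so this module still loads where mlflow is not installed.
    import mlflow

    mlflow.set_experiment(experiment_name)  # experiment name is an assumption
    mlflow.openai.autolog()  # the single instrumentation call described above


def ask(question: str, model: str = "gpt-4o-mini") -> str:
    """Illustrative application call; traced once enable_llm_tracing() has run."""
    from openai import OpenAI  # reads OPENAI_API_KEY from the environment

    client = OpenAI()
    response = client.chat.completions.create(
        model=model,  # model name is an assumption for the example
        messages=[{"role": "user", "content": question}],
    )
    return response.choices[0].message.content or ""
```

Calling `enable_llm_tracing()` once at process start should be enough. Later calls to `ask(...)` need no tracing-specific code of their own.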

This single instrumentation line connects every model call to the observability layer. Token counts and latency trends land in the MLflow UI immediately. Teams can then add custom metrics on top, such as semantic similarity to a reference response, without changing the application code.

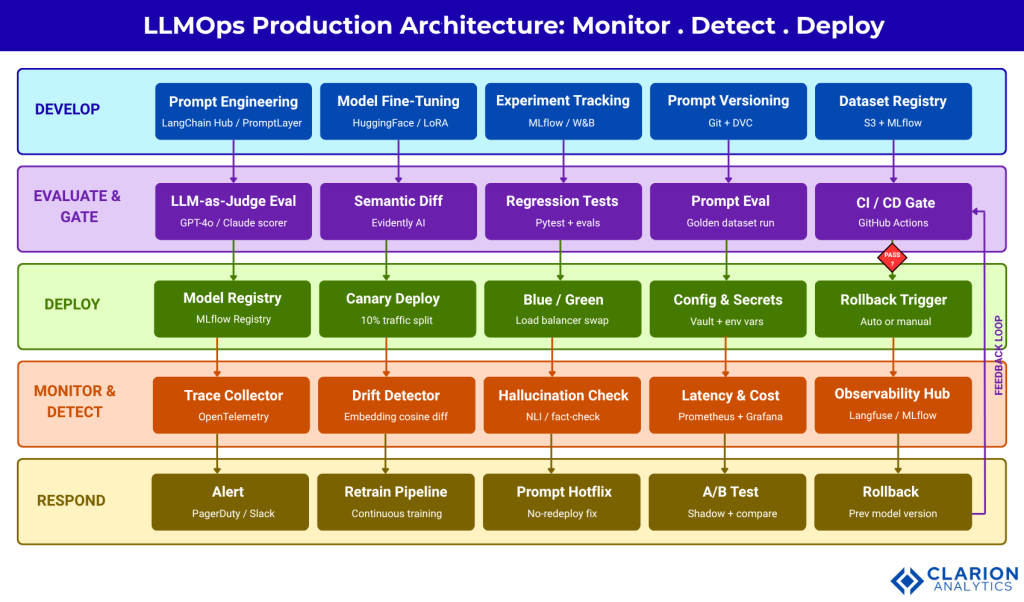

Figure 1: The five-layer LLMOps production architecture. Code and prompts flow left to right through development, evaluation, and deployment. Monitoring signals flow back through a feedback loop that triggers retraining or rollback. The CI/CD gate at the evaluation layer blocks any build that fails semantic quality thresholds.

Drift Detection Methods: From Statistical Tests to Activation Deltas

Statistical drift detection borrowed from classical MLOps still plays a role in LLMOps, but it covers only the input side. You need a stack of methods to cover the full surface area.

For input distribution drift, apply KL divergence or Jensen-Shannon distance to the embedding representations of incoming prompts. A sustained shift in the centroid of the embedding cluster signals that users are asking a different kind of question. This is fast, cheap, and runs in real time at scale.
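As a rough sketch of the input-side check, the code below computes Jensen-Shannon divergence between two discrete distributions. It assumes prompt embeddings have already been assigned to clusters (for example by nearest centroid), so that each time window reduces to a histogram of cluster frequencies. The cluster ids and counts are made up for illustration.

```python
import math
from collections import Counter
from typing import List, Sequence


def _kl(p: Sequence[float], q: Sequence[float]) -> float:
    """KL divergence in bits; terms with p_i == 0 contribute nothing."""
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def js_divergence(p: Sequence[float], q: Sequence[float]) -> float:
    """Jensen-Shannon divergence in bits, bounded between 0 and 1."""
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * _kl(p, m) + 0.5 * _kl(q, m)


def js_distance(p: Sequence[float], q: Sequence[float]) -> float:
    """Jensen-Shannon distance is the square root of the divergence."""
    return math.sqrt(js_divergence(p, q))


def cluster_histogram(cluster_ids: Sequence[int], n_clusters: int) -> List[float]:
    """Share of prompts falling in each embedding cluster."""
    counts = Counter(cluster_ids)
    return [counts.get(c, 0) / len(cluster_ids) for c in range(n_clusters)]


# Hypothetical cluster assignments for last month's and this week's prompts.
baseline = cluster_histogram([0] * 50 + [1] * 30 + [2] * 20, n_clusters=3)
current = cluster_histogram([0] * 20 + [1] * 30 + [2] * 50, n_clusters=3)
drift = js_divergence(baseline, current)
print(f"JS divergence: {drift:.4f}")
```

Unlike raw KL divergence, the Jensen-Shannon form is symmetric and stays finite when a cluster is empty in one window, which makes it easier to set a fixed alert threshold.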

For output quality drift, compute cosine similarity between the embeddings of production responses and those of a rolling reference set of known-good outputs. An average similarity below 0.85 on a seven-day rolling window warrants investigation. Evidently AI provides 100+ metrics for this pattern and integrates directly with GitHub Actions.
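The output-side check can be sketched the same way. The example assumes responses have already been embedded by some model; the two-dimensional vectors are toy values. Scoring each response against its closest reference is one possible design choice, not the only one.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def best_reference_similarity(
    response_vec: Sequence[float], reference_vecs: Sequence[Sequence[float]]
) -> float:
    """Similarity to the closest known-good output in the reference set."""
    return max(cosine_similarity(response_vec, ref) for ref in reference_vecs)


def needs_investigation(
    daily_scores: Sequence[float], threshold: float = 0.85, window: int = 7
) -> bool:
    """True when the mean of the last `window` daily scores is below the threshold."""
    recent = list(daily_scores)[-window:]
    if len(recent) < window:
        return False  # wait for a full window before judging
    return sum(recent) / len(recent) < threshold


# Toy embeddings: the response matches the second reference exactly.
closest = best_reference_similarity([1.0, 0.0], [[0.0, 1.0], [1.0, 0.0]])
healthy = needs_investigation([0.9] * 7)
declining = needs_investigation([0.9, 0.88, 0.86, 0.84, 0.82, 0.8, 0.78])
print(closest, healthy, declining)
```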

For task drift and prompt injection, the most technically sophisticated method comes from Abdelnabi et al. (arXiv 2406.00799, 2024). Their research shows that activation deltas, the difference in a model’s internal activations before and after processing external data, reliably detect when the model has been hijacked by injected instructions. The approach requires no model fine-tuning and generalises to unseen attack patterns. This is critical for any RAG pipeline that processes untrusted external content.
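To make the idea concrete, here is a deliberately simplified sketch. It computes the delta between two activation vectors and classifies it with a nearest-centroid rule. The nearest-centroid rule is a stand-in chosen for brevity, not the classifier used in the paper, and the two-dimensional vectors are toy values rather than real hidden states.

```python
from typing import List, Sequence

Vector = Sequence[float]


def activation_delta(before: Vector, after: Vector) -> List[float]:
    """Activations after reading external data minus activations before."""
    return [a - b for a, b in zip(after, before)]


def centroid(vectors: Sequence[Vector]) -> List[float]:
    return [sum(column) / len(vectors) for column in zip(*vectors)]


def squared_distance(a: Vector, b: Vector) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


class DeltaProbe:
    """Nearest-centroid stand-in for a probe trained on labelled deltas."""

    def __init__(self, clean_deltas: Sequence[Vector], poisoned_deltas: Sequence[Vector]) -> None:
        self.clean_centroid = centroid(clean_deltas)
        self.poisoned_centroid = centroid(poisoned_deltas)

    def is_task_drift(self, before: Vector, after: Vector) -> bool:
        delta = activation_delta(before, after)
        return squared_distance(delta, self.poisoned_centroid) < squared_distance(
            delta, self.clean_centroid
        )


# Toy labelled deltas: injected instructions move activations much further.
probe = DeltaProbe(
    clean_deltas=[[0.1, 0.0], [0.0, 0.1]],
    poisoned_deltas=[[1.0, 0.9], [0.9, 1.1]],
)
benign = probe.is_task_drift(before=[0.2, 0.2], after=[0.25, 0.2])
hijacked = probe.is_task_drift(before=[0.2, 0.2], after=[1.2, 1.1])
print(benign, hijacked)
```

In a real pipeline the "before" and "after" vectors would be read from the model's hidden states, which requires access to the model internals rather than only its API.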

For zero-shot data drift, Park, Nam, and Cho (APMS 2025, Springer) demonstrated that LLMs themselves can serve as zero-shot drift detectors by leveraging contextual understanding to identify both statistical and semantic anomalies. The approach is most effective for abrupt distribution changes and works best combined with optimised input handling for gradual drift.

“The most dangerous drift is the kind that does not trigger an alert: the gradual semantic shift where outputs stay grammatical but become progressively less correct.”

Wiring CI/CD for Language Model Pipelines

LLM CI/CD differs from standard software CI/CD in three fundamental ways. First, test cases are probabilistic: the same prompt can produce different valid responses. Second, the build artifact includes prompts and model versions, not just compiled code. Third, quality gates require LLM-as-judge evaluation runs rather than binary pass/fail unit tests.

Teams building this typically find that the hardest part is not the technology but the discipline. A prompt change with no accompanying code change must still trigger a full evaluation run. According to OneReach.ai (2025), CI/CD systems like GitHub Actions, Jenkins, and cloud-native pipelines can be adapted for LLMs by adding model update gates, prompt change evaluation triggers, and automated performance testing on standardised benchmark datasets. A typical full LLMOps implementation takes three to six months.

The practical workflow looks like this: a pull request that changes a prompt file triggers a GitHub Actions workflow. The workflow runs the prompt against a golden dataset, scores outputs using an LLM-as-judge, and reports the semantic similarity delta versus the baseline. If the score falls below threshold, the PR cannot merge.
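The gate step of that workflow could look roughly like the script below. It is a sketch under stated assumptions: `fake_generate` and `fake_judge` are hypothetical stand-ins for the model call and the LLM-as-judge, the golden dataset is two made-up cases, and the allowed regression of 0.02 is an arbitrary example value.

```python
from statistics import mean
from typing import Callable, Dict, List

GoldenCase = Dict[str, str]


def evaluate_prompt(
    golden: List[GoldenCase],
    generate: Callable[[str], str],
    judge: Callable[[str, str, str], float],
) -> float:
    """Mean judge score (0.0 to 1.0) over the golden dataset."""
    return mean(judge(c["input"], generate(c["input"]), c["expected"]) for c in golden)


def gate_exit_code(candidate: float, baseline: float, max_regression: float = 0.02) -> int:
    """Return 0 (pass) or 1 (fail) so a CI step can exit with it."""
    delta = candidate - baseline
    print(f"candidate={candidate:.3f} baseline={baseline:.3f} delta={delta:+.3f}")
    return 0 if delta >= -max_regression else 1


# Hypothetical stand-ins for the model call and the LLM-as-judge scorer.
def fake_generate(question: str) -> str:
    answers = {"What is the refund window?": "Refunds are accepted within 30 days."}
    return answers.get(question, "I am not sure.")


def fake_judge(question: str, answer: str, expected: str) -> float:
    return 1.0 if expected.lower() in answer.lower() else 0.0


golden = [
    {"input": "What is the refund window?", "expected": "30 days"},
    {"input": "Do you ship to Canada?", "expected": "yes"},
]
candidate_score = evaluate_prompt(golden, fake_generate, fake_judge)
exit_code = gate_exit_code(candidate_score, baseline=0.9)
```

In a CI job the script would end with `sys.exit(exit_code)`, so a non-zero code fails the check and blocks the merge.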

“A prompt change with no code change can break production. If your CI/CD pipeline does not version and evaluate prompts, it is incomplete.”

Code Example 2: Evidently Drift Report as a CI/CD Quality Gate

Source: evidentlyai/evidently, examples/. This snippet shows how to run a semantic drift report comparing baseline responses to current production outputs. The report can be embedded directly in a GitHub Actions workflow and will fail the build if drift exceeds a configured threshold.

Evidently’s native GitHub Actions integration means this report runs automatically on every code push. The HTML output gives reviewers a visual diff of text length distributions, sentiment trends, and semantic similarity scores between the reference and current datasets. When scores fall outside acceptable bounds, the CI pipeline blocks the merge.
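The shape of such a report can be illustrated without any library. The sketch below is not Evidently's API. It is a standard-library stand-in that compares paired reference and current responses on average text length and on token overlap, which is only a crude proxy for semantic similarity. The thresholds are assumptions.

```python
from statistics import mean
from typing import Dict, List


def jaccard(a: str, b: str) -> float:
    """Token-overlap similarity between two texts (crude lexical proxy)."""
    tokens_a, tokens_b = set(a.lower().split()), set(b.lower().split())
    union = tokens_a | tokens_b
    return len(tokens_a & tokens_b) / len(union) if union else 1.0


def drift_report(
    reference: List[str],
    current: List[str],
    min_similarity: float = 0.5,
    max_length_ratio: float = 1.5,
) -> Dict[str, object]:
    """Compare responses to the same prompts, given in the same order."""
    if not reference or len(reference) != len(current):
        raise ValueError("reference and current must be non-empty and the same length")
    similarity = mean(jaccard(r, c) for r, c in zip(reference, current))
    # max(..., 1) avoids division by zero if every reference response is empty.
    length_ratio = mean(len(c) for c in current) / max(mean(len(r) for r in reference), 1)
    length_ok = 1 / max_length_ratio <= length_ratio <= max_length_ratio
    return {
        "mean_similarity": similarity,
        "length_ratio": length_ratio,
        "passed": similarity >= min_similarity and length_ok,
    }


reference = ["Refunds are accepted within 30 days."]
stable = drift_report(reference, ["Refunds are accepted within 30 days."])
drifted = drift_report(reference, ["Please contact support for help with that request today."])
print(stable["passed"], drifted["passed"])
```

A CI step would exit non-zero when `passed` is false.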

MLOps vs LLMOps: What Actually Changes

The table below summarises the operational differences between traditional MLOps and LLMOps. They share infrastructure primitives, but the evaluation philosophy is fundamentally different.

| Dimension | MLOps | LLMOps |
|---|---|---|
| Output type | Deterministic numeric predictions | Probabilistic natural language |
| Eval metric | Accuracy, F1, RMSE, AUC | Semantic similarity, LLM-as-judge, BLEU, human preference |
| Drift signal | Feature distribution shift, label drift | Prompt drift, output drift, concept drift, activation deltas |
| CI artifact | Trained model weights + code | Model weights + code + prompt versions + evaluation datasets |
| Retraining trigger | Accuracy below threshold | Semantic drift, hallucination rate, RLHF feedback signal |
| Key tools | MLflow, Kubeflow, Seldon, DVC | MLflow + Langfuse + Evidently + LangChain + GitHub Actions |

The critical insight: LLMOps does not replace MLOps. It extends it. Teams already running MLflow for experiment tracking can add Langfuse for LLM tracing and Evidently for semantic drift with minimal disruption. The governance model is the same. The metrics are different. According to Gartner (March 2026), only 15% of GenAI deployments currently include LLM observability. By 2028, Gartner predicts that figure will reach 50%, driven by explainability requirements and enterprise trust mandates. The teams building these capabilities now will have a two-year operational advantage.

Frequently Asked Questions

How do I know when my LLM has drifted in production?

Monitor a combination of signals: rising latency without increased query complexity, declining semantic similarity scores between production outputs and a reference baseline, increasing hallucination rates in automated fact-checking, and rising token consumption per session without a clear cause. If any two of these trend in the wrong direction simultaneously over a seven-day rolling window, you likely have drift. Set Prometheus alerts on all four and route them to the same incident queue.
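The "any two of four" rule can be sketched as a small check over seven days of daily values. The signal names, the least-squares trend, and the 5% minimum relative change are assumptions made for the example; real thresholds depend on the application.

```python
from typing import Dict, List, Sequence

# +1 means a rising value is bad, -1 means a falling value is bad.
BAD_DIRECTION = {
    "latency_ms": 1,
    "semantic_similarity": -1,
    "hallucination_rate": 1,
    "tokens_per_session": 1,
}


def relative_change(values: Sequence[float]) -> float:
    """Least-squares trend over the window, as a fraction of the window mean."""
    n = len(values)
    if n < 2:
        return 0.0
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    slope = sum((i - x_mean) * (v - y_mean) for i, v in enumerate(values)) / sum(
        (i - x_mean) ** 2 for i in range(n)
    )
    scale = abs(y_mean) or 1.0  # avoid dividing by zero for an all-zero signal
    return slope * (n - 1) / scale


def degraded_signals(window: Dict[str, Sequence[float]], min_change: float = 0.05) -> List[str]:
    """Names of signals moving in the bad direction by more than min_change."""
    return [
        name
        for name, values in window.items()
        if relative_change(values) * BAD_DIRECTION[name] > min_change
    ]


def likely_drift(window: Dict[str, Sequence[float]], min_signals: int = 2) -> bool:
    return len(degraded_signals(window)) >= min_signals


# Seven days of made-up daily averages.
week = {
    "latency_ms": [800, 810, 805, 800, 795, 805, 800],
    "semantic_similarity": [0.92, 0.91, 0.90, 0.88, 0.86, 0.85, 0.83],
    "hallucination_rate": [0.02, 0.02, 0.03, 0.03, 0.04, 0.04, 0.05],
    "tokens_per_session": [1200] * 7,
}
print(degraded_signals(week), likely_drift(week))
```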

What is the difference between MLOps and LLMOps?

MLOps manages deterministic models evaluated by ground-truth metrics such as accuracy, precision, and recall. LLMOps manages probabilistic language models evaluated by semantic similarity, human preference, and LLM-as-judge scoring. The infrastructure overlaps significantly, but the evaluation philosophy is different. LLMOps also adds prompt versioning, hallucination monitoring, and RAG pipeline governance as first-class concerns that MLOps does not address.

What tools should I use for LLM monitoring in production?

Start with MLflow for experiment tracking and tracing (20,000+ GitHub stars, OpenTelemetry-native). Add Langfuse for LLM-specific observability including prompt management and multi-turn conversation tracing (25,170+ stars). Use Evidently for semantic drift reports and CI/CD quality gates (GitHub Actions integration built in). Layer Prometheus and Grafana for operational metrics like latency and cost. This stack covers tracing, evaluation, drift detection, and alerting.

How do I build a CI/CD pipeline for an LLM application?

Treat prompts as versioned artifacts alongside code. On every pull request, a GitHub Actions workflow should run your prompt against a golden evaluation dataset, score outputs with an LLM-as-judge, and compare the semantic similarity delta to a baseline. Block merges that fall below your quality threshold. Include a model version lock in your deployment manifest. When the upstream model provider updates their API, your golden dataset run will catch regressions before they reach users.

How long does it take to implement LLMOps?

A basic monitoring and evaluation stack, covering tracing, a drift dashboard, and a CI quality gate, takes two to four weeks with a dedicated engineer. A full LLMOps implementation including automated retraining pipelines, multi-environment canary deployment, and RLHF feedback loops typically requires three to six months. Start with the monitoring layer. You cannot improve what you cannot observe.

“LLMOps is not a tool you buy. It is an operational discipline you build, one monitoring signal and one quality gate at a time.”

Conclusion

Three insights stand above the rest. First, LLM drift is multi-dimensional: prompt drift, output drift, and concept drift each require a different detection signal, and monitoring for only one will leave the other two invisible. Second, output consistency is an architecture decision: research from Khatchadourian and Franco (2025) shows that smaller, purpose-tuned models often provide higher production reliability than flagship large models, challenging the reflex to always deploy the biggest available model. Third, the CI/CD pipeline must include prompts: a prompt change without an evaluation run is a silent production risk.

The operational foundation is not complicated. Instrument with MLflow or Langfuse. Detect drift with Evidently and embedding similarity. Gate deployments with GitHub Actions evaluation runs. Alert on sustained degradation, not single data points. Trigger retraining when drift crosses your domain-specific threshold.

The teams winning in production AI are not the ones with the most parameters. They are the ones who measure output quality continuously, catch degradation before users do, and ship improvements faster because they have automated the quality verification. The question is not whether to build LLMOps. It is how many months you can afford to wait.