Multimodal document processing is the combined use of optical character recognition (OCR), document layout analysis, and large language models (LLMs) to convert raw documents (PDFs, scans, and forms) into validated, structured data. Each layer contributes a distinct capability: OCR reads text, layout analysis assigns spatial and semantic structure, and the LLM reasons over both to extract, classify, and normalise information at enterprise scale.

Why Enterprise Document Extraction Is Still Broken

Enterprise information extraction from documents is a persistent and costly problem. According to Gartner’s 2024 Market Guide for IDP, the intelligent document processing market will reach $2.09 billion by 2026, growing at 13% CAGR since 2021. Yet despite this investment, most organisations still rely on brittle, rule-based pipelines that break whenever a supplier changes an invoice layout or a regulator revises a form.

The scale of the challenge is staggering. A McKinsey global survey found that 70% of organisations are piloting business process automation, and nearly 90% plan to scale those initiatives enterprise-wide. Yet the core problem remains unsolved: documents were designed for human eyes, not machine parsers. A single contract can mix dense legal text, embedded tables, handwritten annotations, and multi-column layouts all in one file.

Traditional OCR reads characters. It does not understand context. An OCR engine that achieves 99% character-level accuracy on a clean government form will still misfire spectacularly on a scanned invoice where columns shift, headers repeat, and currency symbols appear mid-sentence. That gap between reading and understanding is exactly where multimodal document processing intervenes.

The gap between reading text and understanding a document is where most enterprise pipelines quietly fail every day.

The Three Layers of a Multimodal Document Processing Pipeline

A production-grade pipeline has three distinct stages. Each stage has a specific job, and each can fail independently. Understanding the layers is the first step to building something that holds up under real enterprise load.

Layer 1 – Document Ingestion and Pre-processing

Before any AI model sees a document, it must be cleaned, normalised, and routed. This layer handles deskewing, denoising, and resolution adjustment for scanned images. It also detects whether a PDF page is native (programmatic text) or a bitmap scan, because those two paths require completely different OCR strategies.

Tools like MinerU and Docling handle this routing automatically, sending native pages to a fast text extractor and scan pages to a vision-based OCR model. Getting this routing right avoids the most common failure mode: running expensive vision OCR on a PDF that already contains machine-readable text.
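The routing decision can be sketched in a few lines. This is a simplified illustration, not how MinerU or Docling implement it: the `PageStats` fields, the `route_page` function, and both thresholds are hypothetical, and the statistics are assumed to come from whatever PDF parser the ingestion layer already uses.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    """Hypothetical per-page statistics gathered during ingestion."""
    page_number: int
    embedded_chars: int    # characters found in the PDF text layer
    image_coverage: float  # fraction of the page area covered by raster images (0..1)


def route_page(stats: PageStats, min_chars: int = 50,
               max_image_coverage: float = 0.9) -> str:
    """Return "text" for native pages and "ocr" for bitmap scans.

    Both thresholds are illustrative assumptions and need tuning per corpus.
    """
    has_text_layer = stats.embedded_chars >= min_chars
    mostly_image = stats.image_coverage >= max_image_coverage
    # A full-page image is treated as a scan even if it carries a text layer,
    # because that layer may be hidden OCR output of unknown quality.
    if has_text_layer and not mostly_image:
        return "text"
    return "ocr"


pages = [PageStats(1, 1200, 0.10), PageStats(2, 0, 1.00), PageStats(3, 1200, 0.95)]
routes = [route_page(p) for p in pages]
```

Here page 1 goes to the fast text extractor, while pages 2 and 3 go to vision OCR.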

Layer 2 – Layout Analysis and OCR

Layout analysis is the step that transforms raw pixel data into a semantically labelled map. Models like LayoutLMv3 (Huang et al., 2022) pre-train on both text tokens and image patches simultaneously, learning to associate the word “Total” with the number below it in a table rather than with other words that follow it in a sentence. This joint pre-training is what makes layout models qualitatively different from standard OCR.

The output of this layer is a list of typed, georeferenced elements: Title at [x1,y1,x2,y2], Table at [x1,y1,x2,y2], NarrativeText at [x1,y1,x2,y2]. Each element carries the extracted text, its bounding box, a confidence score, and a semantic label. This structured representation, not raw text, is what the LLM layer needs to reason reliably.
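One way to picture this representation is as a small typed record plus a spatial query. The sketch below is an assumption-laden illustration: the `Element` class, the `TableCell` label, and the `value_below` helper are hypothetical names, and coordinates are assumed to use a top-left origin.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Element:
    label: str                               # semantic label, e.g. "Title" or "Table"
    text: str                                # extracted text
    bbox: tuple[float, float, float, float]  # (x1, y1, x2, y2), origin at top-left
    confidence: float                        # detector confidence in [0, 1]


def value_below(anchor: Element, elements: list[Element]) -> Optional[Element]:
    """Return the nearest element below `anchor` that overlaps it horizontally."""
    ax1, _, ax2, ay2 = anchor.bbox
    candidates = [
        e for e in elements
        if e is not anchor
        and e.bbox[1] >= ay2                           # starts below the anchor
        and min(ax2, e.bbox[2]) > max(ax1, e.bbox[0])  # shares horizontal extent
    ]
    return min(candidates, key=lambda e: e.bbox[1] - ay2, default=None)


elements = [
    Element("Title", "Invoice", (50, 20, 300, 60), 0.99),
    Element("TableCell", "Total", (400, 500, 480, 520), 0.97),
    Element("TableCell", "1,250.00", (400, 525, 480, 545), 0.95),
    Element("NarrativeText", "Payment due in 30 days.", (50, 600, 500, 620), 0.98),
]
match = value_below(elements[1], elements)
```

With bounding boxes available, "the number below Total" becomes a geometric lookup rather than a guess based on token order.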

Recent work at CVPR 2025, including DocLayLLM (Liao et al.), shows that combining chain-of-thought pre-training with layout-aware LLMs produces models that outperform OCR-dependent baselines on document understanding benchmarks with lighter training requirements.

Layer 3 – LLM Reasoning and Structured Output

The LLM layer receives the typed element list, the original document image for visual grounding, and a structured prompt that defines the target schema. The prompt includes the field names to extract, data types, validation rules, and one or two examples. The LLM then produces a JSON response conforming to that schema.
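A minimal sketch of such a prompt builder follows. The schema contents, field names, and the `build_prompt` function are hypothetical, and the document image is assumed to be attached separately through whatever multimodal API the team uses.

```python
import json

# Hypothetical target schema: field name -> data type and validation rule.
INVOICE_SCHEMA = {
    "invoice_number": {"type": "string", "rule": "copy exactly as printed"},
    "invoice_date": {"type": "string", "rule": "ISO 8601 date, YYYY-MM-DD"},
    "total_amount": {"type": "number", "rule": "no currency symbol or thousands separator"},
}


def build_prompt(schema: dict, elements: list[dict], examples: list[dict]) -> str:
    """Assemble the text part of the extraction prompt from schema, examples, and elements."""
    lines = ["Extract the following fields and reply with a single JSON object.", "", "Fields:"]
    for name, spec in schema.items():
        lines.append(f"- {name} ({spec['type']}): {spec['rule']}")
    lines += ["", "Use null for any field that is absent from the document.", "", "Examples:"]
    lines += [json.dumps(example, ensure_ascii=False) for example in examples]
    lines += ["", "Document elements (label, bbox, text):"]
    lines += [json.dumps(element, ensure_ascii=False) for element in elements]
    return "\n".join(lines)


prompt = build_prompt(
    INVOICE_SCHEMA,
    elements=[{"label": "Table", "bbox": [400, 500, 480, 545], "text": "Total 1,250.00"}],
    examples=[{"invoice_number": "INV-0042", "invoice_date": "2025-01-31", "total_amount": 1250.0}],
)
```

Generating the prompt from the schema keeps field names and rules in one place, so a schema change cannot drift away from the instructions the model sees.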

A 2025 benchmarking study (arXiv 2603.02789) found that powerful multimodal LLMs (Gemini 2.5, GPT-4o, Claude 3.7) can match or exceed traditional OCR+MLLM pipelines when given image-only input, but that carefully designed schema, exemplars, and instructions are the primary levers for further improvement. In other words, prompt engineering at this layer is not optional: it is the dominant performance variable.

In practice, teams building this typically add two guardrails: a Pydantic schema validator that rejects malformed JSON immediately and re-prompts the LLM, and a confidence threshold that flags low-certainty extractions for human-in-the-loop review rather than passing them downstream silently.
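The validate-and-re-prompt guardrail might look like the sketch below. To stay dependency-free it uses a hand-written type check in place of a Pydantic model; the field names, `validation_errors`, `extract_with_retry`, and the `call_llm` callable are all hypothetical stand-ins.

```python
import json
from typing import Callable

# Hypothetical required fields and their accepted Python types.
REQUIRED_TYPES = {"invoice_number": str, "invoice_date": str, "total_amount": (int, float)}


def validation_errors(raw: str) -> list[str]:
    """Return a list of problems with the raw LLM reply (empty if it is valid)."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value must be a JSON object"]
    errors = []
    for name, expected in REQUIRED_TYPES.items():
        if name not in data:
            errors.append(f"missing field: {name}")
        # bool is a subclass of int in Python, so reject it explicitly.
        elif isinstance(data[name], bool) or not isinstance(data[name], expected):
            errors.append(f"wrong type for {name}")
    return errors


def extract_with_retry(call_llm: Callable[[str], str], prompt: str,
                       max_attempts: int = 3) -> dict:
    """Call the model, validate the reply, and re-prompt with the errors on failure."""
    errors: list[str] = []
    current = prompt
    for _ in range(max_attempts):
        raw = call_llm(current)
        errors = validation_errors(raw)
        if not errors:
            return json.loads(raw)
        current = (prompt + "\n\nYour previous reply was rejected: "
                   + "; ".join(errors) + ". Reply with corrected JSON only.")
    raise ValueError(f"no valid output after {max_attempts} attempts: {errors}")
```

Feeding the specific validation errors back into the retry prompt gives the model something concrete to correct, and the attempt cap keeps a persistently failing document from looping forever.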

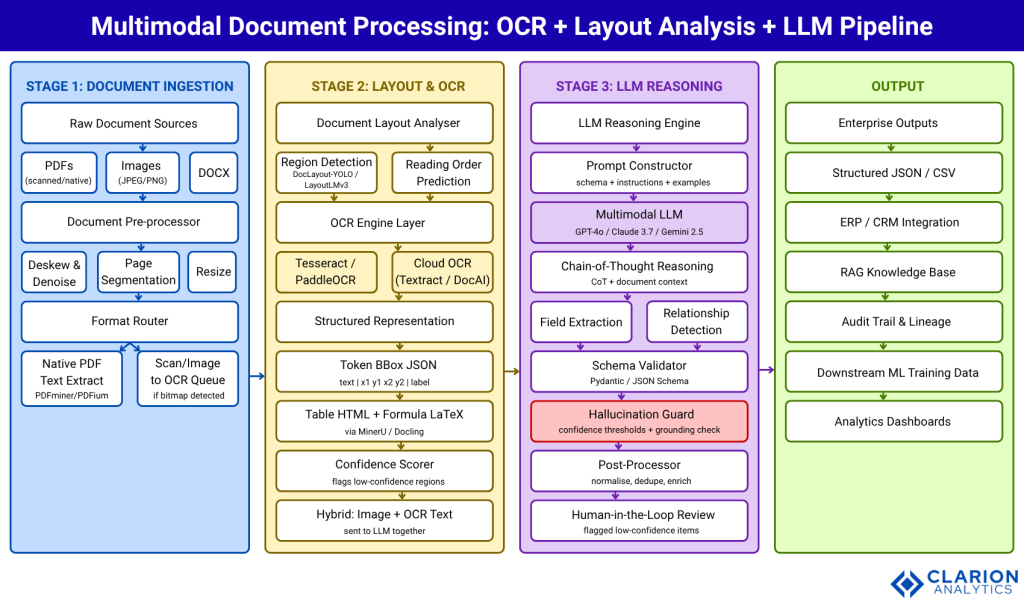

Architecture Diagram

The diagram below shows the complete end-to-end pipeline. Each stage feeds into the next via well-defined data contracts: bounding-box JSON from Stage 2 and validated structured output from Stage 3.

Caption: Figure 1 – End-to-end multimodal document processing pipeline. Stage 1 handles ingestion and format routing. Stage 2 applies layout detection and OCR to produce typed, georeferenced elements. Stage 3 feeds this structured representation and the original document image into a multimodal LLM for contextual extraction, schema validation, and hallucination checking. Validated data flows to downstream enterprise systems and RAG knowledge bases.

Layout analysis gives the LLM a map. Without it, even the most capable model is navigating a document blind.

Real-World Use Cases Across Industries

The following industries have documented production deployments of multimodal document processing pipelines. In each case, the key requirement is the same: extract specific fields from documents that vary in layout, quality, and format.

Financial Services. Loan origination, KYC onboarding, and claims processing generate thousands of documents daily. Banks use OCR+LLM pipelines to extract borrower income, asset tables, and signature blocks from mortgage package documents that mix standard forms with appended statements in wildly different layouts. Coherent Market Insights (2025) identifies BFSI as the largest IDP segment at 31.7% of market share.

Legal and Contracts. Contract review platforms extract parties, obligations, payment terms, and penalty clauses from multi-hundred-page agreements. The layout analysis layer is critical here: nested clauses and defined terms have hierarchical structure that raw OCR collapses. An LLM with the layout map can trace a defined term back to its definition across dozens of pages.

Healthcare. Clinical notes, lab reports, and prior authorisation forms combine structured fields with free-text clinical narratives. HIPAA-compliant deployments use on-premise VLMs (Qwen2.5-VL, Llama vision variants) to avoid sending patient data to external APIs.

Logistics and Trade. Bills of lading, customs declarations, and certificate-of-origin documents combine tabular data with stamps and handwritten annotations. Hybrid pipelines route typed regions to text extractors and handwritten regions to dedicated OCR models before the LLM synthesises the full record.

Every industry drowning in documents has the same core need: context-aware extraction that holds up when layout changes without warning.

Tools and Technology Choices

The market for multimodal document processing tools has consolidated around a small set of well-maintained open-source libraries and cloud APIs. The choice between them depends on throughput requirements, data residency constraints, and the complexity of target document types.

| Approach / Tool | Key Strength | Best Used When | Watch Out For |
|---|---|---|---|
| Docling (IBM, MIT Licence) | Advanced PDF layout + table structure; native LangChain/LlamaIndex integration; runs locally | Sensitive data requiring local execution; RAG pipeline prep; multi-format ingestion | Formula recognition still maturing; GPU recommended for speed |
| MinerU (OpenDataLab, Apache 2.0) | SOTA accuracy on OmniDocBench; VLM+OCR dual engine; 109-language OCR support | Scientific/academic documents with formulas and complex tables; high-volume batch processing | Higher GPU memory footprint; newer licence terms for commercial use |
| Unstructured (Unstructured-IO) | Broadest format support (20+ types); cloud platform for enterprise scale; chunking + embedding built-in | Mixed-format document corpus; teams needing managed infrastructure; LLM ETL pipelines | Cloud version is commercial; open-source tier has reduced OCR accuracy |
| Cloud APIs (Textract, Document AI, Azure Doc Intelligence) | Managed scale; pre-trained models for forms, invoices, IDs; no infrastructure | Standard form types at high volume; teams without ML ops capacity | Data leaves your environment; per-page pricing at scale |
| Pure Multimodal LLM (GPT-4o, Claude 3.7, Gemini 2.5) | No OCR pipeline needed; handles variable layouts natively; simplest deployment | Documents under 30 pages; variable layouts; zero-infrastructure teams | Latency, cost per page; hallucination risk on deterministic fields |

Code Snippet 1 – Docling: Convert a PDF to Structured Markdown

Source: docling-project/docling

This 5-line snippet drives Docling’s full pipeline: layout detection, OCR, reading-order prediction, and table extraction all happen inside convert(). The result is a structured Markdown string ready for LLM chunking or RAG ingestion.
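A minimal sketch of that call sequence, assuming the `docling` package is installed. `DocumentConverter`, `convert()`, and `export_to_markdown()` follow Docling's documented quick-start; the wrapper function `pdf_to_markdown` and the lazy import are illustrative choices, not part of the library.

```python
def pdf_to_markdown(source: str) -> str:
    """Convert a PDF (local path or URL) to Markdown with Docling.

    The import is deferred so this module still loads on machines
    where Docling is not installed.
    """
    from docling.document_converter import DocumentConverter

    converter = DocumentConverter()
    result = converter.convert(source)  # layout, OCR, reading order, tables
    return result.document.export_to_markdown()
```

Calling `pdf_to_markdown("report.pdf")` with a real file path should return a single Markdown string; the first run may be slow because Docling typically needs to fetch its models.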

Code Snippet 2 – Unstructured: Layout-Aware PDF Partition with Typed Elements

Source: Unstructured-IO/unstructured

The hi_res strategy triggers layout detection before OCR. Each returned element carries a .category (Title, Table, NarrativeText) and .metadata.coordinates, which is exactly the typed, georeferenced structure that the LLM layer needs to reason about document content rather than raw text.
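A minimal sketch of that partition call, assuming the `unstructured` package is installed with its PDF extras. `partition_pdf`, `strategy="hi_res"`, `.category`, and `.metadata.coordinates` come from the library; the wrapper `partition_with_layout` and the flattened dictionary shape are illustrative choices.

```python
def partition_with_layout(path: str) -> list[dict]:
    """Partition a PDF with layout detection and return typed, located elements.

    The import is deferred so this module still loads on machines
    where Unstructured is not installed.
    """
    from unstructured.partition.pdf import partition_pdf

    elements = partition_pdf(filename=path, strategy="hi_res")
    rows = []
    for element in elements:
        coords = element.metadata.coordinates  # may be None for some elements
        rows.append({
            "category": element.category,      # e.g. Title, Table, NarrativeText
            "text": element.text,
            "points": coords.points if coords is not None else None,
        })
    return rows
```

Flattening each element into a plain dictionary makes it easy to serialise the list into the LLM prompt or store it alongside the source document.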

A pipeline that fails silently at 3% of documents will process hundreds of thousands of records before anyone notices the systematic error.

Implementation Guidance and Common Failure Modes

Teams building this pipeline encounter a predictable set of failure modes. Knowing them in advance saves weeks of debugging.

Start with document diversity benchmarking. Before committing to any tool, collect 50 representative documents from your corpus and run them through your candidate pipeline end-to-end. Measure field-level extraction accuracy, not just character-level OCR accuracy. A tool that achieves 99% character accuracy may still extract the wrong table cell because it misread the reading order.
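Field-level accuracy is straightforward to measure once gold labels exist for the benchmark set. The sketch below assumes exact-match scoring and hypothetical field names; real benchmarks often need normalisation (dates, amounts) before comparison.

```python
from collections import defaultdict


def field_accuracy(predictions: list[dict], gold: list[dict]) -> dict[str, float]:
    """Per-field exact-match accuracy over paired prediction and gold records."""
    correct: dict[str, int] = defaultdict(int)
    total: dict[str, int] = defaultdict(int)
    for predicted, truth in zip(predictions, gold):
        for name, expected in truth.items():
            total[name] += 1
            if predicted.get(name) == expected:  # a missing field counts as wrong
                correct[name] += 1
    return {name: correct[name] / total[name] for name in total}


gold = [
    {"total": "100.00", "date": "2025-01-01"},
    {"total": "250.00", "date": "2025-02-01"},
]
predictions = [
    {"total": "100.00", "date": "2025-01-01"},
    {"total": "25.00", "date": "2025-02-01"},  # one dropped digit in the total
]
scores = field_accuracy(predictions, gold)
```

In this toy example a single dropped digit barely moves character-level accuracy, yet the `total` field scores 0.5 while `date` scores 1.0.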

Schema-first design. Define your target JSON schema before writing a single line of extraction code. The schema drives everything: the prompt template, the Pydantic validator, the downstream database model, and the human review interface. Teams that retrofit the schema after building the pipeline spend three times as long refactoring.

Confidence thresholds and human-in-the-loop routing. Every field extracted by an LLM should carry an estimated confidence score, either from the model’s logprobs or from a secondary verification prompt. Fields below your confidence threshold route to a review queue rather than passing downstream silently. McKinsey (2021) found that companies with human-in-the-loop designs are twice as likely to report automation success.
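One simple way to turn logprobs into a per-field score is the geometric mean of the token probabilities for that value. This is a heuristic sketch, not a calibrated estimate: the function names, the input shape, and the 0.9 threshold are assumptions, and it presumes the LLM API exposes token logprobs for the extracted value.

```python
import math


def field_confidence(token_logprobs: list[float]) -> float:
    """Geometric-mean token probability for the tokens that make up one value."""
    if not token_logprobs:
        return 0.0
    return math.exp(sum(token_logprobs) / len(token_logprobs))


def route_fields(fields: dict[str, tuple[str, list[float]]],
                 threshold: float = 0.9) -> tuple[dict, dict]:
    """Split extracted fields into auto-accepted and human-review buckets."""
    accepted: dict[str, tuple[str, float]] = {}
    review: dict[str, tuple[str, float]] = {}
    for name, (value, logprobs) in fields.items():
        confidence = field_confidence(logprobs)
        bucket = accepted if confidence >= threshold else review
        bucket[name] = (value, confidence)
    return accepted, review


accepted, review = route_fields({
    "total_amount": ("1250.00", [-0.01, -0.02]),
    "invoice_date": ("2025-01-31", [-0.70, -0.40]),
})
```

In this example `total_amount` is accepted and `invoice_date` lands in the review queue. The threshold should be tuned against labelled data, since raw token probabilities are not guaranteed to track real error rates.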

Error propagation is cumulative. Each stage of a pipeline-based approach accumulates errors. A 97% accurate layout detector feeding a 98% accurate OCR engine feeding a 96% accurate LLM extractor produces a combined field-level accuracy well below any individual component. Monitor each stage independently with stage-specific metrics.
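The arithmetic behind this is worth making explicit. Assuming stage errors are independent (a simplification, since real errors often correlate), end-to-end accuracy is the product of the per-stage accuracies; the function name below is illustrative.

```python
import math


def pipeline_accuracy(stage_accuracies: dict[str, float]) -> float:
    """End-to-end accuracy under the assumption that stage errors are independent."""
    return math.prod(stage_accuracies.values())


stages = {"layout": 0.97, "ocr": 0.98, "llm": 0.96}
combined = pipeline_accuracy(stages)
print(f"{combined:.3f}")  # prints 0.913
```

Roughly 91% end-to-end means close to one field in eleven is wrong, even though no single stage falls below 96%.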

Hallucination is a document-specific problem. LLMs asked to extract specific fields from long documents will occasionally confabulate values, especially for fields that appear nowhere in the document. For high-stakes fields (amounts, dates, party names in contracts), always implement a span-attribution check: the extracted value should map back to a specific text span in the source document.
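A basic span-attribution check can be done with string matching after light normalisation. This sketch is deliberately strict and hypothetical in its naming: it only accepts values that appear verbatim (ignoring case and whitespace), so normalised fields such as reformatted dates would need a field-specific comparison instead.

```python
import re
from typing import Optional


def _normalise(text: str) -> str:
    """Collapse whitespace runs and ignore case."""
    return re.sub(r"\s+", " ", text).strip().casefold()


def find_span(value: str, source: str) -> Optional[tuple[int, int]]:
    """Return (start, end) of `value` in the normalised source text, or None.

    Offsets refer to the normalised text, not the original string.
    """
    needle = _normalise(value)
    haystack = _normalise(source)
    if not needle:
        return None
    start = haystack.find(needle)
    return None if start == -1 else (start, start + len(needle))


source_text = "Invoice No: INV-0042\nTotal   due:  EUR 1,250.00"
grounded = find_span("EUR 1,250.00", source_text)
ungrounded = find_span("EUR 1,520.00", source_text)
```

Here `grounded` is a span and `ungrounded` is `None`, so the transposed amount would be rejected or routed to review instead of passing downstream.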

Frequently Asked Questions

How does document layout analysis work with large language models?

Layout analysis models detect and classify regions in a document image (headers, paragraphs, tables, figures) and attach bounding-box coordinates to each region. The LLM receives this typed, georeferenced map alongside the extracted text and optionally the raw image, allowing it to reason about spatial relationships: which value belongs to which column header, which clause is a sub-clause of another. Without this map, even a powerful LLM processes documents as flat text, losing critical structural signals.

What are the best open-source tools for enterprise information extraction from PDFs?

The leading open-source options in 2025-2026 are Docling (IBM Research, MIT licence), MinerU (OpenDataLab, Apache 2.0), and Unstructured (Unstructured-IO). Docling excels at layout and table structure with local execution capability. MinerU leads on benchmark accuracy for complex documents with formulas. Unstructured offers the broadest format support and a managed cloud platform. The right choice depends on your data residency requirements, document complexity, and infrastructure capacity.

Is OCR or a multimodal LLM better for document extraction?

Neither approach dominates across all scenarios. Traditional OCR achieves near-perfect accuracy on fixed, high-quality forms because the layout never changes. Multimodal LLMs outperform OCR on variable-layout, poor-quality, or contextually complex documents. A 2025 benchmarking study found that powerful MLLMs can match OCR+LLM pipelines on business documents with image-only input, but optimal results come from a hybrid architecture that routes by document type and confidence level.

How do I handle hallucinations in LLM-based document extraction?

Implement three controls: schema validation (Pydantic or JSON Schema rejects malformed output immediately), span attribution (the extracted value must map back to a specific text span in the source), and confidence-based routing (low-confidence extractions go to a human review queue). For critical numeric or date fields, use a secondary verification prompt that asks the LLM to find the source sentence and confirm the extracted value matches it verbatim.

What document types benefit most from multimodal processing?

Documents with variable layouts, mixed content types, or low scan quality benefit most. Contracts, annual reports, clinical notes, bills of lading, and research papers are prime candidates because their structure varies significantly across instances and they mix tables, figures, and prose. Standard, high-volume forms (tax filings, ID documents, standardised invoices) are better handled by purpose-built OCR models with fixed field mappings, which offer higher determinism and lower cost per page.

Conclusion

Three insights should guide your next design decision. First, multimodal document processing is not a single technology but a layered architecture: document ingestion and routing, layout analysis and OCR, and LLM reasoning must each be designed and monitored separately. Second, the choice between pure OCR, pure LLM, and hybrid approaches is document-type-specific: route by complexity and confidence, not by convention. Third, schema-first design and human-in-the-loop routing at confidence thresholds are not optional refinements: they are the difference between a demo and a production system.

The market is moving fast. Straits Research projects the IDP market will grow from $2.44 billion in 2024 to $37 billion by 2033. The organisations that build reliable, modular pipelines now will compound that advantage as document volumes and model capabilities both scale.

What would it take to replace your highest-volume manual document review process with a pipeline that routes only the genuinely ambiguous cases to a human reviewer?