A production RAG (Retrieval-Augmented Generation) pipeline is an end-to-end system that retrieves semantically relevant document chunks from a vector store, injects them into an LLM prompt, and returns grounded answers at scale. Unlike a prototype, a production pipeline handles chunking strategy, embedding drift, hybrid search, re-ranking, latency budgets, observability, and rolling index updates simultaneously.

Why Most RAG Prototypes Fail in Production

Gartner predicted in 2024 that at least 30% of generative AI projects would be abandoned after proof of concept by the end of 2025. The gap is almost never the language model. It is a retrieval layer that worked on 50 curated documents and collapses when it sees 500,000. A production RAG pipeline demands decisions that prototypes never surface: which indexing algorithm, which chunking policy, how to balance recall and latency, and what telemetry tells you when things silently degrade.

McKinsey’s 2025 State of AI survey found that 78% of organizations use AI in at least one business function, up from 55% in 2023. Yet only 6% qualify as high performers generating measurable EBIT impact from AI. That 72-point gap is largely an engineering problem, and production-grade RAG pipeline architecture is where the gap closes.

“The retrieval layer is where production RAG systems win or fail, not the language model.”

RAG Pipeline Architecture for Production Systems

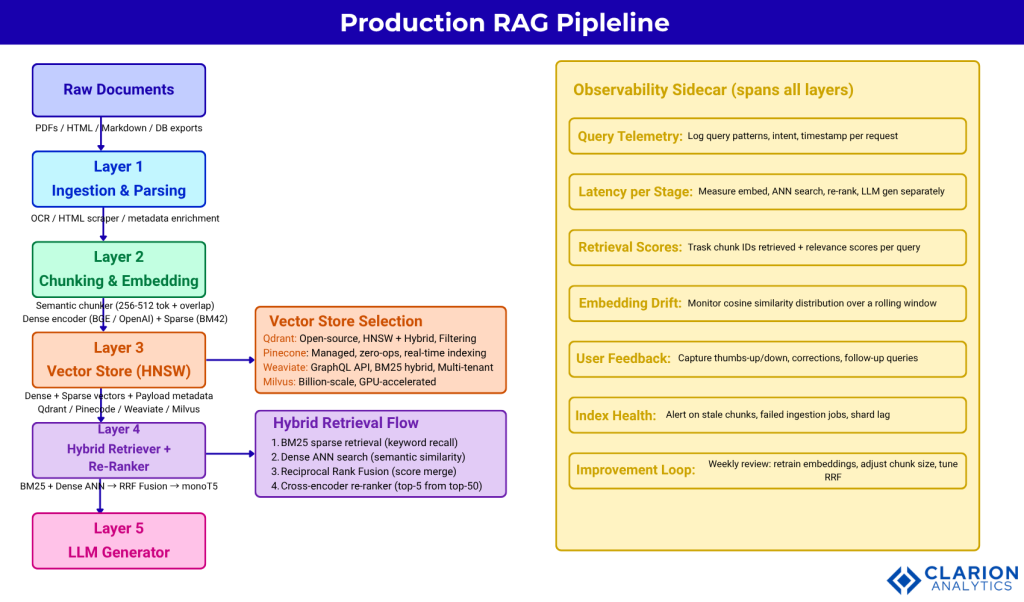

A production RAG pipeline has five layers: document ingestion and parsing, chunking and embedding generation, vector index storage, hybrid retrieval with re-ranking, and LLM generation with guardrails. Each layer introduces failure modes the next cannot compensate for. A poorly chunked index poisons retrieval precision regardless of how good your re-ranker is.

The Five-Layer Architecture

The ingestion layer handles extraction from PDFs, HTML, Markdown, and structured databases. It cleans text, resolves encoding issues, and enriches each document with metadata (source, date, author, and section) that becomes payload in the vector store. The embedding layer converts cleaned chunks into high-dimensional dense vectors using models such as OpenAI text-embedding-3-small, BGE-M3, or E5-large. Those vectors land in a vector store alongside an optional sparse representation for hybrid search.

The retrieval layer runs at query time, executing a hybrid search, fusing scores, and passing the top-K candidates to a re-ranker. The LLM generation layer receives ranked context in the prompt and produces a grounded answer. An observability sidecar runs across all layers, logging query patterns, latency per stage, and retrieval relevance scores.

Where Latency Actually Lives

Most engineers expect generation to be the bottleneck. Research from ETH Zurich’s RAGO system (2024) shows that in hyperscale retrieval scenarios, over 80% of total pipeline latency sits in the retrieval stage, not generation. Vectors with 1024-to-2048 dimensions, combined with large document collections, make ANN search the true bottleneck. This means index algorithm selection and embedding cache design are latency decisions, not infrastructure afterthoughts.

The architecture diagram above traces the five-layer flow: raw document ingestion, chunking and embedding, a vector store using HNSW indexing, hybrid retrieval (dense + sparse) with a cross-encoder re-ranker, and finally the LLM generator. An observability sidecar spans all stages, collecting the retrieval telemetry and latency metrics needed for continuous optimization. Teams skipping any single layer typically discover the gap only at production scale.

Choosing the Right Vector Store

The right vector store depends on your scale, filtering requirements, and tolerance for operational complexity. Qdrant suits teams needing high-performance hybrid search. Pinecone suits those prioritizing managed simplicity. Weaviate suits teams requiring GraphQL APIs and multi-tenant deployments.

| Vector Store | Key Strength | Best Used When |
|---|---|---|
| Qdrant | HNSW + native hybrid search (dense + sparse), payload filtering, open-source | High-throughput production with complex metadata filters and cost control |
| Pinecone | Fully managed, zero ops, real-time indexing | Teams that need fast time-to-production and have budget for managed infrastructure |
| Weaviate | BM25 + vector hybrid, GraphQL API, multi-tenancy | Applications needing hybrid search out of the box with flexible schema |
| Milvus | Billion-scale distributed search, GPU acceleration | Enterprise deployments processing hundreds of millions of vectors |
| FAISS | Lightweight, embeddable, fastest ANN search | Research prototypes or edge inference where ops overhead is unacceptable |

Research published in a systematic RAG literature review (2025) confirms that HNSW-based indexes achieve sub-millisecond Maximum Inner Product Search performance in production but require careful negotiation of accuracy-latency trade-offs and memory footprint. Organizations with mature pipelines report maintaining query times under 100ms while scaling to billions of vectors through proper index configuration.

“Choosing a vector store is an architectural commitment; pick the wrong one, and you rebuild the data plane at scale.”

Chunking Strategy and Embedding Quality

Chunking strategy is the highest-leverage variable in RAG precision. A 2024 guide to production RAG pipelines reports that poorly processed documents can reduce retrieval accuracy by up to 45%. Fixed-size chunking is fast to implement but routinely splits related sentences, table headings from their figures, and footnotes from their body text.

Fixed vs. Semantic Chunking

The SPLICE method (Semantic Preservation with Length-Informed Chunking Enhancement) has emerged as a leading approach in 2024-2025, with adopters reporting a 27% improvement in answer precision. The practical default for most production pipelines is 256-to-512 token chunks with a 10-to-20% overlap policy, combined with semantic boundary detection that respects section headers and paragraph breaks. Enterprise implementations increasingly use multiple embedding models specialized for different document types within the same pipeline.
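To make the default policy concrete, here is a minimal sketch of boundary-aware chunking. It is not the SPLICE method. It assumes paragraphs are separated by blank lines and approximates tokens with whitespace-separated words; the function name `chunk_paragraphs` and its parameters are illustrative, and a real pipeline would count tokens with the embedding model's tokenizer.

```python
def chunk_paragraphs(text, max_tokens=512, overlap_ratio=0.15):
    """Pack whole paragraphs into chunks, carrying a tail overlap forward.

    max_tokens is a soft limit: a chunk can exceed it by the overlap, and a
    single paragraph longer than the budget is kept whole rather than split.
    """
    paragraphs = [p.split() for p in text.split("\n\n") if p.strip()]
    overlap = int(max_tokens * overlap_ratio)
    chunks, current = [], []
    for para in paragraphs:
        # Close the current chunk only at a paragraph boundary.
        if current and len(current) + len(para) > max_tokens:
            chunks.append(" ".join(current))
            # Seed the next chunk with the tail of the previous one.
            current = current[-overlap:] if overlap else []
        current.extend(para)
    if current:
        chunks.append(" ".join(current))
    return chunks
```

Because the split points are always paragraph breaks, a clause or a table heading is never cut in the middle; only the overlap tail is allowed to start mid-paragraph.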

Decoupling Retrieval Chunks from Synthesis Chunks

LlamaIndex’s production RAG guidance identifies a critical insight most teams miss: the optimal chunk for retrieval is not the optimal chunk for generation. Small chunks improve retrieval precision by reducing noise in the embedding. Larger chunks give the LLM enough surrounding context to synthesize a coherent answer. The solution is to store small retrieval chunks alongside their parent document summaries and pass the larger parent context to the LLM after retrieval, a pattern called recursive retrieval.
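The small-to-large hand-off can be sketched in a few lines. This is an illustration of the idea only, not LlamaIndex's API: `ChildChunk`, `expand_to_parents`, and the `parents` dictionary are hypothetical names, and the ranked child hits are assumed to come from whatever retriever you already run.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChildChunk:
    chunk_id: str
    parent_id: str   # key of the larger parent passage
    text: str


def expand_to_parents(ranked_children, parents, max_parents=3):
    """Map ranked child hits to unique parent texts, preserving rank order."""
    seen, context = set(), []
    for child in ranked_children:
        if child.parent_id in seen:
            continue  # several children may point at the same parent
        seen.add(child.parent_id)
        context.append(parents[child.parent_id])
        if len(context) == max_parents:
            break
    return context
```

Retrieval still scores the small chunks, so precision is unchanged; only the text placed in the prompt grows. De-duplicating parents keeps two hits from the same section from spending the context budget twice.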

Code Snippet 1: Filtered Vector Search with Qdrant (source: qdrant/qdrant-client Python SDK)

This snippet shows how to upsert document embeddings with structured metadata and then constrain a cosine similarity search to a specific category using Qdrant’s payload filter. In production, this pattern dramatically improves precision by narrowing the ANN search space before scoring runs. A legal assistant, for example, would never surface HR policy documents alongside contract clauses.
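The filter-then-score pattern can also be illustrated without a running vector database. The following is a library-free sketch and not the qdrant-client API: the point layout (`id`, `vector`, `payload`) and the `filtered_search` helper are assumptions made for illustration, and the search is brute force, whereas a vector store would apply the filter inside its ANN index.

```python
import math


def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def filtered_search(points, query_vector, category, limit=3):
    """Keep only points whose payload category matches, then rank by cosine."""
    candidates = [p for p in points if p["payload"].get("category") == category]
    scored = [(cosine(p["vector"], query_vector), p["id"]) for p in candidates]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [(point_id, round(score, 3)) for score, point_id in scored[:limit]]


# Toy two-dimensional "embeddings" with structured metadata.
points = [
    {"id": 1, "vector": [0.9, 0.1], "payload": {"category": "legal"}},
    {"id": 2, "vector": [0.8, 0.2], "payload": {"category": "hr"}},
    {"id": 3, "vector": [0.1, 0.9], "payload": {"category": "legal"}},
]
hits = filtered_search(points, query_vector=[1.0, 0.0], category="legal")
```

The HR point is close to the query in vector space but never reaches the scoring step, which is exactly the behavior the legal-assistant example calls for.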

Retrieval Optimization: Hybrid Search and Re-ranking

Combining BM25 sparse retrieval with dense vector search, then re-ranking with a cross-encoder, is the highest-performing retrieval pattern for production RAG. Wang et al. (2024) tested this configuration across open-domain QA, multi-hop QA, and medical QA benchmarks and found that the Hybrid with HyDE + monoT5 re-ranking + Reverse repacking combination consistently outperformed both pure vector and pure keyword retrieval across all five evaluation tasks.

“Hybrid search is not optional for production RAG; it is the baseline. Pure vector search leaves recall on the table.”

Reciprocal Rank Fusion

Reciprocal Rank Fusion (RRF) merges the ranked lists from sparse and dense retrieval into a single list without requiring score normalization. RAG-Fusion (Rackauckas, 2024) extends this by generating multiple query reformulations and fusing their results, further improving recall on ambiguous queries. RRF is supported natively in Qdrant, Weaviate, and Azure AI Search.
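RRF is simple enough to write out. Each document receives 1 / (k + rank) from every list it appears in, and the contributions are summed. The sketch below assumes k = 60, the constant commonly used for RRF; the function name and the tie-breaking rule (alphabetical by ID) are illustrative choices, not something the engines above necessarily share.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into one list.

    rankings: iterable of lists, each ordered best-first.
    Only ranks are used, so raw scores never need to be normalized.
    """
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by document ID for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


sparse_hits = ["a", "b", "c"]   # e.g. BM25 order
dense_hits = ["b", "c", "d"]    # e.g. vector-search order
fused = reciprocal_rank_fusion([sparse_hits, dense_hits])
# fused == ["b", "c", "a", "d"]
```

Documents that appear in both lists ("b" and "c") rise above a document that was ranked first in only one list, which is the behavior that makes RRF robust when the two retrievers disagree.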

Cross-encoder Re-ranking

A bi-encoder retriever is fast because it embeds the query and each document independently, but that independence means it cannot model the direct interaction between query tokens and document tokens. A cross-encoder re-ranker processes the full query-document pair together, producing much higher-fidelity relevance scores. The trade-off is speed: cross-encoders are 10-to-100x slower. The standard production pattern is to retrieve the top-50 candidates with a bi-encoder, then re-rank to the top-5 with a cross-encoder model such as monoT5 or Cohere Rerank.
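The two-stage shape is independent of any particular model, so it can be sketched with placeholder scorers. In the example below, `cheap_score` stands in for a bi-encoder similarity and `expensive_score` for a cross-encoder; both toy scorers and the function name `retrieve_then_rerank` are hypothetical and exist only to show the control flow.

```python
def retrieve_then_rerank(query, corpus, cheap_score, expensive_score,
                         first_k=50, final_k=5):
    """Shortlist with a cheap scorer, then re-order the shortlist with a costly one."""
    shortlist = sorted(corpus, key=lambda doc: cheap_score(query, doc),
                       reverse=True)[:first_k]
    # The expensive scorer runs on at most first_k documents, not the corpus.
    reranked = sorted(shortlist, key=lambda doc: expensive_score(query, doc),
                      reverse=True)
    return reranked[:final_k]


def token_overlap(query, doc):
    """Toy stand-in for a bi-encoder: number of shared lowercase tokens."""
    return len(set(query.lower().split()) & set(doc.lower().split()))


def phrase_aware(query, doc):
    """Toy stand-in for a cross-encoder: rewards the exact query phrase."""
    bonus = 1.0 if query.lower() in doc.lower() else 0.0
    return bonus + 0.1 * token_overlap(query, doc)


corpus = [
    "clause on termination fees",
    "the termination clause applies after notice",
    "holiday policy",
]
best = retrieve_then_rerank("termination clause", corpus,
                            token_overlap, phrase_aware, first_k=2, final_k=1)
```

The first two documents tie on token overlap, and only the pairwise scorer separates them. Keeping `first_k` small is what bounds the cost of the slower model.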

Code Snippet 2: Hybrid Search Index with LlamaIndex and Qdrant (source: run-llama/llama_index)

This snippet activates both dense (HuggingFace or OpenAI embeddings) and sparse (BM42) vector search within a single Qdrant collection via LlamaIndex’s storage context. Setting enable_hybrid=True means every query automatically runs both retrieval paths and fuses scores before returning results. This single configuration change typically produces the largest accuracy gain available with no change to your LLM or prompt.

Latency, Observability, and Scaling to Production

Embedding caches, async ingestion pipelines, and query-level telemetry are the three infrastructure patterns that separate a stable production RAG system from one that degrades silently under load. Without these three, you are operating blind at scale.

Morphik’s 2025 analysis found that an embedding cache storing vector representations for repeat queries cuts p95 response time from 2.1 seconds to 450 milliseconds by eliminating redundant embedding generation for frequently accessed documents. The cache operates at three levels: query-level (identical searches), semantic-level (similar queries mapping to the same document clusters), and document-level (persisting embeddings across user sessions).
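The query-level tier is the easiest of the three to sketch. The class below is a minimal in-memory illustration, not Morphik's implementation: `EmbeddingCache`, the whitespace-and-case normalization, and the `embed_fn` callable are all assumptions, and a production cache would add eviction, a TTL, and a shared store such as Redis.

```python
import hashlib


class EmbeddingCache:
    """Query-level cache: identical (normalized) text is embedded only once."""

    def __init__(self, embed_fn):
        self._embed_fn = embed_fn   # any callable mapping text -> vector
        self._store = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(text):
        # Normalize case and whitespace so trivially different queries collide.
        normalized = " ".join(text.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def get(self, text):
        key = self._key(text)
        if key in self._store:
            self.hits += 1
        else:
            self.misses += 1
            self._store[key] = self._embed_fn(text)
        return self._store[key]

    def hit_rate(self):
        total = self.hits + self.misses
        return self.hits / total if total else 0.0
```

Exposing `hit_rate()` matters as much as the cache itself: it is the number to check first when latency regresses.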

“A RAG system without observability is a black box, and black boxes fail in ways you cannot debug.”

The continuous improvement loop that high-performing teams use follows a three-step cycle: log retrieval telemetry including query patterns, relevance scores, and user feedback; analyze weekly to identify performance degradation and emerging query types; then retrain embedding models or adjust chunk sizes based on findings. Weekly KPI reviews should track p95 latency, retrieval precision, and answer correctness rates. This rhythm ensures the pipeline evolves with the document corpus rather than drifting from it.
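Two of the KPIs named above are straightforward to compute from logged telemetry. The helpers below are a sketch under stated assumptions: p95 uses the nearest-rank method (other percentile definitions interpolate and give slightly different values), and precision is measured against a hand-labeled set of relevant chunk IDs. Both function names are illustrative.

```python
def p95(latencies_ms):
    """95th-percentile latency using the nearest-rank method."""
    if not latencies_ms:
        raise ValueError("need at least one latency sample")
    ordered = sorted(latencies_ms)
    # Integer ceiling of 0.95 * n, avoiding floating-point rounding.
    rank = (95 * len(ordered) + 99) // 100
    return ordered[rank - 1]


def precision_at_k(retrieved_ids, relevant_ids, k):
    """Fraction of the top-k retrieved chunk IDs that are labeled relevant."""
    if k <= 0:
        raise ValueError("k must be positive")
    top = retrieved_ids[:k]
    return sum(1 for chunk_id in top if chunk_id in relevant_ids) / k
```

Tracking the percentile rather than the mean is deliberate: a handful of slow queries can leave the average untouched while the tail degrades.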

Async ingestion is non-negotiable for production. Synchronous indexing blocks query throughput during document updates and creates inconsistent index states. A robust production pipeline uses a queue (Kafka or a cloud message bus) to decouple document ingestion from serving, ensuring the vector index is never partially updated during a live query.
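The decoupling can be demonstrated in-process with `asyncio.Queue` standing in for Kafka or a cloud message bus. This is a toy sketch: `IndexHolder`, `ingest_worker`, and the dictionary "index" are hypothetical, and copying the whole snapshot per batch is only viable at this scale. The point it illustrates is that writers stage changes on a copy and publish them with a single reference swap, so a reader sees either the old index or the new one, never a partial update.

```python
import asyncio


class IndexHolder:
    """Serves reads from a snapshot that is replaced, never mutated in place."""

    def __init__(self):
        self.snapshot = {}

    def search(self, doc_id):
        return self.snapshot.get(doc_id)


async def ingest_worker(queue, holder):
    """Consume document batches and publish each one atomically."""
    while True:
        batch = await queue.get()
        if batch is None:                 # sentinel: stop the worker
            queue.task_done()
            break
        staged = dict(holder.snapshot)    # stage changes on a copy
        staged.update(batch)
        holder.snapshot = staged          # publish with one reference swap
        queue.task_done()


async def main():
    queue, holder = asyncio.Queue(), IndexHolder()
    worker = asyncio.create_task(ingest_worker(queue, holder))
    await queue.put({"doc-1": "v1", "doc-2": "v1"})
    await queue.put({"doc-1": "v2"})      # later update to an existing doc
    await queue.put(None)
    await queue.join()                    # wait until every batch is applied
    await worker
    return holder.snapshot


final_snapshot = asyncio.run(main())
```

Producers only ever call `queue.put`, so a burst of document updates lengthens the queue instead of blocking the query path.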

Real-World Use Cases and Implementation Lessons

The highest-ROI RAG deployments in 2024-2025 concentrate in three domains: internal knowledge assistants answering questions from HR policy PDFs and SharePoint, IT help and runbook search across incident post-mortems and tickets, and legal-contract review systems querying termination and liability clauses. All three share the same properties: hallucination cost is high, document freshness is critical, and structured metadata filtering at retrieval time adds significant precision.

In practice, teams building these systems most commonly discover that their chunking strategy was wrong, not their LLM or vector store. A legal contract assistant that chunks by fixed 512-token windows will routinely split a clause across two chunks. The retriever fetches the second half. The LLM sees an incomplete context and generates a plausible-sounding but inaccurate answer. Switching to semantic chunking that respects clause boundaries, combined with metadata tagging for document sections, resolves this class of failure more reliably than any model upgrade.

A 2024 survey of AI engineers found that poor data cleaning was the primary cause of RAG pipeline failures in 42% of unsuccessful implementations. The ingestion layer is unglamorous engineering. It is also the highest-leverage place to spend time before touching retrieval optimization.

Frequently Asked Questions

How do I choose between Pinecone, Qdrant, and Weaviate for my RAG pipeline?

Choose Pinecone if you want a fully managed service and can accept higher per-query cost. Choose Qdrant if you need open-source control, complex payload filtering, and high-performance hybrid search on self-hosted infrastructure. Choose Weaviate if your team prefers a GraphQL API and needs multi-tenancy with BM25 hybrid search out of the box.

Why does my RAG system give good answers in testing but bad answers in production?

Testing typically uses a small, clean document set where any retrieval method works. Production introduces distribution shift: new document types, ambiguous queries, and high document volumes where chunking errors compound. The fix is systematic evaluation with a diverse query set, structured metadata filtering, and weekly telemetry review of real query patterns against retrieval scores.

What chunk size should I use for production RAG?

The practical default is 256-to-512 tokens with a 10-to-20% overlap. More important than size is boundary awareness: chunks should respect semantic boundaries such as paragraph breaks, section headers, and clause endings. For hierarchical retrieval, store small chunks for precision retrieval and link them to larger parent chunks for synthesis.

How does hybrid search improve RAG retrieval accuracy?

Hybrid search combines sparse BM25 retrieval (which excels on exact keyword matches) with dense vector retrieval (which excels on semantic similarity). Reciprocal Rank Fusion merges the two ranked lists. Wang et al. (2024) found this combination outperforms either method alone across open-domain QA, multi-hop, and medical benchmarks. The gain is largest on queries containing specific entity names, dates, or product codes.

How do I monitor and improve a RAG pipeline after deployment?

Log retrieval telemetry at every query: chunk IDs retrieved, relevance scores, query-to-answer latency per stage, and user satisfaction signals. Review weekly. Track p95 latency, retrieval precision (are the right chunks appearing?), and answer correctness rate on a golden evaluation set. When precision degrades, investigate chunking and metadata first; when latency degrades, investigate index warming and embedding cache hit rates.

Conclusion

Three insights define production RAG engineering. First, retrieval quality is upstream of everything: no prompt engineering or model upgrade compensates for wrong chunks. Second, hybrid search with re-ranking is now the production baseline; pure vector search is a prototype pattern. Third, observability is not optional; a RAG pipeline that cannot explain why it retrieved a chunk cannot be systematically improved.

The teams closing the gap between RAG adoption and measurable business impact are not using better models. They are engineering better retrieval. The question worth sitting with: if you removed your LLM tomorrow and exposed only the retrieval layer to a user, would the right information surface?