A vector database stores high-dimensional numerical embeddings and retrieves the most semantically similar ones at query time using approximate nearest neighbour (ANN) algorithms. In an enterprise RAG pipeline, the vector database sits between the embedding model and the LLM, returning relevant document chunks so the model can generate grounded, source-cited answers. The four leading options, Pinecone, Weaviate, Qdrant, and pgvector, trade off managed convenience, hybrid search capability, raw query throughput, and operational simplicity in distinct ways.

Why Vector Database Selection Is Now a Strategic Decision

The vector database sits on the critical path of every RAG query. Choose the wrong one, and you inherit a performance ceiling, an ops burden, or vendor lock-in that compounds every sprint. Gartner (2025) projects vector databases will grow at a 75.3% CAGR, the fastest segment in the entire DBMS market. A separate Menlo Ventures survey (2024) found RAG now drives 51% of enterprise AI implementations, up from 31% a year earlier.

Gartner also forecasts that by 2026, more than 30% of enterprises will have adopted vector databases specifically to enrich foundation models with business data. That adoption is not uniform. Teams building their first RAG proof of concept face a very different set of constraints than platform engineers scaling a multi-tenant knowledge base to hundreds of millions of documents.

Most teams start with a vendor claim and end up re-evaluating after their first production incident. This post gives you the benchmark data, architectural patterns, and decision criteria to make the right call before you build.

The wrong vector database does not just slow your RAG pipeline. It adds an ops burden that compounds every sprint.

The Four Contenders at a Glance

Pinecone is fully managed with zero ops; Weaviate leads on native hybrid search; Qdrant delivers the highest raw QPS and the strongest filtered search; pgvector keeps vectors inside Postgres for teams below 10M embeddings. Each reflects a distinct architectural philosophy.

Pinecone is a fully managed, serverless vector database. You push embeddings in via API and query them out. There is no cluster to size, no index to tune in production, and no on-call rotation to staff. The tradeoff is that Pinecone does not expose HNSW parameters, which means you accept whatever recall-latency balance Pinecone chose for you.

Weaviate is open-source and available as a managed cloud service. Its primary differentiator is first-class hybrid search: BM25 keyword retrieval and vector similarity run in a single query, scored and merged natively. This makes Weaviate the default choice when your retrieval quality depends on both semantic meaning and exact keyword matches.

Qdrant is written in Rust and purpose-built for speed and filtered search. Its payload indexing builds dedicated indexes for common filter fields (such as tenant_id, document_type, or date ranges) that integrate tightly with HNSW traversal. At 1M vectors, independent benchmarks place Qdrant at 1,840 QPS, the highest of the four.

pgvector is a Postgres extension. It adds a vector column type and HNSW indexing directly inside your existing database. No new service, no new SDK, no extra infrastructure cost. AWS RDS, Azure Database for PostgreSQL, Google Cloud SQL, Supabase, and Neon all support it out of the box.

Comparison: Pinecone vs Weaviate vs Qdrant vs pgvector

| Option | Key Strength | Best Used When | Est. Cost (10M vectors) | License |
|---|---|---|---|---|
| Pinecone | Zero ops, serverless, scales to billions | Fast path to production with no infra team | $200-400/mo managed | Proprietary |
| Weaviate | Native BM25 + vector hybrid, multi-tenancy | Keyword relevance matters alongside semantics | $700-1,500/mo cloud; $0 license self-hosted, plus infra | BSD-3 / Apache 2 |
| Qdrant | Highest QPS (1,840 at 1M vectors), best filtered search | Latency-critical with complex metadata filters | $600-1,200/mo cloud; $0 license self-hosted, plus infra | Apache 2.0 |
| pgvector | Inside Postgres, zero extra infra | Existing Postgres stack, under 10M vectors | $0 marginal on existing Postgres | PostgreSQL License |

Picking the fastest vector database is the wrong goal. Picking the one that fits your ops model and scale curve is the right one.

Performance Deep Dive: What the Benchmarks Actually Show

At 1M vectors, Qdrant benchmarks at 1,840 QPS; pgvector reaches approximately 640 QPS; Pinecone’s serverless tier adds network round-trip latency; and Weaviate adds 15-30ms for hybrid search. Past 10M vectors, the gap between pgvector and dedicated engines widens significantly.

The narrative that Postgres is slow for vector search comes from the IVFFlat era. With HNSW indexes, available since pgvector 0.5.0, pgvector is competitive with dedicated databases at 1M-vector scale, although results depend heavily on hardware and tuning: Supabase benchmarks showed pgvector HNSW outperforming Qdrant on equivalent compute at 99% accuracy for sub-1M datasets, while the throughput figures above favour Qdrant. At that scale, the database is not the bottleneck. Embedding generation is: it typically consumes around 90ms of a 300ms end-to-end query.

At 50M vectors, the relevant Postgres option is no longer plain pgvector but pgvectorscale, Timescale's extension built on top of it. Published benchmarks (VectorDBBench, 2025) show pgvectorscale reaching 471 QPS at 99% recall, versus Qdrant at 41 QPS on the same dataset. The takeaway: pgvector is the right default below 10M vectors, and a carefully considered option up to 50M with pgvectorscale, but it is not the right tool for a 200M-vector knowledge base.

Pinecone’s serverless tier introduces a separate variable: geographic latency. One benchmark across 14 workloads recorded Pinecone P50 latency at 300ms compared to Qdrant at under 5ms. Most of that difference was the network round-trip to a Pinecone index hosted in us-east-1. Co-locate your application and your Pinecone index in the same region and the gap narrows dramatically.

Filtered search is the performance dimension that benchmarks most consistently underweight. Unfiltered nearest-neighbour search is comparatively easy to optimise. Filtered search at high concurrency, for example a query for the top-10 most similar chunks where tenant_id = ‘acme’ and document_date is after 2024-01-01, exposes the real architectural differences between these systems.
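To see why selective filters expose these differences, here is a toy, brute-force sketch comparing post-filtering (search a fixed candidate pool first, then drop non-matching chunks) with pre-filtering (restrict to matching chunks, then search). Exact search stands in for ANN, and the corpus, the 2% tenant share, and the 50-candidate pool are all hypothetical.

```python
import math
import random

# Hypothetical corpus: 1,000 chunks with random 8-dim embeddings.
# Only every 50th chunk (2% of the corpus) belongs to tenant "acme".
random.seed(7)
DIM = 8
corpus = [
    {
        "id": i,
        "tenant_id": "acme" if i % 50 == 0 else f"tenant-{i % 7}",
        "vec": [random.gauss(0.0, 1.0) for _ in range(DIM)],
    }
    for i in range(1000)
]


def cosine_distance(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return 1.0 - dot / (math.hypot(*a) * math.hypot(*b))


def post_filter(query, k, tenant, candidates=50):
    """Search first (fixed candidate pool), then discard other tenants' chunks."""
    ranked = sorted(corpus, key=lambda c: cosine_distance(query, c["vec"]))
    return [c["id"] for c in ranked[:candidates] if c["tenant_id"] == tenant][:k]


def pre_filter(query, k, tenant):
    """Restrict to the tenant's chunks first, then rank only those."""
    allowed = [c for c in corpus if c["tenant_id"] == tenant]
    ranked = sorted(allowed, key=lambda c: cosine_distance(query, c["vec"]))
    return [c["id"] for c in ranked[:k]]


query = [random.gauss(0.0, 1.0) for _ in range(DIM)]
print("post-filter returned", len(post_filter(query, 10, "acme")), "of 10 requested")
print("pre-filter returned", len(pre_filter(query, 10, "acme")), "of 10 requested")
```

With a filter this selective, the post-filtered list usually comes back with far fewer than 10 results, while pre-filtering always fills the request. Production engines close that gap in different ways, which is exactly what filtered-search benchmarks probe.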

Architecture for a Production Enterprise RAG Pipeline

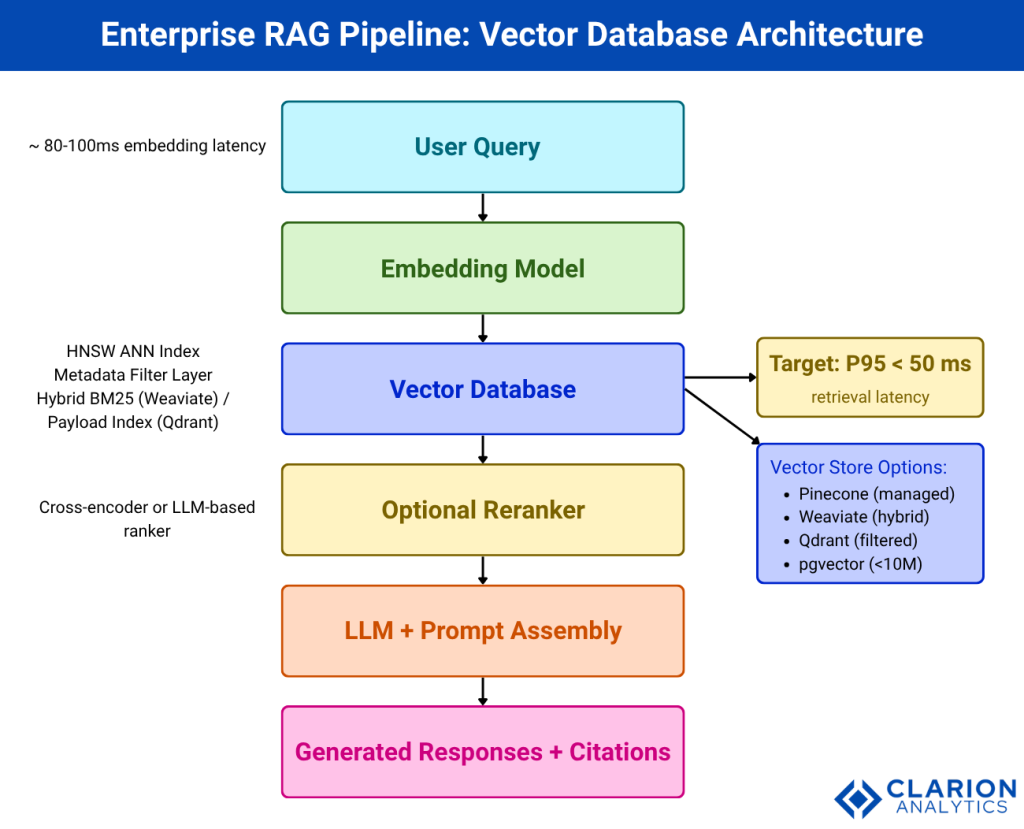

A production RAG system routes user queries through an embedding model, into the vector store for ANN retrieval with metadata filtering, then through an optional reranker before the LLM generates a grounded response. The vector database sits at the critical latency path, and its index type plus filtering strategy determine both recall accuracy and response time.

Architecture caption: A production enterprise RAG pipeline routes every user query through three latency-sensitive stages: embedding, retrieval, and generation. The vector database governs the middle stage, where index type, filtering strategy, and ANN algorithm determine both recall accuracy and response latency. Teams typically target P95 retrieval latency under 50ms before the reranker. The choice of vector store, its index configuration, and whether it co-locates with application data or runs as a dedicated service shape the entire operational profile.
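Checking a P95 target requires a percentile over measured latencies, not an average. A minimal sketch using the nearest-rank method; the sample latencies are hypothetical, and the 50ms budget is the pre-reranker retrieval target described in the caption.

```python
import math


def percentile(samples, p):
    """Nearest-rank percentile: the smallest sample with at least p% of values <= it."""
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


# Hypothetical per-query retrieval latencies in milliseconds (20 samples).
retrieval_ms = [12, 14, 15, 15, 16, 17, 18, 18, 19, 20,
                21, 22, 23, 25, 27, 30, 33, 38, 46, 71]

TARGET_P95_MS = 50  # retrieval budget before the reranker
p50 = percentile(retrieval_ms, 50)
p95 = percentile(retrieval_ms, 95)
mean = sum(retrieval_ms) / len(retrieval_ms)
print(f"mean={mean:.0f}ms P50={p50}ms P95={p95}ms within budget: {p95 < TARGET_P95_MS}")
```

The mean of these samples is 25ms, which looks comfortable; the P95 of 46ms shows how close the tail actually sits to the 50ms budget, and the single 71ms outlier only surfaces at higher percentiles.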

Research from ETH Zurich and IBM (RAG-Stack, 2025) proposes treating vector database selection as a three-pillar decision: algorithmic (index type, quantisation, filtering strategy), systems (concurrency, replication, sharding), and hardware (RAM vs. disk-mapped storage). This framing explains why vendor benchmarks diverge so sharply from real-world results: most benchmarks only measure one pillar.

Teams building enterprise RAG also need to account for reranking. A well-tuned HNSW retrieval step returns 20-50 candidate chunks, and a cross-encoder reranker narrows those to the top 5 before prompt assembly. Semantic Pyramid Indexing research from UCLA and Columbia (2024) shows that single-resolution flat indexing degrades relevance across diverse query types, and that multi-resolution control improves both speed and context quality for heterogeneous enterprise corpora.
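The two-stage retrieve-then-rerank pattern can be sketched as follows, assuming the vector store has already returned (chunk, distance) pairs. The cross-encoder is replaced by a hypothetical word-overlap scorer purely so the example runs without a model; in a real pipeline, rerank_score would call an actual cross-encoder.

```python
from typing import Callable, List, Tuple


def retrieve_then_rerank(
    query: str,
    candidates: List[Tuple[str, float]],        # (chunk_text, ann_distance) from the vector store
    rerank_score: Callable[[str, str], float],  # hypothetical cross-encoder: higher = more relevant
    retrieve_k: int = 30,
    final_k: int = 5,
) -> List[str]:
    # Stage 1: keep the retrieve_k nearest chunks by ANN distance (lower = closer).
    shortlist = sorted(candidates, key=lambda c: c[1])[:retrieve_k]
    # Stage 2: score each (query, chunk) pair jointly and keep the best final_k.
    rescored = sorted(shortlist, key=lambda c: rerank_score(query, c[0]), reverse=True)
    return [text for text, _ in rescored[:final_k]]


def overlap_score(query: str, text: str) -> float:
    """Stand-in for a cross-encoder: counts shared lowercase words."""
    return len(set(query.lower().split()) & set(text.lower().split()))


chunks = [
    ("GDPR compliance checklist for processors", 0.21),
    ("Quarterly revenue summary", 0.18),
    ("Data retention under GDPR", 0.25),
    ("Office seating plan", 0.40),
]
print(retrieve_then_rerank("GDPR compliance checklist", chunks, overlap_score, retrieve_k=3, final_k=2))
```

In this toy data the ANN step ranks the revenue summary closest, but the reranker promotes the GDPR checklist to first place and drops the revenue summary, which is the kind of correction the reranking stage exists for.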

Code Snippet 1: pgvector HNSW Index Setup and Similarity Query

Source: pgvector/pgvector GitHub README – This snippet demonstrates how a developer adds HNSW indexing to a Postgres table and runs a cosine similarity search in pure SQL. It is the best first-look example for any team already running Postgres, because it shows that zero new infrastructure is required.

The <=> operator computes cosine distance (1 minus cosine similarity), so a lower value means higher similarity. The HNSW index keeps this query in the single-digit milliseconds for datasets up to approximately 10M rows on modest hardware.
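To make the semantics of <=> concrete, here is a minimal pure-Python sketch of the cosine distance it returns and of the exact ranking that ORDER BY embedding <=> query LIMIT k would produce without an index. An HNSW index approximates this ranking, trading a small amount of recall for speed. The toy 3-dimensional vectors and the function names are hypothetical.

```python
import math
from typing import Dict, List, Sequence


def cosine_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """1 - cosine similarity, the quantity pgvector's <=> operator returns (range 0 to 2)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def exact_top_k(rows: Dict[int, Sequence[float]], query: Sequence[float], k: int) -> List[int]:
    """Brute-force equivalent of: SELECT id FROM items ORDER BY embedding <=> query LIMIT k."""
    return sorted(rows, key=lambda row_id: cosine_distance(rows[row_id], query))[:k]


# Toy 3-dimensional embeddings; real embedding models emit hundreds or thousands of dimensions.
items = {1: [1.0, 2.0, 3.0], 2: [4.0, 5.0, 6.0], 3: [-1.0, 0.0, 1.0]}
print(exact_top_k(items, [3.0, 1.0, 2.0], k=2))
```

Because cosine distance depends only on direction, scaling a stored vector (say, doubling every component) leaves its rank unchanged.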

At sub-10M vectors, pgvector HNSW returns results in 5-8ms. The database is not your bottleneck. Embedding generation is.

Filtered Search: The Feature That Changes the Enterprise Decision

Qdrant’s payload indexing integrates metadata filters directly into HNSW traversal, maintaining sub-10ms P50 latency even with complex filters. Pinecone’s filtering adds noticeable latency and its HNSW parameters are not user-tunable. This single difference often decides the selection in multi-tenant enterprise deployments.

In a real enterprise RAG deployment, almost every query carries filters. A legal team’s document assistant scopes results to the current engagement. A customer support bot restricts chunks to the querying customer’s account. A compliance tool filters by jurisdiction and document date. These are not edge cases; they are the dominant query pattern.

Qdrant’s architecture separates payload storage, vector storage, and the HNSW graph, with explicit memory-mapped disk support. Common filter fields get dedicated payload indexes that are consulted alongside ANN graph traversal. As a result, filtered search stays fast even at 100M vectors, and quantisation cuts memory requirements 3-5x compared with fully in-memory configurations.

Weaviate uses a different approach: its BM25 and vector search pipelines run in parallel and merge results via a configurable fusion algorithm. A search for “GDPR compliance checklist” benefits from both the exact phrase match and the semantic neighbourhood.
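A rank-based fusion in the spirit of reciprocal rank fusion (RRF) can be sketched in a few lines. This is not Weaviate's implementation: the constant k=60, the alpha weighting between the vector and BM25 lists, and the document ids are assumptions chosen for illustration.

```python
from collections import defaultdict
from typing import Dict, List


def ranked_fusion(bm25_ids: List[str], vector_ids: List[str], alpha: float = 0.5, k: int = 60) -> List[str]:
    """Merge two ranked lists; alpha weights the vector list, (1 - alpha) the BM25 list."""
    scores: Dict[str, float] = defaultdict(float)
    for rank, doc_id in enumerate(bm25_ids):
        scores[doc_id] += (1 - alpha) / (k + rank + 1)
    for rank, doc_id in enumerate(vector_ids):
        scores[doc_id] += alpha / (k + rank + 1)
    # Higher fused score first; documents found by both retrievers accumulate two terms.
    return sorted(scores, key=lambda d: scores[d], reverse=True)


# Hypothetical result lists for the query "GDPR compliance checklist".
bm25 = ["gdpr-checklist", "gdpr-fines", "cookie-policy"]            # exact keyword matches
vector = ["privacy-audit-guide", "gdpr-checklist", "dpa-template"]  # semantic neighbours
print(ranked_fusion(bm25, vector, alpha=0.5))
```

Here gdpr-checklist comes out on top because it appears in both lists; a document found by only one retriever needs a high rank there to compete. Raising alpha shifts weight toward the semantic results.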

Code Snippet 2: Qdrant Filtered Vector Search (Python SDK)

Source: qdrant/qdrant-client examples – This snippet shows how Qdrant combines an embedding vector with a metadata filter in a single API call. The must condition on tenant_id ensures the ANN search never crosses tenant boundaries, a critical requirement for multi-tenant enterprise RAG.

Qdrant evaluates the filter condition at the payload index level before entering the HNSW graph, not after. This pre-filtering approach keeps query latency nearly constant as tenant count grows, which is why Qdrant is the most common choice for SaaS platforms that serve hundreds of isolated customer namespaces from a single cluster.
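The payload-index idea can be illustrated with a toy collection that keeps an inverted index from a payload value (such as a tenant_id) to point ids and consults it before ranking. This is a simplified sketch, not Qdrant's engine: Qdrant's query planner chooses between searching payload-index matches directly and applying the filter during HNSW traversal, depending on filter cardinality. The class, its methods, and the must_value parameter (which mirrors a single must condition) are hypothetical.

```python
import math
from collections import defaultdict
from typing import Dict, List, Sequence, Set


class ToyCollection:
    """Hypothetical mini vector store with an inverted payload index on one field."""

    def __init__(self, indexed_field: str) -> None:
        self.indexed_field = indexed_field
        self.vectors: Dict[int, Sequence[float]] = {}
        self.payloads: Dict[int, Dict[str, str]] = {}
        # payload value -> ids of points carrying that value (e.g. "acme" -> {1, 3})
        self.payload_index: Dict[str, Set[int]] = defaultdict(set)

    def upsert(self, point_id: int, vector: Sequence[float], payload: Dict[str, str]) -> None:
        old = self.payloads.get(point_id)
        if old is not None:  # keep the index consistent when a point is overwritten
            self.payload_index[old[self.indexed_field]].discard(point_id)
        self.vectors[point_id] = vector
        self.payloads[point_id] = payload
        self.payload_index[payload[self.indexed_field]].add(point_id)

    def search(self, query: Sequence[float], must_value: str, limit: int) -> List[int]:
        # Pre-filter: the payload index yields candidate ids without scanning every point.
        allowed = self.payload_index.get(must_value, set())
        # Exact ranking over the allowed ids stands in for filter-aware HNSW traversal.
        return sorted(allowed, key=lambda pid: self._distance(query, self.vectors[pid]))[:limit]

    @staticmethod
    def _distance(a: Sequence[float], b: Sequence[float]) -> float:
        dot = sum(x * y for x, y in zip(a, b))
        return 1.0 - dot / (math.hypot(*a) * math.hypot(*b))


col = ToyCollection("tenant_id")
col.upsert(1, [1.0, 0.0], {"tenant_id": "acme"})
col.upsert(2, [0.9, 0.1], {"tenant_id": "globex"})
col.upsert(3, [0.0, 1.0], {"tenant_id": "acme"})
print(col.search([1.0, 0.05], must_value="acme", limit=2))
```

Point 2 sits very close to the query, but because it belongs to another tenant it never enters the candidate set, so the search cannot cross tenant boundaries.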

When to Upgrade from pgvector and What to Upgrade To

Migrate from pgvector when your corpus exceeds 10M vectors, when filtered queries degrade P95 latency past your SLA, or when you need built-in hybrid BM25+vector search. Qdrant is the most common upgrade path for performance; Weaviate is the upgrade path when hybrid search is the core product feature.

In practice, the migration trigger is almost never a round number of vectors. Teams notice it when HNSW index size pushes Postgres RAM usage to the limit and the DBA asks for a dedicated instance, or when a filter-heavy query starts returning in 200ms instead of 20ms after a corpus refresh.

The arXiv paper on vector search cost-performance trade-offs (Abtahi et al., 2025) shows that in-memory ANN indexes create escalating operational costs as datasets grow. Qdrant’s memory-mapped storage and scalar quantisation address this: you can store vectors on disk and keep only the HNSW graph and hot payload data in RAM, cutting infrastructure cost by 60-80% at large scale without a meaningful recall penalty.
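The memory arithmetic behind scalar quantisation is easy to check: float32 stores 4 bytes per dimension and int8 stores 1, a 4x reduction in raw vector storage, consistent with the 3-5x range cited in this post. The sketch below uses a per-vector min/max range for simplicity; production engines typically calibrate the range across the collection, so treat this as an assumption-laden illustration rather than Qdrant's algorithm. The 100M x 768-dimension corpus is hypothetical.

```python
from typing import List, Tuple


def quantise_int8(vec: List[float]) -> Tuple[List[int], float, float]:
    """Min-max scalar quantisation: map each float to an int8 code in [-128, 127]."""
    lo, hi = min(vec), max(vec)
    scale = (hi - lo) / 255 or 1.0  # guard against constant vectors (hi == lo)
    codes = [round((x - lo) / scale) - 128 for x in vec]
    return codes, lo, scale


def dequantise(codes: List[int], lo: float, scale: float) -> List[float]:
    """Approximate reconstruction; error per value is at most about scale / 2."""
    return [(c + 128) * scale + lo for c in codes]


def raw_vector_gib(n_vectors: int, dims: int, bytes_per_dim: int) -> float:
    """Storage for raw vector data only (no graph, payloads, or overhead), in GiB."""
    return n_vectors * dims * bytes_per_dim / 2**30


vec = [0.12, -0.5, 0.33, 0.9, -0.71]
codes, lo, scale = quantise_int8(vec)
max_err = max(abs(a - b) for a, b in zip(vec, dequantise(codes, lo, scale)))
print("codes:", codes, "max reconstruction error:", round(max_err, 4))

# Hypothetical corpus: 100M vectors of 768 dimensions.
f32 = raw_vector_gib(100_000_000, 768, 4)
i8 = raw_vector_gib(100_000_000, 768, 1)
print(f"float32: {f32:.0f} GiB, int8: {i8:.0f} GiB ({f32 / i8:.0f}x smaller)")
```

The reconstruction error is bounded by half a quantisation step, which is why recall typically degrades only slightly; engines that support it can also rescore the final candidates with the original vectors kept on disk.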

For teams already invested in a managed Postgres stack, pgvectorscale (from Timescale) is a useful middle step before migrating to a dedicated system. It extends pgvector with a DiskANN-inspired index that significantly improves throughput at 50M+ vectors while keeping everything inside Postgres.

Enterprises are choosing RAG for 30-60% of their AI use cases. The vector store you pick today becomes load-bearing infrastructure tomorrow.

Frequently Asked Questions

What is the fastest vector database for RAG in 2025?

Qdrant consistently delivers the highest raw query throughput among self-hosted options, benchmarking at 1,840 QPS at 1M vectors and maintaining sub-10ms P50 latency under filtered queries. For managed cloud, Pinecone offers competitive latency when your application and index are co-located in the same AWS region. Latency at the embedding stage, typically 80-100ms, usually dominates total query time for smaller datasets.

Can pgvector handle production RAG workloads?

Yes, for datasets under 10M vectors. With HNSW indexing, pgvector returns results in 5-8ms and integrates directly with your existing Postgres-based application stack. The practical ceiling is approximately 10M vectors before HNSW index memory requirements and filtered query latency make a dedicated vector database more economical. pgvectorscale can stretch that ceiling toward 50M vectors, but for knowledge bases in the hundreds of millions of vectors a purpose-built engine such as Qdrant is the safer choice.

What is the difference between Pinecone and Weaviate for enterprise use?

Pinecone is a fully managed proprietary service optimised for zero operations overhead and fast time-to-production. Weaviate is open-source, self-hostable, and differentiated by first-class hybrid BM25+vector search in a single query. Pinecone suits teams that want to avoid infrastructure; Weaviate suits teams that need keyword relevance alongside semantic similarity and are willing to manage or pay for a managed cluster.

How does Qdrant handle filtered search better than other vector databases?

Qdrant builds dedicated payload indexes for common filter fields (such as tenant_id, document_type, or date) and integrates them directly into HNSW graph traversal. This means filters are evaluated before entering the ANN search, not after. The result is that P50 latency stays below 10ms even with two or three active filter conditions, where Pinecone and pgvector see latency increase proportional to filter selectivity.

When should I move off pgvector to a dedicated vector database?

Plan a migration when your corpus approaches 10M vectors, when Postgres RAM is constrained by HNSW index size, or when P95 query latency under concurrent load exceeds your SLA. Qdrant is the most common first destination for performance-driven migrations. Weaviate is the right choice when your product roadmap requires native hybrid search. Both offer Docker deployments for a low-friction evaluation on your own data before committing.

Choosing the Right Vector Store for Your Enterprise RAG Stack

Three conclusions deserve emphasis. First, the Postgres-is-slow-for-vectors narrative is outdated: pgvector with HNSW is a production-grade choice for the majority of enterprise RAG workloads below 10M vectors. Second, filtered search is the real differentiator at scale, and Qdrant’s payload indexing architecture is purpose-built for the multi-tenant, metadata-heavy query patterns that characterise enterprise deployments. Third, Pinecone and Weaviate solve distinct problems: managed convenience versus native hybrid search.

Match your vector store to your scale curve, not your current size. A team at 2M vectors today that is growing 50% quarter-over-quarter should design for 20M vectors, not 2M.
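The scale-curve advice is compound-growth arithmetic. A small sketch using the 2M-vector, 50%-per-quarter example; the helper names are illustrative.

```python
import math


def project(current: float, qoq_growth: float, quarters: int) -> float:
    """Corpus size after compounding qoq_growth for the given number of quarters."""
    return current * (1 + qoq_growth) ** quarters


def quarters_until(current: float, target: float, qoq_growth: float) -> int:
    """Smallest whole number of quarters for compound growth to reach target."""
    return math.ceil(math.log(target / current) / math.log(1 + qoq_growth))


current_vectors = 2_000_000
for q in (4, 6, 8):
    print(f"after {q} quarters: {project(current_vectors, 0.5, q) / 1e6:.1f}M vectors")
print("quarters to pass 10M:", quarters_until(current_vectors, 10_000_000, 0.5))
print("quarters to pass 20M:", quarters_until(current_vectors, 20_000_000, 0.5))
```

At that rate the corpus passes the ~10M pgvector comfort zone after four quarters (about 10.1M) and reaches roughly 22.8M after six, so a 20M design target covers about a year and a half of growth.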

The question worth sitting with: if your retrieval recall dropped by 10% tomorrow, would your current vector database give you the observability and tuning knobs to diagnose and fix it before your users noticed?

Table of Contents

- Why Vector Database Selection Is Now a Strategic Decision
- The Four Contenders at a Glance
- Performance Deep Dive: What the Benchmarks Actually Show
- Architecture for a Production Enterprise RAG Pipeline
- Filtered Search: The Feature That Changes the Enterprise Decision
- When to Upgrade from pgvector and What to Upgrade To
- Frequently Asked Questions
- Choosing the Right Vector Store for Your Enterprise RAG Stack