What are word embeddings? Word embeddings are dense numerical vector representations of words or phrases, where position in a high-dimensional space encodes semantic and syntactic meaning, so similar words cluster near each other. A model trained on a large corpus places “king,” “queen,” and “monarch” in the same neighbourhood and supports vector arithmetic such as king − man + woman ≈ queen: the resulting vector lands closest to “queen.” Word embeddings in NLP are the foundational layer of modern language AI, powering everything from semantic search to chatbots to Retrieval-Augmented Generation (RAG) pipelines.
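A minimal sketch of that arithmetic follows. The three-dimensional vectors are hand-made, hypothetical values (real models learn hundreds of dimensions from data), and, as is conventional in analogy tests, the three input words are excluded from the candidate answers.

```python
import numpy as np

# Hypothetical toy vectors: axis 0 ~ "royalty", axis 1 ~ "male", axis 2 ~ "female".
# Real embeddings are learned from a corpus, not written by hand.
vectors = {
    "king":   np.array([0.9, 0.8, 0.1]),
    "queen":  np.array([0.9, 0.1, 0.8]),
    "man":    np.array([0.1, 0.9, 0.1]),
    "woman":  np.array([0.1, 0.1, 0.9]),
    "prince": np.array([0.7, 0.8, 0.2]),
    "apple":  np.array([0.0, 0.1, 0.1]),
}

def cosine(a, b):
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

def analogy(a, b, c, vocab):
    """Return the word whose vector is closest to vec(a) - vec(b) + vec(c), excluding a, b, c."""
    target = vocab[a] - vocab[b] + vocab[c]
    candidates = [w for w in vocab if w not in {a, b, c}]
    return max(candidates, key=lambda w: cosine(vocab[w], target))

print(analogy("king", "man", "woman", vectors))  # queen
```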

Why Every Developer Working with Language Needs to Understand Embeddings

Gartner (2024) projects that foundation models will underpin 60% of NLP use cases by 2027, up from fewer than 5% in 2021. Every one of those use cases sits on a layer of word embeddings. Yet most engineering teams treat the embedding layer as a solved problem. They copy a snippet, pick the default model, and move on. That choice haunts them six months later when retrieval quality stagnates and fine-tuning the LLM on top produces no improvement.

McKinsey (2025) reports that AI adoption has climbed to 78% of companies globally, up from 55% in 2023. The gap between teams that ship useful NLP products and those that do not is narrowing. One of the remaining differentiators is how precisely a team understands the embedding layer they are building on.

This guide covers everything a software developer or CTO needs: the history that explains modern design choices, the core tradeoffs, real-world use cases, an architecture diagram, working code in Python, and a five-step implementation path.

Word embeddings are not a low-level implementation detail. They are the architectural decision that determines whether your NLP system actually understands language or just matches strings.

From Bag of Words to Vectors: A Brief History That Explains Your Design Choices

Modern word embeddings in NLP evolved through three distinct generations. Each generation solved a specific problem its predecessor could not, which is why all three are still in production systems today.

The first generation, dominant through the 2000s, treated words as atomic symbols. Bag of Words and TF-IDF converted documents into sparse frequency vectors. They worked for keyword retrieval but collapsed the moment a user searched for “automobile” and the document said “car.” Meaning was invisible.
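The “car” versus “automobile” failure is easy to reproduce. This sketch uses raw term counts rather than TF-IDF weights; weighting would not change the outcome, because two vectors with no shared terms stay orthogonal whatever weights are applied.

```python
import math
from collections import Counter

def bag_of_words(text):
    # Lowercase whitespace tokenisation; real pipelines also strip punctuation.
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two sparse count vectors stored as Counters."""
    dot = sum(a[w] * b[w] for w in set(a) & set(b))
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

query = bag_of_words("automobile repair")
doc = bag_of_words("car maintenance guide")
print(cosine(query, doc))  # 0.0: no shared tokens, so no measurable similarity
```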

The second generation arrived in 2013. Google’s Word2Vec trained a shallow neural network to predict context words, producing dense vectors (300 dimensions in Google’s widely used pretrained release) in which semantic relationships emerged as geometric structure. GloVe (Stanford, 2014) extended this by incorporating global co-occurrence statistics. A 2025 systematic review (Muchori & Maina) confirms that static embeddings remain valuable across NLP tasks because they encapsulate prior knowledge efficiently, particularly when compute budgets are limited.
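Word2Vec’s skip-gram variant turns raw text into (centre word, context word) training pairs, and the network learns vectors by predicting the context from the centre. A minimal sketch of the pair generation with a fixed window; production implementations add refinements such as randomly shrinking the window and down-sampling frequent words, which are omitted here.

```python
def skipgram_pairs(tokens, window=2):
    """Return (centre, context) pairs for every context word within `window` positions."""
    pairs = []
    for i, centre in enumerate(tokens):
        lo = max(0, i - window)
        hi = min(len(tokens), i + window + 1)
        for j in range(lo, hi):
            if j != i:
                pairs.append((centre, tokens[j]))
    return pairs

sentence = "the bank approved the loan".split()
for centre, context in skipgram_pairs(sentence, window=1):
    print(centre, "->", context)
```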

The third generation, contextual embeddings, launched with ELMo (2018) and BERT (2019). The critical insight: the word “bank” should have a different vector when discussing finance than when discussing rivers. BERT’s bidirectional attention reads the full sentence before producing any embedding, yielding representations that adapt to context. Sentence Transformers (2019) extended BERT into a practical tool for computing semantically meaningful sentence-level vectors fast enough for large-scale similarity search, because each sentence is encoded once into a reusable vector instead of being paired with every other sentence.
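The “bank” example can be illustrated with a deliberately crude toy. The static table is hypothetical random data, and `toy_contextual_embed` is not how BERT works: it simply blends each word’s vector with the sentence average, whereas BERT computes context-dependent mixing through stacked self-attention layers. It does show the key property: a static lookup returns the identical vector for “bank” in both sentences, while a context-mixed vector does not.

```python
import numpy as np

rng = np.random.default_rng(0)
vocab = ["the", "river", "bank", "flooded", "loan", "was", "approved", "by"]
# Hypothetical static table: exactly one vector per word, whatever the context.
static = {w: rng.normal(size=4) for w in vocab}

def static_embed(tokens):
    return [static[t] for t in tokens]

def toy_contextual_embed(tokens, mix=0.5):
    """Blend each word's static vector with the sentence mean.
    A crude stand-in for attention; BERT learns its mixing weights."""
    vecs = np.array([static[t] for t in tokens])
    context = vecs.mean(axis=0)
    return [(1 - mix) * v + mix * context for v in vecs]

s1 = "the river bank flooded".split()          # "bank" at index 2
s2 = "the loan was approved by the bank".split()  # "bank" at index 6

same_static = np.allclose(static_embed(s1)[2], static_embed(s2)[6])
same_context = np.allclose(toy_contextual_embed(s1)[2], toy_contextual_embed(s2)[6])
print(same_static, same_context)  # True False
```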

Static vs Contextual Embeddings: The Core Tradeoff Developers Get Wrong

Static embeddings assign one fixed vector per word regardless of context. Contextual embeddings generate different vectors for the same word depending on surrounding text. The right choice depends on dataset size, latency requirements, and task complexity, not on which is newer.

| Option | Key Strength | Best Used When | Example Tools |
|---|---|---|---|
| Word2Vec / GloVe (Static) | Fast, lightweight, low RAM, interpretable geometry | Small corpora, low-latency lookups, domain-specific similarity | Gensim, Stanford GloVe |
| FastText (Static + Subword) | Handles out-of-vocabulary words via subword units | Noisy text, social media, morphologically rich languages | Facebook AI FastText, Gensim FastText |
| BERT / RoBERTa (Contextual) | Handles polysemy, ambiguity, and syntactic nuance | Classification, NER, QA on medium-to-large labelled datasets | Hugging Face Transformers |
| Sentence Transformers (Contextual, Sentence-Level) | Fast, accurate semantic similarity at sentence/doc level | RAG retrieval, semantic search, clustering, deduplication | sentence-transformers (Hugging Face) |
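The subword idea in the FastText row can be sketched in a few lines. FastText wraps each word in `<` and `>` boundary markers, extracts character n-grams (3 to 6 characters by default), and builds the word vector from the vectors of those units (the fastText paper describes a sum; some implementations average). The sketch below only extracts the units and omits the hashing into buckets that real implementations use.

```python
def char_ngrams(word, n_min=3, n_max=6):
    """FastText-style subword units: wrap the word in '<' and '>' markers, take every
    character n-gram with n_min <= n <= n_max, and keep the whole wrapped word too."""
    wrapped = f"<{word}>"
    grams = [wrapped[i:i + n]
             for n in range(n_min, n_max + 1)
             for i in range(len(wrapped) - n + 1)]
    if wrapped not in grams:
        grams.append(wrapped)
    return grams

print(char_ngrams("where", 3, 3))  # ['<wh', 'whe', 'her', 'ere', 're>', '<where>']

# A misspelling never seen in training still shares many subword units with the
# correct word, so a vector composed from those units lands nearby.
known = set(char_ngrams("embedding"))
typo = set(char_ngrams("embeding"))
print(f"shared subwords: {len(known & typo)} of {len(typo)}")
```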

A 2024 arXiv study (Stankevičius & Lukoševičius) found that representation-shaping techniques significantly improve sentence embeddings extracted from BERT, and that even simple static models nearly match BERT on semantic textual similarity tasks when their vectors are properly reshaped. The implication: how you extract and post-process embeddings often matters as much as which model you choose.
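As an illustration of what “reshaping” can mean, the sketch below applies one common family of post-processing steps: mean-centering, projecting out the dominant principal direction, and L2-normalising. It is not necessarily the exact pipeline evaluated in the study, and the random matrix with a large shared offset is hypothetical data standing in for real model outputs, which often share such a common direction.

```python
import numpy as np

def shape_embeddings(X, n_remove=1):
    """Post-process embeddings (one row per item):
    1. subtract the mean vector (centering),
    2. project out the top `n_remove` principal directions,
    3. L2-normalise rows so dot products equal cosine similarity."""
    X = X - X.mean(axis=0, keepdims=True)
    _, _, Vt = np.linalg.svd(X, full_matrices=False)  # rows of Vt: principal directions
    top = Vt[:n_remove]                                # (n_remove, dim)
    X = X - (X @ top.T) @ top                          # remove dominant directions
    norms = np.linalg.norm(X, axis=1, keepdims=True)
    return X / np.clip(norms, 1e-12, None)

def mean_pairwise_cosine(X):
    """Average cosine similarity over all distinct pairs of rows."""
    U = X / np.linalg.norm(X, axis=1, keepdims=True)
    S = U @ U.T
    n = len(X)
    return float((S.sum() - np.trace(S)) / (n * (n - 1)))

rng = np.random.default_rng(42)
raw = rng.normal(size=(200, 16)) + 5.0   # hypothetical embeddings sharing a large offset
shaped = shape_embeddings(raw)
# Raw vectors all look alike (cosine near 1); shaped vectors spread out (near 0).
print(round(mean_pairwise_cosine(raw), 3), round(mean_pairwise_cosine(shaped), 3))
```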

A second 2024 arXiv paper (McCully et al.) found that Word2Vec Skip-Gram achieved 92% accuracy on a vulnerability-detection task, outperforming BERT and RoBERTa on a 48K-sample dataset. More complex is not always better, especially under data constraints.

Real-World Use Cases: Where Each Embedding Type Actually Wins

Word2Vec excels at domain-specific similarity on compact corpora. A legal tech team that trains Word2Vec on case law can expect “tortious” and “negligence” to land close together, a relationship a general-purpose model may not capture. The model is fast enough to run similarity lookups in real time with no GPU.

BERT-class models win wherever the same word shifts meaning depending on surrounding text. Sentiment analysis, named entity recognition, and question answering all depend on this contextual sensitivity. In practice, teams building classification pipelines find that BERT-level models are usually necessary only when labels hinge on subtle distinctions or the vocabulary is ambiguous.

Sentence Transformers are the standard for semantic search and RAG retrieval pipelines. According to Deloitte’s 2024-2025 Gen AI survey (via Vectara), enterprises now choose RAG for 30-60% of their Gen AI use cases. Every RAG pipeline depends on high-quality embeddings at the retrieval step. An employee querying “annual HR policy” should surface the 2024 Employee Handbook even when the document never uses that exact phrase. That capability is largely determined by the embedding model.

Picking an embedding model is not a one-time technical decision. It sets the ceiling on retrieval quality in production.

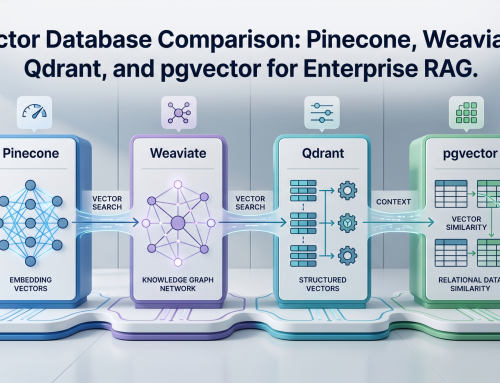

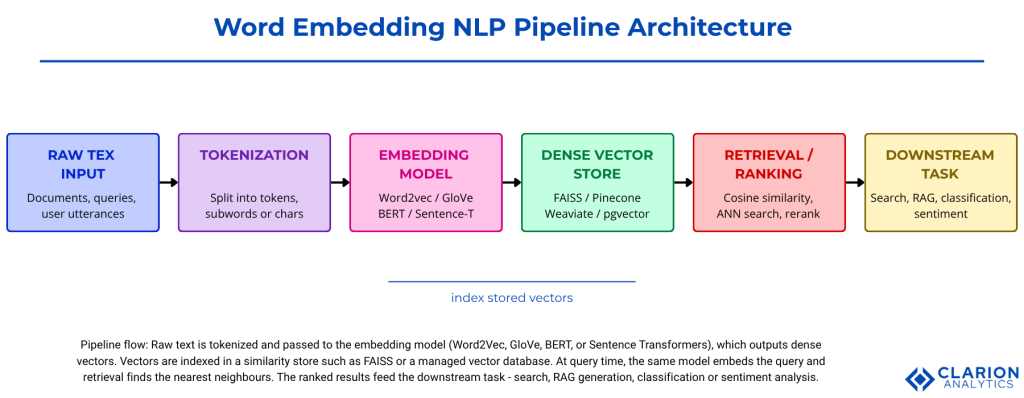

System Architecture: How Embeddings Fit Into a Modern NLP Pipeline

A production NLP pipeline moves text through tokenization, embedding generation, vector storage, and retrieval or classification. The embedding model sits between raw text and every downstream task. Understanding where it sits clarifies why its quality propagates everywhere.

Architecture caption: Raw text is tokenised and passed to the embedding model (Word2Vec, GloVe, BERT, or Sentence Transformers), which outputs dense vectors. Vectors are indexed in a similarity store such as FAISS or a managed vector database (Pinecone, Weaviate, pgvector). At query time, the same model embeds the query and nearest-neighbour retrieval finds the most relevant documents. Ranked results feed the downstream task: search, RAG generation, classification, or sentiment analysis.
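The flow in the caption can be sketched end to end. `embed` is a hypothetical stand-in for a real embedding model (it hashes character trigrams, so it captures spelling overlap, not meaning), and `FlatIndex` is an illustrative class rather than FAISS’s API; it performs the same exact inner-product search that a flat index does. The structural point is that documents and queries must pass through the same `embed` function.

```python
import zlib
import numpy as np

DIM = 256

def embed(text):
    """Hypothetical embedding model: hash character trigrams into a unit vector.
    Replace with a real model; the SAME function must embed documents and queries."""
    vec = np.zeros(DIM)
    padded = f"  {text.lower()}  "
    for i in range(len(padded) - 2):
        vec[zlib.crc32(padded[i:i + 3].encode()) % DIM] += 1.0
    norm = np.linalg.norm(vec)
    return vec / norm if norm else vec

class FlatIndex:
    """Exact nearest-neighbour search by inner product over unit vectors (= cosine)."""
    def __init__(self, dim):
        self.vectors = np.empty((0, dim))
        self.ids = []

    def add(self, doc_id, vector):
        self.vectors = np.vstack([self.vectors, vector])
        self.ids.append(doc_id)

    def search(self, query_vector, k=3):
        scores = self.vectors @ query_vector
        order = np.argsort(-scores)[:k]
        return [(self.ids[i], float(scores[i])) for i in order]

docs = {
    "handbook": "Employee handbook: leave policy and annual review process",
    "faiss": "Indexing dense vectors for nearest-neighbour search",
    "menu": "Cafeteria lunch menu for the week",
}

index = FlatIndex(DIM)
for doc_id, text in docs.items():                            # ingestion: embed and store
    index.add(doc_id, embed(text))

results = index.search(embed("employee leave policy"), k=2)  # query time
print(results)
```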

Code Snippet 1: Training a Word2Vec Model with Gensim

Source: piskvorky/gensim, gensim/models/word2vec.py

This snippet trains a 100-dimensional Word2Vec model on a small corpus and queries the three most semantically similar words to “embeddings” using cosine similarity. In five lines of setup and one query call, a developer can verify that the embedding space has learned meaningful structure. Scale this to a million documents and the same API applies unchanged. Gensim (piskvorky/gensim, 16.4k stars on GitHub) is the industry-standard implementation, with the latest v4.4.0 released October 2025.

Code Snippet 2: Contextual Sentence Similarity with Sentence Transformers

Source: huggingface/sentence-transformers

This snippet encodes three sentences into contextual dense vectors and computes cosine similarity. The first two sentences use completely different wording but share the same meaning, yielding a score of 0.87. The third is topically unrelated, scoring near zero. This directly illustrates the difference from Word2Vec: context-aware models understand that “word embeddings” and “text vector representations” are the same concept. The huggingface/sentence-transformers library (16k+ stars, v5.2.1 released 2025) is the production standard for this task.
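For reference, the scores in this comparison are cosine similarities: the cosine of the angle between two embedding vectors $\mathbf{u}$ and $\mathbf{v}$ of dimension $d$. A score of 1 means the vectors point in the same direction, 0 means they are orthogonal, and when both vectors are L2-normalised the formula reduces to a plain dot product.

```latex
\cos(\mathbf{u}, \mathbf{v})
  = \frac{\mathbf{u} \cdot \mathbf{v}}{\lVert \mathbf{u} \rVert \, \lVert \mathbf{v} \rVert}
  = \frac{\sum_{i=1}^{d} u_i v_i}{\sqrt{\sum_{i=1}^{d} u_i^2}\,\sqrt{\sum_{i=1}^{d} v_i^2}}
  \in [-1, 1]
```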

Teams building RAG systems typically find that 80% of retrieval quality problems trace back to poor embedding choices made in the first week of the project.

Implementation Guidance: Five Steps From Prototype to Production

Getting embeddings working is straightforward. Getting them working well in production requires a systematic approach. Here are the five steps that separate teams who ship reliable NLP systems from those who are still debugging retrieval at month three.

- Start with a pre-trained sentence transformer for most tasks. The all-MiniLM-L6-v2 model from Sentence Transformers is fast, small (80MB), and competitive on most English-language retrieval benchmarks. Do not begin by training from scratch.

- Fine-tune on domain data only when off-the-shelf models underperform. The MTEB (Massive Text Embedding Benchmark) leaderboard provides task-specific scores. If your domain is poorly represented in the training data of the top-ranked models, fine-tuning with a contrastive loss on domain-specific pairs is the usual next step.

- Store vectors in a dedicated index. FAISS (facebookresearch/faiss, 33k+ stars on GitHub) is the most widely used open-source approximate nearest-neighbour library and the right starting point before evaluating managed vector databases.

- Use hybrid retrieval in production. Combining dense vector search with sparse BM25 keyword retrieval improves precision by 15 to 30% across enterprise deployments. Reranking with a cross-encoder model reduces noise further.

- Monitor embedding drift. As your document corpus evolves, the distribution of queries and documents may shift away from the embedding model’s training distribution. Re-indexing on a defined schedule and tracking retrieval quality metrics are non-negotiable in production.
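The monitoring step can start with two simple signals, sketched below under stated assumptions: recall@k on a fixed, hand-labelled query set (the document ids are hypothetical), and the cosine distance between the mean embedding of last period’s queries and this period’s (synthetic data here). The `DRIFT_THRESHOLD` value is a hypothetical placeholder to calibrate against your own history; centroid shift is a coarse early warning, not a replacement for retrieval metrics.

```python
import numpy as np

def recall_at_k(ranked_ids, relevant_ids, k):
    """Fraction of a query's relevant documents that appear in the top-k results."""
    hits = len(set(ranked_ids[:k]) & set(relevant_ids))
    return hits / len(relevant_ids)

def centroid_drift(reference, current):
    """Cosine distance between the mean embeddings of two batches (0 means no shift)."""
    a, b = reference.mean(axis=0), current.mean(axis=0)
    return 1.0 - float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

# 1) Retrieval quality on a fixed, hand-labelled query set (hypothetical ids).
eval_set = [
    {"relevant": {"handbook_2024"}, "retrieved": ["benefits_faq", "handbook_2024", "org_chart"]},
    {"relevant": {"travel_policy"}, "retrieved": ["travel_policy", "handbook_2024", "it_guide"]},
]
for k in (1, 3):
    score = np.mean([recall_at_k(q["retrieved"], q["relevant"], k) for q in eval_set])
    print(f"recall@{k}: {score:.2f}")

# 2) Distribution shift in incoming query embeddings (synthetic data).
DRIFT_THRESHOLD = 0.05  # hypothetical; calibrate against your own history
rng = np.random.default_rng(3)
topic_a, topic_b = rng.normal(size=64), rng.normal(size=64)
last_month = topic_a + 0.5 * rng.normal(size=(500, 64))
this_month = topic_a + 0.5 * rng.normal(size=(500, 64))
after_launch = 0.5 * (topic_a + topic_b) + 0.5 * rng.normal(size=(500, 64))
for name, batch in [("this_month", this_month), ("after_launch", after_launch)]:
    drift = centroid_drift(last_month, batch)
    print(name, round(drift, 3), "re-evaluate" if drift > DRIFT_THRESHOLD else "ok")
```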

The Elastic / Accenture 2025 analysis confirms this directly: deploying a vector database and transforming enterprise data into embeddings is only the first step. The real challenge is optimizing search relevance and ensuring that AI retrieves the most contextually appropriate and high-value information.
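Hybrid retrieval is one concrete lever for search relevance. A minimal sketch, assuming Okapi BM25 for the sparse side (one common idf variant, with the usual k1 = 1.5 and b = 0.75) and Reciprocal Rank Fusion with k = 60 to merge rankings. RRF is one widely used fusion rule, not the only option, and the dense ranking here is a hypothetical output rather than a real model’s. The toy does not reproduce any precision figure.

```python
import math
from collections import Counter

def bm25_scores(query, docs, k1=1.5, b=0.75):
    """Okapi BM25 scores for one query against each document (whitespace tokens)."""
    tokenised = [d.lower().split() for d in docs]
    n_docs = len(tokenised)
    avgdl = sum(len(t) for t in tokenised) / n_docs
    df = Counter(term for toks in tokenised for term in set(toks))
    scores = []
    for toks in tokenised:
        tf = Counter(toks)
        score = 0.0
        for term in query.lower().split():
            if term not in tf:
                continue
            idf = math.log((n_docs - df[term] + 0.5) / (df[term] + 0.5) + 1)
            norm = tf[term] + k1 * (1 - b + b * len(toks) / avgdl)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores

def reciprocal_rank_fusion(rankings, k=60):
    """Merge ranked lists of doc ids: each list adds 1 / (k + rank) to a doc's score."""
    fused = Counter()
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            fused[doc_id] += 1.0 / (k + rank)
    return [doc_id for doc_id, _ in fused.most_common()]

docs = [
    "error code E1234 when syncing",
    "sync failures and how to fix them",
    "troubleshooting synchronisation problems",
    "holiday schedule for support staff",
]
bm25 = bm25_scores("E1234 sync error", docs)
sparse_ranking = sorted(range(len(docs)), key=lambda i: -bm25[i])
# Hypothetical ranking from a dense retriever, which matches doc 2 on meaning.
dense_ranking = [2, 0, 1, 3]
print(reciprocal_rank_fusion([sparse_ranking, dense_ranking]))  # [0, 2, 1, 3]
```

Doc 2 shares no keywords with the query, so BM25 alone ranks it below doc 1; fusing in the dense ranking lifts it to second place.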

FAQ: Word Embeddings in NLP

How do word embeddings work in NLP? Classic embedding models such as Word2Vec work by training a shallow neural network to predict a word from its neighbours, or its neighbours from the word. During training, the network adjusts internal weights so that words appearing in similar contexts end up with similar vectors. The result is a high-dimensional space where geometry encodes meaning: semantic relationships appear as consistent directional patterns.
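The weight adjustment can be sketched as one gradient step of skip-gram with negative sampling, the objective Word2Vec most commonly trains with. Negative words are passed in explicitly here, whereas real implementations draw them from a smoothed unigram distribution; the vocabulary size, dimensions, word ids, and large learning rate are hypothetical toy values.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def sgns_step(W_in, W_out, centre, context, negatives, lr=0.025):
    """One SGD update of skip-gram with negative sampling; returns the pre-update loss.
    W_in holds the word vectors kept after training; W_out holds context vectors.
    The observed pair is pushed towards a high score, each negative pair towards a low one."""
    ids = np.array([context] + list(negatives))
    labels = np.zeros(len(ids))
    labels[0] = 1.0
    v = W_in[centre].copy()
    u = W_out[ids]                     # fancy indexing returns a copy: (1 + n_neg, dim)
    scores = sigmoid(u @ v)
    grad = scores - labels             # derivative of the logistic loss w.r.t. each logit
    np.add.at(W_out, ids, -lr * np.outer(grad, v))  # add.at stays correct if ids repeat
    W_in[centre] -= lr * (grad @ u)
    return float(-np.log(scores[0]) - np.log(1.0 - scores[1:]).sum())

rng = np.random.default_rng(0)
vocab_size, dim = 50, 8
W_in = rng.normal(scale=0.1, size=(vocab_size, dim))
W_out = rng.normal(scale=0.1, size=(vocab_size, dim))

# Repeatedly train on one hypothetical pair: word 3 observed next to word 7.
losses = [sgns_step(W_in, W_out, centre=3, context=7, negatives=[12, 30, 41], lr=0.5)
          for _ in range(100)]
print(round(losses[0], 3), round(losses[-1], 3))  # the loss falls as the pair is learned
```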

What is the difference between Word2Vec and BERT? Word2Vec produces one static vector per word regardless of context. BERT produces a different vector for the same word depending on the sentence it appears in. Word2Vec is faster and lighter. BERT handles ambiguity, polysemy, and cross-lingual tasks that Word2Vec cannot. Most production search and RAG systems use a sentence transformer, which combines BERT-style contextual encoding with a pooling step optimized for sentence-level similarity.

How to implement word embeddings in Python? For static embeddings, install Gensim and train a Word2Vec model on your tokenized corpus in five lines of code, as shown above. For contextual embeddings, install sentence-transformers and call model.encode() on your documents. Store the resulting numpy arrays in FAISS for efficient retrieval. Both approaches require Python 3.8 or later.
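Beyond exact search, FAISS offers approximate indexes, and one family uses an inverted-file (IVF) layout: vectors are clustered, and a query scans only the few clusters whose centroids are nearest. The numpy sketch below illustrates that idea without using FAISS’s API; `n_lists` and `n_probe` are illustrative parameters, analogous to FAISS’s `nlist` and `nprobe`, and the data is random.

```python
import numpy as np

def build_ivf(X, n_lists=8, n_iter=10, seed=0):
    """Cluster vectors with a few k-means rounds; store each id in its nearest centroid's list."""
    rng = np.random.default_rng(seed)
    centroids = X[rng.choice(len(X), n_lists, replace=False)].copy()
    for _ in range(n_iter):
        assign = np.argmin(((X[:, None, :] - centroids[None]) ** 2).sum(-1), axis=1)
        for c in range(n_lists):
            members = X[assign == c]
            if len(members):
                centroids[c] = members.mean(axis=0)
    assign = np.argmin(((X[:, None, :] - centroids[None]) ** 2).sum(-1), axis=1)
    lists = [np.flatnonzero(assign == c) for c in range(n_lists)]
    return centroids, lists

def ivf_search(X, centroids, lists, query, k=5, n_probe=2):
    """Approximate search: scan only the n_probe lists with the closest centroids."""
    nearest_lists = np.argsort(((centroids - query) ** 2).sum(-1))[:n_probe]
    candidates = np.concatenate([lists[c] for c in nearest_lists])
    dists = ((X[candidates] - query) ** 2).sum(-1)
    return candidates[np.argsort(dists)[:k]]

def exact_search(X, query, k=5):
    return np.argsort(((X - query) ** 2).sum(-1))[:k]

rng = np.random.default_rng(1)
X = rng.normal(size=(2000, 16)).astype(np.float32)
query = rng.normal(size=16).astype(np.float32)
centroids, lists = build_ivf(X)
approx = ivf_search(X, centroids, lists, query, k=5, n_probe=2)
exact = exact_search(X, query, k=5)
print("recall@5 probing 2 of 8 lists:", len(set(approx.tolist()) & set(exact.tolist())) / 5)
```

Raising `n_probe` trades speed for recall; probing every list degenerates into exact search.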

Which word embedding model should I use for a RAG pipeline? Start with a sentence transformer such as all-MiniLM-L6-v2 or all-mpnet-base-v2. Both are available in the huggingface/sentence-transformers library. Evaluate your retrieval quality on a sample of representative queries before committing. If your domain is highly specialized (biomedical, legal, code), look at domain-specific fine-tuned models on the MTEB leaderboard before building your own.
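If you do build your own, the contrastive fine-tuning mentioned in the implementation steps typically trains on (query, relevant passage) pairs with in-batch negatives: each query should score its own passage higher than every other passage in the batch. sentence-transformers ships losses built on this idea (for example `MultipleNegativesRankingLoss`); the function below is a standalone numpy sketch of the objective, not that library’s code, and the scale factor of 20 and the random embeddings are assumptions for illustration.

```python
import numpy as np

def in_batch_contrastive_loss(anchors, positives, scale=20.0):
    """InfoNCE-style loss with in-batch negatives.
    anchors[i] and positives[i] form a matching pair; every other positives[j]
    in the batch serves as a negative for anchors[i]."""
    a = anchors / np.linalg.norm(anchors, axis=1, keepdims=True)
    p = positives / np.linalg.norm(positives, axis=1, keepdims=True)
    logits = scale * (a @ p.T)                      # (batch, batch) scaled cosine scores
    logits -= logits.max(axis=1, keepdims=True)     # numerical stability
    log_probs = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return float(-np.mean(np.diag(log_probs)))      # correct pairs sit on the diagonal

rng = np.random.default_rng(7)
queries = rng.normal(size=(8, 32))
matched = queries + 0.1 * rng.normal(size=(8, 32))  # hypothetical well-aligned pairs
shuffled = matched[::-1]                            # deliberately mismatched pairs

print(in_batch_contrastive_loss(queries, matched))   # low: pairs already align
print(in_batch_contrastive_loss(queries, shuffled))  # high: what training would push down
```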

What is the difference between word embeddings and sentence embeddings? Word embeddings represent individual words as vectors. Sentence embeddings represent entire sentences or passages as a single vector. Sentence embeddings are produced by passing word-level contextual representations through a pooling layer, yielding a fixed-size vector for any length of input. For retrieval and RAG, sentence embeddings are almost always what you need.
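The pooling layer can be sketched as a masked mean over token vectors, a common choice in sentence-transformers models (some models use the first, [CLS], token instead). The attention mask keeps padding tokens, added so sentences of different lengths fit one batch, from distorting the average. The encoder output below is a hypothetical toy array.

```python
import numpy as np

def mean_pool(token_embeddings, attention_mask):
    """Average token vectors into one sentence vector, ignoring padding positions.
    token_embeddings: (batch, seq_len, dim); attention_mask: (batch, seq_len) of 0/1."""
    mask = attention_mask[:, :, None].astype(token_embeddings.dtype)
    summed = (token_embeddings * mask).sum(axis=1)
    counts = np.clip(mask.sum(axis=1), 1e-9, None)   # avoid division by zero
    return summed / counts

# Hypothetical encoder output: 2 sentences padded to 4 tokens, 3 dimensions each.
tokens = np.array([
    [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0], [9.0, 9.0, 9.0]],  # 3 real tokens
    [[2.0, 2.0, 2.0], [4.0, 4.0, 4.0], [7.0, 7.0, 7.0], [7.0, 7.0, 7.0]],  # 2 real tokens
])
mask = np.array([[1, 1, 1, 0], [1, 1, 0, 0]])
sentence_vectors = mean_pool(tokens, mask)
print(sentence_vectors.shape)  # (2, 3): one fixed-size vector per sentence
```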

The Three Things That Actually Matter

Every NLP system that moves text to meaning does it through an embedding layer. Three insights should change how you approach that layer going forward.

First, embedding choice is architecture. The model you embed with sets the ceiling on retrieval quality, classification accuracy, and RAG fidelity. It is not a configuration option.

Second, static and contextual embeddings are complements, not competitors. Word2Vec is still the right tool for lightweight similarity on constrained budgets. Sentence Transformers are the right tool for semantic search and RAG retrieval. BERT-class models are the right tool for classification tasks that require contextual nuance. Know which problem you are solving before picking the tool.

Third, production quality is determined at the retrieval layer. Once your embedding model and index are in place, the biggest gains come from hybrid retrieval and reranking, not from upgrading the language model on top.

Which layer of your NLP stack would break first if your embedding model quality dropped by 20%? That answer tells you where to invest.