Definition: An LLM hallucination occurs when a large language model generates output that is factually incorrect, fabricated, or misleading, yet presents it with full confidence. Unlike simple model errors, hallucinations are architecturally systemic: they arise from probabilistic pattern-matching rather than verified reasoning. For production systems, hallucinations are not a corner case. They are a latent, continuous risk requiring deliberate detection, prevention, and correction at every layer of the stack.

When Your AI Lies Confidently: The Business Cost of Hallucination

LLM hallucination mitigation has moved from research discussion to board-level priority. Production data makes the reason clear: benchmarking studies show overall hallucination rates frequently exceed 15% across enterprise deployments, and industry reports estimate financial losses exceeding $250M annually from hallucination-related incidents in production systems.

The numbers only tell part of the story. A 2024 Stanford study found LLMs hallucinated on at least 75% of legal queries about court rulings. In healthcare, even best-in-class models still fabricate potentially harmful information on roughly 2.3% of medical queries. At that rate, a customer service system handling 100,000 daily interactions would produce 2,300 wrong answers every single day.

The deeper problem is how hallucinations fail. Unlike a 500 error or a null pointer exception, a hallucinated response looks identical to a correct one. It follows correct grammar, matches the expected format, and uses appropriate terminology. Users have no signal to distrust it.

“Hallucination is not a model bug you can patch. It is an architecture problem you must design around.”

What Actually Causes LLM Hallucinations (And Why Prompts Alone Won’t Fix It)

LLM hallucinations arise when a model’s training data, retrieval pipeline, or generation process fails to ground output in verified facts. The root causes fall into three categories: knowledge gaps in training data, poor retrieval quality in RAG systems, and overconfident decoding behaviour.

The first cause is training data gaps. LLMs are prediction engines, not knowledge stores. When a model encounters a query about a topic poorly represented in training, it does not pause to indicate uncertainty. It synthesises a plausible-sounding answer from adjacent patterns. This is expected and unavoidable in base models.

The second cause is retrieval failure in RAG pipelines. Many teams add RAG expecting it to solve hallucination automatically. It does not. If the retriever returns stale, conflicting, or semantically irrelevant documents, the model receives bad context and produces bad output. As B EYE’s 2025 enterprise analysis notes, most enterprise hallucinations trace back to data quality and retrieval problems, not model defects.

The third cause is overconfident decoding. Training objectives optimise for fluency and plausibility, not epistemic accuracy. Models learn to sound confident. Without explicit instructions to acknowledge uncertainty, they fill every gap with the most statistically likely next token, regardless of whether that token is true.

This is why prompt engineering alone cannot solve hallucination. Prompts operate at the surface level. The architecture underneath, specifically how knowledge is retrieved and how outputs are verified, determines reliability.

“Benchmarking studies show overall hallucination rates frequently exceeding 15%, even in well-tuned enterprise deployments.”

The Real-World Risk Surface: Where Hallucinations Hit Hardest

The highest-risk domains for LLM hallucinations are legal, financial, healthcare, and enterprise knowledge management, where fabricated outputs can trigger compliance failures, financial losses, or patient harm.

In legal applications, the exposure is asymmetric. A hallucinated citation looks authoritative. A lawyer who relies on it may face sanctions; a company that bases a contract on fabricated precedent faces liability. In finance, hallucinations in risk models contributed to documented losses in 2024. Regulatory fines for AI-enabled misinformation in financial advice are trending upward, with major incidents carrying penalties in the $200M range.

In enterprise knowledge management, the risk is subtler but widespread. When an internal AI assistant answers employee questions about policies, HR benefits, or compliance procedures, a confident wrong answer spreads instantly. Glean’s 2025 enterprise analysis documents how models synthesise plausible project details, revenue figures, and policy guidance that simply do not exist.

The industry response reflects the severity: 76% of enterprises now include human-in-the-loop processes to catch hallucinations before deployment, and 39% of AI-powered customer service bots were pulled back or reworked due to hallucination errors in 2024.

“76% of enterprises have added human-in-the-loop processes specifically because automated hallucination controls alone are not sufficient.”

Strategy 1: Retrieval-Augmented Generation – Grounding the Model in Verified Facts

RAG reduces hallucinations by injecting verified context at inference time, giving the model facts to cite rather than patterns to extrapolate. Structured RAG constrained to verified corpora lowers hallucination rates by 30–40% with minimal compute overhead. When implemented with hybrid retrieval, the reduction can reach 71%.

Basic RAG uses dense vector search to retrieve semantically similar documents. Production RAG goes further. A hybrid retrieval layer combines dense embeddings (vector search) with sparse retrieval (BM25 keyword matching) and a cross-encoder re-ranker that scores relevance on the combined result set. This matters because dense search misses exact-match queries and sparse search misses paraphrases; combining both captures what either misses alone.
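A minimal sketch of the hybrid idea follows, assuming a toy in-memory corpus. A from-scratch BM25 scorer supplies the sparse ranking, a hard-coded list stands in for the dense vector-search ranking, and the two are merged with Reciprocal Rank Fusion (RRF). RRF is a swapped-in fusion step chosen for brevity, not a technique the text prescribes, and the cross-encoder re-ranking stage is omitted. The function names, document ids, and the `k1`, `b`, and `k` defaults are illustrative assumptions.

```python
import math
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Lower-case whitespace tokenisation; a real system would use a proper analyser.
    return text.lower().split()


def bm25_rank(query: str, docs: dict[str, str], k1: float = 1.5, b: float = 0.75) -> list[str]:
    """Sparse retrieval: ids of docs matching at least one query term, best BM25 score first."""
    tokenized = {doc_id: tokenize(text) for doc_id, text in docs.items()}
    n_docs = len(tokenized)
    avg_len = sum(len(toks) for toks in tokenized.values()) / n_docs
    # Document frequency: how many docs contain each term.
    df = Counter(term for toks in tokenized.values() for term in set(toks))
    scores: dict[str, float] = {}
    for doc_id, toks in tokenized.items():
        tf = Counter(toks)
        score = 0.0
        for term in tokenize(query):
            if term not in tf:
                continue
            idf = math.log(1 + (n_docs - df[term] + 0.5) / (df[term] + 0.5))
            length_norm = k1 * (1 - b + b * len(toks) / avg_len)
            score += idf * tf[term] * (k1 + 1) / (tf[term] + length_norm)
        if score > 0:
            scores[doc_id] = score
    return sorted(scores, key=scores.get, reverse=True)


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked lists: each doc earns 1 / (k + rank) from every list it appears in."""
    fused: Counter = Counter()
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            fused[doc_id] += 1.0 / (k + rank)
    return [doc_id for doc_id, _ in fused.most_common()]


docs = {
    "policy-7": "refund policy error code E-4012 applies to annual plans",
    "faq-2": "how to get your money back after cancelling a subscription",
    "blog-9": "release notes for the mobile app",
}
sparse = bm25_rank("error code E-4012", docs)   # exact-match hits only
dense = ["faq-2", "policy-7", "blog-9"]          # hypothetical vector-search ranking
print(reciprocal_rank_fusion([sparse, dense]))   # ['policy-7', 'faq-2', 'blog-9']
```

The fused list puts `policy-7` first because both retrievers surface it, even though the dense ranking alone placed it second. In a full pipeline, a cross-encoder would then re-score this shortlist.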

Knowledge graph augmentation adds another reliability layer. Research from 2025 shows that graph-based retrieval (KRAGEN, GraphRAG) reduces hallucinations by 20–30% on complex, multi-hop queries by surfacing entity relationships the model could not infer from flat document retrieval alone.
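To make the multi-hop point concrete, here is a toy sketch. It assumes the knowledge graph is held in memory as (subject, relation, object) triples, walks up to two hops out from the entities named in the query, and returns the connecting triples as extra context. It illustrates graph-based context expansion in general. It is not the KRAGEN or GraphRAG algorithm, and the triples and entity names are hypothetical.

```python
from collections import deque

Triple = tuple[str, str, str]  # (subject, relation, object)


def expand_entities(triples: list[Triple], seeds: set[str], max_hops: int = 2) -> list[Triple]:
    """Breadth-first walk collecting every triple within max_hops of the seed entities."""
    # Index each triple under both endpoints so edges can be followed in either direction.
    adjacency: dict[str, list[Triple]] = {}
    for s, r, o in triples:
        adjacency.setdefault(s, []).append((s, r, o))
        adjacency.setdefault(o, []).append((s, r, o))

    seen = set(seeds)
    collected: list[Triple] = []
    frontier = deque((entity, 0) for entity in seeds)
    while frontier:
        entity, depth = frontier.popleft()
        if depth == max_hops:
            continue  # hop budget spent; do not follow edges further out
        for triple in adjacency.get(entity, []):
            if triple not in collected:
                collected.append(triple)
            s, _, o = triple
            neighbour = o if s == entity else s
            if neighbour not in seen:
                seen.add(neighbour)
                frontier.append((neighbour, depth + 1))
    return collected


def triples_to_context(triples: list[Triple]) -> str:
    # Render triples as short sentences the model can quote back.
    return "\n".join(f"{s} {r} {o}." for s, r, o in triples)


graph = [
    ("Acme Corp", "acquired", "Beta Labs"),
    ("Beta Labs", "developed", "Project Orion"),
    ("Project Orion", "is led by", "Dana Kim"),
    ("Gamma Inc", "partners with", "Acme Corp"),
]
# "Which project did the company Acme acquired develop?" needs two hops from Acme Corp.
print(triples_to_context(expand_entities(graph, {"Acme Corp"})))
```

A flat vector search for "Acme" would probably not surface the Beta Labs to Project Orion link, because that document never mentions Acme. The graph walk does surface it, and the hop limit keeps unrelated facts (here, who leads Project Orion) out of the prompt.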

Code Snippet 1: NeMo Guardrails – Self-Check Hallucination Rail

Source: NVIDIA/NeMo-Guardrails – nemoguardrails/library/hallucination/actions.py

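The configuration below attaches two self-check output rails to an LLMRails instance. Responses that fail either check are flagged before they reach the user, and enabling them takes only two additions to an existing config.yml file.

```yaml
# config.yml: add to the existing rails configuration
rails:
  output:
    flows:
      - self check facts          # verify claims against retrieved context
      - self check hallucination  # flag answers that disagree with extra sampled completions
```

Adding these two output flows intercepts generation results before they reach the API response layer. The self check facts rail asks the model whether its answer is supported by the retrieved documents. The self check hallucination rail does not need retrieved documents. Following a SelfCheckGPT-style approach, it samples additional completions for the same prompt and asks the model whether the original answer is consistent with them. Check the NeMo Guardrails documentation for your version: the rails also expect matching self_check_facts and self_check_hallucination prompts in prompts.yml, and Colang 1.0 configurations enable them per flow via $check_facts and $check_hallucination. When a check fails, the application can flag the response for human review or replace it with a graceful fallback.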

Strategy 2: Guardrails, Confidence Scoring, and Human-in-the-Loop Controls

Guardrails intercept model outputs before they reach users, applying fact-checking, self-consistency checks, and confidence thresholds to flag or block low-reliability responses. Tools like NVIDIA NeMo Guardrails enable these controls with minimal code changes and full LangChain compatibility.

In practice, teams building this typically find that confidence scoring is the highest-leverage addition after RAG. Assigning a reliability score to each output lets the system route edge cases to human review rather than serving them directly. The threshold calibration varies by domain: legal and medical applications may require 95%+ confidence before autonomous serving; internal knowledge assistants can tolerate lower thresholds.
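A minimal sketch of threshold-based routing follows. It assumes each response already carries a reliability score between 0 and 1 from an upstream evaluator, such as a groundedness scorer or a self-check rail. The domain names and the `internal_kb` and fallback values are hypothetical placeholders. The legal and medical entries mirror the 95% figure above. Real values should come from calibration on your own traffic.

```python
from dataclasses import dataclass

# Hypothetical thresholds; legal and medical mirror the 95%+ guidance above.
THRESHOLDS = {"legal": 0.95, "medical": 0.95, "internal_kb": 0.70}
DEFAULT_THRESHOLD = 0.90  # conservative fallback for domains not listed


@dataclass
class ScoredResponse:
    text: str
    domain: str
    confidence: float  # reliability score in [0, 1], assigned upstream


def route(response: ScoredResponse) -> str:
    """Serve directly only when the score clears the domain's threshold."""
    threshold = THRESHOLDS.get(response.domain, DEFAULT_THRESHOLD)
    return "serve" if response.confidence >= threshold else "human_review"


print(route(ScoredResponse("Clause 4.2 allows early termination.", "legal", 0.91)))            # human_review
print(route(ScoredResponse("Leave requests go through the HR portal.", "internal_kb", 0.91)))  # serve
```

The same 0.91 score is served in one domain and held for review in the other. That is the point of per-domain calibration.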

Self-consistency checking offers a complementary approach. The model generates multiple completions for the same query and compares them. High-variance responses signal low confidence. This technique adds computational overhead (typically 2–3x inference cost) but provides a strong signal for high-stakes queries.
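The self-consistency idea can be sketched as follows. It assumes a `generate` callable that returns one sampled completion per call. The stub below is hypothetical and stands in for an LLM called with non-zero temperature. Answers are normalised and compared by exact match, and the share of samples that agree with the majority answer becomes the confidence signal. Exact-match voting suits short factual answers. Free-text answers would need a semantic comparison instead, such as an NLI model or an LLM judge.

```python
import itertools
from collections import Counter
from typing import Callable


def normalise(answer: str) -> str:
    # Collapse case, surrounding whitespace and trailing full stops so trivial variants agree.
    return answer.strip().strip(".").lower()


def self_consistency(generate: Callable[[str], str], prompt: str, n: int = 3) -> tuple[str, float]:
    """Sample n completions; return the majority answer and the share of samples agreeing with it."""
    answers = [normalise(generate(prompt)) for _ in range(n)]
    majority, votes = Counter(answers).most_common(1)[0]
    return majority, votes / n


# Hypothetical stub: cycles through canned samples instead of calling a model.
samples = itertools.cycle(["Paris.", "paris", "Paris", "Lyon", "Paris"])
answer, agreement = self_consistency(lambda _prompt: next(samples), "Capital of France?", n=5)
print(answer, agreement)  # paris 0.8
```

Each extra sample costs roughly one more generation, which is where the 2–3x overhead comes from when only two or three samples are drawn. Answers whose agreement falls below a chosen cut-off can be routed to review in the same way as low confidence scores.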

Code Snippet 2: TruLens – Groundedness Evaluation with the RAG Triad

Source: truera/trulens — Metric API (v2.6, Feb 2026)

The snippet below implements the TruLens RAG Triad. It wraps a RAG application with three feedback functions: context relevance (did we retrieve the right documents?), groundedness (is the response supported by those documents?), and answer relevance (does the response address the question?). All three scores are logged to a dashboard for continuous monitoring.

Running these feedback functions on every production call gives an ongoing hallucination signal. When groundedness scores drop below a threshold on a rolling basis, it typically indicates retrieval quality degradation. These signals appear on a live dashboard before any user reports a problem.
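The rolling-threshold check can be sketched without TruLens itself. The sketch assumes each production call already yields the three RAG Triad scores in the 0 to 1 range. The `TriadRecord` type, the window size, and the 0.7 threshold are hypothetical choices, not TruLens defaults. In a real deployment the scores would come from the feedback functions, and the alert would feed your monitoring system.

```python
from collections import deque
from dataclasses import dataclass
from statistics import mean


@dataclass
class TriadRecord:
    context_relevance: float
    groundedness: float
    answer_relevance: float


class GroundednessMonitor:
    """Tracks a rolling mean of groundedness and flags sustained drops."""

    def __init__(self, window: int = 50, threshold: float = 0.7):
        self.scores: deque = deque(maxlen=window)  # keeps only the most recent `window` calls
        self.threshold = threshold

    def observe(self, record: TriadRecord) -> bool:
        """Add one call's scores; return True when the rolling mean falls below threshold."""
        self.scores.append(record.groundedness)
        # Wait for a full window so a single bad answer cannot trigger an alert.
        if len(self.scores) < self.scores.maxlen:
            return False
        return mean(self.scores) < self.threshold


monitor = GroundednessMonitor(window=3, threshold=0.7)
stream = [TriadRecord(0.9, 0.9, 0.9), TriadRecord(0.8, 0.8, 0.9),
          TriadRecord(0.4, 0.5, 0.8), TriadRecord(0.3, 0.4, 0.7)]
print([monitor.observe(r) for r in stream])  # [False, False, False, True]
```

Tracking context relevance over the same window helps separate the two causes. If it falls together with groundedness, retrieval is the likely culprit. If it holds steady while groundedness drops, look at generation.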

“RAG is not a silver bullet. Poor retrieval increases hallucinations instead of reducing them.”

Strategy 3: Continuous Evaluation – You Cannot Improve What You Don’t Measure

Continuous hallucination evaluation using the RAG Triad (context relevance, groundedness, answer relevance) gives teams a quantitative signal to track improvements and catch regressions before they reach production.

Monitoring should start on day one, not after an incident. Most teams instrument production too late. Research from the EMNLP 2024 Industry Track shows that Hallucination-Aware Tuning (HAT) using Direct Preference Optimisation, where corrected outputs from human review become preference data for fine-tuning, creates a compound improvement cycle. Each human correction makes the model measurably less likely to produce the same error again.
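The correction-to-preference step can be sketched as follows. It assumes a review queue that stores the prompt, the model's original answer, and the reviewer's corrected answer. The reviewer's version becomes the preferred ("chosen") response and the model's version the "rejected" one. The prompt/chosen/rejected field names follow the convention used by common DPO training libraries such as Hugging Face TRL. The `Correction` type and sample data are hypothetical, and this is not the HAT paper's own pipeline.

```python
import json
from dataclasses import dataclass


@dataclass
class Correction:
    prompt: str
    model_answer: str     # what the model originally produced
    reviewer_answer: str  # the human reviewer's corrected version


def to_preference_pairs(corrections: list[Correction]) -> list[dict[str, str]]:
    """Turn reviewed corrections into prompt/chosen/rejected preference records."""
    pairs = []
    for c in corrections:
        # Reviews that changed nothing carry no preference signal, so skip them.
        if c.model_answer.strip() == c.reviewer_answer.strip():
            continue
        pairs.append({"prompt": c.prompt, "chosen": c.reviewer_answer, "rejected": c.model_answer})
    return pairs


def to_jsonl(records: list[dict[str, str]]) -> str:
    # One JSON object per line (JSONL), ready to append to a training file.
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records)


reviews = [
    Correction("How long is parental leave?", "24 weeks, fully paid.", "16 weeks, fully paid."),
    Correction("When does the office open?", "At 9am.", "At 9am."),
]
pairs = to_preference_pairs(reviews)
print(to_jsonl(pairs))
```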

Drift detection completes the monitoring stack. IBM’s guidance on LLM observability highlights that answer drift, where model responses gradually deviate from expected output over time without a redeployment event, is a common but underdiagnosed production failure mode. Telemetry data and rolling hallucination rate baselines catch this automatically.
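One way to compare a rolling window against a baseline is a two-proportion z-test. The sketch assumes each response has been labelled as hallucinated or not, either by an automated evaluator or by reviewers. The test is a swapped-in statistical choice, not IBM's method. The counts are illustrative, and 1.645 is the one-sided cut-off for roughly 95% confidence.

```python
import math


def drift_z_score(base_errors: int, base_total: int, recent_errors: int, recent_total: int) -> float:
    """Two-proportion z-score: how far the recent hallucination rate sits above the baseline."""
    p_base = base_errors / base_total
    p_recent = recent_errors / recent_total
    # Pooled rate under the null hypothesis that nothing has changed.
    pooled = (base_errors + recent_errors) / (base_total + recent_total)
    se = math.sqrt(pooled * (1 - pooled) * (1 / base_total + 1 / recent_total))
    return (p_recent - p_base) / se if se > 0 else 0.0


def has_drifted(base_errors: int, base_total: int, recent_errors: int, recent_total: int,
                z_crit: float = 1.645) -> bool:
    # One-sided test: only an increase in hallucination rate raises an alert.
    return drift_z_score(base_errors, base_total, recent_errors, recent_total) > z_crit


# Baseline: 40 hallucinations in 2,000 labelled answers (2%).
print(has_drifted(40, 2000, 45, 1000))  # True: 4.5% this week is a real jump
print(has_drifted(40, 2000, 21, 1000))  # False: 2.1% is within normal noise
```

The normal approximation behind this test needs a reasonable number of errors in both windows. With very low traffic, widen the window before trusting the alert.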

Architecture: Four-Layer Hallucination Mitigation Stack

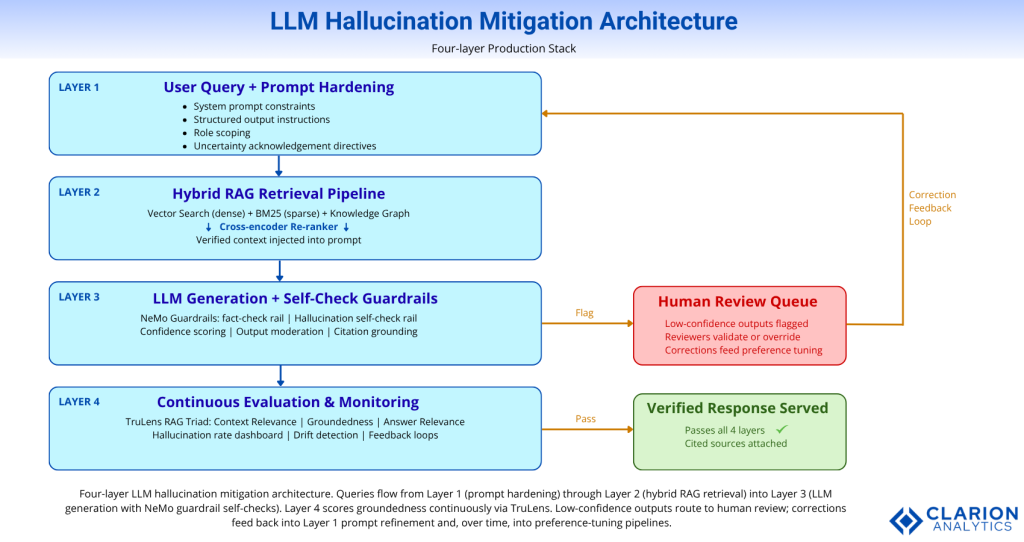

Figure: Four-layer LLM hallucination mitigation architecture. Queries flow from Layer 1 (prompt hardening) through Layer 2 (hybrid RAG retrieval) into Layer 3 (LLM generation with NeMo self-check rails). Layer 4 scores groundedness continuously via TruLens. Low-confidence outputs route to human review; corrections feed back into prompt refinement and fine-tuning pipelines.
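The control flow in the figure can be sketched as a small Python pipeline that treats each layer as an injectable function. Every component here is a hypothetical placeholder for the real services (hybrid RAG, NeMo rails, TruLens scoring): the prompt template, the retriever, generator and scorer stubs, the 0.7 threshold, and the review queue. The sketch only shows how a query moves through the layers and where low-confidence answers branch off to human review.

```python
from dataclasses import dataclass, field
from typing import Callable

FALLBACK = "I'm not certain about this; a specialist will follow up."


@dataclass
class MitigationPipeline:
    retrieve: Callable[[str], list[str]]      # Layer 2: hybrid RAG retrieval
    generate: Callable[[str], str]            # Layer 3: LLM call behind output rails
    score: Callable[[str, list[str]], float]  # Layer 4: groundedness in [0, 1]
    threshold: float = 0.7
    review_queue: list[dict] = field(default_factory=list)  # feeds prompt and fine-tuning fixes

    @staticmethod
    def harden(query: str, context: list[str]) -> str:
        # Layer 1: prompt hardening, restricting the model to the supplied context.
        joined = "\n".join(f"- {c}" for c in context)
        return ("Answer only from the context below. If it is not covered, say you don't know.\n"
                f"Context:\n{joined}\nQuestion: {query}")

    def answer(self, query: str) -> str:
        context = self.retrieve(query)
        draft = self.generate(self.harden(query, context))
        groundedness = self.score(draft, context)
        if groundedness < self.threshold:
            # Low confidence: hold the draft for a reviewer and serve a safe fallback.
            self.review_queue.append({"query": query, "draft": draft, "score": groundedness})
            return FALLBACK
        return draft


kb = ["Refunds are available within 30 days of purchase."]


def toy_score(draft: str, context: list[str]) -> float:
    # Toy scorer: 1.0 if the draft appears verbatim in the context, else 0.0.
    return 1.0 if draft in context else 0.0


good = MitigationPipeline(lambda q: kb, lambda p: kb[0], toy_score)
bad = MitigationPipeline(lambda q: kb, lambda p: "Refunds are available within 90 days.", toy_score)
print(good.answer("What is the refund window?"))  # the grounded answer
print(bad.answer("What is the refund window?"))   # the fallback
print(len(bad.review_queue))                      # 1
```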

Mitigation Approach Comparison

| Approach | Key Strength | Limitation | Best Used When |
|---|---|---|---|
| Prompt Engineering Only | Zero infrastructure cost; fast iteration | Addresses symptoms, not root causes; no memory of errors | MVP / demo stage only |
| RAG + Output Guardrails | Grounds output in verified facts; intercepts bad responses before users see them | Adds latency (5–300ms); requires clean, current knowledge base | Production apps with domain-specific accuracy requirements |
| RAG + HAT Fine-tuning + Monitoring | Embeds hallucination resistance into the model; 15–82% accuracy improvement achievable | Highest implementation complexity; requires labelled preference data | High-stakes domains: legal, healthcare, finance |
| Confidence Scoring + HITL Only | Catches what automated rails miss; builds a correction dataset over time | Expensive at scale; requires trained human reviewers | Regulated industries where liability requires human sign-off |

Implementation Playbook: Deploying a Multi-Layer Mitigation Stack

A production-grade hallucination mitigation stack combines hybrid RAG retrieval, output guardrails, confidence thresholds, and continuous evaluation. Teams should instrument all four layers before exposing LLM features to end users.

The right sequencing matters. Most teams try to build the monitoring layer last. Build it first. Without baseline hallucination rate data, you cannot measure the impact of any subsequent change. Instrument the RAG Triad from the first prototype, then use the data to prioritise whether retrieval quality, generation guardrails, or fine-tuning delivers the highest return on your team’s time.

For data pipeline hygiene, run deduplication and freshness checks before any document enters the vector store. Conflicting documents force the model to synthesise, and synthesis is where hallucinations originate. Vectara's hallucination leaderboard shows model-level hallucination rates ranging from 0.7% to nearly 30%. Because that benchmark gives every model the same source documents, the spread reflects differences between models. In production, stale or conflicting documents add errors on top of that baseline, which is why data quality deserves as much attention as model choice.
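A minimal ingestion gate might look like the sketch below. It assumes each document carries an `updated_at` timestamp. The gate drops exact duplicates after case and whitespace normalisation, using a SHA-256 content hash, and it rejects documents older than a freshness window. The 180-day window and the `Doc` type are hypothetical. Near-duplicate and contradiction detection would sit on top of this, for example MinHash or an embedding-similarity check, and is not shown.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class Doc:
    doc_id: str
    text: str
    updated_at: datetime


def content_hash(text: str) -> str:
    # Normalise case and whitespace so trivially reformatted copies hash identically.
    normalised = " ".join(text.lower().split())
    return hashlib.sha256(normalised.encode("utf-8")).hexdigest()


def ingestion_gate(docs: list[Doc], max_age: timedelta, now: datetime) -> list[Doc]:
    """Keep the newest copy of each distinct text and drop anything stale."""
    newest: dict[str, Doc] = {}
    for doc in docs:
        if now - doc.updated_at > max_age:
            continue  # stale: never let it reach the vector store
        key = content_hash(doc.text)
        if key not in newest or doc.updated_at > newest[key].updated_at:
            newest[key] = doc
    return list(newest.values())


now = datetime(2025, 6, 1, tzinfo=timezone.utc)
docs = [
    Doc("a", "Travel policy: economy class only.", now - timedelta(days=10)),
    Doc("b", "travel policy:  Economy class only.", now - timedelta(days=3)),       # duplicate of a
    Doc("c", "Travel policy: business class allowed.", now - timedelta(days=400)),  # stale, conflicting
]
kept = ingestion_gate(docs, max_age=timedelta(days=180), now=now)
print([d.doc_id for d in kept])  # ['b']
```

The stale document is also the one that contradicts the current policy. That is exactly the kind of conflict that would otherwise push the model to synthesise an answer.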

For guardrail threshold calibration, start with the confidence threshold at 70% and measure false positive rates over two weeks. High false positive rates indicate retrieval quality problems, not model overconfidence. Adjust the retrieval pipeline before tightening confidence thresholds further.
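Measuring flag quality during the two-week window can be sketched as follows. The sketch assumes a sample of responses that reviewers labelled as correct or incorrect, each with the confidence score the guardrail saw. It reports two rates per candidate threshold. The false positive rate is the share of correct answers that were flagged. The false discovery rate is the share of flags that turned out to be correct answers, which measures wasted reviewer effort. The `Reviewed` type and the sample data are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Reviewed:
    confidence: float  # score the guardrail saw
    correct: bool      # reviewer's verdict on the answer


def flag_rates(samples: list[Reviewed], threshold: float) -> tuple[float, float]:
    """Return (false positive rate, false discovery rate) when flagging confidence < threshold."""
    flagged = [s for s in samples if s.confidence < threshold]
    correct = [s for s in samples if s.correct]
    false_pos = [s for s in flagged if s.correct]
    fpr = len(false_pos) / len(correct) if correct else 0.0
    fdr = len(false_pos) / len(flagged) if flagged else 0.0
    return fpr, fdr


# Hypothetical reviewed traffic (tiny for illustration).
window = [Reviewed(0.95, True), Reviewed(0.65, True), Reviewed(0.60, False),
          Reviewed(0.75, True), Reviewed(0.40, False), Reviewed(0.68, True)]
for t in (0.6, 0.7, 0.8):
    print(t, flag_rates(window, t))
```

Sweeping a few thresholds around the 70% starting point shows how quickly false positives climb. A high rate at every setting points back at retrieval rather than at the threshold.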

NIST's Generative AI Profile for the AI Risk Management Framework (NIST AI 600-1, 2024) recommends structured red-teaming as part of the deployment process. Feed adversarial prompts designed to elicit hallucinations into the system under test. Record failure modes. Use those failures to expand the guardrail configuration and improve retrieval coverage.
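A red-team loop can be as simple as the sketch below. It assumes a hypothetical `system_under_test` callable and a set of adversarial prompts. Each prompt is paired with a check that detects the failure it targets, for example a fabricated summary of a case that does not exist. The categories, prompts, and string checks are illustrative, not taken from NIST guidance. Real checks are usually stricter or LLM-judged.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable


@dataclass
class Probe:
    category: str                      # failure mode this prompt targets
    prompt: str
    is_failure: Callable[[str], bool]  # True if the response shows the failure


def red_team(system_under_test: Callable[[str], str], probes: list[Probe]) -> dict[str, list[str]]:
    """Run every probe and group failing prompts by failure category."""
    failures: dict[str, list[str]] = defaultdict(list)
    for probe in probes:
        response = system_under_test(probe.prompt)
        if probe.is_failure(response):
            failures[probe.category].append(probe.prompt)
    return dict(failures)


probes = [
    # Nonexistent case: any answer that does not admit ignorance counts as fabrication.
    Probe("fabricated_citation", "Summarise Smith v. Imaginary Corp (2031).",
          lambda r: "not aware" not in r.lower()),
    # False premise: the system should push back rather than accept it.
    Probe("false_premise", "Why did our 2019 policy ban remote work?",
          lambda r: "no record" not in r.lower()),
]


def stub_system(prompt: str) -> str:
    # Hypothetical system: invents case law but handles the policy question honestly.
    if "v." in prompt:
        return "The court held that the contract was void."
    return "There is no record of that policy."


print(red_team(stub_system, probes))
```

The grouped output doubles as a to-do list. Each category maps to a guardrail rule or a retrieval gap to close before the next run.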

Frequently Asked Questions About LLM Hallucination

What is the hallucination rate of current LLMs in production?

Hallucination rates vary widely by model, domain, and query type. As of April 2025, Vectara’s leaderboard shows a range from 0.7% for Google Gemini-2.0-Flash-001 to 29.9% for smaller open-source models. For enterprise knowledge tasks, rates frequently exceed 15% across all model types. Legal domain queries show 6.4% hallucination rates even among top-tier models.

Does RAG fully eliminate LLM hallucinations?

RAG significantly reduces hallucinations but does not eliminate them. When implemented with hybrid retrieval and re-ranking, RAG cuts hallucination rates by 30–71% compared to baseline. However, poor retrieval quality, stale documents, or conflicting sources in the knowledge base can actually increase hallucination frequency. RAG addresses knowledge gaps; it does not address overconfident decoding or logic errors.

What is the best open-source tool for detecting LLM hallucinations?

TruLens (truera/trulens, ~6,000 GitHub stars, now maintained by Snowflake) is one of the most widely adopted open-source evaluation frameworks for production RAG systems. It implements the RAG Triad (context relevance, groundedness, answer relevance) as automated feedback functions. NVIDIA NeMo Guardrails complements TruLens by providing runtime interception, while Haystack from deepset provides the orchestration layer that ties both together in a production pipeline.

How do I choose between fine-tuning and RAG for reducing hallucinations?

Use RAG when the problem is missing or outdated knowledge. Use fine-tuning (specifically HAT with DPO, from EMNLP 2024) when the model consistently misinterprets or distorts information it has access to. In practice, the two are complementary: RAG grounds the model in facts; HAT fine-tuning teaches it to cite those facts accurately rather than embellish them. Start with RAG. Add HAT only when groundedness scores plateau despite strong retrieval quality.

What latency impact should I expect from adding hallucination guardrails?

Research published in May 2025 measured computational overhead for guardrail techniques across 15 application domains. Detection-based guardrails (NeMo self-check rails, TruLens feedback functions) add 5–300ms per request, depending on implementation complexity. Self-consistency checks that generate multiple completions add 2–3x inference cost. For latency-sensitive applications, run evaluations asynchronously and use synchronous guardrails only for high-risk query categories.
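The split between synchronous and asynchronous checks can be sketched with asyncio. The sketch assumes hypothetical `guardrail_check` and `evaluate` coroutines that stand in for a runtime rail and a groundedness scorer. The sleeps simulate their latency, and the "guaranteed" rule is a toy. Queries in high-risk categories wait for the guardrail verdict. All other queries are answered immediately while evaluation runs in the background.

```python
import asyncio

HIGH_RISK = {"legal", "medical", "finance"}  # hypothetical category list
background: set[asyncio.Task] = set()        # hold references so tasks are not garbage-collected
eval_log: list[str] = []


async def guardrail_check(answer: str) -> bool:
    await asyncio.sleep(0.05)                  # simulated rail latency
    return "guaranteed" not in answer.lower()  # toy rule: block overclaiming answers


async def evaluate(answer: str) -> None:
    await asyncio.sleep(0.05)                  # simulated groundedness scoring
    eval_log.append(answer)


async def respond(answer: str, category: str) -> str:
    if category in HIGH_RISK:
        # Synchronous path: the user waits for the guardrail verdict.
        if not await guardrail_check(answer):
            return "This answer needs review before it can be shared."
        return answer
    # Asynchronous path: reply now, score later for monitoring.
    task = asyncio.create_task(evaluate(answer))
    background.add(task)
    task.add_done_callback(background.discard)
    return answer


async def main() -> None:
    print(await respond("Returns are guaranteed to be 12%.", "finance"))
    print(await respond("The cafeteria opens at 8am.", "internal_kb"))
    await asyncio.gather(*background)  # drain background evaluations before exiting
    print(eval_log)


asyncio.run(main())
```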

Three Things You Need to Do Before Your Next Deployment

Three conclusions carry the most weight for teams moving to production.

First, treat hallucination as an architecture problem, not a model problem. The model is not the primary variable. Your retrieval quality, prompt constraints, and monitoring infrastructure determine your hallucination rate in production far more than model selection does.

Second, instrument before you launch. Deploy TruLens groundedness scoring from the prototype stage. Without a baseline, you cannot demonstrate improvement, and you cannot detect regressions. Teams that skip early instrumentation invariably discover their hallucination problem through a user complaint, not a metric alert.

Third, RAG and guardrails are not optional for high-stakes domains. A 1% hallucination rate at 10,000 daily queries is 100 wrong answers per day. In legal, financial, or healthcare contexts, even that rate is unacceptable without human review routing for flagged outputs.

The final question worth sitting with: if your organisation does not know its current hallucination rate in production today, what would it take to find out by next week?