DEFINITION A transformer is a neural network architecture that processes sequences in parallel using a mechanism called self-attention. Each token attends to other tokens in the input (in the decoder-only models behind most code assistants, to every preceding token), assigning weights, computed from learned projections, that capture contextual meaning. In LLM coding tools, this attention over token sequences lets the model track variable scope, function signatures, and multi-file dependencies, making transformers the engine behind virtually every modern code assistant.

Why Every Developer Needs to Understand the Transformer

Transformers are the architectural foundation of every major LLM coding tool in production today, and developers who understand how they work gain a decisive advantage in building, fine-tuning, and deploying AI-assisted software systems.

McKinsey (2023) found that developers using generative AI tools completed coding tasks up to twice as fast. That statistic gets cited everywhere. What gets cited far less is why those gains are uneven: teams that treat LLMs as black boxes plateau early, while teams that understand how transformers work inside LLM coding tools keep unlocking deeper gains through fine-tuning, prompt architecture, and model selection. Gartner (2025) predicts that by 2028, 90% of enterprise software engineers will use AI code assistants, up from under 14% in early 2024. The developers who reach senior positions in that world will be the ones who can reason about what happens inside the model.

“Developers who understand the transformer’s attention mechanism don’t just use AI coding tools — they control them.”

The Self-Attention Mechanism: What Actually Happens When You Autocomplete

Self-attention computes three vectors (Query, Key, and Value) for each token, scores each token's query against the keys of the tokens it can see, and uses the softmax-normalized scores to take a weighted sum of the values, producing a context vector that drives the next prediction.
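
The computation just described fits in a few lines of plain Python. This is a minimal single-head sketch, not the nanoGPT or PyTorch implementation: it assumes toy 2-dimensional embeddings, uses identity matrices as stand-ins for the learned projections W_q, W_k, and W_v, and applies a causal mask so each position sees only itself and earlier tokens, as decoder-only code models do.

```python
import math

def matmul(a, b):
    """Multiply matrices stored as lists of rows: (n x k) @ (k x m) -> (n x m)."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def causal_self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product attention with a causal mask.

    x is a list of T token embeddings; returns one context vector per position.
    """
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    d_k = len(k[0])
    out = []
    for t in range(len(x)):
        # Score query t against keys 0..t only; later positions are masked out.
        scores = [sum(qi * ki for qi, ki in zip(q[t], k[s])) / math.sqrt(d_k)
                  for s in range(t + 1)]
        weights = softmax(scores)
        # Context vector = attention-weighted sum of the visible value vectors.
        out.append([sum(w * v[s][j] for s, w in enumerate(weights))
                    for j in range(len(v[0]))])
    return out

x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # three toy token embeddings
identity = [[1.0, 0.0], [0.0, 1.0]]       # stand-in for learned W_q, W_k, W_v
ctx = causal_self_attention(x, identity, identity, identity)
print(ctx[0])  # -> [1.0, 0.0]: the first token can only attend to itself
```

Changing the embedding of a later token leaves the context vectors of earlier positions untouched, which is exactly the property autoregressive generation relies on.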

When you type def calculate_ and GitHub Copilot or any other transformer-based assistant completes the line, here is what runs: your text is tokenized into integer IDs, each ID is mapped to an embedding vector, and in every transformer block the model computes attention scores between each token and every token before it. The token “calculate” attends strongly to the preceding context; parameter type hints attend to the class constructor. This dependency graph, computed in parallel across all positions, is why transformers outperform recurrent models on code: code has long-range dependencies (a variable declared 50 lines ago matters now), and self-attention connects any two positions directly instead of passing information step by step through a compressed hidden state.

Vaswani et al. (2017, NeurIPS), the “Attention Is All You Need” paper, showed that a network built entirely on attention, with no recurrence and no convolutions, could set new state-of-the-art results on the WMT 2014 English-German and English-French translation benchmarks while training significantly faster on parallel hardware. Every code LLM you use today inherits that architecture, usually in its decoder-only form.

Source: karpathy/nanoGPT – model.py, CausalSelfAttention class

This ~20-line class is the complete attention step inside every block of an autoregressive code LLM. c_attn projects the input into Q, K, and V in a single matrix multiply. scaled_dot_product_attention applies the causal mask so that no position can see future tokens, which is essential for autoregressive generation. c_proj merges the multi-head outputs back into a single representation. Understanding this loop lets you reason about why n_head affects quality, why context length costs memory (the KV cache grows linearly with token count, while attention compute over a full prompt grows quadratically), and what FlashAttention optimises.
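
The claim that the KV cache grows linearly with token count is easy to check with back-of-the-envelope arithmetic. The helper below is a hypothetical sketch: it assumes a 7B-class shape (32 layers, 32 KV heads, head dimension 128) stored in 16-bit precision, and it ignores allocator overhead and activations.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, seq_len, batch_size=1, bytes_per_elem=2):
    """Estimate KV-cache size: one K and one V vector per layer, per KV head, per cached token."""
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * batch_size * bytes_per_elem

# Assumed 7B-class shape in fp16/bf16 (2 bytes per element).
for tokens in (1024, 4096, 16384):
    gib = kv_cache_bytes(n_layers=32, n_kv_heads=32, head_dim=128, seq_len=tokens) / 2**30
    print(f"{tokens:>6} tokens -> {gib:.2f} GiB per sequence")
```

Under these assumptions each token costs 0.5 MiB of cache, so 4,096 tokens need 2 GiB and 16,384 tokens need 8 GiB per sequence. Models that use grouped-query attention store fewer KV heads than query heads, which is why n_kv_heads is a separate parameter here.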

Decoder-Only vs. Encoder-Decoder: Choosing the Right Architecture for Code

Decoder-only transformers (GPT-style) excel at code completion and generation because they predict the next token autoregressively; encoder-decoder models suit tasks like code translation or test generation that require understanding an entire input before producing an output.
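
In code, the difference between the two families comes down largely to the attention mask. The hypothetical helper below (not a library API) builds the mask as a boolean matrix: an encoder lets every token see the whole input, while a decoder-only model hides future positions. The decoder half of an encoder-decoder model also uses the causal pattern over its own output, plus cross-attention to the encoder's representations.

```python
def attention_mask(seq_len, causal):
    """Return a seq_len x seq_len mask where mask[i][j] is True if position i may attend to j."""
    return [[(j <= i) if causal else True for j in range(seq_len)] for i in range(seq_len)]

encoder_mask = attention_mask(4, causal=False)  # encoder: every token sees the whole input
decoder_mask = attention_mask(4, causal=True)   # decoder-only: no peeking at future tokens

for row in decoder_mask:
    print("".join("x" if allowed else "." for allowed in row))
```

The printed decoder mask is lower-triangular: row i has i + 1 visible positions, which is what allows a decoder-only model to generate one token at a time.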

As Sebastian Raschka’s 2025 analysis of the state of LLMs notes, state-of-the-art models still use the decoder-only transformer as their core; the leading innovations are efficiency modifications to the attention mechanism rather than departures from the fundamental architecture. The variant you select determines your model’s cost, latency, and task fit.

| Architecture | Key Strength | Best Used When |
|---|---|---|
| Decoder-only (GPT, LLaMA, DeepSeek) | Fast autoregressive generation; caching keys and values of earlier tokens avoids recomputing them | Building inline code assistants, copilots, or chat-based coding tools |
| Encoder-Decoder (T5, CodeT5) | Bidirectional input understanding before generating output | Code translation, automated test generation, documentation synthesis |
| Mixture-of-Experts (DeepSeek-V3, Mixtral; both decoder-only) | Scales parameters without proportional compute cost; DeepSeek-V3 activates only ~37B of its 671B parameters per token | High-throughput production systems with diverse code tasks and cost constraints |
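
The MoE row can be made concrete with a toy router. This sketch assumes Mixtral-style gating (a softmax over the top-k router scores) and uses simple scaling functions as stand-ins for the expert feed-forward networks; DeepSeek-V3's router differs in its details. Only the selected experts execute, which is why active parameters per token (for DeepSeek-V3, about 37B of 671B, or roughly 5.5%) rather than total parameters drive compute cost.

```python
import math

def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def moe_forward(x, router_logits, experts, top_k=2):
    """Route one token to its top_k experts and mix their outputs.

    router_logits: one score per expert (produced by a learned router in a real model)
    experts: callables mapping a vector to a vector
    """
    # Indices of the top_k highest-scoring experts.
    chosen = sorted(range(len(experts)), key=lambda i: router_logits[i], reverse=True)[:top_k]
    # Gate weights are normalised over the chosen experts only.
    gates = softmax([router_logits[i] for i in chosen])
    out = [0.0] * len(x)
    for gate, i in zip(gates, chosen):
        y = experts[i](x)  # only the selected experts run; the others stay idle
        out = [o + gate * yj for o, yj in zip(out, y)]
    return out, chosen

# Four toy "experts" that just scale their input (hypothetical stand-ins for FFNs).
experts = [lambda v, s=s: [s * e for e in v] for s in (1.0, 2.0, 3.0, 4.0)]
router_logits = [0.1, 2.0, 0.3, 1.0]  # hypothetical router scores for one token
out, chosen = moe_forward([1.0], router_logits, experts, top_k=2)
print(chosen, out)  # experts 1 and 3 run; experts 0 and 2 are skipped
```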

“Choosing between decoder-only and MoE is not an architecture debate; it is a business decision about latency, cost, and task diversity.”

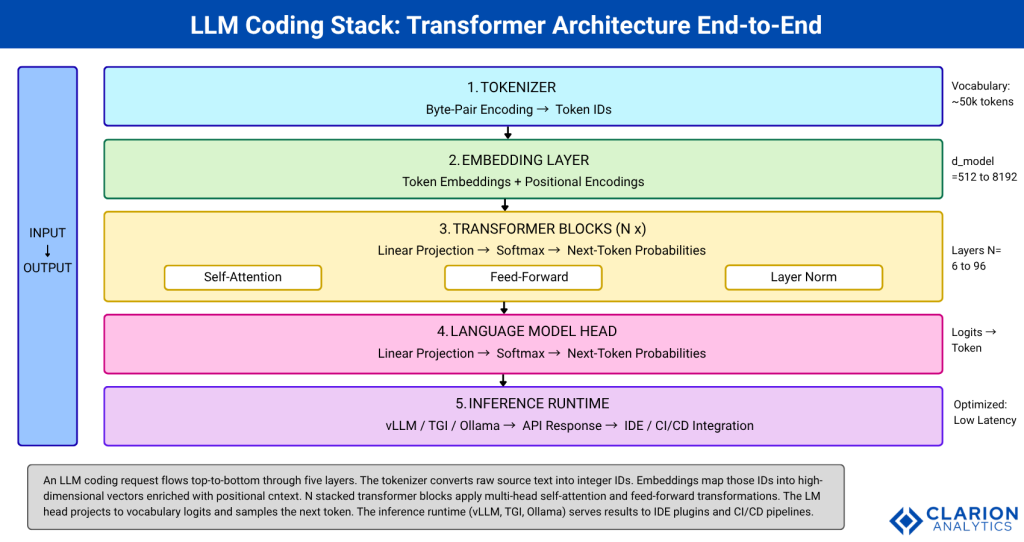

The LLM Coding Stack: From Tokenizer to Inference

A production LLM coding stack has five layers: tokenizer, embedding, transformer blocks, language-model head, and inference runtime. Developers who can reason about each layer can diagnose latency, cost, and accuracy problems that a black-box API cannot explain.

Teams building such a stack typically find that latency problems live in the inference runtime (an under-provisioned KV cache, the wrong batching strategy), quality problems live in the tokenizer and context-window configuration, and cost problems live in the choice of model size and of MoE versus dense architecture. None of those diagnoses is available to a team that only sees the API.
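
To see why the tokenizer is a quality lever, consider how byte-pair encoding (BPE) builds its vocabulary: it repeatedly merges the most frequent adjacent pair of symbols. The toy learner below is a sketch under simplifying assumptions (characters instead of bytes, whitespace-split words, no pre-tokenization rules), so it is not how AutoTokenizer or any production tokenizer is trained. On a small corpus dominated by self., the first merges rebuild the identifier self and then self., showing how frequent code patterns become single tokens that consume less context.

```python
from collections import Counter

def most_frequent_pair(words):
    """Count adjacent symbol pairs across {symbol_tuple: frequency}; return the top pair."""
    pairs = Counter()
    for symbols, freq in words.items():
        for a, b in zip(symbols, symbols[1:]):
            pairs[(a, b)] += freq
    return pairs.most_common(1)[0][0] if pairs else None

def merge_pair(words, pair):
    """Replace every occurrence of `pair` with one merged symbol."""
    merged = {}
    for symbols, freq in words.items():
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        key = tuple(out)
        merged[key] = merged.get(key, 0) + freq
    return merged

def learn_bpe(corpus, num_merges):
    """Start from single characters and apply num_merges greedy merges."""
    words = Counter(tuple(w) for w in corpus.split())
    merges = []
    for _ in range(num_merges):
        pair = most_frequent_pair(words)
        if pair is None:
            break
        merges.append(pair)
        words = merge_pair(words, pair)
    return merges, words

merges, words = learn_bpe("self self self.name self.value", num_merges=4)
print(merges)
```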

Source: huggingface/transformers – Standard AutoModel API

Three imports and one generate call: this is the full transformer stack in production. AutoTokenizer loads the model's own tokenizer (a BPE variant for most code models). AutoModelForCausalLM loads the decoder-only transformer with all of its attention layers. torch_dtype=torch.bfloat16 halves weight memory relative to float32, which is critical for fitting a 6.7B model on a single 24 GB A10 GPU. temperature=0.2 reduces sampling entropy for code, where correctness matters more than variety (it only takes effect when sampling is enabled with do_sample=True). This snippet is a practical starting point for a custom coding assistant.
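
Two numbers in that paragraph can be checked directly. The sketch below computes weight memory for a 6.7B-parameter model at 4 and 2 bytes per parameter, and shows how dividing logits by a temperature of 0.2 sharpens the next-token distribution. The logits are hypothetical, and the memory figure covers weights only (no KV cache, activations, or framework overhead).

```python
import math

def weight_memory_gb(n_params, bytes_per_param):
    """Memory for model weights alone, in decimal gigabytes."""
    return n_params * bytes_per_param / 1e9

def softmax_with_temperature(logits, temperature):
    """Divide logits by the temperature, then normalise into probabilities."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def entropy_bits(probs):
    """Shannon entropy of a distribution, in bits."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

print(f"float32: {weight_memory_gb(6.7e9, 4):.1f} GB")   # 4 bytes per parameter
print(f"bfloat16: {weight_memory_gb(6.7e9, 2):.1f} GB")  # 2 bytes per parameter

logits = [2.0, 1.5, 0.5]  # hypothetical scores for three candidate next tokens
for t in (1.0, 0.2):
    probs = softmax_with_temperature(logits, t)
    print(f"T={t}: top-token p={probs[0]:.3f}, entropy={entropy_bits(probs):.3f} bits")
```

With these inputs, float32 weights need about 26.8 GB and bfloat16 weights about 13.4 GB, so only the latter fits in an A10's 24 GB; at temperature 0.2 the top candidate's probability rises and the entropy of the distribution drops.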

Fine-Tuning and RAG: Adapting Transformers to Your Codebase

Fine-tuning with LoRA injects trainable low-rank matrices into the transformer’s attention layers, updating less than 1% of parameters while capturing domain-specific coding patterns from your own repositories.
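
The "less than 1%" figure follows from the shapes involved. In this sketch, which assumes a square 4096 x 4096 projection and rank 8 (illustrative choices, not values from any specific model), LoRA trains A (rank x d_in) and B (d_out x rank) and adds (alpha / rank) * B A x to the frozen output W x. B starts at zero, as in the original LoRA paper, so the adapted model initially matches the base model; merge folds the adapter into W for deployment.

```python
def lora_param_fraction(d_in, d_out, rank):
    """Trainable LoRA parameters (A: rank x d_in, B: d_out x rank) relative to the frozen W."""
    return rank * (d_in + d_out) / (d_in * d_out)

def matvec(m, v):
    return [sum(a * b for a, b in zip(row, v)) for row in m]

def lora_forward(w, a, b, x, alpha, rank):
    """y = W x + (alpha / rank) * B (A x); W stays frozen, only A and B are trained."""
    base = matvec(w, x)
    update = matvec(b, matvec(a, x))
    scale = alpha / rank
    return [y0 + scale * u for y0, u in zip(base, update)]

def merge(w, a, b, alpha, rank):
    """Fold the adapter into the base weight: W' = W + (alpha / rank) * B A."""
    scale = alpha / rank
    ba = [[sum(b[i][k] * a[k][j] for k in range(rank)) for j in range(len(a[0]))]
          for i in range(len(b))]
    return [[w[i][j] + scale * ba[i][j] for j in range(len(w[0]))] for i in range(len(w))]

print(f"{lora_param_fraction(4096, 4096, 8):.2%}")  # -> 0.39%

w = [[1.0, 0.0], [0.0, 1.0]]  # frozen 2x2 "pretrained" weight (toy values)
a = [[1.0, 0.5]]              # rank-1 down-projection (trainable)
b = [[0.0], [0.0]]            # up-projection, zero-initialised
print(lora_forward(w, a, b, [2.0, 4.0], alpha=1.0, rank=1))  # -> [2.0, 4.0]
```

Because the merged weight has the same shape as the original, a merged adapter adds no extra matrix multiplies at inference time.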

Huang et al. (2024) survey the architectural upgrades that make transformers practical for long-context code: extended positional encodings such as RoPE, sparse attention patterns, and hybrid SSM layers that handle repository-scale inputs. For most engineering teams, two adaptation strategies cover the bulk of use cases. First, LoRA (Low-Rank Adaptation) trains only small update matrices injected alongside the Q and V projections, leaving the base weights frozen; depending on dataset size, a 7B model can often be fine-tuned on a team’s private codebase overnight on one A100 GPU. Second, RAG (Retrieval-Augmented Generation) adds relevant code context (function signatures, docstrings, dependency graphs) to the prompt before each inference call, without touching model weights. RAG is preferable when the codebase changes frequently; LoRA is preferable when domain-specific syntax and style consistency matter most.
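
The RAG path can be sketched as: retrieve the snippets most related to the request, then place them ahead of the task in the prompt. Production systems typically rank with embeddings or code-aware indexes; the identifier-overlap scoring here, and the names retrieve and build_prompt, are hypothetical simplifications chosen to keep the example self-contained.

```python
import re

def identifiers(text):
    """Lower-cased identifier-like words; a crude stand-in for embedding-based retrieval."""
    return set(re.findall(r"[a-z_][a-z0-9_]*", text.lower()))

def retrieve(query, snippets, k=2):
    """Return the k snippets sharing the most identifiers with the query."""
    q = identifiers(query)
    return sorted(snippets, key=lambda s: len(q & identifiers(s)), reverse=True)[:k]

def build_prompt(query, snippets, k=2):
    """Place retrieved repository context ahead of the task; model weights are untouched."""
    context = "\n\n".join(retrieve(query, snippets, k))
    return f"# Relevant code from the repository:\n{context}\n\n# Task:\n{query}\n"

snippets = [
    "def get_user(user_id):\n    return db.fetch(user_id)",
    "def calculate_invoice_total(items):\n    return sum(i.price * i.quantity for i in items)",
    "class InvoiceItem:\n    price: float\n    quantity: int",
]
query = "Add a discount parameter to calculate_invoice_total"
print(build_prompt(query, snippets, k=1))
```

Because retrieval runs at request time, re-indexing the repository is enough to keep answers current, which is why RAG suits fast-changing codebases.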

“LoRA lets a team fine-tune a 7B-parameter model on its internal codebase overnight on a single GPU. That is not a research curiosity; it is a production technique.”

McKinsey (2024) notes that gen-AI high performers are far more likely than other organizations to customise models through significant fine-tuning or proprietary training instead of relying on off-the-shelf models. That gap between high performers and average adopters mirrors the divide between teams that fine-tune with LoRA and teams that only call a raw API.

Real-World Use Cases: Where Transformers Change the SDLC

Transformers drive measurable productivity gains across five SDLC stages: inline completion, docstring generation, code review assistance, automated testing, and legacy code migration, with McKinsey (2023) reporting up to 2x faster task completion in controlled developer studies.

Dong et al. (2025, arXiv) define the new category of LLM-based code generation agents by three properties: autonomy over the full task workflow, expanded scope across the entire SDLC, and a shift of research emphasis toward practical engineering challenges like system reliability and tool integration. This means the transformer is no longer just an autocomplete engine. In practice, teams building code agents typically find that the biggest gains come not from the model itself but from how the agent orchestrates tool calls: running linters, executing tests, fetching relevant files, and looping on the output until tests pass.
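
The orchestration pattern described above (generate, run tests, feed failures back, repeat) reduces to a small control loop. In this sketch, fake_generate is a hypothetical stand-in for a model call and run_tests executes the candidate with exec; a real agent would call an LLM and run tests in a sandbox, since executing generated code directly is unsafe.

```python
from typing import Callable, Optional, Tuple

def agent_loop(
    generate: Callable[[str, Optional[str]], str],
    run_tests: Callable[[str], Optional[str]],
    task: str,
    max_iterations: int = 3,
) -> Tuple[str, int, bool]:
    """Generate code, test it, and feed failures back until tests pass or the budget runs out."""
    feedback: Optional[str] = None
    code = ""
    for attempt in range(1, max_iterations + 1):
        code = generate(task, feedback)   # model call in a real agent
        feedback = run_tests(code)        # None means every test passed
        if feedback is None:
            return code, attempt, True
    return code, max_iterations, False

def fake_generate(task: str, feedback: Optional[str]) -> str:
    """Hypothetical model stand-in: the first draft is buggy, the retry 'uses' the feedback."""
    if feedback is None:
        return "def add(a, b):\n    return a - b\n"
    return "def add(a, b):\n    return a + b\n"

def run_tests(code: str) -> Optional[str]:
    """Execute the candidate and check one behaviour; return an error message on failure."""
    namespace: dict = {}
    exec(code, namespace)  # unsafe outside a sandbox; acceptable only for this toy
    result = namespace["add"](2, 3)
    if result != 5:
        return f"add(2, 3) returned {result}, expected 5"
    return None

code, attempts, passed = agent_loop(fake_generate, run_tests, "Implement add(a, b)")
print(attempts, passed)  # -> 2 True
```

The iteration budget matters in practice: without max_iterations, a model that never satisfies the tests would loop indefinitely.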

Gartner (2024) reports that 80% of engineering roles will require upskilling to work alongside these agentic systems through 2027. The implication for CTOs is structural: teams that invest now in understanding transformer architecture (attention tuning, context-window management, fine-tuning pipelines) will absorb that transition. Teams that do not will be playing catch-up against a moving target.

“The teams winning with LLM coding tools are not the ones with the biggest models; they are the ones who aligned the architecture to the specific SDLC task.”

Frequently Asked Questions

What is self-attention in simple terms for developers? Self-attention is the mechanism that lets each token in your prompt “look at” other tokens in parallel (in a code-completion model, every earlier token). For a function definition, it means the model weighs the parameter types, return annotations, and class context together rather than one at a time. This parallel global view is why transformers capture code structure better than earlier RNN-based models.

How is a decoder-only transformer different from a full transformer? The original transformer (Vaswani et al., 2017) has an encoder that reads the full input and a decoder that generates the output. Decoder-only models like GPT and LLaMA drop the encoder and keep only a decoder stack with a causal mask and no cross-attention. This makes them simpler to train and scale on plain next-token prediction, which is why nearly every major code-completion LLM uses this design.

Can I fine-tune an LLM on my company’s private codebase? Yes. Using LoRA or QLoRA, you can fine-tune a 7B–13B parameter model on a private codebase with a single enterprise GPU (A100 or H100) in hours to days, depending on dataset size. The base model weights stay frozen; only small adapter matrices are trained. When training runs on your own hardware, no data leaves your infrastructure, and the adapter can be merged into the base model before deployment.

What is Mixture-of-Experts and when should a CTO consider it? MoE is an architecture where the model contains many “expert” sub-networks but activates only a small subset for each token. DeepSeek-V3 has 671B total parameters but activates roughly 37B per token. The result is near-large-model quality at roughly medium-model compute per token, although every expert's weights must still be held in memory for serving. Consider MoE when you need a single model to handle diverse code tasks (Python, SQL, YAML, Terraform) at high throughput with controlled serving costs.

How do transformers handle code that spans multiple files? Current transformers handle multi-file code through extended context windows (some models now support 128K–1M tokens) and retrieval-augmented generation. RAG retrieves relevant files, functions, or imports and injects them into the context window before inference. The attention mechanism then reasons across all injected content at once, but quality degrades when the context exceeds the model’s effective context length, which is often shorter than its nominal one.
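
Because quality degrades beyond the effective context length, retrieved content usually has to be trimmed to a budget before inference. This greedy packer is a hypothetical sketch: it assumes the retriever supplies relevance scores, approximates token counts by splitting on whitespace (a real system would use the model's tokenizer), and uses a budget you would set below the nominal window.

```python
def approx_token_count(text):
    """Crude stand-in for a real tokenizer: count whitespace-separated pieces."""
    return len(text.split())

def pack_context(chunks, budget_tokens, count_tokens=approx_token_count):
    """Greedily keep the highest-scoring chunks that fit within budget_tokens.

    chunks: list of (score, text) pairs, e.g. from a retriever.
    A chunk that does not fit is skipped, and smaller lower-ranked chunks may still be added.
    """
    selected, used = [], 0
    for score, text in sorted(chunks, key=lambda c: c[0], reverse=True):
        cost = count_tokens(text)
        if used + cost <= budget_tokens:
            selected.append(text)
            used += cost
    return selected, used

chunks = [
    (0.92, "def load_config(path): return yaml.safe_load(open(path))"),
    (0.81, "class Settings: timeout = 30; retries = 3; region = 'eu-west-1'"),
    (0.40, "import yaml"),
]
selected, used = pack_context(chunks, budget_tokens=8)
print(used, selected)
```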

Conclusion: Three Insights That Should Change How You Build

Three things are now clear. First, the transformer’s self-attention mechanism is not a magic box; it is an auditable, parameterisable system that developers can reason about, configure, and extend. Second, architecture choice (decoder-only, encoder-decoder, MoE) is a product decision with direct consequences for latency, cost, and task fit, not something to delegate to a machine learning team at the end of a project. Third, the move from API consumer to transformer practitioner, through LoRA fine-tuning, context-window management, and inference optimisation, is where the real competitive advantage lives.

Gartner (2025) projects that 90% of enterprise engineers will use AI code assistants by 2028. The question is not whether your team will use transformers. The question is whether they will use them knowingly.

“The transformer architecture is not a detail to leave to the data science team; it is the infrastructure decision that determines whether your AI coding tool scales or stalls.”