Definition: Attention Mechanism: In a transformer-based LLM, the attention mechanism is the computational process by which each token dynamically weights the other tokens in the input sequence (in decoder-only LLMs, itself and every preceding token) to build a context-aware representation. Formally, it computes Attention(Q, K, V) = softmax(QKᵀ / √d_k) · V, where Q, K, and V are learned linear projections of the input embeddings and d_k is the key dimension. Stacked with feed-forward layers, this operation underpins language understanding, long-range reasoning, and coherent generation across all modern LLMs.

Why Attention Is the Brain of Every Modern LLM

The attention mechanism in LLMs is the single most consequential engineering decision in any transformer stack. Get it wrong and your model is slow, memory-starved, or incapable of reasoning across long contexts.

According to McKinsey’s 2024 State of AI survey, 71 percent of organizations now regularly use generative AI in at least one business function, up from 65 percent just six months earlier. That velocity of adoption puts extreme pressure on engineering teams to ship LLM-powered features that are both capable and cost-efficient.

The bottleneck almost always lives in the attention layer. Self-attention has quadratic time and memory complexity with respect to sequence length. At 4,096 tokens, that cost is manageable. At 128,000 tokens, now a standard context window in production models, it becomes the dominant factor in both compute budget and latency.

“The bottleneck in almost every production LLM is not parameters, it is the cost of attending to context.”

According to Gartner (2025), 45 percent of organizations with high AI maturity keep their initiatives in production for three years or more. That means the architectural choices you make today, including which attention variant you deploy, must survive multiple model generations.

How Self-Attention Actually Works

Every token decides how much it cares about every other token. The attention score is the weight that encodes that decision.

The mechanism starts with three learned weight matrices: W_Q (Query), W_K (Key), and W_V (Value). For an input sequence X, the model computes Q = XW_Q, K = XW_K, V = XW_V. The attention weight matrix A = softmax(QKᵀ / √d_k) holds one row of non-negative weights summing to one for each token. The output A · V then re-represents each token as a weighted combination of all value vectors in the sequence.
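The projections and the weighting can be sketched in NumPy. This is a minimal single-head illustration in which random matrices stand in for learned weights (the sizes are arbitrary assumptions). It shows the shapes involved and that each row of A is a probability distribution over the sequence.

```python
import numpy as np

def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    # Subtract the row max for numerical stability; the result is unchanged.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(X, W_Q, W_K, W_V):
    """Single-head scaled dot-product self-attention (no causal mask)."""
    Q, K, V = X @ W_Q, X @ W_K, X @ W_V      # (n, d_k), (n, d_k), (n, d_v)
    d_k = K.shape[-1]
    A = softmax(Q @ K.T / np.sqrt(d_k))      # (n, n) attention weights
    return A @ V, A                          # (n, d_v) outputs and the weights

rng = np.random.default_rng(0)
n, d_model, d_k = 5, 16, 8
X = rng.standard_normal((n, d_model))
W_Q, W_K, W_V = (rng.standard_normal((d_model, d_k)) for _ in range(3))
out, A = self_attention(X, W_Q, W_K, W_V)
print(out.shape, A.shape)  # (5, 8) (5, 5)
```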

The division by √d_k, the square root of the key dimension, prevents the softmax from saturating in high-dimensional spaces. Without it, dot products grow large, softmax outputs collapse to near-one-hot distributions, and gradients vanish during training.
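A quick numerical check of this argument, assuming query and key components drawn independently from a standard normal distribution (an idealized stand-in for trained activations): the spread of raw dot products grows like √d_k, and dividing by √d_k pulls the softmax back from a near-one-hot distribution.

```python
import numpy as np

def softmax(x):
    x = x - x.max(axis=-1, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=-1, keepdims=True)

rng = np.random.default_rng(0)
d_k, n_keys = 512, 64

# With unit-variance components, q·k has variance d_k, so its std grows like sqrt(d_k).
q = rng.standard_normal((10_000, d_k))
k = rng.standard_normal((10_000, d_k))
dots = np.einsum("ij,ij->i", q, k)
print(f"std of q·k: {dots.std():.1f}  (sqrt(d_k) = {np.sqrt(d_k):.1f})")

# One query against 64 keys: the largest softmax weight with and without scaling.
query = rng.standard_normal(d_k)
keys = rng.standard_normal((n_keys, d_k))
logits = keys @ query
unscaled_max = softmax(logits).max()
scaled_max = softmax(logits / np.sqrt(d_k)).max()
print(f"max weight unscaled: {unscaled_max:.3f}, scaled: {scaled_max:.3f}")
```

The largest weight can only grow as the logits are multiplied by a larger factor, so the unscaled softmax is always at least as peaked as the scaled one.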

The arXiv survey by Sun et al. (2025) on efficient attention mechanisms confirms that standard self-attention has O(n²) time and memory complexity with respect to sequence length n. For a 128K (131,072-token) context window, materializing the full attention matrix in FP16 takes 131,072² × 2 bytes = 32 GiB for a single head in a single layer, before any computation.
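The arithmetic is easy to reproduce. This sketch counts only the bytes of the materialized score matrix; the helper name and its defaults are illustrative, and the n_heads and batch arguments scale the figure up.

```python
def attention_matrix_bytes(n_tokens: int, bytes_per_elem: int = 2,
                           n_heads: int = 1, batch: int = 1) -> int:
    """Bytes to store the n x n score matrix (FP16 = 2 bytes per element)."""
    return batch * n_heads * n_tokens * n_tokens * bytes_per_elem

GiB = 2**30
for n in (4_096, 32_768, 131_072):
    print(f"{n:>7} tokens: {attention_matrix_bytes(n) / GiB:8.3f} GiB per head, per layer")
```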

“Self-attention sees the entire sequence simultaneously. That omniscience is its superpower, and its computational liability.”

Multi-Head, GQA, and MLA: Picking the Right Variant

Not all attention heads are equal, and not all heads need their own key-value cache.

Multi-Head Attention (MHA) runs h independent attention operations in parallel, each with its own W_Q, W_K, W_V, then concatenates and projects the results. The intuition: different heads learn to track syntax, co-reference, long-range dependencies, and positional patterns simultaneously. MIT and IBM researchers (NeurIPS 2025) showed that their PaTH Attention variant, which replaces fixed rotary position encoding with data-dependent, path-based position information, outperformed RoPE-based attention on reasoning benchmarks.
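A minimal NumPy sketch of MHA, with random matrices in place of learned weights and arbitrary sizes. It uses one fused d_model × d_model projection each for Q, K, and V and slices it into h column blocks, which is equivalent to giving each head its own W_Q, W_K, W_V.

```python
import numpy as np

def softmax(x):
    x = x - x.max(axis=-1, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=-1, keepdims=True)

def multi_head_attention(X, W_Q, W_K, W_V, W_O, n_heads):
    """Project once, split into heads, attend per head, concatenate, project."""
    n, d_model = X.shape
    d_head = d_model // n_heads

    def split(M):  # (n, d_model) -> (n_heads, n, d_head)
        return M.reshape(n, n_heads, d_head).transpose(1, 0, 2)

    Q, K, V = split(X @ W_Q), split(X @ W_K), split(X @ W_V)
    A = softmax(Q @ K.transpose(0, 2, 1) / np.sqrt(d_head))  # (n_heads, n, n)
    heads = A @ V                                            # (n_heads, n, d_head)
    concat = heads.transpose(1, 0, 2).reshape(n, d_model)    # heads side by side
    return concat @ W_O                                      # output projection

rng = np.random.default_rng(0)
n, d_model, h = 6, 32, 4
X = rng.standard_normal((n, d_model))
W_Q, W_K, W_V, W_O = (rng.standard_normal((d_model, d_model)) / np.sqrt(d_model)
                      for _ in range(4))
Y = multi_head_attention(X, W_Q, W_K, W_V, W_O, n_heads=h)
print(Y.shape)  # (6, 32)
```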

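Grouped-Query Attention (GQA), adopted in Llama 3 and Mistral 7B, keeps every query head but lets groups of query heads share a smaller number of key-value heads. The KV cache shrinks by the ratio of query heads to KV heads: Llama 3 8B and Mistral 7B pair 32 query heads with 8 KV heads, a 4× (75 percent) reduction relative to MHA, with quality close to MHA at moderate context lengths. That trade makes it the default choice for inference-heavy deployments.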
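The sharing can be sketched by repeating each KV head across its group of query heads. This is a simplified NumPy illustration (random weights, no causal mask, hypothetical sizes), not the Llama or Mistral code; the returned element count is what a KV cache would have to store.

```python
import numpy as np

def softmax(x):
    x = x - x.max(axis=-1, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=-1, keepdims=True)

def grouped_query_attention(X, W_Q, W_K, W_V, n_heads, n_kv_heads):
    """n_heads query heads share n_kv_heads key/value heads (no mask)."""
    n, d_model = X.shape
    d_head = d_model // n_heads
    group = n_heads // n_kv_heads                     # query heads per KV head
    Q = (X @ W_Q).reshape(n, n_heads, d_head).transpose(1, 0, 2)
    K = (X @ W_K).reshape(n, n_kv_heads, d_head).transpose(1, 0, 2)
    V = (X @ W_V).reshape(n, n_kv_heads, d_head).transpose(1, 0, 2)
    # Repeat each KV head for the consecutive query heads in its group.
    K_rep = np.repeat(K, group, axis=0)               # (n_heads, n, d_head)
    V_rep = np.repeat(V, group, axis=0)
    A = softmax(Q @ K_rep.transpose(0, 2, 1) / np.sqrt(d_head))
    out = (A @ V_rep).transpose(1, 0, 2).reshape(n, d_model)
    return out, K.size + V.size                       # output, KV elements cached

rng = np.random.default_rng(0)
n, d_model, n_heads = 8, 64, 8
d_head = d_model // n_heads
X = rng.standard_normal((n, d_model))
W_Q = rng.standard_normal((d_model, d_model)) / np.sqrt(d_model)

kv_elems = {}
for n_kv_heads in (8, 2):  # 8 = plain MHA, 2 = GQA with groups of 4
    W_K = rng.standard_normal((d_model, n_kv_heads * d_head)) / np.sqrt(d_model)
    W_V = rng.standard_normal((d_model, n_kv_heads * d_head)) / np.sqrt(d_model)
    out, kv_elems[n_kv_heads] = grouped_query_attention(X, W_Q, W_K, W_V, n_heads, n_kv_heads)
    print(n_kv_heads, out.shape, kv_elems[n_kv_heads])
```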

Multi-Head Latent Attention (MLA), introduced in DeepSeek-V2 and carried forward into DeepSeek-V3, compresses keys and values into a low-rank latent vector, and that latent (plus a small positional key) is what gets cached. The DeepSeek-V2 paper reports a 93.3 percent smaller KV cache than DeepSeek 67B. According to Towards AI’s 2025 analysis, MLA is now considered the leading memory-efficient attention design for models above 30 billion parameters.
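A heavily simplified sketch of the latent-compression idea: keys and values are reconstructed from a small per-token latent C, so only C needs caching. The weight names (W_DKV, W_UK, W_UV) and sizes are illustrative assumptions. The published design also compresses queries, carries a separate rotary key, and folds the up-projections into other matrices at inference, none of which is modeled here.

```python
import numpy as np

def softmax(x):
    x = x - x.max(axis=-1, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=-1, keepdims=True)

rng = np.random.default_rng(0)
n, d_model, n_heads, d_head, d_latent = 10, 64, 8, 8, 16

W_Q = rng.standard_normal((d_model, n_heads * d_head)) / np.sqrt(d_model)
W_DKV = rng.standard_normal((d_model, d_latent)) / np.sqrt(d_model)           # down-projection
W_UK = rng.standard_normal((d_latent, n_heads * d_head)) / np.sqrt(d_latent)   # latent -> keys
W_UV = rng.standard_normal((d_latent, n_heads * d_head)) / np.sqrt(d_latent)   # latent -> values

def split_heads(M):  # (n, n_heads * d_head) -> (n_heads, n, d_head)
    return M.reshape(M.shape[0], n_heads, d_head).transpose(1, 0, 2)

X = rng.standard_normal((n, d_model))
C = X @ W_DKV                  # (n, d_latent): the only per-token state cached here
Q = split_heads(X @ W_Q)
K = split_heads(C @ W_UK)      # keys and values are rebuilt from the latent
V = split_heads(C @ W_UV)
A = softmax(Q @ K.transpose(0, 2, 1) / np.sqrt(d_head))
out = (A @ V).transpose(1, 0, 2).reshape(n, n_heads * d_head)

mha_cache_per_token = 2 * n_heads * d_head   # full K and V for every head
mla_cache_per_token = d_latent               # just the latent vector
print(out.shape, mha_cache_per_token, mla_cache_per_token)  # (10, 64) 128 16
```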

Attention Variant Comparison

| Attention Variant | Key Strength | Best Used When | Representative Models |
|---|---|---|---|
| Multi-Head Attention (MHA) | Full context coverage; most expressive | Smaller models (<7B) or training from scratch | GPT-2, BERT, T5 |
| Grouped-Query Attention (GQA) | KV cache shrinks by query heads ÷ KV heads (4× with 32 query / 8 KV heads) | Serving large models; memory-constrained inference | Llama 3, Mistral 7B |
| Multi-Head Latent Attention (MLA) | Caches a compressed latent instead of full K and V (93.3% smaller KV cache than DeepSeek 67B, per DeepSeek-V2) | Very large models (30B+) with long context | DeepSeek-V2, DeepSeek-V3, DeepSeek-R1 |
| FlashAttention Kernel | Reported 3-5× throughput via IO-aware tiling; exact output | Any transformer on supported NVIDIA GPUs (e.g., A100/H100); default for production | Supported in most major open-weight LLM stacks |
| Sparse / Sliding-Window / Linear Attention | Linear or sub-quadratic cost; scales to 1M+ tokens | Long-document tasks, RAG retrievers, code assistants | Mistral 7B (sliding window), MiniMax-01 (hybrid linear attention) |

“MLA compresses the KV cache to a fraction of its original size, enabling 70B parameter models to serve 128K context on hardware previously reserved for 13B.”

FlashAttention and Sparse Attention: Speed Without Compromise

FlashAttention does not approximate attention. It reorders how attention is computed so that memory bandwidth stops being the bottleneck.

Standard attention reads Q and K from High Bandwidth Memory (HBM), computes the n × n score matrix, and writes it back to HBM. It then reads the scores again to compute the softmax, writes the resulting probabilities to HBM, and reads them once more, together with V, to produce the output. For long sequences, this I/O cost dominates. Dao-AILab’s FlashAttention repository (23k+ GitHub stars, actively maintained as of March 2025) solves this by tiling the computation so each block fits in the much faster on-chip SRAM and by using an online softmax, so the full score matrix is never written to HBM. The reported result is a 3 to 5× throughput improvement over naive PyTorch, with no change to the mathematical output.
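The tiling idea can be illustrated with an online softmax in NumPy. This sketch reproduces the algorithmic trick (process one key/value tile at a time while keeping a running max and denominator), not the fused CUDA kernel, so it demonstrates exactness rather than speed. Block sizes are arbitrary.

```python
import numpy as np

def reference_attention(Q, K, V):
    """Naive attention: materializes the full score matrix S and probabilities P."""
    S = Q @ K.T / np.sqrt(Q.shape[-1])
    P = np.exp(S - S.max(axis=-1, keepdims=True))
    return (P / P.sum(axis=-1, keepdims=True)) @ V

def tiled_attention(Q, K, V, block_q=16, block_k=16):
    """Exact attention computed one (block_q x block_k) score tile at a time.

    A running row max (m) and softmax denominator (l) let earlier partial
    results be rescaled when a later tile contains larger scores, so the full
    score matrix never exists at once.
    """
    n_q, d = Q.shape
    n_k = K.shape[0]
    scale = 1.0 / np.sqrt(d)
    O = np.empty((n_q, V.shape[-1]))
    for i in range(0, n_q, block_q):
        q = Q[i:i + block_q]
        m = np.full(q.shape[0], -np.inf)            # running max per query row
        l = np.zeros(q.shape[0])                    # running sum of exp(score - m)
        acc = np.zeros((q.shape[0], V.shape[-1]))   # running unnormalized output
        for j in range(0, n_k, block_k):
            s = (q @ K[j:j + block_k].T) * scale    # one tile of scores
            m_new = np.maximum(m, s.max(axis=-1))
            correction = np.exp(m - m_new)          # rescales the old partial sums
            p = np.exp(s - m_new[:, None])
            l = l * correction + p.sum(axis=-1)
            acc = acc * correction[:, None] + p @ V[j:j + block_k]
            m = m_new
        O[i:i + block_q] = acc / l[:, None]
    return O

rng = np.random.default_rng(0)
Q, K, V = (rng.standard_normal((100, 32)) for _ in range(3))  # 100 is not a multiple of 16
print(np.abs(tiled_attention(Q, K, V) - reference_attention(Q, K, V)).max())
```

The printed difference is at the level of floating-point rounding: reordering the computation changes where intermediates live, not the result.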

FlashAttention is integrated into PyTorch, Hugging Face Transformers, NVIDIA Megatron-LM, and Microsoft DeepSpeed. Enabling it in Hugging Face is a single line: set attn_implementation="flash_attention_2" in from_pretrained().

Sparse attention takes a complementary approach. Rather than reordering computation, it restricts which tokens attend to which using sliding windows, block patterns, or learned routing. Sliding-window attention gives O(n·w) complexity where w is the window size. The IJCAI 2024 survey on long-context LLMs found that multi-head self-attention occupies more than half of inference overhead once sequence length exceeds 512 tokens.
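A sliding-window pattern reduces to a mask. This sketch combines a causal constraint with a window of w keys and, for clarity, materializes the full n × n mask; an efficient kernel would compute only the diagonal band, which is where the O(n·w) cost comes from. Sizes are illustrative.

```python
import numpy as np

def sliding_window_causal_mask(n: int, w: int) -> np.ndarray:
    """mask[i, j] is True when query i may attend to key j: j <= i and i - j < w."""
    i = np.arange(n)[:, None]
    j = np.arange(n)[None, :]
    return (j <= i) & (j > i - w)

def masked_attention(Q, K, V, mask):
    S = Q @ K.T / np.sqrt(Q.shape[-1])
    S = np.where(mask, S, -np.inf)                 # disallowed pairs get zero weight
    P = np.exp(S - S.max(axis=-1, keepdims=True))  # diagonal is always allowed, so max is finite
    P /= P.sum(axis=-1, keepdims=True)
    return P @ V

n, w = 10, 3
mask = sliding_window_causal_mask(n, w)
print(mask.sum(axis=-1))  # keys per query: [1 2 3 3 3 3 3 3 3 3]
print(mask.sum(), "of", n * n, "score entries used")

rng = np.random.default_rng(0)
Q, K, V = (rng.standard_normal((n, 8)) for _ in range(3))
out = masked_attention(Q, K, V, mask)
```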

Code Snippet 1: Enabling FlashAttention-2 in Hugging Face Transformers

Source: huggingface/transformers – Attention Interface

This single parameter switch activates IO-aware tiled computation (FlashAttention-2) instead of the default attention path (PyTorch SDPA or eager matrix multiplication, depending on the library version and model). On an A100 GPU, it typically reduces the memory used by attention by up to 20× for long sequences and is reported to boost attention throughput by a factor of three to five. No architectural changes, no approximations.
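A minimal sketch of that switch, assuming the transformers and torch packages plus the separately installed flash-attn package on a supported NVIDIA GPU. MODEL_ID is a hypothetical placeholder, and falling back to PyTorch’s built-in SDPA backend when flash-attn is missing is a design choice of this sketch, not something from_pretrained() does on its own.

```python
import importlib.util

MODEL_ID = "your-org/your-model"  # hypothetical placeholder for any causal LM checkpoint

def pick_attn_implementation() -> str:
    """Prefer FlashAttention-2 when the flash-attn package is importable, else PyTorch SDPA."""
    if importlib.util.find_spec("flash_attn") is not None:
        return "flash_attention_2"
    return "sdpa"

def load_model(model_id: str = MODEL_ID):
    # Imported lazily so this module loads even where torch/transformers are absent.
    import torch
    from transformers import AutoModelForCausalLM

    model = AutoModelForCausalLM.from_pretrained(
        model_id,
        torch_dtype=torch.bfloat16,                   # FlashAttention-2 needs fp16 or bf16
        attn_implementation=pick_attn_implementation(),
    )
    return model.to("cuda")
```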

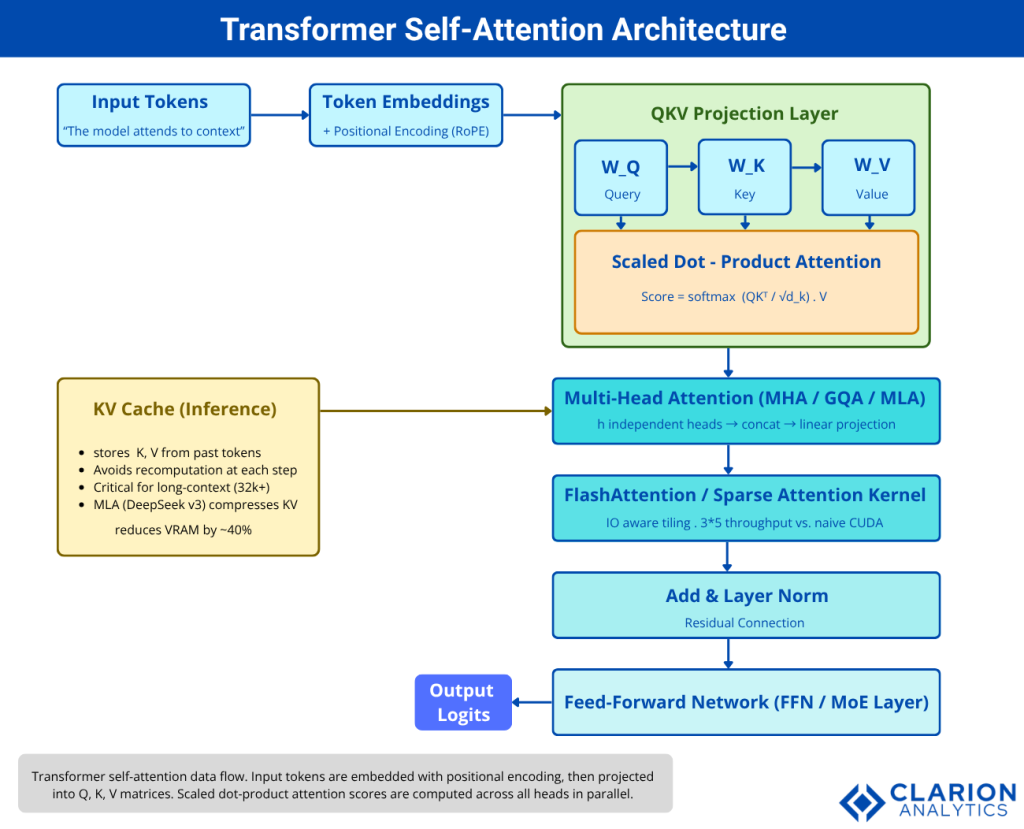

Transformer Self-Attention Architecture

Figure 1: Transformer self-attention data flow. Input tokens are embedded with positional encoding (RoPE), projected into Q, K, V matrices, and passed through scaled dot-product attention. Multiple attention heads run in parallel, with FlashAttention kernels tiling computation in SRAM to bypass HBM bottlenecks. The KV cache stores past keys and values to enable efficient autoregressive decoding. Output logits emerge after the feed-forward layer and residual connection.

Implementing Attention in Production: What Teams Actually Do

The gap between a working prototype and a production attention stack is wider than most engineering timelines account for.

In practice, teams building LLM inference pipelines consistently encounter three failure modes: (1) running out of KV cache memory when context lengths grow beyond initial estimates, (2) latency spikes caused by non-contiguous GPU memory from incremental decoding, and (3) silent quality regressions when switching attention backends.

The standard production checklist is: enable FlashAttention-2 by default for any model on supported NVIDIA GPUs (Ampere or newer, such as A100 and H100); use GQA or MLA variants for models that will serve long contexts; set the dtype to bf16 or fp16 before enabling FlashAttention; and benchmark KV cache memory consumption at your p99 input length, not your average.

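As a rough illustration, in a 7B model shaped like Llama 2 7B (32 layers, 32 heads of dimension 128), the fp16 KV cache for one 8,192-token sequence is 4 GiB under MHA. A GQA variant with 8 KV heads needs 1 GiB, so the memory left over after the weights holds roughly four times as many concurrent sequences on a fixed GPU budget.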
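Per-sequence KV cache size follows from a simple formula: 2 (keys and values) × layers × KV heads × head dimension × tokens × bytes per element. A sketch, assuming a Llama-2-7B-style shape (32 layers, 32 heads of dimension 128) and 2-byte fp16/bf16 elements; the 8-KV-head configuration and the p99 length are hypothetical, so swap in your own config.

```python
def kv_cache_bytes(n_tokens: int, n_layers: int, n_kv_heads: int, head_dim: int,
                   bytes_per_elem: int = 2, batch: int = 1) -> int:
    """Keys + values stored for every layer, KV head, and token."""
    return 2 * batch * n_layers * n_kv_heads * head_dim * n_tokens * bytes_per_elem

GiB = 2**30
mha = kv_cache_bytes(8_192, n_layers=32, n_kv_heads=32, head_dim=128)
gqa = kv_cache_bytes(8_192, n_layers=32, n_kv_heads=8, head_dim=128)  # hypothetical GQA variant
print(f"MHA: {mha / GiB:.1f} GiB, GQA (8 KV heads): {gqa / GiB:.1f} GiB")

p99_tokens = 24_576  # hypothetical p99 prompt + generation length
print(f"GQA at p99: {kv_cache_bytes(p99_tokens, 32, 8, 128) / GiB:.1f} GiB per sequence")
```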

Code Snippet 2: Basic Scaled Dot-Product Attention in PyTorch

Source: karpathy/nanoGPT – model.py

This is the foundational attention implementation used in nanoGPT (37k+ stars). The key insight is in the scaling step: dividing by √d_k keeps the dot products from growing so large that softmax saturates and returns near-zero gradients. Every exact attention implementation, including FlashAttention, computes mathematically equivalent output (up to floating-point rounding); the innovation is in how and where the computation happens in hardware.
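The manual (non-flash) path of that implementation can be transliterated to NumPy. This is a sketch, not nanoGPT’s code: it drops the bias terms and dropout, uses random weights, and adds a quick causality check.

```python
import numpy as np

def causal_self_attention(x, W_attn, W_proj, n_head):
    """Causal multi-head self-attention over one sequence x of shape (T, C)."""
    T, C = x.shape
    hs = C // n_head
    q, k, v = np.split(x @ W_attn, 3, axis=-1)                 # fused QKV projection
    q, k, v = (t.reshape(T, n_head, hs).transpose(1, 0, 2) for t in (q, k, v))
    att = (q @ k.transpose(0, 2, 1)) * (1.0 / np.sqrt(hs))     # scale by 1/sqrt(d_k)
    mask = np.tril(np.ones((T, T), dtype=bool))
    att = np.where(mask, att, -np.inf)                          # block future tokens
    att = np.exp(att - att.max(axis=-1, keepdims=True))
    att = att / att.sum(axis=-1, keepdims=True)                 # softmax over keys
    y = (att @ v).transpose(1, 0, 2).reshape(T, C)              # re-assemble heads
    return y @ W_proj

rng = np.random.default_rng(0)
T, C, n_head = 8, 32, 4
x = rng.standard_normal((T, C))
W_attn = rng.standard_normal((C, 3 * C)) / np.sqrt(C)
W_proj = rng.standard_normal((C, C)) / np.sqrt(C)
y = causal_self_attention(x, W_attn, W_proj, n_head)

# Causality check: changing the last token must not change earlier outputs.
x2 = x.copy()
x2[-1] += 1.0
y2 = causal_self_attention(x2, W_attn, W_proj, n_head)
print(np.allclose(y[:-1], y2[:-1]))  # True
```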

“Understand the vanilla implementation before reaching for FlashAttention. Every optimization makes sense only when you know what it is optimizing.”

FAQ: Attention Mechanism in LLMs

What is the attention mechanism in LLMs and why does it matter?

The attention mechanism lets each token in a sequence query every other token and assign a learned relevance weight, producing a context-aware representation. It matters because it replaces fixed-size hidden states from older RNN architectures, allowing LLMs to capture long-range dependencies, reason over entire documents, and generate coherent outputs, all in a single differentiable operation.

How does self-attention differ from multi-head attention?

Self-attention is one instance of the attention computation: Q, K, and V projected from the same input. Multi-head attention runs h independent self-attention operations in parallel, each learning to focus on different patterns (syntax, co-reference, position), then concatenates and projects the results. Multi-head attention generally outperforms a single attention head of the same total width, in part because different heads can specialize in different dependency types rather than forcing one head to track them all.

What is FlashAttention and when should I use it?

FlashAttention is an IO-aware implementation of exact attention that tiles computation in GPU on-chip SRAM, avoiding repeated reads from and writes to slower High Bandwidth Memory. Use it by default for any transformer trained or served on supported NVIDIA GPUs with fp16 or bf16 inputs. It is reported to deliver 3 to 5× throughput gains over naive PyTorch and to reduce attention memory by up to 20× for long sequences, while producing mathematically identical output (up to floating-point rounding).

What is the quadratic complexity problem in attention?

Standard self-attention computes an n × n score matrix, where n is sequence length. Doubling the context window quadruples the memory and compute cost. At 128K tokens, the full attention matrix takes about 32 GiB in FP16 for a single head in a single layer. Efficient alternatives such as FlashAttention (reordered computation), sparse attention (restricted token sets), and linear attention (kernel approximations) all address this bottleneck through different tradeoffs between exactness, speed, and quality.

How do I choose between MHA, GQA, and MLA for my model?

Use MHA for models under 7B parameters where quality is the sole priority. Use GQA for mid-size models (7B to 30B) deployed in memory-constrained inference environments; it shrinks the KV cache by the ratio of query heads to KV heads (4× with 32 query heads and 8 KV heads) with quality close to MHA. Use MLA for very large models (30B+) handling long contexts, as it caches a compressed latent representation instead of full keys and values; DeepSeek-V2 reports a 93.3 percent smaller KV cache than DeepSeek 67B. All three are production-proven in models available today.

Conclusion

The attention mechanism in LLMs has moved from a single elegant formula to a family of highly optimized variants, each targeting a specific constraint in the compute and memory budget. Three insights should drive every architecture decision.

First, understand the math before you optimize. Scaled dot-product attention, QKV projections, and residual connections are the foundation. Every efficient variant (GQA, MLA, FlashAttention) is a targeted optimization of that foundation.

Second, at scale the bottleneck is usually the KV cache and memory bandwidth rather than FLOPs, especially during autoregressive decoding. FlashAttention addresses bandwidth. GQA and MLA address cache size. You will likely need both.

Third, adoption does not guarantee value. McKinsey (2025) found that more than 80 percent of organizations are not yet seeing measurable EBIT impact from generative AI. The engineers who understand what happens inside the attention layer and can tune it for their workload are the ones who close that gap.

What attention bottleneck is your team still working around in production?