Definition: Multi-camera person re-identification (Re-ID) is the computer vision task of matching a query individual across two or more non-overlapping camera feeds using appearance, spatial, and temporal features. A complete Re-ID system combines a person detector (e.g., YOLOv8), a per-frame tracker (e.g., DeepSORT), and a feature-embedding model that produces discriminative identity vectors robust to viewpoint change, lighting shift, and occlusion.

Why Multi-Camera Re-ID Is Now a Business-Critical Problem

Multi-camera person re-ID enables organisations to track individuals across large, distributed camera networks, a capability now required for loss prevention, crowd management, and smart-city safety as manual review of video at scale becomes impossible.

The numbers make the urgency clear. Grand View Research (2024) estimates the global AI surveillance market at USD 6.51 billion in 2024, growing at a 30.6% CAGR to reach USD 28.76 billion by 2030. MarketsandMarkets (2024) pegs the same market at USD 3.90 billion with a 21.3% CAGR. The two analysts disagree on market size but agree on growth above 20% a year. The business driver is equally stark: retail shrink reached USD 132 billion globally in 2024, up from USD 112 billion in 2022, and a Deloitte 2024 Retail Tech Survey found 68% of U.S. retailers piloting or actively implementing computer vision to address exactly this problem.

Traditional single-camera tracking solves the easy half of the problem. When a suspect moves from Camera 3 to Camera 11 through an unmonitored corridor, the identity thread breaks. Multi-camera person re-ID restores it.

“A surveillance network without cross-camera Re-ID is just expensive storage — the intelligence begins when cameras can reason about the same person across scenes.”

How Multi-Camera Person Re-ID Works: Core Architecture

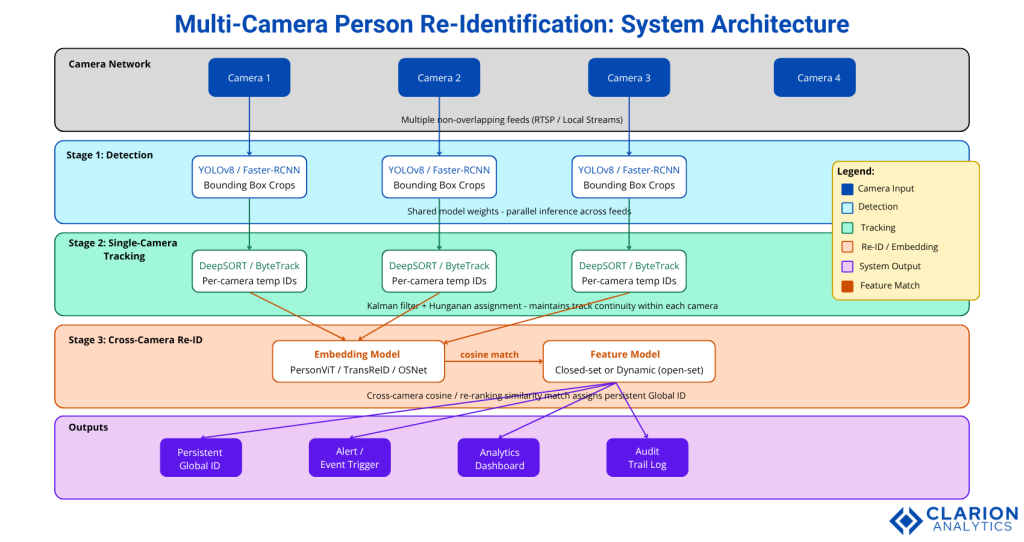

A modern Re-ID pipeline chains three stages: detection, single-camera tracking, and cross-camera feature matching. In the last stage, a deep embedding model computes an identity vector that persists across camera handoffs.

Stage 1 – Person Detection

Every frame from every camera passes through a detector that outputs bounding-box crops of each visible person. YOLOv8n is the current default for production: it runs at over 100 fps on a single GPU and balances precision against recall across dense scenes. For higher accuracy at lower frame rates, Faster R-CNN remains a strong alternative.

Stage 2 – Single-Camera Tracking

Within each camera view, a tracker assigns a temporary local ID to each bounding box across frames. DeepSORT uses a Kalman filter for motion prediction, a learned appearance descriptor to compare detections with existing tracks, and the Hungarian algorithm for assignment. ByteTrack improves on DeepSORT in dense crowds by associating every detection box, not just high-confidence ones. Both are supported in the JDAI-CV/fast-reid ecosystem.
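The assignment step can be illustrated with a minimal sketch. It assumes the motion model has already predicted a box for each track and scores pairs by IoU only. A brute-force search over pairings stands in for the Hungarian algorithm: it finds the same optimum but is only practical for a handful of boxes. The names `iou`, `associate`, and `min_iou` are illustrative, not part of DeepSORT or ByteTrack.

```python
from itertools import permutations

Box = tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def associate(predicted: dict[int, Box], detections: list[Box],
              min_iou: float = 0.3) -> dict[int, int]:
    """Return {track_id: detection_index} maximising total IoU.

    Brute force over one-to-one pairings (assumption: small inputs only).
    Pairs whose IoU falls below min_iou are dropped after the search.
    """
    track_ids = list(predicted)
    n_t, n_d = len(track_ids), len(detections)
    if n_t == 0 or n_d == 0:
        return {}
    score = [[iou(predicted[t], d) for d in detections] for t in track_ids]

    # Enumerate pairings over the smaller side so every pairing has the same size.
    if n_t <= n_d:
        pairings = (list(zip(range(n_t), p)) for p in permutations(range(n_d), n_t))
    else:
        pairings = (list(zip(p, range(n_d))) for p in permutations(range(n_t), n_d))

    best = max(pairings, key=lambda pairs: sum(score[r][c] for r, c in pairs))
    return {track_ids[r]: c for r, c in best if score[r][c] >= min_iou}


if __name__ == "__main__":
    tracks = {1: (0, 0, 10, 10), 2: (20, 20, 30, 30)}
    dets = [(21, 21, 31, 31), (1, 1, 11, 11), (100, 100, 110, 110)]
    print(associate(tracks, dets))  # detection 2 stays unmatched
```

Unmatched detections would normally start new local tracks, and unmatched tracks would age out after a few frames. A production tracker would replace the permutation search with a proper Hungarian solver.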

Stage 3 – Cross-Camera Re-Identification

This is where multi-camera person re-ID separates from standard tracking. An embedding model takes each cropped detection and produces a fixed-length feature vector. Cosine similarity (or re-ranking) compares this vector against a gallery of known identities. A match assigns a persistent global ID; no match adds a new gallery entry. The MICRO-TRACK paper (Cunico & Cristani, arXiv 2024) demonstrates this open-set gallery approach in a real industrial deployment.

Figure 1: The three-stage pipeline for multi-camera person Re-ID. (1) A detector crops bounding boxes per frame. (2) A within-camera tracker assigns temporary IDs. (3) A cross-camera embedding module computes a feature vector and matches it against a gallery to assign persistent global IDs. In open-set deployments, the gallery grows dynamically as new individuals are encountered.
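The Stage 3 matching logic can be sketched in a few lines. This is a minimal illustration that assumes one reference embedding per identity and a single fixed similarity threshold. The class name `OpenSetGallery` and the threshold value 0.7 are hypothetical choices for the example, not values from MICRO-TRACK or any library.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class OpenSetGallery:
    """Keeps one reference embedding per global ID (a simplifying assumption)."""

    def __init__(self, threshold: float = 0.7) -> None:
        self.threshold = threshold
        self.entries: dict[int, list[float]] = {}
        self._next_id = 0

    def assign(self, embedding: list[float]) -> int:
        """Return the best-matching global ID, or enrol a new one."""
        best_id, best_sim = None, -1.0
        for gid, ref in self.entries.items():
            sim = cosine_similarity(embedding, ref)
            if sim > best_sim:
                best_id, best_sim = gid, sim
        if best_id is not None and best_sim >= self.threshold:
            return best_id  # match: reuse the persistent global ID
        new_id = self._next_id  # no match: add a new gallery entry
        self._next_id += 1
        self.entries[new_id] = list(embedding)
        return new_id


if __name__ == "__main__":
    gallery = OpenSetGallery(threshold=0.7)
    print(gallery.assign([1.0, 0.0, 0.0]))  # first person seen
    print(gallery.assign([0.9, 0.1, 0.0]))  # similar vector, same ID
    print(gallery.assign([0.0, 1.0, 0.0]))  # dissimilar vector, new ID
```

Real systems usually store several embeddings per identity and tune the threshold on held-out footage, since a value that is too low merges different people and one that is too high fragments a single person into many IDs.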

The Feature Embedding Models That Actually Work

Vision Transformer (ViT) models pre-trained with self-supervised masked image modeling now deliver state-of-the-art Re-ID accuracy, outperforming earlier CNN baselines on benchmark datasets including Market-1501 and MSMT17 without requiring expensive manual annotation.

PersonViT (Bin Hu et al., arXiv 2024) pre-trains a ViT backbone on 130 million unlabelled person images using Masked Image Modeling combined with discriminative contrastive learning. The result beats supervised CNN baselines on four benchmark datasets. For developers, the implication is significant: you no longer need a labelled per-camera dataset to build a competitive Re-ID system.

TransReID adds camera-ID and viewpoint side-information as extra tokens in the ViT architecture, letting the model learn camera-specific appearance shifts. It remains the top choice when you have labelled training data and server-grade hardware. OSNet, from the Torchreid library, runs comfortably on edge GPUs and achieves strong cross-domain accuracy with far fewer parameters.

“Self-supervised ViT models trained on unlabelled data are closing the annotation bottleneck that blocked production Re-ID for years.”

Table 1: Re-ID Approach Comparison

| Approach | Key Strength | Best Used When |
|---|---|---|
| CNN (ResNet-50 / OSNet) | Low latency, easy edge deployment | Single-domain, edge-constrained hardware |
| TransReID (supervised ViT) | High accuracy, camera-aware encoding | Well-labelled datasets, server-grade GPU |
| PersonViT (self-supervised ViT) | No manual labels, strong generalisation | Multi-domain, limited annotation budget |
| MICRO-TRACK (open-set) | Open-set gallery, real-time industrial | Unknown individuals, dynamic environments |
| DeepSORT + FastReID | End-to-end pipeline, proven baseline | Rapid prototyping, benchmark validation |

Real-World Use Cases

Multi-camera person Re-ID is deployed across retail loss prevention, airport and transit security, smart-city crowd management, industrial site safety, and sports analytics: any scenario requiring persistent identity tracking across physically separated camera zones.

Retail is the highest-volume deployment context. A typical large-format store operates 40 to 80 cameras across parking, entrance, floor, and checkout zones. Re-ID lets loss-prevention teams flag a suspect from entry to exit without manual camera switching. A 2024 Deloitte survey found 68% of U.S. retailers are implementing or piloting computer vision, with Re-ID a core component.

In practice, teams building retail Re-ID deployments typically find that the hardest problem is not the embedding model but gallery initialisation. At store opening, the gallery is empty. Within the first hour, thousands of new entries accumulate, and without active pruning, cosine-similarity matching degrades as the gallery grows noisy.
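One simple form of active pruning is sketched below, under stated assumptions: each gallery entry records when it was last seen, entries older than a time-to-live are dropped, and a hard cap evicts the least recently seen entries. The names `GalleryEntry` and `prune_gallery` and the default limits are hypothetical and would need tuning per site.

```python
from dataclasses import dataclass


@dataclass
class GalleryEntry:
    embedding: list[float]
    last_seen: float  # timestamp in seconds


def prune_gallery(gallery: dict[int, GalleryEntry], now: float,
                  max_age_s: float = 3600.0, max_entries: int = 5000) -> list[int]:
    """Prune the gallery in place and return the removed global IDs."""
    # Pass 1: drop entries not seen within the time-to-live window.
    removed = [gid for gid, e in gallery.items() if now - e.last_seen > max_age_s]
    for gid in removed:
        del gallery[gid]

    # Pass 2: if still over the cap, evict the least recently seen entries.
    overflow = len(gallery) - max_entries
    if overflow > 0:
        oldest = sorted(gallery, key=lambda gid: gallery[gid].last_seen)[:overflow]
        for gid in oldest:
            del gallery[gid]
        removed.extend(oldest)
    return removed


if __name__ == "__main__":
    gallery = {
        1: GalleryEntry([0.1, 0.9], last_seen=0.0),
        2: GalleryEntry([0.8, 0.2], last_seen=3000.0),
        3: GalleryEntry([0.5, 0.5], last_seen=3500.0),
        4: GalleryEntry([0.3, 0.7], last_seen=3590.0),
    }
    print(prune_gallery(gallery, now=3700.0, max_age_s=3600.0, max_entries=2))
    print(sorted(gallery))
```

Running a pass like this on a timer keeps the number of comparisons per query bounded. The trade-off is that a person who returns after the time-to-live receives a new global ID.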

Industrial facilities present a different challenge. The MICRO-TRACK system (arXiv, Sep 2024) addresses this with open-set tracking: the system applies Re-ID only when detection confidence is high, uses a tracking module to fill gaps, and grows the gallery dynamically. This modular design makes it easy to integrate into existing industrial surveillance infrastructure.

Code Snippet 1: Cross-Domain Training with Torchreid

Source: KaiyangZhou/deep-person-reid – scripts/main.py

```bash
# Train on DukeMTMC-reID (source), evaluate on Market-1501 (unseen target domain)
python scripts/main.py \
  --config-file configs/im_osnet_x1_0_softmax_256x128_amsgrad.yaml \
  -s dukemtmcreid \
  -t market1501 \
  --transforms random_flip color_jitter \
  --root "$PATH_TO_DATA"
```

This command trains OSNet on one camera domain and evaluates cross-domain accuracy on a different one, which is exactly the generalisation challenge in multi-camera deployments. Color jitter augmentation replaces random erasing to boost domain robustness. The config file controls backbone, batch size, learning rate schedule, and loss functions, making the pipeline reproducible across teams.

Implementation Guide: From Research to Production

A production-ready multi-camera Re-ID system requires five decisions: detector selection, single-camera tracker, embedding model, gallery management strategy (closed-set vs. open-set), and inference runtime (ONNX/TensorRT for edge, native PyTorch for server).

“The gallery management strategy, not the embedding model, is where most production Re-ID systems fail or succeed.”

Gallery Strategy: Closed-Set vs. Open-Set

Closed-set Re-ID assumes the identity set is fixed and known at inference time. This fits access-controlled environments (e.g., a corporate campus with enrolled employees). Open-set Re-ID, as demonstrated by MICRO-TRACK, allows the gallery to grow at runtime and must handle unknown individuals without false positives. Most production surveillance systems need the open-set approach.

Latency vs. Accuracy Trade-offs

FastReID (JDAI-CV) supports both ResNet and ViT backbones and provides ONNX and TensorRT export out of the box. On a server GPU, a ViT-Large backbone delivers the highest mAP. On an NVIDIA Jetson edge device, OSNet-x1.0 is the practical choice: it achieves competitive rank-1 accuracy at a fraction of the FLOPs. Design your pipeline so the backbone can be swapped without changing the gallery management or tracking code.
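One way to keep the backbone swappable is to hide it behind a small interface. The sketch below assumes every embedder exposes a single `embed` method returning an L2-normalised vector. `Embedder`, `EdgeEmbedder`, and `ServerEmbedder` are hypothetical names, and the two classes are toy stand-ins for a light and a heavy model, not real backbones.

```python
import math
from typing import Protocol, Sequence


class Embedder(Protocol):
    """Anything that maps a person crop to an L2-normalised vector."""

    def embed(self, crop: Sequence[float]) -> list[float]: ...


def l2_normalise(vec: Sequence[float]) -> list[float]:
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else list(vec)


class EdgeEmbedder:
    """Toy stand-in for a light edge backbone: 2-D output."""

    def embed(self, crop: Sequence[float]) -> list[float]:
        half = len(crop) // 2
        return l2_normalise([sum(crop[:half]), sum(crop[half:])])


class ServerEmbedder:
    """Toy stand-in for a heavier server backbone: 4-D output."""

    def embed(self, crop: Sequence[float]) -> list[float]:
        q = max(1, len(crop) // 4)
        return l2_normalise([sum(crop[i * q:(i + 1) * q]) for i in range(4)])


def embed_tracks(embedder: Embedder,
                 crops: dict[int, Sequence[float]]) -> dict[int, list[float]]:
    """Downstream code depends only on the Embedder interface."""
    return {track_id: embedder.embed(crop) for track_id, crop in crops.items()}


if __name__ == "__main__":
    crops = {7: [1.0, 2.0, 3.0, 4.0]}
    for backbone in (EdgeEmbedder(), ServerEmbedder()):
        print(type(backbone).__name__, embed_tracks(backbone, crops))
```

Embeddings from different backbones are not comparable with each other, so a backbone swap also means rebuilding the gallery, even though the gallery code itself stays unchanged.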

Camera Calibration and Topology Constraints

If you know the physical layout of your cameras, encode it. A person cannot teleport from Camera 1 (entrance) to Camera 20 (loading dock) in two seconds. Transition time constraints rule out impossible matches and cut false-positive rates significantly. The AIP Conf. Proc. paper (Mishra & Chinnathambi, 2025) validates this spatial constraint approach in a real multi-feed deployment.
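A transition-time gate of this kind can be sketched as a filter applied before appearance matching. The camera names and minimum transit times below are invented for illustration, and treating unlisted camera pairs as unconstrained is an assumption of this sketch, not a method taken from the cited paper.

```python
# Hypothetical minimum walking times between camera pairs, in seconds.
MIN_TRANSIT_S: dict[tuple[str, str], float] = {
    ("cam01", "cam02"): 5.0,
    ("cam01", "cam20"): 90.0,
}


def min_transit(cam_a: str, cam_b: str,
                table: dict[tuple[str, str], float]) -> float:
    """Look up the minimum transit time in either direction (0.0 if unknown)."""
    if cam_a == cam_b:
        return 0.0
    return table.get((cam_a, cam_b), table.get((cam_b, cam_a), 0.0))


def is_feasible(last_cam: str, last_time: float, new_cam: str, new_time: float,
                table: dict[tuple[str, str], float]) -> bool:
    """True if the person could physically have moved between the sightings."""
    return new_time - last_time >= min_transit(last_cam, new_cam, table)


def filter_candidates(candidates: list[tuple[int, str, float]], new_cam: str,
                      new_time: float,
                      table: dict[tuple[str, str], float]) -> list[int]:
    """candidates: (global_id, last_cam, last_time). Returns feasible IDs."""
    return [gid for gid, cam, t in candidates
            if is_feasible(cam, t, new_cam, new_time, table)]


if __name__ == "__main__":
    seen = [(11, "cam01", 100.0), (12, "cam02", 40.0)]
    # A new detection on cam20 two seconds after ID 11 was seen at the entrance.
    print(filter_candidates(seen, "cam20", 102.0, MIN_TRANSIT_S))
```

Only the identities that pass the gate need a cosine-similarity comparison, which reduces both false positives and per-query compute.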

Code Snippet 2: FastReID ViT Training and ONNX Export

Source: JDAI-CV/fast-reid – tools/train_net.py

```bash
# 1. Train with ViT backbone (FastReID config)
python tools/train_net.py \
  --config-file configs/Market1501/bagtricks_vit.yml \
  MODEL.WEIGHTS /path/to/pretrained_vit.pth

# 2. Export trained model to ONNX for TensorRT deployment
python tools/deploy/onnx_export.py \
  --config-file configs/Market1501/bagtricks_vit.yml \
  --name vit_reid_market \
  --output outputs/onnx_model \
  --batch-size 32 \
  --opts MODEL.WEIGHTS market_vit.pth
```

This two-step workflow (train with ViT on labelled data, then export to ONNX) closes the gap between research-grade accuracy and edge-deployable inference. The exported ONNX model can then be built into a TensorRT engine and run on edge hardware with minimal latency overhead.

Tools and Libraries: Where to Start

The three most production-ready Re-ID libraries are FastReID (JDAI-CV), Torchreid (KaiyangZhou), and the PersonViT codebase (hustvl), each covering different points on the accuracy-latency-annotation spectrum.

“FastReID, Torchreid, and PersonViT cover every point on the accuracy-latency-annotation spectrum; there is no reason to build a Re-ID pipeline from scratch in 2025.”

FastReID (~3.9k GitHub stars) is the most comprehensive toolbox. It ships a model zoo with pretrained weights for ResNet, OSNet, and ViT backbones across Market-1501, DukeMTMC-reID, MSMT17, and vehicle Re-ID datasets. Its export scripts handle Caffe, ONNX, and TensorRT, covering the full spectrum from research to production.

Torchreid (deep-person-reid) (~4.6k stars) excels at cross-domain generalisation. OSNet, its flagship architecture, uses omni-scale feature learning to capture both fine-grained textures and global body shape — exactly what you need when your target cameras were not in your training distribution.

PersonViT (hustvl) ships pre-trained ViT models that require zero manual annotation. If your deployment environment is too dynamic to maintain a curated training dataset, PersonViT is the starting point. Fine-tune on a small set of camera-specific crops, and you will close most of the domain gap.

Frequently Asked Questions

What is multi-camera person re-identification?

Multi-camera person re-identification is a computer vision task that matches a specific individual across multiple camera feeds that do not share overlapping views. It uses deep learning feature embeddings to compare appearance across different viewpoints, lighting conditions, and time gaps, assigning a consistent global identity to each tracked person across the entire camera network.

How accurate is person re-ID across cameras?

State-of-the-art supervised models achieve Rank-1 accuracy above 95% on benchmark datasets like Market-1501. Self-supervised models such as PersonViT (2024) reach competitive accuracy without labelled data. Real-world accuracy is typically 70 to 90%, due to occlusion, resolution variation, and domain shift between training and deployment cameras.
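For readers unfamiliar with the metrics, here is a minimal sketch of how Rank-1 accuracy and mean average precision (mAP) are computed from ranked retrieval lists. It is a simplification: standard benchmark protocols also exclude gallery images taken by the query's own camera, which this example omits. The function names are illustrative.

```python
def rank1_and_ap(ranked_ids: list[str], query_id: str) -> tuple[bool, float]:
    """ranked_ids: gallery identity labels sorted by descending similarity.

    Returns (top result is correct, average precision for this query).
    """
    hits = 0
    precisions = []
    for rank, gallery_id in enumerate(ranked_ids, start=1):
        if gallery_id == query_id:
            hits += 1
            precisions.append(hits / rank)  # precision at each correct hit
    ap = sum(precisions) / len(precisions) if precisions else 0.0
    rank1 = bool(ranked_ids) and ranked_ids[0] == query_id
    return rank1, ap


def evaluate(results: list[tuple[str, list[str]]]) -> tuple[float, float]:
    """results: (query_id, ranked gallery ids) pairs. Returns (Rank-1, mAP)."""
    if not results:
        return 0.0, 0.0
    scores = [rank1_and_ap(ranked, qid) for qid, ranked in results]
    rank1 = sum(1 for hit, _ in scores if hit) / len(scores)
    mean_ap = sum(ap for _, ap in scores) / len(scores)
    return rank1, mean_ap


if __name__ == "__main__":
    results = [
        ("A", ["A", "B", "A"]),  # correct at ranks 1 and 3
        ("B", ["A", "B", "C"]),  # correct only at rank 2
    ]
    rank1, mean_ap = evaluate(results)
    print(f"Rank-1 = {rank1:.2f}, mAP = {mean_ap:.3f}")
```

Rank-1 only asks whether the top result is correct, while mAP rewards placing every image of the same person near the top, so the two numbers can diverge noticeably on crowded galleries.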

What is the difference between person re-ID and object tracking?

Object tracking maintains an identity within a single camera view using motion continuity; it loses the identity the moment the person leaves the frame. Person re-ID re-establishes identity across cameras by matching visual features, not motion. A complete multi-camera system needs both: tracking within each camera and re-ID across cameras.

Can person re-ID work without labelled training data?

Yes. Self-supervised methods like PersonViT (arXiv 2024) pre-train on large unlabelled datasets using Masked Image Modeling and contrastive learning, then fine-tune with minimal or zero per-camera annotation. Federated and unsupervised domain adaptation approaches further reduce the labelling burden in privacy-sensitive deployments.

What hardware do I need to run multi-camera person re-ID in real time?

For a 4-camera system at 1080p, a single NVIDIA GPU (RTX 3080 or equivalent) handles detection, tracking, and embedding inference in real time when using an optimised model like OSNet with TensorRT. Larger networks benefit from dedicated edge devices per camera cluster or a centralised GPU server. FastReID’s ONNX export path makes hardware-agnostic deployment straightforward.

What to Build First: A Practical Summary

Three insights should drive your next build. First, choose your gallery strategy before choosing your model; open-set or closed-set determines the entire downstream architecture. Second, self-supervised ViT models remove the annotation bottleneck; PersonViT gives you SOTA embeddings without a single labelled bounding box. Third, FastReID and Torchreid give you a proven, configurable pipeline in hours, not weeks; use them as your baseline before building anything custom.

The market pressure is real: surveillance AI is growing at over 20% annually, retail shrink is accelerating, and smart-city deployments are scaling globally. Teams that invest in multi-camera Re-ID infrastructure now will have a durable advantage as camera network density increases.

The question worth sitting with: if your current camera network resets every identity the moment a person walks off-screen, what business decisions are you making on incomplete data?