DEFINITION: Explainable AI (XAI) is a set of techniques and frameworks that make the internal decision-making of machine learning and deep learning models transparent and interpretable to humans. Rather than accepting a model’s output as a black-box verdict, XAI surfaces the “why”: which features drove the prediction and how much weight each carried.

The Black-Box Problem: Why XAI Is No Longer Optional

Deep learning models can achieve high accuracy, but they do not explain their own reasoning. This opacity creates legal, ethical, and operational risk, especially in regulated industries.

According to McKinsey’s 2024 State of AI survey, 40% of respondents identified explainability as a key risk in adopting generative AI, yet only 17% were actively working to mitigate it. That gap is dangerous. Organizations investing in AI at scale while leaving explainability unaddressed are sitting on a compliance and reputational time bomb.

The pace of adoption makes this more urgent. McKinsey (May 2024) reported that 65% of organizations now regularly use generative AI, nearly double the share from just ten months prior. More deployment without better explainability means more unexplainable decisions in the wild.

In practice, teams building deep learning systems often tell us the same thing: the model works in testing, but nobody can explain why a loan got denied or why an anomaly alert fired. That gap erodes trust among both users and auditors.

“The model gets deployed. The explanation never does. That is the risk no CTO can afford to ignore.”

Regulatory pressure is adding urgency. The EU AI Act classifies AI systems by use case, and many deep learning applications, such as credit scoring, hiring, and AI-based medical devices, fall into its high-risk category, which requires transparency and human oversight. Gartner projects that by 2026, organizations that operationalize AI transparency, trust, and security (the goal of its AI TRiSM framework) will see their AI models achieve a 50% improvement in adoption, business goals, and user acceptance. Explainability is no longer a nice-to-have; it is a growth lever.

The Four Core XAI Techniques Every Developer Should Know

The four main XAI techniques for deep learning are SHAP (game-theoretic feature attribution), LIME (local surrogate models), Grad-CAM (gradient-based visual heatmaps), and Integrated Gradients (path-attribution for neural networks). Each serves a different context; choosing the wrong one wastes engineering effort.

SHAP (SHapley Additive exPlanations) treats each model feature as a player in a cooperative game and the prediction as the payout. It computes how much each feature contributed to shifting the output above or below the baseline, which is the model’s expected output over a background dataset. SHAP delivers both global insight (which features matter across thousands of predictions) and local insight (why this specific prediction happened).
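To make the cooperative-game idea concrete, here is a minimal Python sketch that computes exact Shapley values by enumerating every feature coalition. It assumes a single all-zero baseline stands in for “absent” features (the shap library instead averages over a background dataset and relies on much faster approximations), and `toy_model` is a hypothetical function with an interaction term. The exponential cost limits this to a handful of features, but it shows the weighting that SHAP approximates.

```python
from itertools import combinations
from math import factorial


def shapley_values(model, x, baseline):
    """Exact Shapley values for one prediction by brute-force enumeration.

    Features outside a coalition take their baseline value (a single reference
    point, an assumption of this sketch). Cost is O(2^n) model calls.
    """
    n = len(x)
    features = range(n)

    def value(coalition):
        # Input that uses x for coalition members and the baseline elsewhere.
        mixed = [x[i] if i in coalition else baseline[i] for i in features]
        return model(mixed)

    phi = [0.0] * n
    for i in features:
        others = [j for j in features if j != i]
        for size in range(len(others) + 1):
            # Shapley weight: |S|! * (n - |S| - 1)! / n!
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


# Hypothetical toy model with an interaction between features 0 and 1.
def toy_model(v):
    return 2.0 * v[0] + 1.0 * v[1] + 3.0 * v[0] * v[1] - 0.5 * v[2]


x = [1.0, 2.0, 4.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(toy_model, x, baseline)
print([round(p, 6) for p in phi])
# Efficiency property: the attributions sum to f(x) - f(baseline).
print(round(sum(phi), 6), toy_model(x) - toy_model(baseline))
```

For `toy_model`, the 3·x0·x1 interaction is split evenly between features 0 and 1, and the three attributions sum to f(x) - f(baseline). That efficiency property is what lets SHAP values be read as a decomposition of the prediction.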

Code Snippet 1: SHAP beeswarm visualization – source: shap/shap on GitHub

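This 8-line snippet trains an XGBoost model, computes SHAP values, and renders a beeswarm plot showing how each feature pushed each prediction. To explain a neural network instead, replace the XGBoost model with your network and use one of SHAP’s deep learning explainers, such as shap.DeepExplainer or shap.GradientExplainer, or a model-agnostic explainer.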

A 2024 peer-reviewed perspective in Advanced Intelligent Systems (Salih et al.) compared the two methods and found SHAP advantageous because it provides both global and local explanations and can reflect nonlinear associations, whereas LIME is local-only and fits a linear surrogate model that cannot represent nonlinear relationships. Both methods are affected by feature collinearity, so treat SHAP output as an investigative tool, not an infallible audit trail.
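The linear-surrogate limitation is easy to see in a small sketch. The following is a simplified, LIME-style procedure in plain Python, not the lime package’s API: it perturbs a continuous input with Gaussian noise, weights each neighbor with an exponential proximity kernel, and fits a weighted linear model. The noise scale, kernel width, and the toy `model` (a pure interaction, x0 · x1) are illustrative assumptions.

```python
import math
import random


def solve_linear(a, b):
    """Solve a small dense system a @ w = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    w = [0.0] * n
    for r in range(n - 1, -1, -1):
        w[r] = (m[r][n] - sum(m[r][c] * w[c] for c in range(r + 1, n))) / m[r][r]
    return w


def lime_style_explain(model, x, n_samples=2000, sigma=0.5, kernel_width=0.75, seed=0):
    """Fit a proximity-weighted linear surrogate around x; returns [intercept, coef_1, ...]."""
    rng = random.Random(seed)
    d = len(x)
    # Accumulate the weighted normal equations (X^T W X) w = X^T W y with a bias column.
    xtx = [[0.0] * (d + 1) for _ in range(d + 1)]
    xty = [0.0] * (d + 1)
    for _ in range(n_samples):
        z = [xi + rng.gauss(0.0, sigma) for xi in x]  # perturbed neighbor
        dist2 = sum((zi - xi) ** 2 for zi, xi in zip(z, x))
        w = math.exp(-dist2 / kernel_width ** 2)  # closer neighbors count more
        row = [1.0] + z
        y = model(z)
        for i in range(d + 1):
            xty[i] += w * row[i] * y
            for j in range(d + 1):
                xtx[i][j] += w * row[i] * row[j]
    return solve_linear(xtx, xty)


# Hypothetical nonlinear model: a pure interaction between two features.
def model(v):
    return v[0] * v[1]


coeffs = lime_style_explain(model, [1.0, 2.0])
print("local weights:", [round(c, 2) for c in coeffs[1:]])
```

Around (1, 2) the surrogate recovers weights close to 2 and 1, the local partial derivatives, but it has no term for the interaction itself, so the explanation changes depending on where you probe.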

Grad-CAM uses the gradients of the target class score with respect to the final convolutional layer of a CNN to produce a heatmap that highlights which spatial regions of an image drove the prediction. No retraining or architecture changes are needed; you only need access to that layer’s activations and gradients. Grad-CAM output is immediately legible to product managers and clinical stakeholders, which makes it a preferred choice for image classification explainability.
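The Grad-CAM computation itself is short once the last convolutional layer’s activations and gradients have been captured (in PyTorch this is typically done with forward and backward hooks). The sketch below assumes those tensors are already available as nested lists shaped [channels][height][width]; the 2-channel, 3×3 values are hypothetical. It follows the Grad-CAM recipe: average each channel’s gradients into a weight, take the weighted sum of activation maps, and apply ReLU.

```python
def grad_cam(activations, gradients):
    """Grad-CAM heatmap for one conv layer.

    activations, gradients: nested lists shaped [channels][height][width];
    gradients are d(target class score) / d(activations).
    Returns a [height][width] map normalized to [0, 1].
    """
    channels = len(activations)
    h, w = len(activations[0]), len(activations[0][0])
    # 1. Channel weights: global-average-pool the gradients over spatial positions.
    alphas = [sum(sum(row) for row in gradients[k]) / (h * w) for k in range(channels)]
    # 2. Weighted sum of activation maps, then ReLU to keep positive evidence only.
    cam = [[max(0.0, sum(alphas[k] * activations[k][i][j] for k in range(channels)))
            for j in range(w)] for i in range(h)]
    # 3. Normalize for display (a real pipeline then upsamples to the image size).
    peak = max(max(row) for row in cam)
    if peak > 0:
        cam = [[v / peak for v in row] for row in cam]
    return cam


# Hypothetical 2-channel, 3x3 feature maps from the last conv layer.
acts = [
    [[0.0, 0.0, 0.0], [0.0, 1.0, 2.0], [0.0, 2.0, 4.0]],  # channel 0 fires bottom-right
    [[3.0, 1.0, 0.0], [1.0, 0.0, 0.0], [0.0, 0.0, 0.0]],  # channel 1 fires top-left
]
grads = [
    [[0.5] * 3 for _ in range(3)],   # class score rises with channel 0
    [[-0.2] * 3 for _ in range(3)],  # class score falls with channel 1
]
heatmap = grad_cam(acts, grads)
for row in heatmap:
    print([round(v, 2) for v in row])
```

Channel 1 carries a negative weight, so the ReLU suppresses the top-left region and the normalized map peaks in the bottom-right corner.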

Integrated Gradients attributes the model’s prediction to its input features by accumulating gradients along a straight-line path from a baseline (often a zero vector) to the actual input. It is the go-to technique when you have full gradient access to a PyTorch or TensorFlow model and need a theoretically grounded attribution.
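Integrated Gradients can be approximated with a Riemann sum along the straight-line path. The sketch below uses a hypothetical two-input function `F` with a hand-written gradient so it runs without a deep learning framework; libraries such as Captum obtain gradients through autograd instead. The midpoint rule and 200 steps are illustrative choices.

```python
def integrated_gradients(grad_fn, x, baseline, steps=200):
    """Integrated Gradients via a midpoint Riemann sum.

    IG_i = (x_i - b_i) * average over alpha in (0, 1) of dF/dx_i at b + alpha * (x - b).
    """
    d = len(x)
    total = [0.0] * d
    for k in range(steps):
        alpha = (k + 0.5) / steps  # midpoint of each sub-interval
        point = [b + alpha * (xi - b) for xi, b in zip(x, baseline)]
        g = grad_fn(point)
        for i in range(d):
            total[i] += g[i]
    return [(xi - b) * t / steps for xi, b, t in zip(x, baseline, total)]


# Hypothetical differentiable "network": F(x) = x0^2 + 3 * x0 * x1, with its analytic gradient.
def F(v):
    return v[0] ** 2 + 3 * v[0] * v[1]


def grad_F(v):
    return [2 * v[0] + 3 * v[1], 3 * v[0]]


x, baseline = [1.0, 2.0], [0.0, 0.0]
attr = integrated_gradients(grad_F, x, baseline)
print("attributions:", [round(a, 4) for a in attr])
# Completeness: attributions sum (approximately) to F(x) - F(baseline).
print("sum:", round(sum(attr), 4), "target:", F(x) - F(baseline))
```

Here the attributions are 4 and 3, which add up to F(x) - F(baseline) = 7. In practice, a large gap between those two numbers usually means too few integration steps.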

Real-World Use Cases: Where XAI Is Already Delivering Value

XAI is already deployed in credit scoring, medical imaging, and fraud detection. In each case, explainability is not just a debugging aid; it is a core product requirement.

In financial services, credit risk models must show regulators why a loan was declined. SHAP feature attribution maps each applicant’s data points to a ranked list of contributing factors. The global XAI market reached an estimated $7.79 billion in 2024, with fraud and anomaly detection the largest application segment, a share driven in part by financial institutions’ need to justify automated decisions under GDPR and similar regulation.
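Turning attributions into a ranked list of decline reasons can be as simple as sorting. This sketch assumes SHAP values have already been computed for one applicant and that negative values push the score toward decline; the feature names and numbers are hypothetical, and mapping features to regulator-approved reason text is out of scope.

```python
def reason_codes(attributions, top_k=3):
    """Rank the features that pushed one applicant's score toward decline.

    attributions: mapping of feature name -> SHAP value. Assumed sign convention:
    negative values lower the approval score.
    """
    adverse = [(name, v) for name, v in attributions.items() if v < 0]
    adverse.sort(key=lambda item: item[1])  # most negative first
    return [name for name, _ in adverse[:top_k]]


# Hypothetical SHAP values for one declined applicant (illustrative numbers only).
applicant = {
    "debt_to_income": -0.42,
    "months_since_delinquency": -0.31,
    "credit_history_length": 0.12,
    "recent_credit_inquiries": -0.08,
    "income": 0.05,
    "utilization_ratio": -0.19,
}
print(reason_codes(applicant))
```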

In medical imaging, Grad-CAM overlays on chest X-ray classifiers allow radiologists to confirm that a pneumonia prediction is highlighting the lung opacities, not a spurious artifact in the image corner. A 2024 survey in the Journal of Imaging covering over 400 papers found that gradient-based methods like Grad-CAM dominate medical imaging XAI because clinicians need visual confidence, not abstract feature scores.

In autonomous systems and predictive maintenance, SHAP explanations on time-series sensor data let engineers verify that an anomaly alert is triggered by a real mechanical signal, not by a spurious data artifact. Transparency at this level is often a precondition for operators to trust an autonomous recommendation.

XAI Architecture for Production Deep Learning Systems

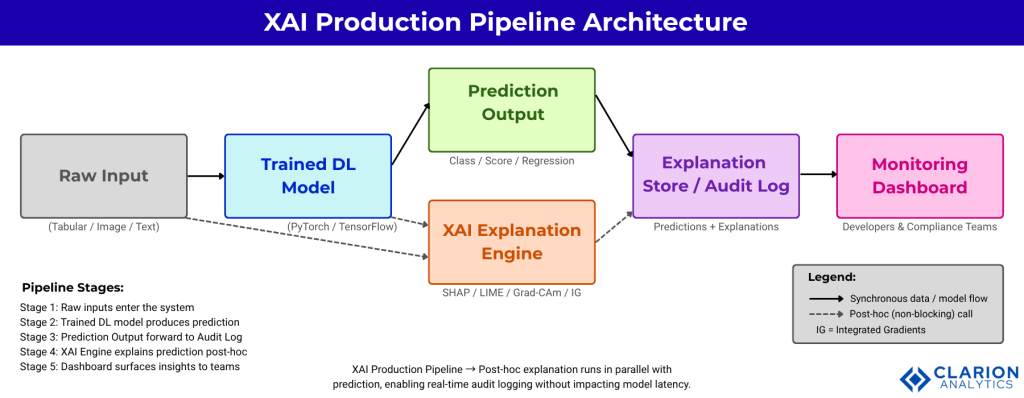

A production XAI pipeline wraps the trained model with a post-hoc explanation layer, generates explanations on demand, stores them with prediction logs, and surfaces them via a monitoring dashboard.

Figure 1: XAI Production Pipeline – Post-hoc explanation runs in parallel with prediction, enabling real-time audit logging without impacting model latency. Dashed arrows indicate asynchronous, non-blocking calls. The five stages are: (1) Raw Input, (2) Trained DL Model, (3) Prediction Output, (4) XAI Explanation Engine (SHAP/LIME/Grad-CAM/IG), and (5) Explanation Store/Audit Log feeding the Monitoring Dashboard.

“Explainability cannot be bolted on after deployment. It must be designed into the pipeline from day one.”

The key architectural principle is decoupling: the XAI Engine calls the model post-hoc, asynchronously. Explanation generation never sits in the critical path of the inference response. A typical setup logs the raw input, the prediction, and the SHAP/Grad-CAM artifact to an immutable explanation store that compliance teams can query on demand.
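The decoupling principle can be sketched with Python’s asyncio: the request handler returns the prediction immediately and hands the explanation job to a background worker through a queue. `ExplanationStore`, `model`, and `explain` are hypothetical stand-ins (an in-memory append-only list, and a linear model whose exact SHAP values with a zero baseline are weight × value); a production system would use a durable queue and store.

```python
import asyncio


class ExplanationStore:
    """Hypothetical in-memory, append-only stand-in for an immutable explanation store."""

    def __init__(self):
        self._records = []

    def append(self, record):
        self._records.append(dict(record))  # no update or delete methods by design

    def records(self):
        return list(self._records)


def model(features):
    # Hypothetical linear scorer standing in for the trained deep learning model.
    return 0.6 * features[0] - 0.3 * features[1]


def explain(features):
    # Hypothetical explainer: for a linear model and an all-zero baseline,
    # weight * value is exactly the SHAP value of each feature.
    return [0.6 * features[0], -0.3 * features[1]]


async def explanation_worker(queue, store):
    while True:
        request_id, features, prediction = await queue.get()
        # Run the (potentially slow) explainer in a thread so the event loop stays free.
        attributions = await asyncio.to_thread(explain, features)
        store.append({"id": request_id, "input": features,
                      "prediction": prediction, "attributions": attributions})
        queue.task_done()


async def handle_request(request_id, features, queue):
    prediction = model(features)
    queue.put_nowait((request_id, features, prediction))  # non-blocking hand-off
    return prediction  # the response never waits on the XAI engine


async def main():
    queue, store = asyncio.Queue(), ExplanationStore()
    worker = asyncio.create_task(explanation_worker(queue, store))
    responses = [await handle_request(i, [1.0, float(i)], queue) for i in range(3)]
    await queue.join()  # demo only: wait until the explanations are logged
    worker.cancel()
    try:
        await worker
    except asyncio.CancelledError:
        pass
    return responses, store.records()


responses, records = asyncio.run(main())
print(responses)
print(records[0])
```

In a real service the worker would typically run as a separate process or queue consumer, but the contract stays the same: the response path only enqueues.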

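Teams building this typically find that the explanation store doubles as a model debugging tool. When a production model starts drifting, SHAP value distributions often shift before accuracy metrics do, because attributions can be computed for every prediction immediately, while accuracy has to wait for ground-truth labels. Monitoring feature attribution over time therefore works as an early-warning system for data drift.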
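A minimal sketch of attribution-based drift monitoring compares each feature’s share of mean |SHAP| between a reference window and the current window. The share metric, the 50% relative-change threshold, and the sensor feature names are illustrative assumptions, not a standard. Note that no ground-truth labels are involved.

```python
from statistics import mean


def mean_abs_attribution(batch):
    """Per-feature mean |SHAP| over a batch of {feature: attribution} dicts."""
    features = batch[0].keys()
    return {f: mean(abs(row[f]) for row in batch) for f in features}


def attribution_drift(reference, current, threshold=0.5):
    """Flag features whose share of total attribution changed by more than `threshold`
    (relative change). Metric and threshold are illustrative choices."""
    ref, cur = mean_abs_attribution(reference), mean_abs_attribution(current)
    ref_total, cur_total = sum(ref.values()), sum(cur.values())
    alerts = {}
    for f in ref:
        ref_share, cur_share = ref[f] / ref_total, cur[f] / cur_total
        change = (cur_share - ref_share) / ref_share
        if abs(change) > threshold:
            alerts[f] = round(change, 2)
    return alerts


# Hypothetical attributions from a reference window and the current window.
reference = [{"vibration": 0.40, "temperature": 0.20, "pressure": 0.10},
             {"vibration": -0.35, "temperature": 0.25, "pressure": -0.10}]
current = [{"vibration": 0.10, "temperature": 0.20, "pressure": 0.45},
           {"vibration": -0.15, "temperature": -0.25, "pressure": 0.40}]
print(attribution_drift(reference, current))
```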

SHAP vs. LIME vs. Grad-CAM: Which Tool Fits Your Use Case?

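Use SHAP for global feature attribution on structured data; LIME for fast local explanations on any model type; Grad-CAM for visual heatmaps on CNN image classifiers; and Integrated Gradients for axiomatic, gradient-based attribution on PyTorch or TensorFlow models.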

| Tool | Key Strength | Best Used When |
|---|---|---|
| SHAP | Global + local attribution; grounded in Shapley-value axioms; reflects nonlinear feature effects | Tabular/structured data, tree models, or neural networks via SHAP’s deep or model-agnostic explainers; when auditing feature importance across the full dataset |
| LIME | Model-agnostic; quick single-prediction local explanation; works on text, images, and tabular data | Quick debugging of one prediction on any model type; when you need a human-readable local reason immediately |
| Grad-CAM | Visual spatial heatmap; no retraining or architecture changes; output legible to non-technical stakeholders | CNN image classifiers; communicating results to clinicians, product managers, or regulators |
| Integrated Gradients | Path-based; satisfies attribution axioms such as completeness and implementation invariance; works directly on deep networks | PyTorch/TensorFlow models with gradient access; safety-critical applications that need axiomatically grounded attributions |

Step-by-Step: Adding XAI to Your Existing Deep Learning Pipeline

To add XAI to an existing model: install the relevant library (shap or captum), wrap the trained model with an explainer object, compute attributions on a representative sample, and store explanation artifacts alongside predictions in your logging system.

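Step 1: Install your XAI library. For PyTorch models, Captum (pytorch/captum), PyTorch’s own interpretability library, is the natural choice. For other frameworks, shap/shap provides model-agnostic explainers as well as framework-specific ones for TensorFlow and PyTorch.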

Step 2: Wrap the model. Neither SHAP nor Captum requires model retraining. You create an explainer object around the already-trained model. This is a non-destructive operation.

Step 3: Compute attributions on a representative batch, ideally 50 to 500 samples that cover the prediction distribution. Store the results alongside raw inputs and predictions in your logging infrastructure.
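One way to make the batch representative is to stratify by prediction score, so that rare regions such as borderline or high-risk predictions are not crowded out by the common case. The sketch assumes scores in [0, 1] and uses equal-width bins; `representative_sample`, its defaults, and the synthetic log are hypothetical.

```python
import random
from collections import defaultdict


def representative_sample(records, score_key="score", n_bins=5, target_size=200, seed=42):
    """Stratified sample across equal-width score bins (hypothetical helper).

    Each non-empty bin contributes up to target_size // n_bins records, so sparse
    regions of the prediction distribution still appear in the batch.
    """
    rng = random.Random(seed)
    bins = defaultdict(list)
    for r in records:
        # Scores are assumed to lie in [0, 1]; a score of 1.0 goes into the top bin.
        b = min(int(r[score_key] * n_bins), n_bins - 1)
        bins[b].append(r)
    per_bin = max(1, target_size // n_bins)
    sample = []
    for b in sorted(bins):
        members = bins[b]
        sample.extend(rng.sample(members, min(per_bin, len(members))))
    return sample


# Hypothetical prediction log: 10,000 scores skewed toward low risk.
rng = random.Random(0)
log = [{"id": i, "score": rng.betavariate(2, 5)} for i in range(10_000)]
batch = representative_sample(log)
print(len(batch), sorted({min(int(r["score"] * 5), 4) for r in batch}))
```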

Code Snippet 2: Captum Integrated Gradients for PyTorch – source: pytorch/captum on GitHub

This snippet wraps any differentiable PyTorch model with Integrated Gradients and produces per-pixel attribution scores. The output can be overlaid on the input image as a heatmap; no changes to model weights or architecture are required.

Step 4: Log everything. The explanation artifact (SHAP values or attribution map) should be stored with the prediction ID, timestamp, model version, and input hash. This creates the audit trail that compliance teams need and regulators increasingly require.
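A minimal sketch of this logging contract, assuming JSON-serializable inputs: the input hash is SHA-256 over canonical (key-sorted) JSON so the same input always hashes identically, and an append-only JSON Lines file stands in for the immutable explanation store. The field names, model version string, and file layout are hypothetical.

```python
import hashlib
import json
import os
import tempfile
import uuid
from datetime import datetime, timezone


def input_hash(features):
    """SHA-256 of canonical JSON (sorted keys, no whitespace), so equal inputs hash equally."""
    canonical = json.dumps(features, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def explanation_record(features, prediction, attributions, model_version, method):
    # Hypothetical schema; keep field names stable so compliance queries keep working.
    return {
        "prediction_id": str(uuid.uuid4()),
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "input_hash": input_hash(features),
        "prediction": prediction,
        "xai_method": method,
        "attributions": attributions,
    }


def append_jsonl(path, record):
    # Append-only JSON Lines file as a stand-in for an immutable audit store.
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(record, sort_keys=True) + "\n")


# Hypothetical credit-scoring request and its SHAP attributions.
features = {"income": 52000, "debt_to_income": 0.41}
record = explanation_record(features, 0.27,
                            {"income": 0.05, "debt_to_income": -0.42},
                            model_version="credit-risk-v3.2", method="shap")

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "explanations.jsonl")
    append_jsonl(path, record)
    with open(path, encoding="utf-8") as fh:
        stored = [json.loads(line) for line in fh]

print(stored[0]["prediction_id"], stored[0]["input_hash"][:12])
```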

“Storing explanations alongside predictions is not overhead; it is the foundation of every AI governance audit your team will ever face.”

A 2025 unified evaluation framework study (arXiv 2503.05050) found that no single XAI method dominates across all model types: LIME scores highest on human-reasoning agreement, while Attention Mechanism Visualization shows superior robustness. Build a flexible pipeline that can swap techniques without changing the logging contract.
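To keep techniques swappable, each explainer can implement one small interface while the log entry format stays fixed. The sketch below uses a Python `Protocol` with two simple stand-in methods, occlusion and gradient × input (not SHAP or LIME themselves), against a hypothetical linear model. The class and field names are assumptions.

```python
from typing import Callable, Dict, List, Protocol, Sequence


class Explainer(Protocol):
    name: str

    def explain(self, model: Callable[[Sequence[float]], float],
                x: Sequence[float]) -> List[float]: ...


class OcclusionExplainer:
    """Attribution = drop in output when one feature is replaced by its baseline value."""
    name = "occlusion"

    def __init__(self, baseline: Sequence[float]):
        self.baseline = list(baseline)

    def explain(self, model, x):
        full = model(x)
        out = []
        for i in range(len(x)):
            masked = list(x)
            masked[i] = self.baseline[i]
            out.append(full - model(masked))
        return out


class GradientTimesInputExplainer:
    """Attribution = x_i * dF/dx_i, with the gradient estimated by central differences."""
    name = "gradient_x_input"

    def __init__(self, eps: float = 1e-5):
        self.eps = eps

    def explain(self, model, x):
        out = []
        for i in range(len(x)):
            up, down = list(x), list(x)
            up[i] += self.eps
            down[i] -= self.eps
            out.append(x[i] * (model(up) - model(down)) / (2 * self.eps))
        return out


def log_entry(explainer: Explainer, model, x, feature_names) -> Dict[str, object]:
    # Fixed logging contract: method name plus a feature -> score mapping.
    scores = explainer.explain(model, x)
    return {"xai_method": explainer.name, "attributions": dict(zip(feature_names, scores))}


def model(v):
    return 2.0 * v[0] - 1.0 * v[1]  # hypothetical linear model


names = ["f0", "f1"]
for e in (OcclusionExplainer(baseline=[0.0, 0.0]), GradientTimesInputExplainer()):
    print(log_entry(e, model, [3.0, 1.0], names))
```

For a linear model with a zero baseline, both methods agree, which makes a convenient sanity check when wiring a new explainer into the pipeline.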

FAQ: Explainable AI in Deep Learning

What is the difference between SHAP and LIME for deep learning models?

SHAP uses game theory to calculate each feature’s contribution to a single prediction and aggregates those local values into a global view across the dataset. LIME fits a simplified local surrogate model around one prediction to approximate feature weights. SHAP is generally more reliable for deep learning because its attributions rest on Shapley-value axioms and reflect nonlinear relationships; LIME is often faster for a single prediction and, like SHAP’s model-agnostic KernelExplainer, needs no gradient access.

Does adding XAI slow down my model in production?

Not if architected correctly. The XAI Explanation Engine runs post-hoc and asynchronously, so it stays out of the inference critical path. The cost of explaining one prediction depends heavily on method and model size: Grad-CAM needs roughly one extra backward pass, while sampling-based methods such as LIME or KernelSHAP may evaluate the model hundreds or thousands of times. Batch explanation jobs run offline against stored predictions without touching production latency.

Which XAI method should I use for image classification with a CNN?

Grad-CAM is the standard choice for CNN image classifiers. It produces a spatial heatmap showing which image regions drove the prediction, requires no model changes, and generates output that clinicians, product managers, and regulators can interpret visually. Integrated Gradients is the better choice when you need per-pixel attribution backed by formal axioms such as completeness, under which the attributions sum to the difference between the prediction and the baseline output.

Is XAI required for EU AI Act compliance?

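Yes, for high-risk AI systems, although the Act does not prescribe a specific XAI technique. It requires high-risk systems, such as those used in credit scoring, medical devices, hiring, and law enforcement, to be transparent enough for deployers to interpret their output and to support effective human oversight. Explainability outputs stored with prediction logs form the technical basis of compliance documentation. Systems outside the high-risk category face lighter obligations: some, such as chatbots and generators of synthetic content, carry specific disclosure duties, while minimal-risk systems are encouraged to follow voluntary codes of conduct.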

Can I use XAI to detect bias in my deep learning model?

Yes, and it is one of the most powerful uses of XAI. By comparing SHAP importance across demographic subgroups, you can identify whether protected attributes or their proxies drive predictions disproportionately for some groups. Comparing mean absolute SHAP values per feature across user cohorts is a practical bias-audit workflow that most compliance and fairness teams can run without deep statistical expertise.
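A minimal sketch of that cohort comparison, assuming per-prediction SHAP values are already logged alongside a cohort label that is used only for auditing, not as a model input. The feature names, cohort labels, and values are hypothetical. A high max/min ratio is a prompt for investigation, not proof of bias.

```python
from collections import defaultdict
from statistics import mean


def cohort_importance(rows, cohort_key, feature):
    """Mean |SHAP| of one feature per cohort; each row holds a cohort label and attributions."""
    groups = defaultdict(list)
    for r in rows:
        groups[r[cohort_key]].append(abs(r["shap"][feature]))
    return {c: mean(v) for c, v in groups.items()}


def disparity_ratio(per_cohort):
    """Max/min ratio across cohorts; large values mean the feature weighs far more for some groups."""
    values = list(per_cohort.values())
    return max(values) / min(values)


# Hypothetical logged attributions; "postcode_risk" is a candidate proxy feature.
rows = [
    {"group": "A", "shap": {"postcode_risk": 0.05, "income": 0.30}},
    {"group": "A", "shap": {"postcode_risk": -0.03, "income": 0.25}},
    {"group": "B", "shap": {"postcode_risk": -0.40, "income": 0.28}},
    {"group": "B", "shap": {"postcode_risk": -0.36, "income": 0.22}},
]
for feature in ("postcode_risk", "income"):
    per_cohort = cohort_importance(rows, "group", feature)
    print(feature, per_cohort, round(disparity_ratio(per_cohort), 1))
```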

Three Things Your Team Should Do This Quarter

Here are the three most important takeaways from everything above.

First: Explainability is a recognized but under-addressed risk. McKinsey (2024) found that 40% of respondents flagged it as a key generative AI risk, but only 17% were working to mitigate it. Your window to build explainability infrastructure before a regulatory or reputational event is still open, but narrowing.

Second: The architecture is straightforward. Add an asynchronous XAI Explanation Engine alongside your existing inference pipeline. Log SHAP values or Grad-CAM artifacts with every prediction, or with a representative sample when the chosen method is expensive. The initial engineering lift is typically days, not quarters.

Third: No single method wins everywhere. SHAP excels on structured data and global analysis; Grad-CAM is the default for image models; Integrated Gradients provides axiomatic attribution guarantees for PyTorch and TensorFlow models. Pick the method that matches your model type, not the one with the most GitHub stars.

The question is not whether your deep learning models need to be explainable. The question is how much trust from users, auditors, and regulators you are willing to leave on the table while you wait to build it.