Definition: A Vision Transformer (ViT) for OCR is an encoder-decoder deep learning architecture that splits an input image into fixed-size patches, encodes each patch as a token, and applies multi-head self-attention to model global spatial relationships across the entire image. Unlike CNN-RNN pipelines, ViT-based OCR requires no convolution layers and handles distorted, multilingual, and handwritten text with state-of-the-art accuracy.

Why Your Legacy OCR Pipeline Has a Ceiling

The global OCR market is forecast to reach USD 46.09 billion by 2033 (IMARC Group, 2024), growing at a CAGR of 13.06%. That number is not just market research; it signals the scale of unmet demand. The pipelines most development teams rely on today, built on CNN encoders and RNN decoders, were designed for clean, structured documents. Production rarely delivers those.

Traditional OCR works locally. Convolutional filters look at one small image region at a time. That works on a crisp printed invoice. It struggles with a photographed form under poor lighting, a multilingual shipping label, or a physician’s handwritten note. Each early-layer feature is computed with little knowledge of the rest of the image.

Vision Transformers change the fundamental mechanism. Every patch in an image attends to every other patch simultaneously. The model builds global context before generating a single character. That is why teams replacing CNN-RNN pipelines with vision transformers for OCR report the biggest gains not on clean documents but on the 15 to 20 percent of edge-case inputs that used to require manual review.

“The global OCR market is on track to exceed USD 46 billion by 2033, and the architects racing to claim that value are all betting on Vision Transformers.”

What Is a Vision Transformer and How Does It Work for OCR?

A Vision Transformer for OCR splits an image into N non-overlapping patches, projects each patch into a fixed-dimensional embedding, adds positional encodings to preserve spatial order, and processes the full sequence with stacked multi-head self-attention layers. The decoder then generates text token by token using cross-attention over the encoded image features; no convolutional layer required.

Patches, Embeddings, and Self-Attention – The Core Mechanism

Take any input image. Resize it to a fixed resolution, say 384×384 pixels. Divide it into non-overlapping 16×16 patches. You get 24 × 24 = 576 patches. Flatten each patch into a vector, project it to a 768-dimensional embedding, add a learned positional encoding, and you have a sequence of 576 tokens, the same format a BERT model would process. The Transformer encoder then runs multi-head self-attention across all 576 tokens in parallel.
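The arithmetic above can be checked with a minimal NumPy sketch. It assumes an RGB image, so a flattened 16×16 patch happens to contain 16 × 16 × 3 = 768 values; the random projection and positional arrays are stand-ins for weights a real model would learn, and the function name `patchify` is illustrative rather than taken from any library.

```python
import numpy as np


def patchify(image: np.ndarray, patch_size: int = 16) -> np.ndarray:
    """Split an (H, W, C) image into flattened, non-overlapping patches."""
    height, width, channels = image.shape
    if height % patch_size or width % patch_size:
        raise ValueError("image sides must be multiples of patch_size")
    rows, cols = height // patch_size, width // patch_size
    # (rows, P, cols, P, C) -> (rows, cols, P, P, C) -> (rows * cols, P * P * C)
    patches = image.reshape(rows, patch_size, cols, patch_size, channels)
    patches = patches.transpose(0, 2, 1, 3, 4)
    return patches.reshape(rows * cols, patch_size * patch_size * channels)


rng = np.random.default_rng(0)
image = rng.random((384, 384, 3), dtype=np.float32)  # stand-in for a resized page
patches = patchify(image)                             # one row per patch

embed_dim = 768
# Random stand-ins for the learned projection and positional encodings.
projection = rng.normal(0.0, 0.02, size=(patches.shape[1], embed_dim))
position = rng.normal(0.0, 0.02, size=(patches.shape[0], embed_dim))
tokens = patches @ projection + position

print(patches.shape, tokens.shape)  # (576, 768) (576, 768)
```

Production ViT implementations typically add details this sketch leaves out, such as a special classification token and learned rather than random parameters.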

Self-attention means every token can directly attend to every other token in a single operation. For OCR, this matters enormously. A single vertical stroke in a legal contract could be a capital ‘I’, a lowercase ‘l’, or the numeral ‘1’; only surrounding context disambiguates it. A CNN reaches that context only by stacking layers until its receptive field is wide enough. Self-attention can reach it from the first encoder layer.
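To make "every token attends to every other token" concrete, here is a single-head scaled dot-product attention sketch in NumPy. The weights are random and the head size of 64 is an assumption chosen for illustration; a real encoder runs several such heads in parallel and learns the projection matrices.

```python
import numpy as np


def self_attention(tokens, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention over a token sequence."""
    q, k, v = tokens @ w_q, tokens @ w_k, tokens @ w_v
    scores = q @ k.T / np.sqrt(q.shape[-1])        # (N, N) pairwise similarities
    scores -= scores.max(axis=-1, keepdims=True)   # numerical stability for exp
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True) # softmax over all N tokens
    return weights @ v, weights


rng = np.random.default_rng(0)
num_tokens, embed_dim, head_dim = 576, 768, 64
tokens = rng.normal(size=(num_tokens, embed_dim))
# Random stand-ins for the learned query, key, and value projections.
w_q, w_k, w_v = (
    rng.normal(scale=embed_dim ** -0.5, size=(embed_dim, head_dim))
    for _ in range(3)
)

context, weights = self_attention(tokens, w_q, w_k, w_v)
print(context.shape, weights.shape)  # (576, 64) (576, 576)
```

The 576×576 weight matrix is the point: row i holds how strongly patch i draws on each of the other patches, with no limit on how far apart they sit in the image.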

How ViT Differs from CNN-RNN in Practice

CNN-RNN OCR systems encode features with convolutions, inherently local operations, then decode with a recurrent network that processes one timestep at a time. Sequential decoding limits parallelisation and forces the model to compress all visual information into a fixed hidden state before generating text.

ViT-based models like TrOCR and PaddleOCR-VL process the entire image in parallel at the encoder stage and use cross-attention in the decoder, where each output token can directly attend to any input patch. This produces better accuracy on complex layouts and enables significantly faster training on modern GPU clusters.

Real-World Use Cases Where ViT-Based OCR Changes the Game

Vision Transformer OCR outperforms legacy systems in four high-value scenarios: financial document extraction, medical record digitisation, autonomous vehicle sign reading, and multilingual e-commerce cataloguing. All four share the same trait: variable, real-world text conditions that defeat template-based approaches.

Document Automation in BFSI

Banks and insurers process millions of forms, contracts, and invoices weekly. According to McKinsey (2024), 70% of organisations are piloting document workflow automation in at least one business unit. The blocker is accuracy on non-standard documents. A ViT-based pipeline processes a hand-completed mortgage application with the same architecture it uses for a printed bank statement because it learns from global context rather than template-matching.

“In practice, teams replacing CNN-RNN pipelines with TrOCR report the biggest gains not on clean printed documents, but on the 15-20% of edge-case inputs that used to require manual review.”

Healthcare and Handwriting Recognition

Medical records, prescription pads, and clinical notes remain stubbornly handwritten in many healthcare systems. The HTR-VT paper (Li et al., Pattern Recognition, 2024) demonstrates that a data-efficient ViT encoder with span masking achieves state-of-the-art performance on the IAM handwriting benchmark without external pre-training data. That matters for healthcare teams who cannot share patient data with external model providers.

Scene Text for Autonomous Systems and E-Commerce

Reading a street sign, product label, or retail shelf tag from a moving camera requires robustness to perspective distortion, variable lighting, and partial occlusion. MGP-STR, a ViT-based multi-granularity prediction model, achieves state-of-the-art results across scene text benchmarks without requiring an external language model, so its output is not constrained by the vocabulary or domain of a separately trained language model.

ViT-Based OCR Architecture and How the Pipeline Is Built

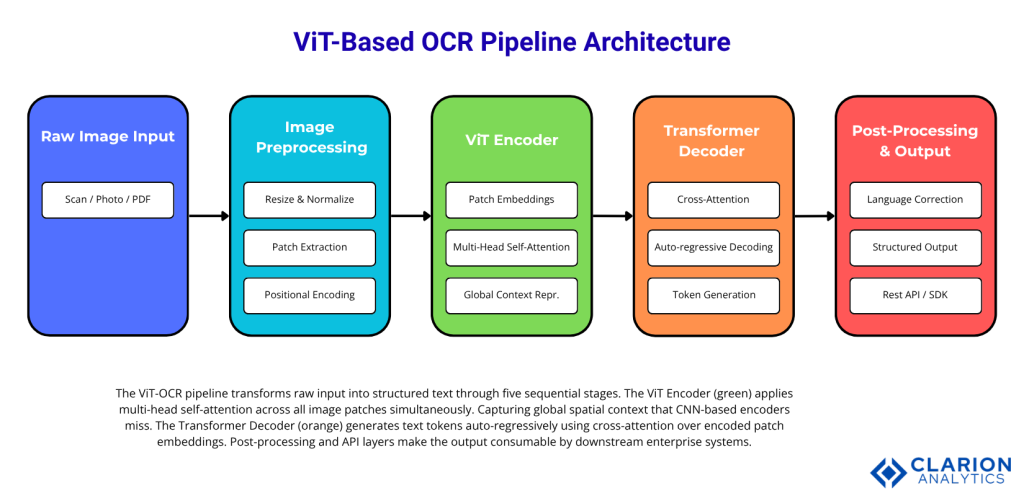

A production ViT-OCR pipeline consists of five stages: image preprocessing and patch extraction; ViT encoder producing contextual patch embeddings; cross-attention decoder generating text tokens; post-processing for language correction; and an inference API layer for integration into existing systems.

Figure 1: ViT-OCR Pipeline Architecture. The ViT Encoder processes all image patches in parallel via multi-head self-attention, producing global context representations. The Transformer Decoder generates text tokens auto-regressively using cross-attention over encoder patch embeddings. Post-processing and API layers deliver structured, integration-ready output.
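The five stages can be sketched as a chain of plain functions. Everything here is a hypothetical skeleton: the stage names, the `OcrResult` type, and the canned decoder output are placeholders showing only how data moves between stages, not how any real model or library is called.

```python
from dataclasses import dataclass, asdict


@dataclass
class OcrResult:
    text: str
    confidence: float


def preprocess(image):
    # Stage 1: resize, normalise, and prepare patches (placeholder passthrough).
    return {"pixels": image}


def encode(batch):
    # Stage 2: a ViT encoder would return contextual patch embeddings here.
    return {"memory": batch["pixels"]}


def decode(encoded):
    # Stage 3: a cross-attention decoder would generate tokens; canned output.
    return OcrResult(text="  Total   due: 42 ", confidence=0.5)


def postprocess(result):
    # Stage 4: language-level cleanup; here only whitespace is collapsed.
    return OcrResult(text=" ".join(result.text.split()), confidence=result.confidence)


def to_api_response(result):
    # Stage 5: structured output for the integration layer.
    return asdict(result)


STAGES = (preprocess, encode, decode, postprocess, to_api_response)


def run_pipeline(image, stages=STAGES):
    data = image
    for stage in stages:
        data = stage(data)
    return data


print(run_pipeline(image=[[0, 0], [0, 0]]))
```

Keeping each stage a separate callable makes it straightforward to swap the model behind `encode` and `decode` without touching preprocessing or the API layer.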

Choosing Your Tools – TrOCR, PaddleOCR, and MGP-STR Compared

For most developers starting with ViT-based OCR, TrOCR via HuggingFace offers the lowest barrier to entry. PaddleOCR suits production multilingual deployments. MGP-STR is the best choice for scene text in uncontrolled environments. Here is how to decide.

| Tool | Key Strength | Best Used When |
|---|---|---|
| TrOCR (Microsoft) | Pre-trained, convolution-free, HuggingFace integration in 4 lines of Python | Fast PoC or fine-tuning on custom printed/handwritten data |
| PaddleOCR (PaddlePaddle) | 100+ languages, 50,000+ GitHub stars, production-grade with detection + recognition + LLM/RAG support | Multilingual production deployments and LLM pipeline integration |
| MGP-STR | Multi-granularity prediction, no external language model, strong on irregular scene text | Uncontrolled environments: retail, automotive, logistics, and sign recognition |

Code Snippet 1: TrOCR Inference for Handwritten Text. Source: microsoft/trocr-base-handwritten (HuggingFace)

This snippet loads Microsoft’s TrOCR model, a ViT encoder paired with a RoBERTa decoder, and runs inference on any PIL image in a few lines. The processor handles resizing and normalisation; patch extraction and positional embeddings happen inside the model. There is no CNN feature extractor to configure and no CTC decoder to tune. This is practical evidence that ViT-based OCR has crossed the usability threshold for production teams.
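A minimal sketch of such an inference call, assuming the `transformers`, `torch`, and `Pillow` packages are installed and the checkpoint can be downloaded. The wrapper function `recognize_handwriting` is an illustrative name, not part of the library, and it reloads the model on every call for brevity; a service would load it once.

```python
def recognize_handwriting(image, checkpoint="microsoft/trocr-base-handwritten"):
    """Return the text TrOCR reads from a PIL image of a single text line."""
    # Imported lazily so this module loads even where transformers is absent.
    from transformers import TrOCRProcessor, VisionEncoderDecoderModel

    processor = TrOCRProcessor.from_pretrained(checkpoint)
    model = VisionEncoderDecoderModel.from_pretrained(checkpoint)

    # The processor resizes and normalises; the model does the patching.
    pixel_values = processor(
        images=image.convert("RGB"), return_tensors="pt"
    ).pixel_values
    generated_ids = model.generate(pixel_values)
    return processor.batch_decode(generated_ids, skip_special_tokens=True)[0]


# Usage, with a hypothetical file name:
#   from PIL import Image
#   print(recognize_handwriting(Image.open("handwritten_line.png")))
```

TrOCR checkpoints are trained on cropped text lines, so a full page would normally pass through a text detection step before reaching this function.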

Implementing a ViT-OCR Pipeline – Practical Steps for Development Teams

A working ViT-OCR integration follows three phases: select and load a pre-trained model via HuggingFace or PaddleOCR; preprocess images to the model’s expected patch size and resolution; then wire the output into your existing document processing workflow via API or SDK.

“Teams building ViT-OCR pipelines typically find that 80% of their engineering effort is not in the model; it is in image preprocessing, output validation, and graceful fallback for edge cases.”

Step 1: Model Selection and Environment Setup

Start with TrOCR for printed or handwritten English documents. For multilingual production workloads, deploy PaddleOCR, which now carries over 50,000 GitHub stars and supports 100-plus languages with a detection-recognition pipeline built for LLM and RAG integration. For scene text (labels, signs, screens), evaluate MGP-STR first.

Step 2: Image Preprocessing for Patch-Based Input

ViT models expect images at a fixed resolution (typically 384×384 for TrOCR-large). Your preprocessing pipeline must resize without distortion, normalise pixel values, and handle document deskewing before the image reaches the model. Poor preprocessing is the leading cause of accuracy regressions in production ViT-OCR deployments.
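One way to resize without distortion is to scale the longer side to the target and pad the remainder (letterboxing). The sketch below computes only the geometry and a per-pixel normalisation in plain Python; the function names are illustrative, and the mean and standard deviation of 0.5 are assumptions that should be checked against the chosen model's processor configuration.

```python
def letterbox_geometry(width, height, target=384):
    """Fit a width x height image inside a target x target square.

    Returns the resized (width, height) and the (left, top, right, bottom)
    padding needed to reach the full square, preserving aspect ratio.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    scale = target / max(width, height)
    new_w = max(1, round(width * scale))
    new_h = max(1, round(height * scale))
    pad_x, pad_y = target - new_w, target - new_h
    left, top = pad_x // 2, pad_y // 2
    return (new_w, new_h), (left, top, pad_x - left, pad_y - top)


def normalise(pixel, mean=0.5, std=0.5):
    """Map an 8-bit pixel value to the range the model expects (assumed)."""
    return (pixel / 255.0 - mean) / std


size, padding = letterbox_geometry(1200, 300)
print(size, padding)                 # (384, 96) (0, 144, 0, 144)
print(normalise(0), normalise(255))  # -1.0 1.0
```

Whether padding or a direct resize better matches what a given checkpoint saw during training is worth verifying on a validation set before committing to either.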

Code Snippet 2: MGP-STR Scene Text Recognition. Source: HuggingFace Transformers, MgpstrForSceneTextRecognition

MGP-STR applies multi-granularity prediction on top of a ViT backbone, generating character-, subword-, and word-level predictions simultaneously within one forward pass. This built-in linguistic modelling removes the dependency on a post-hoc language model, which is often a significant source of latency in traditional scene text recognition pipelines.
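A minimal sketch of MGP-STR inference through HuggingFace Transformers, assuming `transformers`, `torch`, and `Pillow` are installed and that the `alibaba-damo/mgp-str-base` checkpoint is reachable. The wrapper name `read_scene_text` is illustrative and not part of the library.

```python
def read_scene_text(image, checkpoint="alibaba-damo/mgp-str-base"):
    """Return the text MGP-STR reads from a PIL image of a cropped word."""
    # Imported lazily so this module loads even where transformers is absent.
    from transformers import MgpstrForSceneTextRecognition, MgpstrProcessor

    processor = MgpstrProcessor.from_pretrained(checkpoint)
    model = MgpstrForSceneTextRecognition.from_pretrained(checkpoint)

    pixel_values = processor(
        images=image.convert("RGB"), return_tensors="pt"
    ).pixel_values
    outputs = model(pixel_values)

    # outputs.logits carries the character, subword, and word-level heads;
    # the processor fuses them into one prediction per image.
    decoded = processor.batch_decode(outputs.logits)
    return decoded["generated_text"][0]


# Usage, with a hypothetical file name:
#   from PIL import Image
#   print(read_scene_text(Image.open("street_sign_crop.png")))
```

Like most scene text recognisers, this expects a cropped word or short phrase, so a detector would usually run first on full camera frames.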

Step 3: Integration, Monitoring, and Fallback

Wire the model output into a confidence-scored queue. Outputs below a confidence threshold route to human review, not to a reject bin. Log character-level error rates per document class and use them to prioritise fine-tuning datasets. Teams building this typically find that two or three targeted fine-tuning cycles with domain-specific data move accuracy by 8 to 12 percentage points on edge-case document types.
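The routing and logging described above might look like the following sketch. The 0.85 threshold, the queue names, and the function names are all hypothetical; the character error rate is computed as Levenshtein edit distance divided by reference length, which is one common definition.

```python
from collections import defaultdict


def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance between two strings (row-by-row dynamic programme)."""
    prev = list(range(len(b) + 1))
    for i, char_a in enumerate(a, 1):
        curr = [i]
        for j, char_b in enumerate(b, 1):
            curr.append(min(
                prev[j] + 1,                       # deletion
                curr[j - 1] + 1,                   # insertion
                prev[j - 1] + (char_a != char_b),  # substitution or match
            ))
        prev = curr
    return prev[-1]


def route(confidence: float, threshold: float = 0.85) -> str:
    """Send low-confidence outputs to human review instead of rejecting them."""
    return "auto_accept" if confidence >= threshold else "human_review"


def cer_by_class(samples):
    """Aggregate character error rate per document class.

    samples: iterable of (doc_class, predicted_text, reference_text).
    """
    errors, chars = defaultdict(int), defaultdict(int)
    for doc_class, predicted, reference in samples:
        errors[doc_class] += edit_distance(predicted, reference)
        chars[doc_class] += len(reference)
    return {c: errors[c] / chars[c] for c in errors if chars[c]}


reviewed = [
    ("invoice", "INV0ICE", "INVOICE"),   # one substitution in 7 characters
    ("invoice", "TOTAL", "TOTAL"),
    ("handwritten", "dosage 5mg", "dosage 5 mg"),
]
print(route(0.91), route(0.62))
print(cer_by_class(reviewed))
```

Sorting the per-class rates shows which document classes would benefit most from the next round of fine-tuning data.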

Frequently Asked Questions

How accurate are vision transformers for OCR compared to traditional methods?

ViT-based models like TrOCR achieve state-of-the-art results on printed, handwritten, and scene text benchmarks (Li et al., 2021). AI-powered OCR now exceeds 95% accuracy on printed English text, up from 88% just three years ago (market data, 2024). The gap widens further on multilingual, handwritten, or distorted inputs, where CNN-RNN pipelines degrade significantly.

Can ViT-based OCR handle handwritten text?

Yes. The HTR-VT model (Li et al., Pattern Recognition, 2024) demonstrates state-of-the-art handwriting recognition on the IAM dataset using only a ViT encoder with span masking; no large-scale external pre-training is needed. The key enabler is the model’s global self-attention, which captures ligatures and connected strokes that convolution-only models miss.

What is TrOCR and how does it work?

TrOCR is Microsoft’s end-to-end Transformer-based OCR model, published on arXiv in 2021 and presented at AAAI 2023. It pairs a pre-trained ViT image encoder with a pre-trained text Transformer decoder. Images are split into patches; the encoder produces contextual embeddings; the decoder generates wordpiece tokens via cross-attention. No CNN backbone. No CTC layer. No post-processing language model.

Is PaddleOCR suitable for production deployments?

PaddleOCR is one of the most production-proven open-source OCR toolkits available, with over 50,000 GitHub stars (as of 2025) and deployment as the core OCR engine in large-scale RAG and LLM document pipelines. In the 3.x release line, the PaddleOCR-VL model combines a NaViT-style Vision Transformer encoder with a compact language model in a vision-language model of roughly 0.9B parameters, supporting 109 languages and returning structured output for tables, formulas, and charts.

How much training data do I need to fine-tune a ViT-OCR model?

Less than most teams expect. The HTR-VT research (2024) shows strong results on the IAM dataset with just 6,428 training samples. Pre-trained models like TrOCR already encode rich visual-linguistic priors. Fine-tuning on 1,000 to 5,000 domain-specific labelled images typically achieves significant accuracy gains on specialised document types.

“The shift from CNN-RNN to Vision Transformer is not an incremental improvement in OCR; it is an architectural generational change comparable to the move from rule-based to neural methods a decade ago.”

Three Insights That Should Change How You Build OCR Systems

First, the OCR accuracy ceiling that your team has been debugging around is architectural, not a data problem. Switching to a ViT encoder fundamentally changes what the model can see (global context rather than local regions), and that translates directly to accuracy on the documents that matter most.

Second, the barrier to adoption is now near-zero. With TrOCR available through HuggingFace in four lines of Python and PaddleOCR deployable as a production service, proof-of-concept cycles take days, not quarters. The risk of not evaluating these architectures now outweighs the risk of evaluating them.

Third, the market will not wait. Grand View Research (2023) projects the OCR market reaching USD 32.90 billion by 2030 at a 14.8% CAGR. Engineering teams that have already shipped ViT-based document pipelines are building compounding accuracy and cost advantages. The question for your team is not whether to adopt Vision Transformers for OCR; it is how quickly you can run your first benchmark.